Constructing directed networks from multivariate time series using linear modelling technique

We describe a method to construct directed networks from multivariate time series which has several advantages over the widely accepted methods. This method is based on an information theoretic reduction of linear (auto-regressive) models. The models are called reduced auto-regressive (RAR) models. The procedure of the proposed method is composed of three steps: (i) each time series is treated as a basic node of a network, (ii) multivariate RAR models are built and the constituent information in the models is summarized, and (iii) nodes are connected with a directed link based on that summary information. The proposed method is demonstrated for numerical data generated by known systems, and applied to several actual time series of special interest. Although the proposed method can identify connectivity, there are three points to keep in mind: (1) the proposed method cannot always identify nonlinear relationships among components, (2) as constructing RAR models is NP-hard, the network constructed by the proposed method might be only a near-optimal network when we cannot perform an exhaustive search, and (3) it is difficult to construct appropriate networks when the observational noise is large.

💡 Research Summary


The paper introduces a novel framework for constructing directed networks from multivariate time‑series data, addressing several shortcomings of the widely used frequency‑based (Directed Transfer Function, DTF; Partial Directed Coherence, PDC) and time‑based (cross‑correlation, CC) approaches. DTF and PDC rely on a multivariate autoregressive (MV‑AR) model transformed into the frequency domain, while the naive CC method applies a fixed threshold to pairwise correlations. Both families of methods suffer from ambiguous interpretation of the resulting links, limited sensitivity to nonlinear or non‑stationary dynamics, and difficulty handling multiple time‑scales within the same dataset.
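For reference, the MV‑AR model underlying DTF and PDC can be written in its standard textbook form. The symbols below ($n$ series, model order $p$, coefficient matrices $A_k$, noise term $\boldsymbol{\varepsilon}$) are notation assumed here for illustration and are not taken from the paper:

$$
\mathbf{x}(t) = \sum_{k=1}^{p} A_k\,\mathbf{x}(t-k) + \boldsymbol{\varepsilon}(t),
\qquad \mathbf{x}(t) \in \mathbb{R}^{n},\quad A_k \in \mathbb{R}^{n \times n}.
$$

In this full model every lag of every series carries a coefficient, so there are $n^2 p$ parameters in total. A non-zero entry $(A_k)_{ij}$ means that $x_j(t-k)$ contributes linearly to $x_i(t)$.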

To overcome these issues, the authors adopt the Reduced Auto‑Regressive (RAR) model, an information‑theoretic reduction of linear AR models. The RAR procedure selects a subset of lagged variables that minimizes an information criterion (e.g., AIC or BIC). Because the combinatorial selection of lagged terms is NP‑hard, the authors employ heuristic search strategies (forward selection, backward elimination, or other greedy algorithms) to obtain a near‑optimal model without exhaustive enumeration.
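The selection step can be illustrated with a minimal sketch of greedy forward selection. It assumes BIC as the criterion, zero‑mean data, no intercept term, and Gaussian residuals. The function names (`lagged_design`, `bic`, `forward_select`) and the two‑series toy system are hypothetical and are not the authors' implementation or test systems.

```python
import numpy as np

def lagged_design(data, max_lag):
    """Build targets and lagged candidate columns from data of shape (T, n)."""
    T, n = data.shape
    cols, labels = [], []
    for j in range(n):
        for lag in range(1, max_lag + 1):
            cols.append(data[max_lag - lag:T - lag, j])  # x_j(t - lag)
            labels.append((j, lag))
    return data[max_lag:], np.column_stack(cols), labels

def bic(y, X, cols):
    """BIC of a least-squares fit of y on the chosen columns of X."""
    m = len(y)
    resid = y
    if cols:
        Xs = X[:, cols]
        coef, *_ = np.linalg.lstsq(Xs, y, rcond=None)
        resid = y - Xs @ coef
    rss = float(resid @ resid)
    return m * np.log(rss / m) + len(cols) * np.log(m)

def forward_select(y, X):
    """Add the column that lowers BIC the most; stop when no column helps."""
    chosen, best = [], bic(y, X, [])
    while len(chosen) < X.shape[1]:
        score, c = min((bic(y, X, chosen + [c]), c)
                       for c in range(X.shape[1]) if c not in chosen)
        if score >= best:
            break
        chosen.append(c)
        best = score
    return chosen

# Hypothetical toy system: series 1 is driven by series 0 at lag 2.
rng = np.random.default_rng(0)
T = 2000
x = np.zeros((T, 2))
for t in range(2, T):
    x[t, 0] = 0.5 * x[t - 1, 0] + rng.normal()
    x[t, 1] = 0.8 * x[t - 2, 0] + rng.normal()

targets, candidates, labels = lagged_design(x, max_lag=3)
selected = [labels[c] for c in forward_select(targets[:, 1], candidates)]
print(selected)  # list of (source series, lag) pairs kept for series 1
```

Greedy selection evaluates only a small fraction of the possible subsets, which is why the result is near‑optimal rather than guaranteed optimal. A different criterion or search order may keep a different set of terms.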

The proposed network‑construction pipeline consists of three steps:

- Node definition – each individual time series is treated as a node.

- Multivariate RAR modeling – for each node, a regression model is built with the lagged values of every series (its own past as well as that of the other series) as candidate predictors. Only the predictors retained by the RAR selection process are treated as exerting a direct linear influence on the target node.

- Link formation – a directed edge from node j to node i is drawn if the lagged variable x_j(t‑ℓ) appears in the RAR model for x_i(t). If no predictor from node j survives the selection, no edge is placed. The resulting adjacency matrix is binary, reflecting the presence or absence of a direct linear periodic relationship.
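The link‑formation rule can be sketched as follows, assuming the selected terms for each target node are already available as `(source, lag)` pairs. The function name `adjacency_from_models`, the convention `A[j, i] = 1` for an edge j → i, the choice to drop self‑loops by default, and the example terms are all illustrative assumptions.

```python
import numpy as np

def adjacency_from_models(selected_terms, n_nodes, keep_self_loops=False):
    """Binary adjacency matrix with A[j, i] = 1 when some lag of x_j is in the model for x_i."""
    A = np.zeros((n_nodes, n_nodes), dtype=int)
    for target, terms in selected_terms.items():
        for source, _lag in terms:
            if source == target and not keep_self_loops:
                continue  # a node's own lags do not create an edge (assumption)
            A[source, target] = 1
    return A

# Hypothetical selection result: target node -> retained (source, lag) terms.
selected_terms = {
    0: [(0, 1)],
    1: [(0, 2), (1, 1)],
    2: [(1, 1), (1, 3)],
}
A = adjacency_from_models(selected_terms, n_nodes=3)
print(A)
```

Several retained lags from the same source collapse into a single edge, so the matrix records only whether a link exists, not at which lags it acts.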

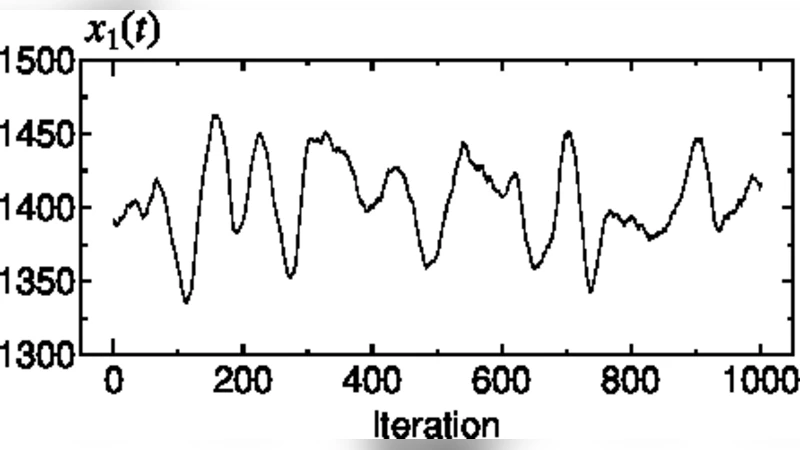

The authors illustrate the method with two synthetic systems. System 1 is a purely linear AR network (Eqs. 1‑4) where the true connectivity is known; System 2 combines linear AR components with a chaotic Rössler subsystem (Eqs. 5‑8), providing a test case where the underlying linear structure is partially obscured by nonlinear dynamics. In both cases, the RAR‑based networks correctly recover the intended directed connections, whereas DTF, PDC, and the naive CC method either miss links or generate spurious ones due to their reliance on phase differences or simple correlation thresholds.

Real‑world applications are presented on (a) meteorological data (temperature, humidity, wind speed, etc.) and (b) electroencephalography (EEG) recordings. In the meteorological example, the RAR network captures multi‑scale interactions (e.g., diurnal vs. seasonal influences) that are not evident in frequency‑domain measures. In the EEG case, the method reveals directed functional connectivity among cortical regions, offering clearer interpretation than PDC, which often yields dense, hard‑to‑interpret graphs.

Three important limitations are acknowledged:

- Linear assumption – RAR models cannot capture purely nonlinear dependencies; they only identify linear periodic structures that dominate the data.

- NP‑hard model selection – Because selecting the optimal RAR model is NP‑hard, an exhaustive search is often infeasible and the obtained network may be only near‑optimal; different heuristic choices may lead to slightly different adjacency matrices.

- Sensitivity to observational noise – High noise levels degrade the reliability of variable selection, potentially leading to false positives or missed edges.

The discussion suggests future extensions: incorporating nonlinear basis functions into the RAR framework (e.g., kernel‑based RAR), employing Bayesian model averaging or Markov‑Chain Monte Carlo to better explore the model space, and developing robust preprocessing pipelines (differencing, high‑pass filtering) to mitigate noise effects.

In summary, the paper demonstrates that reduced auto‑regressive modeling provides a principled, information‑theoretic route to construct directed networks that faithfully reflect the underlying linear periodic relationships in multivariate time series. Compared with traditional DTF/PDC and cross‑correlation methods, the RAR‑based approach yields more interpretable, sparsely connected graphs, while also highlighting the need for careful handling of nonlinearity, computational complexity, and noise. This work thus contributes a valuable tool for researchers analyzing complex dynamical systems such as climate networks, brain connectivity, and other domains where multivariate temporal data are abundant.
