A Tutorial on Network Embeddings

Network embedding methods aim to learn low-dimensional latent representations of nodes in a network. These representations can be used as features for a wide range of tasks on graphs such as classification, clustering, link prediction, and visualization. In this survey, we give an overview of network embeddings by summarizing and categorizing recent advancements in this research field. We first discuss the desirable properties of network embeddings and briefly introduce the history of network embedding algorithms. Then, we discuss network embedding methods under different scenarios, such as supervised versus unsupervised learning and embeddings for homogeneous versus heterogeneous networks. We further demonstrate the applications of network embeddings, and conclude the survey with future work in this area.

💡 Research Summary

The paper provides a comprehensive tutorial on network embedding, a set of techniques that map graph nodes into low-dimensional continuous vectors suitable for downstream tasks such as node classification, clustering, link prediction, and visualization. It begins by outlining five desirable properties of embeddings (adaptability, scalability, community awareness, low dimensionality, and continuity) and argues that any practical method should strive to satisfy these criteria.

A historical overview follows, covering classical dimensionality-reduction methods such as Principal Component Analysis (PCA) and Multidimensional Scaling (MDS). While these techniques can capture linear structure, a full eigendecomposition costs up to O(n³) time for a graph with n nodes, and they fail to model the non-linear relationships inherent in many real-world graphs. Non-linear extensions such as IsoMap and Locally Linear Embedding (LLE) are introduced, but they still depend on constructing a neighborhood graph and incur quadratic or higher computational costs. Spectral approaches based on the graph Laplacian (Laplacian Eigenmaps, SocDim) capture community structure better by using eigenvectors of the Laplacian, yet they remain computationally intensive for large networks.
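For reference, the optimization behind Laplacian Eigenmaps can be written compactly. This is the standard textbook formulation rather than a quotation from the paper; the symbols are assumptions introduced here: $A$ is the (weighted) adjacency matrix, $D$ the diagonal degree matrix, $L = D - A$ the graph Laplacian, and $y_i$ the embedding of node $i$ (row $i$ of $Y$).

```latex
\min_{Y}\; \frac{1}{2}\sum_{i,j} A_{ij}\,\lVert y_i - y_j \rVert^2
  \;=\; \operatorname{tr}\!\left(Y^{\top} L\, Y\right)
\quad \text{subject to} \quad Y^{\top} D\, Y = I,
\qquad\text{solved by}\qquad
L\,y = \lambda\, D\,y .
```

The embedding uses the generalized eigenvectors with the smallest non-zero eigenvalues, which is why the cost of the eigendecomposition dominates on large graphs.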

The “Age of Deep Learning” section centers on DeepWalk, the first method that bridges graph embedding with word‑embedding techniques. DeepWalk treats random walks on a graph as sentences and applies the Skip‑gram model to predict context nodes given a center node. The authors decompose DeepWalk into three modular steps: (1) matrix selection (e.g., transition matrix, normalized Laplacian, powers of the adjacency matrix), (2) graph sampling (truncated random walks or direct matrix computation), and (3) embedding learning (Skip‑gram with hierarchical or sampled softmax). This modularity enables extensions by swapping any component: alternative matrices (HOPE for directed graphs, SiNE for signed graphs, various attributed‑graph matrices), new sampling strategies, or different sequence models (RNNs, CNNs).
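The sampling step described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names, the toy graph, and the parameter values (`walks_per_node`, `walk_length`, `window`) are all assumptions chosen for the example. It produces truncated random walks and the (center, context) pairs that a Skip-gram trainer would consume; the training step itself is omitted.

```python
import random
from collections import defaultdict


def truncated_random_walks(adj, walks_per_node, walk_length, rng):
    """Generate uniform random walks of at most `walk_length` nodes from every node."""
    walks = []
    nodes = sorted(adj)
    for _ in range(walks_per_node):
        rng.shuffle(nodes)  # fresh random order of start nodes on each pass
        for start in nodes:
            walk = [start]
            while len(walk) < walk_length:
                neighbors = adj[walk[-1]]
                if not neighbors:  # dead end: stop this walk early
                    break
                walk.append(rng.choice(neighbors))
            walks.append(walk)
    return walks


def skipgram_pairs(walks, window):
    """Treat each walk as a sentence and emit (center, context) node pairs."""
    pairs = []
    for walk in walks:
        for i, center in enumerate(walk):
            lo = max(0, i - window)
            hi = min(len(walk), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    pairs.append((center, walk[j]))
    return pairs


# Hypothetical toy graph: two triangles joined by the edge (2, 3).
edges = [(0, 1), (0, 2), (1, 2), (2, 3), (3, 4), (3, 5), (4, 5)]
adj = defaultdict(list)
for u, v in edges:
    adj[u].append(v)
    adj[v].append(u)
adj = dict(adj)

rng = random.Random(0)
walks = truncated_random_walks(adj, walks_per_node=2, walk_length=5, rng=rng)
pairs = skipgram_pairs(walks, window=2)
```

Swapping `rng.choice(neighbors)` for a biased or type-aware choice is one way to realize the "new sampling strategies" mentioned above without touching the pair-generation or training code.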

The paper surveys a broad family of extensions built on the DeepWalk paradigm. Directed graphs are handled by HOPE, signed graphs by SiNE, and attributed graphs by methods that incorporate node features into the random‑walk generation or the loss function. Heterogeneous networks are addressed through meta‑path based random walks and type‑aware embedding spaces. Semi‑supervised and transductive approaches are also discussed, showing how label information can be injected into the Skip‑gram objective or used to regularize the embedding space.
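To make the idea of a meta-path based random walk concrete, here is a minimal sketch under stated assumptions. The author/paper graph, the node names, and the function `metapath_walk` are hypothetical and not taken from the paper; the meta-path is assumed to be cyclic (its first and last types coincide), so it can be repeated to produce walks of any length.

```python
import random


def metapath_walk(adj, node_type, start, metapath, walk_length, rng):
    """Random walk that only steps to neighbors matching the next meta-path type.

    `metapath` is a cyclic type sequence such as ["A", "P", "A"].
    """
    if metapath[0] != metapath[-1]:
        raise ValueError("meta-path must start and end with the same type")
    if node_type[start] != metapath[0]:
        raise ValueError("start node type must match the first meta-path type")
    pattern = metapath[:-1]  # drop the repeated last type so the pattern cycles
    walk = [start]
    while len(walk) < walk_length:
        wanted = pattern[len(walk) % len(pattern)]
        candidates = [v for v in adj[walk[-1]] if node_type[v] == wanted]
        if not candidates:  # no neighbor of the required type: stop early
            break
        walk.append(rng.choice(candidates))
    return walk


# Hypothetical heterogeneous graph: authors (A) connected to papers (P).
node_type = {"a1": "A", "a2": "A", "a3": "A", "p1": "P", "p2": "P"}
adj = {
    "a1": ["p1"],
    "a2": ["p1", "p2"],
    "a3": ["p2"],
    "p1": ["a1", "a2"],
    "p2": ["a2", "a3"],
}

rng = random.Random(0)
walk = metapath_walk(adj, node_type, "a1", ["A", "P", "A"], walk_length=7, rng=rng)
walk_types = [node_type[v] for v in walk]
```

Walks generated this way can be fed to the same Skip-gram step as in the homogeneous case; the meta-path only constrains which neighbors are eligible at each step.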

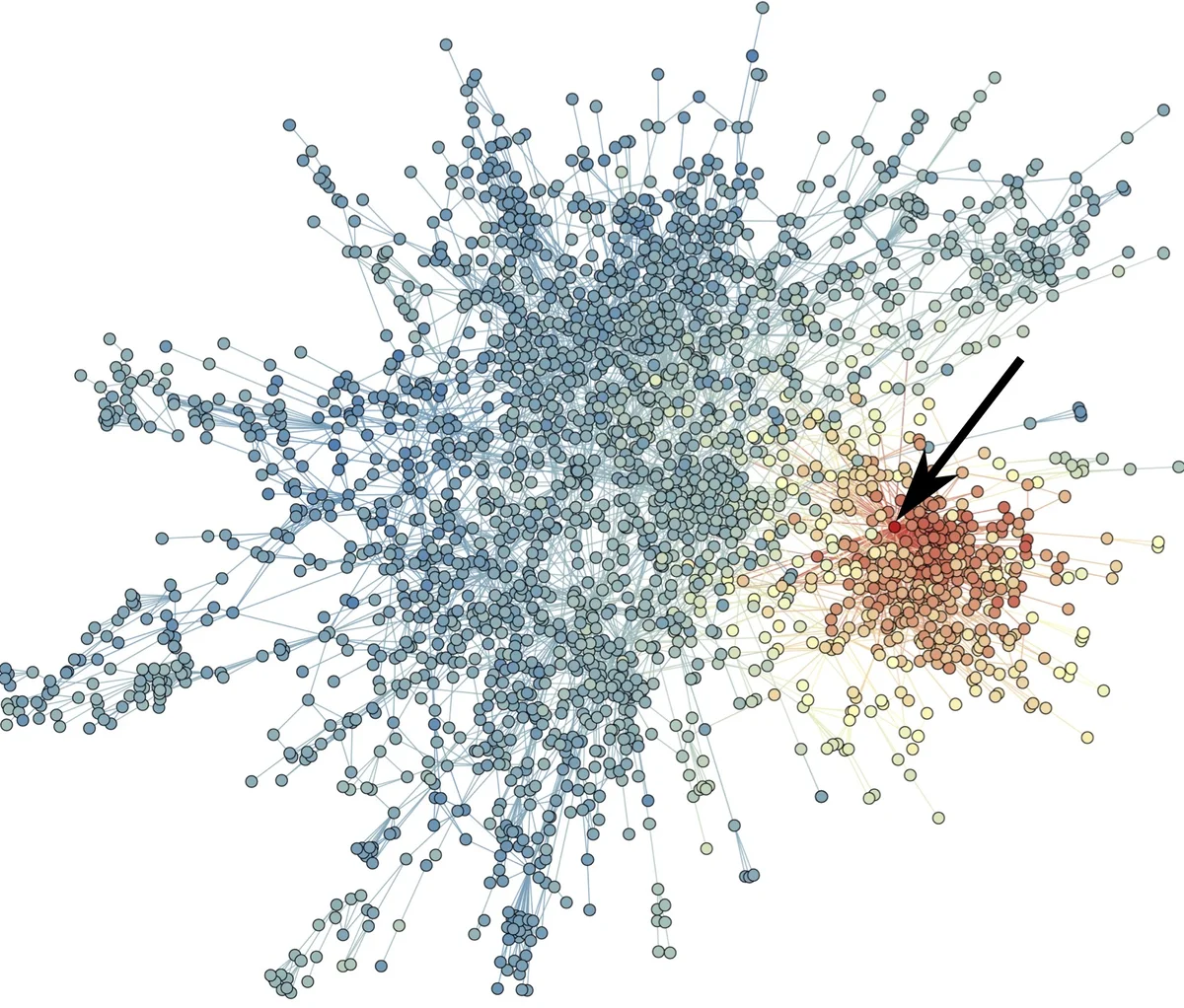

Empirical results on the classic Zachary Karate Club network illustrate the advantages of DeepWalk over traditional techniques. Two‑dimensional embeddings produced by DeepWalk align closely with modularity‑based community partitions, yielding linearly separable clusters. In contrast, PCA, MDS, LLE, Laplacian Eigenmaps, and SVD produce embeddings where community boundaries are blurred, confirming that the random‑walk‑based context captures higher‑order structural similarity more effectively.
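Since the comparison is made against modularity-based partitions, it may help to recall how modularity is computed. The sketch below uses the standard Newman modularity for an undirected, unweighted graph without self-loops; the function name and the toy graph are assumptions for illustration, and this is not the Karate Club data.

```python
def modularity(edges, community):
    """Newman modularity Q = sum_c [ e_c / m - (d_c / (2m))^2 ].

    e_c is the number of edges inside community c, d_c the total degree of
    its nodes, and m the number of edges in the graph.
    """
    m = len(edges)
    internal = {}
    degree_sum = {}
    for u, v in edges:
        cu, cv = community[u], community[v]
        degree_sum[cu] = degree_sum.get(cu, 0) + 1
        degree_sum[cv] = degree_sum.get(cv, 0) + 1
        if cu == cv:
            internal[cu] = internal.get(cu, 0) + 1
    return sum(
        internal.get(c, 0) / m - (d / (2 * m)) ** 2
        for c, d in degree_sum.items()
    )


# Hypothetical toy graph: two triangles joined by the edge (2, 3).
edges = [(0, 1), (0, 2), (1, 2), (2, 3), (3, 4), (3, 5), (4, 5)]
two_groups = {0: "x", 1: "x", 2: "x", 3: "y", 4: "y", 5: "y"}
one_group = {n: "x" for n in range(6)}

q_split = modularity(edges, two_groups)   # the natural two-community split
q_single = modularity(edges, one_group)   # everything in a single community
```

A partition with higher modularity has more intra-community edges than expected by chance, which is the sense in which an embedding can be said to "align" with it.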

The authors conclude by identifying current limitations and future research directions. Key challenges include: (i) developing continuous, incremental embeddings for dynamic or temporal graphs; (ii) designing unified frameworks that simultaneously model multiple node and edge types in heterogeneous networks; (iii) improving interpretability, fairness, and privacy preservation of embeddings; and (iv) creating memory‑ and compute‑efficient implementations that exploit modern hardware (GPU/TPU) and distributed systems. Addressing these issues will broaden the applicability of network embeddings across domains such as social network analysis, bioinformatics, and knowledge‑graph construction, enabling more scalable and semantically rich graph‑based machine learning.
