Design an Advance computer-aided tool for Image Authentication and Classification

Over the years, advances in digital image processing and artificial intelligence have been applied to many real-life problems, for example facial image recognition in security systems and identity registration. Image processing is, however, a bottleneck of identity registration. It is carried out as image preprocessing, image region extraction by cropping, feature extraction using Principal Component Analysis (PCA), and image compression using the Discrete Cosine Transform (DCT). Further processing, including filtering and histogram equalization using contrast stretching, is performed to enhance the image as part of the analytical tool. This research work therefore presents a universal, integrated image forgery detection and analysis tool with facial image recognition using a Back Propagation Neural Network (BPNN) processor. The proposed tool is a multi-function smart tool with a novel architecture featuring a programmable error goal and light intensity. Furthermore, its advanced dual database increases efficiency for high-performance applications. Because facial image recognition will always return a matching or closest possible output image for every input image, irrespective of its authenticity, the universal smart GUI tool is designed to perform image forgery detection with high accuracy (a 2% error rate). A novel structure that provides efficient automatic image forgery detection for all input test images to the BPNN recognizer is also presented, so that an input image is authenticated before being fed into the recognition tool.

💡 Research Summary

The paper proposes an integrated computer‑aided tool that simultaneously performs image forgery detection and facial recognition. The authors argue that image processing, especially preprocessing, region extraction, feature extraction (via PCA), and compression (via DCT) constitute a bottleneck in current biometric registration systems. To address this, they design a four‑stage pipeline implemented as a MATLAB‑GUI application.

Stage 1 – Image Authentication (Forgery Detection).

The system first checks whether an input image has been tampered with by looking for resampling or interpolation traces. A derivative operator is applied to the image variance, and the resulting signal is processed with a Radon transform. By projecting the image gradient along 0°–179° in 1° increments, 180 one‑dimensional vectors are obtained. Auto‑covariance of these vectors is computed; a strong periodic pattern indicates a forged image, while a simple impulse‑like spectrum suggests authenticity. The authors present mathematical formulations for affine transformations, derivative kernels, and the Radon projection, but they do not provide quantitative metrics (e.g., detection rate, false‑positive rate) or comparisons with existing forensic methods.
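The Stage 1 description can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the authors' MATLAB implementation: the nearest-bin Radon projection, the use of the gradient magnitude as the projected signal, and the peak-to-median `periodicity_score` are all hypothetical choices made here, since the summary does not specify the kernels or a decision rule.

```python
import numpy as np

def radon_projections(image, angles_deg):
    """Nearest-bin Radon projection: sum pixel values along parallel lines per angle."""
    h, w = image.shape
    ys, xs = np.mgrid[0:h, 0:w]
    # Centre the coordinates so every projection is taken about the image centre.
    xs = xs - (w - 1) / 2.0
    ys = ys - (h - 1) / 2.0
    half_diag = int(np.ceil(np.hypot(h, w) / 2.0))
    n_bins = 2 * half_diag + 1
    flat = image.ravel()
    projections = np.empty((len(angles_deg), n_bins))
    for i, theta in enumerate(np.deg2rad(angles_deg)):
        # Signed offset of each pixel along the projection axis, rounded to a bin.
        t = xs * np.cos(theta) + ys * np.sin(theta)
        bins = np.rint(t).astype(int).ravel() + half_diag
        projections[i] = np.bincount(bins, weights=flat, minlength=n_bins)
    return projections

def autocovariance(v):
    """Biased autocovariance of a 1-D signal for lags 0..len(v)-1."""
    v = np.asarray(v, dtype=float)
    centred = v - v.mean()
    full = np.correlate(centred, centred, mode="full")
    return full[len(v) - 1:] / len(v)

def periodicity_score(image):
    """Hypothetical score: strongest spectral peak of the autocovariance
    relative to the median spectral level, maximised over 180 angles."""
    gy, gx = np.gradient(image.astype(float))
    grad_mag = np.hypot(gx, gy)
    scores = []
    for p in radon_projections(grad_mag, np.arange(180)):
        d = np.diff(p)  # first-order derivative of the projection
        spectrum = np.abs(np.fft.rfft(autocovariance(d)))[1:]  # drop the DC term
        scores.append(spectrum.max() / (np.median(spectrum) + 1e-12))
    return float(np.max(scores))
```

A high score would hint at the periodic pattern associated with resampling, but any threshold separating forged from authentic images would have to be calibrated on labelled data, which the paper does not report.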

Stage 2 – Image Pre‑processing.

If the image passes the authenticity test, it undergoes average filtering, histogram equalization, and contrast stretching to improve visual quality. The image is then down‑sampled to reduce computational load. Feature extraction proceeds by applying Principal Component Analysis (PCA) and a two‑dimensional Discrete Cosine Transform (DCT) to convert the 2‑D image into a 1‑D feature vector suitable for neural‑network input. The paper lacks details on how many principal components are retained, the DCT block size, or the impact of these choices on downstream recognition accuracy.
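A minimal sketch of the Stage 2 steps follows, assuming 8-bit grayscale input. The 3x3 filter size, the downsampling step, the number of DCT coefficients kept (`keep`), the number of principal components, and the order "DCT per image, then PCA across images" are all assumptions introduced for illustration; the summary notes that the paper does not state these choices.

```python
import numpy as np

def mean_filter3(img):
    """3x3 average filter with edge replication."""
    padded = np.pad(img, 1, mode="edge")
    h, w = img.shape
    acc = np.zeros((h, w))
    for dy in range(3):
        for dx in range(3):
            acc += padded[dy:dy + h, dx:dx + w]
    return acc / 9.0

def equalize_hist(img):
    """Histogram equalization for values in 0..255 via the empirical CDF."""
    levels = np.clip(np.rint(img), 0, 255).astype(int)
    hist = np.bincount(levels.ravel(), minlength=256)
    cdf = np.cumsum(hist) / levels.size
    return cdf[levels] * 255.0

def contrast_stretch(img, lo=0.0, hi=255.0):
    """Linearly map the image range [min, max] onto [lo, hi]."""
    mn, mx = img.min(), img.max()
    if mx == mn:
        return np.full(img.shape, lo)
    return (img - mn) / (mx - mn) * (hi - lo) + lo

def preprocess(img, step=2):
    """Filter, equalize, stretch, then downsample by keeping every step-th pixel."""
    out = contrast_stretch(equalize_hist(mean_filter3(img.astype(float))))
    return out[::step, ::step]

def dct_matrix(n):
    """Orthonormal DCT-II basis matrix of size n x n."""
    k = np.arange(n)[:, None]
    x = np.arange(n)[None, :]
    c = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * x + 1) * k / (2 * n))
    c[0, :] = np.sqrt(1.0 / n)
    return c

def dct_features(img, keep=8):
    """2-D DCT, keeping the keep x keep low-frequency block as a 1-D vector."""
    h, w = img.shape
    coeffs = dct_matrix(h) @ img @ dct_matrix(w).T
    return coeffs[:keep, :keep].ravel()

def pca_fit(X, n_components):
    """Return the mean and the leading principal directions of the rows of X."""
    mean = X.mean(axis=0)
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:n_components]

def pca_transform(X, mean, components):
    return (X - mean) @ components.T
```

Varying `keep` and `n_components` in such a sketch is one way to measure how these unreported settings affect downstream recognition accuracy.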

Stage 3 – Neural‑Network Training (Facial Recognition).

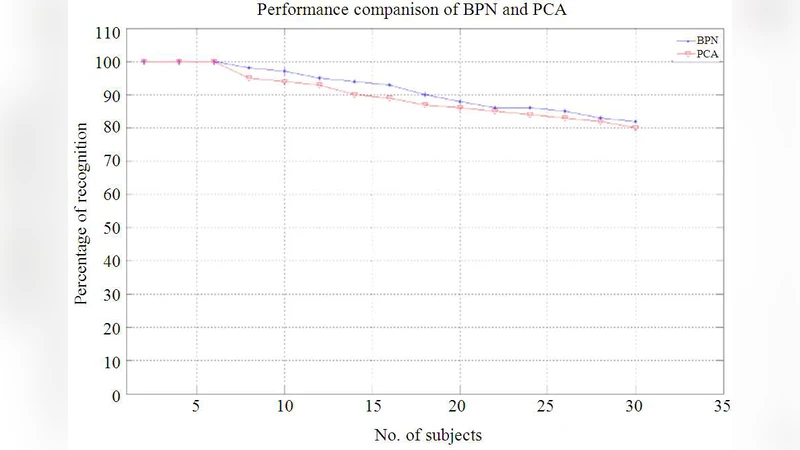

A Back‑Propagation Neural Network (BPNN) is employed for classification. The network consists of an input layer (receiving the PCA‑DCT vector), one hidden layer with a number of neurons chosen heuristically, and an output layer representing the enrolled subjects. Training minimizes the mean‑square error (MSE) and aims for an error goal of ±2 % (i.e., a claimed 98 % recognition accuracy). The authors display a training curve and a plot of execution time versus hidden‑layer size, showing that more hidden neurons accelerate convergence but increase runtime. However, critical hyper‑parameters such as learning rate, activation functions, weight initialization, and regularization are omitted.
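For orientation, a one-hidden-layer back-propagation network with an MSE error goal might look like the sketch below. Every hyper-parameter here (sigmoid activations, `n_hidden=8`, `lr=0.5`, Gaussian weight initialization, reading the 2 % goal as `error_goal=0.02` on the MSE) is an assumption, precisely because the paper omits these values.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_bpnn(X, T, n_hidden=8, lr=0.5, error_goal=0.02, max_epochs=5000, seed=0):
    """Full-batch gradient descent on MSE; stops once the error goal is reached."""
    rng = np.random.default_rng(seed)
    W1 = rng.normal(0.0, 0.5, size=(X.shape[1], n_hidden))
    b1 = np.zeros(n_hidden)
    W2 = rng.normal(0.0, 0.5, size=(n_hidden, T.shape[1]))
    b2 = np.zeros(T.shape[1])
    history = []
    for _ in range(max_epochs):
        # Forward pass through the hidden and output layers.
        H = sigmoid(X @ W1 + b1)
        Y = sigmoid(H @ W2 + b2)
        err = Y - T
        mse = float(np.mean(err ** 2))
        history.append(mse)
        if mse <= error_goal:
            break
        # Backward pass; constant factors of the MSE gradient are folded into lr.
        dY = err * Y * (1.0 - Y)
        dH = (dY @ W2.T) * H * (1.0 - H)
        W2 -= lr * H.T @ dY / len(X)
        b2 -= lr * dY.mean(axis=0)
        W1 -= lr * X.T @ dH / len(X)
        b1 -= lr * dH.mean(axis=0)
    return (W1, b1, W2, b2), history

def predict(params, X):
    """Return one output activation per enrolled subject for each input row."""
    W1, b1, W2, b2 = params
    return sigmoid(sigmoid(X @ W1 + b1) @ W2 + b2)
```

Here `T` is a one-hot matrix with one column per enrolled subject, and `history` holds the per-epoch MSE that a training curve like the one described above would plot. Note that reaching an MSE goal on the training set is not the same as 98 % recognition accuracy on unseen images.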

Stage 4 – Testing and Classification.

Test images, after undergoing the same preprocessing and vectorization, are fed to the trained BPNN. Euclidean distance between the test vector and each database vector is computed, and the closest match is reported. The authors report that with a dual‑database architecture (two sets of ten images each) and a modest number of subjects, the system achieves an error rate of about 2 %. Figures illustrate original versus forged image spectra, recognition results for shifted faces, and the relationship between the number of subjects and error percentage.
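The Euclidean matching step reduces to a nearest-neighbour lookup. The sketch below assumes each database entry is a row vector of the same length as the query; the function name `closest_match` is illustrative and not taken from the paper.

```python
import numpy as np

def closest_match(query, database):
    """Return the index of the nearest database vector and its Euclidean distance."""
    distances = np.linalg.norm(database - query, axis=1)
    best = int(np.argmin(distances))
    return best, float(distances[best])

database = np.array([[0.0, 0.0], [3.0, 4.0], [10.0, 10.0]])
index, distance = closest_match(np.array([3.0, 3.0]), database)
```

Because `argmin` always returns some index, this step reports a closest match even for a face that is not enrolled or an image that has been tampered with, which is the behaviour the abstract cites as the reason for authenticating images before recognition.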

Critical Evaluation.

While the concept of coupling forgery detection with biometric recognition is appealing, the paper suffers from several methodological weaknesses:

- Dataset Size and Diversity – Only ten images per database (twenty total) are used, all of the same size and format. Such a tiny dataset cannot support statistically meaningful claims about 2 % error rates. No public benchmark (e.g., LFW, CASIA) is employed, and there is no cross‑validation or hold‑out testing.

- Lack of Comparative Baselines – The authors do not compare their forgery detector with established forensic tools (e.g., JPEG‑ghost, PRNU analysis) nor their BPNN recognizer with state‑of‑the‑art deep‑learning models (e.g., CNNs, FaceNet). Consequently, the reported performance cannot be contextualized.

- Insufficient Performance Metrics – Only a single error percentage is reported. No precision, recall, F‑score, ROC curves, or equal‑error‑rate (EER) values are provided for either the detection or recognition modules.

- Reproducibility Gaps – Key implementation details (number of PCA components, DCT block size, learning rate, weight decay, stopping criteria) are missing, making independent replication difficult.

- Theoretical Justification – The use of Radon transform to expose periodic patterns caused by resampling is theoretically sound, yet the paper does not quantify robustness against common post‑processing (e.g., JPEG compression, noise addition) that could mask such patterns.

- Dual‑Database Concept – The notion of a “dual database” is introduced but never explained; it is unclear whether the two databases are meant for forged vs. authentic images, for different illumination conditions, or simply to increase capacity.
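The dataset-size concern can be made concrete with a confidence interval. The sketch below computes a Wilson score interval for an error rate; treating the twenty images as twenty independent test trials is an assumption made here for illustration, since the number of test trials is not stated.

```python
import math

def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives about 95 %)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1.0 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

# Even zero observed errors in 20 trials leaves a wide interval.
small_lo, small_hi = wilson_interval(0, 20)
# The same 2 % rate observed on 1000 trials is far better constrained.
large_lo, large_hi = wilson_interval(20, 1000)
```

Under that assumption, a perfect run on 20 images is still compatible with a true error rate of roughly 16 %, and the smallest nonzero error rate that 20 trials can even register is 5 %, so a 2 % figure cannot be resolved at this sample size.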

Future Directions.

To strengthen the contribution, the authors should:

- Evaluate the forgery detector on a larger, publicly available forensic dataset, reporting detection accuracy, false‑positive/negative rates, and robustness to compression.

- Replace or augment the BPNN with modern convolutional architectures and compare against baseline face‑recognition systems.

- Provide a thorough ablation study showing the impact of each preprocessing step (contrast stretching, PCA, DCT) on recognition performance.

- Clarify the dual‑database architecture and demonstrate how it improves scalability or security.

- Release code and parameter settings to enable reproducibility.
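As an illustration of the metrics recommended above, the following sketch derives precision, recall, F-score, and false-positive rate from binary forged/authentic labels. The label convention (1 = forged, 0 = authentic) and the sample labels are made up for the example and are not results from the paper.

```python
def detection_metrics(y_true, y_pred):
    """Confusion-matrix metrics for a binary detector (1 = forged, 0 = authentic)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    fpr = fp / (fp + tn) if fp + tn else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "fpr": fpr}

# Illustrative labels only.
metrics = detection_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 1, 0, 0, 0, 1, 0, 1])
```

Reporting these values separately for the forgery detector and the recognizer would show whether errors come from rejected authentic images, accepted forgeries, or misidentified faces, which a single error percentage hides.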

In summary, the paper presents an ambitious integration of image authentication and facial classification within a single GUI tool, but the experimental validation is limited, and many technical details are omitted. Substantial additional work is required to substantiate the claimed 2 % error rate and to position the system as a viable alternative to existing biometric and forensic solutions.
