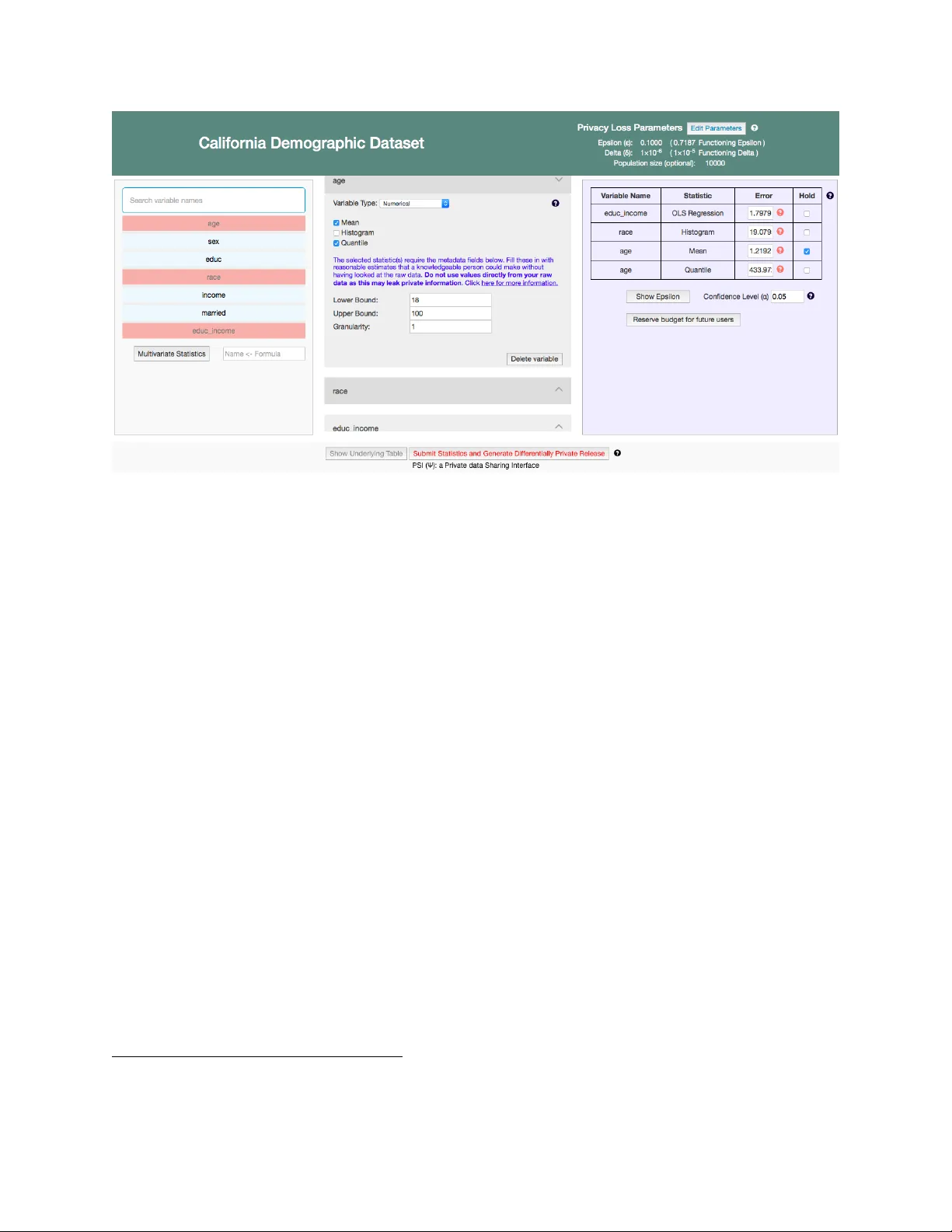

PSI ({Psi}): a Private data Sharing Interface

We provide an overview of PSI ("a Private data Sharing Interface"), a system we are developing to enable researchers in the social sciences and other fields to share and explore privacy-sensitive datasets with the strong privacy protections of differ…

Authors: Marco Gaboardi, James Honaker, Gary King