Likelihood-free inference with an improved cross-entropy estimator

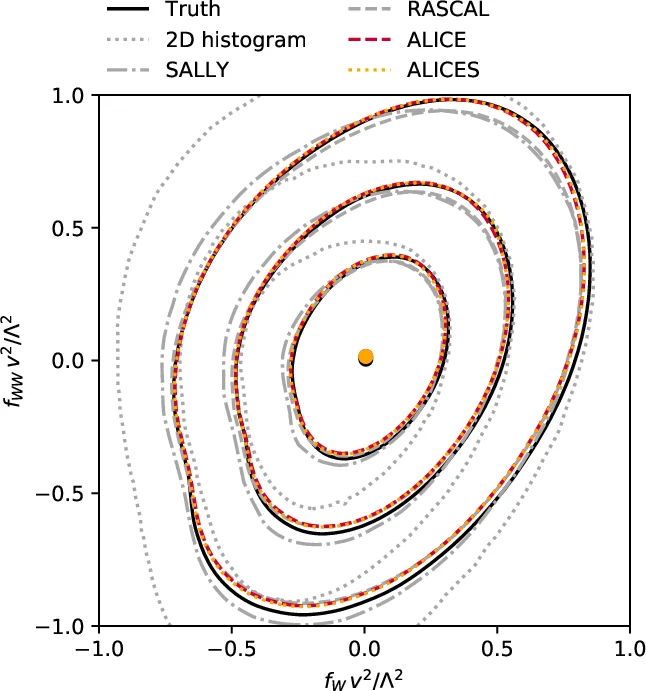

We extend recent work (Brehmer et al., 2018) that uses neural networks as surrogate models for likelihood-free inference. As in the previous work, we exploit the fact that the joint likelihood ratio and joint score, conditioned on both observed and latent variables, can often be extracted from an implicit generative model or simulator to augment the training data for these surrogate models. We show how this augmented training data can be used to construct a new cross-entropy estimator, which offers improved sample efficiency compared to previous loss functions exploiting this augmented training data.

💡 Research Summary

The paper builds on the “gold‑mining” paradigm introduced by Brehmer et al. (2018), which exploits additional information that can be extracted from a simulator: the joint likelihood ratio r(x,z|θ₀,θ₁) and the joint score t(x,z|θ₀). While the original works used mean‑squared‑error (MSE) based losses to train neural networks that approximate the intractable likelihood ratio, those losses suffer from high variance because a few samples with large ratio values dominate the loss.

The authors propose to replace the MSE loss with a cross‑entropy based loss that directly uses the exact conditional class probability

s(x,z|θ₀,θ₁)=p(y=1|x,z)=1/(1+r(x,z|θ₀,θ₁)).
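As a minimal sketch of the formula above: the relation s = 1/(1+r) implies the convention r = p(x,z|θ₀)/p(x,z|θ₁), with y=1 labelling events simulated at θ₁. Joint ratios can span many orders of magnitude, so the snippet below assumes the simulator reports log r rather than r. That assumption and the function name `soft_label_from_log_ratio` are illustrative and do not come from the paper.

```python
import numpy as np

def soft_label_from_log_ratio(log_r):
    """Return s = 1 / (1 + r) given log r, without overflowing for large |log r|."""
    log_r = np.asarray(log_r, dtype=float)
    # log(1 + r) = logaddexp(0, log r), so s = exp(-log(1 + r)).
    return np.exp(-np.logaddexp(0.0, log_r))

# r = 1 means both hypotheses are equally likely for this event; r = 3 favours theta_0.
s = soft_label_from_log_ratio(np.log([1.0, 3.0]))
```

Evaluating `1 / (1 + np.exp(log_r))` directly gives the same numbers for moderate inputs, but it triggers overflow warnings once log r exceeds roughly 700 in double precision.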

Because s is known for every simulated event (both for class 0 and class 1), each sample contributes information to both terms of the binary cross‑entropy, effectively doubling the amount of usable information per event. This leads to a lower‑variance estimator of the cross‑entropy, which they call the ALICE (Approximate Likelihood with Improved Cross‑Entropy) estimator.

Mathematically, the ALICE loss is

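L_ALICE = – (1/N) Σ_i [ s(x_i,z_i|θ₀,θ₁) log ŝ(x_i) + (1 – s(x_i,z_i|θ₀,θ₁)) log(1 – ŝ(x_i)) ],

where ŝ(x) is the network's estimate of the class probability, from which the likelihood ratio estimate follows as r̂(x) = (1 – ŝ(x))/ŝ(x).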
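The cross-entropy described above, with the exact probability s used as a soft label in place of the hard class label, can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the authors' implementation. The function name `alice_loss`, the clipping constant `eps`, and the toy Gaussian simulator (latent z ~ N(θ,1), observed x = z + unit Gaussian noise) are all hypothetical choices made so that the joint ratio is available in closed form.

```python
import numpy as np

def alice_loss(s_true, s_hat, eps=1e-12):
    """Binary cross-entropy with the exact soft label s_true as the target."""
    s_true = np.asarray(s_true, dtype=float)
    # Clip predictions away from 0 and 1 so the logarithms stay finite.
    s_hat = np.clip(np.asarray(s_hat, dtype=float), eps, 1.0 - eps)
    return -np.mean(s_true * np.log(s_hat) + (1.0 - s_true) * np.log(1.0 - s_hat))

# Toy simulator (an assumption for illustration): z ~ N(theta, 1), x = z + N(0, 1).
rng = np.random.default_rng(0)
theta0, theta1, n = 0.0, 1.0, 10_000

def simulate(theta, size):
    z = rng.normal(theta, 1.0, size=size)      # latent variable
    x = z + rng.normal(0.0, 1.0, size=size)    # observed variable
    return x, z

x0, z0 = simulate(theta0, n)                   # events generated at theta_0
x1, z1 = simulate(theta1, n)                   # events generated at theta_1
x = np.concatenate([x0, x1])
z = np.concatenate([z0, z1])

# Joint log ratio log p(x,z|theta0) - log p(x,z|theta1); the p(x|z) factor cancels.
log_r_joint = -0.5 * (z - theta0) ** 2 + 0.5 * (z - theta1) ** 2
s_joint = 1.0 / (1.0 + np.exp(log_r_joint))    # soft label for every event

# In this toy model the marginal is x ~ N(theta, 2), so the ideal s_hat(x) is known.
log_r_x = (-(x - theta0) ** 2 + (x - theta1) ** 2) / 4.0
s_exact = 1.0 / (1.0 + np.exp(log_r_x))

loss_exact = alice_loss(s_joint, s_exact)                            # ideal classifier
loss_constant = alice_loss(s_joint, np.full_like(s_joint, 0.5))      # uninformative guess
```

In this toy setting the loss evaluated at the exact marginal classifier comes out below the loss of the constant guess ŝ = 0.5, which equals log 2 for any soft labels. In practice ŝ would be a neural network trained by minimizing this loss over simulated events.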
