Focused Model-Learning and Planning for Non-Gaussian Continuous State-Action Systems

We introduce a framework for model learning and planning in stochastic domains with continuous state and action spaces and non-Gaussian transition models. It is efficient because (1) local models are estimated only when the planner requires them; (2)…

Authors: Zi Wang, Stefanie Jegelka, Leslie Pack Kaelbling

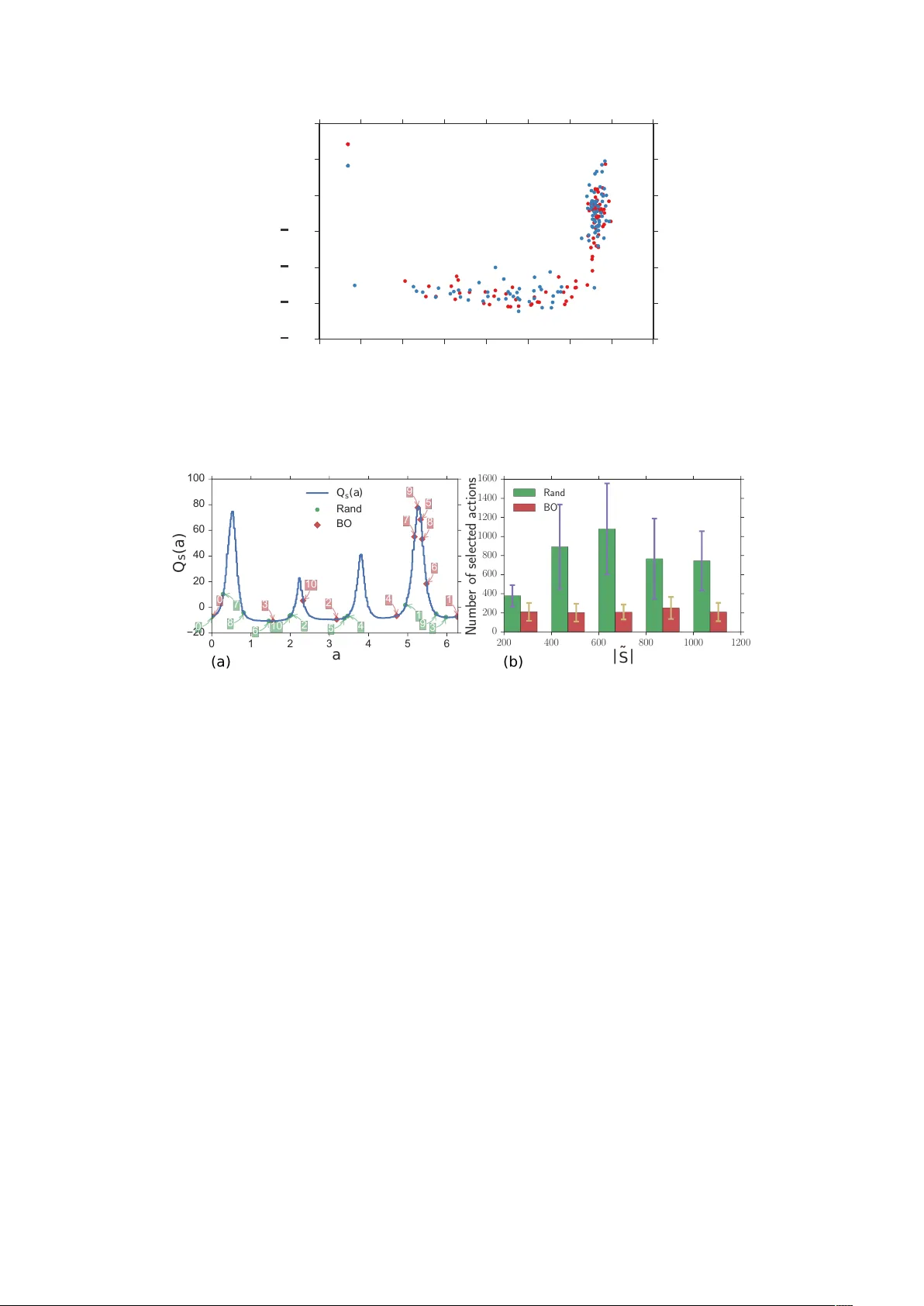

F ocused Model-Lear ning and Planning f or Non-Gaussian Continuous State-Action Systems Zi W ang Stefanie Je gelka Leslie Pack Kaelbling T om ´ as Lozano-P ´ erez ∗ Abstract W e introduce a frame work for model learning and planning in stochastic domains with continuous state and action spaces and non-Gaussian transition models. It is efficient because (1) local models are estimated only when the planner requires them; (2) the planner focuses on the most rele vant states to the current planning problem; and (3) the planner focuses on the most informati ve and/or high-v alue actions. Our theoretical analysis shows the v alidity and asymptotic optimality of the proposed approach. Empirically , we demonstrate the ef fecti veness of our algorithm on a simulated multi-modal pushing problem. 1 Intr oduction Most real-world domains are suf ficiently complex that it is dif ficult to b uild an accurate deterministic model of the effects of actions. Even with highly accurate actuators and sensors, stochasticity still widely appears in basic manipulations, especially non-prehensile ones [36]. The stochasticity may come from inaccurate ex ecution of actions as well as from lack of detailed information about the underlying w orld state. For example, rolling a die is a deterministic process that depends on the forces applied, air resistance, etc.; ho wev er , we are not able to model the situation sufficiently accurately to plan reliable actions, nor to ex ecute them repeatably if we could plan them. W e can plan using a stochastic model of the system, but in many situations, such as rolling dice or pushing a can shown in Fig. 1, the stochasticity is not modeled well by additi ve single-mode Gaussian noise, and a more sophisticated model class is necessary . In this paper , we address the problem of learning and planning for non-Gaussian stochastic systems in the practical setting of continuous state and action spaces. Our framework learns transition models that can be used for planning to achiev e different objectiv es in the same domain, as well as to be poten- tially transferred to related domains or ev en different types of robots. This strategy is in contrast to most reinforcement-learning approaches, which build the objectiv e into the structure being learned. In addition, rather than constructing a single monolithic model of the entire domain which could be dif ficult to represent, our method uses a memory-based learning scheme, and computes localized models on the fly , only when the planner requires them. T o a void constructing models that do not contrib ute to improving the policy , the planner should focus only on states relev ant to the current planning problem, and actions that can lead to high re ward. W e propose a closed-loop planning algorithm that applies to stochastic continuous state-action systems with arbitrary transition models. It is assumed that the transition models are represented by a function that may be expensi ve to ev aluate. V ia two important steps, we focus the computation on the current problem instance, defined by the starting state and goal region. T o focus on rele v ant states, we use real time dynamic programming (R TDP) [2] on a set of states strategically sampled by a rapidly-exploring random tree (RR T) [18, 13]. T o focus selection of actions from a continuous space, we dev elop a ne w batch Bayesian optimization (BO) technique that selects and tests, in parallel, action candidates that will lead most quickly to a near-optimal answer . W e sho w theoretically that the expected accumulated difference between the optimal value function of the original problem and the value of the policy we compute vanishes sub-linearly in the number of actions ∗ Computer Science and Artificial Intelligence Laboratory , Massachusetts Institute of T echnology , 77 Massachusetts A ve., Cam- bridge, MA 02139. { ziw,stefje,lpk,tlp } @csail.mit.edu 1 Figure 1: A quasi-static pushing problem: the pusher has a velocity controller with low gain, resulting in non-Gaussian transitions. W e sho w trajectories for object and pusher resulting from the same push velocity . we test, under mild assumptions. Finally we ev aluate our approach empirically on a simulated multi-modal pushing problem, and demonstrate the ef fectiv eness and efficienc y of the proposed algorithm. 2 Related W ork Learning The class of problems that we address may be viewed as reinforcement-learning (RL) problems in observable continuous state-action spaces. It is possible to address the problem through model-free RL, which estimates a v alue function or policy for a specific goal directly through experience. Though the majority of work in RL addresses domains with discrete action spaces, there has been a thread of rele vant work on value-function-based RL in continuous action spaces [12, 1, 24, 31, 23]. An alternativ e approach is to do direct search in the space of policies [6, 14]. In continuous state-action spaces, model-based RL, where a model is estimated to optimize a policy , can often be more effecti ve. Gaussian processes (GP) can help to learn the dynamics [7, 26, 22], which can then be used by GP-based dynamic programming [8, 26] to determine a continuous-v alued closed-loop policy for the whole state space. More details can be found in the excellent surve y [10]. Unfortunately , the common assumption of i.i.d Gaussian noise on the dynamics is restrictive and may not hold in practice [36], and the transition model can be multi-modal. It may additionally be difficult to obtain a good GP prior . The basic GP model is can capture neither the multi-modality nor the heteroscedas- ticity of the noise. While more adv anced GP algorithms may address these problems, they often suf fer from high computational cost [29, 37]. Moldov an et al. [21] addressed the problem of multi-modality by using Dirichlet process mixture mod- els (DPMMs) to learn the density of the transition models. Their strategies for planning were limited by deterministic assumptions, appropriate for their domains of application, b ut potentially resulting in colli- sions in ours. Kopicki et al. [16, 15, 17] addressed the problem of learning to predict the behavior of rigid objects under manipulations such as pushing, using kernel density estimation. In this paper , we propose an efficient planner that can work with arbitrary , especially multi-modal stochastic models in continuous state-action spaces. Our learning method in the experiment resembles DPMMs b ut we estimate the density on the fly when the planner queries a state-action pair . W e were not able to compare our approach with DPMMs because we found DPMMs not computationally feasible for large datasets. Planning W e are interested in domains for which queries are made by specifying a starting state and a goal set, and in which the solution to the given query can be described by a policy that covers only a small fraction of the state space that the robot is likely to encounter . Planning only in the fraction of the state-action space that the robot is lik ely to encounter is, in general, very challenging. Other related work uses tree-based search methods [33, 19, 35], where the actions are selected by optimizing an optimistic heuristic. These algorithms are impractical for our problem because of the exponential gro wth of the tree and the lack of immediate re wards that can guide the pruning of the tree. In contrast to the tree-search algorithms, iMDP [13], which is most related to our work, uses sampling techniques from RR Ts to create successi vely more accurate discrete MDP approximations of the original continuous MDP , ultimately conv erging to the optimal solution to the original problem. Their method as- 2 sumes the ability to solve the Bellman equation optimally (e.g. for a simple stochastic LQR problem), the av ailability of the backward transition models, and that the dynamics is modeled by a W iener process, in which the transition noise is Gaussian with execution-time-dependent v ariance. Ho wev er , the assumptions are too restrictiv e to model our domains of interest where the dynamics is non-closed-form, costly to e valu- ate, non-rev ersible, and non-Gaussian. Furthermore, iMDP is designed for stochastic control problems with multiple starting states and a single goal, while we are interested in multiple start-goal pairs. Our work builds on the idea of constructing a sequence of MDPs from iMDP [13], and aims at prac- tically resolving the challenges of state/action selection faced by both iMDP and tree-search-based plan- ners [33]. Bayesian optimization There ha ve been a number of applications of BO in optimal control, although to our knowledge, it has not been pre viously applied to action-selection in continuous-action MDPs. BO has been used to find weights in a neural network controller [9], to solve for the parameters of a hierar- chical MDP [3], and to address safe exploration in finite MDPs [30]. T o our knowledge, BO has not been pre viously applied to action-selection in continuous-action MDPs. 3 Pr oblem formulation Let the state space S ⊂ R d s with metric d and the control space U ⊂ R d u both be compact and measurable sets. The interior of the state space S is S o and the boundary is ∂ S . For the control space U , there e xists an open set U o in R d u such that U is the closure of U o . W e assume the state is fully observed (any remaining latent state will manifest as stochasticity in the transition models). Actions a = ( u, ∆ t ) are composed of both a control on the robot and the duration for which it will be exerted, so the action space is A = U × [ T min , T max ] , where T min , T max ∈ R + \ {∞} are the minimum and the maximum amount of duration allo wed. The action space A is also a compact set. The starting state is s 0 , and the goal re gion is denoted as G ⊂ S , in which all states are terminal states. W e assume G has non-zero measure, and S has finite measure. The transition model has the form of a continuous probability density function p s 0 | s,a on the resulting state s 0 , gi ven pre vious state s and action a , such that ∀ s 0 ∈ S, p s 0 | s,a ( s 0 | s, a ) ≥ 0 , R S p ( s 0 | s, a ) d s 0 = 1 . Gi ven a transition model and a cost function C : S × S × A → R associated with a goal re gion, we can formulate the problem as a continuous state-action MDP ( S, A, p s 0 | s,a , R, γ ) , where R ( s 0 | s, a ) = − C ( s 0 | s, a ) is the immediate rew ard function and γ is the discount factor . A high reward is assigned to the states in the goal region G , and a cost is assigned to colliding with obstacles or taking any action. W e would like to solve for the optimal polic y π : S → A , for which the value of each state s is V π ( s ) = max a ∈ A Z s 0 ∈ S p s 0 | s,a ( s 0 | s, a ) R ( s 0 | s, a ) + γ ∆ t V π ( s 0 ) d s 0 . 4 Our method: B O I D P W e describe our algorithm Bayesian Optimization Incremental-realtime Dynamic Programming ( B O I D P) in this section. At the highest lev el, B O I D P in Alg. 1 operates in a loop, in which it samples a discrete set of states ˜ S ⊂ S and attempts to solve the discrete-state, continuous-action MDP ˜ M = ( ˜ S , A, ˆ P s 0 | s,a , R, γ ) . Here ˆ P s 0 | s,a ( s 0 | s, a ) is the probability mass function for the transition from state s ∈ ˜ S and action a ∈ A to a new state s 0 ∈ ˜ S . The v alue function for the optimal policy of the approximated MDP ˜ M is V ( s ) = max a ∈ A Q s ( a ) , where Q s ( a ) = X s 0 ∈ ˜ S ˆ P s 0 | s,a ( s 0 | s, a ) R ( s 0 | s, a ) + γ ∆ t V ( s 0 ) . (1) If the value of the resulting policy is satisfactory according to the task-related stopping criterion 1 , we can proceed; otherwise, additional state samples are added and the process is repeated. Once we hav e a policy 1 For e xample, one stopping criterion could be the conv ergence of the starting state’ s value V ( s 0 ) . 3 Algorithm 1 B O I D P 1: function B O I D P ( s 0 , G , S, p s 0 | s,a , N min ) 2: ˜ S ← { s 0 } 3: loop 4: ˜ S ← S A M P L E S TA T E S ( N min , ˜ S , G , S, p s 0 | s,a ) 5: π , V = RT D P ( s 0 , G , ˜ S , p s 0 | s,a ) 6: until stopping criteria reached 7: E X E C U T E P O L I C Y ( π , ˜ S , G ) 8: function E X E C U T E P O L I C Y ( π , ˜ S , G ) 9: loop 10: s c ← current state 11: ˜ s ← arg min s ∈ ˜ S d ( s, s c ) 12: Execute π ( ˜ s ) 13: until current state is in G Algorithm 2 T ransition model for discrete states 1: function T R A N S I T I O N M O D E L ( s, a, ˜ S , p s 0 | s,a ) 2: ˆ S ← H I G H P R O B N E X T S TA T E S ( p s 0 | s,a ( ˜ S | s, a )) ∪ { s obs } s obs is a terminal state 3: ˆ P s 0 | s,a ( ˆ S | s, a ) ← p s 0 | s,a ( ˆ S | s, a ) 4: for s 0 in ˆ S do 5: if s 0 ∈ S o and E X I S T S C O L L I S I O N ( s, a, s 0 ) then 6: ˆ P s 0 | s,a ( s obs | s, a ) ← ˆ P s 0 | s,a ( s obs | s, a ) + ˆ P s 0 | s,a ( s 0 | s, a ) 7: ˆ P s 0 | s,a ( s 0 | s, a ) ← 0 8: ˆ P s 0 | s,a ( ˆ S | s, a ) ← N O R M A L I Z E ( ˆ P s 0 | s,a ( ˆ S | s, a )) 9: retur n ˆ S , ˆ P s 0 | s,a ( ˆ S | s, a ) π on ˜ S from R TDP , the robot can iterati vely obtain and ex ecute the policy for the nearest state to the current state in the sampled set ˜ S by the metric d . There are a number of challenges underlying each step of B O I D P . First, we need to find a way of accessing the transition probability density function p s 0 | s,a , which is critical for the approximation of ˆ P s 0 | s,a ( s 0 | s, a ) and the v alue function. W e describe our “lazy access” strategy in Sec. 4.1. Second, we must find a way to compute the v alues of as few states as possible to fully exploit the “lazy access” to the transition model. Our solution is to first use an RR T -like process [18, 13] to generate the set of states that asymptotically cov er the state space with lo w dispersion (Sec. 4.2), and then “prune” the irrelev ant states via R TDP [2] (Sec. 4.3). Last, each dynamic-programming update in R TDP requires a maximization ov er the action space; we cannot achieve this analytically and so must sample a finite set of possible actions. W e de velop a ne w batch BO algorithm to focus action sampling on regions of the action space that are informati ve and/or likely to be high-v alue, as described in Sec. 4.4. Both the state sampling and transition estimation processes assume a collision checker E X I S T S C O L L I S I O N ( s, a, s 0 ) that checks the path from s to s 0 induced by action a for collisions with per- manent objects in the map. 4.1 Estimating transition models in B O I D P In a typical model-based learning approach, first a monolithic model is estimated from the data and then that model is used to construct a policy . Here, ho wev er , we aim to scale to large spaces with non-Gaussian dynamics, a setting where it is very dif ficult to represent and estimate a single monolithic model. Hence, we take a dif ferent approach via “lazy access” to the model: we estimate local models on demand, as the planning process requires information about rele vant states and actions. W e assume a dataset D = { s i , a i , s 0 i } N i =0 for the system dynamics and the dataset is large enough to provide a good approximation to the probability density of the next state given any state-action pair . If a 4 stochastic simulator exists for the transition model, one may collect the dataset dynamically in response to queries from B O I D P . The “lazy access” provides a flexible interface, which can accommodate a v ariety of different density-estimation algorithms with asymptotic theoretical guarantees, such as kernel density estimators [34] and Gaussian mixture models [20]. In our experiments, we focus on learning Gaussian mixture models with the assumption that p s 0 | s,a ( s 0 | s, a ) is distributed according to a mixture of Gaussians ∀ ( s, a ) ∈ S × A . Gi ven a discrete set of states ˜ S , starting state s and action a , we compute the approximate discrete transition model ˆ P s 0 | s,a as shown in Algorithm 2. W e use the function H I G H P R O B N E X T S TA T E S to select the lar gest set of next states ˆ S ⊆ ˜ S such that ∀ s 0 ∈ ˆ S , p s 0 | s,a ( s 0 | s, a ) > . is a small threshold parameter , e.g. we can set = 10 − 5 . If p s 0 | s,a does not take obstacles into account, we have to check the path from state s to next state s 0 ∈ ˜ S induced by action a for collisions, and model their effect in the approximate discrete transition model ˆ P s 0 | s,a . T o achiev e this, we add a dummy terminal state s obs , which represents a collision, to the selected next-state set ˆ S . Then, for any s, a, s 0 transition that generates a collision, we mov e the probability mass ˆ P s 0 | s,a ( s 0 | s, a ) to the transition to the collision state ˆ P s 0 | s,a ( s obs | s, a ) . Finally , ˆ P s 0 | s,a ( ˆ S | s, a ) is normalized and returned together with the selected set ˆ S . These approximated discrete transition models can be indexed by state s and action a and cached for future use in tasks that use the same set of states ˜ S and the same obstacle map. The memory-based essence of our modeling strategy is similar to the strategy of non-parametric models such as Gaussian processes, which make predictions for ne w inputs via smoothness assumptions and similarity between the query point and training points in the data set. For the case where the dynamics model p s 0 | s,a is giv en, computing the approximated transition ˆ P s 0 | s,a could still be computationally expensi ve because of the collision checking. Our planner is designed to alle viate the high computation in ˆ P s 0 | s,a by focusing on the relev ant states and actions, as detailed in the next sections. 4.2 Sampling states Algorithm 3 describes the state sampling procedures. The input to S A M P L E S TA T E S in Alg. 3 includes the minimum number of states, N min , to sample at each iteration of B O I D P . It may be that more than N min states are sampled, because sampling must continue until at least one terminal goal state is included in the resulting set ˜ S . T o generate a discrete state set, we sample states both in the interior of S o and on its boundary ∂ S . Notice that we can always add more states by calling S A M P L E S TA T E S . T o generate one interior state sample, we randomly generate a state s rand , and find s nearest that is the nearest state to s rand in the current sampled state set ˜ S . Then we sample a set of actions from A , for each of which we sample the next state s n from the dataset D gi ven the state-action pair s neareast , a (or from p s 0 | s,a if gi ven). W e choose the action a that gives us the s n that is the closest to s rand . T o sample states on the boundary ∂ S , we assume a uniform random generator for states on ∂ S is av ailable. If not, we can use something similar to S A M P L E I N T E R I O R S TA T E S but only sample inside the obstacles uniformly in line 7 of Algorithm 3. Once we have a sample s rand in the obstacle, we try to reach s rand by moving along the path s rand → s n incrementally until a collision is reached. 4.3 F ocusing on the rele vant states via R TDP W e apply our algorithm with a known starting state s 0 and goal re gion G . Hence, it is not necessary to compute a complete policy , and so we can use R TDP [2] to compute a value function focusing on the rele vant state space and a policy that, with high probability , will reach the goal before it reaches a state for which an action has not been determined. W e assume an upper bound of the values for each state s to be h u ( s ) . One can approximate h u ( s ) via the shortest distance from each state to the goal region on the fully connected graph with vertices ˜ S . W e sho w the pseudocode in Algorithm 4. When doing the recursion ( T R I A L R E C U R S E ), we can save additional computation when maximizing Q s ( a ) . Assume that the last time arg max a Q s ( a ) was called, the result was a ∗ and the transition model tells us that ¯ S is the set of possible next states. The next time we call arg max a Q s ( a ) , if the v alues for ¯ S hav e not changed, we can just return 5 Algorithm 3 RR T states sampling for B O I D P 1: function S A M P L E S TA T E S ( N min , ˜ S , G , S, p s 0 | s,a ) 2: ˜ S o ← S A M P L E I N T E R I O R S TA T E S ( d N min / 2 e , ˜ S , G , S, p s 0 | s,a ) 3: ∂ ˜ S ← S A M P L E B O U N DA RY S TA T E S ( d N min / 2 e , ˜ S , G , S, p s 0 | s,a ) 4: retur n ˜ S o ∪ ∂ ˜ S 5: function S A M P L E I N T E R I O R S TA T E S ( N min , ˜ S , G , S, p s 0 | s,a ) 6: while | ˜ S | < N min or G ∩ ˜ S = ∅ do 7: s rand ← U N I F O R M S A M P L E ( S ) 8: s nearest ← N E A R E S T ( s rand , ˜ S ) 9: s n , a n ← R RT E X T E N D ( s nearest , s rand , p s 0 | s,a ) 10: if found s n , a n then 11: ˜ S ← ˜ S ∪ { s n } 12: retur n ˜ S 13: function R RT E X T E N D ( s nearest , s rand , p s 0 | s,a ) 14: d n = ∞ 15: while stopping criterion not reached do 16: a ← U N I F O R M S A M P L E ( A ) 17: s 0 ← S A M P L E p s 0 | s,a ( · | s nearest , a ) 18: if ( not E X I S T S C O L L I S I O N ( s, s 0 , a )) and d n > d ( s rand , s 0 ) then 19: d n ← d ( s rand , s 0 ) 20: s n , a n ← s 0 , a 21: retur n s n , a n a ∗ as the result of the optimization. This can be done easily by caching the current (optimistic) policy and transition model for each state. 4.4 F ocusing on good actions via BO R TDP in Algorithm 4 relies on a challenging optimization over a continuous and possibly high-dimensional action space. Queries to Q s ( a ) in Eq. (1) can be very expensiv e because in many cases a new model must be estimated. Hence, we need to limit the number of points queried during the optimization. There is no clear strategy for computing the gradient of Q s ( a ) , and random sampling is very sample-inefficient especially as the dimensionality of the space grows. W e will view the optimization of Q s ( a ) as a black-box function optimization problem, and use batch BO to efficiently approximate the solution and make full use of the parallel computing resources. W e first briefly re view a sequential Gaussian-process optimization method, GP-EST [32], sho wn in Al- gorithm 5. For a fixed state s , we assume Q s ( a ) is a sample from a Gaussian process with zero mean and kernel κ . At iteration t , we select action a t and observe the function value y t = Q s ( a t ) + t , where t ∼ N (0 , σ 2 ) . Gi ven the observ ations D t = { ( a τ , y τ ) } t τ =1 up to time t , we obtain the pos- terior mean and cov ariance of the Q s ( a ) function via the kernel matrix K t = [ κ ( a i , a j )] a i ,a j ∈ D t and κ t ( a ) = [ κ ( a i , a )] a i ∈ D t [25]: µ t ( a ) = κ t ( a ) T ( K t + σ 2 I ) − 1 y t κ t ( a, a 0 ) = κ ( a, a 0 ) − κ t ( a ) T ( K t + σ 2 I ) − 1 κ t ( a 0 ) . The posterior variance is gi ven by σ 2 t ( a ) = κ t ( a, a ) . W e can then use the posterior mean function µ t ( · ) and the posterior variance function σ 2 t ( · ) to select which action to test in the next iteration. W e here make use of the assumption that we hav e an upper bound h u ( s ) on the value V ( s ) . W e select the action that is most likely to hav e a value greater than or equal to h u ( s ) to be the next one to e valuate. Algorithm 5 relies on sequential tests of Q s ( a ) , but it may be much more ef fectiv e to test Q s ( a ) for multiple values of a in parallel. This requires us to choose a div erse subset of actions that are expected to be informative and/or hav e good values. 6 Algorithm 4 R TDP for B O I D P 1: function RT D P ( s 0 , G , ˜ S , p s 0 | s,a ) 2: for s in ˜ S do 3: V ( s ) = h u ( s ) Compute the value upper bound 4: while V ( · ) not con verged do 5: π , V ← T R I A L R E C U R S E ( s 0 , G , ˜ S , p s 0 | s,a ) 6: retur n π , V 7: function T R I A L R E C U R S E ( s, G , ˜ S , p s 0 | s,a ) 8: if reached cycle or s ∈ G then 9: retur n 10: π ( s ) ← arg max a Q ( s, a, ˜ S , p s 0 | s,a ) Max via BO 11: s 0 ← S A M P L E ( ˆ P s 0 | s,a ( ˜ S | s, π ( s ))) 12: T R I A L R E C U R S E ( s 0 , G , ˜ S , p s 0 | s,a ) 13: π ( s ) ← arg max a Q ( s, a, ˜ S , p s 0 | s,a ) Max via BO 14: V ( s ) ← Q ( s, π ( s ) , ˜ S , p s 0 | s,a ) 15: retur n π , V 16: function Q ( s, a, ˜ S , p s 0 | s,a ) 17: if ˆ P s 0 | s,a ( ˜ S | s, a ) has not been computed then 18: ˆ P s 0 | s,a ( ˜ S | s, a ) = 0 ˆ P s 0 | s,a is a shared matrix 19: ˆ S , ˆ P s 0 | s,a ( ˆ S | s, a ) ← T R A N S I T I O N M O D E L ( s, a, ˜ S , p s 0 | s,a ) 20: retur n R ( s, a ) + γ ∆ t P s 0 ∈ ˆ S ˆ P s 0 | s,a ( s 0 | s, a ) V ( s 0 ) W e propose a new batch Bayesian optimization method that selects a query set that has large div ersity and lo w values of the acquisition function G s,t ( a ) = h u ( s ) − µ t − 1 ( a ) σ t − 1 ( a ) . The ke y idea is to maximize a submodular objectiv e function with a cardinality constraint on B ⊂ A, | B | = M that characterize both di versity and quality: F s ( B ) = log det K B − λ X a ∈ B h u ( s ) − µ t − 1 ( a ) σ t − 1 ( a ) (2) where K B = [ κ ( a i , a j )] a i ,a j ∈ B and λ is a trade-off parameter for diversity and quality . If λ is large, F s will prefer actions with lower G s,t ( a ) , which means a better chance of having high v alues. If λ is lo w , log det K B will dominate F s and a more div erse subset B is preferred. λ can be chosen by cross-v alidation. W e optimize the heuristic function F s via greedy optimization which yield a 1 − 1 e approximation to the optimal solution. W e describe the batch GP optimization in Algorithm 6. The greedy optimization can be efficiently implemented using the following property of the determi- Algorithm 5 Optimization of Q s ( a ) via sequential GP optimization 1: D 0 ← ∅ 2: f or t = 1 → T do 3: µ t − 1 , σ t − 1 ← GP-predict( D t − 1 ) 4: a t ← arg min a ∈ A h u ( s ) − µ t − 1 ( a ) σ t − 1 ( a ) 5: y t ← Q s ( a t ) 6: D t ← D t − 1 ∪ { a t , y t } 7 Algorithm 6 Optimization of Q s ( a ) via batch GP optimization 1: D 0 ← ∅ 2: f or t = 1 → T do 3: µ t − 1 , σ t − 1 ← GP-predict( D t − 1 ) 4: B ← ∅ 5: for i = 1 → M do 6: B ← B ∪ { arg max a ∈ A F s ( B ∪ { a } ) − F s ( B ) } 7: y B ← Q s ( B ) T est Q s in parallel 8: D t ← D t − 1 ∪ { B , y B } nant: F s ( B ∪ { a } ) − F s ( B ) (3) = log det K B ∪{ a } − log det K B − h u ( s ) − µ t − 1 ( a ) σ t − 1 ( a ) (4) = log( κ a − κ T B a K − 1 B κ B a ) − h u ( s ) − µ t − 1 ( a ) σ t − 1 ( a ) (5) where κ a = κ ( a, a ) , κ B a = [ κ ( a i , a )] a i ∈ B . 5 Theor etical analysis In this section, we characterize the theoretical behavior of B O I D P . Thm. 1 establishes the error bound for the v alue function on the ˆ π ∗ -r elevant set of states [2], where ˆ π ∗ is the optimal polic y computed by B O I D P . A set B ⊆ S is called π -r elevant if all the states in B is reachable via finite actions from the starting state s 0 under the policy π . W e denote | · | B as the L ∞ norm of a function · ov er the set B . W e assume the existence of policies whose relev ant sets intersect with G . If there e xists no solution to the continuous state-action MDP M , our algorithm will not be able to generate an RR T whose vertices contain a state in the goal region G , and hence no policy will be generated. W e use the re ward setup described in Sec. 3. For the simplicity of the analysis, we set the re ward for getting to the goal large enough such that the optimal value function V ∗ ( s ) = max a ∈ A Q s ( a ) is positi ve for any state s on the path to the goal region under the optimal polic y π ∗ . W e denote the measure for the state space S to be ρ and the measure for the action space A to be ψ . Both ρ and ψ are absolutely continuous with respect to Lebesgue measure. The metric for A is g , and for S is d . W e also assume the transition density function p s 0 | s,a is not a generalized function and satisfies the property that if R F p s 0 | s,a ( s 0 | s, a ) d s 0 > 0 , then ρ ( F ) > 0 . W ithout loss of generality , we assume min ∆ t = 1 and max ∆ t = T . Under mild conditions on Q s ( a ) specified in Thm. 1, we show that with finitely many actions selected by BO, the expected accumulated error expressed by the dif ference between the optimal value function V ∗ and the v alue function ˆ V of the polic y computed by B O I D P in Alg. 1 on the ˆ π ∗ -r elevant set decreases sub-linearly in the number of actions selected for optimizing Q s ( · ) in Eq. (1). Theorem 1 (Error bound for B O I D P ) . Let D = { s i , a i , s 0 i } N i =0 be the dataset that is collected fr om the true transition pr obability p s 0 | s,a , ∀ ( s, a ) ∈ S × A . W e assume that the transition model p s 0 | s,a estimated by the density estimator asymptoticly conver ges to the true model. ∀ s ∈ S , we assume Q s ( a ) = R s 0 ∈ S p s 0 | s,a ( s 0 | s, a ) R ( s 0 | s, a ) + γ ∆ t V ∗ ( s 0 ) d s 0 is a function locally continuous at arg max a ∈ A Q s ( a ) , wher e V ∗ ( · ) = max a ∈ A Q · ( a ) is the optimal value function for the continuous state-action MDP M = ( S, A, p s 0 | s,a , R, γ ) . V ∗ ( · ) is associated with an optimal policy whose r elevant set contains at least one state in the goal r e gion G . At iteration k of RTDP in Alg. 4, we define ˆ V k to be the value function for ˜ M = ( ˜ S , A, ˆ P s 0 | s,a , R, γ ) appr oximated by B O I D P , ˆ π k to be the policy corresponding to ˆ V k , and B k to be the ˆ π k -rele vant set . W e assume that Q s,k ( a ) = X s 0 ∈ ˜ S ˆ P s 0 | s,a ( s 0 | s, a ) R ( s 0 | s, a ) + γ ∆ t ˆ V k − 1 ( s 0 ) 8 is a function sampled fr om a Gaussian pr ocess with known priors and i.i.d Gaussian noise N (0 , σ ) . If we allow Bayesian optimization for Q s,k ( a ) to sample T actions for each state and run RTDP in Alg . 4 until it con ver ges with r espect to the Cauchy’ s con ver gence criterion [5] with K < ∞ iterations, in expectation, lim | ˜ S | , | D |→∞ | ˆ V K ( · ) − V ∗ ( · ) | B K ≤ ν 1 − γ s 2 η T T log(1 + σ 2 ) , wher e η T is the maximum information gain of the selected actions [27, Theorem 5], ν = max s,t,k min a ∈ A G s,t,k ( a ) , and G s,t,k ( · ) is the acquisition function in [32, Theor em 3.1] for state s ∈ ˜ S , iteration t = 1 , 2 , · · · , T in Alg. 5 or 6, and iter ation k = 1 , 2 , · · · , K of the loop in Alg. 4. Pr oof. T o pro ve Thm. 1, we first sho w the following f acts: (1) The state sampling procedure in Alg. 3 stops in finite steps; (2) the difference between the value function computed by B O I D P and the optimal v alue function computed via asynchronous dynamic programming with an exact optimizer is bounded in expectation; (3) the optimal value function of the approximated MDP ˜ M asymptotically conv erges to that of the original problem defined by the MDP M . Claim 1.1: The expected number of iterations for the set of sampled states ˜ S computed by Alg. 3 to contain one state in G is finite. Proof of Claim 1.1: Let S g ood be the set of states with non-zero probability to reach the goal region via finite actions. Clearly , G ⊂ S g ood . Because there exists a state in the goal region that is reachable with finite steps follo wing the policy π ∗ starting from s 0 , we hav e s 0 ∈ S g ood . Hence ˜ S ∩ S g ood is non-empty in any iteration of S A M P L E I N T E R I O R S TA T E S of Alg. 3. W e can show that if the nearest state selected in Line 7 of Alg. 3 is in S g ood , there is non-zero probability to e xtend another state in S g ood with the RR T procedure. T o prov e this, we first show for ev ery state s ∈ S g ood ∩ ˜ S there exists a set of actions with non-zero measure, in which each action a satisfies R S good p s 0 | s,a ( s 0 | s, a ) d s 0 > 0 . For ev ery state s ∈ S g ood ∩ ˜ S , because Q s ( a ) is locally continuous at a = π ∗ ( s ) , for any positiv e real number ζ , there exists a positi ve real number δ such that ∀ a ∈ { a : g ( a, π ∗ ( s )) < δ, a ∈ A } , we hav e | Q s ( a ) − Q s ( π ∗ ( s )) | < ζ . (6) Notice that by the design of the re ward function R , we hav e Q s ( a ) = Z S good ∪ ( S \ S good ) p s 0 | s,a ( s 0 | s, a ) R ( s 0 | s, a ) + γ ∆ t V ∗ ( s 0 ) d s 0 (7) ≤ Z S good p s 0 | s,a ( s 0 | s, a ) R ( s 0 | s, a ) + γ ∆ t V ∗ ( s 0 ) d s 0 + C a 1 − γ T Z S \ S good p s 0 | s,a ( s 0 | s, a ) d s 0 (8) where − C a > 0 is the smallest cost for either executing one action or colliding with obstacles. The inequality is because ∀ s 0 ∈ S \ S g ood , R ( s 0 | s, a ) + γ ∆ t V ∗ ( s 0 ) ≤ C a 1 − γ T < 0 . (9) Because π ∗ ( s ) = arg max a ∈ A Q s ( a ) and the rew ard for the goal region is set lar ge enough so that Q s ( π ∗ ( s )) > 0 , there exists r > 0 such that ∀ q ∈ R satisfying q > Q s ( π ∗ ( s )) − r , we hav e q > 0 . Let the arbitrary choice of ζ in Eq. (6) be ζ = r . Because Q s ( π ∗ ( s )) − ζ = Q s ( π ∗ ( s )) − r < Q s ( a ) , we hav e Q s ( a ) > 0 , ∀ a ∈ { a : g ( a, π ∗ ( s )) < δ, a ∈ A } , and Z S good p s 0 | s,a ( s 0 | s, a ) R ( s 0 | s, a ) + γ ∆ t V ∗ ( s 0 ) d s 0 > − C a 1 − γ T Z S \ S good p s 0 | s,a ( s 0 | s, a ) d s 0 > 0 9 Hence R s 0 ∈ S good p s 0 | s,a ( s 0 | s, a ) d s 0 > 0 must hold for any action a ∈ { a : g ( a, π ∗ ( s )) < δ, a ∈ A } . Recall that one dimension of a = ( u, ∆ t ) is the duration ∆ t of the control u . Because the dynamics of the physics world is continuous, for any a = ( u, ∆ t 0 ) ∈ A such that g (( u, ∆ t ) , π ∗ ( s )) < δ and 1 ≤ ∆ t 0 ≤ ∆ t , we hav e R S good p s 0 | s,a ( s 0 | s, a ) d s 0 > 0 . Let A s = { ( u, ∆ t 0 ) : g (( u, ∆ t ) , π ∗ ( s )) < δ, 1 ≤ ∆ t 0 ≤ ∆ t } ∩ A . What remains to be shown is ψ ( A s ) > 0 . Because there exists an open set A o such that A is the closure of A o , π ∗ ( s ) is either in A o or a limit point of A o . If π ∗ ( s ) is in the open set A o , there exist 0 < δ 0 ≤ δ such that { a : g ( a, π ∗ ( s )) < δ 0 } ⊂ A , and so we also hav e ψ ( A s ) > 0 . If π ∗ ( s ) is a limit point of the open set A o , there exist a 0 ∈ A o such that g ( a 0 , π ∗ ( s )) < δ / 2 and a 0 6 = π ∗ ( s ) . Because a 0 ∈ A o , there exist 0 < δ 0 ≤ δ / 2 such that { a : g ( a 0 , a ) < δ 0 } ⊂ A . F or any a ∈ { a : g ( a 0 , a ) < δ 0 } , we ha ve g ( a, π ∗ ( s )) ≤ g ( a, a 0 ) + g ( a 0 , π ∗ ( s )) < δ 0 + δ / 2 ≤ δ . Hence { a : g ( a 0 , a ) < δ 0 } ⊂ A s , and so ψ ( A s ) > 0 . Thus for any π ∗ ( s ) ∈ A , we hav e ψ ( A s ) > 0 . So, for every state s ∈ S g ood ∩ ˜ S and action a ∈ A s with ψ ( A s ) > 0 , R S good p s 0 | s,a ( s 0 | s, a ) d s 0 > 0 . As a corollary , ρ ( { s 0 : p ( s 0 | s, a ) > 0 } ∩ S g ood ) > 0 holds ∀ s ∈ S g ood ∩ ˜ S , a ∈ A s . No w we can show that there is non-zero probability to extend a state on the near-optimal path in S g ood with the RR T procedure in one iteration of S A M P L E I N T E R I O R S TA T E S in Alg. 3. Let θ = min s ∈ ˜ S ∩ S good ,a ∈ A s ρ ( { s 0 : p ( s 0 | s, a ) > 0 } ∩ S g ood ) > 0 . With the finite ˜ S for some iteration, we can construct a V oronoi diagram based on the v ertices from the current set of sampled states ˜ S . ∀ s ∈ S g ood ∩ ˜ S , there exists a V oronoi region V or( s ) associated with state s . W e can partition this V oronoi region V or( s ) to one part, V or( A s ) ⊂ V or( s ) , containing states in S g ood generated by actions in A s and its complement, V or( A \ A s ) = V or( s ) \ V or( A s ) . Notice that A s includes actions with the minimum duration, and the unit for the minimum duration can be set small enough so that ρ ( { s 0 : p ( s 0 | s, a ) > 0 , a ∈ A s , s 0 ∈ S g ood } ∩ V or( s )) > 0 2 . Since { s 0 : p ( s 0 | s, a ) > 0 , a ∈ A s } ∩ S g ood ∩ V or( s ) ⊂ V or( A s ) , we ha ve ρ (V or( A s )) > 0 , ∀ s ∈ ˜ S . W e denote p s = min s ρ (V or( A s )) ρ ( S ) > 0 and p a = min s ψ ( A s ) ψ ( A ) > 0 . W ith proba- bility at least p s , there is a random state sampled in V or( A s ) in this iteration. W ith probability at least p a , at least an action in A s is selected to test distance, and with probability at least θ , a state in S g ood can be sampled from the transition model conditioned on the state s and the selected action in A s . Next we sho w that S A M P L E I N T E R I O R S TA T E S in Alg. 3 constructs an RR T whose finite set of sampled states ˜ S contains at least one goal state in expectation. By assumption, the goal state is reachable with finite actions. For an y s ∈ S g ood ∩ ˜ S , the goal region is reachable from s in finite steps. Notice that once a new state in { s 0 : p ( s 0 | s, a ) > 0 , a ∈ A s } ∩ S g ood is sampled, s 0 uses one less step than s to reach the goal region. Let K be the largest finite number of actions necessary to reach the goal region G from the initial state s 0 . Hence, with at most a finite number of K θp s p a iterations in expectation (including both loops for sampling actions and loops for sampling states), at least a goal state will be added to ˜ S . Q.E.D. Claim 1.2: Let ˜ V ∗ be the optimal value function computed via asynchronous dynamic programming with an exact optimizer . If Alg. 4 con ver ges with K < ∞ iterations, | ˆ V K ( · ) − ˜ V ∗ ( · ) | B K ≤ ν 1 − γ s 2 η T T log(1 + σ 2 ) . Proof of Claim 1.2: The R TDP process of B O I D P in Alg. 4 searches for the relev ant set B K of B O I D P ’ s policy ˆ π via recursion on stochastic paths (trials). If B O I D P con ver ges, all states in B ˆ π should hav e been visited and their values ˆ V ( s ) , ∀ s ∈ B K hav e con ver ged. Compared to asynchronous dynamic programming (ADP) with an exact optimizer , our R TDP process introduces small errors at each trial, but e ventually the difference between the optimal value function computed by ADP and the value function computed by B O I D P is bounded. 2 This is because V or( s ) is a neighborhood of s , and there exists an action a ∈ A s such that a next state s 0 ∈ S good is in the interior of V or( s ) giv en the current state s . So there exists a small ball in S with s 0 as the center such that this ball is a subset of both V or( s ) and { s 0 : p ( s 0 | s, a ) > 0 , a ∈ A s , s 0 ∈ S good } (by the continuity of p s 0 | s,a ). 10 In the following, the order of states to be updated in ADP is set to follow R TDP . This order does not matter for the con ver gence of ADP as an y state in B ˆ π will ev entually be visited infinitely often if K → ∞ [28]. W e denote the value for the i -th state updated at iteration k of R TDP to be ˆ V ki , the corresponding v alue function updated by R TDP with an exact optimizer only for this update to be ˆ V ∗ ki , and the difference between them to be ki = | ˆ V ∗ ki − ˆ V ki | . According to [32, Theorem 3.1], in expectation, for any i -th state s ki to be updated at iteration k , the follo wing inequality holds: ki ≤ ν ki s 2 η T T log(1 + σ 2 ) , where ν ki = max t ∈ [1 ,T ] min a ∈ A G s i ,t,k ( a ) , and G s i ,t, · ( a ) = h u ( s i ) − µ t − 1 ( a ) σ t − 1 ( a ) is the acquisition function in [32, Theorem 3.1], which makes use of the assumed upper bound h u ( · ) on the value function. Let the sequence of states to be updated at iteration k be s k 1 , s k 2 , · · · , s kn k and ν = max k ∈ [1 , K ] ,i ∈ [1 ,n k ] ν ki . For any iteration k and state s ki , we hav e ki ≤ ν s 2 η T T log(1 + σ 2 ) = . So our optimization introduces error of at most to the optimization of the Bellman equation at any iteration. Furthermore, we can bound the dif ference between the v alue for the i -th state updated at iteration k of R TDP ( ˆ V ki ) and the corresponding v alue function updated by ADP ( ˜ V ∗ ki ). More specifically , the following inequalities hold for any K = 1 , 2 , · · · , ∞ : | ˆ V 11 − ˜ V ∗ 11 | ≤ , | ˆ V 12 − ˜ V ∗ 12 | ≤ + γ , · · · , | ˆ V 1 n 1 − ˜ V ∗ 1 n 1 | ≤ n 1 X i =1 γ i − 1 , · · · , · · · , | ˆ V K 1 − ˜ V ∗ K 1 | ≤ + γ n 1 + ··· + n K− 1 X i =1 γ i − 1 , | ˆ V K 2 − ˜ V ∗ K 2 | ≤ + γ + γ 2 n 1 + ··· + n K− 1 X i =1 γ i − 1 , · · · , | ˆ V K n K − ˜ V ∗ K n K | ≤ n 1 + ··· + n K X i =1 γ i − 1 < 1 − γ = ν 1 − γ s 2 η T T log(1 + σ 2 ) . Notice that ˆ V ki con ver ges because it monotonically decreases for any state index i and it is lower bounded by C 1 − γ T where C < 0 is the highest cost, e.g. colliding with obstacles. Since we use Cauchy’ s 11 con ver gence test [5], we can set the threshold for the con ver gence test to be negligible. Hence for some K < ∞ , both ˆ V k and ˜ V k con ver ge according to Cauchy’ s con ver gence test, and we have | ˆ V K ( · ) − ˜ V ∗ ( · ) | B K ≤ ν 1 − γ s 2 η T T log(1 + σ 2 ) . Q.E.D. Claim 1.3: ˜ V ∗ , the optimal value function of the approximated MDP ˜ M , asymptotically con verges to V ∗ , the optimal v alue function of the original problem defined by the MDP M . Proof of Claim 1.3: W e consider the asymptotic case where the size of the dataset | D | → ∞ and the number of states sampled | ˜ S | → ∞ . Notice that these tw o limit does not contradict Claim 1.1 because B O I D P operates in a loop and we can iterativ ely sample more states by calling S A M P L E S TA T E S in Alg. 1. When | D | → ∞ , p s 0 | s,a con ver ges to the true transition model. Because the states are sampled uniformly randomly from the state space S in Line 7 of Alg. 3, when | ˜ S | → ∞ , the set ˜ S can be vie wed as uniform random samples from the reachable state space 3 . So the v alue function for the optimal policy of ˜ M asymptotically con ver ges to that of M : lim | ˜ S | , | D |→∞ | ˜ V ∗ − V ∗ | ∞ = 0 . Q.E.D. Thm. 1 directly follo ws Claim 1.1, 1.2, and 1.3. By the triangle inequality of L ∞ , we hav e lim | ˜ S | , | D |→∞ | ˆ V K − V ∗ | B K ≤ lim | ˜ S | , | D |→∞ | ˆ V K − ˜ V ∗ | B K + lim | ˜ S | , | D |→∞ | ˜ V ∗ − V ∗ | B K ≤ ν 1 − γ s 2 η T T log(1 + σ 2 ) holds in expectation. 6 Implementation and Experiments W e tested our approach in a quasi-static problem, in which a robot pushes a circular object through a planar workspace with obstacles in simulation 4 . W e represent the action by the robot’ s initial relativ e position x to the object (its distance to the object center is fixed), the direction of the push z , and the duration of the push ∆ t , which are illustrated in Fig. 2. The companion video shows the behavior of this robot, controlled by a policy deri ved by B O I D P from a set of training examples. In this problem, the basic underlying dynamics in free space with no obstacles are location in variant; that is, that the change in state ∆ s resulting from taking action a = ( u, ∆ t ) is independent of the state s in which a was executed. W e are given a training dataset D = { ∆ s i , a i } N i =0 , where a i is an action and ∆ s i is the resulting state change, collected in the free space in a simulator . Giv en a ne w query for action a , we predict the distrib ution of ∆ s by looking at the subset D 0 = { ∆ s j , a j } M j =0 ⊆ D whose actions a j are the most similar to a (in our experiments we use 1-norm distance to measure similarity), and fit a Gaussian mixture model on ∆ s j using the EM algorithm, yielding an estimated continuous state-action transition model p s 0 | s,a ( s + ∆ s | s, a ) = p ∆ s | a (∆ s | a ) . W e use the Bayesian information criterion (BIC) to determine the number of mixture components. 3 Alg. 3 does not necessarily lead to uniform samples of states in the state space. Howe ver, as the number of states sampled approaches infinity , | ˜ S | → ∞ , we can construct a set of finite and arbitrarily small open balls that cover the (reachable) state space such that there exists at least one sampled state in an y of those balls. Such a cover exists because the state space is compact. If the samples are not uniform, we can simply adopt a uniform sampler on top of Alg. 3, and throw away a fixed proportion of states so that the remaining set of states are uniform samples from the state space S . 4 All experiments were run with Python 2.7.6 on Intel(R) Xeon(R) CPU E5-2680 v3 @ 2.50GHz with 64GB memory . 12 Figure 2: Pushing a circular object with a rectangle pusher . − 20 − 15 − 10 − 5 0 5 10 15 20 − 20 − 15 − 10 − 5 0 5 10 15 20 s[1] K=1 K=2 1500 2000 2500 3000 3500 4000 4500 5000 5500 | ˜ S | 0 500 1000 1500 2000 2500 3000 Number of visitaed states in RTDP K=1 K=2 (a) (b) s[0] Figure 3: (a) Samples from the single-mode Gaussian transition model ( K = 1 ) and the two-component Gaussian mixture transition model ( K = 2 ) in the free space when a = 0 . (b) The number of visited states (y-axis) increases with the number of sampled states | ˜ S | (x-axis). Planning with K = 2 visits fewer states in R TDP than with K = 1 . 6.1 Importance of learning accurate models Our method was designed to be appropriate for use in systems whose dynamics are not well modeled with uni-modal Gaussian noise. The experiments in this section explore the question of whether a uni-modal model could work just as well, using a simple domain with kno wn dynamics s 0 = s + T ( a ) ρ , where the relati ve position x = 0 and duration ∆ t = 1 are fixed, the action is the direction of motion, a = z ∈ [0 , 2 π ) , T ( a ) is the rotation matrix for angle, and the noise is ρ ∼ 0 . 6 N ( 5 . 0 5 . 0 , 2 . 0 0 . 0 0 . 0 2 . 0 ) + 0 . 4 N ( 5 . 0 − 5 . 0 , 2 . 0 0 . 0 0 . 0 2 . 0 ) . W e sample ρ from its true distrib ution and fit a Gaussian ( K = 1 ) and a mixture of Gaussians ( K = 2 ). The samples from K = 1 and K = 2 are shown in Fig. 3 (a). W e plan with both models where each action has an instantaneous reward of − 1 , hitting an obstacle has a reward of − 10 , and the goal region has a re ward of 100 . The discount factor γ = 0 . 99 . T o show that the results are consistent, we use Algorithm 3 to sample 1500 to 5000 states to construct ˜ S , and plan with each of them using 100 uniformly discretized actions within 1000 iterations of R TDP . T o compute the Monte Carlo re ward, we simulated 500 trajectories for each computed policy with the true model dynamics, and for each simulation, at most 500 steps are allo wed. W e show 10 samples of trajectories for both K = 1 and K = 2 with | ˜ S | = 5000 , in Fig 4. Planning with the right model K = 2 tends to find better trajectories, while because K = 1 puts density on many states that the true model does not reach, the policy of K = 1 in Fig 4 (a) causes the robot to do extra maneuvers or ev en choose a longer trajectory to av oid obstacles that it actually has very low probability of hitting. As a result, the reward and success rate for K = 2 are both higher than K = 1 , as shown in Fig. 5. Furthermore, because the single- mode Gaussian estimates the noise to have a large v ariance, it causes R TDP to visit many more states than necessary , as shown in Fig. 3 (b). 13 − 40 − 20 0 20 40 − 40 − 20 0 20 40 Start Obstacle Obstacle Goal − 40 − 20 0 20 40 − 40 − 20 0 20 40 Start Obstacle Obstacle Goal (a) (b) Figure 4: (a) Samples of 10 trajectories with K = 1 . (b) Samples of 10 trajectories with K = 2 . Using the correct number of components for the transition model improv es the quality of the trajectories. 1500 2000 2500 3000 3500 4000 4500 5000 − 150 − 100 − 50 0 50 100 R eward K=1 K=2 1500 2000 2500 3000 3500 4000 4500 5000 | ˜ S | 0 . 990 0 . 995 1 . 000 1 . 005 Success Rate K=1 K=2 | ˜ S | (a) (b) Figure 5: (a): Rew ard. (b): Success rate. Using two components ( K = 2 ) performs much better than using one component ( K = 1 ) in terms of re ward and success rate. 6.2 F ocusing on the good actions and states In this section we demonstrate the effecti veness of our strategies for limiting the number of states visited and actions modeled. W e denote using Bayesian optimization in Lines 10 and 13 in Algorithm 4 as BO and using random selections as Rand. W e first demonstrate why BO is better than random for optimizing Q s ( a ) with the simple example from Sec. 6.1. W e plot the Q s ( a ) in the first iteration of R TDP where s = [ − 4 . 3 , 33 . 8] , and let random and BO in Algorithm 5 each pick 10 actions to ev aluate sequentially as sho wn in Fig 7 (a). W e use the GP implementation and the def ault Matern52 kernel implemented in the GPy module [11] and optimize its kernel parameters ev ery 5 selections. The first point for both BO and Rand is fix ed to be a = 0 . 0 . W e observe that BO is able to focus its action selections in the high-value region, and BO is also able to explore informati ve actions if it has not found a good value or if it has finished exploiting a good region (see selection 10). Random action selection wastes choices on regions that have already been determined to be bad. Next we consider a more complicated problem in which the action is the high le vel control of a pushing problem a = ( z , x, ∆ t ) , z ∈ [0 , 2 π ] , x ∈ [ − 1 . 0 , 1 . 0] , ∆ t ∈ [0 . 0 , 3 . 0] as illustrated in Fig. 2. The instan- taneous reward is − 1 for each free-space motion, − 10 for hitting an obstacle, and 100 for reaching the goal; γ = 0 . 99 . W e collected 1 . 2 × 10 6 data points of the form ( a, ∆ s ) with x and ∆ t as variables in the Box2D simulator [4] where noise comes from variability of the executed action. W e make use of the fact that the object is cylindrical (with radius 1.0) to reuse data. An example of the distribution of ∆ s giv en a = (0 . 0 , 0 . 3 , 2 . 0) is shown in Fig. 6. W e compare policies found by Rand and BO with the same set of sampled states ( | ˜ S | = 200 , 400 , 600 , 800 , 1000 ) within approximately the same amount of total computation time. They are both 14 2 4 6 8 10 12 14 16 18 s[0] 2.0 1.5 1.0 0.5 0.0 0.5 1.0 S[1] Samples from data Samples from model Figure 6: The conditional distribution of ∆ s giv en a = ( z , x, ∆ t ) = (0 . 0 , 0 . 3 , 2 . 0) is a multi-modal Gaussian. Number of selec ted ac ti ons 200 400 600 800 1000 1200 | ˜ S | 0 200 400 600 800 1000 1200 1400 1600 Ra nd BO 0 1 2 3 4 5 6 a 20 0 20 40 60 80 100 Q s (a ) 0 1 2 3 4 5 6 7 8 9 10 0 1 2 3 4 5 6 7 8 9 10 Q s (a ) Rand BO (b) (a ) Figure 7: (a) W e optimize Q s ( a ) with BO and Rand by sequentially sampling 10 actions. BO selects actions more strategically than Rand. (b) BO samples fewer actions than Rand in the pushing problem for all settings of | ˜ S | . able to compute the policy in 30 ∼ 120 seconds, as sho wn in Fig. 8 (b). In more realistic domains, it is pos- sible that learning the transition model will take longer and dominate the action-selection computation. W e simulate 100 trajectories in the Box2D simulator for each planned policy with a maximum of 200 seconds. W e show the result of the rew ard and success rate in Fig. 9, and the av erage number of actions selected for visited states in Fig. 7(b). In our simulations, BO consistently performs approximately the same or better than Rand in terms of reward and success rate while BO selects fewer actions than Rand. W e sho w 10 simulated trajectories for Rand and BO with | ˜ S | = 1000 in Fig. 10. From Fig. 8 (a), it is not hard to see that R TDP successfully controlled the number of visited states to be only a small fraction of the whole sampled set of states. Interestingly , BO was able to visit slightly more states with R TDP and as a result, e xplored more possible states that it is lik ely to encounter during the ex ecution of the policy , which may be a factor that contributed to its better performance in terms of rew ard and success rate in Fig. 9. W e did not compare with pure value iteration because the high computational cost of computing models for all the states made it infeasible. B O I D P is able to compute models for only around 10% of the sampled states and about 200 actions per state. If we consider a naiv e grid discretization for both action (3 dimension) and state (2 dimension) with 100 cells for each dimension, the number of models we w ould ha ve to compute is on the order of 10 10 , compared to our approach, which requires only 10 4 . 15 200 400 600 800 1000 1200 0 20 40 60 80 100 120 Learning and planning Time (s) Rand BO (a) (b) 200 400 600 800 1000 1200 | ˜ S | 0 20 40 60 80 100 120 140 Number of visited states in RTDP Rand BO | ˜ S | Figure 8: (a) Number of visited states in R TDP . Both of Rand and BO consistently focus on about 10% states for planning. (b) Learning and planning time of BO and Rand. 200 300 400 500 600 700 800 900 1000 | ˜ S | 200 150 100 50 0 50 Reward Rand BO (a) 200 300 400 500 600 700 800 900 1000 | ˜ S | 0 . 70 0 . 75 0 . 80 0 . 85 0 . 90 0 . 95 1 . 00 Success Rate Rand BO (b) Figure 9: (a) Re ward. (b) Success rate. BO achie ves better reward and success rate, with many fe wer actions and slightly more visited states. 7 Conclusion An important class of robotics problems are intrinsically continuous in both state and action space, and may demonstrate non-Gaussian stochasticity in their dynamics. W e have provided a frame work to plan and learn ef fectiv ely for these problems. W e achiev e efficienc y by focusing on relev ant subsets of state and action spaces, while retaining guarantees of asymptotic optimality . Refer ences [1] Leemon C. Baird, III and A. Harry Klopf. Reinforcement learning with high-dimensional continuous actions. T echnical report, Wright Laboratory , Wright Patterson Air F orce Base, 1993. [2] Andrew G Barto, Stev en J Bradtke, and Satinder P Singh. Learning to act using real-time dynamic programming. Artificial Intelligence , 72(1):81–138, 1995. [3] Eric Brochu, Vlad M Cora, and Nando De Freitas. A tutorial on Bayesian optimization of e xpen- si ve cost functions, with application to activ e user modeling and hierarchical reinforcement learning. T echnical Report TR-2009-023, University of British Columbia, 2009. [4] Erin Catto. Box2D, a 2D physics engine for games. http://box2d.org , 2011. [5] Augustin Louis Cauchy . Cours d’analyse de l’Ecole Royale P olytechnique , volume 1. Imprimerie royale, 1821. [6] Marc Deisenroth and Carl E Rasmussen. PILCO: A model-based and data-ef ficient approach to polic y search. In ICML , 2011. 16 − 20 − 10 0 10 20 − 20 − 10 0 10 20 Start Obstacle Goal − 20 − 10 0 10 20 − 20 − 10 0 10 20 Start Obstacle Goal (a) (b) Figure 10: (a) 10 samples of trajectories generated via Rand with 1000 states. (b) 10 samples of trajectories generated via BO with 1000 states. [7] Marc Peter Deisenroth, Carl Edward Rasmussen, and Jan Peters. Model-based reinforcement learning with continuous states and actions. In ESANN , 2008. [8] Marc Peter Deisenroth, Carl Edward Rasmussen, and Jan Peters. Gaussian process dynamic program- ming. Neur ocomputing , 72(7):1508–1524, 2009. [9] Marcus Frean and Phillip Boyle. Using Gaussian processes to optimize expensi ve functions. In Austr alasian Joint Confer ence on Artificial Intelligence , 2008. [10] Mohammad Ghav amzadeh, Shie Mannor , Joelle Pineau, A viv T amar , et al. Bayesian reinforcement learning: A survey . F oundations and T r ends in Machine Learning , 8(5–6):359–483, 2015. [11] GPy. GPy: A Gaussian process framew ork in python. http://github.com/SheffieldML/ GPy , since 2012. [12] V . Gullapalli, J. A. Franklin, and H. Benbrahim. Acquiring robot skills via reinforcement learning. IEEE Contr ol Systems , 14(1):13–24, 1994. [13] V u Anh Huynh, Sertac Karaman, and Emilio Frazzoli. An incremental sampling-based algorithm for stochastic optimal control. IJRR , 35(4):305–333, 2016. [14] Hunor S Jakab and Lehel Csat ´ o. Reinforcement learning with guided policy search using Gaussian processes. In IJCNN , 2012. [15] Marek Kopicki. Pr ediction learning in r obotic manipulation . PhD thesis, Univ ersity of Birmingham, 2010. [16] Marek Kopi cki, Jeremy W yatt, and Rustam Stolkin. Prediction learning in robotic pushing manipula- tion. In ICAR , 2009. [17] Marek K opicki, Sebastian Zurek, Rustam Stolkin, Thomas M ¨ orwald, and Jeremy W yatt. Learning to predict ho w rigid objects behave under simple manipulation. In ICRA , 2011. [18] Stev en M LaV alle. Rapidly-exploring random trees: A new tool for path planning. T echnical Report TR 98-11, Computer Science Dept., Io wa State Univ ersity , 1998. [19] Christopher R Mansley , Ari W einstein, and Michael L Littman. Sample-based planning for continuous action marko v decision processes. In ICAPS , 2011. [20] Ankur Moitra and Gregory V aliant. Settling the polynomial learnability of mixtures of gaussians. In FOCS , 2010. 17 [21] T eodor Mihai Moldovan, Sergey Levine, Michael I Jordan, and Pieter Abbeel. Optimism-dri ven exploration for nonlinear systems. In ICRA , 2015. [22] Duy Nguyen-T uong and Jan Peters. Model learning for robot control: A survey . Cognitive pr ocessing , 12(4):319–340, 2011. [23] Barry D. Nichols. Continuous action-space reinforcement learning methods applied to the minimum- time swing-up of the acrobot. In ICSMC , 2015. [24] D. V . Prokhorov and D. C. W unsch. Adapti ve critic designs. IEEE T rans. Neural Networks , 8(5):997– 1007, 1997. [25] Carl Edward Rasmussen and Christopher KI W illiams. Gaussian processes for machine learning. The MIT Pr ess , 2006. [26] Axel Rottmann and W olfram Bur gard. Adapti ve autonomous control using online v alue iteration with Gaussian processes. In ICRA , 2009. [27] Niranjan Sriniv as, Andreas Krause, Sham M Kakade, and Matthias Seeger . Gaussian process opti- mization in the bandit setting: No regret and experimental design. In ICML , 2010. [28] Richard S Sutton and Andrew G Barto. Reinfor cement learning: An intr oduction , v olume 1. MIT press Cambridge, 1998. [29] Michalis K Titsias and Miguel L ´ azaro-Gredilla. V ariational heteroscedastic Gaussian process regres- sion. In ICML , 2011. [30] Matteo T urchetta, Felix Berkenkamp, and Andreas Krause. Safe e xploration in finite Markov decision processes with Gaussian processes. In NIPS , 2016. [31] Hado v an Hasselt and Marco A. W iering. Reinforcement learning in continuous action spaces. In ICAR , 2007. [32] Zi W ang, Bolei Zhou, and Stefanie Jegelka. Optimization as estimation with Gaussian processes in bandit settings. In AIST A TS , 2016. [33] Ari W einstein and Michael L Littman. Bandit-based planning and learning in continuous-action Marko v decision processes. In ICAPS , 2012. [34] Dominik W ied and Rafael W eißbach. Consistency of the kernel density estimator: a survey . Statistical P apers , 53(1):1–21, 2012. [35] Timoth y Y ee, V iliam Lisy , and Michael Bo wling. Monte carlo tree search in continuous action spaces with ex ecution uncertainty . In IJCAI , 2016. [36] Kuan-T ing Y u, Maria Bauza, Nima Fazeli, and Alberto Rodriguez. More than a million ways to be pushed: A high-fidelity experimental data set of planar pushing. In IR OS , 2016. [37] Chao Y uan and Claus Neubauer . V ariational mixture of Gaussian process e xperts. In NIPS , 2009. 18

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment