Approximate Probabilistic Neural Networks with Gated Threshold Logic

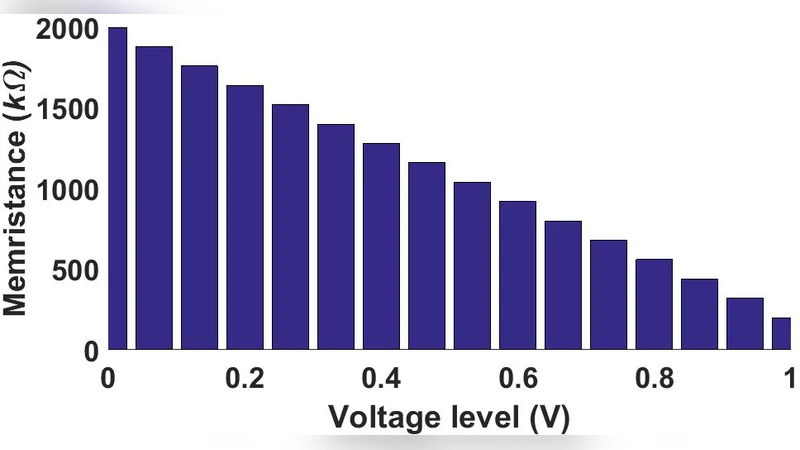

A Probabilistic Neural Network (PNN) is a feed-forward artificial neural network developed for solving classification problems. This paper proposes a hardware implementation of an approximate PNN (APNN) algorithm in which the conventional exponential function of the PNN is replaced with gated threshold logic. The weights of the PNN are approximated using a memristive crossbar architecture. In particular, the proposed algorithm normalizes the training weights and quantizes them into 16 levels, which significantly reduces the complexity of the circuit.
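As a rough illustration of the normalization and 16-level quantization step, the sketch below assumes min-max normalization over the whole weight matrix and evenly spaced levels. The paper's exact normalization scheme may differ, and `quantize_weights` is a hypothetical helper, not the authors' code.

```python
import numpy as np

def quantize_weights(w, levels=16):
    """Min-max normalize weights to [0, 1], then snap each value to one of
    `levels` evenly spaced values (0, 1/(levels-1), ..., 1).

    Assumption: normalization is applied over the whole array; the paper may
    normalize per feature or per training sample instead.
    """
    w = np.asarray(w, dtype=float)
    w_min, w_max = w.min(), w.max()
    if w_max == w_min:                      # constant input: nothing to spread out
        return np.zeros_like(w)
    w_norm = (w - w_min) / (w_max - w_min)  # now in [0, 1]
    idx = np.round(w_norm * (levels - 1))   # integer level index 0..levels-1
    return idx / (levels - 1)               # back to the [0, 1] scale

# Example: a 4-feature x 3-sample weight matrix for one class (illustrative values)
w = np.array([[0.2, 3.1, 1.7],
              [2.4, 0.9, 3.3],
              [1.1, 2.0, 0.5],
              [2.8, 1.4, 0.7]])
q = quantize_weights(w)
print(np.round(q * 15).astype(int))  # level index that each memristor would store
```

Each weight then needs only one of 16 distinct resistance states, and the rounding error per weight is bounded by half a level step (1/30 of the normalized range).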

💡 Research Summary

The paper presents an analog hardware implementation of an Approximate Probabilistic Neural Network (APNN) that replaces the conventional exponential activation of a Probabilistic Neural Network (PNN) with gated threshold logic. The authors first identify two major challenges in hardware PNNs: computing the Gaussian‑based exponential term, and the large memory footprint caused by storing a separate weight set for each class. To address these, they propose (1) a threshold‑based decision rule, $|xW - \sigma| < \theta$, that approximates the exponential probability, and (2) a memristive crossbar array of GST memristors that stores the normalized training weights quantized into 16 discrete levels.

Each class is allocated its own crossbar; rows correspond to input features and columns to training samples. During inference, a column is selected via a transistor switch; the resulting dot‑product current is buffered, converted to a voltage by a current‑to‑voltage converter (IVC), and compared against a class‑specific threshold voltage in a comparator. The comparator outputs (binary 0/1) are stored, averaged across all columns of a class, and fed into a Winner‑Takes‑All (WTA) circuit that selects the class with the highest average. Two threshold schemes are explored: a fixed global threshold shared by all classes, and an adaptive per‑class threshold learned during training.
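The inference flow described above can be sketched at the algorithm level in Python/NumPy. This is a minimal sketch, not the authors' implementation. It assumes each class's weights are stored as a features × samples matrix (one column per training sample) and applies the stated rule $|xW - \sigma| < \theta$ column by column in place of the comparator. It then averages the binary outputs per class and uses `argmax` as a stand-in for the WTA circuit. A conventional Gaussian-kernel PNN score is included for comparison. The function names, and the treatment of `sigma` and `thetas` as plain scalars, are assumptions.

```python
import numpy as np

def pnn_scores(x, class_weights, sigma):
    """Conventional PNN: average Gaussian kernel over each class's training samples."""
    x = np.asarray(x, dtype=float)
    scores = []
    for W in class_weights:                           # W: (n_features, n_samples)
        d2 = np.sum((W - x[:, None]) ** 2, axis=0)    # squared distance to each sample
        scores.append(np.mean(np.exp(-d2 / (2 * sigma ** 2))))
    return np.array(scores)

def apnn_scores(x, class_weights, sigma, thetas):
    """APNN sketch: gate each column with |x.w_j - sigma| < theta_c, then average per class."""
    x = np.asarray(x, dtype=float)
    scores = []
    for W, theta in zip(class_weights, thetas):
        dots = x @ W                             # one dot product per column (training sample)
        fired = np.abs(dots - sigma) < theta     # binary comparator outputs
        scores.append(fired.mean())              # fraction of the class's columns that fired
    return np.array(scores)

def winner_takes_all(scores):
    """Stand-in for the WTA circuit: index of the highest class score."""
    return int(np.argmax(scores))

# Toy two-class example (illustrative values, 2 features)
x = np.array([1.0, 0.0])
W0 = np.array([[1.0, 1.0, 0.9],
               [0.0, 0.1, 0.0]])
W1 = np.array([[0.0, 0.1],
               [1.0, 1.0]])
fixed = apnn_scores(x, [W0, W1], sigma=1.0, thetas=[0.2, 0.2])  # one global threshold
print(fixed, winner_takes_all(fixed))
```

Passing the same value in every entry of `thetas` models the fixed global threshold. Distinct per-class values model the adaptive scheme. The exponential in `pnn_scores` is the operation that the threshold gate avoids computing in hardware.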

Circuit details are provided for a 180 nm CMOS process: current mirrors, an operational amplifier with 200 kΩ memristive loads for the IVC, a 9‑transistor comparator, and the WTA network. For a three‑class system (each class with 10 training samples and 4 features), the estimated power and area are 123.76 mW and 5.76 mm², respectively, with the IVC's op‑amp dominating power consumption.
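The analog path from a selected crossbar column to the IVC output follows Ohm's and Kirchhoff's laws. The sketch below is an idealized model under several assumptions:

- Input features are applied as row voltages.
- Quantized weights map linearly onto a hypothetical conductance range (`g_min`, `g_max`).
- The IVC behaves as an ideal inverting transimpedance amplifier with a 200 kΩ feedback resistance.

It ignores memristor non-idealities, wire resistance, and the buffer stage, and the helper names are illustrative.

```python
import numpy as np

R_F = 200e3  # ohms; assumed IVC feedback resistance (200 kΩ per the circuit description)

def level_to_conductance(q, g_min=1e-6, g_max=1e-4):
    """Map normalized weights in [0, 1] linearly onto [g_min, g_max] siemens.
    The conductance bounds are hypothetical, not taken from the paper."""
    return g_min + np.asarray(q, dtype=float) * (g_max - g_min)

def column_current(v_in, G, col):
    """Current collected by one selected column: I = sum_i V_i * G[i, col]
    (Ohm's law per cell, Kirchhoff's current law on the column wire)."""
    return float(np.dot(v_in, G[:, col]))

def ivc_output(i_col, r_f=R_F):
    """Ideal inverting transimpedance amplifier: V_out = -I * R_f."""
    return -i_col * r_f

# 4 features (rows) x 10 training samples (columns), as in the three-class example
G = np.full((4, 10), 1e-5)        # every cell at 10 µS (illustrative)
v_in = np.full(4, 0.1)            # 0.1 V applied to each row (illustrative)
i = column_current(v_in, G, col=3)
print(i, ivc_output(i))           # roughly 4e-06 A and -0.8 V
```

With the layout described earlier (rows as features, columns as training samples), the three-class example corresponds to three 4 × 10 crossbars, or 120 memristive cells in total.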

Simulation results combine SPICE circuit simulations and MATLAB system‑level tests on the Iris dataset. Table I shows that the conventional PNN achieves 96 % accuracy, which drops to 93 % when weights are quantized. The APNN with fixed threshold logic reaches 98 % accuracy, while the adaptive‑threshold version with quantized weights attains 98.9 %, surpassing the original network. SPICE timing diagrams confirm that the correct class produces the highest column current, leading to the highest averaged comparator output and correct WTA decision.

The discussion compares the proposed analog APNN to prior FPGA implementations, highlighting lower component count, reduced area, and eliminated analog‑to‑digital conversion overhead, which together improve processing speed. However, the authors acknowledge several open issues: the impact of memristor variability and non‑idealities, scalability of the cross‑bar for larger feature sets, the need for efficient mixed‑signal control and storage circuits, and the immature state of memristive technology. They suggest future work on low‑voltage op‑amps, robust threshold calibration, and extensive large‑scale evaluations.

In conclusion, the APNN demonstrates that replacing the exponential kernel with gated threshold logic and using 16‑level quantized memristive weights can yield a compact, low‑power analog classifier that outperforms a standard PNN on small‑scale problems. The paper provides a concrete circuit blueprint and points to necessary future research to extend the approach to larger, more demanding applications.
