DCASE 2018 Challenge - Task 5: Monitoring of domestic activities based on multi-channel acoustics

The DCASE 2018 Challenge consists of five tasks related to automatic classification and detection of sound events and scenes. This paper presents the setup of Task 5 which includes the description of the task, dataset and the baseline system. In this…

Authors: Gert Dekkers, Lode Vuegen, Toon van Waterschoot

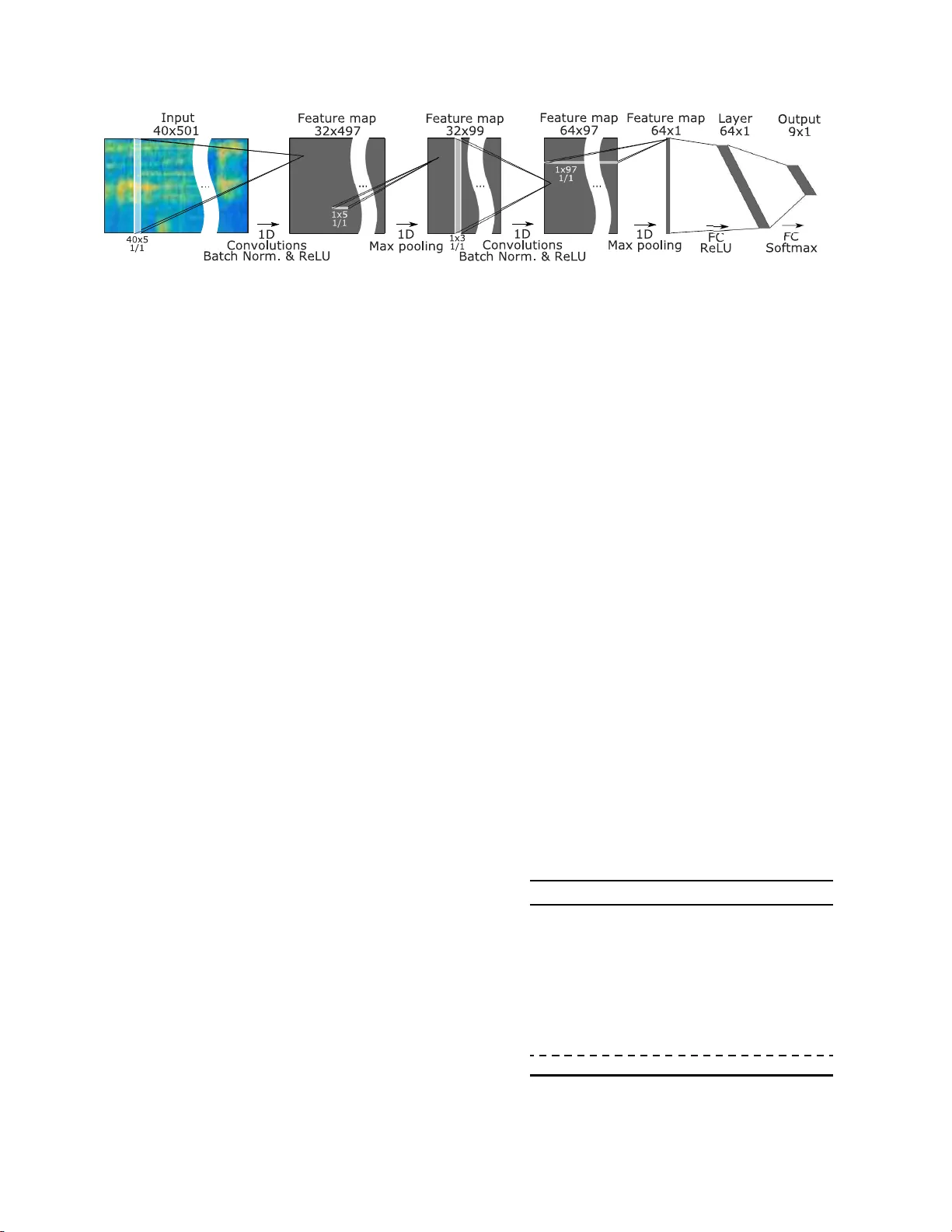

DCASE 2018 CHALLENGE - T ASK 5: MONITORING OF DOMEST IC A CTIVITIES B ASED O N MUL TI-CHANNEL A COUSTICS Gert Dekker s 1 , 2 , ∗ , Lode V u e gen 1 , 2 , T oon van W aterschoot 2 , Bart V anrumste 2 , P eter Karsmaker s 1 1 KU Leuven, Department of Comput er Science, Geel, Belgi u m. 2 KU L euven, Department of Electrical Engin eering, Leuven, Belgium . ABSTRA CT The DCASE 2018 Challenge consists of five tasks related to auto- matic classification an d detection o f sound e vents and scenes. This paper presents the setup of T ask 5 which includes the desc ription of the task, dataset and the baseline system. In this task, it is in vesti- gated to which extent multi-channel acou stic recordings are benefi- cial for the purpose of classifying domestic activities. The goal is to exploit spectral and spatial cues independent of sensor location using multi-channel audio. For this purpose we provid ed a dev el- opment and ev aluation dataset which are deriv atives of the SINS database and contain domestic activ ities recorded by multiple mi- crophone arrays. The baseline system, based on a Neural Network architecture using con volutional and dense l ayer(s), is intended to lo wer the hurdle to participate t he challenge and to provide a refer- ence performance. Index T erms — Acoustic scene classifi cation, Multi-channel, Activities of the Daily Living 1. INTRODUCTION There is a rising interest in smart en vironments that enhance the quality of life for humans in terms of e.g. safety , security , com- fort, and home care [2 ]. In order to ha ve smart functionality , situa- tional awareness is r equired, which might be obtained by interpret- ing a multitude of sensing modalities i ncluding acoustics. Com- pared to other modalities, microphone sensors contain highly i n- formativ e data which can be exploited for multi ple purposes [3]. Ho we ver , many challenges remain regarding the automatic recog- nition of sounds. In order to properly compare different compu- tational methods t he community needs common publicly av ailable datasets. P rev ious editi ons of the Detection and Classification of Acoustic Scenes and Events (DCASE) challenge of fered a compet- itiv e platform to compare dif ferent computational method s using common datasets for v ari ous problems related to automatic classi- fication and detection of sound ev ents and scenes [4, 5, 6]. This year’ s challenge, DCASE 2018 [7], consists of fiv e tasks. This pa- per describes T ask 5 which resides in t he context of Ambient As- sisted L ivin g ( A A L ) where persons are monitored, e.g. to support patients wit h a chronic illness and older persons, by tracking their activ ities being performed at home [3, 8 , 9, 10]. When consider- ing an acoustic sensing modality , a domestic activity can be seen as an acoustic scene. A n acoustic even t is defined as a single con- secuti ve ev ent originating from a single sound source, e.g. a hand clap or a door knock. The ensemble of multiple ev ents create an acoustic scene describing a certain en vironment (e.g. a park or a ∗ Thanks to VLAIO-SBO project Sound INterf acing through the Sw arm (SINS) [1] for funding (contrac t 130006). living room) or , relev ant to this task, an activity being performed by a person (e.g. cooking or watching TV). The acoustic sensing lit- erature has mainly co vered the problems of automatic classification and detection of sound ev ents and scenes by using spectral informa- tion [8, 9, 4]. S imilarly , pre vious DCASE challenges did no t focus on letting participants exploit spectral and spatial information. T ask 5 offers a multi-channel dataset to compare computional methods that use both types of information. The goal is to exploit spectral and spatial cues independen t of sensor location using multi-channel audio for the purpose of classifying do mestic acti vities. This paper presents DCASE 2018 T ask 5 in detail. A task defini- tion, information abo ut the dataset, the task setup , baseline system, and baseline results on the dev elopment dataset are provided. 2. T ASK SETUP 2.1. Description The goal of this task is to classify multi-channel audio se gments (i.e. segmen ted data is given ), acquired by a microph one array , into one of the provided predefined classes as illustrated by Figure 1. T hese classes are daily activities performed in a home en vironment (e.g. Cooking, W atching T V and W orking). Figure 1: DCAS E 2018 T ask 5 description As they can be composed out of different sound ev ents, such activ ities are considered as acoustic scenes. Therefore, this task is quite similar to DCASE 2018 T ask 1: Acoustic scene classification . The difference lies in the type of scenes and the possibility to use multi-channel audio. For the gi ven prob lem a single perso n li ving at home is considered. Release of de velopment dataset 30 Ma rch 2018 Release of baseline system 16 April 2018 Release of e valuation dataset 30 Jun e 2018 Challenge submission 31 July 2018 Publication of results 15 Sept 2018 DCASE2018 W orkshop 19-20 Nov 2018 T able 1: Challenge/T ask timeline This reduces the comp lexity since the number of o verlapping acti v- ities is expected to be small. In fact, in the considered data set no ov erlapping acti vities are present. These cond itions were chosen to be able to focus on the main goal of this task which is to in vestigate to which exten t multi-channel aco ustic recordings are beneficial for the purpose of detecting domestic activ ities. This means that spatial properties can be exploited to serve as input features to t he classifi- cation problem. Howe ver , using estimates of absolute an gles or po- sitions of soun d sourc es as input for t he detection mod el is doomed not to generalize well t o cases where the position of the microphone array is altered. Therefore, in this task the focus is on systems which can exploit spatial cues i ndepende nt of sensor position using multi- channel audio. 2.2. Timeline The DCASE 2018 Challenge consists of five tasks related to auto- matic classifi cation and detection of sound events and scenes [7]. All these tasks follow the same timeline and similar submission guidelines. T able 1 introduces the timeline of the Challenge/T ask. First, a dev elopment dataset was provided along wi th reference an- notations and a baseline system. A month prior t o the submission deadline the ev aluation dataset was released. Challenge submis- sions consist of a systems output on t he e valuation dataset formatted according to the requirements described in [11]. In order to com- pare and understand all system(s), participants were also required to submit a technical report containing the description of the sys- tem(s) in detail. Reference anno tations for t he e valuation data were only available to us, therefore we were responsible for ev aluating the results according to the defined metric [11]. These results will be made public aft er ev aluation. Fi nally , the results will also be pre- sented on the DCASE2018 W orkshop. Participants co uld optionally submit their techn ical report as a paper to this conference. Figure 2: 2D map of the va cation hom e Figure 3: F r om SINS to DCASE 2018 T ask 5 dataset 2.3. Dev elop ment and ev aluation dataset The datasets used in this task are a deriv ative of t he SINS dataset [12]. The SINS dataset contains a continuo us recording of one per- son living in a vacation home over a period of one week. It was collected usin g a network of 13 microphone arrays distributed o ver the entire home. The microphone array consists of four linearly ar - ranged microphones. For this task se ven microphone arrays in the combined l iving room and kitchen area are used. More informa- tion about the SINS dataset can be found in [12]. Fi gure 2 shows the fl oorplan of the recorded en vironment along wit h the position of the used microphone array . For both the dev elopment and ev aluation dataset the continuous recordings were split into audio segments of 10 s. W e choose this windo w si ze as it is i n the same order as the shortest duration of an activity . Each audio segment contains four channels which r ep- resent the four microphone channels from a particular microphone array . Segments containing more than one acti ve class (e.g. a tran- sition of two actitivies) were left out. This means t hat each segment represents a si ngle acti vity . For the de velopme nt and e valuation set we took a time-wise subset (from the SINS dataset) of 2 / 3 and 1 / 3 re- specti vely . Full sessions of a particular activity were kept together as sho wn by Figure 3. Approximately 50% of t he data w as left out by sub sampling t o make the dataset easier to use for a challenge . For the de velopment dataset we provided the data along with reference annotations. The pro vided data was recorded by four out of the sev en microphone arrays gi ven the time-wise subset. The exact positions of these microp hone arrays were not mad e av ail able and not should not be e xploited. W e also provided a cross-v alidation setup containing four folds. Participan ts were encouraged to use these to make results reported on the dev el opment set uniform but it was not mandatory to use them. T he daily activities (9) are sho wn i n T able 2 along wit h the number of 10 second multi-channel segments Set Activity Se gments Sessions De velopme nt Absence 18860 42 Cooking 5124 13 Dishwashing 1424 10 Eating 2308 13 Other 2060 118 Social Activity 4944 21 V acuum cleaning 972 9 W atching TV 186 48 9 W orking 18644 33 T able 2: A vailable se gments and session in dev elopment set Figure 4: Neural Network architecture of the baseline system consisting of two 1D con volution al layers and one fully conne cted layer and t he amount of full sessions of a certain activity (e.g. a cooking session). Comp ared to the SINS dataset, we have combined the activ ity ”Phone call” and ”V isit” into one activ ity named ”Social activ ity”. The class ”Absence” is referred to as not being present in the room. Ho weve r , the data does contain recordings when a person i s present in another room. Depending on the activity , this is noticable in the recording. The class ”Other” is referred to as being present in the room but not doing any another acti vity in t he list. All the other activ ities are self-e xplanatory . Note that the dataset is unbalanced . The ev aluation dataset is acquired in a similar manner as the de- velopm ent dataset and has a similar class distribution. More statis- tics about this dataset will be made av ailable after the task is fin- ished. The dataset was provided as audio only . It contains data recorded by all sev en microphone arrays on a differen t time-wise subset as the dev elopment set. The fi nal ev aluation will be based on the data obtained by the microphone arrays not present in the de velopment set. The other microphon e arrays are pro vided to giv e insights about the ov erfi tting on those positions. Participants were allowed t o use external data (including pre- trained models) and data augmentation for system de velopment. It was not allowe d to use the ev aluation data to train the submitted system in an (un)supervised manne r . 2.4. Baseline system The baseline system is i ntended to make it easier t o participate in the task and to provide a reference performance. The system has all the functionality for dataset handling, calculating features and models, and ev aluating the results. The system is implemented in Python, primarily using the DCASE UTIL libary [13] for dataset handling and feature extraction and the Keras library [14] for learn- ing. The baseline system trains a single classifier model that takes a sin- gle channel as input. During the recording campaign, data was mea- sured simultaneously using multiple microphone arrays each con- taining 4 microphones. Hence, each domestic activity is recorded as many times as there were microphones. Each parallel recording of a single acti vity is considered as a different exa mple during training. The learner in the baseline system is based on a Neural Network architecture using tw o con volutiona l and one dense layer . As input, log mel-band energies are provided t o the network. The features extracted from each micropho ne channel are treated as seperate ex- amples. In the prediction stage a single outcome is computed f or each micropho ne array by averag ing the 4 model outcom es (poste- riors) that were computed by ev aluating the trained classifier mod el on all 4 microphon es. The features are calculated in frames of 40 ms with 50 % ov erlap, using 40 mel bands cov ering a frequenc y range from 50 tot 8000 Hz. An overv iew of the Neural Network archi- tecture is sho wn in Figure 4. It uses an input size of 40x501 which are the log-mel frames i n a total duration of 10 s. The first con volu- tional layer has 32 filt ers wi th a kernel size of 40x5 with a stri de of one, so therefore con volution is only performed over the time axis. Subsampling is then performed by Max Pooling by a factor 5. The resulting feature map of 32x 99 is then pro vided to a secon d con volu- tional layer that has 64 filt ers with a kernel si ze of 32x3 and a stride of one. Subsequentially , t his is subsampled using Max Pooling by a fac tor 3. After each con volutional layer Batch Normalization and ReLU activ ation i s used. T he resulting output, a feature vector of 64 coefficients, is then provided to a F ull y Connected (F C) layer of 64 neurons with ReLU activ ation. T he output layer consists of 9 neurons representing the output classes with Softmax activ ation. For regularization we have used Dropout (20%) between each layer . The network is trained using Adam optimizer with a learning rate of 0.0001. T he used batch size is 256 segments which results in a total of 1024 examples provid ed to the l earner gi ven that each segmen t contains 4 channels of audio. On each epoc h, t he training dataset is randomly subsampled so that the number of examples for each cl ass match the size of the smallest class. The performance of the model is e valuated e very 10 epochs, of 500 in total, on a validation subset (30% subsampled from the training set) . The model with the high- est score is used as the final model. As a metric the macro-averag ed F 1 -score is used, which is the mean of the class-wise F 1 -scores. 3. BASELINE SYS TEM RESUL TS T able 3 presents the results for the baseline system on the de vel- opment set using t he provided cross-validation folds. T he perfor- mance on the e valuation set wi l l be released when the results of task are made pub lic. Regarding the results on the de velopment set, Activity F 1 -score dev . F 1 -score ev al. Absence 85.41% N A Cooking 95.14% N A Dishwashing 76.73% N A Eating 83.64% N A Other 44.76% N A Social Activity 93.92% N A V acuum cleaning 99.31% N A W atching TV 99.59% NA W orking 82.03% N A A vera ge 84.50 ± 0.8% N A T able 3: Performance on the de velopmen t set the system was trained and tested fiv e times to provide an estimate on the variance related to random weight initializations and a ran- dom validation split. On average t he model has a performance of 84.50%. Class-wise performances vary from 44.76% up to 99.59%. W orst performing classes are Other and Dishwa shing, while the best performing ones are V acuum cleaning, W atching TV and Cooking. 4. CONCLUSIONS In this paper we introduced the setup of the DCAS E2018 T ask 5 challenge, a t ask primarily concerned with systems exp loiting both spectral and spatial information indepent of sensor location using multi-channel audio. The dataset used for the task offers record- ings of domestic activities in a single home en vironment [15, 16]. A baseline system was introduced based on a Neural Network ar- chitecure usin g two con volutional layers and a dense layer . Results were reported on this dataset using the provided publicly av ai l able baseline system [17] showing a macro-a veraged F 1 -score of 84.5%. 5. NO TE More information on the e valuation dataset and t he performance will be made av ailable when the task results are made public. 6. A CKNO WLEDGMENT Thanks to S tev en L auwereins, Bart T hoen, Mulu W eldegebreal Ad- hana, Henk Brouckxon and Bertold V an den Bergh t o their contri- bution of acquiring the SINS datase t [12]. 7. REFERENCES [1] S INS. S ound INterfacing through the Swarm. [Online]. A vailable: http:// www . esat.kuleuv en.be/sins/ [2] F . Erden, S. V elipasalar , A. Z. Alkar , and A. E. Cetin, “Sensors in assisted living : A survey of signal and image processing methods, ” IEEE Signal Proces sing Magazine , vol. 33, no. 2, pp. 36–44, March 2016. [3] M. V acher , F . Portet, A. Fleury , and N. Noury , “Develo pment of audio sensing t echnology for ambient assisted living: Ap- plications and challeng es, ” International Jou rnal of E-Health and Medical Communications (IJEHMC) , vol. 2, no. 1, pp. 35–54, January 2011 . [4] A. Mesaros, T . Heitt ola, A . Dimen t, B. Elizalde, A. Shah, E. V incent, B. Raj, an d T . V irtanen, “DCASE 2017 Chal- lenge setup: T asks, datasets and baseline system, ” in Proc eed- ings of the Detection and Classification of Acoustic Scenes and Events 2017 W orkshop (DCASE2017) , Munich, Germany , Nov ember 2017 . [5] D. S towell, D. Gi annoulis, E . Benetos, M. Lagrange, and M. D. Plumbley , “Detection and classification of acous- tic scenes and ev ents, ” IEEE T ransactions on Multimedia , vol. 17, no. 10, pp. 1733–174 6, Oct 20 15. [6] A. Mesaros, T . Heittola, E. B enetos, P . Foster , M. Lagrange, T . V irtanen, and M. D. Plumbley , “Detection and classification of acoustic scenes and e vents: Outcome of the dcase 2016 challenge, ” IEEE/ACM T rans. Audio, Speech and Lang. Pr oc. , vol. 26, no. 2, pp. 379–393, Feb . 2018. [ O nli ne]. A vailable: https://doi.org/10.11 09/T ASLP .2017.2778 423 [7] DCASE. (2018) DCAS E2018 Challenge. [Online]. A vailable: http://dcase.community/cha llenge2018/ [8] L. V uegen, B. V an Den Broe ck, P . Karsmakers, H. V an hamme, and B. V anrumste, “ Automatic monitor- ing of activ ities of daily living based on real-life acoustic sensor data: A preli mi nary study , ” in Pr oc. F ourth workshop on speec h and languag e pr ocessing for assistive technolo gies (SLP A T) , 2013, pp. 113–118. [9] ——, “Energy efficient monitoring of activities of daily living using wireless acoustic sensor network s in clean and noisy conditions, ” in 2015 23r d Europ ean Signal Pr ocessing Con- fer ence (EUSIPCO) , A ug 2015, pp. 449– 453. [10] M. V acher, B. Lecouteux, P . Chahuara, F . P ortet, B. Meillon, and N. Bonnefond, “The Sweet-Home speech and multimodal corpus for home automation interaction, ” in The 9th edition of the L angua ge Resour ces and Evaluation Con fer ence (LREC) , R eykja vik, Iceland, 2014, pp. 4499–450 6. [Onli ne]. A vailable: http:// hal.archi ves- ouvertes.fr/hal- 00953006 [11] DCASE and KU Leuv en. (2018) DCASE2018 T ask 5: Monitoring of domestic activities based on multi-channel acoustics. [Online]. A v ailable: http://dcase.community/cha llenge2018/task- monitoring- domestic- activities [12] G. Dekkers, S. Lauwereins, B. Thoen, M. W . Adhana, H. Brouckxo n, T . van W aterschoot, B. V anrumste, M. V er- helst, and P . Karsmakers, “The S I NS database for detection of daily activities in a home enviro nment using an acous- tic sensor network, ” in Proceed ings of the Detection and Classification of Acoustic Scenes and Events 2017 W orkshop (DCASE2017) , Munich, Germany , Novemb er 2017, pp. 32– 36. [13] T oni Heittola. ( 2018) DCASE UTIL: Uti lities for Detection and Classification of Acoustic Scenes. [ Onli ne]. A vailable: https://dcase- repo.github .io/dcase util/ [14] Keras. (2018) Keras: The Python Deep Learning library. [Online]. A v ailable: https://keras.io/ [15] G. Dekk ers and P . Karsmakers. (2018) DCASE 2018, T ask 5: Monitoring of domestic activities based on multi- channel acoustics - De velopme nt dataset. [ Online]. A vailable: https://zenodo.or g/record/1247 102 [16] ——. (2018) DCASE 2018, T ask 5: Monitor- ing of domestic activities based on multi-channe l acoustics - De velopment dataset. [Online]. A vailable: https://zenodo.or g/record/1291 760 [17] G. Dekkers, T . Heittola, and P . Karsmak ers. (2018) DCASE2018 T ask 5: system baseline. [Online]. A vailable: https://github .com/DCASE- RE PO/dcase2018 baseline/tree/master/task5

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment