Using Pilot Systems to Execute Many Task Workloads on Supercomputers

High performance computing systems have historically been designed to support applications that consist mostly of monolithic, single-job workloads. Pilot systems decouple workload specification, resource selection, and task execution via job placeholders and late binding. Pilot systems help to satisfy the resource requirements of workloads composed of multiple tasks. RADICAL-Pilot (RP) is a modular and extensible Python-based pilot system. In this paper we describe RP’s design, architecture, and implementation, and characterize its performance. RP is capable of spawning more than 100 tasks/second and supports the steady-state execution of up to 16K concurrent tasks. RP can be used stand-alone, as well as integrated with other application-level tools as a runtime system.

💡 Research Summary

The paper presents RADICAL‑Pilot (RP), a general‑purpose pilot system designed to enable high‑throughput execution of many‑task workloads on large‑scale supercomputers. Traditional HPC batch systems are optimized for monolithic, single‑job applications, which creates a mismatch when applications consist of thousands of relatively small, heterogeneous tasks. Pilot systems address this gap by decoupling resource acquisition from task scheduling: a “pilot” (or placeholder job) reserves a block of resources, and once active it can accept and execute tasks submitted by the application.
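The late-binding idea can be illustrated with a small toy model. This is a minimal sketch and not RP's API: the class `ToyPilot` and the function `late_bind` are hypothetical names invented here, and the "pilot" is just an in-memory object that tracks free cores.

```python
class ToyPilot:
    """Hypothetical placeholder job: holds cores, accepts tasks once active."""

    def __init__(self, cores):
        self.cores = cores
        self.free_cores = cores
        self.active = False   # becomes True once the batch system starts the job
        self.running = []     # names of tasks currently bound to this pilot

    def activate(self):
        # In a real system the batch scheduler decides when this happens.
        self.active = True

    def try_start(self, task_name, task_cores):
        """Bind a task to this pilot only if it is active and has room."""
        if not self.active or task_cores > self.free_cores:
            return False
        self.free_cores -= task_cores
        self.running.append(task_name)
        return True


def late_bind(pilot, tasks):
    """Try to place (name, cores) tasks on the pilot; return those left waiting."""
    waiting = []
    for name, cores in tasks:
        if not pilot.try_start(name, cores):
            waiting.append((name, cores))
    return waiting
```

In this sketch, tasks are described independently of any resource and are bound to the pilot only after it becomes active. Calling `late_bind` before `activate()` leaves every task waiting, which mirrors the decoupling of resource acquisition from task scheduling described above.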

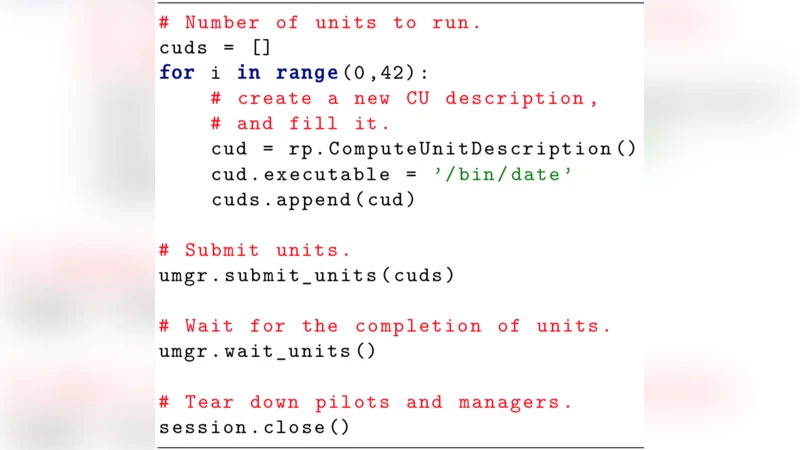

RP implements the pilot paradigm in Python and is built around three core components: PilotManager, UnitManager, and Agent. The PilotManager uses the RADICAL‑SAGA API to submit pilots to diverse batch schedulers (e.g., PBS, SLURM, Cray ALPS). The UnitManager creates Compute Units (CUs) that encapsulate the executable, arguments, and optional data staging directives. Both pilots and CUs are represented as stateful entities with well‑defined life‑cycle models; pilots transition through NEW → PM_LAUNCH → P_ACTIVE → DONE, while CUs progress through a nine‑state model that includes scheduling, execution, and optional input/output staging. Communication between the UnitManager and one or more Agents is mediated by a MongoDB instance, allowing asynchronous, fault‑tolerant exchange of scheduling decisions. Inside each Agent, three sub‑components—Stager, Scheduler, and Executer—are linked via ZeroMQ sockets. This modularity enables the Agent to be deployed on head nodes, compute nodes, virtual machines, or any combination thereof, and to support multiple launch methods (e.g., aprun, ccmrun, Open Run‑Time Environment).
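A stateful entity with a linear life cycle, such as the pilot model above, can be sketched as a small state machine. This is an illustrative assumption, not RP's implementation: `StatefulEntity` is a hypothetical helper, and only the four pilot states named in this summary are modeled (any failure or cancellation states are omitted).

```python
# States named in the summary above; any additional states (for example
# failure or cancellation) are deliberately omitted from this sketch.
PILOT_STATES = ("NEW", "PM_LAUNCH", "P_ACTIVE", "DONE")


class StatefulEntity:
    """Hypothetical helper: an entity that moves forward one state at a time."""

    def __init__(self, uid, states):
        self.uid = uid
        self._states = tuple(states)
        self._index = 0
        self.history = [self._states[0]]

    @property
    def state(self):
        return self._states[self._index]

    @property
    def is_final(self):
        return self._index == len(self._states) - 1

    def advance(self, new_state):
        """Move to new_state if it is the immediate successor of the current state."""
        if self.is_final:
            raise ValueError(f"{self.uid} is already in final state {self.state}")
        expected = self._states[self._index + 1]
        if new_state != expected:
            raise ValueError(
                f"{self.uid}: cannot go from {self.state} to {new_state}"
            )
        self._index += 1
        self.history.append(new_state)
        return self.state
```

Rejecting out-of-order transitions makes the recorded `history` a reliable trace of the entity's life cycle. The same helper could hold a longer state list for Compute Units, although the individual CU state names are not given in this summary.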

A key contribution of the work is the detailed description of how RP is adapted to Cray systems, which have unique constraints such as the ALPS limit of ~100 concurrent applications per batch job and the optional availability of the Cluster Compatibility Mode (CCM). RP offers four integration pathways: direct ALPS usage, CCM‑inside‑job, CCM‑outside‑job, and leveraging the Open Run‑Time Environment. By abstracting these details behind the Agent, RP allows users to write a single Python script that runs unchanged on Blue Waters, Titan, ARCHER, or any other supported platform.
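To see why the ALPS concurrency limit matters, consider a rough back-of-the-envelope calculation. The sketch below assumes each task is launched as a separate ALPS application and that the limit is exactly 100 (the text gives it only as approximately 100). The function name `waves_needed` is hypothetical.

```python
def waves_needed(n_tasks, concurrent_limit=100):
    """Number of sequential launch waves if at most `concurrent_limit`
    applications may run at once (assumed value; the text says ~100)."""
    if n_tasks < 0 or concurrent_limit <= 0:
        raise ValueError("n_tasks must be >= 0 and concurrent_limit > 0")
    # Integer ceiling division avoids floating-point rounding.
    return (n_tasks + concurrent_limit - 1) // concurrent_limit
```

Under these assumptions, 1,000 single-application tasks would need 10 waves and 1,001 would need 11, regardless of how many cores the pilot holds. This is the kind of constraint that motivates the alternative integration pathways listed above.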

Performance experiments demonstrate that a single RP pilot can launch more than 100 tasks per second and sustain steady‑state execution of up to 16 000 concurrent tasks. Resource utilization remains above 80 % even at this scale, and the system’s design permits the insertion of custom scheduling policies without degrading throughput. The authors also compare the native Cray schedulers with an “enhanced” RP scheduler, showing that while absolute execution time differences are modest, the ability to extend the scheduler is crucial for workload‑specific optimizations.
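The two headline figures can be related through Little's law (steady-state concurrency equals arrival rate times mean time in system). The sketch below is an idealized model, not a result from the paper: it treats the launch rate as exactly 100 tasks per second, ignores scheduling overheads, and uses hypothetical function names.

```python
def steady_state_concurrency(launch_rate, mean_duration):
    """Little's law: tasks in flight = launch rate (tasks/s) * mean duration (s)."""
    return launch_rate * mean_duration


def min_duration_for_concurrency(target_concurrency, launch_rate):
    """Shortest mean task duration (s) that sustains the target concurrency."""
    if launch_rate <= 0:
        raise ValueError("launch_rate must be positive")
    return target_concurrency / launch_rate
```

Under this model, sustaining 16,000 concurrent tasks at 100 launches per second requires tasks that run for at least 160 seconds on average. Shorter tasks would finish faster than the launcher could replace them.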

In summary, RADICAL‑Pilot provides a scalable, extensible runtime environment that bridges the gap between traditional batch schedulers and modern many‑task scientific applications. Its multi‑level, multi‑entity scheduling architecture, Python‑centric API, and flexible deployment on heterogeneous HPC resources make it suitable for a broad range of domains, including ensemble simulations, data‑intensive analytics, and emerging AI‑driven workflows. Future work outlined by the authors includes native GPU support, container integration, and the incorporation of machine‑learning‑based dynamic scheduling to further improve efficiency on next‑generation exascale systems.
