2P-DNN: Privacy-Preserving Deep Neural Networks Based on Homomorphic Cryptosystem

Machine Learning as a Service (MLaaS), such as Microsoft Azure and Amazon AWS, offers effective DNN models that let small businesses and individuals who lack data and computing power complete machine learning tasks. However, this exposes user privacy to the MLaaS server, because users must upload their sensitive data to it. To preserve their privacy, users can encrypt their data before uploading it, but this makes it difficult to run the DNN model, which is not designed to operate in the ciphertext domain. In this paper, we use the Paillier homomorphic cryptosystem to build a new privacy-preserving deep neural network model, which we call 2P-DNN. This model can perform machine learning tasks in the ciphertext domain, so an MLaaS provider can use it to offer a privacy-preserving machine learning service to its users. We build our 2P-DNN model on LeNet-5 and test it on the encrypted MNIST dataset. The classification accuracy exceeds 97%, which is close to the accuracy of LeNet-5 on the plaintext MNIST dataset and higher than that of other existing privacy-preserving machine learning models.

💡 Research Summary

The paper addresses a critical privacy concern in Machine Learning as a Service (MLaaS) platforms, where users must upload raw data to cloud servers to benefit from powerful deep learning models. To prevent exposure of sensitive information, the authors propose a novel framework called 2P‑DNN (Privacy‑Preserving Deep Neural Network) that enables inference on encrypted data using the Paillier partially homomorphic encryption scheme.

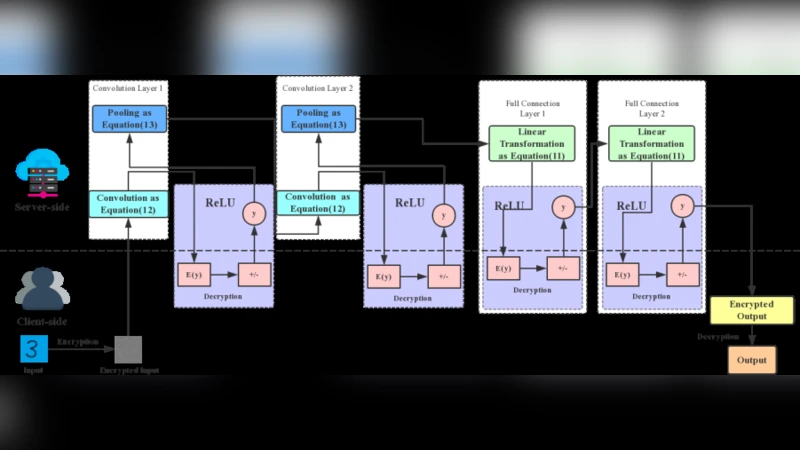

The core idea exploits Paillier’s additive homomorphism and scalar‑multiplication property to perform the linear components of a neural network—matrix multiplications, bias additions, and convolution operations—directly on ciphertexts. Since Paillier does not support ciphertext‑ciphertext multiplication, the authors handle non‑linear operations (specifically ReLU) through a lightweight client‑server interaction: the server sends the encrypted intermediate value to the client, the client decrypts it, determines its sign, and returns a single bit indicating whether the value is non‑negative. The server then applies the ReLU logic based on this bit. This approach avoids the heavy computational overhead of fully homomorphic encryption (FHE) while preserving privacy.
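The linear-layer and ReLU steps described above can be sketched in Python. This is a minimal, insecure toy under stated assumptions: a hand-rolled Paillier implementation with tiny fixed primes (not the library the authors used), integer-scaled inputs and weights, and client and server running in one process. The helper names `encrypted_dense`, `client_sign_bit`, and `server_relu` are hypothetical, and replacing a negative value with a fresh encryption of 0 is this sketch's assumption about how the server "applies the ReLU logic".

```python
import math
import secrets

# Toy Paillier parameters with tiny fixed primes: INSECURE, illustration only.
P, Q = 293, 433
N, N2 = P * Q, (P * Q) ** 2
LAM = math.lcm(P - 1, Q - 1)  # Carmichael function lambda(n)
MU = pow(LAM, -1, N)          # valid shortcut because we use g = n + 1


def encrypt(m):
    """E(m) = g^m * r^n mod n^2 with g = n + 1, so g^m = 1 + m*n (mod n^2)."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return (1 + (m % N) * N) * pow(r, N, N2) % N2


def decrypt(c):
    return (pow(c, LAM, N2) - 1) // N * MU % N


def to_signed(m):
    """Residues above n // 2 stand for negative numbers."""
    return m - N if m > N // 2 else m


def add(c1, c2):
    """Homomorphic addition: E(a) * E(b) = E(a + b)."""
    return c1 * c2 % N2


def scalar_mul(c, k):
    """Plaintext-scalar multiplication: E(a)^k = E(k * a); negative k is reduced mod n."""
    return pow(c, k % N, N2)


def encrypted_dense(enc_x, W, b):
    """Server side (hypothetical helper): y = W x + b with plaintext integer weights."""
    out = []
    for row, bias in zip(W, b):
        acc = encrypt(bias)  # server encrypts the bias under the user's public key
        for c, w in zip(enc_x, row):
            acc = add(acc, scalar_mul(c, w))
        out.append(acc)
    return out


def client_sign_bit(c):
    """Client side (hypothetical helper): decrypt, return 1 if non-negative, else 0."""
    return 1 if to_signed(decrypt(c)) >= 0 else 0


def server_relu(c):
    """Server side (hypothetical helper): one round trip, then keep c or substitute E(0)."""
    return c if client_sign_bit(c) else encrypt(0)


x = [3, -2, 5]                # integer-scaled inputs (hypothetical values)
W = [[1, 2, -1], [-3, 0, 2]]  # integer-scaled weights (hypothetical values)
b = [10, -4]
enc_x = [encrypt(v) for v in x]          # user encrypts and uploads
enc_h = encrypted_dense(enc_x, W, b)     # pre-activations stay encrypted on the server
enc_a = [server_relu(c) for c in enc_h]  # interactive ReLU, one bit back per value
print([to_signed(decrypt(c)) for c in enc_a])  # [4, 0]
```

The pre-activations here are 4 and -3, so the ReLU keeps the first and zeroes the second. Following the summary's description literally, the client sees each decrypted pre-activation when it computes the sign bit; the sketch does not add any masking.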

To accommodate negative weights and biases, the authors split Paillier's plaintext space (the integers modulo n) into two halves, one representing non‑negative integers and the other representing negative integers, and they scale floating‑point parameters to integers with a large amplification factor before encryption. This scaling introduces a small precision loss; in the experiments it costs less than 0.4% in classification accuracy compared with the plaintext model.
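The signed encoding and the scaling step can be illustrated without any encryption, because Paillier's homomorphism only computes sums and plaintext-scalar products modulo n. The sketch below assumes a toy modulus and an amplification factor of 100 (both hypothetical; the source does not state the factor) and shows two consequences: negative values wrap into the upper half of Z_n, and after one multiplication the result carries the square of the scale, so the bias must be encoded at that scale too.

```python
N = 293 * 433   # hypothetical toy modulus; real Paillier moduli are 2048 bits or more
SCALE = 100     # hypothetical amplification factor


def encode(x, scale):
    """Scale a real number to an integer and map it into Z_n (negatives land in the upper half)."""
    return round(x * scale) % N


def decode(m, scale):
    """Read residues above n // 2 as negative, then undo the scaling."""
    signed = m - N if m > N // 2 else m
    return signed / scale


x = [0.523, -1.304, 0.071]   # inputs (hypothetical values)
w = [0.81, 0.44, -2.10]      # weights (hypothetical values)
b = -0.25

# Mimic what the Paillier homomorphism computes on plaintexts: everything mod n.
# Each product w_i * x_i carries scale SCALE**2, so the bias is encoded at SCALE**2 as well.
acc = encode(b, SCALE ** 2)
for wi, xi in zip(w, x):
    acc = (acc + encode(wi, SCALE) * encode(xi, SCALE)) % N

approx = decode(acc, SCALE ** 2)
exact = sum(wi * xi for wi, xi in zip(w, x)) + b
print(approx, exact)
```

The small gap between `approx` and `exact` comes from rounding three-decimal inputs at scale 100, which is the kind of precision loss the paragraph describes. Because every multiplication compounds the scale, the accumulated magnitude must stay below n/2 or the result silently wraps into the negative half; a real 2048-bit modulus leaves far more headroom than this toy one.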

The framework is instantiated on the classic LeNet‑5 architecture and evaluated on the MNIST handwritten digit dataset. Training is performed on plaintext data and reaches 99% accuracy. For inference, each pixel of a test image is encrypted with Paillier, and the encrypted model processes these ciphertexts using the linear transforms, the interactive ReLU, and average pooling described above (average pooling is chosen over max pooling because Paillier offers no way to compare ciphertexts directly). Across convolution kernel sizes of 3×3 and 5×5 and filter counts of 32 and 64, encrypted inference reaches accuracies from 98.6% to 99.0%, closely matching the plaintext baseline.
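Average pooling fits Paillier because it needs only ciphertext additions; the division by the window size cannot be performed on a ciphertext. A minimal sketch, again with an insecure hand-rolled toy Paillier (tiny fixed primes): the server sums each non-overlapping 2×2 window, and here the client applies the 1/4 factor after decryption. In a multi-layer pipeline that factor would more plausibly be folded into the scaling factor or the next layer's integer weights; that choice and the helper name `encrypted_sum_pool` are assumptions, not details from the paper.

```python
import math
import secrets

# Toy Paillier parameters with tiny fixed primes: INSECURE, illustration only.
P, Q = 293, 433
N, N2 = P * Q, (P * Q) ** 2
LAM = math.lcm(P - 1, Q - 1)
MU = pow(LAM, -1, N)  # valid because g = n + 1


def encrypt(m):
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return (1 + (m % N) * N) * pow(r, N, N2) % N2  # g^m = 1 + m*n (mod n^2)


def decrypt(c):
    return (pow(c, LAM, N2) - 1) // N * MU % N


def add(c1, c2):
    """Homomorphic addition: E(a) * E(b) = E(a + b)."""
    return c1 * c2 % N2


def encrypted_sum_pool(enc_map, k=2):
    """Sum each non-overlapping k x k window of an encrypted 2-D feature map.

    Paillier cannot divide a ciphertext, so the 1/(k*k) factor of average
    pooling is deferred (in this sketch, to the client after decryption).
    """
    h, w = len(enc_map), len(enc_map[0])
    out = []
    for i in range(0, h - h % k, k):
        row = []
        for j in range(0, w - w % k, k):
            acc = enc_map[i][j]
            for di in range(k):
                for dj in range(k):
                    if di or dj:  # skip the element already in acc
                        acc = add(acc, enc_map[i + di][j + dj])
            row.append(acc)
        out.append(row)
    return out


feature_map = [  # non-negative post-ReLU activations (hypothetical values)
    [1, 3, 0, 2],
    [5, 7, 4, 6],
    [2, 2, 9, 1],
    [0, 4, 3, 3],
]
enc_map = [[encrypt(v) for v in row] for row in feature_map]
pooled = encrypted_sum_pool(enc_map)
sums = [[decrypt(c) for c in row] for row in pooled]
averages = [[s / 4 for s in row] for row in sums]
print(averages)  # [[4.0, 3.0], [2.0, 4.0]]
```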

The authors compare 2P‑DNN with prior work such as CryptoNets and FHE‑DiNN. CryptoNets also uses homomorphic encryption but is limited to specific activation functions (it replaces ReLU with the square function), whereas 2P‑DNN supports ReLU without restricting the input range. FHE‑DiNN requires binary inputs in {-1, 1} and suffers from severe accuracy loss, whereas 2P‑DNN handles arbitrary integer‑scaled inputs, which makes it applicable to a broader set of datasets (MNIST, CIFAR‑10, Iris, Wine).

Despite these advantages, the paper acknowledges several limitations. Because every ReLU layer requires a client‑server interaction, latency and network overhead grow with the number of non‑linear layers and may become significant for deep networks. Quantizing weights and biases to integers may hurt models that are highly sensitive to precision. The current implementation relies on a CPU‑based Python library (phe 1.3.1, the python-paillier package), which limits scalability to larger architectures such as ResNet or Transformer models. Finally, only average pooling is supported; extending the method to max pooling or more complex non‑linear functions would require further protocol design.

In conclusion, 2P‑DNN demonstrates a practical pathway for privacy‑preserving inference in cloud‑based deep learning services. By leveraging the efficiency of Paillier’s additive homomorphism and a minimal interactive protocol for ReLU, the framework achieves near‑plaintext accuracy while keeping user data encrypted throughout the computation. Future work should explore more sophisticated activation functions, reduce communication rounds, and integrate GPU‑accelerated homomorphic operations to enable deployment on larger, real‑world models.
