A New Noise-Assistant LMS Algorithm for Preventing the Stalling Effect

In this paper, we introduce a new algorithm to deal with the stalling effect in the LMS algorithm used in adaptive filters. We modify the update rule of the tap-weight vector by adding noise produced by a noise generator. The properties of the proposed method are investigated through two novel theorems. As shown, the resulting algorithm, called Added Noise LMS (AN-LMS), improves the resistance of the conventional LMS algorithm to the stalling effect. The probability of an update with additive white Gaussian noise is calculated. The convergence of the proposed method is investigated, and it is proved that its rate of convergence equals that of the LMS algorithm in the expected-value sense, provided that the added noise is uniformly distributed. Finally, it is shown that the complexity of the proposed algorithm is linear, as for the conventional LMS algorithm.

💡 Research Summary


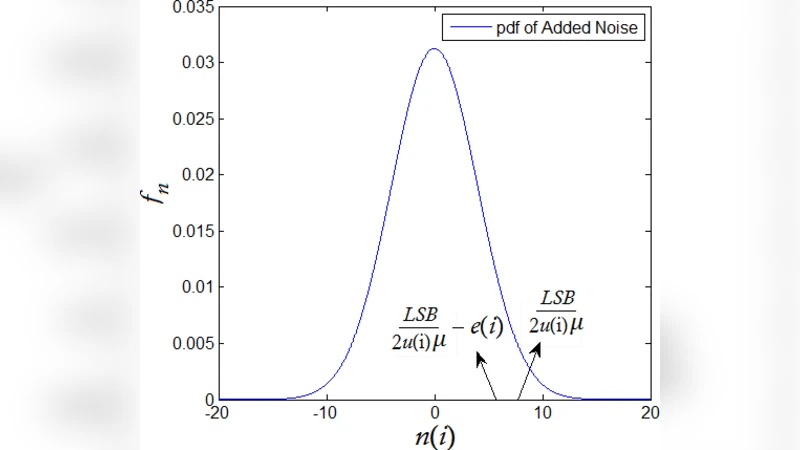

The paper addresses a well‑known practical problem in digital adaptive filtering: the “stalling” effect that occurs when the LMS algorithm is implemented with a limited number of bits. In finite‑precision arithmetic, once the error term that drives the weight update becomes smaller than half of the least‑significant bit (LSB), the rounding rule prevents any change in the tap weights, causing the algorithm to stop adapting. Existing remedies—such as increasing the word length, enlarging the step‑size, or exploiting quantization noise—either raise hardware cost or add computational overhead.
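The stalling condition described above can be reproduced in a few lines. The following is a minimal sketch, not the paper's fixed-point model: it assumes a scalar weight, round-to-nearest quantization of the correction term, and a hypothetical word length of 8 fractional bits. The names `quantize`, `quantized_update`, and `LSB` are illustrative only.

```python
import math

def quantize(value, lsb):
    """Round value to the nearest multiple of lsb."""
    return lsb * math.floor(value / lsb + 0.5)

def quantized_update(weight, mu, error, x, lsb):
    """One scalar LMS-style update whose correction term is rounded
    to the fixed-point grid before it is added to the weight."""
    correction = quantize(mu * error * x, lsb)
    return weight + correction

LSB = 2.0 ** -8           # assumed resolution: 8 fractional bits
w = quantize(0.5, LSB)    # starting weight, already on the grid

# Correction of 0.4 LSB: below half an LSB, so it rounds to zero (stall).
stalled = quantized_update(w, mu=0.1, error=4.0 * LSB, x=1.0, lsb=LSB)

# Correction of 0.6 LSB: above half an LSB, so the weight moves by one LSB.
moved = quantized_update(w, mu=0.1, error=6.0 * LSB, x=1.0, lsb=LSB)

print(stalled == w, moved == w + LSB)
```

In this sketch the weight stays frozen for as long as the product of step size, error, and input remains below half an LSB, which is the situation the summary calls stalling.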

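The authors propose a fundamentally different approach called Added‑Noise LMS (AN‑LMS). The classic LMS weight‑update equation

\[
\mathbf{w}(n+1) = \mathbf{w}(n) + \mu\, e(n)\, \mathbf{x}(n),
\]

where \(\mathbf{w}(n)\) is the tap‑weight vector, \(\mu\) the step size, \(e(n)\) the error, and \(\mathbf{x}(n)\) the input vector, is modified by adding noise produced by a noise generator to the tap‑weight update.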
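To illustrate why added noise can keep a quantized update alive, here is a hedged sketch. It is not the paper's exact AN‑LMS rule: it assumes that zero-mean uniform noise spanning one LSB is added to a scalar correction term before round-to-nearest quantization. The names `noisy_quantize`, `LSB`, and `trials` are hypothetical. Under these assumptions, a correction that would always round to zero is instead applied with a probability proportional to its size, so the average applied correction matches the unquantized one.

```python
import math
import random

def quantize(value, lsb):
    """Round value to the nearest multiple of lsb."""
    return lsb * math.floor(value / lsb + 0.5)

def noisy_quantize(value, lsb, rng):
    """Add uniform noise in [-lsb/2, lsb/2] before rounding (assumed scheme)."""
    noise = rng.uniform(-0.5 * lsb, 0.5 * lsb)
    return quantize(value + noise, lsb)

LSB = 2.0 ** -8            # assumed resolution: 8 fractional bits
rng = random.Random(0)     # fixed seed so the run is repeatable
correction = 0.3 * LSB     # without noise this always rounds to zero
trials = 20000

samples = [noisy_quantize(correction, LSB, rng) for _ in range(trials)]

# Fraction of trials in which the weight would actually change.
update_fraction = sum(1 for s in samples if s != 0.0) / trials

# Average applied correction, expressed in units of one LSB.
mean_correction_in_lsb = sum(samples) / trials / LSB

print(update_fraction, mean_correction_in_lsb)
```

Both printed values should be close to 0.3 in this setup. This is consistent with the abstract's statement that the convergence rate matches LMS in the expected-value sense when the added noise is uniform, although the paper's theorems, not this sketch, establish that result.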
