A Survey on Online Judge Systems and Their Applications

Online judges are systems designed for the reliable evaluation of algorithm source code submitted by users, which is then compiled and tested in a homogeneous environment. Online judges are becoming popular in various applications, so we review the state of the art for these systems. We classify them according to their principal objectives into systems that support the organization of competitive programming contests, enhance education and recruitment processes, facilitate the solving of data mining challenges, serve as online compilers, or act as development platforms integrated as components of other custom systems. Moreover, we introduce a formal definition of an online judge system and summarize the common evaluation methodology supported by such systems. Finally, we briefly discuss the Optil.io platform as an example of an online judge system, which has been proposed for solving complex optimization problems. We also analyze the results of a competition conducted using this platform. The competition proved that online judge systems, strengthened by crowdsourcing concepts, can be successfully applied to accurately and efficiently solve complex industrial- and science-driven challenges.

💡 Research Summary

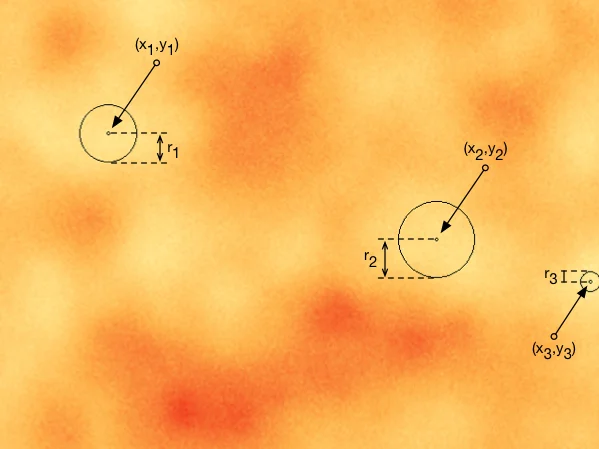

The paper provides a comprehensive survey of online judge (OJ) systems, which are platforms that automatically compile, execute, and evaluate user-submitted source code in a homogeneous, often cloud-based environment. The authors first trace the historical roots of OJ technology. These run from the first known implementations of automated assessment at Stanford in 1961 to early contests such as the ACM-ICPC (first organized in 1970) and the International Olympiad in Informatics (IOI, 1989). They then formalize the concept of an online judge. They define an evaluation procedure consisting of three mandatory phases: submission (compilation and sandbox verification), assessment (execution against a problem-specific test suite with resource limits), and scoring (aggregation of per-test results). This definition (see Definitions 2.1 and 3.6) serves as a baseline for all subsequent analysis.
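
The three-phase procedure can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation:

- Submissions are Python programs.
- The built-in `compile()` stands in for a real compiler.
- A subprocess with a wall-clock timeout stands in for a proper sandbox.
- The helper names (`TestCase`, `submit`, `assess`, `score`, `evaluate`) and the verdict codes are hypothetical.

The `partial` flag switches between binary scoring (every test must pass) and partial scoring (the fraction of tests passed).

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class TestCase:
    stdin: str     # input fed to the submitted program
    expected: str  # expected standard output


def submit(source: str) -> bool:
    """Submission phase: reject code that does not compile.
    Python's built-in compile() stands in for a real compiler here."""
    try:
        compile(source, "<submission>", "exec")
        return True
    except SyntaxError:
        return False


def assess(source: str, tests: list[TestCase], time_limit: float = 2.0) -> list[str]:
    """Assessment phase: run the program on every test under a time limit and
    return one hypothetical verdict per test: OK, WA, TLE or RE."""
    verdicts = []
    for t in tests:
        try:
            run = subprocess.run(
                [sys.executable, "-c", source],
                input=t.stdin, capture_output=True, text=True, timeout=time_limit,
            )
        except subprocess.TimeoutExpired:
            verdicts.append("TLE")  # run() has already killed the child
            continue
        if run.returncode != 0:
            verdicts.append("RE")
        elif run.stdout.split() == t.expected.split():  # whitespace-insensitive
            verdicts.append("OK")
        else:
            verdicts.append("WA")
    return verdicts


def score(verdicts: list[str], partial: bool) -> float:
    """Scoring phase: binary (all-or-nothing) or partial (fraction passed)."""
    passed = sum(v == "OK" for v in verdicts)
    if partial:
        return passed / len(verdicts)
    return 1.0 if passed == len(verdicts) else 0.0


def evaluate(source: str, tests: list[TestCase], partial: bool = False) -> float:
    """Chain the three phases; a compilation failure scores zero without running."""
    if not submit(source):
        return 0.0
    return score(assess(source, tests), partial)
```

Consider a problem that asks for `a + b`. A program that prints `max(a + b, a * b)` passes the input `1 2` but fails `5 7`. It therefore scores 0.5 under partial scoring and 0.0 under binary scoring.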

Security and timing precision are identified as the two most critical technical challenges. Modern OJs mitigate the risk of executing malicious code by isolating each submission in virtual machines, LXC containers, or Docker containers. Precise time measurement, often required at the millisecond level, is achieved through a range of techniques, including simple command-line timers, hardware performance counters, code instrumentation, and sampling. The authors discuss the trade-offs of each method in terms of overhead, determinism, and integration complexity.
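
The sketch below illustrates the simplest technique on that list, a command-line-style timer. It runs a program once and reports both wall-clock time and CPU time, while capping CPU time with a POSIX resource limit. It is a hedged example with these assumptions:

- The host runs Linux or macOS, because Python's `resource` module is POSIX-only.
- The helper names `cpu_limit` and `measure` are hypothetical.

An rlimit is not a substitute for the VM or container isolation described above. It bounds one resource of the child process but does not isolate that process from the host.

```python
import resource
import subprocess
import sys
import time


def cpu_limit(seconds: int):
    """Build a preexec_fn that caps CPU time in the child process only.
    On Linux the kernel sends SIGXCPU at the soft limit and SIGKILL at the hard one."""
    def apply() -> None:
        resource.setrlimit(resource.RLIMIT_CPU, (seconds, seconds + 1))
    return apply


def measure(cmd: list[str], cpu_seconds: int = 2) -> tuple[float, float, int]:
    """Run cmd once and return (wall_seconds, cpu_seconds, returncode).
    A negative return code means the child was killed by that signal number."""
    before = resource.getrusage(resource.RUSAGE_CHILDREN)
    start = time.perf_counter()
    proc = subprocess.run(cmd, capture_output=True, preexec_fn=cpu_limit(cpu_seconds))
    wall = time.perf_counter() - start
    after = resource.getrusage(resource.RUSAGE_CHILDREN)
    # User + system CPU time of the children reaped since `before` was taken.
    cpu = (after.ru_utime - before.ru_utime) + (after.ru_stime - before.ru_stime)
    return wall, cpu, proc.returncode


if __name__ == "__main__":
    print(measure([sys.executable, "-c", "import time; time.sleep(0.3)"]))
```

Sleeping consumes wall-clock time but almost no CPU time. A judge therefore has to decide which of the two measurements its time limits refer to.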

The core contribution of the survey is a purpose‑driven taxonomy that groups existing OJ platforms into four categories:

- Competitive programming judges – systems that support large-scale contests such as ACM-ICPC, IOI, ROADEF, and various planning or architecture competitions. They provide real-time ranking, binary or partial scoring, and strict time and memory limits.
- Educational and recruitment judges – platforms used in university courses, coding bootcamps, and corporate hiring pipelines. They emphasize multi-language support, detailed feedback, code-style analysis, and statistical dashboards for instructors or recruiters.
- Data-mining / machine-learning challenge platforms – exemplified by Kaggle, these judges handle massive datasets and evaluate submissions using quantitative metrics such as accuracy, AUC, or log-loss. While primarily focused on data-science problems, they can also host optimization challenges.
- Custom development / compiler-as-a-service platforms – APIs or plug-ins that expose code execution as a service for integration into IDEs, MOOCs, or other bespoke systems. Their scope may be limited to compilation and sandboxed execution without full scoring logic.
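
For data-mining judges, evaluation reduces to comparing a submitted prediction vector with hidden ground-truth labels. The sketch below computes the three metrics named above for binary classification, using only the Python standard library. It is an illustrative assumption, not Kaggle's scoring code. The clipping constant `eps`, the 0.5 decision threshold, and the function names are choices made for this example.

```python
import math


def _check(y_true: list[int], preds: list[float]) -> None:
    if len(y_true) != len(preds) or not y_true:
        raise ValueError("labels and predictions must be non-empty and equally long")


def accuracy(y_true: list[int], probs: list[float], threshold: float = 0.5) -> float:
    """Fraction of examples whose thresholded prediction equals the label."""
    _check(y_true, probs)
    return sum((p >= threshold) == bool(y) for y, p in zip(y_true, probs)) / len(y_true)


def log_loss(y_true: list[int], probs: list[float], eps: float = 1e-15) -> float:
    """Mean binary cross-entropy; probabilities are clipped so log(0) never occurs."""
    _check(y_true, probs)
    total = 0.0
    for y, p in zip(y_true, probs):
        p = min(max(p, eps), 1 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(y_true)


def roc_auc(y_true: list[int], scores: list[float]) -> float:
    """Probability that a random positive is scored above a random negative
    (ties count as one half). O(P * N) pairs, which is fine for a sketch."""
    _check(y_true, scores)
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative label")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

The three metrics reward different things. Accuracy only checks which side of the threshold a prediction falls on. Log-loss also penalizes confident mistakes. AUC depends only on how the scores are ordered, not on their calibration.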

For each category the paper discusses typical functional requirements, architectural patterns (e.g., SMP‑based parallel processing, container orchestration), and quality‑control aspects such as test‑case design and result visualization.

A particularly insightful case study is the Optil.io platform, which the authors present as an example of an OJ that departs from binary evaluation and instead uses an objective-function-based scoring model. Optil.io targets complex combinatorial optimization problems. Participants submit heuristic or metaheuristic solutions, and the system ranks them according to the value of a predefined objective function evaluated on hidden test instances. The authors report that 34 contests were run on Optil.io in 2016, attracting an average of 500 teams per contest, with a total estimated human effort of 370 person-years. This demonstrates how crowdsourcing, combined with a robust OJ infrastructure, can solve industrial-scale optimization tasks accurately and efficiently.
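
A scoring model of this kind can be sketched as follows. The normalization is a hypothetical choice for illustration, not necessarily Optil.io's formula:

- The objective is minimized and its values are non-negative.
- Each hidden instance awards a participant `best / value`, where `best` is the lowest value any participant achieved on that instance. The best solution therefore earns 1.0.
- Infeasible or timed-out runs, marked `None`, earn 0.
- Per-instance awards are summed, and participants are sorted by their totals.

```python
from typing import Optional


def rank(results: dict[str, list[Optional[float]]]) -> list[tuple[str, float]]:
    """Rank participants by summed, best-normalized objective values.

    results maps a participant to one objective value per hidden instance;
    lower is better, values are assumed non-negative, and None means the run
    was infeasible or exceeded its limits.
    """
    instances = len(next(iter(results.values())))
    best: list[Optional[float]] = []
    for i in range(instances):
        feasible = [vals[i] for vals in results.values() if vals[i] is not None]
        best.append(min(feasible) if feasible else None)

    totals: dict[str, float] = {}
    for name, vals in results.items():
        total = 0.0
        for value, b in zip(vals, best):
            if value is None or b is None:
                continue  # no feasible answer: this instance awards nothing
            # value > b >= 0 whenever they differ, so the division is safe.
            total += 1.0 if value == b else b / value
        totals[name] = total
    # Highest total first; Python's sort is stable, so ties keep input order.
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)
```

For example, suppose there are two hidden instances. A participant who is best on one instance and 25% above the best on the other scores 1.0 + 0.8 = 1.8.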

The survey also outlines a set of design principles that should guide future OJ development: (i) strong isolation and resource‑quota enforcement, (ii) high‑resolution, reproducible timing mechanisms, (iii) scalable cloud‑native architectures (potentially serverless), (iv) automated test‑case generation and validation, (v) transparent scoring dashboards, and (vi) support for emerging problem types such as multimodal or AI‑driven challenges.
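
One common way to approach principle (iv), automated test-case generation and validation, is stress testing. Random inputs are generated and checked against the problem's constraints by a validator. Each input is then fed to both a candidate solution and a slow but obviously correct reference. The sketch below assumes a hypothetical problem: maximum subarray sum with 1 ≤ n ≤ 50 and |a_i| ≤ 100. The problem, the constraint values, and every function name are illustrative, not taken from the paper. A fixed seed keeps the generated tests reproducible.

```python
import random
from typing import Callable, Optional

MAX_N, MAX_ABS = 50, 100  # hypothetical problem constraints


def generate(rng: random.Random) -> list[int]:
    """Random test input within the constraints."""
    n = rng.randint(1, MAX_N)
    return [rng.randint(-MAX_ABS, MAX_ABS) for _ in range(n)]


def validate(a: list[int]) -> bool:
    """Input validator: every official test must satisfy the constraints."""
    return 1 <= len(a) <= MAX_N and all(-MAX_ABS <= x <= MAX_ABS for x in a)


def brute_force(a: list[int]) -> int:
    """Slow reference: try every non-empty contiguous subarray."""
    return max(sum(a[i:j]) for i in range(len(a)) for j in range(i + 1, len(a) + 1))


def kadane(a: list[int]) -> int:
    """Fast candidate: Kadane's linear-time algorithm."""
    best = cur = a[0]
    for x in a[1:]:
        cur = max(x, cur + x)
        best = max(best, cur)
    return best


def stress(candidate: Callable[[list[int]], int],
           reference: Callable[[list[int]], int],
           trials: int = 500, seed: int = 0) -> Optional[list[int]]:
    """Return the first generated input on which the answers differ, else None."""
    rng = random.Random(seed)
    for _ in range(trials):
        case = generate(rng)
        assert validate(case), case  # the generator itself must be correct
        if candidate(case) != reference(case):
            return case
    return None
```

Here `stress(kadane, brute_force)` should find no counterexample. In contrast, passing the built-in `max` as the candidate (a solution that ignores subarrays longer than one element) returns a failing input that could be added to the official test suite.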

In conclusion, the paper fills a gap in the literature by providing a formal definition of online judges, a systematic classification of existing systems, and an analysis of their evaluation methodologies. By showcasing Optil.io’s success in leveraging crowdsourced expertise for optimization problems, the authors argue that online judges are evolving from niche contest tools into general‑purpose “Evaluation‑as‑a‑Service” platforms with broad applicability across research, education, and industry.
