Signals as Parametric Curves: Application to Independent Component Analysis and Blind Source Separation

Images Stacks as Parametric Surfaces (ISPS) is a powerful model that was originally proposed for image registration. Being closely related to mutual information (MI) - the most classic similarity measure for image registration, ISPS works well across…

Authors: Birmingham Hang Guan, An, Rangarajan

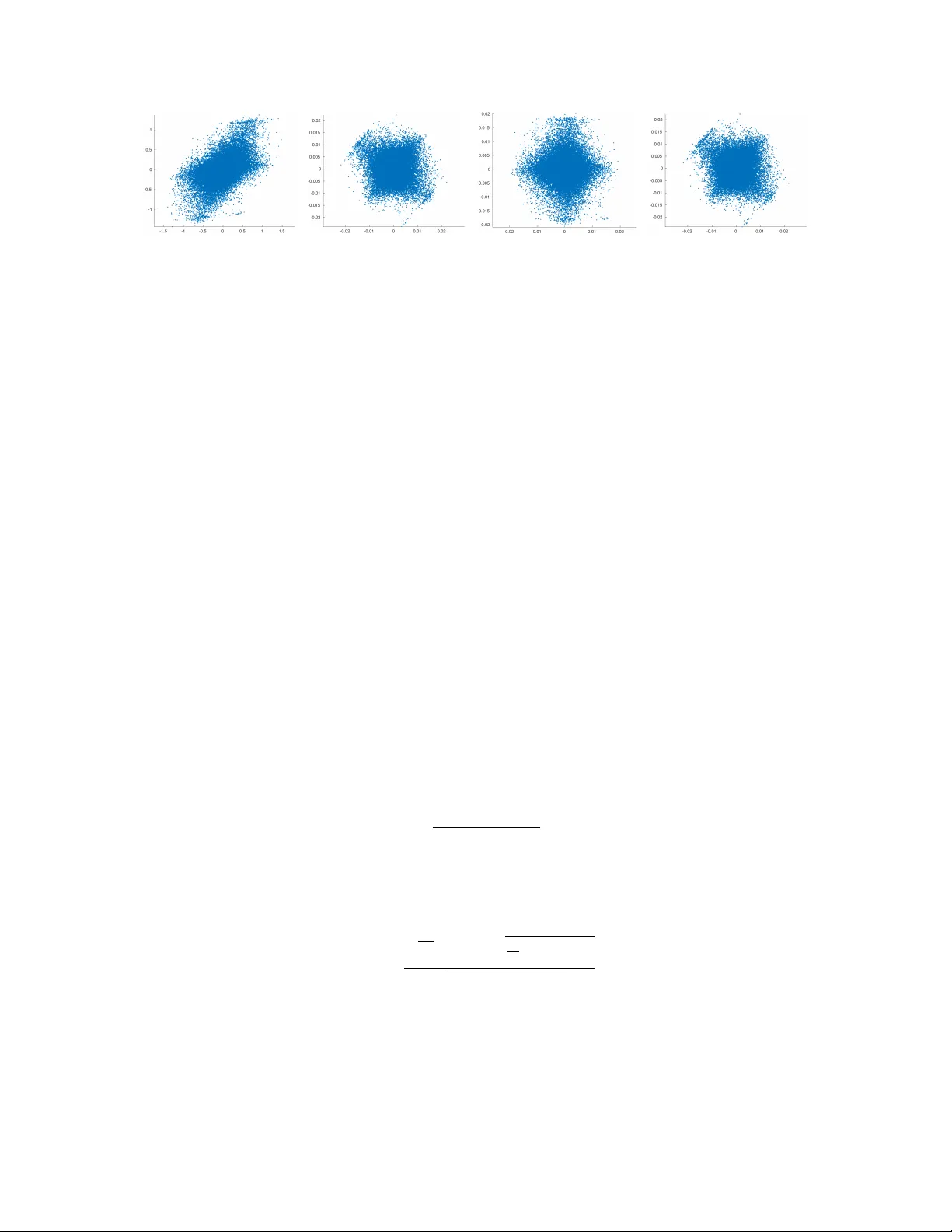

Signals as P arametric Curv es: Application to Indep enden t Comp onen t Analysis and Blind Source Separation Birmingham Hang Guan and Anand Rangara jan ∗ Abstract Images Stacks as P arametric Surfaces (ISPS) is a p o werful model that was originally pro- p osed for image registration. Being closely related to m utual information (MI) – the most classic similarit y measure for image registration, ISPS works well across different categories of registra- tion problems. The Signals as Parametric Curves (SPC) model is derived from ISPS extended to 1-dimensional signals. Blind Source Separation (BSS) is a classic problem in signal pro cess- ing, where Indep enden t Comp onent Analysis (ICA) based approaches are p opular and effective. Since MI pla ys an imp ortan t role in ICA, based on the close relationship with MI, we apply SPC mo del to BSS in this pap er, and prop ose a group of geometrical ob jective functions that are simple y et p o werful, and serve as replacemen ts of original MI-based ob jective functions. Moti- v ated by the geometrical ob jective functions, we also propose a second-order-statistics approach, FT-PCA. Both geometrical ob jective functions and FT-PCA consider signals as functions in- stead of sto c hastic processes, mak e use of deriv ativ e information of signals, and do not rely on the indep endence assumption. In this pap er, w e discuss the reasonability of the assump- tions of geometrical ob jectiv e functions and FT-PCA, and show their effectiv eness by syn thetic exp erimen ts, comparing with other previous classic approac hes. 1 In tro duction Indep enden t comp onen t analysis (ICA) ([11, 17]) is a well-kno wn topic in mac hine learning, statis- tics, and signal pro cessing. The original ICA problem ([10, 3]) and its v arious extensions ([16, 2]) ha ve b een researc hed through the past 25 years. Being a theoretical topic in statistics, it w as origi- nally proposed and applied for signal pro cessing problems, esp ecially blind source separation (BSS) ([10]). ICA-based BSS has v arious practical applications, like electro encephalographic data analysis (EEG) ([13, 24]). ICA w as also applied to image problems, like the work in [23, 26], where the laten t independent v ariable linear mixture mo del were used for image fusion, etc.. F ormally , and ICA problem is describ ed in the following manner: Let x b e a random vector of size N . The generative mo del for x is via a matrix A of size N × M and a random v ector s of size M whose comp onen ts are independent of each other, such that x = A s . Mutual information (MI), as a natural independence measure of random v ariables, is considered as a standard approach to solve for the ICA problem: M I ( s ) = Z p s ( s ) log p s ( s ) Q i p s i ( s i ) d s . ∗ The authors are with the Department of Computer and Information Science and Engineering, Univ ersity of Florida, Gainesville, FL, USA. E-mail: bkwan,anandr@ufl.edu. 1 If eac h s i are indep enden t, M I ( s ) = 0 . Therefore, b y minimizing MI, one can get separated random v ariables as indep enden t as possible. The definition of a BSS problem has a very close structure: Let x ( t ) = ( x 1 ( t ) , . . . , x N ( t )) b e a set of observed signals. Supposing that they are a linear mixture of a set of unknown source signals s ( t ) = ( s 1 ( t ) , . . . , s M ( t )) , we can express it as x ( t ) = A s ( t ) where A is the mixing matrix. There are t w o main differences b et ween an ICA problem and a BSS problem: First, eac h comp onen t of the x and s in the ICA problem is a random v ariable, whose densit y is estimated by observ ations of the sample data; while the comp onen ts of x ( t ) and s ( t ) are signals, which can b e considered as sto chastic pro cesses. The core difference is ab out the data b eing discrete or con tinuous. Second, b eing random v ariables, the comp onen ts of s in the ICA problem are indep enden t. In BSS, indep endence is not a necessary assumption. Apparen tly , BSS is a highly op en and nondeterministic problem, where b oth A and s ( t ) need to b e determined. F or an y approac h to solve BSS, additional assumption on sources should be made to decrease the infinitely many solutions do wn to a small subset. Similar to ICA, the signals x ( t ) and s ( t ) are commonly assumed to b e zero-mean, and signals in s ( t ) are uncorrelated with eac h other. Under this assumption, most approaches applied principal comp onen t analysis (PCA) to standardize signals as the first step. As early as 1981 ([25]), it was p oin ted out that the information in the sp ectral matrix is not sufficien t for separation, and additional assumptions are needed. The w ork in [22, 9] started to consider higher order statistics, and the assumption of source signals b eing indep endence w as put forth ([9, 12]). F rom then on, the assumption of independence was taken for gran ted b y most approaches (and these are not necessarily restricted to just ICA) ([4, 6]) for BSS. Though the indep endence assumption in ICA is natural, few pap ers p ointed out the difference in mo dels b y applying ICA to BSS. The indep endence of a set of signals is defined by the indep endence of the distributions underlying eac h signal, considering each signal as a sample function from a sta- tionary sto c hastic pro cess whose distribution at each time p oint is identical. By the ergo dicit y of the stationary sto c hastic pro cesses, the sample v alues of the signals can b e used to estimate the sta- tistical prop erties of the distributions, and hence can b e used to estimate the MI and indep endence. This is different from ICA, where data are observ ations of samples of each underlying distribution. Unfortunately , most ICA work, lik e the w ork in [18, 10] just mention that consideration of time t should b e neglected, and the signal v alues should b e considered as a collection of unordered obser- v ations. The dra wback of this p ersp ectiv e is that information contained in the “c hanging o v er time” of signals are ignored. Another p ersp ectiv e is to consider time t as a uniform distributed random v ariable, like the work in [27]. In this wa y , b eing contin uous functions of time, signals can still b e considered as random v ariables and the “c hanging ov er time” information of signals are taken into consideration. Ho w- ev er, under this model, indep endence do es not exist since all signals are functions of the same random v ariable. Therefore, the definition of indep endence of signals is not well-defined under this p erspective. F or BSS, the indep endence assumption is not necessary . Similar to the work in [4, 5], we are dedicated to consider additional information pro vided b y signals as functions, and solve the BSS problems without the assumption of indep endence. Signals can b e either considered as deterministic functions of time, or sto c hastic pro cesses, based on its application. Considering signals as deter- ministic functions, w e extend the Images Stac ks as Parametric Surfaces mo del (ISPS), a p o werful mo del originally designed for image registration, to 1D case (w e call it Signals as P arametric Curves 2 (SPC), accordingly), and apply SPC to BSS. Based on the close relationship b et w een SPC and MI, w e propose geometrical ob jectiv e functions that can approximate the MI-based ob jectiv e functions. W e are also able to analyze signals in the frequency domain b y the F ourier transform of the signals, and prop ose the FT-PCA algorithm, which do es not rely on the indep endence of signals, and fo cuses on the lo cal orthogonalit y in the frequency domain. F or simplicit y , in this pap er, we only focus on the tw o-dimensional case (where there are only tw o observed signals and tw o source signals), and higher dimensional cases can b e extended naturally . The main con tent of this paper is as follo ws: Section 2 briefly introduces w ell-kno wn previous approac hes for BSS, including ICA and second-order-statistics approaches; Section 3 briefly sum- marizes and analyzes the ICA framew ork and its assumption; Section 4 applies SPC to BSS and prop ose geometrical ob jectiv e functions that are comp etitiv e with the traditional MI approac h; Section 5 introduces FT-PCA algorithm based on the assumption of k ernel-orthogonality in the frequency domain; Section 6 shows synthetic sim ulation exp erimen ts and compare our approaches with several w ell-known approac hes, and sho ws the effectiveness of our algorithms; and the pap er is concluded in Section 7 to highligh t our simple y et effective approac hes for BSS. 2 Previous W ork Most approac hes of BSS can roughly be categorized in to tw o classes: high-order statistics based approac hes, or second-order statistics based approaches. ICA approac hes stick to the assumption of indep endence, and try to minimize the en tropies of signals to recov er sources whic h are as in- dep enden t as p ossible; while join t diagonalization approaches try to make use of information and prop erties of second-order statistics of signals to solv e for the unmixing matrix, bypassing the direct usage of indep endence to a v oid higher-order statistics. The w ork in [9, 12, 10] firstly introduced the concept of ICA, and created the indep endence assump- tion as a foundation of BSS. [3] is another well-kno wn pap er that highlighted m utual information based approaches for BSS. In the original ICA framework, the ob jectiv e function w as directly based on the assumption of indep endence: the Kullback divergence of the joint density and the pro duct of marginal densities, i.e. the mutual information. Nev ertheless, its estimation is difficult, and high- order cum ulants w ere introduced to estimate en tropies. The work also suggested a standardization step using PCA to standardize the deviation, and p oin ted out that after PCA, the minimization of MI is equiv alen t to minimization of negen tropies with respect to a sequence of pairwise rotations of signals. The w ork in [16] put forw ard the F astICA algorithm. Based on their previous w ork in esti- mating entropies ([15]), they suggested a set of contrast functions that are muc h simpler to compute than high order cumulan ts in the work in [10]. They also adopted Newton metho d to decrease the time complexit y , so that eac h signal can b e optimized one b y one. The F astICA algorithm is very efficien t and widely used un til now. Another most well-kno wn approach in the ICA category is Ker- nel ICA, prop osed in the w ork in [2]. The goal of Kernel ICA is to maximize the kernel correlation of whitened signals. It constructs an eigen-decomp osition structure, and computes the minim um eigen v alue of a matrix constructed by certain Gram matrices of signal data p oints. Though the idea is somehow close to our kernel orthogonalit y , the approac h is totally different. It is still within the ICA optimization framework. Comparing to the ICA series where signals v alues are used to estimate the indep endence of the underlying distributions, the second-order-statistics class (we call it SOBI series) tried to tak e use of other sto c hastic pro cess prop erties to b ypass the appro ximation of en tropies. AMUSE ([29]) algorithm is an early w ork of these approac hes. Its assumption on source signals is that giv en some 3 time shift τ , the auto-correlation matrix is diagonal but not identit y , i.e. for i 6 = j , E ( s i ( t ) s i ( t − τ )) 6 = E ( s j ( t ) s j ( t − τ )) and E ( s i ( t ) s j ( t − τ )) = 0 . This assumption gran ts another eigen-decomp osition structure than the PCA step, and makes AMUSE an approach where no optimization is required. Ho wev er, not all τ gran ts diagonal matrices. Once the selected τ makes the auto-correlation matrix isomorphic to identit y , the eigen-decomposition giv es trivial results, and AMUSE fails. The work in [4] put forward a joint diagonalization sc heme, and an extended algorithm, named SOBI. Instead of a certain τ , SOBI is based on the assumption that E τ ( s ( t + τ ) s H ( t )) is diagonal, assuming that s is a m ultiv ariate stationary pro cess of b oth t and τ . (It also has an equiv alen t assumption where the exp ectation of τ is defined as arithmetic av erage of a set of different τ ’s.) T o select a bunc h of differen t τ and use the joint diagonalization scheme, SOBI a voided the o ccurrence of a single trivial τ , and is able to solv e the problem by K times matrix diagonalization, where K is the n umber of τ selected. A following work in [5] extended this idea to non-stationary signals, where time-frequency distribution (TFD) ([8]) was introduced. Based on similar fact that the spatial TFD matrices (STFD) of signals b eing diagonal but not iden tit y , eigen-decomposition scheme is also able to b e applied to STFD matrices. Since STFD are dep enden t with time and frequency indices ( t, f ) , and for some sp ecial ( t, f ) , the STFD matrix can b e rank deficient, they again applied joint diagonalization scheme to solve the problem by a set of differen t selected ( t, f ) . STFD is close to our approac h, except that designed for non-stationary signals, the time-frequency domain analysis w as in tro duced. And similar to SOBI, it adopted selection of parameters and join t diagonalization. Though this approach can handle non-stationary signals and Gaussian signals, it was criticized b y complexit y and p erformance ([1, 20]). After this w ork, many following w ork came out based on time-frequency analysis and join t diagonalization ([7]). How ev er, most of them, lik e the work in [14], did not improv e the fact that STFD needs lo cal parameter selection and joint diagonalization, and fo cus on non-stationary signals, whic h is out of the scope of this pap er. The w ork in [2 1] is another one close to ours. It also put forward the assumption of disjoint orthogonalit y . Ho w ever, it and its follo wing w ork, like the w ork in [31], are based on a differen t problem from x = A s where other sp ecial conditions are applied, and therefore, are able to solve for more sources than observed signals. This is also not the focus of this pap er. Other work on BSS with frequency domain analysis, lik e the w ork in [30, 19, 28], though consider the mixing relation b et ween sources and observ ed signals in frequency domain, are differen t from our w ork by assumption, mo del, and algorithms. 3 ICA Revisited 3.1 A T wo-Step F ramew ork T ypically , ICA consists of t w o steps: the ICA optimization following a prewhitening step, where a PCA is p erformed. Though in most w ork ([10, 18]), the prewhitening of the input data x w as tak en for granted, it is also well kno wn that the purpose of the PCA is not merely to “standardize” x so as to make its co v ariance identit y . The key is whether to accept an additional assumption that ss T = I . This assumption was accepted in the pap er of [10] but not in the pap er of [16]. Since b y the assumption of indep endence, the source random v ariable s are uncorrelated. Hence, the assumption of ss T = I only adds an additional condition that each source random v ariable has unit v ariance. In ICA, the scaling of the source random v ariables is nondeterministic, th us the assumption is reasonable. With this assumption, ICA b ecomes a t wo-step algorithm, as analyzed in the following: 4 Expressing A as its singular v alue decomp osition (SVD) A = U A Σ A V T A , (1) and given that ss T = I , we ha ve xx T = A ss T A T = AA T . i.e. C X = U A Σ − 2 A U T A , where C X = xx T . Note that A is not orthogonal, otherwise x are uncorrelated and no PCA is needed. Hence, Σ − 2 A is a diagonal matrix whose main diagonal elements are not equal. And C X = U A Σ − 2 A U T A is a unique eigen decomp osition. This implies that, applying PCA to x , w e can solv e for b oth U A and Σ A . Considering the SVD of the linear mixing matrix A , we can call the equation x = A s = U A Σ A V T A s a “rotation-scaling-rotation” pro cedure (up to some permutation and reflection): V T A is the first rotation applied to s , Σ A applies scalings to s , and U A is the second rotation. F rom ab o v e w e sa w that from the mathematical p oin t of view, the PCA step in fact solve for the second rotation U A and the scaling Σ A . Therefore, a whole ICA pro cedure should b e considered as a tw o-step framework, which is also v ery w ell-known in signal pro cessing literature ([5]): solving for the second rotation U T A and the scaling Σ A b y PCA; and then solving for the first rotation V T A based on other assumptions, like “indep endence” in the w ork in [10], or auto-correlation matrices b eing diagonal in the w ork in [4]. Let’s call the signals after PCA as z , i.e. z = Σ A U T A x , and we ha ve s = V A z . In the t w o-signal cases, the orthonormal matrix V A is just a rotation matrix, up to some reflection and p erm utation. And in higher dimensional cases, it is a composition of a series of rotations (and p ossible reflections) within t wo-dimensional subspaces. This implies an imp ortan t fact, which can also b e noticed from the MI-based ICA ob jectiv e functions, that: The joint entr opy of ˆ V A z is invariant to r otation ˆ V A . Therefore, after the first step of an MI-based ICA, the join t entrop y is already maximized, and the second step is just a searc hing for rotations that minimize the summation of each marginal entrop y . This agrees with the fact that for any MI-based ICA approac h, the true ob jectiv e function is the summation of negentropies X i J ( p z i ) = X i ( H ( φ z i ) − H ( p z i )) where p z i is the densit y of a random v ariable z i , and φ z i is the Gaussian density with the same mean and v ariance as p z i . This w as interpreted as “F ara wa y from Gaussian distribution implies indep endence” ([18]). Note that, during the searc hing of the rotation angle, the mean (standardized as zero) and v ariance do es not chang e for eac h z i , so that H ( φ z i ) do es not c hange, and minimizing the negentrop y is equiv alen t to minimizing the sum of marginal entropies. 5 Figure 1: Scatter plots of example signals indicating the t wo-step framew ork of ICA. Left: the scatter plot of x ( t ) ; Mid: the scatter plot of z ( t ) ; Righ t: the scatter plot of s ( t ) . F rom x ( t ) to z ( t ) , a rotation and a scaling w as applied, while from z ( t ) to s ( t ) , merely a rotation w as applied. This also implies that ICA only v alid for the case where at most one Gaussian comp onen t exists, since if all comp onen ts are Gaussian, after the first step, the resulted distribution is rotational symmetric, given the assumption that ss T = I . This fact can b e understo o d as: under the linear mixing mo del, uncorrelatedness implies maxi- mization of joint en tropy , and that independence and uncorrelatedness only differ b y a series of rotations.Fig. 1 shows an example where we can observe that the seeking of indep endence is a seeking of an angle, so that eac h marginal distribution has as less marginal en tropies as p ossible. 3.2 The Reasonabilit y of the Indep endence Assumption In this section, w e discuss the indep endence assumption formally . The statistical indep endence can b e defined from t wo different persp ectives: the signals b eing deterministic functions, or sto chastic pro cesses. F rom the sto c hastic pro cess p oin t of view, we consider the source signals s ( t ) = ( s 1 ( t ) , . . . , s M ( t )) b eing sample functions of contin uous stationary sto c hastic pro cesses ˜ s ( t ) = ( ˜ s 1 ( t ) , . . . ˜ s M ( t )) . F or an y p ositiv e in teger n , pic k time p oin ts t 1 , t 2 , . . . , t n ∈ D and an y time in terv al ∆ t ∈ D , where D is the time domain, for i = 1 , 2 , . . . , M , the random v ector ( ˜ s i ( t 1 ) , ˜ s i ( t 2 ) , . . . , ˜ s i ( t n )) and ( ˜ s i ( t 1 + ∆ t ) , ˜ s i ( t 2 + ∆ t ) , . . . , ˜ s i ( t n + ∆ t )) has identical distribution p ˜ s i . The indep endence of the signals are defined as the indep endence of p ˜ s i for i = 1 , 2 , . . . , M . By the ergo dicit y theorem of the stationary sto c hastic pro cesses, the v alues of the sample functions – the source signals – can be used to estimate the entrop y of underlying distribution, and compute their m utual information. Therefore, assuming independence of the distributions underlying a set of signals is reasonable, and hence ICA can b e directly applied to BSS with the independence assumption. Ho wev er, “indep endence” is not the truth, but just an assumption to admit so that ICA can be applied to BSS. It is not p erfect, and has the following disadv an tages: Firstly , the sto c hastic pro cess mo del of signals disregards the deriv ativ e information contained in the signals. That is, if w e reorder the signal sample v alues, there are no difference from the stochastic process p ersp ectiv e. W e assert that an approac h may w ork for more cases if it tak es the deriv ativ e information in to consideration. Secondly , there exists pairs of source signals that are generated and sampled “indep enden tly”, but b y ICA, i.e. b y the minimization of sum of marginal entropies, the original signals ma y not b e reco vered. See Fig. 2. This indicates the fact that the indep endence assumption may not b e the 6 Figure 2: A Counterexample for the indep endence assumption. Left: the scatter plot of x ( t ) ; Mid-left: the scatter plot of z ( t ) ; Mid-right: the scatter plot of s ( t ) ; Righ t: the scatter plot of y ( t ) recov ered b y minimizing sum of marginal en tropies. The source signals s ( t ) has larger sum of marginal entropies than the minim um case, and hence MI is not able to solve for the true source signals from the mixed observ ation x ( t ) . most reasonable assumption for these source signals. Finally , ICA do not w ork for the case where at least tw o source signals are Gaussian, as w e mentioned ab o v e. 4 Applying SPC to BSS 4.1 The SPC Mo del Based on the effectiv eness of the MI-based ICA for most cases of BSS, as w ell as the disadv antages of the sto c hastic pro cess mo del of signals underlying ICA for BSS, we need a different approac h that is based on the deterministic function mo del of signals where deriv atives of the signals are av ailable, and is closely related to MI. SPC is one of the b est c hoices. The SPC mo del is expressed as follows: Supp ose that we hav e a set of 1D signals { s 1 ( t ) , s 2 ( t ) , . . . , s N ( t ) } defined on the domain of time D ⊂ R . Consider the mapping S : D → R N +1 b y t 7→ ( t, s 1 ( t ) , . . . , s N ( t )) , and w e hav e a 1D parametric curv e S ( t ) em b edded in an N + 1 dimensional Euclidean space. And its curve arc length is Z D v u u t 1 + N X i =1 ( s 0 i ( t )) 2 d t. Analogizing the ISSRA ob jective function in 2D case, w e hav e the follo wing ob jectiv e function: O 1 = Z √ N Q N i =1 2 N q 1 N + ( s 0 i ( t )) 2 q 1 + P N i =1 ( s 0 i ( t )) 2 d t (2) where N is the num b er of signals. W e can call it the “Signal P arametric Curve Relativ e Arc Length”, comparing to the name of ISSRA in the ISPS mo del. Note that in Eq. 2, comparing to ISSRA, the denominator and numerator of the in tegrand are flipp ed. In ISSRA, the join t area is to b e minimize for getting images similar, so it is in the n umerator. And here we wan t to minimize O 1 to get signals as separated as p ossible, so we flip the 7 in tegrand in order to fit this “opp osite” problem, b y putting the pro duct of each arc length in the n umerator and the joint arc length in the denominator. 4.2 The Relationship with MI In order to discuss the relationship betw een SPC and MI, w e need to consider the pseudo-SPC mo del and understand the signals b eing random v ariables as functions of time. Consider time t as a uniformly distributed random v ariable, each signals s i ( t ) b eing a differen tiable function of t is also a random v ariable. T o estimate the join t entrop y of the “stac k of signals”, the pseudo-SPC ˜ S : D → R N b y t 7→ ( s 1 ( t ) , . . . , s N ( t )) is considered. The difference betw een SPC S and pseudo-SPC ˜ S is that the first dimension t do es not app ear in ˜ S , and ˜ S is not injective, similar to the relationship b et w een ISPS and pseudo-ISPS. Unfortunately , similar to the fact that MI-based registration approach is not applied to group wise case, because of the disagreemen t of dimensionalit y , the Leb esgue measure of ˜ S ( t ) embedded in R N is zero, and the join t density does not exist. And from the statistics p oin t of view, it is also clear that, since each signal is a function of t , there is no independence defined for the set of all signals. This implies that under the pseudo-SPC point of view, MI is not able to b e computed to solv e the BSS problem. Ho wev er, in Section 3.1, we discuss the t wo-step framew ork of the ICA problem. W e p ointed out that in ICA the joint density and join t entrop y is nev er considered. In the second step, no join t en tropy is computed, but just the sum of marginal entropies. F ortunately , in the pseudo-SPC p erspective, the 1D marginal entrop y of each signal is w ell-defined. And by the close relationship b et w een it and the SPC model, we are still able to apply O 1 to BSS to approximate the “MI”, i.e. the sum of marginal en tropies, to solv e for unmixed signals. In fact, lo oking at O 1 carefully , w e notice that the joint arc length (the denominator) is also in v arian t to rotations, whic h means that in the second step of ICA where different rotation matrices are applied, the denominator do es not c hange either. This also meets the fact that O 1 is closely related to MI, where the joint en tropy part does not c hange with resp ect to rotations. And w e can simplify O 1 to get O 2 = Z N Y i =1 q 1 + ( s 0 i ( t )) 2 d t where only the marginal arc lengths are computed. Clearly , O 2 is related to the true ob jective function, the sum of marginal entropies, in the traditional MI approaches for ICA, and can b e considered as the ob jective function deriv ed from the SPC mo del. 4.3 Geometrical Ob jective F unctions for BSS Applying SPC to BSS, and considering the tw o-step framework of ICA, w e prop osed the ob jectiv e function O 2 , the pro duct of marginal arc lengths, by its close relationship with the ob jectiv e function of traditional MI approach. Hence, in the second step of ICA, given z ( t ) as the inputs, we can apply a rotation matrix R to get y ( t ) = R z ( t ) 8 and computes the ob jective functions of y ( t ) to solve for best ˆ y ( t ) that approximates s ( t ) best. The optimization can be done either by brute-force searc h, or gradien t descent algorithm since the ob jective function O 2 is smo oth and conv ex (see Section 6). In this pap er, for simplicity we only do brute-force search for each ob jective function for comparison. W e also prop ose some other ob jective functions which ha v e similar structures as O 2 : O 3 = Z N Y i =1 | y 0 i ( t ) | d t O 4 = Z log N Y i =1 | y 0 i ( t ) | d t O 5 = N X i =1 Z q 1 + ( y 0 i ( t )) 2 d t All these abov e ob jectiv e functions come from the arc lengths of each signals, and are named geometrical ob jectiv e functions for BSS. Comparing with the ob jectiv e function of sum of marginal en tropies, the adv antages of these func- tions are: they computes easier and faster than estimation of densities; they consider the deriv ative information of signals; they do not assume the independence, and w ork for the case where sources are not indep endent (for example, the counterexample sho wn in Fig. 2). Other than this dissertation, there do exist previous work that prop osed other functions approxi- mating the traditional MI ob jectiv e functions. The most famous ones are the following, prop osed in the work in [16]: G 1 ( y ) = 1 a 1 log cosh( a 1 y ) G 2 ( y ) = − 1 a 2 exp( − a 2 y 2 / 2) G 3 ( y ) = 1 4 y 4 where a 1 and a 2 are hyperparameters. In Section 6.1 w e sho w the function graph of eac h of the ab o v e ob jective functions. The results sho wed that all these geometrical ob jectiv es and the contrast functions agree at similar global minim um, up to some appro ximation error, which indicates that all these ob jectiv e functions hav e similar b eha viors in the BSS problems, and are effectiv e approac hes. How ev er, among them, the geometrical ob jectives ha ve significan t b etter precision, esp ecially O 3 and O 5 , which indicates that the geometrical ob jective functions not only share go od prop erties with the con trast functions, but also ha ve better p erformance. Therefore, they are comp etitiv e replacements of contrast functions, and hav e b oth theoretical and practical p oten tials. 5 F requency Domain Approac hes and the New Assumption 5.1 Motiv ation Among the new ob jectiv e functions prop osed ab o ve, O 3 ( R ) = R | y 0 1 ( t ) y 0 2 ( t ) | d t has the simplest form ula. An immediate question then comes up: do es it work if we simplify it further b y taking 9 Figure 3: Coun terexamples of the assumption of deriv ativ e orthogonalit y . Eac h figure sho ws a scatter plot of the deriv ativ es of a pair of source signals. F rom the figures w e can observe that the deriv ativ es are not orthogonal. The co v ariance of eac h pair of deriv ativ es are: (in order) 0.0195, 0.0881, 0.0580, which are relatively large. a wa y the absolute v alue sign, i.e. ˜ O 3 ( R ) = R y 0 1 ( t ) y 0 2 ( t )dt ? F rom the exp erimen t results in Section 6.1, w e can observ e that it has worse p erformance than O 3 , but its error was acceptable for a practical BSS task. F or differen t s 1 ( t ) and s 2 ( t ) , most likely R ( s 0 1 ( t )) 2 d t 6 = R ( s 0 2 ( t )) 2 d t , then supp ose that the minimiza- tion of ˜ O 3 ( R ) leads to min Z y 0 1 ( t ) y 0 2 ( t )d t = Z s 0 1 ( t ) s 0 2 ( t )d t = 0 , This induces the actual assumption of ˜ O 3 , other than approximating MI. Accepting this assumption, w e can solv e BSS b y solving a PCA problem on the deriv ativ es of giv en signals z 1 ( t ) and z 2 ( t ) : Supp ose that ˆ R is the correct rotation matrix to be solved, i.e. s ( t ) = ˆ R z ( t ) . T aking deriv ativ es on b oth sides, we ha v e s 0 ( t ) = ˆ R z 0 ( t ) . (3) Hence, Z z 0 ( t )( z 0 ( t )) T d t = ˆ R T Z s 0 ( t )( s 0 ( t )) T d t ˆ R. Since R ( s 0 1 ( t )) 2 d t 6 = R ( s 0 2 ( t )) 2 d t and R s 0 1 ( t ) s 0 2 ( t )d t = 0 , R s 0 ( t )( s 0 ( t )) T d t is a nontrivial diagonal matrix. Therefore, similar to the first PCA step, b y eigen decomp osition of R z 0 ( t )( z 0 ( t )) T d t , we are able to get ˆ R . W e call this approac h Deriv ativ e-PCA. The Deriv ative-PCA approac h is based on the assumption that R ( s 0 1 ( t )) 2 d t 6 = R ( s 0 2 ( t )) 2 d t and R s 0 1 ( t ) s 0 2 ( t )d t = 0 , R s 0 ( t )( s 0 ( t )) T d t . In other words, for a BSS problem, supp ose that w e do not ha ve any assumption on indep endence, but an assumption on orthogonalit y of deriv atives of the source signals, and then we are able to solv e for the second rotation by another PCA step on the deriv ativ e signals. As what we discuss in Section 1, a BSS problem is highly op en, and it can only be solved with restrictions based on assumptions. How ev er, assumptions are not true or false. An assumption w orks if it meets the real cases. Just lik e what we discussed ab o ve: in most cases the indep endence assumption works, but there are also counterexamples. Similarly , if most source signals that are sampled “indep enden tly” ha ve orthogonal deriv atives, then the ab ov e approac h w ould give correct approximations to the sources. Unfortunately , practically sp eaking, it is easier to find coun terexamples for the assumption of orthogonal deriv ativ es than the assumption of indep endence. Fig. 3 sho ws one of the examples where the original signals do not ha ve orthogonal deriv ativ es. Empirically , we ma y assert that the orthogonality on deriv ativ e signals is not go o d enough as an assumption. Ho wev er, this approac h is inspiring, whic h giv es the motiv ation of this section: finding a reasonable assumption that can construct a PCA-lik e structure for solving BSS, bypassing the optimization 10 pro cedure. This approach falls in the second-order-statistics category for BSS. And as w e discussed in Section 2, AMUSE, SOBI, and STFD are well-kno wn approac hes in this category . In the follo w- ing, w e prop ose a new approac h FT-PCA following this idea, and discuss the reasonabilit y of its assumption comparing with AMUSE and SOBI. 5.2 The F ourier T ransform Approach The goal of the second step is to solve for s ( t ) and ˆ R from s ( t ) = ˆ R z ( t ) . Applying F ourier transform (FT) on b oth sides, w e hav e S ( ω ) = ˆ R Z ( ω ) , where ω ∈ R is the frequency , S ( ω ) is the FT of s ( t ) , and Z ( ω ) is the FT of z ( t ) . By Parsev al’s Theorem, w e know that Z | Z i ( ω ) | 2 d ω = Z ( z i ( t )) 2 d t = 1 Z | S i ( ω ) | 2 d ω = Z ( s i ( t )) 2 d t = 1 for t = 1 , 2 , and Z Z 1 ( ω ) Z 2 ( ω )d ω = Z z 1 ( t ) z 2 ( t )d t = 0 Z S 1 ( ω ) S 2 ( ω )d ω = Z s 1 ( t ) s 2 ( t )d t = 0 . Therefore, R Z ( ω )( Z ( ω )) H d ω = R S ( ω )( S ( ω )) H d ω = I are b oth the identit y matrix. The ab o ve transformation giv es trivial results since ˆ R is not able to b e solved from Z Z ( ω )( Z ( ω )) H d ω = ˆ R T Z S ( ω )( S ( ω )) H d ω ˆ R whic h is equiv alent to I = ˆ R T I ˆ R = I . In fact, an y c hange of basis applied to the function space of the signals ha ve similar results, due to the Parsev al’s Theorem. Ho w ever, inspired b y the ab o ve deriv ativ e orthogonality assumption, w e can apply kernel tric ks as follo ws: Multiplying b oth sides of S ( ω ) = ˆ R Z ( ω ) by a certain complex function φ ( ω ) that is nonzero on a set with p ositive Lebesgue measure, w e ha v e φ ( ω ) S ( ω ) = φ ( ω ) ˆ R Z ( ω ) . Let K ( ω ) = φ ( ω ) φ ( ω ) b e the kernel function, then the elemen ts of the cov ariance matrices R K ( ω ) Z ( ω )( Z ( ω )) H d ω and R K ( ω ) S ( ω )( S ( ω )) H d ω b ecome inner pro ducts of FT of signals in the k ernel space defined by K ( ω ) , and we ha v e Z K ( ω ) Z ( ω )( Z ( ω )) H d ω = ˆ R T Z K ( ω ) S ( ω )( S ( ω )) H d ω ˆ R. F or conv enience, let us name eac h of the elements in the ab o ve matrices as follows: S ij = Z K ( ω ) S i ( ω ) S j ( ω )d ω 11 and Z ij = Z K ( ω ) Z i ( ω ) Z j ( ω )d ω under the case where K ( ω ) has no ambiguit y , then w e can write that Z K ( ω ) Z ( ω )( Z ( ω )) H d ω = Z 11 Z 12 Z 21 Z 22 (4) and Z K ( ω ) S ( ω )( S ( ω )) H d ω = S 11 S 12 S 21 S 22 . Supp ose there exists a k ernel space defined by K ( ω ) , so that S 11 6 = S 22 and S 12 = 0 , then R K ( ω ) S ( ω )( S ( ω )) H d ω is a nontrivial diagonal matrix, and ˆ R can b e solved by eigen decomp osition of R K ( ω ) Z ( ω )( Z ( ω )) H d ω by the uniqueness prop ert y of eigen decomp ositions. F ormally , supp ose that R K ( ω ) S ( ω )( S ( ω )) H d ω is a diagonal but not the identit y matrix, the eigen decomp osition of R K ( ω ) Z ( ω )( Z ( ω )) H d ω can b e written as Z K ( ω ) Z ( ω )( Z ( ω )) H d ω = E T Λ E . Then R K ( ω ) S ( ω )( S ( ω )) H d ω and Λ only differ by row switc hing, and E and ˆ R only differ by row switc hing and signs. This approac h of solving for the second rotation in BSS is called FT-PCA. Note that in the ideal case where S 12 = S 21 = 0 , R K ( ω ) S ( ω )( S ( ω )) H d ω is a real matrix. And since ˆ R is real, R K ( ω ) Z ( ω )( Z ( ω )) H d ω is also a real matrix. Therefore, under the ideal kernel K ( ω ) , w e only need to consider the real part of the matrix R K ( ω ) Z ( ω )( Z ( ω )) H d ω , and consider the imaginary part as error. The key p oin ts of FT-PCA are the reasonability of assuming the k ernel orthogonalit y , i.e. S ij = R K ( ω ) S i ( ω ) S j ( ω )d ω = 0 for i 6 = j , and if this is reasonable, how to find the kernel K ( ω ) . F rom Section 5.1 we know that the approac h of Deriv ativ e-PCA works for some inputs, but do es not work for others. Note that by applying FT to b oth sides of Eq. 3, w e hav e ω S ( ω ) = ω ˆ R Z ( ω ) , and the Deriv ative-PCA approach is just a sp ecial case of FT-PCA where the k ernel K 1 ( ω ) = ω 2 . This candidate kernel w orks for some inputs, but not p erfect since there exist coun terexamples. By noticing the function graph of K ( ω ) = ω 2 , we observe that this kernel is similar to a window function that focuses on the high frequency in terv als of the source signals, and hence, an immediate alternativ e option comes up: K 2 ( ω ) = 1 1 + | ω | whic h gran ts lo w frequency parts of the signals more weigh ts. See Fig. 4. Certainly , we can generalize it by K 3 ( ω ) = 1 1 + | ω − ω 0 | (5) where ω 0 is the cen ter of this window-lik e function. By picking differen t ω 0 ’s, K ( ω ) fo cuses on differen t in terv als of the frequency domain b y giving that in terv al higher w eigh ts, so as to gran t S 12 close to zero and S 11 6 = S 22 . If there exists an ideal ω 0 so that the k ernel orthogonalit y assumption holds, then FT-PCA can theoretically solve the BSS problems. In Section 5.3 w e sho w that the k ernel orthogonalit y assumption is reasonable, and in Section 5.4 we show that the ideal ω 0 is not a v ailable, but provide a strategy to searc h for goo d ω 0 to practically solve BSS using FT-PCA. With ω 0 as the hyper-parameter, w e hav e the FT-PCA Algorithm describ ed as 1: 12 Algorithm 1 The FT-PCA algorithm with ω 0 as a hyper-parameter. Input: signals x ( t ) , the hyper parameter ω 0 . First Step: 1. Centering x by x ← x − ¯ x ; 2. Let x = U Σ V T b e the SVD of x ; 3. Compute z = Σ − 1 U T x ; Second Step: 4. Compute the FT of z as Z ( ω ) ; 5. K ( ω ) = 1 1+ | ω − ˆ ω 0 | ; 6. Compute eigen decomp osition of the matrix Re( R K ( ω ) Z ( ω )( Z ( ω )) H d ω ) = E Λ E T ; 7. S ( ω ) = E T Z ( ω ) ; 8. Compute the in verse FT of S ( ω ) as s . Output: the separated signals s ( t ) . -0.5 0 0.5 0 0.05 0.1 0.15 0.2 0.25 -0.5 0 0.5 0.65 0.7 0.75 0.8 0.85 0.9 0.95 1 Figure 4: The function graph of K 1 ( ω ) and K 2 ( ω ) . Left: the function graph of K 1 ( ω ) whic h giv es higher w eights to high frequency parts; Righ t: the function graph of K 2 ( ω ) whic h giv es higher w eights to lo wer frequency parts. By extending K 2 ( ω ) to K 3 ( ω ) , the cen ter shift ω 0 gran ts the k ernel focusing on frequency parts defined b y user. 13 5.3 The Reasonabilit y of the Assumption Firstly , let’s discuss the assumptions of the previous approaches. The assumptions of the second- order-statistics approaches SOBI and AMUSE are deficien t. The assumption of AMUSE is that lim T →∞ 1 T T X t =1 s ( t + τ ) s ( t ) ∗ = diag[ ρ 1 ( τ ) , . . . , ρ n ( τ )] for a certain τ . And the assumption of SOBI shares the same formula, except that it is true for a set of different τ ’s. Both algorithms did not give a clear approach to determine which τ satisfies the assumption. If we assume that for any τ , s i ( t + τ ) s j ( t ) = 0 , it implies that the correlation of the tw o functions s i ( t ) ? s j ( t ) = Z f ( τ ) g ( t + τ )d τ = 0 and hence, S i ( ω ) S j ( ω ) = 0 where S i ( ω ) is the F ourier transform of s i ( t ) . Obviously , except for sp ecial cases, the multiplication of the F ourier transform of tw o signals cannot be a zero function. Therefore, this assumption is to o strong. If w e cannot assume the auto correlation b eing zero for an y time shift τ , the assumption is not complete since we are not able to aw are of how to select the correct time shifts. And most imp ortan tly , it do es not mak e ph ysical sense wh y suc h time shifts should exist so that the auto- correlation of the source signals are zero. The SOBI algorithm introduces the join t diagonalization strategy , to select a collection of τ ’s, and compute based on the av erage pattern of the co v ariance matrix, in order to b ypass the deficiency of their assumption. The case of STFD is similar, where the assumption is that sp ecial time and frequency shifts t and f can b e selected so that the co v ari- ance matrix has the diagonal structure. This is not guaran teed b y theory , since only v ery strong assumption can guaran tee the diagonal structure for any shifts t and f . And the algorithm has to apply joint diagonalization. Therefore, since the assumption of SOBI and STFD are either to o strong or not applicable, their algorithms are heuristic. Comparing to the incompleteness of the assumptions of previous approaches, we discuss the as- sumption of FT-PCA in the following. The first issue that we need to discuss is the existence of K ( ω ) . Supp ose that | S 1 ( ω ) | 2 6 = | S 2 ( ω ) | 2 on a subset D ⊂ R where m( D ) > 0 . (Without loss of generalit y , we can supp ose that | S 1 ( ω ) | 2 − | S 2 ( ω ) | 2 > 0 on D , since if there exists a subset with p ositiv e Leb esgue measure suc h that | S 1 ( ω ) | 2 6 = | S 2 ( ω ) | 2 , w e can alwa ys pic k a subset of it suc h that | S 1 ( ω ) | 2 > | S 2 ( ω ) | 2 or | S 1 ( ω ) | 2 < | S 2 ( ω ) | 2 .) Then the nonnegativ e k ernel function K ( ω ) with R K ( ω )d ω > 0 exists so that S 11 6 = S 22 , since w e can alwa ys pick K ( ω ) = 1 D ( ω ) where Z K ( ω )d ω = m( D ) > 0 . On the other hand, supp ose that | S 1 ( ω ) | 2 6 = | S 2 ( ω ) | 2 only on a subset of the frequency domain with zero Leb esgue measure, then K ( ω ) does not exist. Because Z ( | S 1 ( ω ) | 2 − | S 2 ( ω ) | 2 ) 2 d ω = 0 , 14 and hence, for an y K ( ω ) , | S 11 − S 22 | = | Z K ( ω )( | S 1 ( ω ) | 2 − | S 2 ( ω ) | 2 )d ω | ≤ Z | K ( ω )( | S 1 ( ω ) | 2 − | S 2 ( ω ) | 2 ) | d ω ≤ Z ( K ( ω )) 2 d ω 1 / 2 Z ( | S 1 ( ω ) | 2 − | S 2 ( ω ) | 2 ) 2 d ω 1 / 2 = 0 This indicates that, if the tw o source signals hav e the same energy density almost ev erywhere, no k ernel functions exist so that the t wo signals can be separated by FT-PCA. Therefore, w e hav e an necessary condition for the source signals: FT-PCA do es not w ork for signals whose p o w er sp ectral densities are the same. This necessary condition excludes the cases where the source signals are too close, for example s 1 ( t ) = sin t and s 2 ( t ) = cos t . Secondly , supp ose that there exists an in terv al D ⊂ R such that all the follo wing conditions are satisfied: 1. S 1 ( ω ) 6 = 0 on a subset D 1 ⊂ D with m ( D 1 ) > 0 2. S 2 ( ω ) 6 = 0 on a subset D 2 ⊂ D with m ( D 2 ) > 0 3. D 1 ∩ D 2 = ∅ Then w e immediately hav e that R D | S 1 ( ω ) | 2 d ω 6 = R D | S 1 ( ω ) | 2 d ω and R D S 1 ( ω ) S 2 ( ω )d ω = 0 . The ph ysical meaning of the these conditions can b e in terpreted directly: if there exists an interv al on whic h the tw o source signals hav e exclusive sp ectral density , then FT-PCA works. This sufficien t condition gives us a clear in tuition of the reasonability of FT-PCA. The nature of the second-order- statistics approach is to find a subset of the domain (either time domain or frequency domain) with p ositiv e Lebesgue measure where the source signals are clearly differen t. Since the linear com bination matrix A is applied to the whole domain, the BSS problem can b e solv ed algebraically b y finding a subset where the source signals hav e the characteristics to b e separated. And the reason to pick frequency domain as the approach is clear: in practice, it makes sense that different source signals almost alwa ys hav e different density distributions, and it is almost alw ays p ossible to find subsets (no matter ho w small it is) where the sp ectrums are approximately exclusive. On the other hand, using other p ossible assumptions is less practical, for example, trying to find an interv al in time domain where the signals hav e exclusive subsets is unlik ely , and thus these kinds of approaches do not work. Practically , since no sp ectral functions contain subsets where the sp ectral p o w er is exactly zero, within acceptable error, if there exists an interv al on whic h one density function has large v alues while the other is close to zero, and vise versa, then the abov e conditions can b e appro ximately satisfied. See Fig. 5. And in practice we do not use a true windo w function as the k ernel but the Eq. 5, in order to make the approximation more smo oth. On the other hand, from the exp erimental p oin t of view, we observe that, for each pair of source signals w e examined, there alwa ys exists a best ω 0 suc h that R K ( ω ) S ( ω )( S ( ω )) H d ω is close to a non trivial diagonal matrix most. And suppose that we kno w this sp ecific ω 0 for this pair of source signals, w e are able to solv e the BSS nearly p erfectly using FT-PCA, where the error is extremely small. See Fig. 6. This also supp orts the reasonabilit y of the assumption of FT-PCA. 15 -0.114 -0.113 -0.112 -0.111 -0.11 -0.109 -0.108 -0.107 -1 -0.8 -0.6 -0.4 -0.2 0 0.2 0.4 0.6 0.8 1 -0.114 -0.113 -0.112 -0.111 -0.11 -0.109 -0.108 -0.107 -1 -0.8 -0.6 -0.4 -0.2 0 0.2 0.4 0.6 0.8 1 Figure 5: An example of an interv al where tw o source signals has appro ximately exclusiv e spectral densit y . The figures sho w the segmen ts of the F ourier transform of each signal in this specific interv al (only the real parts). The v ariance of the left signal in this interv al is 0.1626; the v ariance of the righ t signal in this interv al is 0.0980l and the co v ariance of them is -0.0036+0.0017i. 0 100 200 300 400 500 0 0.5 1 1.5 2 2.5 3 3.5 10 -5 0 100 200 300 400 500 0 0.2 0.4 0.6 0.8 1 1.2 10 -3 0 100 200 300 400 500 0 1 2 3 4 5 6 10 -5 0 100 200 300 400 500 0 0.2 0.4 0.6 0.8 1 1.2 10 -4 Figure 6: Examples of best ω 0 ’s that diagonalize R K ( ω ) S ( ω )( S ( ω )) H d ω . Each figure sho ws the Re( S 12 ) with resp ect to ω 0 for a different pair of input source signals. In each example, there alwa ys exists a b est ω 0 so that Re( S 12 ) is close to zero the most. With these b est ω 0 ’s, the FT-PCA result has errors (from left to righ t): 0.000130, 0.0133, 0.00028, 0.00003, which are m uc h smaller than any other approaches. Please note that, these ω 0 ’s are the ideal cases, based on the analysis of Section 5.4, w e kno w that there do not exist approac hes to searc h for these ideal ω 0 ’s. W e can only use heuristic strategies to searc hing for go od ω 0 ’s whic h has larger errors than these perfect solutions. This figure is sho wn to support the reasonabilit y of the assumption of FT-PCA. 16 5.4 The Heuristic Strategy to Searc h for ω 0 Unfortunately , though FT-PCA has reasonable assumptions and solid theory , practically it is not easy to search for the ideal in terv al where the source signals are exclusive only based on the in- put signals z ( t ) . This means that for the searc hing of ω 0 , there are no theory to guaran tee the optimization. See the follo wing analysis: Our task is to apply different ω 0 as the shifts of the kernel function in K ( ω ) = 1 1 + | ω − ω 0 | , and searc h for b est ω 0 so that | S 11 − S 22 | is not close to zero while | S 12 | is minimized, based on the v alues of Z ij for i, j = 1 , 2 that w e computed according to each ω 0 that we apply . Practically , we need R K ( ω ) S ( ω )( S ( ω )) H d ω more “diagonal” than R K ( ω ) Z ( ω )( Z ( ω )) H d ω . Without loss of generalit y , we can write the rotation matrix ˆ R = cos θ − sin θ sin θ cos θ for a certain rotation angle θ . Then, b y Z K ( ω ) Z ( ω )( Z ( ω )) H d ω = ˆ R T Z K ( ω ) S ( ω )( S ( ω )) H d ω ˆ R w e ha v e Z 11 = cos 2 θ S 11 + 2 cos θ sin θ Re( S 12 ) + sin 2 θ S 22 Z 12 = cos θ sin θ ( S 22 − S 11 ) + (cos 2 θ − sin 2 θ )Re( S 12 ) + Im( S 12 ) (6) Z 21 = cos θ sin θ ( S 22 − S 11 ) + (cos 2 θ − sin 2 θ )Re( S 12 ) − Im( S 12 ) Z 22 = sin 2 θ S 11 − 2 cos θ sin θ Re( S 12 ) + cos 2 θ S 22 . Clearly , eac h Z ij is a mixture of S 11 , S 22 , and S 12 , and | S 11 − S 22 | and | S 12 | cannot b e solv ed separately b y Z ij without kno wing θ . And we are not able to understand the changing of | S 11 − S 22 | and | S 12 | b y observing the changing of Z ij , either. Hence, theoretically there is no wa y to guarantee that the optimized ω 0 can b e searched based on the Z ij v alues w e observed. Ho wev er, there exist heuristic strategies to search for go o d ω 0 . F rom the eigen decomposition structure of the equation Z K ( ω ) Z ( ω )( Z ( ω )) H d ω = ˆ R T Z K ( ω ) S ( ω )( S ( ω )) H d ω ˆ R and the relationship of traces and determinan ts, we observ e that: Z 11 + Z 22 = S 11 + S 22 (7) and Z 11 Z 22 − | Z 12 | 2 = S 11 S 22 − | S 12 | 2 . (8) F rom Eq. 7 and 8, w e hav e ( Z 11 − Z 12 ) 2 + 4 | Z 12 | 2 = ( S 11 − S 22 ) 2 + 4 | S 12 | 2 17 0 100 200 300 400 500 0 10 20 30 40 50 60 f1 f2 f3 0 100 200 300 400 500 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 f1 f2 f3 245 250 255 260 265 270 275 280 285 290 295 0 0.005 0.01 0.015 0.02 0.025 0.03 0.035 0.04 0.045 0.05 f1 f2 f3 Figure 7: The function graphs of f 1 , f 2 , f 3 with resp ect to the k ernel shift ω 0 . Left: The o verall function graphs. Mid: A closer view by showing the function v alues within range [0 , 1] . Righ t: The function graphs around the global minim um, where f 2 falls b elo w f 3 . The go o d ω 0 can b e selected at the p oin ts outside the crossing of f 2 and f 3 , where f 3 is small enough and f 2 is not close to zero. where the left hand side is av ailable, while the right hand side con tains the sum of squares of the t wo terms that we care ab out most. F or con venience, let’s name them as follo ws: f 1 = ( Z 11 − Z 12 ) 2 + 4 Z 2 12 f 2 = ( S 11 − S 22 ) 2 f 3 = 4 | S 12 | 2 . Since f 3 is close to zero, the v alues of f 1 is dominated by f 2 . How ev er, maximizing f 1 is not a goo d strategy , since we do not need F 2 to b e maximized but just not close to zero, and practically by maximizing f 1 , the part of f 3 gets larger whic h leads to worse solution, since the diagonal prop ert y of R K ( ω ) S ( ω )( S ( ω )) H d ω is more sensitive with the c hanging of f 3 . F rom Fig. 7 we can hav e an in tuitive idea ab out the absolute v alues of f 1 , f 2 , f 3 . F rom the figure, as w ell as observ ations on other examples, w e notice that, minimizing instead of maximizing f 1 should b e a b etter solution, since the magnitude of f 3 will b e con trolled while f 1 decreases, which can guaran tee f 3 b eing close to zero. After the minimum of f 1 is found, w e need to mo ve the shift ω 0 steps aw a y from the arg min f 1 . This is b ecause that there exists a small interv al around arg min f 1 where f 2 drops hea vily so as to b e even less than f 3 , and practically this will lead to R K ( ω ) S ( ω )( S ( ω )) H d ω getting to o close to the identit y matrix. By moving aw a y a certain distance from the minimum p oin t, we are able to ha ve f 2 significan tly larger than f 3 , and practically this can b e considered as | S 11 − S 22 | being far from zero while | S 12 | is close to zero. F rom the exp erien t results shown in Section 6, through this heuristic strategy we w ere able to get notable results ev en better than SOBI. 6 Exp erimen ts 6.1 The Beha viors of the Geometrical Ob jectiv e F unctions Firstly , we show the function graphs of different ob jectiv e functions prop osed in Section 4.3, and compare them with MI and con trast functions prop osed for F astICA. W e randomly pick ed tw o natural signals (tw o segments of true audio files) s 1 ( t ) and s 2 ( t ) , normalized them by remo ving their mean and standardizing their cov ariance matrix, and then applied a random 2 × 2 mixing matrix A to get the mixed signal observ ations x 1 ( t ) and x 2 ( t ) . Then the first step – PCA w as op erated on the observed signals to get the standardized signals z 1 ( t ) and z 2 ( t ) . Finally , w e searc hed the angle θ from − π to π , and computed the reco vered signals y 1 ( t ) and y 2 ( t ) by 18 -2 -1.5 -1 -0.5 0 0.5 1 1.5 2 Rotation Angle -2.5 -2 -1.5 -1 -0.5 0 0.5 1 1.5 Normalized Obj Values mi issra con1 con2 con3 obj1 obj2 obj3 obj4 -0.75 -0.7 -0.65 -0.6 -0.55 -0.5 -0.45 -0.4 -0.35 Rotation Angle -2.6 -2.4 -2.2 -2 -1.8 -1.6 -1.4 -1.2 -1 -0.8 -0.6 Normalized Obj Values mi issra con1 con2 con3 obj1 obj2 obj3 obj4 Figure 8: Comparison of different ob jectiv e functions for the second step. Left : The ov erall ob jective function graphs. Righ t : The ob jective function graphs in a neigh b orho od of the global minimum. T able 1: A verage error of each ob jective function with MI. O 1 O 2 O 3 O 4 O 5 ˜ O 3 G 1 G 2 G 3 Error (rad) 0.0623 0.0623 0.0621 0.4484 0.0624 0.0680 0.3457 0.0706 0.3463 y θ ( t ) = R ( θ ) z ( t ) where R ( θ ) is the rotation matrix cos θ − sin θ sin θ cos θ of each rotation angle θ , and computed the v alues of eac h ob jectiv e functions, i.e. Ob j( y θ ( t )) . Fig. 8 sho ws the ob jective function graphs with respect to the rotation angle. The ob jective v alues sho wn in the figure were normalized (removing means and divided b y standard deviations) so that they are comparable. In the figures, ’mi’ means the original MI ob jectiv e, i.e. the sum of marginal en tropies; ’issra’ is the ob jectiv e function O 1 in Section 4.3; ’con1’ to ’con3’ are the F astICA contrast functions G 1 to G 3 ; and ’ob j1’ is O 3 , ’ob j2’ is O 2 , ’ob j3’ is O 4 , and ’ob j4’ is O 5 , in Section 4.3. F rom Fig. 8, we observe that for all the ob jectiv e functions, their function graph has similar shap es, and the global minimum are very close. This shows the fact that all the prop osed geometrical ob jective functions are go o d approximations to the MI ob jectiv e (whic h is in fact the summation of marginal entropies). W e also did synthetic exp erimen ts to in vestigate the b eha viors of eac h geometrical ob jective func- tions, as well as the MI ob jective and F astICA contrast functions. The syn thetic exp erimen t w as done the same w a y as ab ov e: Firstly w e randomly pick ed a pair of source signals, and standard- ize them. Then w e applied random mixing matrix to generate the observed signals. F or the BSS pro cess, we did the PCA-ICA steps, and in the ICA step, we optimized each ob jective function to get its solution, as well as the computation time. The syn thetic experiment was rep eated for 20 times, and the errors and CPU time were pro vided as mean/std of the 20 results for each ob jectiv e function. T able 1 sho ws the differences betw een the MI results and each other ob jective function, where ˜ O 3 = | R Q i y 0 i ( t )d t | is the ob jectiv e function of the Deriv ativ e-PCA. The errors w ere computed as the differences of the resulted rotation angles. F rom the table, we observ e that, O 3 appro ximates the MI ob jectiv e function best. 19 T able 2: A verage error of each ob jectiv e function with the ground truths and av erage computational time of each ob jective functions. MI O 1 O 2 O 3 O 5 ˜ O 3 G 1 G 2 G 3 Error (deg) 0.7683 0.7685 0.7735 0.7535 0.7535 4.8035 2.2080 1.4273 1.3230 Std 1.0697 1.0679 1.0840 1.0668 1.0703 2.1372 4.3855 1.1455 1.1539 Time (sec) 6.14 10.40 9.55 2.13 2.62 2.14 3.00 1.39 7.33 T able 2 sho ws the mean errors computed b et ween the solution of each ob jective function and the ground truth. The errors w ere the differences b et w een the resulted rotation angles of eac h ob jective function and the true rotation angle. Please note that, since we standardized the source signals, the source signals and the first step (PCA) results exactly differ by a rotation, and hence we can compare the true rotation angle with eac h approac h. The optimizations were done b y brute-force searc h. The CPU time w as the a verage of all 20 rep eated exp erimen ts. In each exp erimen t, each ob jective function was computed 1801 times (from − 180 ◦ to 180 ◦ with step size as 0 . 1 ◦ ). F rom the table w e can observe that all the geometrical ob jectiv e functions work ed well, m uch b etter than all contrast functions. And the b est ones: O 3 and O 5 w orked even slightly b etter than MI. Among the geometrical ob jectiv es, O 3 has the least computational time due to its simple formula. The exp erimen t results supp orts our assertion that the geometrical ob jectiv e functions are go od candidates for the ICA step of BSS problems, esp ecially O 3 and O 5 whic h compute simply and fast, and has promisingly go od precision for mixed signal recov ering. Additionally , b eing preliminary con vex functions with their deriv atives a v ailable, O 3 and O 5 can b e applied to a gradien t-based optimization algorithm, and serve as a go o d algorithm for ICA-based BSS, replacing the traditional MI ob jective functions. The only disadv antage of O 3 is that the time complexit y gets higher than F astICA as the n umber of signals gro ws. And O 5 can b e adapted to the F astICA algorithm for it has the form of aggregation. 6.2 The Comparison of the Geometrical Ob jectiv e F unctions and the FT-ICA approac h In this experiment, w e compare the MI ob jective with the ab o ve prop osed ob jectiv e O 3 , as well as the con trast function G 2 (whic h appro ximates MI well and has fastest computational time in the abov e exp erimen ts) and the second-order approaches: SOBI and FT-PCA. The inputs are 72 pairs of real source signals (audio segmen ts) that w ere standardized to ha ve zero mean and identit y cov ariance. Random mixing matrices were applied to eac h pair of source signals, and the compared approac hes w ere applied to solve for the second rotation. The errors were computed as the differences of the rotation angles solved b y eac h approac h with the ground truth. T able 3 sho ws the av erage error for all 72 rounds of experiments, without noise or signal-noise-ratio (SNR) being 100, 50, and 20. F or the FT-PCA approach, we adopted the heuristic strategy that we described in Section 5.4, where the searching radius after minimization of f 1 is fixed as 100 and the step size of ω 0 is 0.001. F rom the table, we observe that, when there are no noise, or the SNR = 100 and 50, ev ery approac h w orks well. Errors of all approac hes except Deriv ative-PCA are less than 2 degree, which indicates that all these approac hes hav e practically acceptable precision. F or the optimization approac hes (MI, G 2 , and O 3 ), MI and O 3 w orked b etter, and G 2 w orked worse. And MI is sligh tly w orse than O 3 in a verage though they are very close. F or the second-order-statistics approac hes, FT-PCA w orked b est (its precision is very close to MI), and Deriv ativ e-PCA w ork ed w orst. When the SNR is 20, none of these approac hes w ork ed. The abov e results supports that, for optimization based 20 T able 3: The error table of differen t compared approac hes for the synthetic BSS exp erimen t. Error (deg) MI G 2 O 3 FT-PCA Deriv ative-PCA SOBI No Noise 0.7668 1.0995 0.7807 0.8735 2.7195 1.0195 SNR = 100 0.7970 1.1447 0.7878 0.8158 2.8338 0.9767 SNR = 50 0.8777 1.1796 0.8724 1.0363 2.9054 1.0750 SNR = 20 8.026 5.612 5.779 23.856 24.253 17.942 approac hes, O 3 is indeed a go o d ob jectiv e function, whic h is significan tly b etter than the con trast functions and comp etitiv e with MI. F or second-order-statistics approaches, FT-PCA works b etter than SOBI, hence it is an effectiv e approach for BSS problems that is based on different assumptions than indep endence and has simple and fast algorithm. 7 Conclusions In this pap er, w e highligh t tw o main contributions. First, we p oin t out the mo del of ICA-based approac hes for BSS, and based on the relationship b et ween SPC and MI, w e apply SPC to BSS to prop ose geometrical ob jectiv e functions based on the prop ert y of the joint signals, whose compu- tational time and precision are b oth excellen t. Second, we prop osed a new second-order-statistics approac h, FT-PCA, that assumes the k ernel orthogonality of signals in the frequency domain, and solv e the BSS problem b y applying F ourier transforms and solv e a second eigen decomposition. Comparing with other second-order-statistics approac hes, FT-PCA has a more reasonable assump- tion that bypass es the indep endence concepts, and has a simple and fast algorithm that do es not require any optimization or joint diagonalization, given go od hyper-parameters. W e also prop ose heuristic strategies for searc hing go od hyper-parameter, whic h w as prov en efficient in the exp eriment section. A immediate future w ork is to extend the idea of FT-PCA to nonstationary signals, and prop ose a generalized algorithm that works for signals whic h ha ve different frequency distribution for different time interv als. Another p oten tial future w ork is to apply FT-PCA to nonlinear ICA problems. A c kno wledgmen ts W e ackno wledge helpful conv ersations with Mingyuan Gao and Y uan Zhou. References [1] F. Abrard and Y. Deville. A time–frequency blind signal separation metho d applicable to underdetermined mixtures of dependent sources. Signal pr o c essing , 85(7):1389–1403, 2005. [2] F. R. Bac h and M. I. Jordan. Kernel independent comp onen t analysis. Journal of machine le arning r ese ar ch , 3(Jul):1–48, 2002. [3] A. J. Bell and T. J. Sejno wski. An information-maximization approach to blind separation and blind deconv olution. Neur al c omputation , 7(6):1129–1159, 1995. 21 [4] A. Belouc hrani, K. Ab ed-Meraim, J.-F. Cardoso, and E. Moulines. A blind source separation tec hnique using second-order statistics. IEEE T r ansactions on signal pr o c essing , 45(2):434–444, 1997. [5] A. Belouc hrani and M. G. Amin. Blind source separation based on time-frequency signal represen tations. IEEE T r ansactions on Signal Pr o c essing , 46(11):2888–2897, 1998. [6] J.-F. Cardoso. Blind signal separation: statistical principles. Pr o c e e dings of the IEEE , 86(10):2009–2025, 1998. [7] G. Chabriel, M. Kleinsteuber, E. Moreau, H. Shen, P . Tic ha vsky , and A. Y eredor. Join t matrices decompositions and blind source separation: A surv ey of metho ds, iden tification, and applications. IEEE Signal Pr o c essing Magazine , 31(3):34–43, 2014. [8] L. Cohen. Time-fr e quency analysis , v olume 778. Pren tice hall, 1995. [9] P . Comon. Separation of sources using higher-order cumulan ts. In A dvanc e d A lgorithms and A r chite ctur es for Signal Pr o c essing IV , volume 1152, pages 170–184. In ternational So ciet y for Optics and Photonics, 1989. [10] P . Comon. Indep enden t comp onent analysis, a new concept? Signal pr o c essing , 36(3):287–314, 1994. [11] P . Comon and C. Jutten. Handb o ok of Blind Sour c e Sep ar ation: Indep endent c omp onent anal- ysis and applic ations . A cademic press, 2010. [12] P . Comon, C. Jutten, and J. Herault. Blind separation of sources, part ii: Problems statement. Signal pr o c essing , 24(1):11–20, 1991. [13] A. Delorme and S. Mak eig. Eeglab: an op en source to olbox for analysis of single-trial eeg dy- namics including independent comp onent analysis. Journal of neur oscienc e metho ds , 134(1):9– 21, 2004. [14] C. Févotte and C. Doncarli. T wo contributions to blind source separation using time-frequency distributions. IEEE Signal Pr o c essing L etters , 11(3):386–389, 2004. [15] A. Hyvärinen. New approximations of differential entrop y for independent comp onen t analysis and pro jection pursuit. In A dvanc es in neur al information pr o c essing systems , pages 273–279, 1998. [16] A. Hyv arinen. F ast and robust fixed-p oin t algorithms for indep enden t comp onen t analysis. IEEE tr ansactions on Neur al Networks , 10(3):626–634, 1999. [17] A. Hyvärinen, J. Karhunen, and E. Oja. What is indep endent c omp onent analysis? Wiley Online Library , 2001. [18] A. Hyvärinen and E. Oja. Indep enden t component analysis: algorithms and applications. Neur al networks , 13(4-5):411–430, 2000. [19] S. Ikeda and N. Murata. A metho d of ica in time-frequency domain. In in Pr o c. ICA . Citeseer, 1999. [20] C. J. James and C. W. Hesse. Indep endent component analysis for biomedical signals. Physi- olo gic al me asur ement , 26(1):R15, 2004. 22 [21] A. Jourjine, S. Ric k ard, and O. Yilmaz. Blind separation of disjoint orthogonal signals: Demix- ing n sources from 2 mixtures. In A c oustics, Sp e e ch, and Signal Pr o c essing, 2000. ICASSP’00. Pr o c e e dings. 2000 IEEE International Confer enc e on , v olume 5, pages 2985–2988. IEEE, 2000. [22] J. Lacoume and P . Ruiz. Sources inden tification: a solution based on the cum ulan ts. In Sp e ctrum Estimation and Mo deling, 1988., F ourth Annual ASSP W orkshop on , pages 199–203. IEEE, 1988. [23] T.-W. Lee and M. S. Lewic ki. Unsup ervised image classification, segmentation, and enhance- men t using ica mixture models. IEEE T r ansactions on Image Pr o c essing , 11(3):270–279, 2002. [24] S. Mak eig, A. J. Bell, T.-P . Jung, and T. J. Sejno wski. Indep enden t comp onen t analysis of electro encephalographic data. In A dvanc es in neur al information pr o c essing systems , pages 145–151, 1996. [25] H. Mermoz. Spatial processing b ey ond adaptive beamforming. The Journal of the A c oustic al So ciety of Americ a , 70(1):74–79, 1981. [26] N. Mitianoudis and T. Stathaki. Pixel-based and region-based image fusion schemes using ica bases. Information F usion , 8(2):131–142, 2007. [27] A. Ra jwade, A. Banerjee, and A. Rangara jan. Probabilit y density estimation using iso con tours and isosurfaces: applications to information-theoretic image registration. IEEE tr ansactions on p attern analysis and machine intel ligenc e , 31(3):475–491, 2009. [28] H. Saru w atari, S. Kurita, and K. T ak eda. Blind source separation com bining frequency- domain ica and beamforming. In A c oustics, Sp e e ch, and Signal Pr o c essing, 2001. Pr o c e e d- ings.(ICASSP’01). 2001 IEEE International Confer enc e on , volume 5, pages 2733–2736. IEEE, 2001. [29] L. T ong, V. So on, Y. Huang, and R. Liu. Am use: a new blind identification algorithm. In Cir cuits and Systems, 1990., IEEE International Symp osium on , pages 1784–1787. IEEE, 1990. [30] E. W einstein, M. F eder, and A. V. Opp enheim. Multi-channel signal separation b y decorrela- tion. IEEE tr ansactions on Sp e e ch and Audio Pr o c essing , 1(4):405–413, 1993. [31] O. Yilmaz and S. Rick ard. Blind separation of sp eec h mixtures via time-frequency masking. IEEE T r ansactions on signal pr o c essing , 52(7):1830–1847, 2004. 23

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment