Deep Neural Object Analysis by Interactive Auditory Exploration with a Humanoid Robot

We present a novel approach for interactive auditory object analysis with a humanoid robot. The robot elicits sensory information by physically shaking visually indistinguishable plastic capsules. It gathers the resulting audio signals from microphones that are embedded into the robotic ears. A neural network architecture learns from these signals to analyze properties of the contents of the containers. Specifically, we evaluate the material classification and weight prediction accuracy and demonstrate that the framework is fairly robust to acoustic real-world noise.

💡 Research Summary

The paper introduces a novel framework for interactive auditory object analysis using a humanoid robot named NICO (Neuro‑Inspired Companion). The central idea is to emulate a human strategy: when visual cues are insufficient, a person shakes a container to listen to the sounds produced by its contents, thereby inferring material type and quantity. NICO replicates this behavior by grasping visually indistinguishable plastic capsules, shaking them near its ear, and recording the resulting acoustic signals with binaural microphones embedded in realistic pinnae.

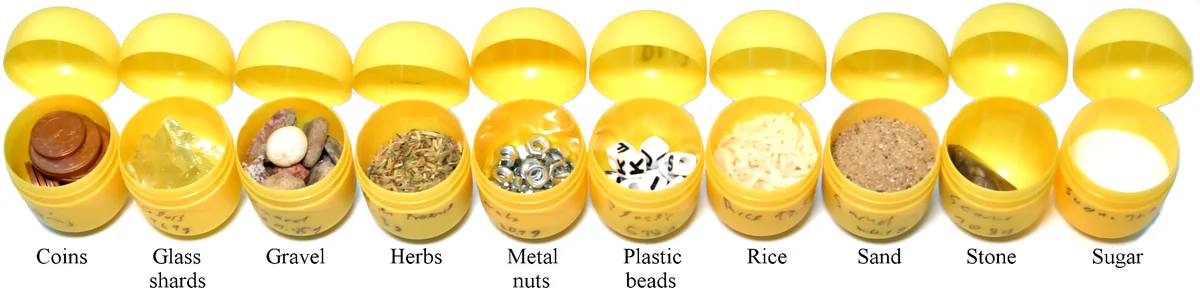

The experimental setup comprises ten different bulk materials—coins, glass, gravel, herbs, nuts, plastic, rice, sand, stone, and sugar—each filled in three quantities (approximately 20 g, 40 g, and 60 g), yielding 30 distinct objects. For each object, two recording sessions were performed, and during each session the robot shook the capsule 18 times, producing a total of 1080 audio clips. Each clip is trimmed to 0.625 seconds and sampled at 48 kHz in stereo. The recordings were made in a typical office environment with background chatter, footsteps, and other ambient noises, ensuring that the system’s robustness to real‑world acoustic interference could be evaluated.
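The dataset arithmetic can be checked with a short Python sketch. It builds one record per clip and computes the sample count of one clip. The record layout and the fill-level labels (`"small"`, `"medium"`, `"large"`) are hypothetical placeholders and do not come from the paper.

```python
from itertools import product

# Materials as listed in the text; fill-level labels are hypothetical placeholders.
MATERIALS = ["coins", "glass", "gravel", "herbs", "nuts",
             "plastic", "rice", "sand", "stone", "sugar"]
FILL_LEVELS = ["small", "medium", "large"]
SESSIONS = 2
SHAKES_PER_SESSION = 18

SAMPLE_RATE_HZ = 48_000
CLIP_SECONDS = 0.625
CHANNELS = 2  # stereo: one microphone per ear

# One (material, fill level, session, shake) record per recorded clip.
clips = list(product(MATERIALS, FILL_LEVELS, range(SESSIONS), range(SHAKES_PER_SESSION)))
samples_per_clip = round(SAMPLE_RATE_HZ * CLIP_SECONDS)

print(len(clips))                      # 1080
print((CHANNELS, samples_per_clip))    # (2, 30000): shape of one stereo clip
```

A per-clip record like this also simplifies the repeated random splits described later, because each clip keeps its material and session labels.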

Signal preprocessing follows a biologically inspired pipeline: the raw waveform is windowed (30 ms windows with 15 ms overlap) and transformed into Mel‑Frequency Cepstral Coefficients (MFCCs). Twenty‑one MFCCs are used for material classification, while twenty‑seven are employed for weight regression. The MFCCs are normalized to 0 dB before being fed to the neural networks.
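Below is a self-contained NumPy sketch of this MFCC pipeline. It is an illustration, not the authors' implementation. Several details are assumptions because the text does not state them:

- a Hamming window;
- a 2048-point FFT;
- 40 triangular mel filters with the common HTK-style mel formula;
- reading "normalized to 0 dB" as peak normalization of the waveform to 0 dBFS.

With 30 ms windows and a 15 ms hop, a 0.625 s clip at 48 kHz yields 40 frames.

```python
import numpy as np

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def mel_filterbank(n_filters, n_fft, sr):
    """Triangular filters spaced evenly on the mel scale, shape (n_filters, n_fft//2 + 1)."""
    mels = np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2.0), n_filters + 2)
    bins = np.floor((n_fft + 1) * mel_to_hz(mels) / sr).astype(int)
    fbank = np.zeros((n_filters, n_fft // 2 + 1))
    for i in range(1, n_filters + 1):
        left, center, right = bins[i - 1], bins[i], bins[i + 1]
        for k in range(left, center):      # rising edge of the triangle
            fbank[i - 1, k] = (k - left) / max(center - left, 1)
        for k in range(center, right):     # falling edge of the triangle
            fbank[i - 1, k] = (right - k) / max(right - center, 1)
    return fbank

def peak_normalize(x):
    """Scale so the loudest sample is at 0 dBFS (max |x| = 1); an assumed reading of '0 dB'."""
    peak = np.max(np.abs(x))
    return x / peak if peak > 0 else x

def mfcc(signal, sr=48_000, n_mfcc=21, win_ms=30.0, hop_ms=15.0,
         n_filters=40, n_fft=2048):
    """Return an (n_frames, n_mfcc) MFCC matrix for a mono signal."""
    win = int(sr * win_ms / 1000)          # 1440 samples = 30 ms
    hop = int(sr * hop_ms / 1000)          # 720 samples, so consecutive windows overlap by 15 ms
    n_frames = 1 + (len(signal) - win) // hop
    idx = np.arange(win)[None, :] + hop * np.arange(n_frames)[:, None]
    frames = signal[idx] * np.hamming(win)
    power = np.abs(np.fft.rfft(frames, n=n_fft)) ** 2 / n_fft
    log_energy = np.log(np.maximum(power @ mel_filterbank(n_filters, n_fft, sr).T, 1e-10))
    # Orthonormal DCT-II across the mel bands; keep the first n_mfcc coefficients.
    n = np.arange(n_filters)
    k = np.arange(n_mfcc)[:, None]
    dct = np.sqrt(2.0 / n_filters) * np.cos(np.pi * k * (2 * n + 1) / (2 * n_filters))
    dct[0] /= np.sqrt(2.0)
    return log_energy @ dct.T

rng = np.random.default_rng(0)
clip = rng.standard_normal((2, 30_000))   # placeholder stereo noise standing in for one 0.625 s clip
feats_cls = mfcc(peak_normalize(clip[0]), n_mfcc=21)   # left channel, classification setting
feats_reg = mfcc(peak_normalize(clip[0]), n_mfcc=27)   # left channel, regression setting
print(feats_cls.shape, feats_reg.shape)   # (40, 21) (40, 27)
```

The example processes only the left channel. The text does not specify how the two ear channels are combined before they reach the networks.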

Two separate recurrent neural networks (RNNs) are trained: a GRU‑based model for material classification and an LSTM‑based model for weight regression. Hyper‑parameter optimization uses a Tree‑structured Parzen Estimator (TPE), which searches over the number of recurrent layers, hidden units, learning rates, and other architectural choices. The final classification network consists of a 491‑unit GRU layer, a 99‑unit GRU layer, and a softmax output over the ten classes. The regression network comprises a 376‑unit LSTM layer, a 69‑unit LSTM layer, and a linear output that predicts weight in grams. Training uses an 80/20 split (80 % for training, 20 % for validation), repeated 15 times with random shuffling. Early stopping halts training when the validation loss does not improve for two consecutive epochs. On a four‑core Intel Xeon CPU, training takes roughly 10 minutes for classification and 20 minutes for regression. Inference takes about 25 ms per sample, far less than the 0.625 s duration of a clip, so the system can run in real time.
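Here is a NumPy sketch of the classification network's forward pass. It is an illustration under stated assumptions, not the authors' code:

- The GRU gate equations follow the widely used formulation documented for PyTorch's `torch.nn.GRU`.
- Weights are random rather than trained.
- The prediction is read from the final time step.
- The input is a 40 × 21 MFCC sequence, which is what 30 ms windows with a 15 ms hop yield for one 0.625 s clip at 48 kHz.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def init_gru(n_in, n_hidden, rng):
    """Random weights for one GRU layer; gate blocks are stacked as [reset, update, new]."""
    scale = 1.0 / np.sqrt(n_hidden)
    return {
        "W": rng.uniform(-scale, scale, (3 * n_hidden, n_in)),      # input weights
        "U": rng.uniform(-scale, scale, (3 * n_hidden, n_hidden)),  # recurrent weights
        "b_w": np.zeros(3 * n_hidden),
        "b_u": np.zeros(3 * n_hidden),
    }

def gru_layer(x_seq, p):
    """Run one GRU layer over a (T, n_in) sequence and return all hidden states (T, H)."""
    H = p["U"].shape[1]
    h = np.zeros(H)
    outputs = []
    for x in x_seq:
        gx = p["W"] @ x + p["b_w"]
        gh = p["U"] @ h + p["b_u"]
        r = sigmoid(gx[:H] + gh[:H])                 # reset gate
        z = sigmoid(gx[H:2 * H] + gh[H:2 * H])       # update gate
        n = np.tanh(gx[2 * H:] + r * gh[2 * H:])     # candidate state
        h = (1.0 - z) * n + z * h
        outputs.append(h)
    return np.stack(outputs)

def softmax(v):
    e = np.exp(v - v.max())
    return e / e.sum()

def classify(mfcc_seq, layers, W_out, b_out):
    """Stacked GRUs, then a softmax over the ten materials from the last hidden state."""
    h = mfcc_seq
    for p in layers:
        h = gru_layer(h, p)
    return softmax(W_out @ h[-1] + b_out)

rng = np.random.default_rng(0)
layers = [init_gru(21, 491, rng), init_gru(491, 99, rng)]   # 491-unit then 99-unit GRU
W_out = rng.normal(0.0, 0.1, (10, 99))
b_out = np.zeros(10)
probs = classify(rng.standard_normal((40, 21)), layers, W_out, b_out)
print(probs.shape)   # (10,)
```

The regression network would follow the same pattern. It would use LSTM layers of 376 and 69 units and replace the softmax with a single linear output in grams.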

Results for material classification are presented as a confusion matrix averaged over the 15 runs. The overall accuracy reaches 91 %. Rice is perfectly recognized (100 % accuracy), whereas sugar, sand, and herbs are frequently confused because of their low‑energy acoustic signatures. Gravel is sometimes mistaken for glass, reflecting the similarity of their rattling sounds. The authors note that preliminary human tests found these particular distinctions (e.g., sand vs. sugar) extremely difficult. This suggests, but does not establish, an advantage for the robot in this domain.
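A confusion matrix can be averaged over repeated runs in more than one way, and the text does not say which aggregation the paper used. The sketch below makes two assumptions. First, each run's matrix is row‑normalized before averaging, so row *i* shows how often true class *i* is predicted as each class. Second, overall accuracy is the mean of the per‑run accuracies. The labels are toy values, not data from the paper.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes):
    """Counts with rows = true class, columns = predicted class."""
    cm = np.zeros((n_classes, n_classes))
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)
    return cm

def summarize_runs(runs, n_classes):
    """Average row-normalized confusion matrices and accuracies over repeated runs."""
    cms = [confusion_matrix(t, p, n_classes) for t, p in runs]
    normed = [cm / np.maximum(cm.sum(axis=1, keepdims=True), 1) for cm in cms]
    mean_cm = np.mean(normed, axis=0)          # entry (i, j): share of class i predicted as j
    mean_acc = float(np.mean([np.trace(cm) / cm.sum() for cm in cms]))
    return mean_cm, mean_acc

# Toy example with three classes and two runs (made-up labels, not the paper's data).
runs = [([0, 0, 1, 1, 2, 2], [0, 0, 1, 2, 2, 2]),
        ([0, 0, 1, 1, 2, 2], [0, 1, 1, 1, 2, 2])]
mean_cm, mean_acc = summarize_runs(runs, n_classes=3)
print(np.diag(mean_cm))   # per-class recall: 0.75, 0.75, 1.0
print(round(mean_acc, 3)) # 0.833
```

In the paper's setting, the diagonal entries correspond to per‑material figures such as the 100 % reported for rice.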

Weight regression yields a mean absolute error (MAE) of 3.51 g across all materials, corresponding to roughly 27 % of the average weight (13.13 g). Errors vary by material; for example, plastic achieves a low MAE of 1.19 g, while coins exhibit a higher error of 11.01 g, likely because the metallic clatter is more variable and less directly correlated with mass. Despite the relatively high percentage error, the results demonstrate that the acoustic signal contains sufficient information for coarse weight estimation, and they set a baseline for future improvements.
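The error figures in this paragraph can be computed with a few lines of NumPy. The sketch assumes that "percentage of the average weight" means the MAE divided by the mean true weight. The toy values in the per‑material example are illustrative only.

```python
import numpy as np

def mae(y_true, y_pred):
    """Mean absolute error in the units of the inputs (grams here)."""
    return float(np.mean(np.abs(np.asarray(y_true, float) - np.asarray(y_pred, float))))

def relative_mae(y_true, y_pred):
    """MAE divided by the mean true weight (assumed definition of the percentage)."""
    return mae(y_true, y_pred) / float(np.mean(y_true))

def per_material_mae(materials, y_true, y_pred):
    """MAE computed separately for each material label."""
    materials = np.asarray(materials)
    y_true = np.asarray(y_true, float)
    y_pred = np.asarray(y_pred, float)
    return {str(m): mae(y_true[materials == m], y_pred[materials == m])
            for m in np.unique(materials)}

# Consistency check on the reported aggregate figures.
print(round(3.51 / 13.13, 3))   # 0.267, i.e. roughly 27 %

# Toy per-material breakdown (made-up weights in grams).
per = per_material_mae(["rice", "rice", "coins", "coins"],
                       [10, 12, 20, 20], [11, 12, 15, 25])
print(per)   # {'coins': 5.0, 'rice': 0.5}
```

The per‑material breakdown is the kind of split that shows the contrast between plastic (1.19 g) and coins (11.01 g).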

The authors compare their work to prior studies, particularly Sinapov et al., who achieved >95 % accuracy on four material types using a multimodal approach (including tactile data) and classified weight only into three coarse categories. In contrast, the present study handles ten materials, predicts continuous weight values, and operates under realistic indoor noise, thereby offering a more general and challenging testbed.

Limitations are openly discussed. The current system uses a fixed shaking frequency (≈1 Hz) and a predetermined number of shakes (18 per trial), lacking an adaptive exploration policy that could decide, on the fly, how many shakes are needed for a confident prediction. Ego‑noise from the robot’s servomotors is present, though the binaural microphone placement mitigates its impact. The weight regression error remains substantial, indicating that more sophisticated models (e.g., attention‑based Transformers or multimodal fusion with tactile vibration sensors) may be required to capture the nonlinear relationship between acoustic features and mass. Additionally, a systematic human baseline was not performed, leaving a gap in quantifying the robot’s advantage over human perception.
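One simple form of the adaptive exploration policy this limitation calls for is sketched below. It is a hypothetical illustration and is not part of the paper:

- average the per‑shake class probabilities;
- stop once the top class exceeds a confidence threshold;
- never exceed the 18 shakes used in the study.

The function name, the 0.9 threshold, and plain averaging are all assumptions.

```python
import numpy as np

def shake_until_confident(shake_probs, threshold=0.9, max_shakes=18):
    """Hypothetical stopping rule over per-shake softmax outputs.

    shake_probs: iterable of class-probability vectors, one per shake.
    Returns (predicted_class, shakes_used, averaged_probabilities).
    """
    total, mean, n = None, None, 0
    for n, p in enumerate(shake_probs, start=1):
        p = np.asarray(p, dtype=float)
        total = p if total is None else total + p
        mean = total / n                      # running average of class probabilities
        if mean.max() >= threshold or n >= max_shakes:
            break
    if mean is None:
        raise ValueError("no shakes observed")
    return int(np.argmax(mean)), n, mean

# Toy per-shake outputs for three classes (made-up values).
stream = [[0.7, 0.2, 0.1], [0.98, 0.01, 0.01], [0.99, 0.005, 0.005], [0.99, 0.005, 0.005]]
pred, used, _ = shake_until_confident(stream)
print(pred, used)   # 0 4
```

Plain averaging treats every shake as equally informative. Summing log‑probabilities instead would treat shakes as independent evidence and reach the threshold faster. Either way, the threshold trades exploration time against confidence.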

Future work outlined includes: (1) integrating tactile and visual modalities to improve both classification and regression; (2) developing active learning or reinforcement‑learning strategies to adapt shaking intensity, direction, and duration based on intermediate confidence estimates; (3) employing more advanced deep architectures (e.g., CNN‑RNN hybrids, temporal convolutional networks, or self‑attention models) to reduce weight prediction error; and (4) implementing ego‑noise cancellation and beamforming techniques to further enhance robustness in noisy environments.

In conclusion, the paper demonstrates that a humanoid robot can use interactive auditory exploration to infer hidden object properties, even in the presence of ambient noise. By combining biologically inspired MFCC preprocessing with carefully tuned recurrent neural networks, the system achieves 91 % material classification accuracy across ten diverse bulk substances and provides a baseline for continuous weight estimation. The work advances the field of embodied AI by showing how action‑driven perception can be leveraged for tasks that are difficult for passive sensors, and it opens avenues for richer multimodal perception and adaptive exploration strategies in future service robots.
