Unifying Count-Based Exploration and Intrinsic Motivation

We consider an agent's uncertainty about its environment and the problem of generalizing this uncertainty across observations. Specifically, we focus on the problem of exploration in non-tabular reinforcement learning. Drawing inspiration from the in…

Authors: Marc G. Bellemare, Sriram Srinivasan, Georg Ostrovski

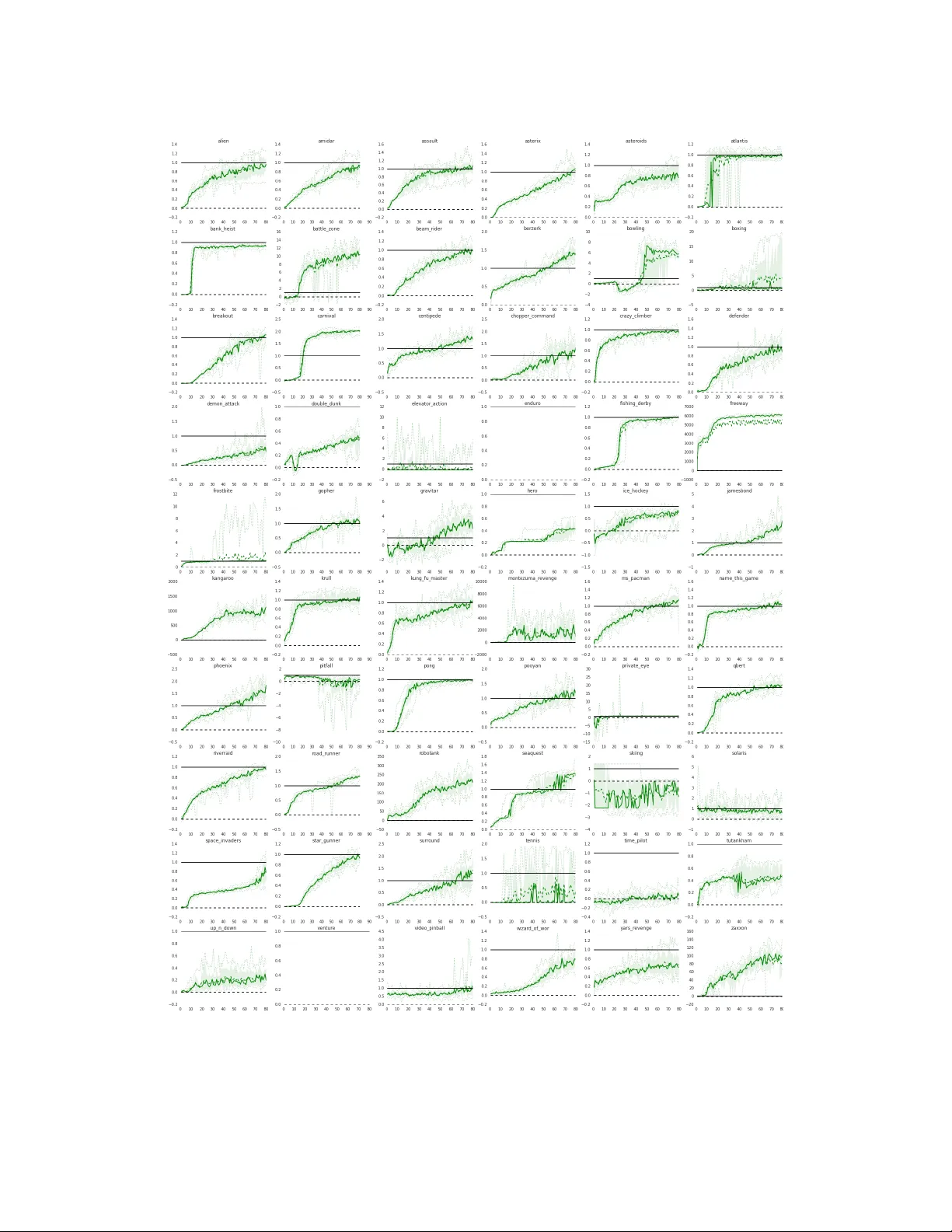

Marc G. Bellemare (bellemare@google.com), Sriram Srinivasan (srsrinivasan@google.com), Georg Ostrovski (ostrovski@google.com), Tom Schaul (schaul@google.com), David Saxton (saxton@google.com), Rémi Munos (munos@google.com)

Google DeepMind, London, United Kingdom

Abstract

We consider an agent's uncertainty about its environment and the problem of generalizing this uncertainty across states. Specifically, we focus on the problem of exploration in non-tabular reinforcement learning. Drawing inspiration from the intrinsic motivation literature, we use density models to measure uncertainty, and propose a novel algorithm for deriving a pseudo-count from an arbitrary density model. This technique enables us to generalize count-based exploration algorithms to the non-tabular case. We apply our ideas to Atari 2600 games, providing sensible pseudo-counts from raw pixels. We transform these pseudo-counts into exploration bonuses and obtain significantly improved exploration in a number of hard games, including the infamously difficult MONTEZUMA'S REVENGE.

1 Introduction

Exploration algorithms for Markov Decision Processes (MDPs) are typically concerned with reducing the agent's uncertainty over the environment's reward and transition functions. In a tabular setting, this uncertainty can be quantified using confidence intervals derived from Chernoff bounds, or inferred from a posterior over the environment parameters. In fact, both confidence intervals and posterior shrink as the inverse square root of the state-action visit count $N(x, a)$, making this quantity fundamental to most theoretical results on exploration. Count-based exploration methods directly use visit counts to guide an agent's behaviour towards reducing uncertainty.
For example, Model-based Interval Estimation with Exploration Bonuses (MBIE-EB; Strehl and Littman, 2008) solves the augmented Bellman equation

$$V(x) = \max_{a \in \mathcal{A}} \left[ \hat{R}(x, a) + \gamma \, \mathbb{E}_{\hat{P}}\left[ V(x') \right] + \beta N(x, a)^{-1/2} \right],$$

involving the empirical reward $\hat{R}$, the empirical transition function $\hat{P}$, and an exploration bonus proportional to $N(x, a)^{-1/2}$. This bonus accounts for uncertainties in both transition and reward functions and enables a finite-time bound on the agent's suboptimality.

In spite of their pleasant theoretical guarantees, count-based methods have not played a role in the contemporary successes of reinforcement learning (e.g. Mnih et al., 2015). Instead, most practical methods still rely on simple rules such as $\epsilon$-greedy. The issue is that visit counts are not directly useful in large domains, where states are rarely visited more than once.

Answering a different scientific question, intrinsic motivation aims to provide qualitative guidance for exploration (Schmidhuber, 1991; Oudeyer et al., 2007; Barto, 2013). This guidance can be summarized as "explore what surprises you". A typical approach guides the agent based on change in prediction error, or learning progress. If $e_n(A)$ is the error made by the agent at time $n$ over some event $A$, and $e_{n+1}(A)$ the same error after observing a new piece of information, then learning progress is

$$e_n(A) - e_{n+1}(A).$$

30th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain.

Intrinsic motivation methods are attractive as they remain applicable in the absence of the Markov property or the lack of a tabular representation, both of which are required by count-based algorithms. Yet the theoretical foundations of intrinsic motivation remain largely absent from the literature, which may explain its slow rate of adoption as a standard approach to exploration.
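To make the MBIE-EB equation from earlier in this section concrete, the following is a minimal tabular sketch of value iteration on the augmented Bellman equation. The function name `mbie_eb_value_iteration`, the array layout, and the treatment of unvisited pairs (counted as visited once so the bonus stays finite) are assumptions of this sketch, not details taken from Strehl and Littman (2008).

```python
import numpy as np

def mbie_eb_value_iteration(R_hat, P_hat, N, gamma=0.9, beta=0.05, n_iters=1000):
    """Iterate V(x) = max_a [R_hat(x,a) + gamma * E_{P_hat}[V(x')] + beta * N(x,a)^(-1/2)].

    R_hat: (S, A) empirical rewards.
    P_hat: (S, A, S) empirical transition probabilities.
    N:     (S, A) state-action visit counts.
    """
    # Assumption of this sketch: unvisited pairs are treated as visited once,
    # so that the bonus remains finite.
    bonus = beta / np.sqrt(np.maximum(N, 1.0))
    V = np.zeros(R_hat.shape[0])
    for _ in range(n_iters):
        # (S, A, S) @ (S,) -> (S, A): expected next-state value per pair.
        Q = R_hat + gamma * (P_hat @ V) + bonus
        V = Q.max(axis=1)
    return V, Q

# Toy example: one state, two self-looping actions with zero empirical reward.
# Action 0 has been tried once, action 1 a hundred times.
R_hat = np.zeros((1, 2))
P_hat = np.ones((1, 2, 1))
N = np.array([[1.0, 100.0]])
V, Q = mbie_eb_value_iteration(R_hat, P_hat, N, gamma=0.5, beta=1.0)
greedy_action = int(Q.argmax(axis=1)[0])  # the rarely tried action wins
```

In the toy example both actions are otherwise identical, so the greedy choice is driven entirely by the count-based bonus.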
In this paper we provide formal evidence that intrinsic motivation and count-based exploration are but two sides of the same coin. Specifically, we consider a frequently used measure of learning progress, information gain (Cover and Thomas, 1991). Defined as the Kullback-Leibler divergence of a prior distribution from its posterior, information gain can be related to the confidence intervals used in count-based exploration. Our contribution is to propose a new quantity, the pseudo-count, which connects information-gain-as-learning-progress and count-based exploration.

We derive our pseudo-count from a density model over the state space. This departs from more traditional approaches to intrinsic motivation, which consider learning progress with respect to a transition model. We expose the relationship between pseudo-counts, a variant of Schmidhuber's compression progress we call prediction gain, and information gain. Combined with Kolter and Ng's negative result on the frequentist suboptimality of Bayesian bonuses, our result highlights the theoretical advantages of pseudo-counts compared to many existing intrinsic motivation methods.

The pseudo-counts we introduce here are best thought of as "function approximation for exploration". We bring them to bear on Atari 2600 games from the Arcade Learning Environment (Bellemare et al., 2013), focusing on games where myopic exploration fails. We extract our pseudo-counts from a simple density model and use them within a variant of MBIE-EB. We apply them to an experience replay setting and to an actor-critic setting, and find improved performance in both cases. Our approach produces dramatic progress on the reputedly most difficult Atari 2600 game, MONTEZUMA'S REVENGE: within a fraction of the training time, our agent explores a significant portion of the first level and obtains significantly higher scores than previously published agents.
2 Notation

We consider a countable state space $\mathcal{X}$. We denote a sequence of length $n$ from $\mathcal{X}$ by $x_{1:n} \in \mathcal{X}^n$, the set of finite sequences from $\mathcal{X}$ by $\mathcal{X}^*$, write $x_{1:n}x$ to mean the concatenation of $x_{1:n}$ and a state $x \in \mathcal{X}$, and denote the empty sequence by $\epsilon$. A model over $\mathcal{X}$ is a mapping from $\mathcal{X}^*$ to probability distributions over $\mathcal{X}$. That is, for each $x_{1:n} \in \mathcal{X}^n$ the model provides a probability distribution

$$\rho_n(x) := \rho(x; x_{1:n}).$$

Note that we do not require $\rho_n(x)$ to be strictly positive for all $x$ and $x_{1:n}$. When it is, however, we may understand $\rho_n(x)$ to be the usual conditional probability of $X_{n+1} = x$ given $X_1 \dots X_n = x_{1:n}$.

We will take particular interest in the empirical distribution $\mu_n$ derived from the sequence $x_{1:n}$. If $N_n(x) := N(x, x_{1:n})$ is the number of occurrences of a state $x$ in the sequence $x_{1:n}$, then

$$\mu_n(x) := \mu(x; x_{1:n}) := \frac{N_n(x)}{n}.$$

We call $N_n$ the empirical count function, or simply empirical count. The above notation extends to state-action spaces, and we write $N_n(x, a)$ to explicitly refer to the number of occurrences of a state-action pair when the argument requires it. When $x_{1:n}$ is generated by an ergodic Markov chain, for example if we follow a fixed policy in a finite-state MDP, then the limit point of $\mu_n$ is the chain's stationary distribution.

In our setting, a density model is any model that assumes states are independently (but not necessarily identically) distributed; a density model is thus a particular kind of generative model. We emphasize that a density model differs from a forward model, which takes into account the temporal relationship between successive states. Note that $\mu_n$ is itself a density model.

3 From Densities to Counts

In the introduction we argued that the visit count $N_n(x)$ (and consequently, $N_n(x, a)$) is not directly useful in practical settings, since states are rarely revisited.
Specifically, $N_n(x)$ is almost always zero and cannot help answer the question "How novel is this state?" Nor is the problem solved by a Bayesian approach: even variable-alphabet models (e.g. Hutter, 2013) must assign a small, diminishing probability to yet-unseen states. To estimate the uncertainty of an agent's knowledge, we must instead look for a quantity which generalizes across states. Guided by ideas from the intrinsic motivation literature, we now derive such a quantity. We call it a pseudo-count as it extends the familiar notion from Bayesian estimation.

3.1 Pseudo-Counts and the Recoding Probability

We are given a density model $\rho$ over $\mathcal{X}$. This density model may be approximate, biased, or even inconsistent. We begin by introducing the recoding probability of a state $x$:

$$\rho'_n(x) := \rho(x; x_{1:n}x).$$

This is the probability assigned to $x$ by our density model after observing a new occurrence of $x$. The term "recoding" is inspired by the statistical compression literature, where coding costs are inversely related to probabilities (Cover and Thomas, 1991). When $\rho$ admits a conditional probability distribution,

$$\rho'_n(x) = \Pr\nolimits_\rho(X_{n+2} = x \mid X_1 \dots X_n = x_{1:n}, X_{n+1} = x).$$

We now postulate two unknowns: a pseudo-count function $\hat{N}_n(x)$, and a pseudo-count total $\hat{n}$. We relate these two unknowns through two constraints:

$$\rho_n(x) = \frac{\hat{N}_n(x)}{\hat{n}}, \qquad \rho'_n(x) = \frac{\hat{N}_n(x) + 1}{\hat{n} + 1}. \tag{1}$$

In words: we require that, after observing one instance of $x$, the density model's increase in prediction of that same $x$ should correspond to a unit increase in pseudo-count. The pseudo-count itself is derived from solving the linear system (1):

$$\hat{N}_n(x) = \frac{\rho_n(x)\left(1 - \rho'_n(x)\right)}{\rho'_n(x) - \rho_n(x)} = \hat{n} \rho_n(x). \tag{2}$$

Note that the equations (1) yield $\hat{N}_n(x) = 0$ (with $\hat{n} = \infty$) when $\rho_n(x) = \rho'_n(x) = 0$, and are inconsistent when $\rho_n(x) < \rho'_n(x) = 1$.
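As a minimal sketch of how Equation (2) might be evaluated in practice, the function below solves the system (1) for a single state, given the two probabilities $\rho_n(x)$ and $\rho'_n(x)$. The name `pseudo_count` and the handling of the degenerate inputs (including returning infinity when the two probabilities coincide) are choices made for this illustration only.

```python
import math

def pseudo_count(rho, rho_prime):
    """Solve system (1) for one state.

    rho:       rho_n(x), probability of x before observing it again.
    rho_prime: rho'_n(x), the recoding probability.
    Returns (N_hat, n_hat): the pseudo-count and the pseudo-count total.
    """
    if not (0.0 <= rho <= 1.0 and 0.0 <= rho_prime <= 1.0):
        raise ValueError("probabilities must lie in [0, 1]")
    if rho == 0.0 and rho_prime == 0.0:
        # Degenerate case noted in the text: N_hat = 0 with n_hat = infinity.
        return 0.0, math.inf
    if rho_prime == 1.0 and rho < 1.0:
        # Degenerate case noted in the text: the system is inconsistent.
        raise ValueError("system (1) is inconsistent for rho < rho' = 1")
    if rho_prime == rho:
        # Equation (2) divides by zero; returning infinity here is a
        # convention adopted for this sketch.
        return math.inf, math.inf
    n_hat = (1.0 - rho_prime) / (rho_prime - rho)
    return n_hat * rho, n_hat

# Sanity check with the empirical distribution of Section 2: a state seen
# 3 times in 10 steps has rho = 3/10 and recoding probability 4/11.
N_hat, n_hat = pseudo_count(3 / 10, 4 / 11)
```

When the recoding probability is lower than the original probability, the function returns a negative value rather than raising, which makes such models easy to detect downstream.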
The degenerate cases of the system (1) may arise from poorly behaved density models, but are easily accounted for. From here onwards we will assume a consistent system of equations.

Definition 1 (Learning-positive density model). A density model $\rho$ is learning-positive if for all $x_{1:n} \in \mathcal{X}^n$ and all $x \in \mathcal{X}$, $\rho'_n(x) \ge \rho_n(x)$.

By inspecting (2), we see that

1. $\hat{N}_n(x) \ge 0$ if and only if $\rho$ is learning-positive;
2. $\hat{N}_n(x) = 0$ if and only if $\rho_n(x) = 0$; and
3. $\hat{N}_n(x) = \infty$ if and only if $\rho_n(x) = \rho'_n(x)$.

In many cases of interest, the pseudo-count $\hat{N}_n(x)$ matches our intuition. If $\rho_n = \mu_n$ then $\hat{N}_n = N_n$. Similarly, if $\rho_n$ is a Dirichlet estimator then $\hat{N}_n$ recovers the usual notion of pseudo-count. More importantly, if the model generalizes across states then so do pseudo-counts.

3.2 Estimating the Frequency of a Salient Event in FREEWAY

As an illustrative example, we employ our method to estimate the number of occurrences of an infrequent event in the Atari 2600 video game FREEWAY (Figure 1, screenshot). We use the Arcade Learning Environment (Bellemare et al., 2013).

Figure 1: Pseudo-counts obtained from a CTS density model applied to FREEWAY, along with a frame representative of the salient event (crossing the road). Shaded areas depict periods during which the agent observes the salient event, dotted lines interpolate across periods during which the salient event is not observed. The reported values are 10,000-frame averages. (Plot: pseudo-counts against frames, in 1000s; curves for salient event pseudo-counts and start position pseudo-counts; periods without salient events are marked.)

We will demonstrate the following:

1. Pseudo-counts are roughly zero for novel events,
2. they exhibit credible magnitudes,
3. they respect the ordering of state frequency,
4. they grow linearly (on average) with real counts,
5. they are robust in the presence of nonstationary data.
These properties suggest that pseudo-counts provide an appropriate generalized notion of visit counts in non-tabular settings.

In FREEWAY, the agent must navigate a chicken across a busy road. As our example, we consider estimating the number of times the chicken has reached the very top of the screen. As is the case for many Atari 2600 games, this naturally salient event is associated with an increase in score, which ALE translates into a positive reward. We may reasonably imagine that knowing how certain we are about this part of the environment is useful. After crossing, the chicken is teleported back to the bottom of the screen.

To highlight the robustness of our pseudo-count, we consider a nonstationary policy which waits for 250,000 frames, then applies the UP action for 250,000 frames, then waits, then goes UP again. The salient event only occurs during UP periods. It also occurs with the cars in different positions, thus requiring generalization. As a point of reference, we record the pseudo-counts for both the salient event and visits to the chicken's start position.

We use a simplified, pixel-level version of the CTS model for Atari 2600 frames proposed by Bellemare et al. (2014), ignoring temporal dependencies. While the CTS model is rather impoverished in comparison to state-of-the-art density models for images (e.g. van den Oord et al., 2016), its count-based nature results in extremely fast learning, making it an appealing candidate for exploration. Further details on the model may be found in the appendix.

Examining the pseudo-counts depicted in Figure 1 confirms that they exhibit the desirable properties listed above. In particular, the pseudo-count is almost zero on the first occurrence of the salient event; it increases slightly during the 3rd period, since the salient and reference events share some common structure; throughout, it remains smaller than the reference pseudo-count.
The linearity on average and robustness to nonstationarity are immediate from the graph. Note, however, that the pseudo-counts are a fraction of the real visit counts (inasmuch as we can define "real"): by the end of the trial, the start position has been visited about 140,000 times, and the topmost part of the screen, 1285 times. Furthermore, the ratio of recorded pseudo-counts differs from the ratio of real counts. Both effects are quantifiable, as we shall show in Section 5.

4 The Connection to Intrinsic Motivation

Having argued that pseudo-counts appropriately generalize visit counts, we will now show that they are closely related to information gain, which is commonly used to quantify novelty or curiosity and consequently as an intrinsic reward. Information gain is defined in relation to a mixture model $\xi$ over a class of density models $\mathcal{M}$. This model predicts according to a weighted combination from $\mathcal{M}$:

$$\xi_n(x) := \xi(x; x_{1:n}) := \int_{\rho \in \mathcal{M}} w_n(\rho) \, \rho(x; x_{1:n}) \, \mathrm{d}\rho,$$

with $w_n(\rho)$ the posterior weight of $\rho$. This posterior is defined recursively, starting from a prior distribution $w_0$ over $\mathcal{M}$:

$$w_{n+1}(\rho) := w_n(\rho, x_{n+1}), \qquad w_n(\rho, x) := \frac{w_n(\rho) \, \rho(x; x_{1:n})}{\xi_n(x)}. \tag{3}$$

Information gain is then the Kullback-Leibler divergence from prior to posterior that results from observing $x$:

$$\mathrm{IG}_n(x) := \mathrm{IG}(x; x_{1:n}) := \mathrm{KL}\left( w_n(\cdot, x) \,\|\, w_n \right).$$

Computing the information gain of a complex density model is often impractical, if not downright intractable. However, a quantity which we call the prediction gain provides us with a good approximation of the information gain. We define the prediction gain of a density model $\rho$ (and in particular, $\xi$) as the difference between the recoding log-probability and log-probability of $x$:

$$\mathrm{PG}_n(x) := \log \rho'_n(x) - \log \rho_n(x).$$

Prediction gain is nonnegative if and only if $\rho$ is learning-positive.
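The definitions of this section can be evaluated directly when the model class is small. The sketch below computes information gain and prediction gain side by side for a mixture over a finite class of fixed categorical distributions, so the integral over $\mathcal{M}$ becomes a sum. The finite, non-adaptive class, the strictly positive weights and probabilities, and the name `mixture_gains` are all assumptions made for illustration.

```python
import numpy as np

def mixture_gains(models, w, x):
    """Information gain and prediction gain of a finite mixture for state x.

    models: (K, |X|) array; row k is a fixed categorical distribution rho_k.
    w:      (K,) strictly positive posterior weights w_n summing to one.
    x:      index of the observed state, with models[:, x] > 0.
    """
    p_x = models[:, x]
    xi = float(w @ p_x)                    # xi_n(x)
    w_post = w * p_x / xi                  # w_n(rho, x), Equation (3)
    # The models are non-adaptive, so only the weights change after observing x.
    xi_prime = float(w_post @ p_x)         # recoding probability xi'_n(x)
    info_gain = float(np.sum(w_post * np.log(w_post / w)))  # KL(w_n(., x) || w_n)
    pred_gain = float(np.log(xi_prime) - np.log(xi))
    return info_gain, pred_gain

# Two candidate models over a two-state space, equal prior weight.
models = np.array([[0.9, 0.1],
                   [0.2, 0.8]])
w = np.array([0.5, 0.5])
ig, pg = mixture_gains(models, w, x=0)
```

Prediction gain needs only two evaluations of the mixture, whereas information gain requires the full posterior over the class, which is what makes the former attractive for complex models.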
Prediction gain is related to the pseudo-count:

$$\hat{N}_n(x) \approx \left( e^{\mathrm{PG}_n(x)} - 1 \right)^{-1},$$

with equality when $\rho'_n(x) \to 0$. As the following theorem shows, prediction gain allows us to relate pseudo-count and information gain.

Theorem 1. Consider a sequence $x_{1:n} \in \mathcal{X}^n$. Let $\xi$ be a mixture model over a class of learning-positive models $\mathcal{M}$. Let $\hat{N}_n$ be the pseudo-count derived from $\xi$ (Equation 2). For this model,

$$\mathrm{IG}_n(x) \le \mathrm{PG}_n(x) \le \hat{N}_n(x)^{-1} \quad \text{and} \quad \mathrm{PG}_n(x) \le \hat{N}_n(x)^{-1/2}.$$

Theorem 1 suggests that using an exploration bonus proportional to $\hat{N}_n(x)^{-1/2}$, similar to the MBIE-EB bonus, leads to a behaviour at least as exploratory as one derived from an information gain bonus. Since pseudo-counts correspond to empirical counts in the tabular setting, this approach also preserves known theoretical guarantees. In fact, we are confident pseudo-counts may be used to prove similar results in non-tabular settings.

On the other hand, it may be difficult to provide theoretical guarantees about existing bonus-based intrinsic motivation approaches. Kolter and Ng (2009) showed that no algorithm based on a bonus upper bounded by $\beta N_n(x)^{-1}$ for any $\beta > 0$ can guarantee PAC-MDP optimality. Again considering the tabular setting and combining their result with Theorem 1, we conclude that bonuses proportional to immediate information (or prediction) gain are insufficient for theoretically near-optimal exploration: to paraphrase Kolter and Ng, these methods explore too little in comparison to pseudo-count bonuses. By inspecting (2) we come to a similar negative conclusion for bonuses proportional to the L1 or L2 distance between $\xi'_n$ and $\xi_n$.

Unlike many intrinsic motivation algorithms, pseudo-counts also do not rely on learning a forward (transition and/or reward) model.
This point is especially important because a number of powerful density models for images exist (van den Oord et al., 2016), and because optimality guarantees cannot in general exist for intrinsic motivation algorithms based on forward models.

5 Asymptotic Analysis

In this section we analyze the limiting behaviour of the ratio $\hat{N}_n / N_n$. We use this analysis to assert the consistency of pseudo-counts derived from tabular density models, i.e. models which maintain per-state visit counts. In the appendix we use the same result to bound the approximation error of pseudo-counts derived from directed graphical models, of which our CTS model is a special case.

Consider a fixed, infinite sequence $x_1, x_2, \dots$ from $\mathcal{X}$. We define the limit of a sequence of functions $\left( f(x; x_{1:n}) : n \in \mathbb{N} \right)$ with respect to the length $n$ of the subsequence $x_{1:n}$. We additionally assume that the empirical distribution $\mu_n$ converges pointwise to a distribution $\mu$, and write $\mu'_n(x)$ for the recoding probability of $x$ under $\mu_n$. We begin with two assumptions on our density model.

Assumption 1. The limits

$$\text{(a)} \quad r(x) := \lim_{n \to \infty} \frac{\rho_n(x)}{\mu_n(x)} \qquad \text{(b)} \quad \dot{r}(x) := \lim_{n \to \infty} \frac{\rho'_n(x) - \rho_n(x)}{\mu'_n(x) - \mu_n(x)}$$

exist for all $x$; furthermore, $\dot{r}(x) > 0$.

Assumption (a) states that $\rho$ should eventually assign a probability to $x$ proportional to the limiting empirical distribution $\mu(x)$. In particular there must be a state $x$ for which $r(x) < 1$, unless $\rho_n \to \mu$. Assumption (b), on the other hand, imposes a restriction on the learning rate of $\rho$ relative to that of $\mu$. As both $r(x)$ and $\mu(x)$ exist, Assumption 1 also implies that $\rho_n(x)$ and $\rho'_n(x)$ have a common limit.

Theorem 2. Under Assumption 1, the limit of the ratio of pseudo-counts $\hat{N}_n(x)$ to empirical counts $N_n(x)$ exists for all $x$. This limit is

$$\lim_{n \to \infty} \frac{\hat{N}_n(x)}{N_n(x)} = \frac{r(x)}{\dot{r}(x)} \left( \frac{1 - \mu(x) r(x)}{1 - \mu(x)} \right).$$
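The form of this limit can be read off from a short calculation. The following is an informal outline, not the full proof: it uses only Equation (2), the identity $N_n(x) = n \mu_n(x)$, and the fact that $n \left( \mu'_n(x) - \mu_n(x) \right) = 1 - \mu'_n(x)$ for the empirical distribution, and it assumes $\mu_n(x) > 0$ and $\mu(x) < 1$ so that every ratio is well defined.

```latex
\begin{aligned}
\frac{\hat{N}_n(x)}{N_n(x)}
  &= \frac{\rho_n(x)\left(1 - \rho'_n(x)\right)}
          {n \, \mu_n(x) \left(\rho'_n(x) - \rho_n(x)\right)} \\
  &= \frac{\rho_n(x)}{\mu_n(x)}
     \cdot \frac{\mu'_n(x) - \mu_n(x)}{\rho'_n(x) - \rho_n(x)}
     \cdot \frac{1 - \rho'_n(x)}{1 - \mu'_n(x)}
     \qquad \text{since } \tfrac{1}{n} = \frac{\mu'_n(x) - \mu_n(x)}{1 - \mu'_n(x)} \\
  &\longrightarrow r(x) \cdot \frac{1}{\dot{r}(x)}
     \cdot \frac{1 - r(x)\mu(x)}{1 - \mu(x)}
     \qquad \text{as } n \to \infty .
\end{aligned}
```

The first factor converges by Assumption 1(a), the second by Assumption 1(b), and the third because $\rho'_n(x)$ shares the limit $r(x)\mu(x)$ of $\rho_n(x)$ while $\mu'_n(x) \to \mu(x)$.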
The model's relative rate of change, whose convergence to $\dot{r}(x)$ we require, plays an essential role in the ratio of pseudo- to empirical counts. To see this, consider a sequence $(x_n : n \in \mathbb{N})$ generated i.i.d. from a distribution $\mu$ over a finite state space, and a density model defined from a sequence of nonincreasing step-sizes $(\alpha_n : n \in \mathbb{N})$:

$$\rho_n(x) = (1 - \alpha_n) \rho_{n-1}(x) + \alpha_n \mathbb{I}\{x_n = x\},$$

with initial condition $\rho_0(x) = |\mathcal{X}|^{-1}$. For $\alpha_n = n^{-1}$, this density model is the empirical distribution. For $\alpha_n = n^{-2/3}$, we may appeal to well-known results from stochastic approximation (e.g. Bertsekas and Tsitsiklis, 1996) and find that almost surely

$$\lim_{n \to \infty} \rho_n(x) = \mu(x) \quad \text{but} \quad \lim_{n \to \infty} \frac{\rho'_n(x) - \rho_n(x)}{\mu'_n(x) - \mu_n(x)} = \infty.$$

Since $\mu'_n(x) - \mu_n(x) = n^{-1}\left(1 - \mu'_n(x)\right)$, we may think of Assumption 1(b) as also requiring $\rho$ to converge at a rate of $\Theta(1/n)$ for a comparison with the empirical count $N_n$ to be meaningful. Note, however, that a density model that does not satisfy Assumption 1(b) may still yield useful (but incommensurable) pseudo-counts.

Corollary 1. Let $\phi(x) > 0$ with $\sum_{x \in \mathcal{X}} \phi(x) < \infty$ and consider the count-based estimator

$$\rho_n(x) = \frac{N_n(x) + \phi(x)}{n + \sum_{x' \in \mathcal{X}} \phi(x')}.$$

If $\hat{N}_n$ is the pseudo-count corresponding to $\rho_n$ then $\hat{N}_n(x) / N_n(x) \to 1$ for all $x$ with $\mu(x) > 0$.

6 Empirical Evaluation

In this section we demonstrate the use of pseudo-counts to guide exploration. We return to the Arcade Learning Environment, now using the CTS model to generate an exploration bonus.

6.1 Exploration in Hard Atari 2600 Games

From 60 games available through the Arcade Learning Environment we selected five "hard" games, in the sense that an $\epsilon$-greedy policy is inefficient at exploring them. We used a bonus of the form

$$R^+_n(x, a) := \beta \left( \hat{N}_n(x) + 0.01 \right)^{-1/2}, \tag{4}$$

where $\beta = 0.05$ was selected from a coarse parameter sweep.
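Equation (4) is simple enough to state as code. The sketch below is one plausible rendering; the function name `exploration_bonus`, the clipping of negative pseudo-counts to zero, and the way the bonus is added to the environment reward in the usage lines are assumptions of this illustration rather than details given in the text.

```python
import math

def exploration_bonus(pseudo_count, beta=0.05, offset=0.01):
    """Bonus of Equation (4): beta * (N_hat + 0.01)^(-1/2)."""
    # Assumption of this sketch: a negative pseudo-count (from a density
    # model that is not learning-positive) is clipped to zero.
    n_hat = max(float(pseudo_count), 0.0)
    return beta / math.sqrt(n_hat + offset)

# Hypothetical usage: augment an environment reward before a learning update.
env_reward = 0.0
novel_state_reward = env_reward + exploration_bonus(0.0)
familiar_state_reward = env_reward + exploration_bonus(1000.0)
```

The constant 0.01 caps the bonus at $\beta / \sqrt{0.01} = 10\beta$ for states with zero pseudo-count, so a novel state is worth at most 0.5 with the reported $\beta = 0.05$.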
We also compared our method to the optimistic initialization trick proposed by Machado et al. (2015). We trained our agents' Q-functions with Double DQN (van Hasselt et al., 2016), with one important modification: we mixed the Double Q-Learning target with the Monte Carlo return. This modification led to improved results both with and without exploration bonuses (details in the appendix).

Figure 2 depicts the result of our experiment, averaged across 5 trials. Although optimistic initialization helps in FREEWAY, it otherwise yields performance similar to DQN. By contrast, the count-based exploration bonus enables us to make quick progress on a number of games, most dramatically in MONTEZUMA'S REVENGE and VENTURE.

Figure 2: Average training score with and without exploration bonus or optimistic initialization in 5 Atari 2600 games. Shaded areas denote inter-quartile range, dotted lines show min/max scores. (Panels: FREEWAY, MONTEZUMA'S REVENGE, PRIVATE EYE, H.E.R.O., VENTURE; score against training frames, in millions.)

MONTEZUMA'S REVENGE is perhaps the hardest Atari 2600 game available through the ALE. The game is infamous for its hostile, unforgiving environment: the agent must navigate a number of different rooms, each filled with traps. Due to its sparse reward function, most published agents achieve an average score close to zero and completely fail to explore most of the 24 rooms that constitute the first level (Figure 3, left). By contrast, within 50 million frames our agent learns a policy which consistently navigates through 15 rooms (Figure 3, right).

Figure 3: "Known world" of a DQN agent trained for 50 million frames with (right) and without (left) count-based exploration bonuses, in MONTEZUMA'S REVENGE. (Panels: No bonus, With bonus.)
Our agent also achieves a score higher than anything previously reported, with one run consistently achieving 6600 points by 100 million frames (half the training samples used by Mnih et al. (2015)). We believe the success of our method in this game is a strong indicator of the usefulness of pseudo-counts for exploration.¹

6.2 Exploration for Actor-Critic Methods

We next used our exploration bonuses in conjunction with the A3C (Asynchronous Advantage Actor-Critic) algorithm of Mnih et al. (2016). One appeal of actor-critic methods is their explicit separation of policy and Q-function parameters, which leads to a richer behaviour space. This very separation, however, often leads to deficient exploration: to produce any sensible results, the A3C policy must be regularized with an entropy cost. We trained A3C on 60 Atari 2600 games, with and without the exploration bonus (4). We refer to our augmented algorithm as A3C+. Full details and additional results may be found in the appendix.

We found that A3C fails to learn in 15 games, in the sense that the agent does not achieve a score 50% better than random. In comparison, there are only 10 games for which A3C+ fails to improve on the random agent; of these, 8 are games where DQN fails in the same sense. We normalized the two algorithms' scores so that 0 and 1 are respectively the minimum and maximum of the random agent's and A3C's end-of-training score on a particular game. Figure 4 depicts the in-training median score for A3C and A3C+, along with 1st and 3rd quartile intervals. Not only does A3C+ achieve slightly superior median performance, but it also significantly outperforms A3C on at least a quarter of the games. This is particularly important given the large proportion of Atari 2600 games for which an $\epsilon$-greedy policy is sufficient for exploration.

7 Related Work

Information-theoretic quantities have been repeatedly used to describe intrinsically motivated behaviour.
Closely related to prediction gain is Schmidhuber (1991)'s notion of compression progress, which equates novelty with an agent's improvement in its ability to compress its past. More recently, Lopes et al. (2012) showed the relationship between time-averaged prediction gain and visit counts in a tabular setting; their result is a special case of Theorem 2. Orseau et al. (2013) demonstrated that maximizing the sum of future information gains does lead to optimal behaviour, even though maximizing immediate information gain does not (Section 4). Finally, there may be a connection between sequential normalized maximum likelihood estimators and our pseudo-count derivation (see e.g. Ollivier, 2015).

¹ A video of our agent playing is available at https://youtu.be/0yI2wJ6F8r0 .

Figure 4: Median and interquartile performance across 60 Atari 2600 games for A3C and A3C+. (Plot title: A3C+ performance across games; baseline score against training frames, in millions.)

Intrinsic motivation has also been studied in reinforcement learning proper, in particular in the context of discovering skills (Singh et al., 2004; Barto, 2013). Recently, Stadie et al. (2015) used a squared prediction error bonus for exploring in Atari 2600 games. Closest to our work is Houthooft et al. (2016)'s variational approach to intrinsic motivation, which is equivalent to a second order Taylor approximation to prediction gain. Mohamed and Rezende (2015) also considered a variational approach to the different problem of maximizing an agent's ability to influence its environment.

Aside from Orseau et al.'s above-cited work, it is only recently that theoretical guarantees for exploration have emerged for non-tabular, stateful settings. We note Pazis and Parr (2016)'s PAC-MDP result for metric spaces and Leike et al. (2016)'s asymptotic analysis of Thompson sampling in general environments.
8 Future Directions

The last few years have seen tremendous advances in learning representations for reinforcement learning. Surprisingly, these advances have yet to carry over to the problem of exploration. In this paper, we reconciled counts, the fundamental unit of uncertainty, with prediction-based heuristics and intrinsic motivation. Combining our work with more ideas from deep learning and better density models seems a plausible avenue for quick progress in practical, efficient exploration. We now conclude by outlining a few research directions we believe are promising.

Induced metric. We did not address the question of where the generalization comes from. Clearly, the choice of density model induces a particular metric over the state space. A better understanding of this metric should allow us to tailor the density model to the problem of exploration.

Compatible value function. There may be a mismatch in the learning rates of the density model and the value function: DQN learns much more slowly than our CTS model. As such, it should be beneficial to design value functions compatible with density models (or vice-versa).

The continuous case. Although we focused here on countable state spaces, we can as easily define a pseudo-count in terms of probability density functions. At present it is unclear whether this provides us with the right notion of counts for continuous spaces.

Acknowledgments

The authors would like to thank Laurent Orseau, Alex Graves, Joel Veness, Charles Blundell, Shakir Mohamed, Ivo Danihelka, Ian Osband, Matt Hoffman, Greg Wayne, Will Dabney, and Aäron van den Oord for their excellent feedback early and late in the writing, and Pierre-Yves Oudeyer and Yann Ollivier for pointing out additional connections to the literature.

References

Barto, A. G. (2013). Intrinsic motivation and reinforcement learning. In Intrinsically Motivated Learning in Natural and Artificial Systems, pages 17–47. Springer.
Bellemare, M., V eness, J., and T alvitie, E. (2014). Skip context tree switching. In Pr oceedings of the 31st International Confer ence on Machine Learning , pages 1458–1466. Bellemare, M. G., Naddaf, Y ., V eness, J., and Bowling, M. (2013). The Arcade Learning Environment: An ev aluation platform for general agents. Journal of Artificial Intellig ence Resear ch , 47:253–279. Bellemare, M. G., Ostrovski, G., Guez, A., Thomas, P . S., and Munos, R. (2016). Increasing the action gap: New operators for reinforcement learning. In Pr oceedings of the 30th AAAI Conference on Artificial Intelligence . Bertsekas, D. P . and Tsitsiklis, J. N. (1996). Neur o-Dynamic Pr ogramming . Athena Scientific. Cov er , T . M. and Thomas, J. A. (1991). Elements of information theory . John Wile y & Sons. Houthooft, R., Chen, X., Duan, Y ., Schulman, J., De Turck, F ., and Abbeel, P . (2016). V ariational information maximizing exploration. Hutter , M. (2013). Sparse adaptive dirichlet-multinomial-like processes. In Pr oceedings of the Conference on Online Learning Theory . K olter, Z. J. and Ng, A. Y . (2009). Near -bayesian exploration in polynomial time. In Pr oceedings of the 26th International Confer ence on Machine Learning . Leike, J., Lattimore, T ., Orseau, L., and Hutter, M. (2016). Thompson sampling is asymptotically optimal in general en vironments. In Pr oceedings of the Confer ence on Uncertainty in Artificial Intelligence . Lopes, M., Lang, T ., T oussaint, M., and Oudeyer , P .-Y . (2012). Exploration in model-based reinforcement learning by empirically estimating learning progress. In Advances in Neur al Information Pr ocessing Systems 25 . Machado, M. C., Sriniv asan, S., and Bowling, M. (2015). Domain-independent optimistic initialization for reinforcement learning. AAAI W orkshop on Learning for General Competency in V ideo Games . Mnih, V ., Badia, A. P ., Mirza, M., Graves, A., Lillicrap, T . P ., Harley , T ., Silver , D., and Kavukcuoglu, K. (2016). 
Asynchronous methods for deep reinforcement learning. In Pr oceedings of the International Con- fer ence on Machine Learning . Mnih, V ., Kavukcuoglu, K., Silver , D., Rusu, A. A., V eness, J., Bellemare, M. G., Grav es, A., Riedmiller , M., Fidjeland, A. K., Ostrovski, G., et al. (2015). Human-le vel control through deep reinforcement learning. Natur e , 518(7540):529–533. Mohamed, S. and Rezende, D. J. (2015). V ariational information maximisation for intrinsically moti vated reinforcement learning. In Advances in Neural Information Pr ocessing Systems 28 . Ollivier , Y . (2015). Laplace’ s rule of succession in information geometry . arXiv pr eprint arXiv:1503.04304 . Orseau, L., Lattimore, T ., and Hutter , M. (2013). Universal knowledge-seeking agents for stochastic en viron- ments. In Proceedings of the Confer ence on Algorithmic Learning Theory . Oudeyer , P ., Kaplan, F ., and Hafner , V . (2007). Intrinsic motiv ation systems for autonomous mental de velop- ment. IEEE T ransactions on Evolutionary Computation , 11(2):265–286. Pazis, J. and Parr , R. (2016). Efficient P A C-optimal exploration in concurrent, continuous state MDPs with delayed updates. In Proceedings of the 30th AAAI Confer ence on Artificial Intelligence . Schmidhuber , J. (1991). A possibility for implementing curiosity and boredom in model-building neural con- trollers. In F rom animals to animats: pr oceedings of the first international conference on simulation of adaptive behavior . Schmidhuber , J. (2008). Driv en by compression progress. In Knowledge-Based Intelligent Information and Engineering Systems . Springer . Singh, S., Barto, A. G., and Chentanez, N. (2004). Intrinsically moti vated reinforcement learning. In Advances in Neural Information Pr ocessing Systems 16 . 9 Stadie, B. C., Levine, S., and Abbeel, P . (2015). Incentivizing exploration in reinforcement learning with deep predictiv e models. arXiv preprint . Strehl, A. L. and Littman, M. L. (2008). 
An analysis of model-based interval estimation for Markov decision processes. Journal of Computer and System Sciences, 74(8):1309–1331.

Van den Oord, A., Kalchbrenner, N., and Kavukcuoglu, K. (2016). Pixel recurrent neural networks. In Proceedings of the 33rd International Conference on Machine Learning.

van Hasselt, H., Guez, A., and Silver, D. (2016). Deep reinforcement learning with double Q-learning. In Proceedings of the 30th AAAI Conference on Artificial Intelligence.

Veness, J., Bellemare, M. G., Hutter, M., Chua, A., and Desjardins, G. (2015). Compress and control. In Proceedings of the 29th AAAI Conference on Artificial Intelligence.

Wainwright, M. J. and Jordan, M. I. (2008). Graphical models, exponential families, and variational inference. Foundations and Trends in Machine Learning, 1(1-2):1–305.

A The Connection to Intrinsic Motivation

The following provides an identity connecting information gain and prediction gain.

Lemma 1. Consider a mixture model $\xi$ over $\mathcal{M}$ with prediction gain $\mathrm{PG}_n$ and information gain $\mathrm{IG}_n$, a fixed $x \in \mathcal{X}$, and let $w'_n(\rho) := w_n(\rho, x)$ be the posterior of $\xi$ over $\mathcal{M}$ after observing $x$. Let $w''_n(\rho) := w'_n(\rho, x)$ be the same posterior after observing $x$ a second time, and let $\mathrm{PG}^\rho_n(x)$ denote the prediction gain of $\rho \in \mathcal{M}$. Then

$$\mathrm{PG}_n(x) = \mathrm{KL}(w'_n \,\|\, w_n) + \mathrm{KL}(w'_n \,\|\, w''_n) + \mathbb{E}_{w'_n}\left[\mathrm{PG}^\rho_n(x)\right] = \mathrm{IG}_n(x) + \mathrm{KL}(w'_n \,\|\, w''_n) + \mathbb{E}_{w'_n}\left[\mathrm{PG}^\rho_n(x)\right].$$

In particular, if $\mathcal{M}$ is a class of non-adaptive models in the sense that $\rho_n(x) = \rho(x)$ for all $x_{1:n}$, then

$$\mathrm{PG}_n(x) = \mathrm{KL}(w'_n \,\|\, w_n) + \mathrm{KL}(w'_n \,\|\, w''_n) = \mathrm{IG}_n(x) + \mathrm{KL}(w'_n \,\|\, w''_n).$$

A model which is non-adaptive is also learning-positive in the sense of Definition 1. Many common mixture models, for example Dirichlet-multinomial estimators, are mixtures over non-adaptive models.

Proof. We rewrite the posterior update rule (3) to show that for any $\rho \in \mathcal{M}$ and any $x \in \mathcal{X}$,

$$\xi_n(x) = \frac{\rho_n(x)\, w_n(\rho)}{w_n(\rho, x)}.$$
Write $\mathbb{E}_{w'_n} := \mathbb{E}_{\rho \sim w'_n(\cdot)}$. Now

$$\begin{aligned}
\mathrm{PG}_n(x) &= \log \frac{\xi'_n(x)}{\xi_n(x)} = \mathbb{E}_{w'_n}\left[\log \frac{\xi'_n(x)}{\xi_n(x)}\right] \\
&= \mathbb{E}_{w'_n}\left[\log \frac{w'_n(\rho)}{w''_n(\rho)} \, \frac{w'_n(\rho)}{w_n(\rho)} \, \frac{\rho'_n(x)}{\rho_n(x)}\right] \\
&= \mathbb{E}_{w'_n}\left[\log \frac{w'_n(\rho)}{w_n(\rho)}\right] + \mathbb{E}_{w'_n}\left[\log \frac{w'_n(\rho)}{w''_n(\rho)}\right] + \mathbb{E}_{w'_n}\left[\log \frac{\rho'_n(x)}{\rho_n(x)}\right] \\
&= \mathrm{IG}_n(x) + \mathrm{KL}(w'_n \,\|\, w''_n) + \mathbb{E}_{w'_n}\left[\mathrm{PG}^\rho_n(x)\right].
\end{aligned}$$

The second statement follows immediately.

Lemma 2. The functions $f(x) := e^x - 1 - x$ and $g(x) := e^x - 1 - x^2$ are nonnegative on $x \in [0, \infty)$.

Proof. The statement regarding $f(x)$ follows directly from the Taylor expansion for $e^x$. Now, the first derivative of $g(x)$ is $e^x - 2x$. It is clearly positive for $x \ge 1$. For $x \in [0, 1]$,

$$e^x - 2x = \sum_{i=0}^{\infty} \frac{x^i}{i!} - 2x \ge 1 - x \ge 0.$$

Since $g(0) = 0$, the second result follows.

Proof (Theorem 1). The inequality $\mathrm{IG}_n(x) \le \mathrm{PG}_n(x)$ follows directly from Lemma 1, the nonnegativity of the Kullback-Leibler divergence, and the fact that all models in $\mathcal{M}$ are learning-positive. For the inequality $\mathrm{PG}_n(x) \le \hat N_n(x)^{-1}$, we write

$$\begin{aligned}
\hat N_n(x)^{-1} &= \left(1 - \xi'_n(x)\right)^{-1} \frac{\xi'_n(x) - \xi_n(x)}{\xi_n(x)} \\
&= \left(1 - \xi'_n(x)\right)^{-1} \left(\frac{\xi'_n(x)}{\xi_n(x)} - 1\right) \\
&\overset{(a)}{=} \left(1 - \xi'_n(x)\right)^{-1} \left(e^{\mathrm{PG}_n(x)} - 1\right) \\
&\overset{(b)}{\ge} e^{\mathrm{PG}_n(x)} - 1 \\
&\overset{(c)}{\ge} \mathrm{PG}_n(x),
\end{aligned}$$

where (a) follows by definition of prediction gain, (b) from $\xi'_n(x) \in [0, 1)$, and (c) from Lemma 2. Using the second part of Lemma 2 in (c) yields the inequality $\hat N_n(x)^{-1/2} \ge \mathrm{PG}_n(x)$.

B Asymptotic Analysis

We begin with a simple lemma which will prove useful throughout.

Lemma 3. The rate of change of the empirical distribution, $\mu'_n(x) - \mu_n(x)$, is such that

$$n\left(\mu'_n(x) - \mu_n(x)\right) = 1 - \mu'_n(x).$$

Proof. We expand the definition of $\mu_n$ and $\mu'_n$:

$$\begin{aligned}
n\left(\mu'_n(x) - \mu_n(x)\right) &= n\left[\frac{N_n(x) + 1}{n + 1} - \frac{N_n(x)}{n}\right] \\
&= \frac{n}{n+1}\left(N_n(x) + 1\right) - N_n(x) \\
&= 1 - \frac{N_n(x) + 1}{n + 1} \\
&= 1 - \mu'_n(x).
\end{aligned}$$
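The identity of Lemma 3 and the upper bounds of Theorem 1 can be checked numerically. The sketch below is illustrative only: it assumes a Laplace (add-one) estimator over a hypothetical alphabet of size `K` as the density model, and the helper names (`pseudo_count`, `prediction_gain`, `lemma3_gap`, `laplace_probs`) are ours, not the paper's. The pseudo-count is computed from $\rho_n(x)$ and $\rho'_n(x)$ as $\rho_n(x)(1 - \rho'_n(x)) / (\rho'_n(x) - \rho_n(x))$.

```python
import math
from fractions import Fraction


def pseudo_count(rho, rho_prime):
    """Pseudo-count N_hat = rho * (1 - rho') / (rho' - rho)."""
    return rho * (1 - rho_prime) / (rho_prime - rho)


def prediction_gain(rho, rho_prime):
    """Prediction gain PG = log rho' - log rho."""
    return math.log(rho_prime) - math.log(rho)


def lemma3_gap(count, n):
    """Exact value of n * (mu' - mu) - (1 - mu'); Lemma 3 says it is zero."""
    mu = Fraction(count, n)
    mu_prime = Fraction(count + 1, n + 1)
    return n * (mu_prime - mu) - (1 - mu_prime)


def laplace_probs(count, n, K):
    """Assumed density model: add-one estimate before and after one more x."""
    rho = (count + 1) / (n + K)
    rho_prime = (count + 2) / (n + K + 1)
    return rho, rho_prime


if __name__ == "__main__":
    K = 10  # hypothetical alphabet size
    for count, n in [(1, 5), (3, 50), (40, 1000)]:
        rho, rho_prime = laplace_probs(count, n, K)
        n_hat = pseudo_count(rho, rho_prime)
        pg = prediction_gain(rho, rho_prime)
        # Theorem 1 (upper bounds): PG <= 1 / N_hat and PG <= N_hat ** -0.5.
        print(count, n, lemma3_gap(count, n), n_hat, pg, 1 / n_hat, n_hat ** -0.5)
```

For this particular estimator the pseudo-count equals the true count plus one, which makes the bounds easy to inspect by hand.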
Using this lemma, we derive an asymptotic relationship between $N_n$ and $\hat N_n$.

Proof (Theorem 2). We expand the definition of $\hat N_n(x)$ and $N_n(x)$:

$$\begin{aligned}
\frac{\hat N_n(x)}{N_n(x)} &= \frac{\rho_n(x)\left(1 - \rho'_n(x)\right)}{N_n(x)\left(\rho'_n(x) - \rho_n(x)\right)} = \frac{\rho_n(x)\left(1 - \rho'_n(x)\right)}{n \mu_n(x)\left(\rho'_n(x) - \rho_n(x)\right)} \\
&= \frac{\rho_n(x)\left(\mu'_n(x) - \mu_n(x)\right)}{\mu_n(x)\left(\rho'_n(x) - \rho_n(x)\right)} \cdot \frac{1 - \rho'_n(x)}{n\left(\mu'_n(x) - \mu_n(x)\right)} \\
&= \frac{\rho_n(x)}{\mu_n(x)} \cdot \frac{\mu'_n(x) - \mu_n(x)}{\rho'_n(x) - \rho_n(x)} \cdot \frac{1 - \rho'_n(x)}{1 - \mu'_n(x)},
\end{aligned}$$

with the last line following from Lemma 3. Under Assumption 1, all terms of the right-hand side converge as $n \to \infty$. Taking the limit on both sides,

$$\lim_{n \to \infty} \frac{\hat N_n(x)}{N_n(x)} \overset{(a)}{=} \frac{r(x)}{\dot r(x)} \lim_{n \to \infty} \frac{1 - \rho'_n(x)}{1 - \mu'_n(x)} \overset{(b)}{=} \frac{r(x)}{\dot r(x)} \cdot \frac{1 - \mu(x) r(x)}{1 - \mu(x)},$$

where (a) is justified by the existence of the relevant limits and $\dot r(x) > 0$, and (b) follows from writing $\rho'_n(x)$ as $\mu_n(x) \rho'_n(x) / \mu_n(x)$, where all limits involved exist.

B.1 Directed Graphical Models

We say that $\mathcal{X}$ is a factored state space if it is the Cartesian product of $k$ subspaces, i.e. $\mathcal{X} := \mathcal{X}_1 \times \cdots \times \mathcal{X}_k$. This factored structure allows us to construct approximate density models over $\mathcal{X}$, for example by modelling the joint density as a product of marginals. We write the $i$th factor of a state $x \in \mathcal{X}$ as $x^i$, and write the sequence of the $i$th factor across $x_{1:n}$ as $x^i_{1:n}$.

We will show that directed graphical models (Wainwright and Jordan, 2008) satisfy Assumption 1. A directed graphical model describes a probability distribution over a factored state space. To the $i$th factor $x^i$ is associated a parent set $\pi(i) \subseteq \{1, \dots, i-1\}$. Let $x^{\pi(i)}$ denote the value of the factors in the parent set.
The $i$th factor model is $\rho^i_n(x^i; x^{\pi(i)}) := \rho^i(x^i; x_{1:n}, x^{\pi(i)})$, with the understanding that $\rho^i$ is allowed to make a different prediction for each value of $x^{\pi(i)}$. The state $x$ is assigned the joint probability

$$\rho_{GM}(x; x_{1:n}) := \prod_{i=1}^{k} \rho^i_n(x^i; x^{\pi(i)}).$$

Common choices for $\rho^i_n$ include the conditional empirical distribution and the Dirichlet estimator.

Proposition 1. Suppose that each factor model $\rho^i_n$ converges to the conditional probability distribution $\mu(x^i \mid x^{\pi(i)})$ and that for each $x^i$ with $\mu(x^i \mid x^{\pi(i)}) > 0$,

$$\lim_{n \to \infty} \frac{\rho^i(x^i; x_{1:n}x, x^{\pi(i)}) - \rho^i(x^i; x_{1:n}, x^{\pi(i)})}{\mu(x^i; x_{1:n}x, x^{\pi(i)}) - \mu(x^i; x_{1:n}, x^{\pi(i)})} = 1.$$

Then for all $x$ with $\mu(x) > 0$, the density model $\rho_{GM}$ satisfies Assumption 1 with

$$r(x) = \frac{\prod_{i=1}^{k} \mu(x^i \mid x^{\pi(i)})}{\mu(x)} \quad \text{and} \quad \dot r(x) = \frac{\sum_{i=1}^{k} \left(1 - \mu(x^i \mid x^{\pi(i)})\right) \prod_{j \ne i} \mu(x^j \mid x^{\pi(j)})}{1 - \mu(x)}.$$

The CTS density model used in our experiments is in fact a particular kind of induced graphical model. The result above thus describes how the pseudo-counts computed in Section 3.2 are asymptotically related to the empirical counts.

Proof. By hypothesis, $\rho^i_n \to \mu(x^i \mid x^{\pi(i)})$. Combining this with $\mu_n(x) \to \mu(x) > 0$,

$$r(x) = \lim_{n \to \infty} \frac{\rho_{GM}(x; x_{1:n})}{\mu_n(x)} = \lim_{n \to \infty} \frac{\prod_{i=1}^{k} \rho^i_n(x^i; x^{\pi(i)})}{\mu_n(x)} = \frac{\prod_{i=1}^{k} \mu(x^i \mid x^{\pi(i)})}{\mu(x)}.$$

Similarly,

$$\begin{aligned}
\dot r(x) &= \lim_{n \to \infty} \frac{\rho'_{GM}(x; x_{1:n}) - \rho_{GM}(x; x_{1:n})}{\mu'_n(x) - \mu_n(x)} \\
&\overset{(a)}{=} \lim_{n \to \infty} \frac{\left(\rho'_{GM}(x; x_{1:n}) - \rho_{GM}(x; x_{1:n})\right) n}{1 - \mu'_n(x)} \\
&= \lim_{n \to \infty} \frac{\left(\rho'_{GM}(x; x_{1:n}) - \rho_{GM}(x; x_{1:n})\right) n}{1 - \mu(x)},
\end{aligned}$$

where in (a) we used the identity $n\left(\mu'_n(x) - \mu_n(x)\right) = 1 - \mu'_n(x)$ established in Lemma 3.
Now

$$\begin{aligned}
\dot r(x) &= (1 - \mu(x))^{-1} \lim_{n \to \infty} \left(\rho'_{GM}(x; x_{1:n}) - \rho_{GM}(x; x_{1:n})\right) n \\
&= (1 - \mu(x))^{-1} \lim_{n \to \infty} \left(\prod_{i=1}^{k} \rho^i(x^i; x_{1:n}x, x^{\pi(i)}) - \prod_{i=1}^{k} \rho^i(x^i; x_{1:n}, x^{\pi(i)})\right) n.
\end{aligned}$$

Let $c_i := \rho^i(x^i; x_{1:n}, x^{\pi(i)})$ and $c'_i := \rho^i(x^i; x_{1:n}x, x^{\pi(i)})$. The difference of products above is

$$\begin{aligned}
\prod_{i=1}^{k} \rho^i(x^i; x_{1:n}x, x^{\pi(i)}) - \prod_{i=1}^{k} \rho^i(x^i; x_{1:n}, x^{\pi(i)}) &= c'_1 c'_2 \cdots c'_k - c_1 c_2 \cdots c_k \\
&= (c'_1 - c_1)(c'_2 \cdots c'_k) + c_1 (c'_2 \cdots c'_k - c_2 \cdots c_k) \\
&= \sum_{i=1}^{k} (c'_i - c_i) \prod_{j < i} c_j \prod_{j > i} c'_j,
\end{aligned}$$

and

$$\dot r(x) = (1 - \mu(x))^{-1} \lim_{n \to \infty} \sum_{i=1}^{k} n (c'_i - c_i) \prod_{j < i} c_j \prod_{j > i} c'_j.$$

By the hypothesis on the rate of change of $\rho^i$ and the identity $n\left(\mu(x^i; x_{1:n}x, x^{\pi(i)}) - \mu(x^i; x_{1:n}, x^{\pi(i)})\right) = 1 - \mu(x^i \mid x^{\pi(i)})$, we have

$$\lim_{n \to \infty} n (c'_i - c_i) = 1 - \mu(x^i \mid x^{\pi(i)}).$$

Since the limits of $c'_i$ and $c_i$ are both $\mu(x^i \mid x^{\pi(i)})$, we deduce that

$$\dot r(x) = \frac{\sum_{i=1}^{k} \left(1 - \mu(x^i \mid x^{\pi(i)})\right) \prod_{j \ne i} \mu(x^j \mid x^{\pi(j)})}{1 - \mu(x)}.$$

Now, if $\mu(x) > 0$ then also $\mu(x^i \mid x^{\pi(i)}) > 0$ for each factor $x^i$. Hence $\dot r(x) > 0$.

B.2 Tabular Density Models (Corollary 1)

We shall prove the following, which includes Corollary 1 as a special case.

Lemma 4. Consider $\phi : \mathcal{X} \times \mathcal{X}^* \to \mathbb{R}^+$. Suppose that for all $(x_n : n \in \mathbb{N})$ and every $x \in \mathcal{X}$

1. $\lim_{n \to \infty} \frac{1}{n} \sum_{x \in \mathcal{X}} \phi(x, x_{1:n}) = 0$, and
2. $\lim_{n \to \infty} \left(\phi(x, x_{1:n}x) - \phi(x, x_{1:n})\right) = 0$.

Let $\rho_n(x)$ be the count-based estimator

$$\rho_n(x) = \frac{N_n(x) + \phi(x, x_{1:n})}{n + \sum_{x \in \mathcal{X}} \phi(x, x_{1:n})}.$$

If $\hat N_n$ is the pseudo-count corresponding to $\rho_n$ then $\hat N_n(x) / N_n(x) \to 1$ for all $x$ with $\mu(x) > 0$.

Condition 2 is satisfied if $\phi_n(x, x_{1:n}) = u_n(x) \phi_n$ with $\phi_n$ monotonically increasing in $n$ (but not too quickly!) and $u_n(x)$ converging to some distribution $u(x)$ for all sequences $(x_n : n \in \mathbb{N})$. This is the case for most tabular density models.
Proof. We will show that the condition on the rate of change required by Proposition 1 is satisfied under the stated conditions. Let $\phi_n(x) := \phi(x, x_{1:n})$, $\phi'_n(x) := \phi(x, x_{1:n}x)$, $\phi_n := \sum_{x \in \mathcal{X}} \phi_n(x)$ and $\phi'_n := \sum_{x \in \mathcal{X}} \phi'_n(x)$. By hypothesis,

$$\rho_n(x) = \frac{N_n(x) + \phi_n(x)}{n + \phi_n}, \qquad \rho'_n(x) = \frac{N_n(x) + \phi'_n(x) + 1}{n + \phi'_n + 1}.$$

Note that we do not require $\phi_n(x) = \phi'_n(x)$. Now

$$\begin{aligned}
\rho'_n(x) - \rho_n(x) &= \frac{n + \phi_n}{n + \phi_n} \left[\rho'_n(x) - \rho_n(x)\right] \\
&= \frac{n + 1 + \phi'_n}{n + \phi_n} \rho'_n(x) - \rho_n(x) - \frac{\left(1 + (\phi'_n - \phi_n)\right) \rho'_n(x)}{n + \phi_n} \\
&= \frac{1}{n + \phi_n} \left[\left(N_n(x) + 1 + \phi'_n(x)\right) - \left(N_n(x) + \phi_n(x)\right) - \left(1 + (\phi'_n - \phi_n)\right) \rho'_n(x)\right] \\
&= \frac{1}{n + \phi_n} \left[1 - \rho'_n(x) + \left(\phi'_n(x) - \phi_n(x)\right) - \rho'_n(x) \left(\phi'_n - \phi_n\right)\right].
\end{aligned}$$

Using Lemma 3 we deduce that

$$\frac{\rho'_n(x) - \rho_n(x)}{\mu'_n(x) - \mu_n(x)} = \frac{n}{n + \phi_n} \cdot \frac{1 - \rho'_n(x) + \phi'_n(x) - \phi_n(x) - \rho'_n(x)(\phi'_n - \phi_n)}{1 - \mu'_n(x)}.$$

Since $\phi_n = \sum_x \phi_n(x)$ and similarly for $\phi'_n$, then $\phi'_n(x) - \phi_n(x) \to 0$ pointwise implies that $\phi'_n - \phi_n \to 0$ also. For any $\mu(x) > 0$,

$$0 \le \lim_{n \to \infty} \frac{\phi_n(x)}{N_n(x)} \overset{(a)}{\le} \lim_{n \to \infty} \frac{\sum_{x \in \mathcal{X}} \phi_n(x)}{N_n(x)} = \lim_{n \to \infty} \frac{\sum_{x \in \mathcal{X}} \phi_n(x)}{n} \cdot \frac{n}{N_n(x)} \overset{(b)}{=} 0,$$

where (a) follows from $\phi_n(x) \ge 0$ and (b) is justified by $n / N_n(x) \to \mu(x)^{-1} > 0$ and the hypothesis that $\sum_{x \in \mathcal{X}} \phi_n(x) / n \to 0$. Therefore $\rho_n(x) \to \mu(x)$. Hence

$$\lim_{n \to \infty} \frac{\rho'_n(x) - \rho_n(x)}{\mu'_n(x) - \mu_n(x)} = \lim_{n \to \infty} \frac{n}{n + \phi_n} \cdot \frac{1 - \rho'_n(x)}{1 - \mu'_n(x)} = 1.$$

Since $\rho_n(x) \to \mu(x)$, we further deduce from Theorem 2 that

$$\lim_{n \to \infty} \frac{\hat N_n(x)}{N_n(x)} = 1.$$

The condition $\mu(x) > 0$, which was also needed in Proposition 1, is necessary for the ratio to converge to 1: for example, if $N_n(x)$ grows as $O(\log n)$ but $\phi_n(x)$ grows as $O(\sqrt{n})$ (with $|\mathcal{X}|$ finite) then $\hat N_n(x)$ will grow as the larger $\sqrt{n}$.
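Lemma 4 can be illustrated with the simplest case, a constant $\phi(x, x_{1:n}) = \alpha$ (a symmetric Dirichlet estimator), which satisfies both conditions. The sketch below is an illustration under that assumption and is not the CTS model used in the experiments; the class name `DirichletEstimator` and its methods are hypothetical. For this choice the pseudo-count works out to $N_n(x) + \alpha$, so the ratio $\hat N_n(x) / N_n(x)$ approaches 1 as $N_n(x)$ grows.

```python
from collections import Counter


class DirichletEstimator:
    """Count-based estimator rho_n(x) = (N_n(x) + alpha) / (n + K * alpha)."""

    def __init__(self, alphabet_size, alpha=0.5):
        self.K = alphabet_size
        self.alpha = alpha
        self.counts = Counter()
        self.n = 0

    def prob(self, x):
        return (self.counts[x] + self.alpha) / (self.n + self.K * self.alpha)

    def update(self, x):
        self.counts[x] += 1
        self.n += 1

    def pseudo_count(self, x):
        """N_hat computed from rho_n(x) and the recoding probability rho'_n(x)."""
        rho = self.prob(x)
        # rho' is the probability of x after one additional observation of x.
        rho_prime = (self.counts[x] + 1 + self.alpha) / (
            self.n + 1 + self.K * self.alpha
        )
        return rho * (1.0 - rho_prime) / (rho_prime - rho)


if __name__ == "__main__":
    model = DirichletEstimator(alphabet_size=4, alpha=0.5)
    sequence = ["a", "b", "a", "c"] * 250  # "a" has empirical frequency 1/2
    for t, symbol in enumerate(sequence, start=1):
        model.update(symbol)
        if t in (4, 40, 400, 1000):
            ratio = model.pseudo_count("a") / model.counts["a"]
            print(t, model.counts["a"], round(ratio, 4))
```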
C Experimental Methods

C.1 CTS Density Model

Our state space $\mathcal{X}$ is the set of all preprocessed Atari 2600 frames.² Each raw frame is composed of 210 × 160 7-bit NTSC pixels (Bellemare et al., 2013). We preprocess these frames by first converting them to grayscale (luminance), then downsampling to 42 × 42 by averaging over pixel values (Figure 5).

Aside from this preprocessing, our model is very similar to the model used by Bellemare et al. (2014) and Veness et al. (2015). The CTS density model treats $x \in \mathcal{X}$ as a factored state, where each $(i, j)$ pixel corresponds to a factor $x_{i,j}$. The parents of this factor are its upper-left neighbours, i.e. pixels $(i-1, j)$, $(i, j-1)$, $(i-1, j-1)$ and $(i+1, j-1)$ (in this order). The probability of $x$ is then the product of the probability assigned to its factors. Each factor is modelled using a location-dependent CTS model, which predicts the pixel's colour value conditional on some, all, or possibly none, of the pixel's parents (Figure 6).

² Technically, the ALE is partially observable and a frame is an observation, not a state. In many games, however, the current frame is sufficiently informative to guide exploration.

[Figure 5 panels: Original Frame (160x210); Downsampled, 3-bit Greyscale (42x42)]

Figure 5: Sample preprocessed image provided to the CTS model (right), along with the original frame (left). Although details are lost, objects can still be made out.

Figure 6: Depiction of the CTS "filter". Each downsampled pixel is predicted by a location-specific model which can condition on the pixel's immediate neighbours (in blue).

C.2 A Taxonomy of Exploration

We provide in Table 1 a rough taxonomy of the Atari 2600 games available through the ALE in terms of the difficulty of exploration. We first divided the games into two groups: those for which local exploration (e.g. ε-greedy) is sufficient to achieve a high scoring policy (easy), and those for which it is not (hard).
For example, SPACE INVADERS versus PITFALL!. We further divided the easy group based on whether an ε-greedy scheme finds a score exploit, that is, maximizes the score without achieving the game's stated objective. For example, KUNG-FU MASTER versus BOXING. While this distinction is not directly used here, score exploits lead to behaviours which are optimal from an ALE perspective but uninteresting to humans. We divide the games in the hard category into dense reward games (MS. PAC-MAN) and sparse reward games (MONTEZUMA'S REVENGE).

| Difficulty | Category | Games |
|---|---|---|
| Easy Exploration | Human-Optimal | ASSAULT, ASTERIX, ASTEROIDS, ATLANTIS, BATTLE ZONE, BERZERK, BOWLING, BOXING, BREAKOUT, CENTIPEDE, CHOPPER CMD, CRAZY CLIMBER, DEFENDER, DEMON ATTACK, DOUBLE DUNK, ENDURO, FISHING DERBY, GOPHER, ICE HOCKEY, JAMES BOND, NAME THIS GAME, PHOENIX, PONG, RIVER RAID, ROBOTANK, SKIING, SPACE INVADERS, STAR GUNNER |
| Easy Exploration | Score Exploit | BEAM RIDER, KANGAROO, KRULL, KUNG-FU MASTER, ROAD RUNNER, SEAQUEST, UP N DOWN, TUTANKHAM |
| Hard Exploration | Dense Reward | ALIEN, AMIDAR, BANK HEIST, FROSTBITE, H.E.R.O., MS. PAC-MAN, Q*BERT, SURROUND, WIZARD OF WOR, ZAXXON |
| Hard Exploration | Sparse Reward | FREEWAY, GRAVITAR, MONTEZUMA'S REVENGE, PITFALL!, PRIVATE EYE, SOLARIS, VENTURE |

Table 1: A rough taxonomy of Atari 2600 games according to their exploration difficulty.

Figure 7: Layout of levels in MONTEZUMA'S REVENGE, with rooms numbered from 0 to 23. The agent begins in room 1 and completes the level upon reaching room 15 (depicted).
C.3 Exploration in MONTEZUMA'S REVENGE

MONTEZUMA'S REVENGE is divided into three levels, each composed of 24 rooms arranged in a pyramidal shape (Figure 7). As discussed above, each room poses a number of challenges: to escape the very first room, the agent must climb ladders, dodge a creature, pick up a key, then backtrack to open one of two doors. The number of rooms reached by an agent is therefore a good measure of its ability. By accessing the game RAM, we recorded the location of the agent at each step during the course of training.³ We computed the visit count to each room, averaged over epochs each lasting one million frames. From this information we constructed a map of the agent's "known world", that is, all rooms visited at least once. The agent's current room number ranges from 0 to 23 (Figure 7) and is stored at RAM location 0x83. Figure 8 shows the set of rooms explored by our DQN agents at different points during training.

Figure 8 paints a clear picture: after 50 million frames, the agent using exploration bonuses has seen a total of 15 rooms, while the no-bonus agent has seen two. At that point in time, our agent achieves an average score of 2461; by 100 million frames, this figure stands at 3439, higher than anything previously reported. We believe the success of our method in this game is a strong indicator of the usefulness of pseudo-counts for exploration.

We remark that without mixing in the Monte-Carlo return, our bonus-based agent still explores significantly more than the no-bonus agent. However, the deep network seems unable to maintain a sufficiently good approximation to the value function, and performance quickly deteriorates. Comparable results using the A3C method provide another example of the practical importance of eligibility traces and return-based methods in reinforcement learning.
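The room-visit analysis of Section C.3 can be sketched as follows. This is an illustration only, not the authors' tooling: it assumes the 128-byte Atari RAM is exposed as a byte sequence whose index 0 corresponds to address 0x80, so that RAM location 0x83 is index 3. The function names and the `steps_per_epoch` parameter are hypothetical, and the epoch length is expressed in recorded steps rather than emulator frames.

```python
from collections import Counter

ROOM_ADDRESS = 0x83  # RAM location of the room number, as stated in the text
RAM_BASE = 0x80      # assumption: index 0 of the RAM array maps to address 0x80


def room_from_ram(ram):
    """Read the current room number from one RAM snapshot."""
    return ram[ROOM_ADDRESS - RAM_BASE]


def visit_counts_per_epoch(ram_snapshots, steps_per_epoch=1_000_000):
    """Return one Counter of room visits per epoch of `steps_per_epoch` steps."""
    epochs = []
    current = Counter()
    for step, ram in enumerate(ram_snapshots, start=1):
        current[room_from_ram(ram)] += 1
        if step % steps_per_epoch == 0:
            epochs.append(current)
            current = Counter()
    if current:
        epochs.append(current)  # keep a trailing partial epoch
    return epochs


def known_world(epochs):
    """All rooms visited at least once across the given epochs."""
    return sorted({room for counts in epochs for room in counts})


if __name__ == "__main__":
    def fake_ram(room):
        # Synthetic snapshot used only to exercise the functions above.
        ram = bytearray(128)
        ram[ROOM_ADDRESS - RAM_BASE] = room
        return ram

    trajectory = [fake_ram(r) for r in (1, 1, 1, 0, 0, 4, 1, 1)]
    epochs = visit_counts_per_epoch(trajectory, steps_per_epoch=4)
    print([dict(e) for e in epochs])
    print(known_world(epochs))
```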
C.4 Improving Exploration for Actor-Critic Methods

Our implementation of A3C was along the lines mentioned in Mnih et al. (2016) and uses 16 threads. Each thread corresponds to an actor learner and maintains a copy of the density model. All the threads are synchronized with the master thread at regular intervals of 250,000 steps. We followed the same training procedure as that reported in the A3C paper, with the following additional steps: we update our density model with the states generated by following the policy. During the policy gradient step, we compute the intrinsic rewards by querying the density model and add them to the extrinsic rewards before clipping them in the range [−1, 1], as was done in the A3C paper. This resulted in minimal overhead in computation costs and the memory footprint was manageable (< 32 GB) for most of the Atari games. Our training times were almost the same as the ones reported in the A3C paper. We picked β = 0.01 after performing a short parameter sweep over the training games. The choice of training games is the same as mentioned in the A3C paper.

The games on which DQN achieves a score of 150% or less of the random score are: ASTEROIDS, DOUBLE DUNK, GRAVITAR, ICE HOCKEY, MONTEZUMA'S REVENGE, PITFALL!, SKIING, SURROUND, TENNIS, TIME PILOT.

The games on which A3C achieves a score of 150% or less of the random score are: BATTLE ZONE, BOWLING, ENDURO, FREEWAY, GRAVITAR, KANGAROO, PITFALL!, ROBOTANK, SKIING, SOLARIS, SURROUND, TENNIS, TIME PILOT, VENTURE.

³ We emphasize that the game RAM is not made available to the agent, and is solely used here in our behavioural analysis.
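The reward augmentation described in Section C.4 can be sketched as below. This is a minimal illustration under stated assumptions, not the authors' implementation: the bonus is taken to be $\beta (\hat N_n(x) + c)^{-1/2}$, where the small constant `c` is a hypothetical choice that keeps the bonus finite when the pseudo-count is zero, and the function names are ours. The text itself only states that the intrinsic reward is added to the extrinsic reward before clipping to $[-1, 1]$ and that $\beta = 0.01$.

```python
import math


def exploration_bonus(pseudo_count, beta=0.01, c=0.01):
    """Intrinsic reward beta / sqrt(N_hat + c); `c` is an assumed constant."""
    # Clamping at zero is our own safeguard against negative pseudo-counts.
    return beta / math.sqrt(max(pseudo_count, 0.0) + c)


def augmented_reward(extrinsic, pseudo_count, beta=0.01, c=0.01):
    """Add the bonus to the extrinsic reward, then clip the sum to [-1, 1]."""
    total = extrinsic + exploration_bonus(pseudo_count, beta, c)
    return max(-1.0, min(1.0, total))


if __name__ == "__main__":
    for n_hat in (0.0, 1.0, 100.0):
        print(n_hat, augmented_reward(0.0, n_hat), augmented_reward(1.0, n_hat))
```

Clipping after the addition means the bonus cannot push a reward that is already at the ceiling any higher, so in this sketch it mainly matters on steps with little or no extrinsic reward.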
[Figure 8 panels: 5, 10, 20 and 50 million training frames; each panel shows the no-bonus agent (top) and the with-bonus agent (bottom)]

Figure 8: "Known world" of a DQN agent trained over time, with (bottom) and without (top) count-based exploration bonuses, in MONTEZUMA'S REVENGE.

The games on which A3C+ achieves a score of 150% or less of the random score are: DOUBLE DUNK, GRAVITAR, ICE HOCKEY, PITFALL!, SKIING, SOLARIS, SURROUND, TENNIS, TIME PILOT, VENTURE.

Our experiments involved the stochastic version of the Arcade Learning Environment (ALE) without a terminal signal for life loss, which is now the default ALE setting. Briefly, the stochasticity is achieved by accepting the agent's action at each frame with probability 1 − p and using the agent's previous action during rejection. We used the ALE's default value of p = 0.25, as previously used in Bellemare et al. (2016). For comparison, Table 2 also reports the deterministic + life loss setting used in the literature.

Anecdotally, we found that using the life loss signal, while helpful in achieving high scores in some games, is detrimental in MONTEZUMA'S REVENGE. Recall that the life loss signal was used by Mnih et al. (2015) to treat each of the agent's lives as a separate episode. For comparison, after 200 million frames A3C+ achieves the following average scores: 1) Stochastic + Life Loss: 142.50; 2) Deterministic + Life Loss: 273.70; 3) Stochastic without Life Loss: 1127.05; 4) Deterministic without Life Loss: 273.70. The maximum score achieved by 3) is 3600, in comparison to the maximum of 500 achieved by 1) and 3).
This large discrepancy is not surprising when one considers that losing a life in MONTEZUMA'S REVENGE, and in fact in most games, is very different from restarting a new episode.

Figure 9: Average A3C+ score (solid line) over 200 million training frames, for all Atari 2600 games, normalized relative to the A3C baseline. Dotted lines denote min/max over seeds, inter-quartile range is shaded, and the median is dashed.

C.5 Comparing Exploration Bonuses

In this section we compare the effect of using different exploration bonuses derived from our density model. We consider the following variants:

• no exploration bonus,
• $\hat N_n(x)^{-1/2}$, as per MBIE-EB (Strehl and Littman, 2008);
• $\hat N_n(x)^{-1}$, as per BEB (Kolter and Ng, 2009); and
• $\mathrm{PG}_n(x)$, related to compression progress (Schmidhuber, 2008).

[Figure 10 panels: 10M, 50M, 100M and 200M training frames; axes: Fraction of Games, Inter-algorithm Score; curves: 1/N, 1/√N, PG, No bonus]

Figure 10: Inter-algorithm score distribution for exploration bonus variants. For all methods the point f(0) = 1 is omitted for clarity. See text for details.

The exact form of these bonuses is analogous to (4). We compare these variants after 10, 50, 100, and 200 million frames of training, again in the A3C setup. To compare scores across 60 games, we use inter-algorithm score distributions (Bellemare et al., 2013). Inter-algorithm scores are normalized so that 0 corresponds to the worst score on a game, and 1 to the best. If $g \in \{1, \dots, m\}$ is a game and $z_{g,a}$ the inter-algorithm score on $g$ for algorithm $a$, then the score distribution function is

$$f(x) := \frac{\left|\{g : z_{g,a} \ge x\}\right|}{m}.$$
The score distribution effectively depicts a kind of cumulative distribution, with a higher overall curve implying better scores across the gamut of Atari 2600 games. A higher curve at x = 1 implies top performance on more games; a higher curve at x = 0 indicates the algorithm does not perform poorly on many games. The scale parameter β was optimized to β = 0.01 for each variant separately.

Figure 10 shows that, while prediction gain initially achieves strong performance, by 50 million frames all three algorithms perform equally well. By 200 million frames, the $\hat N^{-1/2}$ exploration bonus outperforms both prediction gain and no bonus. The prediction gain achieves a decent, but not top-performing score on all games. This matches our earlier argument that using prediction gain results in too little exploration. We hypothesize that the poor performance of the $\hat N^{-1}$ bonus stems from too abrupt a decay from a large to small intrinsic reward, although more experiments are needed. As a whole, these results show how using PG offers an advantage over the baseline A3C algorithm, which is furthered by using our count-based exploration bonus.
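The inter-algorithm score distribution of Section C.5 can be computed as in the following sketch. It assumes raw scores are supplied as a mapping from game to a per-algorithm score dictionary (a hypothetical layout), that the normalization between the worst and best algorithm on each game is linear, and that games where all algorithms tie are assigned a score of 1; the function names are ours. The sample scores are the stochastic-ALE entries for three games from Table 2.

```python
def inter_algorithm_scores(raw_scores):
    """Map raw scores {game: {algo: score}} to normalized scores in [0, 1]."""
    normalized = {}
    for game, by_algo in raw_scores.items():
        worst, best = min(by_algo.values()), max(by_algo.values())
        span = best - worst
        normalized[game] = {
            # Assumption: if every algorithm ties, each gets a score of 1.
            algo: (score - worst) / span if span > 0 else 1.0
            for algo, score in by_algo.items()
        }
    return normalized


def score_distribution(normalized, algo, x):
    """f(x) = |{g : z_{g,a} >= x}| / m for algorithm `algo`."""
    m = len(normalized)
    return sum(1 for z in normalized.values() if z[algo] >= x) / m


if __name__ == "__main__":
    raw = {
        "MONTEZUMA'S REVENGE": {"A3C": 0.06, "A3C+": 142.50, "DQN": 0.02},
        "PONG": {"A3C": 18.21, "A3C+": 17.33, "DQN": 15.10},
        "VENTURE": {"A3C": 0.00, "A3C+": 0.00, "DQN": 54.86},
    }
    z = inter_algorithm_scores(raw)
    for threshold in (0.0, 0.5, 1.0):
        print(threshold, score_distribution(z, "A3C+", threshold))
```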
| Game | Stochastic ALE: A3C | Stochastic ALE: A3C+ | Stochastic ALE: DQN | Deterministic ALE: A3C | Deterministic ALE: A3C+ | Deterministic ALE: DQN |
|---|---|---|---|---|---|---|
| ALIEN | 1968.40 | 1848.33 | 1802.08 | 1658.25 | 1945.66 | 1418.47 |
| AMIDAR | 1065.24 | 964.77 | 781.76 | 1034.15 | 861.14 | 654.40 |
| ASSAULT | 2660.55 | 2607.28 | 1246.83 | 2881.69 | 2584.40 | 1707.87 |
| ASTERIX | 7212.45 | 7262.77 | 3256.07 | 9546.96 | 7922.70 | 4062.55 |
| ASTEROIDS | 2680.72 | 2257.92 | 525.09 | 3946.22 | 2406.57 | 735.05 |
| ATLANTIS | 1752259.74 | 1733528.71 | 77670.03 | 1634837.98 | 1801392.35 | 281448.80 |
| BANK HEIST | 1071.89 | 991.96 | 419.50 | 1301.51 | 1182.89 | 315.93 |
| BATTLE ZONE | 3142.95 | 7428.99 | 16757.88 | 3393.84 | 7969.06 | 17927.46 |
| BEAM RIDER | 6129.51 | 5992.08 | 4653.24 | 7004.58 | 6723.89 | 7949.08 |
| BERZERK | 1203.09 | 1720.56 | 416.03 | 1233.47 | 1863.60 | 471.76 |
| BOWLING | 32.91 | 68.72 | 29.07 | 35.00 | 75.97 | 30.34 |
| BOXING | 4.48 | 13.82 | 66.13 | 3.07 | 15.75 | 80.17 |
| BREAKOUT | 322.04 | 323.21 | 85.82 | 432.42 | 473.93 | 259.40 |
| CENTIPEDE | 4488.43 | 5338.24 | 4698.76 | 5184.76 | 5442.94 | 1184.46 |
| CHOPPER COMMAND | 4377.91 | 5388.22 | 1927.50 | 3324.24 | 5088.17 | 1569.84 |
| CRAZY CLIMBER | 108896.28 | 104083.51 | 86126.17 | 111493.76 | 112885.03 | 102736.12 |
| DEFENDER | 42147.48 | 36377.60 | 4593.79 | 39388.08 | 38976.66 | 6225.82 |
| DEMON ATTACK | 26803.86 | 19589.95 | 4831.12 | 39293.17 | 30930.33 | 6183.58 |
| DOUBLE DUNK | 0.53 | -8.88 | -11.57 | 0.19 | -7.84 | -13.99 |
| ENDURO | 0.00 | 749.11 | 348.30 | 0.00 | 694.83 | 441.24 |
| FISHING DERBY | 30.42 | 29.46 | -27.83 | 32.00 | 31.11 | -8.68 |
| FREEWAY | 0.00 | 27.33 | 30.59 | 0.00 | 30.48 | 30.12 |
| FROSTBITE | 290.02 | 506.61 | 707.41 | 283.99 | 325.42 | 506.10 |
| GOPHER | 5724.01 | 5948.40 | 3946.13 | 6872.60 | 6611.28 | 4946.39 |
| GRAVITAR | 204.65 | 246.02 | 43.04 | 201.29 | 238.68 | 219.39 |
| H.E.R.O. | 32612.96 | 15077.42 | 12140.76 | 34880.51 | 15210.62 | 11419.16 |
| ICE HOCKEY | -5.22 | -7.05 | -9.78 | -5.13 | -6.45 | -10.34 |
| JAMES BOND | 424.11 | 1024.16 | 511.76 | 422.42 | 1001.19 | 465.76 |
| KANGAROO | 47.19 | 5475.73 | 4170.09 | 46.63 | 4883.53 | 5972.64 |
| KRULL | 7263.37 | 7587.58 | 5775.23 | 7603.84 | 8605.27 | 6140.24 |
| KUNG-FU MASTER | 26878.72 | 26593.67 | 15125.08 | 29369.90 | 28615.43 | 11187.13 |
| MONTEZUMA'S REVENGE | 0.06 | 142.50 | 0.02 | 0.17 | 273.70 | 0.00 |
| MS. PAC-MAN | 2163.43 | 2380.58 | 2480.39 | 2327.80 | 2401.04 | 2391.89 |
| NAME THIS GAME | 6202.67 | 6427.51 | 3631.90 | 6087.31 | 7021.30 | 6565.41 |
| PHOENIX | 12169.75 | 20300.72 | 3015.64 | 13893.06 | 23818.47 | 7835.20 |
| PITFALL | -8.83 | -155.97 | -84.40 | -6.98 | -259.09 | -86.85 |
| POOYAN | 3706.93 | 3943.37 | 2817.36 | 4198.61 | 4305.57 | 2992.56 |
| PONG | 18.21 | 17.33 | 15.10 | 20.84 | 20.75 | 19.17 |
| PRIVATE EYE | 94.87 | 100.00 | 69.53 | 97.36 | 99.32 | -12.86 |
| Q*BERT | 15007.55 | 15804.72 | 5259.18 | 19175.72 | 19257.55 | 7094.91 |
| RIVER RAID | 10559.82 | 10331.56 | 8934.68 | 11902.24 | 10712.54 | 2365.18 |
| ROAD RUNNER | 36933.62 | 49029.74 | 31613.83 | 41059.12 | 50645.74 | 24933.39 |
| ROBOTANK | 2.13 | 6.68 | 50.80 | 2.22 | 7.68 | 40.53 |
| SEAQUEST | 1680.84 | 2274.06 | 1180.70 | 1697.19 | 2015.55 | 3035.32 |
| SKIING | -23669.98 | -20066.65 | -26402.39 | -20958.97 | -22177.50 | -27972.63 |
| SOLARIS | 2156.96 | 2175.70 | 805.66 | 2102.13 | 2270.15 | 1752.72 |
| SPACE INVADERS | 1653.59 | 1466.01 | 1428.94 | 1741.27 | 1531.64 | 1101.43 |
| STAR GUNNER | 55221.64 | 52466.84 | 47557.16 | 59218.08 | 55233.43 | 40171.44 |
| SURROUND | -7.79 | -6.99 | -8.77 | -7.10 | -7.21 | -8.19 |
| TENNIS | -12.44 | -20.49 | -12.98 | -16.18 | -23.06 | -8.00 |
| TIME PILOT | 7417.08 | 3816.38 | 2808.92 | 9000.91 | 4103.00 | 4067.51 |
| TUTANKHAM | 250.03 | 132.67 | 70.84 | 273.66 | 112.14 | 75.21 |
| UP AND DOWN | 34362.80 | 8705.64 | 4139.20 | 44883.40 | 23106.24 | 5208.67 |
| VENTURE | 0.00 | 0.00 | 54.86 | 0.00 | 0.00 | 0.00 |
| VIDEO PINBALL | 53488.73 | 35515.91 | 55326.08 | 68287.63 | 97372.80 | 52995.08 |
| WIZARD OF WOR | 4402.10 | 3657.65 | 1231.23 | 4347.76 | 3355.09 | 378.70 |
| YAR'S REVENGE | 19039.24 | 12317.49 | 14236.94 | 20006.02 | 13398.73 | 15042.75 |
| ZAXXON | 121.35 | 7956.05 | 2333.52 | 152.11 | 7451.25 | 2481.40 |
| Times Best | 26 | 24 | 8 | 26 | 25 | 9 |

Table 2: Average score after 200 million training frames for A3C and A3C+ (with $\hat N_n^{-1/2}$ bonus), with a DQN baseline for comparison.
