Deep Learning Based Fast Multiuser Detection for Massive Machine-Type Communication

Massive machine-type communication (MTC) with sporadically transmitted small packets and low data rate requires new designs on the PHY and MAC layer with light transmission overhead. Compressive sensing based multiuser detection (CS-MUD) is designed …

Authors: Yanna Bai, Bo Ai, Wei Chen

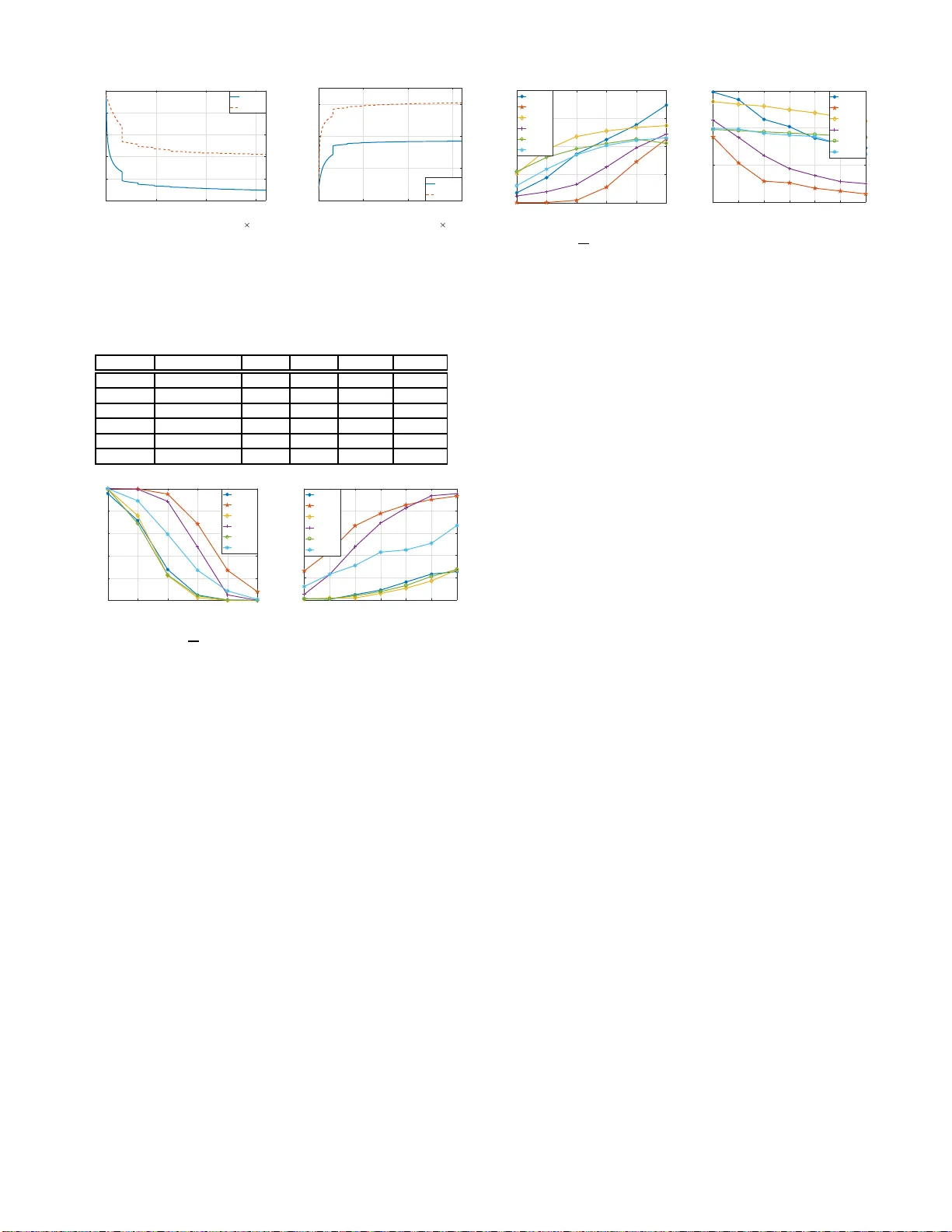

1 Deep Learning Based F ast Multiuser Detect ion for Massi v e Mac hine-T ype Co mmu nication Y anna Bai, Bo Ai, Senior Me mber , IEEE , and W ei Chen*, Senior Member , IEEE Abstract —Massiv e machine-type communication (MTC) with sporadically transmitted small p ackets and low data rate requires new designs on the PHY and MA C laye r with light transmission ov erhead. Compressi ve sensing based mul tiuser detection (CS- MUD) is designed to d etect activ e users through random access with low o verhead by exploiting sparsity , i.e., the nature o f sporadic transmissions in MTC. Ho wev er , the high computational complexity of con ventional sparse r econstruction algorithms prohibits the i mplementation of CS-MUD in rea l communication systems. T o ov erco me this drawback, i n th is paper , we propose a fast Deep learning based approa ch for CS-MUD in massive MTC systems. In particular , a novel bl ock restrictiv e activ ation nonlinear unit, is proposed to capture the block sp arse structure in wid e-band wireless communication systems (or multi-antenna systems). Our simulation r esults sho w that the proposed appro ach outperfor ms various existing algorithms f or CS-MUD and allows fo r ten-fold decrea se of the computing time. Index T erms —Massiv e machine-type communication, random access, deep learning. I . I N T RO D U C T I O N R ECENT years observe a growing interest in massive machine-ty pe communica tio n (MTC) owing to the rapid development of In ternet of Things and 5G [1]. In a typical MTC com munication scene, a massi ve numb er of nod es spo- radically transmit small packets with a low da ta rate, which is quite different to curren t cellu lar systems that are d esigned to suppo rt high data rates an d reliable conne c tions o f a small number of user s per cell. Commu nication overhead takes up a larger portio n of r esources in the MTC scene, and thus more efficient access method s are need ed. One po tential ap proach to redu ce the co mmunicatio n overhead is to av oid or r educe control sign aling overhead regarding the a c ti vity o f devices before tran smission. An im p ortant issue in massive MTC is acce ss cong e stion owing to the large num ber of MTC devices. In [2], f our approa c h es, i.e. , backoff-based scheme, access class barring based scheme, separating ran d om access ch annel (RA CH) resources and dyanam ic allocatio n of RA CH resource s, are introdu c ed to deal with acc e ss congestion. Howev er , th o se approa c h es does not effectiv ely redu ce sig naling overhead. In massi ve MTC with sporadic commu n ication, a comp ressiv e sensing (CS) based multiuser detectio n (MUD) [3], [4] is propo sed to joint detect u ser activity and data with a k nown channel state informa tion (CSI), wh ich redu ces commu nica- tion overhead by elimin ating contr o l signaling. In p ractical systems where CSI is unknown, a CS b ased joint activity and Y anna Bai, Bo A i and W ei Chen are w ith the Stat e Ke y Lab of Rail Tra f fic Control and Safet y , Beijing Jiaotong Uni versity , Beijing, China (e- mail: 16125001,boai,mmni,weich@bj tu.edu.cn). Correspondi ng author: W ei Chen. channel detection metho d is proposed in [5], where each nod e is assign ed a uniqu e pilot sequence f o r chann el estimation. The cost brou ght by the CS-MUD approach is the effort in solving a sparse estimation problem , which requ ires iterati ve algorithm s, e.g., ortho g onal matching pursuit (OMP) [6]. How- ev er , these iterative algorithm s are designed and optim ized for achieving a hig h er accur a cy and/or theoretically guar anteed conv ergence and fail to consider time constraints. Apply - ing iterative steps in trad itional sparse estimation algo rithms until a c hieving convergence would increases co mmunica tio n latency ( especially when the nu mber of nod es is large), wh ich is critical in some applications. It then naturally begs the question: can we red uce the sign aling overhead for MTC without sign ificantly sacrifice o n the laten cy . In this paper, we pr opose a fast Deep Learning (DL) based approa c h for MUD in massi ve MTC systems. As on e of the most h ig hly soug ht-after skills in technolo gy , DL [ 7] has been applied to various fields including comp uter vision [8], speech reco gnition [9], and langua ge translation [10] and got great success. As a branch of mach ine learnin g, DL, which usually refers to deep neur al networks (DNNs), con sumes a large amo unt of train ing data to learn param eters in a neural network. Whe n the neur a l ne twork has a suf ficient n u mber of hidden units, it can appr o ximate a large class of piecewise smooth f u nctions [1 1]. Altho ugh the training process o f the propo sed DL based app roach is time con suming, it can be condu c te d o ff-line with synthe tica lly gener ated data. For the inference task, i. e., MUD, the computing complexity of the trained DNN is low , as it only inv olves a nu mber of vector- matrix multiplication s/summations and eleme n t-wise no nlinear operation s. In addition , for wid e-band wireless commu nication systems or multi-a n tenna systems, the tra n smitted signal ar r iv es at the receiv er with multiple paths or multiple links, wh ich leads to a block sp a rse CSI vector . Capitalizing on the blo ck sparse structure, we furth er propose a novel b lock restricti ve activ atio n nonlin ear un it, which is distinct to existing a c ti vation function s in DNNs [12], [13]. Experim ents de monstrate the efficiency an d effecti veness of the pro posed bloc k-restrict neural network (BR NN) in compared with existing me thods. I I . B A C K G RO U N D A. System Description In this paper , we consider a massi ve MTC scenario where multiple devices communic a te with a b ase station (BS), a s shown in Fig. 1. W ithout lo ss o f ge nerality , o nly n devices out of K d evices hav e data to b e transmitted to the BS in one frame. For simp licity , we assume that all frames are received 2 Fig. 1. A star-t opology for the massiv e MT C scenario. synchro n ous at the BS. Each user is assigned a unique pilo t sequence s k ( k = 1 , . . . , K ) with the length N s for ch annel estimation. E ach symbol of the pilot sequ ence is ch osen fr o m the modulation alpha bet A . The channel vector h k of user k is d enoted by h k ∈ C L , wh e r e C denotes th e set of comp lex number s. Th e conv olution o f the chann el vector and the pilot sequence s k can be expressed as the matrix multiplication by rewriting th e conv o lution matrix of the tra n smitted pilot sequence of user k as ˆ S k = s k, 1 0 · · · s k, 2 s k, 1 . . . . . . . . . s k,N s s k,N s − 1 0 s k,N s 0 0 . . . ∈ C N , where N = ( N s + L − 1) × L . Then the sign a l received at the BS is gi ven by y = K X k =1 a k ˆ S k h k + n , (1) where n den otes add iti ve wh ite Gaussian noise, and a k ∈ { 0 , 1 } denotes the activity of user k . a k = 1 an d a k = 0 indicate active user and silen t user , respecti vely . Now we con struct th e pilot matrix of all user as ˆ S = [ ˆ S 1 , . . . , ˆ S K ] ∈ C ( N s + L − 1) × K L , th e ch annel vector of all user as h = [ h T 1 , . . . , h T K ] T ∈ C K L , and the user activity matrix as A = d iag ( a 1 I , . . . , a K I ) ∈ R K L × K L , where diag ( · ) denotes the transformatio n of a vector in to a d iagonal m atrix. Then the eq uation (1) can be reexpressed as y = ˆ SAh + n = ˆ Sx + n , (2) where x = Ah is a b lock sparse vector with n non zero b locks correspo n ding to acti ve users. By r econstructin g x fr o m y , we simultaneou sly detect active u sers and estimate their ch a n nel. Therefo re, the p r oblem boils down to solve the following optimization pr oblem min x y − ˆ Sx 2 2 , s.t. k x k 0 ≤ n L, (3) where k · k 0 denotes the ℓ 0 norm th at cou nts the number of nonzer o elem ents. Note that the optim iz a tion pro blem in (3) is NP-hard, and po pular a p proxim ations with v arying degrees o f co mputation al overhead include con vex relaxation methods [14] and iterative algo r ithms [15]. B. Deep Neural Network From th e perspec ti ve o f DL, the p rocess that solves the optimization problem in (3) could be seen as a black box, which is e xpressed as a functio n g ( y , ˆ S , θ ) = a rg min k x k 0 ≤ nL y − ˆ Sx 2 2 , (4) where θ denotes a set of parameter s. Giv en a set of training examples D = { x ( i ) , y ( i ) } i , a DNN is learned to m a p each input y ( i ) to a desired outco me by sev eral succ essi ve layers of lin ear transfor m ation interleaved with element- wise non - linear transforms. For an ord inary f eedforward n e ural network (FNN), the t th layer can be expressed as x t +1 = f ( W t x t + b t ) , (5) where the weig ht matr ix W t and the bias vector b t are parameters to be learned, an d f ( · ) denotes some non-linea r operator, e.g . , rectilinear un its (RELU). V arious DNN designs f o r th e sp a r sity enforcin g problem as (3) h ave be e n pro posed in literature. For example, in compariso n to the ordinar y FNN layers as defined in (5), a learned iterativ e shr inkage and thresho lding algo rithm (LIST A) is propo sed in [16], wher e different layers share same p a- rameters, i.e., W and b . Fu r thermor e, the no nlinear u nit f [ · ] adopted in LIST A is the elem e n t-wise soft-threshold ing func - tion f [ x ] = sig n ( x ) max { 0 , | x | − δ } , where δ is the shr inkage parameter . An IHT -net is proposed in [12], which is th e same as LIST A except fo r using a hard thr esholding function f [ x ] = sig n { x } max { 0 , | x |} as the nonlinear unit. While both L IST A and I H T -net use sha red weig h ts among layers, au th ors in [13] propo se to use or dinary FNN where layers do not have shared weights, and incorp o rate batch no rmalization [ 17] and residual connectio n [8] to reasonably initialize the neural network and to prevent v anishing/explod ing gradie n ts, respectiv ely . I I I . P RO P O S E D A P P R O AC H A. Network Structure The mo st straightfor ward DNN d esign for tacklin g a regre s- sion problem as in (3 ) is to map the rec e i ved sign al y to some outcome x . In v iew of the fact that a spar se x can be obtain e d by the least sq u are e stima to r g iven its suppo rt, it is would be mor e capacity- efficient to u se a D NN to app r oximate the mapping from y to the support o f x . Ther efore, we co n sider to use DNN for detecting acti ve users, which leads to a multi- label classification problem. Furthermo re, in wideband wireless communicatio n systems, x b ecomes a block sparse vector 1 with the block size L . It would be beneficial to incorpo rate this prior inform ation into the structure of the designed DNN. Here, we propo se to use a new block acti vation unit f ( x 1 , . . . , x L ) = sign (max { 0 , x 1 , . . . , x L } ) · [ x 1 , . . . , x L ] , (6) 1 For narro wband systems ( L = 1 ) with multiple antenna s at the BS, x is also a block sparse vecto r , where the block length is the number of antenna s. 3 Fig. 2. The structu re of the proposed BRNN. where a block of e le m ents are jointly acti vated if one elemen t is g reater than zero. Here sign ( · ) denotes th e sign fu n ction. Batch normalization is added for reasonable in itialization and residual connec tion is used to prevent vanishing/explod in g gradients. Furth ermore, we ad opt a p o oling layer b efore the last sof tmax layer to for c e the ou tput of the n etwork to indica te the acti ve users. R ELU is employed in the first a fe w layers, while the block activation unit is used in th e remaining lay ers. The last layer of BRNN employs th e softmax cross entro py loss functio n. This proposed network is named a s BRNN, and its stru cture is illustrated in Fig. 2 . B. T raining Data Gen eration A comm o n issue in app lying DL to wir e le ss c ommun ic a tion is the difficulty of collec tin g massive real data. Fortun ately , for solving the o p timization pro blem in (3) by a DNN, we cou ld use synthetically g enerated d ata fo r train ing. In specific, to obtain each training data, we first g enerate a r andom no ise vector n and a ran dom block sparse vector x whose support is used as the label, and then gener ate y by simply applyin g (1). Ma ssi ve training d a ta can be gen erated in this way , and the tr a ining pr ocess can be d o ne in an o ff-line manner . Once parameters of BRNN is learned, u sing this neura l network for inference, i.e., de te c ting active users in ne w d ataset, is compu tational inexpensive, as it only in volves a nu m ber of vector-matrix mu ltiplications/summa tio ns and elemen t- wise nonlinear o perations. W e would also like to emp hasize that the r e q uired amou nt of training data depen ds o n the numb er of n o des and the nu mber of acti ve nod es. W ith K nodes in total, there are K n different labels f or n active nodes. This number increa ses qu ick ly with the g row of n . T herefor e, in stead of generating the training data with a random activ e user nu mber, we fix the nu mber o f activ e node close to the lim it of traditional iterative algorithms for solv in g (3). I V . E X P E R I M E N T S In this section , we inv estigate the perfo r mance of the propo sed BRNN fo r MUD in MTC. In the experim ents, we co nsider K = 1 00 users in to tal, an d the activ e users transmit N s pilot sym bols with binary p hase shif t keying (BPSK) mod ulation. The chann el is modeled by L = 6 indepen d ent identically Ray le ig h distributed taps. Th e receiver noise n is generated b y a zero mean Gaussian vector with variance ad ju sted to have a desired value of the signal to noise ratio ( SNR). As K > N s + L − 1 L , the MUD p roblem is underd etermined . The propo sed BRNN is comp ared with several iterative sparse estimatio n alg orithms, in cluding o rthogo nal matching pursuit (OMP) [6], iterative hard th resholding (IHT) [1 5] and their extensions for block structur e, i.e., BOMP [18] and BIHT [19]. The DNN pro posed in [13] is also comp ared to emph a size the g ain brough t by BRNN. The detection o f multiuser is considered to be successful if er ror occur s. In our experimen ts, we g enerate 8 × 10 6 different samples for training, 10 5 samples for verification and 10 5 samples for testing. For a ll the generated data samples, we ad d ad ditiv e white Gaussian noise with a signal-to-noise ratio (SNR) o f 10 dB. In the trainin g data and verification data, we ran domly activ ate 6 no des, while in the test data, n ≤ 6 acti ve users a r e random ly selected. The optimizer adopte d for training neura l networks is stocha stic grad ient descen t with a m omentum 0 .9 and a learning rate 0.0 1. The batch size is fixed to 250. A. Con ver gence P erformance an d Comp utation Efficiency W e fir st inv estigate the co n vergence pe r forman ce of DNN and the pro posed BRNN in the train ing proc e ss. Th e pilot length of eac h u ser is fixed as N s = 40 . W e use the same initialization for DNN and BRNN as suggested in [2 0] and train the two neura l networks with the same learning r a te . The cross-entropy loss in the training proce ss is calculated for ev ery 100 b atches, and the r e sults are sho wn in Fig. 3( a). In addition to the cross-entropy loss, Fig. 3(b) shows the ratio o f successfully de te c te d users in the training proce ss. As shown in Fig. 3, be nefited from the block activ ation unit (6), BRNN conv erges mu c h faster and achieves a lower training lo ss than DNN, which fails to inco rporate the pr ior knowledge on the structure of the signal supp o rt. Then we investigate the computatio n efficiency o f in ference using testing data. The averaged computin g tim e for testing one d ata samp le is given in T able I. Note that altho ugh we use GPU to sp e ed up the training process for BRNN and DNN, for a fair comp a r ison of compu tational complexity we u se CPU for th e testing data for all the compared app r oaches includ ing OMP , BOMP , IHT , BIHT , DNN an d BRNN. These simulations are performed on a computer with a quad-core 4 .2GHz CPU 4 0 1 2 3 Batch index 10 5 18 20 22 24 26 28 Cross-entropy loss BRNN DNN (a) Cross-entr opy loss 0 1 2 3 Batch index 10 5 0 0.2 0.4 0.6 Ratio of successfully detected users BRNN DNN (b) Ratio of succe ssfully dete cted users Fig. 3. The con verg ence performance of DNN and BRNN. T ABLE I A V E R A G E D C O M P UT I N G T I M E F O R M ULT I U S E R D E T E C T I O N ( I N S EC O N D S ) . ( N s , n ) DNN/BRNN IHT BIHT OMP BOMP (30 , 3) 2 . 57 × 10 − 4 0 . 162 0 . 173 0 . 007 0 . 007 (30 , 6) 2 . 57 × 10 − 4 0 . 009 0 . 163 0 . 007 0 . 009 (40 , 3) 2 . 56 × 10 − 4 0 . 183 0 . 159 0 . 009 0 . 006 (40 , 6) 2 . 56 × 10 − 4 0 . 021 0 . 177 0 . 010 0 . 010 (50 , 3) 2 . 58 × 10 − 4 0 . 193 0 . 141 0 . 011 0 . 006 (50 , 6) 2 . 58 × 10 − 4 0 . 085 0 . 183 0 . 0136 0 . 012 0.01 0.02 0.03 0.04 0.05 0.06 n/K 0 0.2 0.4 0.6 0.8 1 Success Rate DNN BRNN OMP BOMP IHT BIHT (a) Success rate vs. n K ( N s = 40 ) 30 35 40 45 50 55 60 Ns 0 0.2 0.4 0.6 0.8 1 Success Rate DNN BRNN OMP BOMP IHT BIHT (b) Success rate vs. N s ( n = 4 ) Fig. 4. Performance of activ e users detection. and 16 GB RAM, ru nning under the Micr osoft W in dows 1 0 operating system. As shown in T able I, for various settings of pilot leng th and user a c ti vation probab ility , deep learning approa c h es, i.e., DNN and BRNN, allo w for mor e than 1 0 -fold decrease of the compu ting time. This significant improvement regarding to the com puting comp lexity is owing to th e fact that DNN and BRNN use a fixed n umber of matrix pro ductions and nonlinear th resholding , while both OMP an d BOMP in volve computatio nal co mplex matrix inv erse o peration , and bo th IHT and BIHT requ ire a relativ ely large num ber of iterations to conv erge. B. Active User Detection Accuracy In this experim ent we stud y how the propo sed approa c h perfor ms with different numbers of active user and d ifferent lengths of pilot. In Fig. 4(a), the pilot length of each user is fixed as N s = 40 . It is ob served that the p roposed BRNN achieves the highest activ e user de te c tion success rate among the com pared method s in cluding OMP , BOMP , IHT , BIHT and DNN. Fig. 4 (b) shows the active user detectio n success rate with d ifferent pilot leng th s, where the n umber of active users is set to b e 4 . It is also observed that BRNN outperfo rms other appr o aches in most of the cases. Here, we would like to emph a size that there are various way to furth er improve 0.01 0.02 0.03 0.04 0.05 0.06 n/K 0 0.5 1 1.5 2 MSE DNN BRNN OMP BOMP IHT BIHT (a) MSE vs. n K ( N s = 40 ) 30 35 40 45 50 55 60 Ns 0 0.5 1 1.5 MSE DNN BRNN OMP BOMP IHT BIHT (b) MSE vs. N s ( n = 4 ) Fig. 5. Performance of channel estimate. perfor mance of BRNN and DNN, e.g., using a larger size of training d ata and/or in creasing the numb er of layers in the neural n etwork, wh ile the perfo rmance of OMP , BOMP , IHT and BIHT would not improve with more iterations. C. Cha nnel Estimation Accuracy In this experimen t we show h ow do es th e prop o sed ap p roach affect th e chan n el e stima tio n perfo rmance under different number s of acti ve user an d different lengths of pilot. Th e channel is estimated by m in imum m ean squa re erro r estimator with the r e sult of active user detec t. In Fig. 5(a), the pilot length of each u ser is fixed as N s = 40 . It is o bserved that the pr oposed BRNN ach iev es the smallest mean squ a re error (MSE) amon g the comp a red methods including OMP , BOMP , IHT , BIHT and DNN. Fig. 5(b ) shows the MSE of channel estimation with different pilot len gths, whe r e the number of active users is set to be 4 . It is o bserved that BRNN outperf orms all the oth er appr oaches. V . C O N C L U S I O N In this pap er , we propose a novel de ep neural network, called BRNN, for m u ltiuser detection in m assi ve MTC com - munication with with sporad ically tr ansmitted small p a ckets and a lo w data rate. A new bloc k activ ation layer is pr oposed in BRNN to capture the block sparse structur e in the multiu ser detection p roblem. In comparison with existing appr oaches, significant red uction of compu tin g time and imp r ovement of multiuser detection accu racy are ac h iev ed by the p roposed approa c h . R E F E R E N C E S [1] C. Bock elmann, N. Pratas, H. Nik opour , K. Au, T . Sve nsson, C. Ste- fano vic, P . Popovski, and A. Dek orsy , “Massi ve machine- type communi- catio ns in 5g: physica l and mac-laye r solutions, ” IEE E Communicat ions Magazi ne , vol. 54, no. 9, pp. 59–65, September 2016. [2] S.-Y . Lien, K.-C. Chen, and Y . Lin, “T o ward ubiqu itous m assi ve accesses in 3gpp machine-to-mac hine communic ations, ” IEE E Communications Magazi ne , vol. 49, no. 4, 2011. [3] H. F . Schepker and A. Dek orsy , “Sparse multi-user detect ion for cdma transmission using greedy algo rithms, ” in 2011 8th International Sym- posium on W irele ss Communication Systems , Nov 2011, pp. 291–295. [4] C. Bockel mann, H. F . Schepker , and A. Dekorsy , “Compressi ve sensing based m ulti-use r detection for machine- to-machin e communication, ” T ransactions on Emerging T elecommunic ations T echnol ogie s , vol. 24, no. 4, pp. 389–400, 2013. [5] H. F . Schepke r , C. Bock elmann, and A. Dekorsy , “Exploit ing sparsity in channe l and data estimation for sporadic multi-user communication, ” in ISWCS 2013; The T e nth Internat ional Symposium on W ir eless Com- municati on Systems , Aug 2013, pp. 1–5. 5 [6] D. L. Donoho, Y . Tsaig, I. Drori, and J. L . S tarck, “Sparse solution of underdet ermined systems of linear equa tions by stage wise orthog onal matching pursuit , ” IEEE Tr ansact ions on Information Theory , vol. 58, no. 2, pp. 1094–1121, Feb 2012. [7] Y . LeCun, Y . Bengio, and G. Hinton, “Deep learni ng, ” natur e , vol. 521, no. 7553, p. 436, 2015. [8] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recogni tion, ” in 2016 IEEE Confere nce on Computer V ision and P attern Recogn ition (CVPR) , June 2016, pp. 770–778. [9] G. Hinton, L. D eng, D. Y u, G. E. Dahl, A. r . Mohamed, N. Jaitly , A. Senior , V . V anhoucke, P . N guyen, T . N. Sainath, and B. Kingsbury , “Deep neural netwo rks for acoustic modeling in speech recognit ion: The shared vie ws of four research groups, ” IEEE Signal Proc essing Magazi ne , vol. 29, no. 6, pp. 82–97, Nov 2012. [10] I. Sutske ver , O. V inyals, and Q. V . Le, “Sequence to sequence learn ing with neura l netw orks, ” in Advances in neural i nformation pr ocessing systems , 2014, pp. 3104–3112. [11] K . Hornik, M. Stinchc ombe, and H. White, “Multila yer feedforward netw orks are uni versa l approxima tors, ” Neur al networks , vol. 2, no. 5, pp. 359–366, 1989. [12] Z . W ang, Q. Ling, and T . Huang, “Learning deep l0 encoders, ” in A AAI Confer ence on Artificial Intel lig ence , 2016, pp. 2194–2200 . [13] B. Xin, Y . W ang, W . Gao, D. W ipf, and B. W ang, “Maximal sparsity with deep ne twork s?” in Advance s in Neur al Informati on Proc essing Systems , 2016, pp. 4340–434 8. [14] S . L. Kuk reja, J. L ¨ ofber g, and M. J. Brenner , “ A least absolute shrinkage and selec tion operator (lasso) for nonlinear system identific ation, ” IF AC pr oceedings volumes , vol. 39, no. 1, pp. 814–819 , 2006. [15] T . Blumensath and M. E. Davies, “Iterati ve hard thresholding for compressed sensing, ” Applied and computatio nal harmonic analysis , vol. 27, no. 3, pp. 265–274, 2009. [16] K . Gregor and Y . LeCun, “Learning fast approximat ions of sparse coding, ” in Proce edings of the 27th Internati onal Confer ence on In- ternati onal Co nfer ence on Mac hine Learning . Omnipress, 2010, pp. 399–406. [17] S . Ioffe and C. Szegedy , “Batc h normalization : Accel eratin g deep netw ork training by reducing internal cov ariat e shift, ” arXiv prepri nt arXiv:1502.03167 , 2015. [18] Y . Fu, H. Li, Q. Z hang, and J. Zou, “Blo ck-sparse recov ery via redundant block om p, ” Signal Pro cessing , vol. 97, pp. 162–171, 2014. [19] R. Garg and R. Khandekar , “Bloc k-sparse solutions using ker nel block rip and its applica tion to group lasso, ” in Pr oceedi ngs of the F ourte enth Internati onal Confer ence on Artifici al Intellig ence and Statistic s , 2011, pp. 296–304. [20] K . He, X. Zhang, S. Ren, and J. Sun, “Delving deep into rec tifiers: Surpassing human-le vel performance on imagenet classificat ion, ” in Pr oceed ings of the IEEE internati onal confer ence on compute r vision , 2015, pp. 1026–1034.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment