Waveform to Single Sinusoid Regression to Estimate the F0 Contour from Noisy Speech Using Recurrent Deep Neural Networks

The fundamental frequency (F0) represents pitch in speech that determines prosodic characteristics of speech and is needed in various tasks for speech analysis and synthesis. Despite decades of research on this topic, F0 estimation at low signal-to-n…

Authors: Akihiro Kato, Tomi Kinnunen

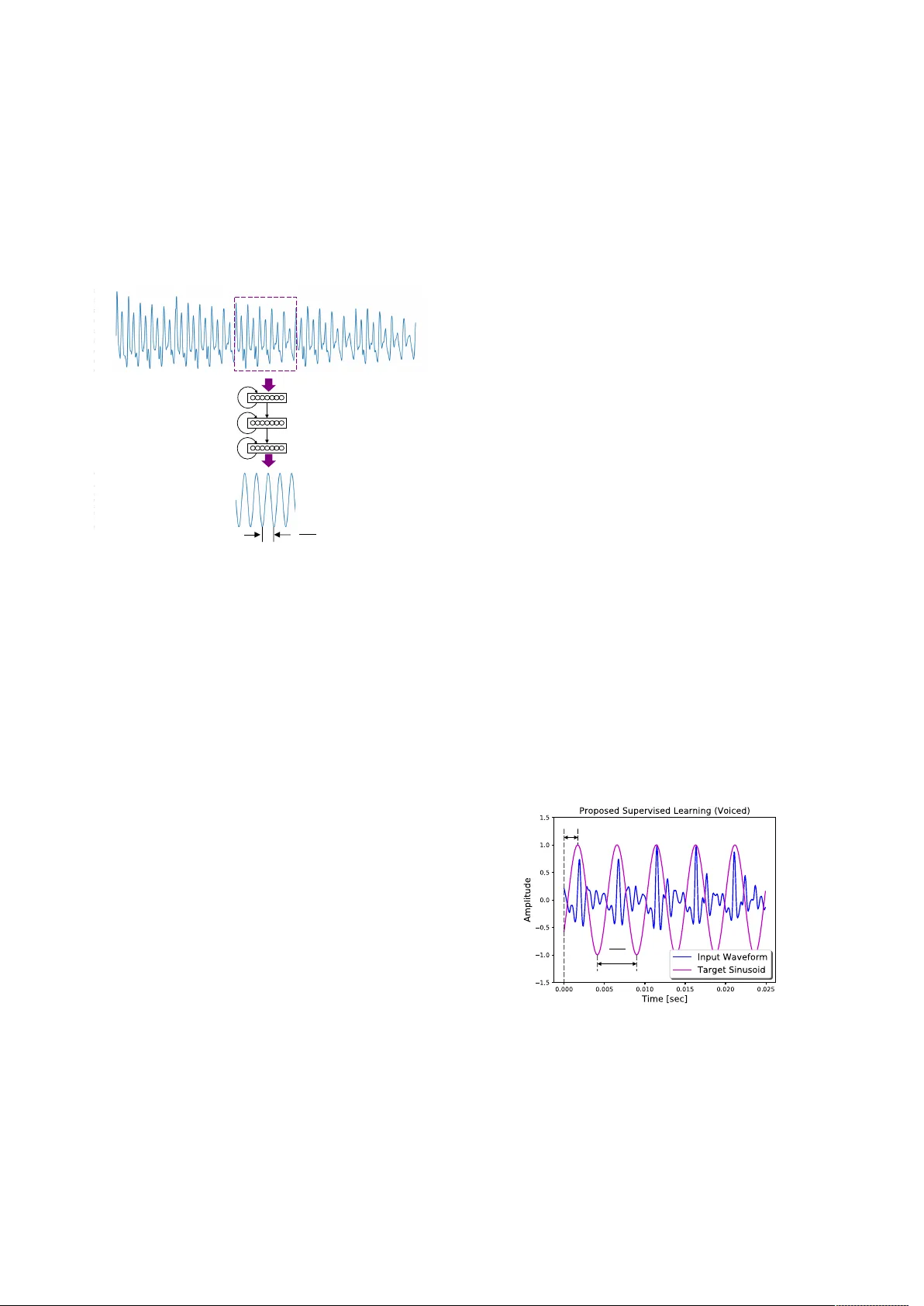

Affiliation: University of Eastern Finland (akihiro.kato@uef.fi, tomi.kinnunen@uef.fi)

Abstract

The fundamental frequency (F0) represents pitch in speech, determines its prosodic characteristics, and is needed in various tasks for speech analysis and synthesis. Despite decades of research on this topic, F0 estimation at low signal-to-noise ratios (SNRs) in unexpected noise conditions remains difficult. This work proposes a new approach to noise-robust F0 estimation using a recurrent neural network (RNN) trained in a supervised manner. Recent studies employ deep neural networks (DNNs) for F0 tracking as a frame-by-frame classification task into quantised frequency states, but we propose waveform-to-sinusoid regression instead to achieve both noise robustness and accurate estimation with increased frequency resolution. Experimental results with the PTDB-TUG corpus contaminated by additive noise (NOISEX-92) demonstrate that the proposed method improves the gross pitch error (GPE) rate and the fine pitch error (FPE) by more than 35% at SNRs between -10 dB and +10 dB compared with a well-known noise-robust F0 tracker, PEFAC. Furthermore, the proposed method also outperforms state-of-the-art DNN-based approaches by more than 15% in terms of both FPE and GPE rate over the same SNR range.

Index Terms: F0 estimation, pitch estimation, prosody analysis, voice activity detection, recurrent neural networks

1. Introduction

Fundamental frequency (F0) is the lowest frequency in a quasi-periodic signal. It represents pitch in speech, which determines the prosodic characteristics of speech. Therefore, F0 is one of the key features of speech, and F0 estimation is vital for many applications, e.g.
voice conversion [1], speaker and language identification [2, 3], prosody analysis [4], speech coding [5], speech synthesis [6] and speech enhancement [7, 8].

Over the past decades, various approaches to F0 estimation have been proposed. Specifically, the robust algorithm for pitch tracking (RAPT) [9] and YIN [10], which track F0 from time-domain signals, have been widely used in many applications and show high accuracy [11]. These methods, however, do not attain satisfactory performance under noisy conditions [12]. Thus, several more noise-robust methods have been proposed. For instance, the pitch estimation filter with amplitude compression (PEFAC) [13] tends to outperform both RAPT and YIN in terms of noise robustness. It analyses noisy signals in the log-frequency domain with a matched filter and normalisation by the universal long-term average speech spectrum. Nonetheless, it remains challenging to obtain satisfactory estimates of F0 at low signal-to-noise ratios (SNRs) such as 0 dB and below.

In addition to such real-time digital signal processing (DSP) methods, various machine learning approaches using, for example, Gaussian mixture models (GMMs) and hidden Markov models (HMMs) [14, 15] have been developed for noise-robust F0 estimation. Furthermore, recent research has successfully applied deep neural networks (DNNs) and their variants, e.g. convolutional neural networks (CNNs) and recurrent neural networks (RNNs), to improve F0 estimation in severe noise conditions [6, 16, 17]. DNNs derive discriminative models that can represent arbitrarily complex mapping functions as long as they comprise a sufficient number of units in their hidden layers. Consequently, they enable statistical models to deal with higher-dimensional input features having stronger correlation than the preceding approaches.
Recently, another technical trend in acoustic modelling has emerged following the remarkable achievement of WaveNet [18], which analyses time-domain waveforms directly instead of extracting spectral or cepstral features from speech. This has contributed not only to advances in speech synthesis but also to end-to-end modelling for various speech applications that do not require traditional Fourier analysis [18]. Direct analysis of waveforms is also beneficial for speech denoising, which usually combines noisy phase spectra with enhanced magnitude spectra to reconstruct clean speech [19].

In fact, the latest research has applied direct time-domain waveform analysis to F0 estimation with DNN-based [20] and CNN-based [21] approaches, showing improved noise robustness over both conventional real-time signal processing and recent DNN-based spectral analysis. These state-of-the-art time-domain F0 estimators, however, still have a problem to be solved: they employ DNNs or CNNs to form a frame-by-frame classification model that decides a state corresponding to a quantised frequency. Even if it is convenient to treat F0 tracking as a classification task in the same manner as the alignment of senones in speech recognition, the resultant estimates of F0 contours have a limited frequency resolution determined by the number of quantised frequency states. This is a potential drawback in terms of the estimation accuracy of F0.

This work is an extension of our recent preliminary study [22]. In that study, we successfully employed an RNN regression model, which maps a spectral sequence directly onto F0 values, to tackle the disadvantage of the existing classification approaches mentioned above. Relative to that preliminary study, the present paper makes the following four major changes. First, we employ direct waveform inputs instead of a spectral sequence.
Second, we propose a novel encoding method for the F0 information using a simple sinusoid oscillating at the ground-truth value of F0. This encoding enables our model to map raw speech waveforms to raw sinusoids without requiring either pre-processing or post-processing. Next, we extend our experiments with very recent competitive methods which are also based on waveform input schemes [20, 21]. Finally, we augment the noise conditions for the experiments in order to examine noise robustness against a wider variety of noise types. Consequently, the known noise condition is increased from six noise types to eight, while the unknown noise condition is augmented from two types to four.

2. Methodology

In the proposed method of F0 estimation, a discrete time-domain speech waveform, $x(n)$, is used as the input to an RNN. For voiced input speech, the posteriors of the RNN are mapped onto a single sinusoid oscillating at the F0 of the input waveform as a regression task. For unvoiced and no-voice inputs, the RNN performs an identity mapping. The F0 value is then explicitly inferred from the resultant single sinusoid using its autocorrelation. Figure 1 illustrates the proposed framework.
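The target encoding described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function names `sinusoid_target` and `regression_target` are hypothetical, the unit amplitude of the sinusoid is assumed, and the phase is found by a simple grid search over `n_phases` candidates (the paper states in Section 2.2 that the phase maximises the cross-correlation with the input frame, but does not say how it is searched).

```python
import numpy as np


def sinusoid_target(frame, f0, fs=16000, n_phases=64):
    """Return cos(2*pi*f0*m/fs + phase) for the phase that best matches `frame`.

    Assumption: the phase is picked from a uniform grid of `n_phases` values
    by maximising the zero-lag cross-correlation with the input frame.
    """
    frame = np.asarray(frame, dtype=float)
    m = np.arange(len(frame))
    phases = np.linspace(0.0, 2.0 * np.pi, n_phases, endpoint=False)
    # One candidate sinusoid per row, shape (n_phases, M).
    candidates = np.cos(2.0 * np.pi * f0 * m / fs + phases[:, None])
    # Zero-lag cross-correlation of every candidate with the frame.
    scores = candidates @ frame
    return candidates[np.argmax(scores)]


def regression_target(frame, f0, voiced, fs=16000):
    """Sinusoid at the ground-truth F0 for voiced frames, identity otherwise."""
    frame = np.asarray(frame, dtype=float)
    if voiced:
        return sinusoid_target(frame, f0, fs)
    return frame.copy()
```

For a voiced frame the network is then trained to reproduce the returned sinusoid, and for any other frame to reproduce its own input.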
[Figure 1 (diagram). Recoverable labels: input $x(n)$; input frame $\mathbf{x}_i = [x_0, x_1, \ldots, x_{M-1}]^\top$; block "RNN-based Regression"; output frame $\mathbf{y}_i = [y_0, y_1, \ldots, y_{M-1}]^\top$; period $1/\hat{f}_{0i}$.]

Figure 1: A voiced speech waveform is directly input to an RNN in order to perform waveform-to-sinusoid regression. The estimate of F0 is then inferred from the resultant sinusoid.

2.1. Waveform-to-sinusoid regression using an RNN

$x(n)$ is first divided into $I$ frames, $\mathbf{x}_0, \mathbf{x}_1, \ldots, \mathbf{x}_{I-1}$,

$$\mathbf{x}_i = [x(Mi), x(Mi+1), \ldots, x(M(i+1)-1)]^\top, \tag{1}$$

where $M$ denotes the number of samples in a frame.

Units in RNN layers have connections from their outputs back to their own inputs in addition to the feedforward connections. Therefore, an RNN layer receives its own output at the previous time sequence as well as the current time sequence input from the previous layer. This behaviour of RNN layers, interpreted as memory cells [23], is well suited to analysing the temporal dynamics of speech. Thus, F0 at the $i$-th frame, $f_{0i}$, is analysed with the neighbouring $2p$ frames, i.e. $i-p$ to $i+p$, sequence by sequence. Since the RNN-based discriminative model in the proposed method takes the form of a sequence-to-sequence structure, the output of RNN layer $l$ at time sequence $n$ ($n = 0, 1, \ldots, 2p$), $\boldsymbol{\theta}^l_n$, is derived as follows with respect to the input instance $\mathbf{x}_i$:
$$\boldsymbol{\theta}^l_n = g\left(\mathbf{W}^l \boldsymbol{\phi}^l_n + \mathbf{H}^l \boldsymbol{\phi}^{l+1}_{n-1}\right) \tag{2}$$

$$\boldsymbol{\phi}^l_n = \left[1, (\boldsymbol{\theta}^{l-1}_n)^\top\right]^\top \tag{3}$$

$$\boldsymbol{\theta}^0_n = \left[1, (\mathbf{x}_{i-p+n})^\top\right]^\top, \tag{4}$$

where $g(\cdot)$ represents an activation function and $\mathbf{W}^l$ is the weight matrix from the output of layer $l-1$ to the input of layer $l$ (feedforward), while $\mathbf{H}^l$ denotes the weight matrix from the output of layer $l$ to the input of layer $l$ (feedback). Here, $\mathbf{W}^l$ and $\mathbf{H}^l$ are represented as

$$\mathbf{W}^l = \begin{bmatrix} w^l_{10} & w^l_{11} & \cdots & w^l_{1 q_{l-1}} \\ w^l_{20} & w^l_{21} & \cdots & w^l_{2 q_{l-1}} \\ \vdots & \vdots & \ddots & \vdots \\ w^l_{q_l 0} & w^l_{q_l 1} & \cdots & w^l_{q_l q_{l-1}} \end{bmatrix} \tag{5}$$

$$\mathbf{H}^l = \begin{bmatrix} h^l_{10} & h^l_{11} & \cdots & h^l_{1 q_l} \\ h^l_{20} & h^l_{21} & \cdots & h^l_{2 q_l} \\ \vdots & \vdots & \ddots & \vdots \\ h^l_{q_l 0} & h^l_{q_l 1} & \cdots & h^l_{q_l q_l} \end{bmatrix}, \tag{6}$$

where $q_l$ is the number of units (excluding the bias unit) in the $l$-th layer. Furthermore, $w^l_{jk}$ is the feedforward weight between unit $k$ in the $(l-1)$-th layer and unit $j$ in the $l$-th layer, while $h^l_{jk}$ is the feedback weight between the output of unit $k$ and the input of unit $j$ in the $l$-th layer.

In order to achieve the RNN-based regression to a single sinusoid, the output layer is activated by the identity function, unlike a classification model, which applies the softmax function for the activation. Consequently, the posteriors of the RNN at the $i$-th frame (i.e. $n = p$), $\mathbf{y}_i$, are derived as

$$\mathbf{y}_i = I\left(\mathbf{W}^L \boldsymbol{\phi}^L_p + \mathbf{H}^L \boldsymbol{\phi}^{L+1}_{p-1}\right) \tag{7}$$

$$\boldsymbol{\phi}^{L+1}_p = \left[1, \mathbf{y}_{p-1}^\top\right]^\top, \tag{8}$$

where $I(\cdot)$ and $L$ denote the identity function and the number of RNN layers respectively.

2.2. Model training and F0 estimation

In the offline training process, $\mathbf{W}^l$ and $\mathbf{H}^l$ are optimised in advance by supervised learning. During voiced speech periods, training is achieved by minimising the mean square error (MSE) between $\mathbf{y}_i$ and its target sinusoid. The sinusoid is oscillated with $f_{0i}$ and $\varphi_i$, which are the ground truth of F0 at frame $i$ and the phase that maximises the cross-correlation between $\mathbf{x}_i$ and $\cos(2\pi f_{0i} m / f_s)$. Here, $f_s$ is the sampling frequency and $m = 0, 1, \ldots, M-1$, as illustrated in Figure 2. For unvoiced and no-voice periods, the target of supervised learning is set to the input waveform itself, i.e. identity mapping. The weight optimisation during this training is accomplished with mini-batch gradient descent with the backpropagation algorithm [24].

[Figure 2 (plot). Recoverable labels: input frame $\mathbf{x}_i$, phase $\varphi_i$, period $1/f_{0i}$.]

Figure 2: The target sinusoid for supervised training is oscillated with the ground truth of F0 at frame $i$ ($f_{0i}$).

Finally, the estimate of F0 at the $i$-th frame, including voiced, unvoiced and no-voice frames, $\hat{f}_{0i}$, is inferred from $\mathbf{y}_i$ by maximising its autocorrelation. Since unvoiced and no-voice frames are transformed with identity mapping, those frames can be effectively detected if the maximum of the cross-correlation between $\mathbf{y}_i$ and $\cos(2\pi \hat{f}_{0i} m / f_s)$ is lower than a threshold, $\lambda$. In other words, voiced frames are easily distinguished if the shape of $\mathbf{y}_i$ is close to a sinusoid; otherwise the frame is unvoiced or no-voice. $\lambda$ is empirically selected as 0.15 by a preliminary cross-validation test. Figure 3 (a) demonstrates the relation between the input voiced waveform, $\mathbf{x}_i$, and the sinusoid obtained by the proposed RNN model, $\mathbf{y}_i$, at different SNRs in white noise. Figure 3 (b) plots their magnitude spectra to illustrate how $\mathbf{y}_i$ is suitable for F0 analysis compared with $\mathbf{x}_i$.
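The inference step above (autocorrelation peak, then a cross-correlation threshold for voicing) might be sketched as follows. Several details here are assumptions rather than the authors' procedure: the function name `estimate_f0`, the search range `f_min`/`f_max`, the per-lag normalisation of the autocorrelation, the tie-breaking towards the shortest lag, and the normalisation of the cross-correlation so that it can be compared with $\lambda = 0.15$. The F0 resolution of this sketch is limited to integer lags.

```python
import numpy as np


def estimate_f0(y, fs=16000, f_min=50.0, f_max=500.0, lam=0.15):
    """Estimate F0 of one RNN output frame and decide whether it is voiced.

    Returns (f0_hat in Hz, voiced flag, voicing score).
    """
    y = np.asarray(y, dtype=float)
    M = len(y)
    # Candidate pitch periods in samples (hypothetical search range).
    lags = np.arange(int(fs / f_max), min(int(fs / f_min), M - 2) + 1)

    def norm_corr(a, b):
        denom = np.linalg.norm(a) * np.linalg.norm(b)
        return float(a @ b / denom) if denom > 0 else 0.0

    # Normalised autocorrelation at each candidate lag.
    r = np.array([norm_corr(y[:-k], y[k:]) for k in lags])
    # Among (near-)ties, take the shortest lag to avoid a multiple of the period.
    best_lag = int(lags[np.flatnonzero(r >= r.max() - 1e-9)[0]])
    f0_hat = fs / best_lag

    # Voicing score: peak of the normalised cross-correlation between the
    # frame and a cosine at the estimated F0, taken over all lags.
    ref = np.cos(2.0 * np.pi * f0_hat * np.arange(M) / fs)
    denom = np.linalg.norm(y) * np.linalg.norm(ref)
    if denom > 0:
        score = float(np.max(np.correlate(y, ref, mode="full")) / denom)
    else:
        score = 0.0
    return f0_hat, score >= lam, score
```

A frame that the network has turned into a clean sinusoid scores close to 1, whereas a frame passed through by the identity mapping (for example a short click) scores well below the threshold and is labelled unvoiced.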
0 0.005 0.01 0.015 0.02 0.025 Time [sec] Amplitude 0 200 400 600 800 1000 Frequency [Hz] Log Magnitude x i : AAAB8HicbVBNS8NAEJ3Ur1q/qh69LBbBg5REBD9OBS8eK1hbbEPZbCft0s0m7G7EEvovvHhQ8erP8ea/cdvmoK0PBh7vzTAzL0gE18Z1v53C0vLK6lpxvbSxubW9U97du9dxqhg2WCxi1QqoRsElNgw3AluJQhoFApvB8HriNx9RaR7LOzNK0I9oX/KQM2qs9JB1gpA8dfn4qluuuFV3CrJIvJxUIEe9W/7q9GKWRigNE1Trtucmxs+oMpwJHJc6qcaEsiHtY9tSSSPUfja9eEyOrNIjYaxsSUOm6u+JjEZaj6LAdkbUDPS8NxH/89qpCS/8jMskNSjZbFGYCmJiMnmf9LhCZsTIEsoUt7cSNqCKMmNDKtkQvPmXF0njtHpZ9W7PKrWTPI0iHMAhHIMH51CDG6hDAxhIeIZXeHO08+K8Ox+z1oKTz+zDHzifP5KJkFI= AAAB8HicbVBNS8NAEJ3Ur1q/qh69LBbBg5REBD9OBS8eK1hbbEPZbCft0s0m7G7EEvovvHhQ8erP8ea/cdvmoK0PBh7vzTAzL0gE18Z1v53C0vLK6lpxvbSxubW9U97du9dxqhg2WCxi1QqoRsElNgw3AluJQhoFApvB8HriNx9RaR7LOzNK0I9oX/KQM2qs9JB1gpA8dfn4qluuuFV3CrJIvJxUIEe9W/7q9GKWRigNE1Trtucmxs+oMpwJHJc6qcaEsiHtY9tSSSPUfja9eEyOrNIjYaxsSUOm6u+JjEZaj6LAdkbUDPS8NxH/89qpCS/8jMskNSjZbFGYCmJiMnmf9LhCZsTIEsoUt7cSNqCKMmNDKtkQvPmXF0njtHpZ9W7PKrWTPI0iHMAhHIMH51CDG6hDAxhIeIZXeHO08+K8Ox+z1oKTz+zDHzifP5KJkFI= AAAB8HicbVBNS8NAEJ3Ur1q/qh69LBbBg5REBD9OBS8eK1hbbEPZbCft0s0m7G7EEvovvHhQ8erP8ea/cdvmoK0PBh7vzTAzL0gE18Z1v53C0vLK6lpxvbSxubW9U97du9dxqhg2WCxi1QqoRsElNgw3AluJQhoFApvB8HriNx9RaR7LOzNK0I9oX/KQM2qs9JB1gpA8dfn4qluuuFV3CrJIvJxUIEe9W/7q9GKWRigNE1Trtucmxs+oMpwJHJc6qcaEsiHtY9tSSSPUfja9eEyOrNIjYaxsSUOm6u+JjEZaj6LAdkbUDPS8NxH/89qpCS/8jMskNSjZbFGYCmJiMnmf9LhCZsTIEsoUt7cSNqCKMmNDKtkQvPmXF0njtHpZ9W7PKrWTPI0iHMAhHIMH51CDG6hDAxhIeIZXeHO08+K8Ox+z1oKTz+zDHzifP5KJkFI= AAAB8HicbVBNS8NAEJ3Ur1q/qh69LBbBg5REBD9OBS8eK1hbbEPZbCft0s0m7G7EEvovvHhQ8erP8ea/cdvmoK0PBh7vzTAzL0gE18Z1v53C0vLK6lpxvbSxubW9U97du9dxqhg2WCxi1QqoRsElNgw3AluJQhoFApvB8HriNx9RaR7LOzNK0I9oX/KQM2qs9JB1gpA8dfn4qluuuFV3CrJIvJxUIEe9W/7q9GKWRigNE1Trtucmxs+oMpwJHJc6qcaEsiHtY9tSSSPUfja9eEyOrNIjYaxsSUOm6u+JjEZaj6LAdkbUDPS8NxH/89qpCS/8jMskNSjZbFGYCmJiMnmf9LhCZsTIEsoUt7cSNqCKMmNDKtkQvPmXF0njtHpZ9W7PKrWTPI0iHMAhHIMH51CDG6hDAxhIeIZXeHO08+K8Ox+z1oKTz+zDHzifP5KJkFI= y i : 
AAAB8HicbVBNS8NAEJ34WetX1aOXxSJ4kJKI4Mep4MVjBWOLbSib7aRdutmE3Y1QQv+FFw8qXv053vw3btsctPXBwOO9GWbmhang2rjut7O0vLK6tl7aKG9ube/sVvb2H3SSKYY+S0SiWiHVKLhE33AjsJUqpHEosBkObyZ+8wmV5om8N6MUg5j2JY84o8ZKj3knjMioy8fX3UrVrblTkEXiFaQKBRrdylenl7AsRmmYoFq3PTc1QU6V4UzguNzJNKaUDWkf25ZKGqMO8unFY3JslR6JEmVLGjJVf0/kNNZ6FIe2M6ZmoOe9ifif185MdBnkXKaZQclmi6JMEJOQyfukxxUyI0aWUKa4vZWwAVWUGRtS2Ybgzb+8SPyz2lXNuzuv1k+LNEpwCEdwAh5cQB1uoQE+MJDwDK/w5mjnxXl3PmatS04xcwB/4Hz+AJQQkFM= AAAB8HicbVBNS8NAEJ34WetX1aOXxSJ4kJKI4Mep4MVjBWOLbSib7aRdutmE3Y1QQv+FFw8qXv053vw3btsctPXBwOO9GWbmhang2rjut7O0vLK6tl7aKG9ube/sVvb2H3SSKYY+S0SiWiHVKLhE33AjsJUqpHEosBkObyZ+8wmV5om8N6MUg5j2JY84o8ZKj3knjMioy8fX3UrVrblTkEXiFaQKBRrdylenl7AsRmmYoFq3PTc1QU6V4UzguNzJNKaUDWkf25ZKGqMO8unFY3JslR6JEmVLGjJVf0/kNNZ6FIe2M6ZmoOe9ifif185MdBnkXKaZQclmi6JMEJOQyfukxxUyI0aWUKa4vZWwAVWUGRtS2Ybgzb+8SPyz2lXNuzuv1k+LNEpwCEdwAh5cQB1uoQE+MJDwDK/w5mjnxXl3PmatS04xcwB/4Hz+AJQQkFM= AAAB8HicbVBNS8NAEJ34WetX1aOXxSJ4kJKI4Mep4MVjBWOLbSib7aRdutmE3Y1QQv+FFw8qXv053vw3btsctPXBwOO9GWbmhang2rjut7O0vLK6tl7aKG9ube/sVvb2H3SSKYY+S0SiWiHVKLhE33AjsJUqpHEosBkObyZ+8wmV5om8N6MUg5j2JY84o8ZKj3knjMioy8fX3UrVrblTkEXiFaQKBRrdylenl7AsRmmYoFq3PTc1QU6V4UzguNzJNKaUDWkf25ZKGqMO8unFY3JslR6JEmVLGjJVf0/kNNZ6FIe2M6ZmoOe9ifif185MdBnkXKaZQclmi6JMEJOQyfukxxUyI0aWUKa4vZWwAVWUGRtS2Ybgzb+8SPyz2lXNuzuv1k+LNEpwCEdwAh5cQB1uoQE+MJDwDK/w5mjnxXl3PmatS04xcwB/4Hz+AJQQkFM= AAAB8HicbVBNS8NAEJ34WetX1aOXxSJ4kJKI4Mep4MVjBWOLbSib7aRdutmE3Y1QQv+FFw8qXv053vw3btsctPXBwOO9GWbmhang2rjut7O0vLK6tl7aKG9ube/sVvb2H3SSKYY+S0SiWiHVKLhE33AjsJUqpHEosBkObyZ+8wmV5om8N6MUg5j2JY84o8ZKj3knjMioy8fX3UrVrblTkEXiFaQKBRrdylenl7AsRmmYoFq3PTc1QU6V4UzguNzJNKaUDWkf25ZKGqMO8unFY3JslR6JEmVLGjJVf0/kNNZ6FIe2M6ZmoOe9ifif185MdBnkXKaZQclmi6JMEJOQyfukxxUyI0aWUKa4vZWwAVWUGRtS2Ybgzb+8SPyz2lXNuzuv1k+LNEpwCEdwAh5cQB1uoQE+MJDwDK/w5mjnxXl3PmatS04xcwB/4Hz+AJQQkFM= Clean Clean +10dB +10dB - 10dB - 10dB ( a ) W aveforms of x i and y i 
AAACIXicbZBNSwMxEIazflu/qh69BIugHsquCNpbwYtHBWsLbSmz6awGs9klmRXL0t/ixb/ixYOKN/HPmNYV1DoQeHneGTLzhqmSlnz/3Zuanpmdm19YLC0tr6yuldc3Lm2SGYENkajEtEKwqKTGBklS2EoNQhwqbIY3JyO/eYvGykRf0CDFbgxXWkZSADnUK9d2Ya9DeEc5b8ItRomJLU8iPuR5J4z43bAneeGD7n/jgcO9csWv+uPikyIoRIUVddYrv3X6ichi1CQUWNsO/JS6ORiSQuGw1MkspiBu4ArbTmqI0Xbz8YlDvuNIn7v13NPEx/TnRA6xtYM4dJ0x0LX9643gf147o+i4m0udZoRafH0UZYpTwkd58b40KEgNnABhpNuVi2swIMilWnIhBH9PnhSNg2qtGpwfVur7RRoLbItts10WsCNWZ6fsjDWYYPfskT2zF+/Be/Jevbev1imvmNlkv8r7+ASenqNA AAACIXicbZBNSwMxEIazflu/qh69BIugHsquCNpbwYtHBWsLbSmz6awGs9klmRXL0t/ixb/ixYOKN/HPmNYV1DoQeHneGTLzhqmSlnz/3Zuanpmdm19YLC0tr6yuldc3Lm2SGYENkajEtEKwqKTGBklS2EoNQhwqbIY3JyO/eYvGykRf0CDFbgxXWkZSADnUK9d2Ya9DeEc5b8ItRomJLU8iPuR5J4z43bAneeGD7n/jgcO9csWv+uPikyIoRIUVddYrv3X6ichi1CQUWNsO/JS6ORiSQuGw1MkspiBu4ArbTmqI0Xbz8YlDvuNIn7v13NPEx/TnRA6xtYM4dJ0x0LX9643gf147o+i4m0udZoRafH0UZYpTwkd58b40KEgNnABhpNuVi2swIMilWnIhBH9PnhSNg2qtGpwfVur7RRoLbItts10WsCNWZ6fsjDWYYPfskT2zF+/Be/Jevbev1imvmNlkv8r7+ASenqNA AAACIXicbZBNSwMxEIazflu/qh69BIugHsquCNpbwYtHBWsLbSmz6awGs9klmRXL0t/ixb/ixYOKN/HPmNYV1DoQeHneGTLzhqmSlnz/3Zuanpmdm19YLC0tr6yuldc3Lm2SGYENkajEtEKwqKTGBklS2EoNQhwqbIY3JyO/eYvGykRf0CDFbgxXWkZSADnUK9d2Ya9DeEc5b8ItRomJLU8iPuR5J4z43bAneeGD7n/jgcO9csWv+uPikyIoRIUVddYrv3X6ichi1CQUWNsO/JS6ORiSQuGw1MkspiBu4ArbTmqI0Xbz8YlDvuNIn7v13NPEx/TnRA6xtYM4dJ0x0LX9643gf147o+i4m0udZoRafH0UZYpTwkd58b40KEgNnABhpNuVi2swIMilWnIhBH9PnhSNg2qtGpwfVur7RRoLbItts10WsCNWZ6fsjDWYYPfskT2zF+/Be/Jevbev1imvmNlkv8r7+ASenqNA AAACIXicbZBNSwMxEIazflu/qh69BIugHsquCNpbwYtHBWsLbSmz6awGs9klmRXL0t/ixb/ixYOKN/HPmNYV1DoQeHneGTLzhqmSlnz/3Zuanpmdm19YLC0tr6yuldc3Lm2SGYENkajEtEKwqKTGBklS2EoNQhwqbIY3JyO/eYvGykRf0CDFbgxXWkZSADnUK9d2Ya9DeEc5b8ItRomJLU8iPuR5J4z43bAneeGD7n/jgcO9csWv+uPikyIoRIUVddYrv3X6ichi1CQUWNsO/JS6ORiSQuGw1MkspiBu4ArbTmqI0Xbz8YlDvuNIn7v13NPEx/TnRA6xtYM4dJ0x0LX9643gf147o+i4m0udZoRafH0UZYpTwkd58b40KEgNnABhpNuVi2swIMilWnIhBH9PnhSNg2qtGpwfVur7RRoLbItts10WsCNWZ6fsjDWYYPfskT2zF+/Be/Jevbev1imvmNlkv8r7+ASenqNA ( b ) Spectra of x i and y i 
AAACH3icbZDNSgMxFIUz9a/Wv6pLN8EiVBdlRoTqruDGZUVrC20pmUymDc1khuSOtAzzKG58FTcuVMRd38a0HUFbLwQO37mXm3vcSHANtj2xciura+sb+c3C1vbO7l5x/+BBh7GirEFDEaqWSzQTXLIGcBCsFSlGAlewpju8nvrNR6Y0D+U9jCPWDUhfcp9TAgb1itWye9oBNoIE30WMgiI49HGKk47r41Ha4zhzifR+8NjgXrFkV+xZ4WXhZKKEsqr3il8dL6RxwCRQQbRuO3YE3YQo4FSwtNCJNYsIHZI+axspScB0N5kdmOITQzzsh8o8CXhGf08kJNB6HLimMyAw0IveFP7ntWPwL7sJl1EMTNL5Ij8WGEI8TQt7XJlQxNgIQhU3f8V0QBShYDItmBCcxZOXReO8clVxbi9KtbMsjTw6QseojBxURTV0g+qogSh6Qi/oDb1bz9ar9WF9zltzVjZziP6UNfkGxz2iRQ== AAACH3icbZDNSgMxFIUz9a/Wv6pLN8EiVBdlRoTqruDGZUVrC20pmUymDc1khuSOtAzzKG58FTcuVMRd38a0HUFbLwQO37mXm3vcSHANtj2xciura+sb+c3C1vbO7l5x/+BBh7GirEFDEaqWSzQTXLIGcBCsFSlGAlewpju8nvrNR6Y0D+U9jCPWDUhfcp9TAgb1itWye9oBNoIE30WMgiI49HGKk47r41Ha4zhzifR+8NjgXrFkV+xZ4WXhZKKEsqr3il8dL6RxwCRQQbRuO3YE3YQo4FSwtNCJNYsIHZI+axspScB0N5kdmOITQzzsh8o8CXhGf08kJNB6HLimMyAw0IveFP7ntWPwL7sJl1EMTNL5Ij8WGEI8TQt7XJlQxNgIQhU3f8V0QBShYDItmBCcxZOXReO8clVxbi9KtbMsjTw6QseojBxURTV0g+qogSh6Qi/oDb1bz9ar9WF9zltzVjZziP6UNfkGxz2iRQ== AAACH3icbZDNSgMxFIUz9a/Wv6pLN8EiVBdlRoTqruDGZUVrC20pmUymDc1khuSOtAzzKG58FTcuVMRd38a0HUFbLwQO37mXm3vcSHANtj2xciura+sb+c3C1vbO7l5x/+BBh7GirEFDEaqWSzQTXLIGcBCsFSlGAlewpju8nvrNR6Y0D+U9jCPWDUhfcp9TAgb1itWye9oBNoIE30WMgiI49HGKk47r41Ha4zhzifR+8NjgXrFkV+xZ4WXhZKKEsqr3il8dL6RxwCRQQbRuO3YE3YQo4FSwtNCJNYsIHZI+axspScB0N5kdmOITQzzsh8o8CXhGf08kJNB6HLimMyAw0IveFP7ntWPwL7sJl1EMTNL5Ij8WGEI8TQt7XJlQxNgIQhU3f8V0QBShYDItmBCcxZOXReO8clVxbi9KtbMsjTw6QseojBxURTV0g+qogSh6Qi/oDb1bz9ar9WF9zltzVjZziP6UNfkGxz2iRQ== AAACH3icbZDNSgMxFIUz9a/Wv6pLN8EiVBdlRoTqruDGZUVrC20pmUymDc1khuSOtAzzKG58FTcuVMRd38a0HUFbLwQO37mXm3vcSHANtj2xciura+sb+c3C1vbO7l5x/+BBh7GirEFDEaqWSzQTXLIGcBCsFSlGAlewpju8nvrNR6Y0D+U9jCPWDUhfcp9TAgb1itWye9oBNoIE30WMgiI49HGKk47r41Ha4zhzifR+8NjgXrFkV+xZ4WXhZKKEsqr3il8dL6RxwCRQQbRuO3YE3YQo4FSwtNCJNYsIHZI+axspScB0N5kdmOITQzzsh8o8CXhGf08kJNB6HLimMyAw0IveFP7ntWPwL7sJl1EMTNL5Ij8WGEI8TQt7XJlQxNgIQhU3f8V0QBShYDItmBCcxZOXReO8clVxbi9KtbMsjTw6QseojBxURTV0g+qogSh6Qi/oDb1bz9ar9WF9zltzVjZziP6UNfkGxz2iRQ== Figure 3: (a) depicts the 
relation between $x_i$ and $y_i$ in clean and noisy conditions (SNRs of +10 dB and -10 dB in white noise). (b) plots their magnitude spectra. Waveforms and spectra in each plot have been shifted for better visualisation.

The RNN model above can be replaced with more sophisticated recurrent units such as long short-term memory (LSTM) cells [25] or gated recurrent unit (GRU) cells [26] in order to capture longer-term dependencies in signals. We employ LSTM cells for our approach in the following experiments.

3. Experiments

We examine both the accuracy and the noise robustness of the proposed method and compare it with RAPT [9], YIN [10], PEFAC [13] and state-of-the-art DNN-based classification approaches: DNN-CLS(S) [16], DNN-CLS(W) [20] and CREPE [21]. DNN-CLS(S) is based on a DNN classification model from spectral features to quantised frequency states, whereas DNN-CLS(W) uses a DNN classification model from waveforms to quantised frequency states. CREPE is an F0 tracker targeting music signals that uses a CNN-based classification model from waveforms to quantised frequency states.

The performance of these F0 trackers is evaluated in terms of two standard metrics: gross pitch error (GPE) rate and fine pitch error (FPE) [27]. GPE frames are voiced frames in which the error between the estimated pitch period ($1/\hat{f}_0$) and the ground truth ($1/f_0$) is more than 10 samples, i.e. 0.625 ms. FPE frames, in turn, are voiced frames excluding GPE frames. The mean of FPEs, $\mu_{\mathrm{FPE}}$, represents the bias in F0 estimation whereas the standard deviation of FPEs, $\sigma_{\mathrm{FPE}}$, measures the accuracy of estimation [27].

3.1. Datasets (PTDB-TUG corpus + NOISEX-92)

The experiments use speech from the pitch tracking database from Graz University of Technology (PTDB-TUG) [11]. The training set consists of 3200 utterances spoken by 16 speakers (8 males, 8 females), i.e. 200 utterances each.
The cross-validation (CV) set comprises another 576 utterances spoken by the same 16 speakers, i.e. 36 utterances each. The test set contains 944 utterances spoken by 4 speakers (2 males, 2 females) who are not in the training and CV sets (unknown speakers), i.e. 236 utterances each, so that the test condition is speaker independent (SI).

Speech in each dataset is sampled at 16 kHz, and the sampled signals in the training and CV sets are contaminated with eight types of additive noise at five SNR levels: -10, -5, 0, +5 and +10 dB. The noise types are referred to as Babble, Destroyerops, F16, Factory2, Leopard, M109, Machinegun and White in NOISEX-92 [28]. The test set is contaminated with four other types of additive noise, Destroyerengine, Factory1, Pink and Volvo, in addition to the preceding eight types, at the same SNRs as the training and CV sets. The former four types form an unknown noise condition while the latter eight types form a known noise condition. Consequently, the training set amounts to 131,200 utterances (15,252 min), i.e. 3,200 × (8 noise types × 5 levels + 1 clean), and the CV set to 23,616 utterances (2,542 min), while the test set amounts to 57,584 utterances (6,344 min), i.e. 944 × (12 noise types × 5 levels + 1 clean), in total.

PTDB-TUG contains ground truth F0 contours of each utterance, obtained from laryngograph signals recorded in a clean condition to which a Kaiser filter and RAPT were applied. These are used as the ground truth in the following experiments.

3.2. Training and test settings

The speech signals in the datasets are split into 25 ms frames at 5 ms intervals. The first 400 frames and the last 200 frames of each utterance are then removed to reduce non-speech frames.

The hyperparameters of the proposed method are selected empirically by a preliminary cross-validation test.
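The data preparation described in Sections 3.1 and 3.2 (additive noise at a target SNR, then 25 ms frames every 5 ms at 16 kHz) can be sketched as follows. This is a minimal illustration and not the authors' pipeline: the function names `mix_at_snr` and `frame_signal` are hypothetical, and the SNR is assumed to be computed from the mean power over the whole utterance, which the paper does not specify.

```python
import math


def mix_at_snr(speech, noise, snr_db):
    """Add noise to speech so that the mixture has the requested SNR in dB.

    Assumption (not stated in the paper): signal and noise power are the
    mean squared amplitude over the whole utterance.
    """
    if len(noise) < len(speech):
        raise ValueError("noise must be at least as long as speech")
    noise = noise[:len(speech)]
    p_speech = sum(s * s for s in speech) / len(speech)
    p_noise = sum(n * n for n in noise) / len(noise)
    # Gain g such that p_speech / (g^2 * p_noise) = 10^(snr_db / 10).
    gain = math.sqrt(p_speech / (p_noise * 10.0 ** (snr_db / 10.0)))
    return [s + gain * n for s, n in zip(speech, noise)]


def frame_signal(signal, fs=16000, frame_ms=25, hop_ms=5):
    """Split a signal into overlapping frames (25 ms every 5 ms by default)."""
    frame_len = fs * frame_ms // 1000  # 400 samples at 16 kHz
    hop = fs * hop_ms // 1000          # 80 samples at 16 kHz
    last_start = len(signal) - frame_len
    return [signal[i:i + frame_len] for i in range(0, last_start + 1, hop)]


# Dataset sizes quoted in Section 3.1:
# utterances x (noise types x SNR levels + 1 clean copy).
train_size = 3200 * (8 * 5 + 1)   # 131,200
cv_size = 576 * (8 * 5 + 1)       # 23,616
test_size = 944 * (12 * 5 + 1)    # 57,584
```

With these defaults, one second of 16 kHz audio yields 196 frames of 400 samples each. Trimming the first 400 and last 200 frames, as described above, would then be a slice on the returned list.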
The number of hidden layers (LSTM cells) is set to three, with 1024 units each, activated by the tanh function. The mini-batch size is set to 300 frames, and random unit dropout (25 %) and batch normalisation [29] are applied during training. To train on or analyse a frame $x_i$, 15 consecutive frames, i.e. from $x_{i-7}$ to $x_{i+7}$, are used as inputs to the RNN to perform sequence-to-sequence analysis.

Feature extraction and parameter settings of the other methods follow their original papers cited above, except that the posterior frequency states in CREPE are quantised in the same manner as in DNN-CLS(S&W), because the original CREPE targets music signals.

3.3. Results and discussion

Figure 4 (a) illustrates the GPE rates of each method at different SNRs in the multi-noise condition with the known noise types, and (b) shows the GPE rates in the multi-noise condition with the unknown noise types. RNN-REG always shows the best performance in terms of GPE rate. It outperforms the other methods over the SNR range between -10 and +10 dB in both known and unknown noise conditions, giving a GPE rate of around 35 % at -10 dB. DNN-CLS(S&W) also show lower GPE rates than the real-time DSP methods, i.e. RAPT, YIN and PEFAC, but their GPE rates always exceed that of RNN-REG by around 8 or more percentage points in both noise conditions. CREPE is not as robust as the other three neural network-based methods in terms of GPE rate.

GPE frames correspond to failure in F0 estimation at voiced frames [27].
In that sense, F0 estimation with YIN at SNRs below 5 dB, with RAPT below 0 dB, and with CREPE and PEFAC at -5 dB and below is likely to yield unreliable frames accounting for more than 50 % of voiced frames. Conversely, RNN-REG keeps estimation failures to approximately 35 % of voiced frames even at -10 dB in the unknown noise condition, whereas DNN-CLS(S&W) score over 40 % at -10 dB in unknown noise. This demonstrates a substantial advantage of our proposal in F0 estimation from noisy speech.

[Figure 4 panels: (a) GPE Rate in Known Noise and (b) GPE Rate in Unknown Noise, GPE rate [%] against SNR [dB] for RAPT, YIN, PEFAC, DNN-CLS(S), DNN-CLS(W), CREPE and RNN-REG; (c) FPE in Known Noise and (d) FPE in Unknown Noise, standard deviation [Hz] against mean [Hz] for PEFAC, DNN-CLS(S), DNN-CLS(W), CREPE and RNN-REG.]

Figure 4: F0 estimation performance of each method at different SNRs, showing (a) GPE rates in the known noise condition and (b) GPE rates in the unknown noise condition. (c) is a scatter plot of $\mu_{\mathrm{FPE}}$ and $\sigma_{\mathrm{FPE}}$ in the known noise condition, while (d) shows the same in the unknown noise condition.

Figures 4 (c) and (d) illustrate the performance of PEFAC, DNN-CLS(S&W), CREPE and RNN-REG in terms of FPE at SNRs of -10, -5, 0, +5 and +10 dB in the known and unknown noise conditions respectively, as scatter plots of $\mu_{\mathrm{FPE}}$ and $\sigma_{\mathrm{FPE}}$. YIN and RAPT are excluded from this evaluation because they do not yield a sufficient number of frames for FPE analysis in such noisy conditions.

Since $\mu_{\mathrm{FPE}}$ represents the bias in F0 estimation while $\sigma_{\mathrm{FPE}}$ measures the accuracy of the estimation [27], the plots show that RNN-REG performs best in terms of both bias and accuracy over the SNR range between -10 dB and +10 dB in both known and unknown noise conditions.
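The two metrics used throughout this section can be sketched as below. This is a minimal illustration under stated assumptions, not the authors' evaluation code: the function name `pitch_error_metrics` is hypothetical, an F0 value of 0 is assumed to mark an unvoiced frame, a voiced reference frame with no estimate is assumed to count as a gross error, and the fine error is assumed to be the estimate minus the reference in Hz (matching the axes of Figure 4 (c) and (d)).

```python
import math
import statistics


def pitch_error_metrics(f0_ref, f0_est, fs=16000, max_period_err=10):
    """Return (GPE rate in %, mean FPE in Hz, std of FPE in Hz).

    A voiced frame is a gross error when the estimated and reference pitch
    periods differ by more than max_period_err samples (10 samples is
    0.625 ms at 16 kHz). Remaining voiced frames contribute fine errors.
    """
    gross = 0
    fine_errors = []
    n_voiced = 0
    for ref, est in zip(f0_ref, f0_est):
        if ref <= 0:
            continue  # unvoiced in the reference: not evaluated
        n_voiced += 1
        # Assumption: a missing estimate on a voiced frame is a gross error.
        if est <= 0 or abs(fs / est - fs / ref) > max_period_err:
            gross += 1
        else:
            fine_errors.append(est - ref)
    if n_voiced == 0:
        raise ValueError("no voiced frames in the reference")
    gpe_rate = 100.0 * gross / n_voiced
    if not fine_errors:
        return gpe_rate, math.nan, math.nan
    return gpe_rate, statistics.mean(fine_errors), statistics.pstdev(fine_errors)
```

For instance, an estimate of 50 Hz against a 100 Hz reference (an octave error) differs by 160 samples in period and counts towards the GPE rate, whereas 101 Hz against 100 Hz differs by about 1.6 samples and contributes a fine error of +1 Hz.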
Although PEFAC shows strong noise robustness in both accuracy and bias, RNN-REG outperforms it by approximately 35 % on average in both known and unknown noise. RNN-REG is also superior to DNN-CLS(S), DNN-CLS(W) and CREPE by more than 15 %.

In comparing RNN-REG with DNN-CLS(S&W) and CREPE, note that the regression task of mapping waveforms onto the sinusoid encoding F0 is more difficult than the classification task of classifying waveforms or spectral features into quantised frequencies. However, RNN regression can capture temporal dynamics by optimising recurrent weights, unlike the fully connected DNN in DNN-CLS(S), which augments its input with consecutive frames and thereby introduces many poorly correlated connections into the network, e.g. a connection between a unit in a past frame and a unit in a future frame. Consequently, the accuracy of RNN regression exceeds that of classification into quantised frequencies, although the resolution of RNN-REG is also restricted by the sampling period.

Figure 5 illustrates the F0 contours of the spoken word “DARK” estimated by DNN-CLS(W), CREPE and RNN-REG in a clean condition, compared with the ground truth (REF). (a) and (b) show the F0 contours for a female speaker and a male speaker respectively. Utterances of these two speakers are not included in the training set, i.e. they are unknown speakers.

[Figure 5 panels: (a) F0 contour (Female) and (b) F0 contour (Male), frequency against time [sec] for REF, RNN-REG, CREPE and DNN-CLS(W); annotations: 15 Hz shift, 30 Hz shift.]

Figure 5: F0 contours of the word “DARK” spoken by (a) an unknown female speaker and (b) an unknown male speaker. F0 contours in plot (a) are shifted at 10 Hz intervals while the contours in plot (b) are shifted at 5 Hz intervals for better visualisation.

The figures demonstrate the advantage of the proposed method employing the RNN-based waveform-to-sinusoid regression approach over classification approaches using DNNs or CNNs.
Specifically, the F0 contours estimated by RNN-REG are closer to the ground truth than those of the other methods. This also reveals the potential of our proposal (RNN-REG) to track the prosody of different speakers in a speaker-independent manner.

4. Conclusion

We addressed the problem of F0 estimation with waveform-to-sinusoid regression using an RNN in order to obtain accurate F0 estimates with improved noise robustness. The proposed RNN-based approach demonstrates considerable improvement over existing state-of-the-art F0 trackers. Compared to PEFAC, one of the most robust autocorrelation-based F0 trackers, the proposed method yielded a relative improvement exceeding 35 % in both gross pitch error (GPE) rate and fine pitch error (FPE) at SNRs between -10 dB and +10 dB in both known and unknown noise conditions. Furthermore, the proposed method outperformed the latest DNN- and CNN-based F0 trackers by more than 15 %, in terms of relative improvement in both GPE rate and FPE, over the same SNR range.

Comparison of the estimated F0 contours of clean speech also demonstrates an advantage of our proposal over other DNN- and CNN-based approaches in producing more natural F0 trajectories. While the present work focused solely on the F0 tracking problem itself, our future plan involves integrating the proposed method into a downstream application such as voice conversion or prosody-based speaker recognition.

5. Acknowledgement

This work was supported in part by the Academy of Finland (Proj. No. 309629). The authors wish to acknowledge CSC - IT Centre for Science, Finland, for computational resources.

6. References

[1] S. H. Mohammadi and A. Kain, “An overview of voice conversion systems,” Speech Communication, vol. 88, pp. 65–82, 2017.
[2] P. A. Torres-Carrasquillo, F. Richardson, S. Nercessian, D. Sturim, W. Campbell, Y. Gwon, S. Vattam, N. Dehak, H. Mallidi, P. S. Nidadavolu et al.
, “The MIT-LL, JHU and LRDE NIST 2016 speaker recognition evaluation system,” Proceedings of INTERSPEECH, pp. 1333–1337, August 2017.
[3] D. Nandi, D. Pati, and K. S. Rao, “Parametric representation of excitation source information for language identification,” Computer Speech and Language, vol. 41, pp. 88–115, January 2017.
[4] E. Godoy, J. R. Williamson, and T. F. Quatieri, “Canonical correlation analysis and prediction of perceived rhythmic prominences and pitch tones in speech,” Proceedings of INTERSPEECH, pp. 3206–3210, August 2017.
[5] V. Rajendran, A. A. Kandhadai, and V. Krishnan, “Systems, methods, and apparatus for signal encoding using pitch-regularizing and non-pitch-regularizing coding,” US Patent 9,653,088, 2017.
[6] X. Wang, S. Takaki, and J. Yamagishi, “An RNN-based quantized F0 model with multi-tier feedback links for text-to-speech synthesis,” Proceedings of INTERSPEECH, pp. 20–24, August 2017.
[7] A. Kato and B. Milner, “Using hidden Markov models for speech enhancement,” Proceedings of INTERSPEECH, pp. 2695–2699, 2014.
[8] A. Kato and B. Milner, “HMM-based speech enhancement using sub-word models and noise adaptation,” Proceedings of INTERSPEECH, pp. 3748–3752, September 2016.
[9] D. Talkin, “A robust algorithm for pitch tracking (RAPT),” Speech Coding and Synthesis, pp. 495–518, 1995.
[10] A. de Cheveigné and H. Kawahara, “YIN, a fundamental frequency estimator for speech and music,” Journal of the Acoustical Society of America, vol. 111, no. 4, pp. 1917–1930, April 2002.
[11] G. Pirker, M. Wohlmayr, S. Petrik, and F. Pernkopf, “A pitch tracking corpus with evaluation on multipitch tracking scenario,” Proceedings of INTERSPEECH, pp. 1509–1512, 2011.
[12] D. Wang, P. C. Loizou, and J. H. Hansen, “F0 estimation in noisy speech based on long-term harmonic feature analysis combined with neural network classification,” Proceedings of INTERSPEECH, pp. 2258–2262, September 2014.
[13] S. Gonzalez and M.
Brookes, “PEFAC - A pitch estimation algorithm robust to high levels of noise,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 22, no. 2, pp. 518–530, February 2014.
[14] B. Milner and X. Shao, “Prediction of fundamental frequency and voicing from Mel-frequency cepstral coefficients for unconstrained speech reconstruction,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 15, no. 1, pp. 24–33, 2007.
[15] Z. Jin and D. Wang, “HMM-based multipitch tracking for noisy and reverberant speech,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 5, pp. 1091–1102, July 2011.
[16] K. Han and D. Wang, “Neural network based pitch tracking in very noisy speech,” IEEE/ACM Transactions on Audio, Speech and Language Processing, vol. 22, no. 12, pp. 2158–2168, December 2014.
[17] D. Wang, C. Yu, and J. H. L. Hansen, “Robust harmonic features for classification-based pitch estimation,” IEEE/ACM Transactions on Audio, Speech and Language Processing, vol. 25, no. 5, pp. 952–964, May 2017.
[18] A. v. d. Oord, S. Dieleman, H. Zen, K. Simonyan, O. Vinyals, A. Graves, N. Kalchbrenner, A. Senior, and K. Kavukcuoglu, “Wavenet: A generative model for raw audio,” arXiv preprint arXiv:1609.03499, 2016.
[19] D. Rethage, J. Pons, and X. Serra, “A wavenet for speech denoising,” arXiv preprint, 2017.
[20] P. Verma and R. W. Schafer, “Frequency estimation from waveforms using multi-layered neural networks,” Proceedings of INTERSPEECH, pp. 2165–2169, September 2016.
[21] J. W. Kim, J. Salamon, P. Li, and J. P. Bello, “CREPE: A convolutional representation for pitch estimation,” arXiv preprint arXiv:1802.06182, February 2018.
[22] A. Kato and T. Kinnunen, “A regression model of recurrent deep neural networks for noise robust estimation of the fundamental frequency contour of speech,” Proceedings of Odyssey, The Speaker and Language Recognition Workshop, pp.
275–282, June 2018.
[23] S. Grossberg and D. Levine, “Some developmental and attentional biases in the contrast enhancement and short term memory of recurrent neural networks,” Journal of Theoretical Biology, vol. 53, no. 2, pp. 341–380, 1975.
[24] D. E. Rumelhart, G. E. Hinton, and R. J. Williams, “Learning internal representations by error propagation,” 1985.
[25] S. Hochreiter and J. Schmidhuber, “Long short-term memory,” Neural Computation, vol. 9, no. 8, pp. 1735–1780, 1997.
[26] J. Chung, C. Gulcehre, K. Cho, and Y. Bengio, “Empirical evaluation of gated recurrent neural networks on sequence modeling,” arXiv:1412.3555, 2014.
[27] L. Rabiner, M. Cheng, A. Rosenberg, and C. McGonegal, “A comparative performance study of several pitch detection algorithms,” IEEE Transactions on Acoustics, Speech and Signal Processing, vol. 24, no. 5, pp. 399–418, October 1976.
[28] A. Varga and H. J. Steeneken, “Assessment for automatic speech recognition: II. NOISEX-92: A database and an experiment to study the effect of additive noise on speech recognition systems,” Speech Communication, vol. 12, no. 3, pp. 247–251, 1993.
[29] S. Ioffe and C. Szegedy, “Batch normalization: Accelerating deep network training by reducing internal covariate shift,” Proceedings of International Conference on Machine Learning, vol. 37, pp. 448–456, July 2015.
