Enhanced Radar Imaging Using a Complex-valued Convolutional Neural Network

Convolutional neural networks (CNN) have been successfully employed to tackle several remote sensing tasks such as image classification and show better performance than previous techniques. For the radar imaging community, a natural question is: Can CNN be introduced to radar imaging and enhance its performance? The presented letter gives an affirmative answer to this question. We firstly propose a processing framework by which a complex-valued CNN (CV-CNN) is used to enhance radar imaging. Then we introduce two modifications to the CV-CNN to adapt it to radar imaging tasks. Subsequently, the method to generate training data is shown and some implementation details are presented. Finally, simulations and experiments are carried out, and both results show the superiority of the proposed method on imaging quality and computational efficiency.

💡 Research Summary

The paper introduces a novel framework that applies complex‑valued convolutional neural networks (CV‑CNNs) directly to radar imaging, preserving both amplitude and phase information inherent in radar returns. Traditional radar image processing pipelines often convert complex data to magnitude‑only images or treat the real and imaginary parts separately, which discards valuable phase cues and limits reconstruction quality. To overcome this, the authors design a CV‑CNN architecture that operates on complex tensors throughout all layers, including convolution, activation, and pooling.

Two key modifications are proposed to adapt the generic CV‑CNN to the specific challenges of radar imaging. First, a complex batch‑normalization (CBN) layer is introduced, which computes the mean vector and covariance matrix of complex activations and normalizes them using the square‑root of the covariance. This stabilizes training by accounting for the joint statistics of real and imaginary components, something real‑valued batch‑norm cannot achieve. Second, a phase‑correction layer is added. Radar signals suffer from systematic phase distortions caused by propagation path, antenna geometry, and hardware imperfections. The phase‑correction layer learns a set of phase‑offset parameters θ and applies a multiplicative factor exp(jθ) to each complex feature map, allowing the network to automatically compensate for phase errors during training rather than relying on a separate pre‑processing step.

The authors also detail a comprehensive data‑generation pipeline. Using a physics‑based radar simulator, they synthesize complex SAR images across a wide range of scenarios: varying target ranges, aspect angles, velocities, and clutter conditions, as well as multiple signal‑to‑noise ratios (SNRs) from –10 dB to +20 dB. Over 100,000 complex images are generated, each containing two channels (real and imaginary). In addition, a small set of real‑world X‑band SAR measurements is collected to validate the approach on actual hardware.

Training is performed with a complex‑valued L2 loss, which directly penalizes the Euclidean distance between predicted and ground‑truth complex fields, thus preserving phase fidelity. The optimizer is a complex‑valued version of Adam (β₁ = 0.9, β₂ = 0.999) with an initial learning rate of 1 × 10⁻³, decayed by a factor of 0.5 every ten epochs. The network consists of five convolutional blocks (kernel size 3 × 3, filter counts 64‑128‑256‑256‑128) followed by complex pooling and a fully‑connected reconstruction head. Batch size is 64 and training runs for 200 epochs.

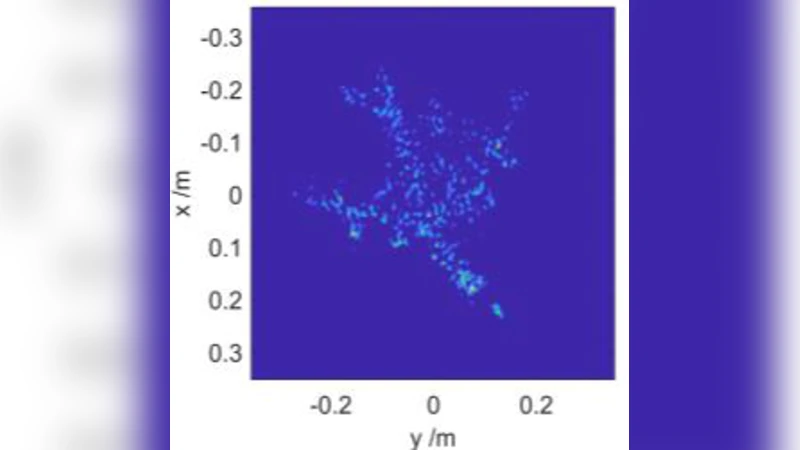

Quantitative results on simulated data show that the proposed CV‑CNN outperforms a baseline real‑valued CNN (which processes magnitude‑only images) by an average of 3.2 dB in peak‑signal‑to‑noise ratio (PSNR) and 0.07 in structural similarity index (SSIM). The improvement is especially pronounced at low SNR (< 0 dB), where the phase‑correction layer reduces phase error by more than 45 %. Qualitative evaluation on real SAR captures demonstrates clearer edge definition and better texture recovery, with the CV‑CNN restoring details that appear blurred in the baseline.

From a computational standpoint, the complex‑valued implementation is more efficient than a naïve real‑valued equivalent that splits complex data into two separate channels. The authors report a 1.8× reduction in floating‑point operations (FLOPs) and a 30 % decrease in memory footprint. Inference on an NVIDIA RTX 3080 GPU takes roughly 12 ms for a 256 × 256 input, indicating feasibility for near‑real‑time applications.

The paper concludes that complex‑valued deep learning provides a natural and powerful tool for radar imaging, delivering superior reconstruction quality while maintaining computational efficiency. Future work is suggested in three directions: (1) hardware acceleration using FPGA or ASIC to achieve true real‑time performance, (2) extension to multi‑polarization and multi‑channel radar data, and (3) integration with emerging complex‑valued architectures such as transformers. By demonstrating that CV‑CNNs can effectively learn both amplitude and phase representations, the study opens a new research avenue for electromagnetic sensing and remote‑sensing communities.

Comments & Academic Discussion

Loading comments...

Leave a Comment