Polyphonic Music Generation with Sequence Generative Adversarial Networks

We propose an application of sequence generative adversarial networks (SeqGAN), which are generative adversarial networks for discrete sequence generation, for creating polyphonic musical sequences. Instead of a monophonic melody generation suggested…

Authors: Sang-gil Lee, Uiwon Hwang, Seonwoo Min

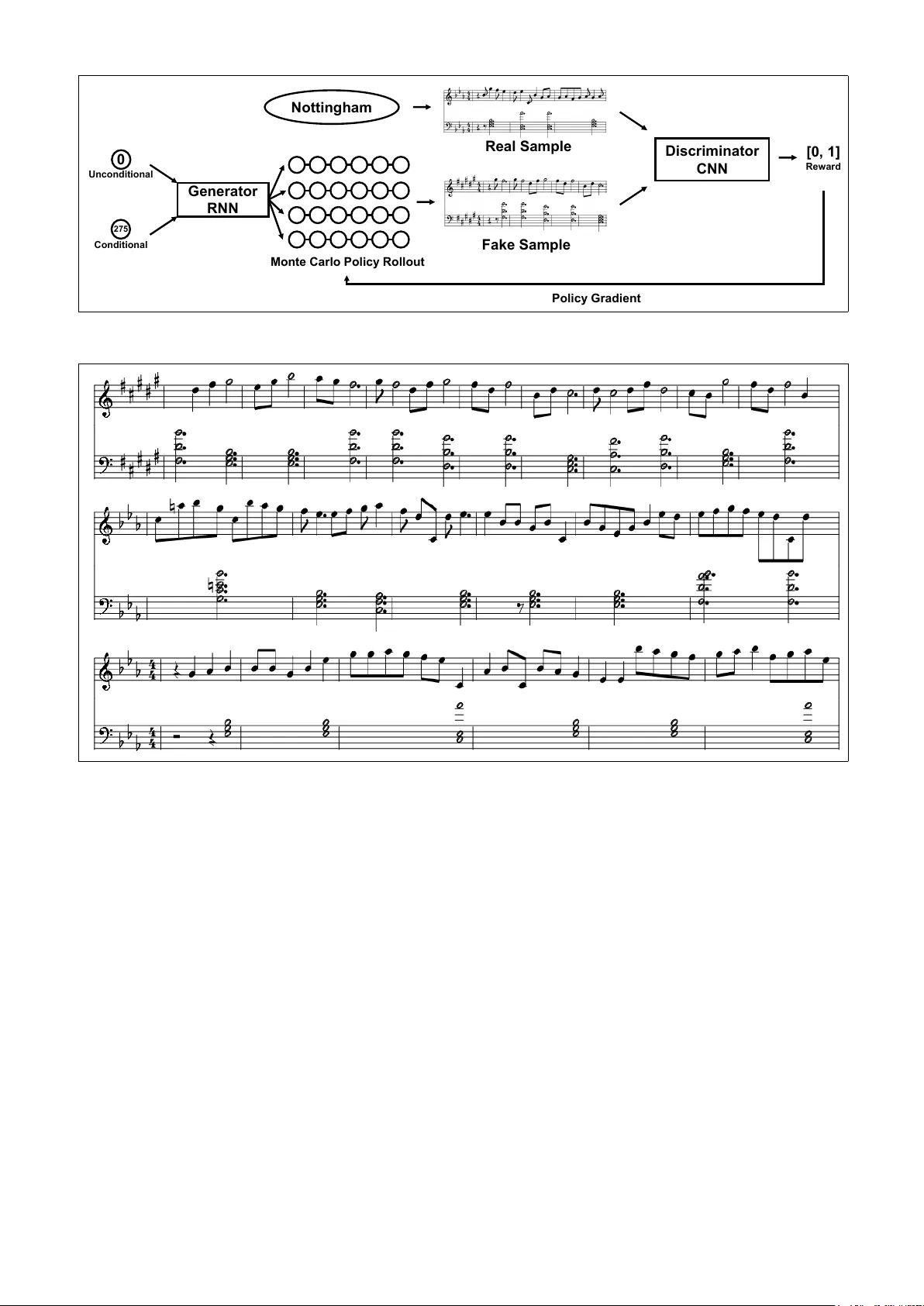

POL YPHONIC MUSIC GENERA TION WITH SEQUENCE GENERA TIVE AD VERSARIAL NETWORKS Sang-gil Lee, Uiwon Hwang, Seonwoo Min, and Sungr oh Y oon Electrical and Computer Engineering, Seoul National Uni versity , Seoul, K orea { tkdrlf9202, uiwon.hwang, mswzeus, sryoon } @snu.ac.kr ABSTRA CT W e propose an application of sequence generativ e adver - sarial networks (SeqGAN), which are generati ve adver - sarial networks for discrete sequence generation, for cre- ating polyphonic musical sequences. Instead of a mono- phonic melody generation suggested in the original work, we present an efficient representation of a polyphony MIDI file that simultaneously captures chords and melodies with dynamic timings. The proposed method condenses dura- tion, octaves, and keys of both melodies and chords into a single word vector representation, and recurrent neural networks learn to predict distributions of sequences from the embedded musical word space. W e experiment with the original method and the least squares method to the discriminator , which is kno wn to stabilize the training of GANs. The network can create sequences that are mu- sically coherent and shows an improved quantitative and qualitativ e measures. W e also report that careful optimiza- tion of reinforcement learning signals of the model is cru- cial for general application of the model. 1. INTR ODUCTION Automatic music generation is a concept of creation of a continuous audio signal or a discrete symbolic sequence that represents musical structure from computational mod- els in an autonomous way [12]. A continuous audio signal includes raw w av eform and a spectrogram as a data struc- ture. A discrete symbolic sequence includes MIDI and a piano roll. In this paper , we focus on the polyphonic mu- sic generation with MIDI, where the system creates both chords and melodies simultaneously . Recent adv ancements in deep learning [18] have brought a wide range of applications, such as image [11] and speech recognition [1], machine translation [5], and bioinformatics [22]. They are also getting attention for mu- sic generation and there ha ve been v arious approaches [3]. Specially , recurrent neural networks (RNNs) are widely used for music language modeling, since they can process time series information which has a central role in musical structure. Generativ e adversarial networks (GANs) [9] are frame- works in deep learning that are achieving state-of-the-art performance in generative tasks. Ho wev er, GANs are more difficult to train with discrete sequences than with contin- uous data, which results in their limited applications in do- mains with discrete data. Sequence generati ve adv ersarial networks (SeqGAN) [30] are one of the first models that try to overcome this limitation by combining reinforcement learning and GANs for learning from discrete sequence data. The SeqGAN model consists of RNNs as a sequence generator and con volutional neural netw orks (CNNs) as a discriminator that identifies whether a given sequence is real or fake. SeqGAN successfully learns from artificial and real-world discrete data and can be used in language modeling and monophonic music generation. The results from the original work hav e shown a strong potential for application of SeqGANs to automatic music generation. Howe ver , the original work hav e shown rather simple approaches to melody generation (i.e. monophonic music generation) by only using the melody part of the MIDI music and constraining a v ailable w ords in the model to 88-key pitches. In contrast, polyphonic music genera- tion [8, 10, 15], where the system can compose both chords and melodies simultaneously , is more appealing and can greatly improve the realism of the computer-generated mu- sic. This consideration leads us to a question of ho w to rep- resent the language of symbolic music that the model can effecti vely lev erage. W e would like to design a w ord repre- sentation of the polyphonic symbolic music with minimal hand-designed preprocessing that w ould negati vely impact the representational power . In addition, we would like to let the model fully incorporate the structure of the data dis- tribution of polyphonic music, including chords, keys, and dynamic timings. Based on the pioneering work, we apply SeqGAN for the purpose of polyphonic music generation. Specifically , we propose a simple and ef ficient word token formulation of polyphonic MIDI sequence that can be learned by Se- qGAN. Our representation can capture multiple keys and durations of MIDI music sequence with word embedding. Since we inte grated the duration of notes to word represen- tations, the recurrent networks can learn sequences with dynamic timings. The proposed method condenses dura- tion, octaves, and keys of both melodies and chords into a single word vector representation and recurrent neural networks learn to predict distributions of sequences from the embedded musical word space. Sampled sequences from the trained networks show long-term structures that are musically coherent and sho w an improv ed quantitati ve measure of BLEU score and perceptive quality from Mean Opinion Score (MOS) by adversarial training. W e discuss about advantages and limitations of the approach and fu- ture works. 2. RELA TED WORK Refer to [3] for a comprehensi ve survey on deep learning- based music generation. RNNs are widely used for the task of sequence generation, and are designed for pro- cessing time-series sequences. Primarily used in language modeling, RNNs can also be applied to music generation based on discrete sequences, notably MIDI and piano rolls. Long Short-T erm Memory (LSTM) is a v ariant module for RNNs that incorporates conte xtual memory cells and gates for information flo w that “ learn to forg et ” and alleviates the long-term dependency problem of RNNs [13]. Re- cent models with RNNs typically use LSTM as a building block. Based on the success of the LSTM that can handle long- term dependenc y , there have been studies of music genera- tion using LSTM. Ho wev er , there is a problem called “e x- posure bias” [26] in the discrete sequence generation using LSTM, when a model is trained with the maximum likeli- hood method. In the case of an out-of-sample discrete se- quence not in the training set, a discrepancy between train- ing and inference occurs because the sampled output of the previous time step is used as the input in the current time step. SeqGAN [30] addresses this problem by considering the sequence generation problem as a sequential decision- making process in the reinforcement learning (RL). Fur - ther , to calculate reward signals at each time step for RL, SeqGAN incorporates GANs, where the discrimina- tor CNNs provide scores that identify whether the giv en sequence is real or fake. After being pretrained with a ne g- ativ e log-likelihood (NLL) loss, the generator RNNs are trained by the policy gradient method [28] with these RL signals. More specifically , the generator uses the average of discriminator outputs for sequences generated by Monte Carlo search with a rollout polic y as the estimated re ward. The rollout policy is set to be the same as the current gener- ator . The generator is updated by the following equations: ∇ θ J ( θ ) = E Y 1: t − 1 ∼ G θ h P y t ∈Y ∇ θ G θ ( y t | Y 1: t − 1 ) · Q G θ D φ ( Y 1: t − 1 , y t ) i ' 1 T T X t =1 E y t ∼ G θ ( y t | Y 1: t − 1 ) h ∇ θ log G θ ( y t | Y 1: t − 1 ) · Q G θ D φ ( Y 1: t − 1 , y t ) i (1) where G θ is the polic y parameterized by the generator and Q G θ D φ is the action value function of a sequence following policy G θ . In an actual implementation, Q G θ D φ is replaced with the output of the discriminator as mentioned above. Y 1: t − 1 denotes a sequence from the generator and y t is a token at time step t . The parameters of the generator are updated by the gradient ascent method. The parameters of the discriminator are trained with the GAN loss. More detailed explanations can be found in the original SeqGAN paper . There are other RL approaches in addition to SeqGAN. Using RL for our task has an advantage of the ability to utilize well-defined music theories to calculate reward sig- nals that can be le veraged by the model [14]. Compared to end-to-end training approaches, RL has an advantage of allowing to guide the network with our prior kno wledge of music and steering the model with user preferred musical styles. The interaction between a composer and the generator is one of the important factors in the music generation task. Therefore, various conditional mechanisms for the music generation ha ve been dev eloped [6, 29]. MidiNet [29] is a model that generates a monophonic note sequence condi- tioned on a primer melody or a chord sequence. Howe ver , symbolic representation of music is not able to distinguish between a single long note and multiple repeating notes in this work. MidiNet can generate polyphonic music only by prim- ing a giv en chord as a condition. Our work instead explores the unconditioned polyphonic music generation by distill- ing all the necessary information into a word embedding space and letting the model to learn from the embedded space. Note that the conditional generation is also possible with our method by priming pre-defined word sequences before the unconditional generation. C-RNN-GAN [24] uses RNNs as a sequence genera- tor and incorporates GANs framew ork in parallel to our work. Ho wev er, it uses real-valued feature representation of a MIDI file by modeling tone length, frequency , inten- sity , and time with four real-v alued scalars. RNNs are trained from the real-valued feature space, because of the challenge of training GANs with discrete data, as it was discussed abov e. Our work is based on the framework that can nativ ely handle a discrete sequence with GANs. Efficient representation of musical data is crucial for the ability of the model to learn the musical structure. Notable examples include Performance RNN [27], which empha- sizes that the training dataset and musical representation are the most interesting elements of deep learning-based music generation. Performance RNN uses MIDI represen- tation that handles expressi ve timing and dynamics, which can be considered as a compressed version of a fixed time step. 3. MIDI D A T A REPRESENT A TION For our MIDI music dataset we used Nottingham database, which is a collection of 1,200 British and American folk tunes. Note that the original work also used the same dataset, but it only used the monophonic melody part with fixed time steps for training and e valuation. W e extend the representation of the dataset for polyphonic sequences. W e used music21 Python package for preprocessing of the MIDI data into an input sequence and for postpro- cessing the output sequence back to MIDI as depicted in Figure 1. A MIDI file in the Nottingham dataset consists of two parts: the melody and chords. After each MIDI file in Mid i files V ecto r Seq u en ces T o ken Seq u en ces [[ 0. 0, 0. 5, 4, 8, 80], …] [442, 2 556 , … ] Mus ic21 Stream T r ai n V al i d N otes C ho rds [[ 0. 0, 0. 5, 0, 0 , 0 ] , [ 0. 5, 3. 0, 7, 16 ] , …] [O c ta v e o f no t es ], [Pitc h of no t es ] [O c ta v e o f c ho rds ], [Pitc h se t o f c ho rds ] V oc a bul a ry P r ep r oc ess i ng P os t p r oc ess i ng sta r t tim e du r a tio n o cta ve p itch ve lo cit y [[ 0. 5, 4, 8, 0. 5, 0, 0], [ 0. 5, 4, 8, 3. 0, 7, 16], …] Figure 1 . Preprocessing and postprocessing pipeline of MIDI files for polyphonic music sequence. Counts Pitch sets Chord symbols 5000 ∼ 10000 [D,G,B], [D,F ] ,A] G/D, D 2000 ∼ 4999 [C ] ,E,A], [C,E,G], [E,G,B], [C,E,A] C ] m ] 5, C, Em, C6 1000 ∼ 1999 [C ] ,E,G,A], [C,D,F ] ,A] A7/C ] , D7/C 500 ∼ 999 [D,F ] ,B], [C,F ,A], [D,E,G ] ,B] D6, F/C, E7/D 250 ∼ 499 [D,G,A ] ], [D,F ,A], [D,F ,A ] ], [E,G ] ,B] Gm/D, Dm, A ] /D, E 100 ∼ 249 [D,F ,G,B], [C ] ,F ] ,A], [D ] ,F ] ,A,B], [C,E,G,A ] ], [C,D ] ,G] G7/D, F ] m/C ] , B7/D ] , C7, Cm 10 ∼ 99 [D ] ,G,A ] ], [C,D ] ,F ,A], [C ] ,E,F ] ,A ] ], [D,F ] ], [D ] ,F ] ,B], [C ] ,E,G], [C ] ,E,G ] ], [C ] ,F ] ,A ] ], [D,E,G,B] D ] , F7/C, F ] 7/C ] , D, D ] m ] 5, C ] dim, C ] m, F ] /C ] , G6/D T able 1 . Pitch set statistics of Nottingham dataset. the dataset was loaded, each note in the file was parsed into a list containing start time, duration, octave, pitch and ve- locity . For chords, we assigned dif ferent indices to all dif- ferent sets of pitches. For example, [C,E,G] and [G,B,D] hav e different indices in the pitch list. In this w ay , we in- corporated approximately 30 pitch sets into the pitch list. The statistics of the pitch sets is shown in T able 1. In exper - iments, we omitted the v elocity for two reasons: to reduce the vocabulary size to a tractable amount, and because the incorporation of the velocity would scatter the word dis- tribution severely , which would not yield good estimation results gi ven the amount of data points in the Nottingham dataset. T okenization was done by scattering e very possible combinations of the musical information into separate words. That is, the duration, the octa ve of the note, the pitch of the note, the octave of the chord and the pitch of the chord of a time step were combined in a single inte- ger . By including durations in the preprocessing pipeline we were able to tokenize each time step with different lengths. For notes whose lengths were different from the corresponding chords, we inserted dummy notes so that the length of a note and that of a chord sequence would be the same. Rest and dummy notes were designated as a special ‘rest’ token. W e excluded music with tokens which oc- curred less than 10 times in the total dataset to keep the size of the vocabulary tractable. T okenized integer sequences were used as inputs for SeqGAN. Based on the generated output sequence of tok ens from the SeqGAN model, postprocessing was performed to con- vert the sequences to MIDI files. After loading the con- structed vocabulary with a token sequence, each token in the sequence was conv erted to two musical symbols, a note and a chord, through the rev erse process of the preprocess- ing. The symbols were appended to the melody stream and the chord stream. After processing all tokens, the two streams were combined into a MIDI file. Unlike in models with fixed time steps introduced in the related work [29], our preprocessing method can dis- tinguish between a case where a single note is played for a long time and a case where a single note is played multiple times. Our method can do so, because we represented a variable duration by a single word token that can be pro- cessed by the recurrent networks. The dynamic timing of this representation can also benefit the generativ e model, where the RNNs can learn the time-dependent structure of the musical sequence beyond the fix ed time steps. The proposed preprocessing method is designed with minimal human-designed reformulation possible, since we wanted to let the model fully observe the underlying data distribution of polyphonic symbolic MIDI data that the model could le verage from learning. Ho wever , our method also has a drawback due to the tokenizing with naiv e hashing-like approach. Nai ve hashing can make vocab u- lary space expand more than necessary . It is difficult to learn chords in an octave that appear only fe w times in the dataset, even if the same chords in other octaves are abun- dant in the dataset. For example, tonic triad in different octav es are actually related, b ut the vocab ulary maps to different tok ens. Gener ator RN N 0 Un c ondi t ion a l 275 C on dit ional Notti ng ham Disc riminator CN N Re a l Sa m pl e Fak e Sa mple Mo n te C a rl o Po li c y Ro ll ou t [0 , 1 ] Rew a rd Po li c y Gra d ie n t Figure 2 . Schematic diagram of sequence generati ve adv ersarial networks (SeqGAN). Figure 3 . Sample music sequences generated from the model. 4. MODEL DESCRIPTION Here we describe core details of the SeqGAN model and our modifications to the stabilized training of the model with our customized polyphonic MIDI dataset. In Seq- GAN, the generator RNNs and discriminator CNNs are pretrained with a regular negati ve log-likelihood (NLL) loss (until con vergence). Then they are further tuned by adversarial training with polic y gradient with outputs from the discriminator CNNs ranging from 0 to 1 as re ward sig- nals. W e followed the same training scheme as in the orig- inal work. W e experienced instabilities in the adversarial training with hyperparameters from the original work. The instabil- ity persisted both from the original sequence length setting of 20 and our customized setting of 100. The main obsta- cle came from the discriminator vastly outperforming the generator . Even after pretraining the generator to achieve a saturated performance, the generator failed to fool the discriminator , and the discriminator identified all the given sequences as f ake with extremely high confidence (close to 1), which provided no meaningful re ward signals. W e thus lo wered the representational power of the dis- criminator by reducing the number of 1-D con volutional layers from 10 to 5. W e also increased the recepti ve field of con volution filters up to 20 (and discarded layers with small size filters), since we wanted the discriminator to capture a periodic structure of musical sequences effec- tiv ely . Note that the large receptiv e field approach is shown to be effecti ve in the related work, which handles raw wa veform audio [7]. Furthermore, we found that hyperparameters for policy gradients needed careful optimization. W e used 32 (instead of 16) Monte Carlo search rollouts for calculating re wards in the policy gradient to ensure lower variance of reward signals. This prevented the generator from learning with an unnecessary noise, which would lead to di vergence and critically impact performance of the model. W e adjusted the reward discount factor from 0.95 to 0.99 to compensate for the longer sequence length of 100. W e also applied a more “conservati ve” target generator network update rate from 0.8 to 0.9. W e observed that the higher update rate (i.e. less amount of parameter update of the target network) stabilized the adv ersarial training with re ward signals and constrained the div ergence of the generator . Instead of feeding a mixture minibatch containing both real and fake samples to the discriminator as in the orig- inal work, we used minibatch discrimination technique where minibatches contained only real or fake samples. This technique is used in several other works with GANs [21], and it empirically impro ved adversarial training of the model. 5. EXPERIMENT AL RESUL TS W e trained SeqGAN with hyperparameter optimization, which resulted in a larger version of the original model. Our polyphonic word representation of a MIDI file has a vocab ulary size of 3,216. W e embedded each word with randomly initialized 32-dimensional vectors. W e created sequences of length of 100 for training. This length also applies to sequence generation from the trained model. The generator RNNs ha ve 512 LSTM cells. The discrim- inator CNNs have five 1-D con volutional layers, and each of them has 400 feature maps with a receptiv e field of 20. W e pretrained both generator RNNs and discrimina- tor CNNs for 100 epochs with the regular negati ve log- likelihood loss. Due to the tendency of the discriminator to dominate, we first pretrained the generator and the dis- criminator at learning rates of 0.001 and 0.0001 and set the learning rate of the generator higher at 0.01. W e used a batch size of 32 for all experiments. W e compared two strate gies: the unconditional method where sampled sequences alw ays started from the pre- defined zero token, and the conditional method where we trained the model and generated sequences from the trained model with the first word in the real sequence as a start token. For each strategy , we additionally compared two formulations of the loss for the discriminator: the orig- inal softmax reward with the cross entropy loss and a sig- moid reward with the least squares loss, which is known to stabilize the training of GANs [20]. The generator fol- lowed the same policy gradient method with the giv en scalar re ward in each time step. The generated sequences showed musically coherent structure with long-term har- monics. W e measured results both from quantitative and perceptiv e qualitative perspecti ves. For quantitative analysis, we calculated the BLEU score that measures a similarity between the validation set and the generated samples and which is largely used to ev alu- ate the quality of machine translation [25]. T o be specific, the BLEU score can be calculated by comparing the entire corpus from the validation set and the sequence generated from the model. A higher BLEU score means that samples from the generator follow the underlying real data distribu- tion more closely . For the conditional method, we used a start token from a randomly sampled batch from the train- Algorithm Log-likelihood Adversarial SeqGAN, Uncond. 0.5335 0.6272 SeqGAN, Cond. 0.5095 0.5552 LS-SeqGAN, Uncond. 0.5312 0.6852 LS-SeqGAN, Cond. 0.5177 0.5743 T able 2 . Performance comparison with BLEU-4 scores from the v alidation set. SeqGAN: original softmax output from the discriminator with the cross entropy loss. LS- SeqGAN: sigmoid output from the discriminator with the least squares loss. Sample Pleasant? Real? Interesting? Uniform Random 2.36 2.10 2.71 Log-likelihood 3.02 2.93 3.07 Adversarial 1 3.17 2.69 3.14 Adversarial 2 3.90 3.62 3.83 Adversarial 3 3.86 3.81 3.76 Mode Collapse 1.67 1.67 1.90 Real Sample 4.31 4.31 4.07 T able 3 . Mean Opinion Score (MOS) results. Uniform Random: a sample generated with a uniform random prob- ability in each time step from the vocab ulary . Adversar- ial: samples from adversarial training with progressively increasing BLEU-4 score. Mode Collapse: a sample from the failure case of adversarial training with BLEU-4 score below 0.2. ing set. T able 2 showed that the BLEU score of the generator RNN is saturated from the pretraining and is further im- prov ed by the adversarial training. The generator RNN trained with NLL loss showed peak performance when the BLEU score reached approximately 0.53 and the adversar- ial training could generally improve the score from 0.05 to 0.15. The best configuration had the BLEU score of o ver 0.68. Note that these improvements are similar in magni- tude to those reported in the original paper . Ho wev er, we could not reproduce the same results with the original net- work configurations because of the instant diver gence of the generator . Results showed that the unconditional method per- formed relatively better than the conditional method espe- cially in the adversarial training phase. A possible expla- nation is that the unconditional method can estimate man- ifolds from the embedded space better with the fix ed zero start token, because the model can observe many more trajectories of the real data manifold from a single start- ing point, compared to smaller number of trajectories from many starting points in the pretraining phase of the condi- tional method. This further impacts potential benefits from the unsupervised adv ersarial training with reinforcement learning signals, as the model pretrained with the condi- tional method tends to fall into a bad local minimum with a higher probability than the model pretrained with the un- conditional method. W e conducted a qualitati ve analysis of human percep- tiv e performance of the generated MIDI sequences using MOS user study . The experiment asked 42 participants to rate seven different sequences from 1 to 5, by respond- ing to three questions: How pleasant is the song? Ho w realistic is the sequence? How interesting is the song? These questions are constructed gi ven the inspiration from MidiNet. The seven sequences included a sample from a real dataset, a sequence sampled by uniform random prob- ability in each time step from the vocabulary , and a sam- ple from a f ailure case of the adversarial training with low BLEU score (below 0.2). T o remove the bias, we notified participants that all se ven sequences were generated by the model. T able 3 showed that the sequences from adversarial training sounded more like the real ones than the sequences from the pretrained model with NLL, which is consistent with the quantitativ e analysis. Samples from the model pretrained with NLL sounded relativ ely more repetiti ve and focused more on the short- term harmonics. This is to be expected, since the pre- training phase tar gets the next token in the real training dataset. Samples from the adversarial training tended to show longer harmonics with more consistent chord pro- gressions, possibly since the model successfully explored policies that receiv ed high re ward by keeping the chord progression. 6. DISCUSSION AND FUTURE WORK Although experiments showed that the adversarial train- ing further boosted the performance in the music language modeling, there are drawbacks due to the nature of GANs. Firstly , GANs often suf fer from the mode collapsing prob- lem, where the generator fools the discriminator by cre- ating artifacts rather than realistic samples [21]. W e also hav e noticed this problem where the generated samples played the same note constantly , which broke the musical coherence. This phenomenon can also be observed with a decrease in the BLEU score, which implies a div ergence from the pretrained model. Recent works on GANs in- troduce earth-mov er distance as a loss function to over - come this issue [2]. Thus, incorporating this idea to dis- crete GANs could alleviate the problem [17]. There ha ve been recent improv ements in the original work based on the rank-based loss [19], which can be directly applicable to our task. Secondly , the training of GANs is not more computa- tionally efficient than the NLL training of the generator RNNs. For example, with our stabilized hyperparameters, GANs require roughly ten times more computing time than the NLL training per epoch for a relati vely small improve- ment in performance. The computational cost also scales to the number of Monte Carlo polic y rollouts, which gives us a trade-off between accurac y and variance. Thirdly , the policy gradient method with the Monte Carlo rollout is highly stochastic. Although the adversarial training can provide the extra performance improv ement that the NLL method cannot, the reinforcement learning signal showed high v ariance and a relativ ely lo w repro- ducibility . This means that ev en for the same hyperpa- rameter settings, one would need to run multiple training trials to achiev e improvements from the adversarial train- ing. This leav es room for impro vements in minimizing the variance of the reinforcement learning signals notably by Monte Carlo Tree Search (MCTS) [4] and experience re- play [23] as examples. The restriction of the vocab ulary to the pre-defined words that are observ ed in the dataset has a limitation that the model cannot create chords and melodies that are out- side the dataset. In terms of creati vity , the model would hav e to “compose” a novel music outside the boundaries of the learned data [3]. While harder to train, the uncon- strained models capable of processing arbitrary polyphonic input and output are crucial for creativity . As we ha ve mentioned in the related work, we ha ve ob- served that reinforcement learning using reward signals is a direct way to inject prior kno wledge about musical struc- ture into the model. This suggests that we could further lev erage the reinforcement learning signals by incorporat- ing a critic model that e v aluates musical consonance based on music theory . Indeed, RL-T uner , a deep Q-networks based model, uses scores from music theory rules as aux- iliary reward signals [14]. W e plan to implement this idea in the future work. Albeit the proposed word embedding method for the polyphonic MIDI data is simple and efficient, the word em- bedding with random projection does not effecti vely cap- ture relati ve harmony and consonance of each w ord. Mod- ular networks that consider this relative information of the MIDI data could further improve performance of the mu- sic language model. CNNs are a viable choice for this pur - pose [16], and we plan to use the CNN-RNN hybrid model in the future work. For more objective and structured experiments with au- tomatic music generation, we need a rob ust quantitati ve measures to ev aluate the perceptiv e quality of the machine- generated music [3]. From our experiments, the quantita- tiv e BLEU score analysis w as consistent with the qualita- tiv e MOS user study to a certain degree, but did not ex- actly reflect the perceptiv e performance. Dev elopment of a structured quantitative metric would improve objectiv- ity and reproducibility of research on the automatic music generation. 7. REFERENCES [1] Dario Amodei, Sundaram Ananthanarayanan, Rishita Anubhai, Jingliang Bai, Eric Battenberg, Carl Case, Jared Casper, Bryan Catanzaro, Qiang Cheng, Guo- liang Chen, et al. Deep speech 2: End-to-end speech recognition in english and mandarin. In International Confer ence on Machine Learning , pages 173–182, 2016. [2] Martin Arjovsky , Soumith Chintala, and L ´ eon Bottou. W asserstein gan. arXiv pr eprint arXiv:1701.07875 , 2017. [3] Jean-Pierre Briot, Ga ¨ etan Hadjeres, and Franc ¸ ois Pa- chet. Deep learning techniques for music generation-a surve y . arXiv pr eprint arXiv:1709.01620 , 2017. [4] Cameron B Browne, Edward Po wley , Daniel White- house, Simon M Lucas, Peter I Co wling, Philipp Rohlfshagen, Stephen T avener , Die go Perez, Spyridon Samothrakis, and Simon Colton. A surve y of monte carlo tree search methods. IEEE T ransactions on Com- putational Intelligence and AI in games , 4(1):1–43, 2012. [5] Kyunghyun Cho, Bart V an Merri ¨ enboer , Caglar Gul- cehre, Dzmitry Bahdanau, Fethi Bougares, Holger Schwenk, and Y oshua Bengio. Learning phrase rep- resentations using rnn encoder-decoder for statistical machine translation. arXiv preprint , 2014. [6] Hang Chu, Raquel Urtasun, and Sanja Fidler . Song from pi: A musically plausible network for pop music generation. arXiv pr eprint arXiv:1611.03477 , 2016. [7] Chris Donahue, Julian McAuley , and Miller Puckette. Synthesizing audio with generati ve adversarial net- works. arXiv pr eprint arXiv:1802.04208 , 2018. [8] Kratarth Goel, Raunaq V ohra, and JK Sahoo. Poly- phonic music generation by modeling temporal de- pendencies using a rnn-dbn. In International Confer- ence on Artificial Neural Networks , pages 217–224. Springer , 2014. [9] Ian Goodfello w , Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David W arde-Farley , Sherjil Ozair, Aaron Courville, and Y oshua Bengio. Generativ e adversarial nets. In Advances in neural information pr ocessing sys- tems , pages 2672–2680, 2014. [10] Ga ¨ etan Hadjeres and Franc ¸ ois Pachet. Deepbach: a steerable model for bach chorales generation. arXiv pr eprint arXiv:1612.01010 , 2016. [11] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In Pr oceedings of the IEEE conference on computer vi- sion and pattern r ecognition , pages 770–778, 2016. [12] Lejaren Arthur Hiller and Leonard Maxwell Isaacson. Experimental music: composition with an electronic computer . 1959. [13] Sepp Hochreiter and J ¨ urgen Schmidhuber . Long short- term memory . Neural computation , 9(8):1735–1780, 1997. [14] Natasha Jaques, Shixiang Gu, Richard E T urner, and Douglas Eck. Tuning recurrent neural networks with reinforcement learning. 2017. [15] Daniel D Johnson. Generating polyphonic music using tied parallel netw orks. In International Confer ence on Evolutionary and Biologically Inspired Music and Art , pages 128–143. Springer , 2017. [16] Y oon Kim. Con volutional neural netw orks for sentence classification. arXiv pr eprint arXiv:1408.5882 , 2014. [17] Y oon Kim, Kelly Zhang, Alexander M Rush, Y ann LeCun, et al. Adversarially regularized autoencoders for generating discrete structures. arXiv pr eprint arXiv:1706.04223 , 2017. [18] Y ann LeCun, Y oshua Bengio, and Geoffrey Hinton. Deep learning. natur e , 521(7553):436, 2015. [19] Ke vin Lin, Dianqi Li, Xiaodong He, Zhengyou Zhang, and Ming-T ing Sun. Adversarial ranking for language generation. In Advances in Neur al Information Pr o- cessing Systems , pages 3155–3165, 2017. [20] Xudong Mao, Qing Li, Haoran Xie, Raymond YK Lau, Zhen W ang, and Stephen Paul Smolle y . Least squares generativ e adversarial networks. In 2017 IEEE Interna- tional Confer ence on Computer V ision (ICCV) , pages 2813–2821. IEEE, 2017. [21] Luke Metz, Ben Poole, David Pfau, and Jascha Sohl- Dickstein. Unrolled generati ve adversarial networks. arXiv pr eprint arXiv:1611.02163 , 2016. [22] Seonwoo Min, Byunghan Lee, and Sungroh Y oon. Deep learning in bioinformatics. Briefings in bioinfor- matics , 18(5):851–869, 2017. [23] V olodymyr Mnih, Koray Ka vukcuoglu, David Sil- ver , Andrei A Rusu, Joel V eness, Marc G Bellemare, Alex Grav es, Martin Riedmiller , Andreas K Fidjeland, Georg Ostrovski, et al. Human-le vel control through deep reinforcement learning. Natur e , 518(7540):529, 2015. [24] Olof Mogren. C-rnn-gan: Continuous recurrent neu- ral networks with adversarial training. arXiv pr eprint arXiv:1611.09904 , 2016. [25] Kishore P apineni, Salim Roukos, T odd W ard, and W ei- Jing Zhu. Bleu: a method for automatic ev aluation of machine translation. In Pr oceedings of the 40th annual meeting on association for computational linguistics , pages 311–318. Association for Computational Lin- guistics, 2002. [26] Marc’Aurelio Ranzato, Sumit Chopra, Michael Auli, and W ojciech Zaremba. Sequence lev el train- ing with recurrent neural networks. arXiv preprint arXiv:1511.06732 , 2015. [27] Ian Simon and Sagee v Oore. Performance rnn: Generating music with expressiv e timing and dynam- ics. https://magenta.tensorflow.org/ performance- rnn , 2017. [28] Richard S Sutton, David A McAllester , Satinder P Singh, and Y ishay Mansour . Policy gradient methods for reinforcement learning with function approxima- tion. In Advances in neural information pr ocessing sys- tems , pages 1057–1063, 2000. [29] Li-Chia Y ang, Szu-Y u Chou, and Y i-Hsuan Y ang. Midinet: A con volutional generative adversarial net- work for symbolic-domain music generation. In Pr o- ceedings of the 18th International Society for Music In- formation Retrieval Confer ence (ISMIR2017), Suzhou, China , 2017. [30] Lantao Y u, W einan Zhang, Jun W ang, and Y ong Y u. Seqgan: Sequence generativ e adversarial nets with pol- icy gradient. In AAAI , pages 2852–2858, 2017.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment