Deep learning for dehazing: Comparison and analysis

We compare a recent dehazing method based on deep learning, Dehazenet, with traditional state-of-the-art approaches , on benchmark data with reference. Dehazenet estimates the depth map from transmission factor on a single color image, which is used …

Authors: A Benoit (LISTIC), Leonel Cuevas, Jean-Baptiste Thomas (Le2i)

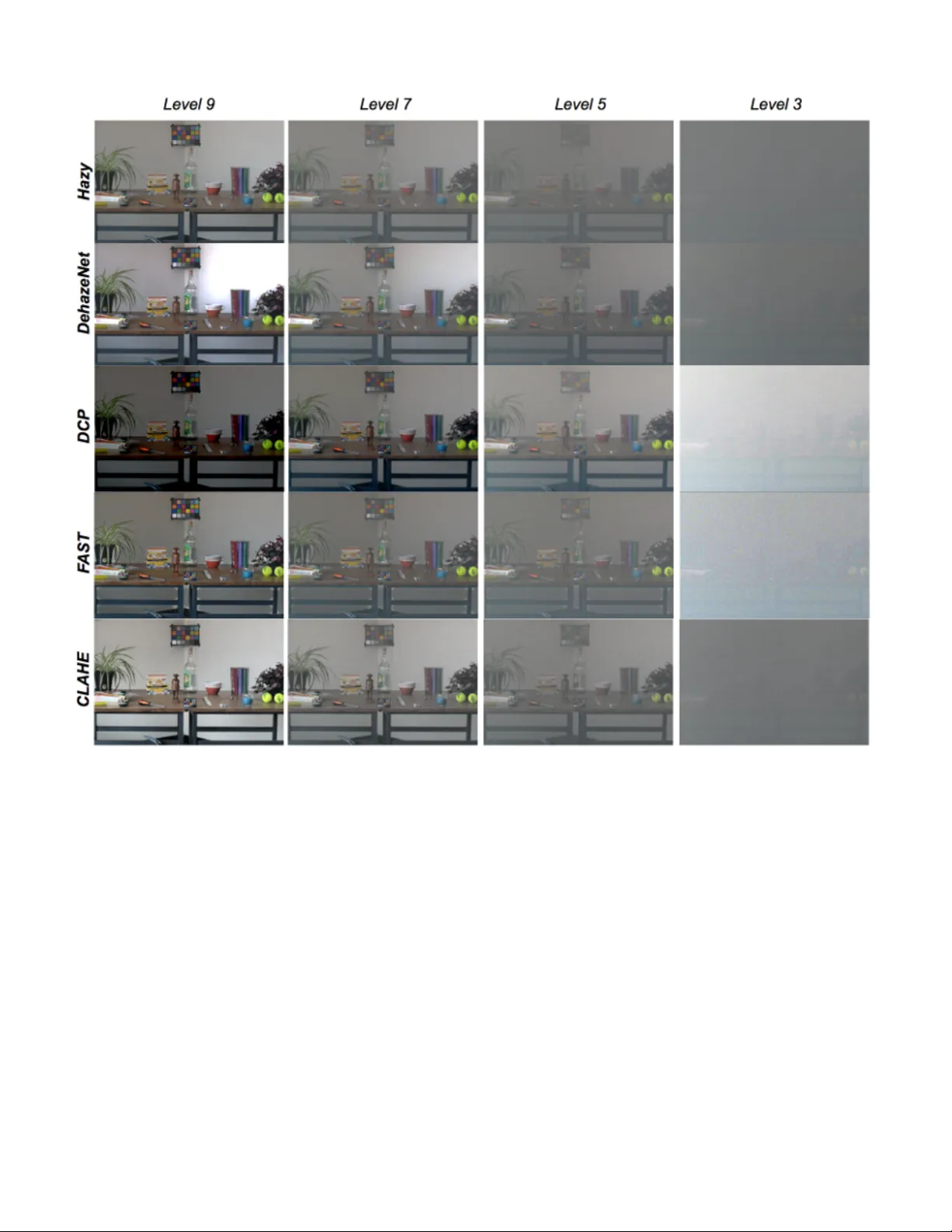

Deep learning for dehazing: Comparison and analysis Leonel Cue v as V aleriano and Jean-Baptiste Thomas The Norwegian Colour and V isual Computing Laboratory NTNU, Gjøvik, Norway Email: jean.b .thomas@ntnu.no Alexandre Benoit LISTIC Univ . Sa v oie Mont Blanc, Annecy , France Abstract —W e compare a recent dehazing method based on deep learning, Dehazenet, with traditional state-of-the-art ap- proaches, on benchmark data with refer ence. Dehazenet estimates the depth map from transmission factor on a single color image, which is used to in verse the K oschmieder model of imaging in the presence of haze. In this sense, the solution is still attached to the K oschmieder model. W e demonstrate that the transmission is very well estimated by the network, but also that this method exhibits the same limitation than others due to the use of the same imaging model. I . I N T RO D U C T I O N Dehazing aims at improving visibility in images captured in a presence of haze. In general, methods can be classified in two categories (Jessica El Khoury PhD [1], Chapter 3). One is based on an image enhancement paradigm, and is usually instances of local histogram equalization. The other category aims at the in version of the Koschmieder model [2] written in Equation 1, J ( x ) = I ( x ) t ( x ) + A ∞ (1 − t ( x )) , (1) which states that the image captured at a position x yields J ( x ) that is a linear combination of the radiant image I ( x ) and the contribution of the airlight A ∞ weighted by a transmission factor t ( x ) . A ∞ is defined as the light sent to the camera by an object at infinite distance, i.e. the diffusion of the light by the haze. The transmission factor is t ( x ) = exp( − β d ( x )) , where β is the scattering coefficient of the haze and d ( x ) the distance of the object from the camera. This is performed by estimating t ( x ) and A ∞ separately or jointly . Recently , solutions using deep learning have been intro- duced. They fall into this category . For instance Dehazenet [3] focuses on the estimation of t(x), and A OD-Net [4] focuses of the joint estimation of t ( x ) and A ∞ . T wo limitations can be observed: 1-the K oschmieder model seems to have a limited validity for heavy amount of haze where the airlight contribu- tion is predominant compared to the radiant signal (Jessica El Khoury PhD [1]), 2-Networks are trained on simulated material based on the Koschmieder model, which impacts generalization capabilities and thus may limit performance lev els on real data. W e perform a quantitativ e analysis of the first point and compare Dehazenet with the state of the art on the CHIC database [5], [6]. The methods selected for comparison are: DCP [7], F AST [8] and CLAHE [9]; DCP is based on the inv ersion of the K oschmieder model by use of the Dark Channel to estimate t ( x ) , F AST proposes a v ariation of this by estimating an Athmospheric V eil which represents the achromatic veil responsible for intensity changes in the image due to the light interactions in the environment, and finally CLAHE is based on applying contrast-limited adaptiv e histogram equalization. The remaining of the paper is organized as follows. Next Section considers the description of the parameters estimation by learning, Section III defines the experimental procedure. Benchmarking results are presented in Section IV and demon- strate that dehazenet exhibits the same limitations than other state-of-the-art methods based on the K oschmieder model despite a very good estimation of the transmission factor . I I . D E E P L E A R N I N G F O R D E H A Z I N G Researchers have recently turned their attention to deep learning in order to e xplore how well it performs in the task of haze remov al, inspired by the outstanding results of Con v olutional Neural Networks (CNN) in high-lev el vision tasks such as image classification [10]. The main reasoning behind these methods is the fact that the human brain can quickly identify the hazy area from the natural scenery without any additional information [3], so by the use of CNNs it seems plausible to extract the features necessary to perform the dehazing task. Although more efficient or vision inspired formulation may rise in the future, the first attempts focus on the in v ersion of the K oschmieder imaging model. DehazeNet: Cai et al. [3] introduced DehazeNet, a deep learning method for single image haze removal. DehazeNet is, because of its principles, a method based on the in v ersion of the K oschmieder model. It proposes a ne w approach to estimate the transmission map t ( x ) . Giv en a hazy image as input, DehazeNet computes and output its corresponding t ( x ) , which can be then used to recover a haze-free image by in v ersion of the model. DehazeNet is trained with thousands of hazy image patches, which are synthesized from haze-free versions of images taken from the Internet. Since the model for generating these hazy patches is known, a ground truth for the transmission map t ( x ) can be provided for training. DehazeNet only estimates t ( x ) , the estimation of the global atmospheric light A ∞ is done in a separate and independent step. In fact it can be done by using an y of the approaches used in other methods. For the purpose of this study , we have used the same A ∞ estimation for all methods in the benchmark. A OD-Net: More recently Li et al. introduced the All-in-One Dehazing Network (A OD-Net) [4]. Unlike DehazeNet, A OD- Net is based on a reformulation of the K oschmieder model, where all parameters are encapsulated into one variable. This enables the joint implicit estimation of the transmission map and the atmospheric light to in vert the model. For the training of A OD-Net, the authors created synthesized hazy images, using the ground-truth images with depth metadata from the indoor NYU2 Depth Database [11], which contains around 30,000 images. Dif ferent atmospheric light and β values are set to generate a new set of hazy images which are fed as input to A OD-Net. The output of the network is then compared to the original haze-free image and trained to minimize the error between the output and the original images prior haze simulation. They also used a set of natural hazy images to ev aluate visually the general performance. Since the A OD- Net has been published after our experiment, we dev eloped the analysis around Dehazenet in this paper . A OD-Net will be in v estigated in further work. I I I . E X P E R I M E N T The objective of our work is to perform an objectiv e comparison between deep learning based methods such as DehazeNet and traditional haze removal methods. W e opted for different approaches to perform the comparison. W e made use of the CHIC database introduced by El Khoury et al. [5], [6]. CHIC stands for ”Color Hazy Image for Comparison”. The database consists of two different scenes that include numerous objects with different shapes, colors, positions, surface types (glossy or rough surfaces) and textures. The scenes also include four Macbeth color checkers at different distances for color accuracy checks. A set of high-resolution images of the same scene is acquired under a controlled en vironment with the same light conditions; first without any haze (haze-free image) and then under 9 dif ferent le v els of fog density which is introduced by using a fog machine. Each image has a uniform fog le vel. Level 9 is the lo west fog density while level 1 is the highest. In addition to this, for each scene the distances of the four color checkers to the camera are kno wn, the fog properties, such as the scattering coef ficient ( β ) are also known and the lighting conditions remain constant. Due to the high resolution of the images and because of the limitations in our computing power it was not possible to giv e the full image as an input to DehazeNet, to ov ercome this issue we decided to crop a region of the original image and use it as input for DehazeNet and the other reference methods. W e chose this option because we were concerned about the possibility that resizing processes could introduce artifacts in the image, which might ha ve an impact on the K oschmieder model and the results. The particular region of the scene of the CHIC database sho wn in Figure 1. W e selected this re gion of the scene due to the presence of the color checker in the back of the scenes, which will be used for comparison. Also it covers objects at different depths and being the furthest (with more depth) part of the scene it prov es to be a more challenging task for the different haze remov al methods. Fig. 1. Selected regions of the scene to do the comparisons. The A ∞ is measured on the dataset and gi ven to all the algorithms, which enable a fair comparison. A set of state-of-the-art dehazing methods is considered for comparison for different levels of haze : DCP [7], F AST [8], CLAHE [9] and Dehazenet. For this, the pre-trained Caffe implementation provided by Zlinker [12] is used. W e compute a selection of metrics for dehazing ev aluation [13], which mostly indicates how well edges are recovered or enhanced ( e [14], r [14], F ADE [15] and VSI [16]). W e perform a color analysis of the results based on a color checker , which indicates how well color are recovered (similar to [17]). W e also inv estigate ho w well DehazeNet estimates the transmission factor t ( x ) . I V . E V A L U A T I N G D E H A Z E N E T A. T r ansmission map estimation analysis DehazeNet performs an estimation of the transmission map t ( x ) . W e propose to ev aluate this directly compared to mea- sured values from the benchmark. W e also compare with the transmission map obtained by DCP under dif ferent levels of haze. W e only compared with DCP because F AST is based on a different variation of the model and CLAHE does not try to estimate the transmission map. The distance between the color checkers and the camera serves as a ground truth to compare the two considered meth- ods. Since an approximation of the scattering coefficient β is av ailable for each scene, a ground truth t ( x ) = exp( − β d ( x )) can be computed for each of those color checkers to bench- mark the considered methods. W e decided to perform the quantitativ e comparison only for lev el 5 and abov e, where the model in version permits to improv e the visibility of the scene. Levels below 5 destroy so much the scene that the transmission map, as formulated, is not relev ant. Figure 2 shows an example of the transmission maps obtained by DehazeNet and DCP for lev el 9. Already visually , we could spot differences. Fig. 2. Examples of transmission maps. a) Transmission map estimated by Dehazenet for haze lev el 9. b) T ransmission map estimated by DCP for haze lev el 9. In order to get an estimation of the transmission of the whole color checker we segment it out and take all the pixels in the region, we exclude the highest and lowest 15% values, then calculate the mean of the remaining 70%. W e do this in order to avoid the effect of noise and outliers due to bad estimations. The obtained estimation is then compared to the values obtained using the ground truth data for the scene (color checker on the back is located 7 m from the camera and the one on the table at 4.35 m from the camera). T ables I and II show the scattering coefficients ( β ) used, the transmission values obtained from both DehazeNet and DCP , along with the expected values calculated using the ground truth data, for the color checker on the back and the color checker of the table, respectiv ely . W e observe that DehazeNet clearly outperforms DCP with results fairly close to the measured ground truth. This is especially true for stronger haze. B. Color accuracy Sev eral haze-removal methods are known to make colors significantly more colorful, and most methods disreg ard the T ABLE I R E SU LT S O F T R AN S M I SS I O N E S TI M A T IO N A NA L Y SI S F O R T H E C O L OR C H EC K E R P L AC ED O N T H E BA CK O F T H E S C EN E . Haze Level β DehazeNet DCP Ground truth 5 103.69 0.559 0.133 0.484 7 83.57 0.618 0.255 0.557 9 17.84 0.855 0.617 0.883 T ABLE II R E SU LT S O F T R AN S M I SS I O N E S TI M A T IO N A NA L Y SI S F O R T H E C O L OR C H EC K E R P L AC ED O N T H E TAB L E O N T H E S C E NE . Haze Level β DehazeNet DCP Ground truth 5 103.69 0.624 0.262 0.637 7 83.57 0.700 0.432 0.695 9 17.84 0.872 0.725 0.925 color accuracy of the restored images. In addition, the color reliability is an aspect that most visibility metrics do not take into account. W e propose to in vestigate on the color accuracy of the restored images by making use of the Macbeth Color Checkers in the scene and of the haze-free reference images, which are provided in the CHIC database. Fig. 3. Labels for the 24 GretagMacbeth ColorChecker patches. W e select sev eral patches of the color checker and plot on a r g chromaticity diagram the color for the hazy image, the haze-free image, and the color restored by DehazeNet and the benchmarking algorithms. This allows us to make a comparison of the color between the different methods. In Figure 3, we present the labeled patches. W e only show the results for low densities of haze because with lev el 5 and lo wer , the restored colors hav e values close to the ones of the achromatic patches. For those le vels, visualization is meaningless. In practice, we select 8 patches (2, 6, 7, 13, 14, 16, 17, 19). These patches were chosen to cover dif ferent regions of the space. Only one of the achromatic patches were selected because their results are very similar . W e present an example of the results and its interpretation in Figure 4. W e can observe that for level 9, the restored colors are close to those of the ground truth, DehazeNet comes in second place after F AST with an average distance of 0.081 units. W e can also observe that DCP tends to over -enhance the results, as we will see later for other lev els of haze. Fig. 4. Results example of for haze le vel 9. W e can observe a similar trend for the results with DCP (in red) showing a significant over-estimation of colorfulness. In Figure 5 (b) - (c) we present, for representativ e lev els of haze, the rg chromaticity of the obtained colors for the selected patches by using DehazeNet in order to compare with those obtained using DCP , F AST and CLAHE, for a complete comparison we also include the colors of the haze-free image and the hazy image. For other levels of haze we can observe different phenom- ena: for lev el 5 the restored colors are still mostly pale grayish, so the real colors are still quite far from the ground truth, in this case DehazeNet comes in second place, only behind F AST , with an a verage distance of 0.227 units. For level 5, the restored colors by all methods seem to approach closer to those of the ground truth. In this case, DehazeNet falls behind DCP and F AST with an av erage distance of 0.153 units. Overall, DehazeNet’ s performance in regards to color ac- curacy is good, giving in most cases the first or second- closest method (most times second, since F AST normally gi v es the closest one) to the original haze-free color and without showing significant over -enhancements, unlike DCP . This is to put in relation with the e xcellent estimation of the transmission map. C. Image analysis. Model limitations El Khoury et al. [1] took advantage of the CHIC database to verify the assumptions and limitations of the Koschmieder model. Having the color checkers as reference points, they divided the scene into four different parts and, by using the known distances of the color checkers in the scene, they were able to calculate the transmission map for each part of the scene, which they would later use to obtain a ”haze-free” version of the scene as seen in Figure 6. Fig. 5. Image of the rg chromaticity for the 8 selected patches in a)the original haze-free image, b) under haze level 5, c) under haze lev el 7 and d) under haze lev el 9. Fig. 6. Evaluation of the K oschmieder model limitations under dif ferent lev els of haze. The upper row shows the original images, while the bottom row shows the dehazed versions. Note that below level 5 the color checker in the back can no longer be restored. Image reproduced from [1]. This, in a way , presents the limits of the in v ersion model itself, since the transmission is calculated using kno wn depths instead of only an estimate. So, as a validation step, we ev aluated the results of DehazeNet under dif ferent lev els of haze. Our idea was to judge visually ho w good DehazeNet performs by having the ”limit results” of the model for comparison. In Figure 7, we sho w the results obtained for all the methods. The results show an improv ement in the overall quality of the image for all the dif ferent haze le v els abo ve 3. In le vel 3 we can still see a slight improvement of the visibility particularly in the book on the left and the tennis balls on the right side of the table. W e can observe that the color checker on the back Fig. 7. Results obtained by applying the selected haze remov al methods on the selected cropped region of the scene under different lev els of haze. From top to bottom: hazy input image, DehazeNet results, DCP results, F AST results and CLAHE results. is no longer visible in the restored images of level 3 haze for any of the methods. This is coherent with the limitations of the Koschmieder model [1]. Although, when comparing with the results seen in Figure 6, there is still possibility for improvement. So we concluded that the performance of DehazeNet is on par with other state-of-the-art methods, but still constrained by the limitation of the K oschmeider model. D. Image analysis. Metrics The final comparison consists in using a set of metrics which are normally used for the quality assessment of visibility enhancement algorithms. For this comparison the following metrics were chosen based on the recommendations by El Khoury et al. [13]: • e and r : this metric compares the restored image to a reference hazy image. The e index ev aluates the ability of the method to restore edges which were not visible in the reference image but are in the restored image, while the r index is an indicator of restoration quality . The scores of these indexes refer to the gain of visibility , h i gher score means better results obtained [14]. • F ADE: Fog A ware Density Ev aluator . This is a reference- less metric. It is based on natural scene statistics. They create a model based on extracted features observed in 500 natural hazy and 500 haze-free images. By using this model the metric estimates the ”fog density” in the image, therefore lower scores represent a better restored image. It performs particularly well at assessing color recovery assessment [15]. • VSI: V isual Saliency-based Index. This is one of the few metrics that compares the restored image to the original haze-free image. It is a metric based on the assumption that an images V isual Saliency map has a close relationship with its perceptual quality . It is based on their own Saliency Detection by combining Simple Priors (SDSP) method [18] that works by integrating prior knowledge from frequency , color and location. VSI outperforms the other two metrics significantly when it comes to sharpness recov ery assessment [16]. Fig. 8. Results of the selected metrics under the different av ailable haze lev els. Note that the performance varies depending on the haze level, but DehazeNet (in red) tends to perform poorly across all metrics (note: for F ADE values lower is better). The results of using these metrics are sho wn in Figure 8, when appropriate, we include the results for the original hazy image as well. Note that we do not present the results of the e index for lev els under 5, because due to the low visibility conditions any change in the image results in very high values for all methods, which are dif ficult to compare. The results show that in general (across all the four metrics), ov er most of the different lev els of haze, DehazeNet performs worse than all the other methods. So, by considering the results of these metrics, we can conclude that DehazeNet performs poorly in terms of edge visibility and structural sharpness restoration, either being the worst or second-worst performer in the majority of cases. The results of those metrics should not be generalized to the overall e v aluation of the methods since it has been demonstrated that none of them correlate perfectly with the visual observation on this database [13]. V . C O N C L U S I O N DehazeNet performs comparable to other state of the art algorithms. W e observed better results for transmission map estimation and color accuracy , but worse for improvements in edge visibility and structural sharpness. This analysis is based only on an image across several level of one type of fog. W e nevertheless can predict that eventually , deep learning will permit to recover the parameters of the model the best possible. A OD already improv ed a lot on the Airlight estimation, if it is re-tuned for real scenes, it may become optimal. Those proposals still face the limitations of the imaging model, and in v estigations in the reformulation of the model of haze are required to create a breakthrough in performance. R E F E R E N C E S [1] J. El Khoury , “Model and quality assessment of single image dehazing, ” Ph.D. dissertation, Univ ersit ´ e de Bourgogne, 2016. [2] K. Harald, “Theorie der horizontalen sichtweite: K ontrast und sichtweite, vol. 12, ” Keim & Nemnich, Munich , 1924. [3] B. Cai, X. Xu, K. Jia, C. Qing, and D. T ao, “Dehazenet: An end-to-end system for single image haze remov al, ” IEEE T ransactions on Image Pr ocessing , vol. 25, no. 11, pp. 5187–5198, 2016. [4] B. Li, X. Peng, Z. W ang, J. Xu, and D. Feng, “ Aod-net: All-in-one dehazing network, ” in The IEEE International Conference on Computer V ision (ICCV) , Oct 2017. [5] J. El Khoury , J.-B. Thomas, and A. Mansouri, “ A color image database for haze model and dehazing methods ev aluation, ” in Image and Signal Pr ocessing , A. Mansouri, F . Nouboud, A. Chalifour , D. Mammass, J. Meunier, and A. Elmoataz, Eds. Cham: Springer International Publishing, 2016, pp. 109–117. [6] ——, “ A database with reference for image dehazing ev aluation, ” Journal of Imaging Science and T echnology , vol. 62, no. 1, 2018. [7] K. He, J. Sun, and X. T ang, “Single image haze removal using dark channel prior, ” IEEE transactions on pattern analysis and machine intelligence , vol. 33, no. 12, pp. 2341–2353, 2011. [8] J.-P . T arel and N. Hautiere, “Fast visibility restoration from a single color or gray level image, ” in Computer V ision, 2009 IEEE 12th International Confer ence on . IEEE, 2009, pp. 2201–2208. [9] Z. Xu, X. Liu, and N. Ji, “Fog removal from color images using contrast limited adaptive histogram equalization, ” in Image and Signal Pr ocessing, 2009. CISP’09. 2nd International Congr ess on . IEEE, 2009, pp. 1–5. [10] A. Krizhevsky , I. Sutskev er , and G. E. Hinton, “Imagenet classification with deep conv olutional neural networks, ” in Advances in neural infor- mation processing systems , 2012, pp. 1097–1105. [11] N. Silberman, D. Hoiem, P . Kohli, and R. Fergus, “Indoor se gmentation and support inference from rgbd images, ” in Eur opean Conference on Computer V ision . Springer , 2012, pp. 746–760. [12] “zlinker - dehazenet, ” https://github.com/zlinker/DehazeNet, accessed: 2017-12-05. [13] J. El Khoury , S. Le Moan, J.-B. Thomas, and A. Mansouri, “Color and sharpness assessment of single image dehazing, ” Multimedia T ools and Applications , Sep 2017. [Online]. A vailable: https: //doi.org/10.1007/s11042- 017- 5122- y [14] N. Hauti ` ere, J.-P . T arel, D. Aubert, and E. Dumont, “Blind contrast enhancement assessment by gradient ratioing at visible edges, ” Image Analysis & Ster eology , vol. 27, no. 2, pp. 87–95, 2011. [15] L. K. Choi, J. Y ou, and A. C. Bovik, “Referenceless prediction of per- ceptual fog density and perceptual image defogging, ” IEEE Tr ansactions on Image Processing , vol. 24, no. 11, pp. 3888–3901, 2015. [16] L. Zhang, Y . Shen, and H. Li, “Vsi: A visual saliency-induced index for perceptual image quality assessment, ” IEEE Tr ansactions on Image Pr ocessing , vol. 23, no. 10, pp. 4270–4281, 2014. [17] J. El Khoury , J.-B. Thomas, and A. Mansouri, “Haze and con ver gence models: Experimental comparison, ” in AIC 2015 , T okyo, Japan, May 2015. [Online]. A vailable: https://hal.archives- ouv ertes.fr/hal- 01202989 [18] L. Zhang, Z. Gu, and H. Li, “Sdsp: A novel saliency detection method by combining simple priors, ” in Image Processing (ICIP), 2013 20th IEEE International Confer ence on . IEEE, 2013, pp. 171–175.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment