Prototype of a multi-host type DAQ front-end system for RI-beam experiments

A multi-host type DAQ front-end system is proposed, and a prototype has been developed. In general, CAMAC/VME-type ADC modules have a single trigger-input (or gate-input) port. In contrast, the prototype of the new system has multiple trigger-input ports. In addition, Wilkinson-type and successive-approximation ADCs incur dead time, whereas this prototype combines a flash ADC with an FPGA, which enables a dead-time-free system. Data are sent to different back-end systems according to the trigger-input port that fired. Thus, a legacy ADC module is a 1-to-1 system, whereas the proposed system is a lossless 1-to-X system. The system will be applied to nuclear physics experiments at the RIKEN RIBF, which produces intense RI beams. This multi-host type DAQ front-end system makes it possible to perform different experiments simultaneously on the same beam line. In this contribution, the concept and the performance-test results of the front-end system are presented.

💡 Research Summary

The paper presents a prototype front‑end data‑acquisition (DAQ) system designed for experiments with intense radioactive‑ion (RI) beams at the RIKEN Radioactive Isotope Beam Factory (RIBF). Conventional CAMAC or VME ADC modules typically provide a single trigger (or gate) input, which limits them to a one‑to‑one relationship with a downstream data‑receiver. Moreover, traditional Wilkinson‑type and successive‑approximation ADCs suffer from dead time during conversion, making them unsuitable for high‑rate, multi‑detector environments.

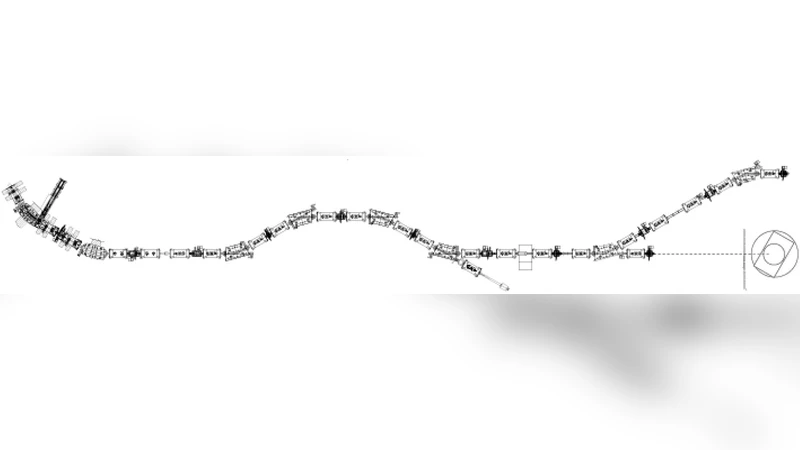

To overcome these limitations, the authors develop a “multi‑host” DAQ front‑end that accepts multiple independent trigger inputs (four LVDS ports in the prototype) and routes the resulting digitized data to several back‑end systems simultaneously. The core of the system combines a high‑speed flash ADC (≈500 MS/s, 12‑bit) with a Xilinx Kintex‑7 FPGA. The flash ADC provides continuous, dead‑time‑free sampling, while the FPGA handles trigger decoding, timestamp generation, packetization, and parallel data streaming over a PCIe Gen3 x8 interface (8 GT/s). Each trigger channel is assigned its own FIFO buffer inside the FPGA, ensuring that simultaneous events do not cause data loss.
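The per-trigger FIFO idea can be illustrated with a small software model. This is only a sketch under stated assumptions, not the authors' firmware: the function name `route`, the window length `WINDOW`, and the event layout are hypothetical, and the real routing happens in FPGA logic rather than in Python. The point it shows is that each trigger port copies its own window out of the continuously sampled stream, so two ports firing on the same sample do not compete for a shared buffer.

```python
from collections import deque

NUM_PORTS = 4   # four trigger inputs, as in the prototype
WINDOW = 4      # samples captured per trigger (hypothetical value)


def route(samples, triggers):
    """Toy model of per-port routing.

    samples:  list of ADC values from a continuously running sampler.
    triggers: list of (sample_index, port) pairs.
    Returns one FIFO (deque) per port; each entry is (sample_index, window).
    """
    fifos = [deque() for _ in range(NUM_PORTS)]
    for index, port in triggers:
        # Each port takes its own copy of the window, so simultaneous
        # or overlapping triggers on other ports are unaffected.
        window = tuple(samples[index:index + WINDOW])
        fifos[port].append((index, window))
    return fifos


samples = list(range(100, 120))
# Ports 0 and 3 fire on the same sample; port 1 overlaps their window.
triggers = [(2, 0), (2, 3), (3, 1), (10, 0)]
fifos = route(samples, triggers)
```

In this model, `fifos[0]` and `fifos[3]` both hold the window starting at sample 2, which is the software analogue of the 1-to-X behaviour described above.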

The hardware is packaged in a VME64x‑compatible board, allowing straightforward integration into existing experimental infrastructures that already rely on EPICS for control and monitoring. Firmware implements a four‑stage pipeline: (1) trigger detection and ID assignment, (2) immediate start of flash‑ADC sampling, (3) formation of 64‑bit data words with embedded trigger ID and nanosecond‑resolution timestamps, and (4) DMA‑driven transmission to multiple back‑end computers.
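Stage (3) of the pipeline can be sketched as a bit-packing routine. The summary gives only the word width (64 bits) and the fact that a trigger ID and a timestamp are embedded; the field widths below (4-bit trigger ID, 48-bit timestamp, 12-bit sample) and the names `pack_word` and `unpack_word` are assumptions chosen for illustration, not the actual word format.

```python
# Hypothetical field layout, most significant bits first:
# [ trigger ID: 4 | timestamp (ns): 48 | ADC sample: 12 ]  = 64 bits
TRIG_BITS, TS_BITS, ADC_BITS = 4, 48, 12


def pack_word(trigger_id, timestamp_ns, sample):
    """Pack the three fields into one 64-bit integer."""
    if not 0 <= trigger_id < (1 << TRIG_BITS):
        raise ValueError("trigger_id out of range")
    if not 0 <= timestamp_ns < (1 << TS_BITS):
        raise ValueError("timestamp_ns out of range")
    if not 0 <= sample < (1 << ADC_BITS):
        raise ValueError("sample out of range")
    return (
        (trigger_id << (TS_BITS + ADC_BITS))
        | (timestamp_ns << ADC_BITS)
        | sample
    )


def unpack_word(word):
    """Inverse of pack_word: return (trigger_id, timestamp_ns, sample)."""
    sample = word & ((1 << ADC_BITS) - 1)
    timestamp_ns = (word >> ADC_BITS) & ((1 << TS_BITS) - 1)
    trigger_id = word >> (TS_BITS + ADC_BITS)
    return trigger_id, timestamp_ns, sample


word = pack_word(3, 123456789, 4095)
fields = unpack_word(word)
```

A back-end receiving such words could demultiplex events by trigger ID and order them by timestamp without any other side-channel information, which is presumably why both fields travel inside each word.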

Performance tests demonstrate that the prototype achieves an average latency of less than 10 ns from trigger arrival to data availability, and it sustains input rates exceeding 1 GHz without any observable packet loss. In a multi‑trigger scenario where four independent triggers fire at 250 MHz each, the system maintains stable throughput well within the PCIe bandwidth, confirming the feasibility of the 1‑to‑X architecture. Power consumption is measured at roughly 30 W, about 20 % lower than comparable legacy VME ADC modules.
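As a rough plausibility check on the bandwidth statement, the raw ADC output rate can be compared with the nominal PCIe Gen3 x8 link rate. The sketch below uses only the figures quoted above (about 500 MS/s, 12 bits, 8 GT/s, 8 lanes) plus the standard 128b/130b line encoding of PCIe Gen3; it ignores packet and protocol overhead, and the worst case of four hosts each receiving every sample is an assumption made for the estimate, not a measured configuration.

```python
SAMPLE_RATE = 500e6      # samples per second (approximate, from the text)
BITS_PER_SAMPLE = 12
LANES = 8
TRANSFERS_PER_S = 8e9    # 8 GT/s per lane (PCIe Gen3)
ENCODING = 128 / 130     # PCIe Gen3 line-encoding efficiency
NUM_HOSTS = 4            # assumed worst case: every host gets every sample


def adc_rate_bytes(sample_rate=SAMPLE_RATE, bits=BITS_PER_SAMPLE):
    """Raw ADC output in bytes per second, with no packing overhead."""
    return sample_rate * bits / 8


def pcie_rate_bytes(lanes=LANES, rate=TRANSFERS_PER_S, encoding=ENCODING):
    """Nominal link rate in bytes per second, before protocol overhead."""
    return lanes * rate * encoding / 8


adc = adc_rate_bytes()               # 0.75 GB/s
link = pcie_rate_bytes()             # about 7.88 GB/s
worst_case = NUM_HOSTS * adc         # 3.0 GB/s
headroom = link / worst_case
```

Under these assumptions the raw stream fits the link with a factor of roughly 2.6 to spare even when duplicated to four hosts. Wrapping every 12-bit sample in its own 64-bit word would change that picture, so the margin depends on how samples are packed.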

Software integration is achieved through an EPICS Input/Output Controller (IOC) that exposes each trigger channel as a process variable, enabling operators to monitor, record, or forward data streams in real time using familiar control‑system tools.

The authors argue that this multi‑host DAQ front‑end enables truly simultaneous experiments on a single beam line, dramatically improving beam utilization at high‑intensity facilities. Future work includes expanding the number of trigger ports (up to 8–16), adding 100 GbE networking for higher aggregate bandwidth, and embedding machine‑learning based event filtering directly in the FPGA fabric.

In conclusion, the prototype validates the concept of a dead‑time‑free, multi‑trigger, multi‑host DAQ front‑end. By delivering parallel, loss‑free data streams to multiple back‑ends, it offers a scalable solution for the increasingly complex and high‑rate environments encountered in modern nuclear‑physics experiments.
