A Tunable Particle Swarm Size Optimization Algorithm for Feature Selection

Feature selection is the process of identifying the statistically most relevant features in order to improve the predictive capability of classifiers. To find the best feature subsets, population-based approaches such as Particle Swarm Optimization (PSO) and genetic algorithms are widely employed. However, it is generally observed that not having the right set of particles in the swarm may result in sub-optimal solutions, which reduces classifier accuracy. To address this issue, we propose a novel tunable swarm size approach that reconfigures the particles in a standard PSO in real time, based on the data set. The proposed algorithm is named the Tunable Particle Swarm Size Optimization Algorithm (TPSO). It is a wrapper-based approach in which an Alternating Decision Tree (ADT) classifier is used to identify an influential feature subset, which is further evaluated by a new objective function that integrates Classification Accuracy (CA) with a modified F-Score to ensure better classification accuracy over varying population sizes. Experimental studies on benchmark data sets, together with the Wilcoxon statistical test, show that the proposed algorithm (TPSO) is efficient in identifying optimal feature subsets that improve the classification accuracy of base classifiers compared with their standalone form.

💡 Research Summary

The paper addresses the well‑known challenge of feature selection in high‑dimensional data, where redundant or noisy attributes can degrade classifier performance and cause over‑fitting. While population‑based meta‑heuristics such as Particle Swarm Optimization (PSO) and Genetic Algorithms (GA) have been widely applied to this problem, the authors point out that the size of the PSO swarm (i.e., the number of particles) is a critical yet often overlooked parameter. A swarm that is too small tends to become trapped in local minima, whereas an excessively large swarm slows convergence and increases computational cost.

To overcome this limitation, the authors propose the Tunable Particle Swarm Size Optimization (TPSO) algorithm. TPSO dynamically adjusts the swarm size during the search process based on the characteristics of the data set, rather than fixing it a priori. The method is implemented as a wrapper: for each candidate feature subset generated by PSO, an Alternating Decision Tree (ADT) classifier evaluates the subset’s predictive power. The ADT is chosen because it combines decision and prediction nodes, aggregates contributions from all true paths, and has been shown to be robust against over‑fitting compared with traditional decision trees.
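The wrapper step described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes each PSO particle is encoded as a binary mask over the features, and `evaluate_subset` and `fit_predict` are hypothetical names. `fit_predict` stands in for the ADT classifier (or any other classifier) and is supplied by the caller.

```python
def evaluate_subset(mask, X_train, y_train, X_test, y_test, fit_predict):
    """Score one candidate feature subset with a wrapped classifier.

    mask        : sequence of 0/1 flags, one per feature (assumed particle encoding)
    fit_predict : hypothetical callable (X_train, y_train, X_test) -> predicted labels;
                  a stand-in for the ADT classifier named in the summary
    Returns the fraction of test samples classified correctly.
    """
    cols = [j for j, keep in enumerate(mask) if keep]
    if not cols:
        # An empty subset cannot be classified; score it as worst case (assumption).
        return 0.0

    def project(rows):
        # Keep only the selected feature columns.
        return [[row[j] for j in cols] for row in rows]

    predictions = fit_predict(project(X_train), y_train, project(X_test))
    correct = sum(p == t for p, t in zip(predictions, y_test))
    return correct / len(y_test)
```

Passing the classifier in as a callable keeps the sketch independent of any particular ADT implementation; the returned accuracy is what the search would use as its accuracy term.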

A novel feature discrimination score is introduced to replace the conventional mean‑based metric. The new score uses the median of each feature for the positive and negative classes, which makes it less sensitive to skewed distributions. For feature i, the score is defined as

F(i) = V₁ / V₂

where V₁ captures the absolute difference between class‑specific medians and V₂ aggregates the within‑class variance using median‑based deviations. The sums of these scores over the selected subset (M₁) and over the whole feature set (M₂) are then combined with the ADT accuracy (A) in a balanced fitness function:

V = 0.5 · A + 0.5 · (M₁ / M₂).

This formulation simultaneously rewards high classification accuracy and strong discriminative power of the retained features.
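To make the score and the fitness function concrete, here is a small sketch. The summary does not give the exact form of V₂, so the code assumes it is the sum of the two classes' median absolute deviations; that choice, the zero-denominator handling, and the function names `median_f_score` and `fitness` are illustrative assumptions rather than the paper's definitions.

```python
from statistics import median


def median_f_score(pos, neg):
    """Median-based discrimination score F(i) = V1 / V2 for one feature.

    pos, neg : values of the feature in the positive / negative class.
    V1 = |median(pos) - median(neg)|
    V2 = MAD(pos) + MAD(neg)   (assumed form; the source only says
                                "median-based deviations")
    """
    m_pos, m_neg = median(pos), median(neg)
    v1 = abs(m_pos - m_neg)
    v2 = (median([abs(x - m_pos) for x in pos])
          + median([abs(x - m_neg) for x in neg]))
    # Returning 0 for a zero denominator is an assumption of this sketch.
    return v1 / v2 if v2 > 0 else 0.0


def fitness(accuracy, selected, scores):
    """V = 0.5 * A + 0.5 * (M1 / M2), as stated in the summary.

    accuracy : classifier accuracy A in [0, 1]
    selected : indices of the features in the candidate subset
    scores   : F(i) for every feature in the full set
    """
    m1 = sum(scores[i] for i in selected)   # score mass of the subset
    m2 = sum(scores)                        # score mass of all features
    ratio = m1 / m2 if m2 > 0 else 0.0
    return 0.5 * accuracy + 0.5 * ratio
```

For example, a feature with positive-class values `[1, 2, 3]` and negative-class values `[5, 6, 7]` has medians 2 and 6 and a deviation of 1 in each class, so under these assumptions its score is 4 / 2 = 2.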

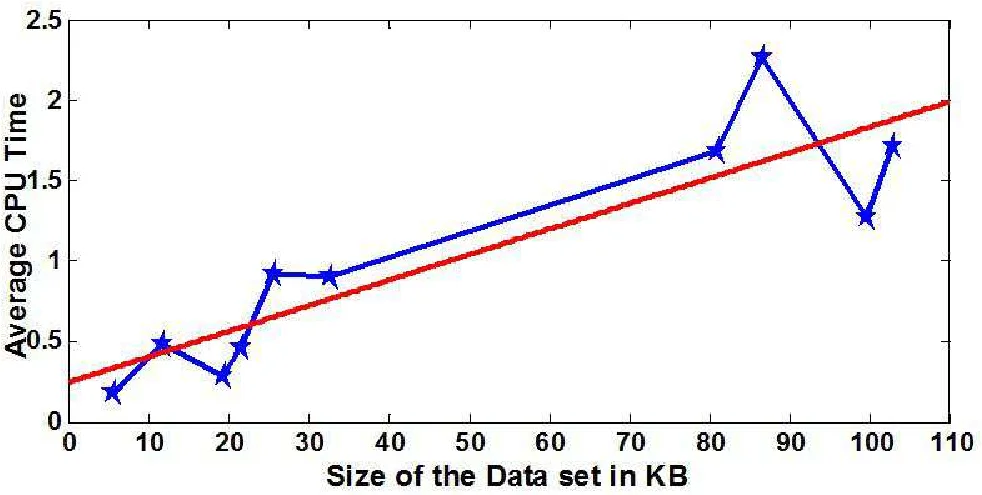

The TPSO procedure proceeds as follows: (1) the data set is partitioned using stratified k‑fold cross‑validation; (2) for each training fold, the swarm size is initialized to N = 5 and PSO searches for an optimal subset; (3) the ADT built on this subset provides a test accuracy Bᵢ, which serves as the accuracy term A in the fitness function, while the new discrimination scores yield M₁ and M₂; (4) the fitness Vᵢ is computed from these quantities; (5) the swarm size is incremented by one and the process repeats. The loop terminates when a local maximum is detected by examining the first and second derivatives of the relationship between swarm size (y) and discrimination score (x): the algorithm stops when dy/dx shows a decreasing trend and the second derivative is negative, indicating a peak.
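The outer loop over swarm sizes can be sketched as below. The summary's description of the derivative test is terse, so this sketch substitutes discrete first and second differences of the fitness V over successive swarm sizes. The function name `tune_swarm_size`, the `evaluate` callable (which would run PSO with a given swarm size and return its fitness), and the `max_size` cap are all assumptions made for illustration.

```python
def tune_swarm_size(evaluate, start=5, max_size=50):
    """Grow the swarm one particle at a time until fitness peaks.

    evaluate : hypothetical callable, swarm size -> fitness V
    start    : initial swarm size (N = 5 in the summary)
    max_size : safety cap on the swarm size (assumption, not from the source)
    Returns (best_swarm_size, best_fitness).
    """
    sizes, values = [], []
    for n in range(start, max_size + 1):
        sizes.append(n)
        values.append(evaluate(n))
        if len(values) >= 3:
            d_prev = values[-2] - values[-3]   # slope going into the candidate peak
            d_last = values[-1] - values[-2]   # slope coming out of it
            second = d_last - d_prev           # discrete second difference
            # Rising, then flat or falling, with negative curvature: a local maximum.
            if d_prev > 0 and d_last <= 0 and second < 0:
                return sizes[-2], values[-2]
    # No peak detected before the cap: fall back to the best size seen.
    best = max(range(len(values)), key=values.__getitem__)
    return sizes[best], values[best]
```

Stopping at the first detected peak keeps the number of PSO runs small, at the cost of possibly missing a higher maximum at a larger swarm size.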

Experimental validation was performed on ten benchmark data sets, including hyperspectral imagery, gene expression, and text classification problems. TPSO’s performance was compared against standard PSO‑ADT, GA‑ADT, and several filter‑based methods. Results show that TPSO consistently achieves higher classification accuracy (typically 2–5 percentage points improvement) while selecting substantially fewer features (often a 30 % reduction). Statistical significance was confirmed using the Wilcoxon signed‑rank test at the 95 % confidence level. Convergence analysis revealed that TPSO reaches a stable solution within 20–30 generations regardless of the initial swarm size, demonstrating robustness of the dynamic sizing mechanism.

In conclusion, the paper contributes a practical enhancement to PSO‑based feature selection by introducing a data‑driven, tunable swarm size and a median‑based discrimination metric. The integration with ADT yields a balanced trade‑off between predictive accuracy and feature reduction. The authors suggest future work on extending the tunable swarm concept to other evolutionary algorithms, exploring adaptive increment strategies, and applying TPSO to streaming or real‑time data environments.
