The challenge of realistic music generation: modelling raw audio at scale

Realistic music generation is a challenging task. When building generative models of music that are learnt from data, typically high-level representations such as scores or MIDI are used that abstract away the idiosyncrasies of a particular performan…

Authors: Sander Dieleman, Aäron van den Oord, Karen Simonyan

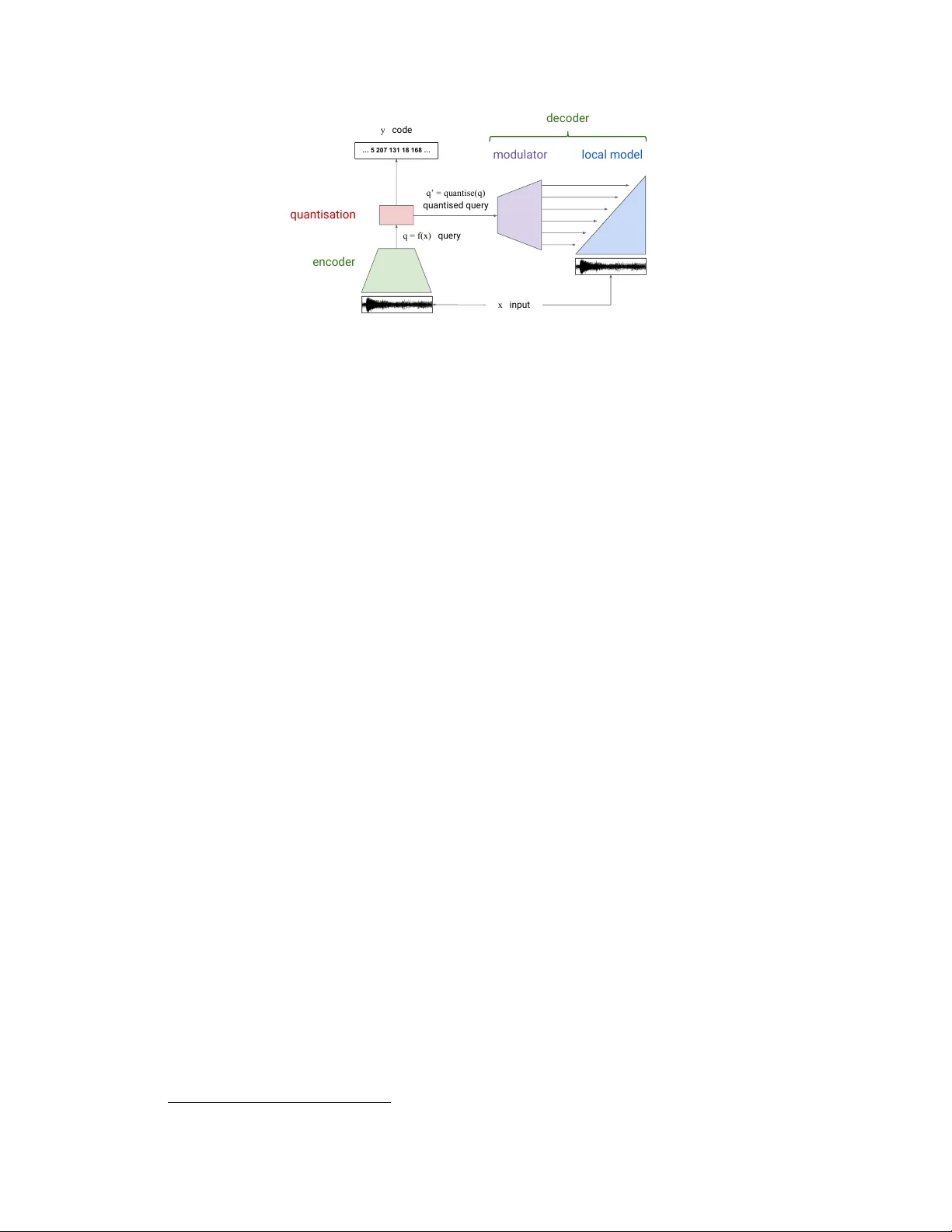

Sander Dieleman, Aäron van den Oord, Karen Simonyan
DeepMind, London, UK
{sedielem,avdnoord,simonyan}@google.com

Abstract

Realistic music generation is a challenging task. When building generative models of music that are learnt from data, typically high-level representations such as scores or MIDI are used that abstract away the idiosyncrasies of a particular performance. But these nuances are very important for our perception of musicality and realism, so in this work we embark on modelling music in the raw audio domain. It has been shown that autoregressive models excel at generating raw audio waveforms of speech, but when applied to music, we find them biased towards capturing local signal structure at the expense of modelling long-range correlations. This is problematic because music exhibits structure at many different timescales. In this work, we explore autoregressive discrete autoencoders (ADAs) as a means to enable autoregressive models to capture long-range correlations in waveforms. We find that they allow us to unconditionally generate piano music directly in the raw audio domain, which shows stylistic consistency across tens of seconds.

1 Introduction

Music is a complex, highly structured sequential data modality. When rendered as an audio signal, this structure manifests itself at various timescales, ranging from the periodicity of the waveforms at the scale of milliseconds, all the way to the musical form of a piece of music, which typically spans several minutes. Modelling all of the temporal correlations in the sequence that arise from this structure is challenging, because they span many different orders of magnitude.

There has been significant interest in computational music generation for many decades [11, 20]. More recently, deep learning and modern generative modelling techniques have been applied to this task [5, 7].
Although music can be represented as a waveform, we can represent it more concisely by abstracting away the idiosyncrasies of a particular performance. Almost all of the work in music generation so far has focused on such symbolic representations: scores, MIDI¹ sequences and other representations that remove certain aspects of music to varying degrees.

The use of symbolic representations comes with limitations: the nuances abstracted away by such representations are often musically quite important and greatly impact our enjoyment of music. For example, the precise timing, timbre and volume of the notes played by a musician do not correspond exactly to those written in a score. While these variations may be captured symbolically for some instruments (e.g. the piano, where we can record the exact timings and intensities of each key press [41]), this is usually very difficult and impractical for most instruments. Furthermore, symbolic representations are often tailored to particular instruments, which reduces their generality and implies that a lot of work is required to apply existing modelling techniques to new instruments.

¹ Musical Instrument Digital Interface.

Preprint. Work in progress.

1.1 Raw audio signals

To overcome these limitations, we can model music in the raw audio domain instead. While digital representations of audio waveforms are still lossy to some extent, they retain all the musically relevant information. Models of audio waveforms are also much more general and can be applied to recordings of any set of instruments, or non-musical audio signals such as speech. That said, modelling musical audio signals is much more challenging than modelling symbolic representations, and as a result, this domain has received relatively little attention.
Building generative models of waveforms that capture musical structure at many timescales requires high representational capacity, distributed effectively over the various musically-relevant timescales. Previous work on music modelling in the raw audio domain [10, 13, 31, 43] has shown that capturing local structure (such as timbre) is feasible, but capturing higher-level structure has proven difficult, even for models that should be able to do so in theory because their receptive fields are large enough.

1.2 Generative models of raw audio signals

Models that are capable of generating audio waveforms directly (as opposed to some other representation that can be converted into audio afterwards, such as spectrograms or piano rolls) are only recently starting to be explored. This was long thought to be infeasible due to the scale of the problem, as audio signals are often sampled at rates of 16 kHz or higher.

Recent successful attempts rely on autoregressive (AR) models: WaveNet [43], VRNN [10], WaveRNN [23] and SampleRNN [31] predict digital waveforms one timestep at a time. WaveNet is a convolutional neural network (CNN) with dilated convolutions [47], WaveRNN and VRNN are recurrent neural networks (RNNs) and SampleRNN uses a hierarchy of RNNs operating at different timescales. For a sequence $x_t$ with $t = 1, \ldots, T$, they model the distribution as a product of conditionals:

$$p(x_1, x_2, \ldots, x_T) = p(x_1) \cdot p(x_2 \mid x_1) \cdot p(x_3 \mid x_1, x_2) \cdot \ldots = \prod_t p(x_t \mid x_{<t})$$
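The product-of-conditionals factorisation described above can be illustrated with a small sketch. It is not the architecture of WaveNet, SampleRNN or any other cited model: `toy_conditional` is a hypothetical hand-written stand-in for a learned network, and the 4-level quantisation is an assumption chosen only to keep the example small. The sketch shows how the log-likelihood of a sequence is accumulated one conditional at a time, how sampling feeds each generated timestep back into the model, and how many sequential predictions the 16 kHz sampling rate mentioned in the text implies.

```python
import math
import random

SAMPLE_RATE_HZ = 16_000  # sampling rate mentioned in the text
NUM_LEVELS = 4           # toy quantisation (assumption, chosen for brevity)


def toy_conditional(history):
    """Hypothetical stand-in for p(x_t | x_<t).

    Returns NUM_LEVELS probabilities. It simply favours repeating the
    previous sample; a real model would be a trained neural network.
    """
    if not history:
        return [1.0 / NUM_LEVELS] * NUM_LEVELS
    probs = [0.1] * NUM_LEVELS
    probs[history[-1]] = 1.0 - 0.1 * (NUM_LEVELS - 1)
    return probs


def log_likelihood(sequence):
    """Chain rule: log p(x_1..x_T) = sum over t of log p(x_t | x_<t)."""
    total = 0.0
    for t, x_t in enumerate(sequence):
        total += math.log(toy_conditional(sequence[:t])[x_t])
    return total


def sample(num_steps, rng):
    """Ancestral sampling: draw one timestep at a time, feeding samples back."""
    sequence = []
    for _ in range(num_steps):
        probs = toy_conditional(sequence)
        sequence.append(rng.choices(range(NUM_LEVELS), weights=probs)[0])
    return sequence


def steps_for(seconds, sample_rate_hz=SAMPLE_RATE_HZ):
    """Number of sequential predictions needed for `seconds` of audio."""
    return int(seconds * sample_rate_hz)


if __name__ == "__main__":
    rng = random.Random(0)
    generated = sample(8, rng)
    print("sampled sequence:", generated)
    print("log-likelihood:", log_likelihood(generated))
    print("timesteps for 10 s of audio:", steps_for(10))
```

Because each step conditions on everything generated so far, sampling is inherently sequential: ten seconds of audio at 16 kHz already requires 160,000 predictions, which gives a sense of the scale of the problem described above.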
