Independent Deeply Learned Matrix Analysis for Multichannel Audio Source Separation

In this paper, we address a multichannel audio source separation task and propose a new efficient method called independent deeply learned matrix analysis (IDLMA). IDLMA estimates the demixing matrix in a blind manner and updates the time-frequency s…

Authors: Shinichi Mogami, Hayato Sumino, Daichi Kitamura

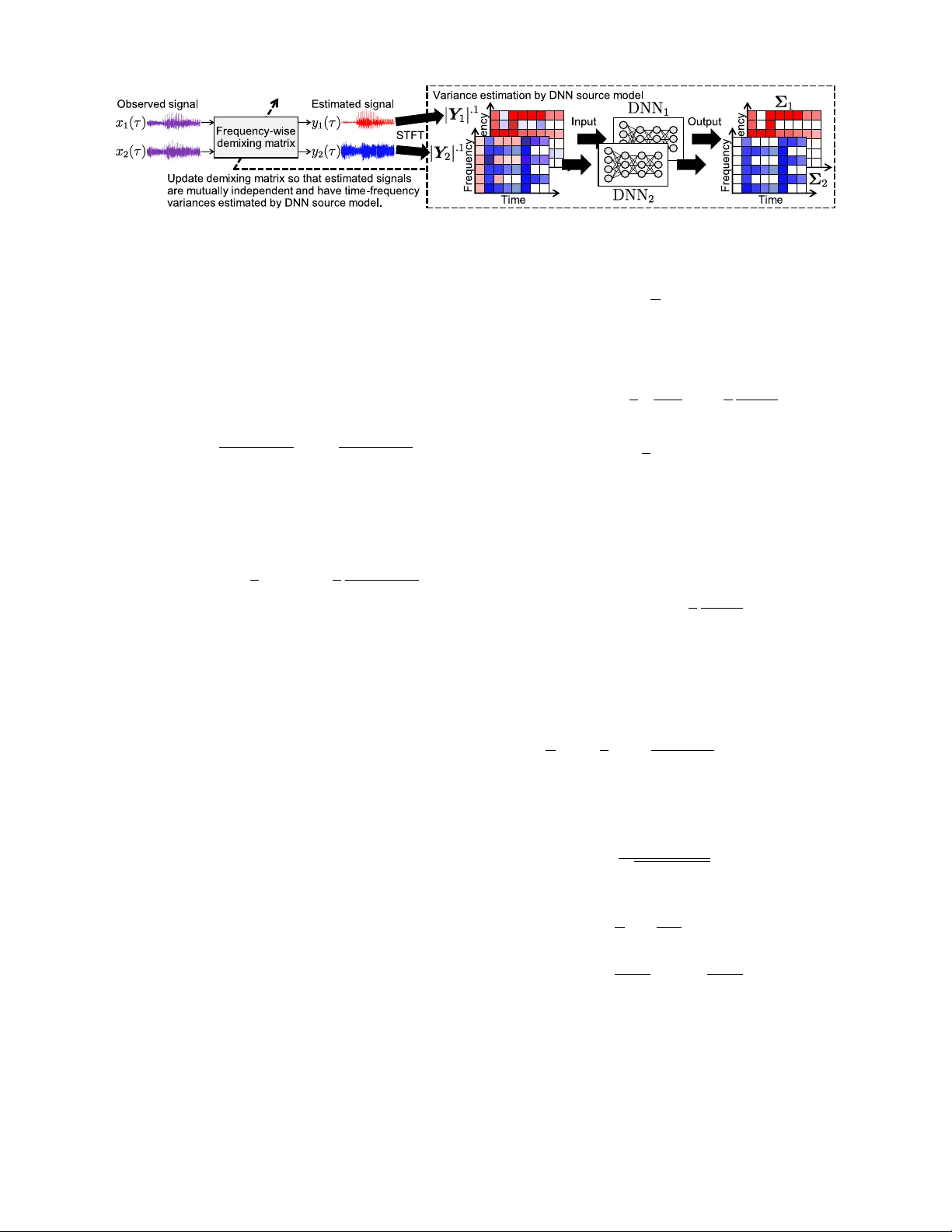

Shinichi Mogami, Hayato Sumino, Daichi Kitamura, Norihiro Takamune, Shinnosuke Takamichi, Hiroshi Saruwatari (The University of Tokyo); Nobutaka Ono (Tokyo Metropolitan University)

Abstract: In this paper, we address a multichannel audio source separation task and propose a new efficient method called independent deeply learned matrix analysis (IDLMA). IDLMA estimates the demixing matrix in a blind manner and updates the time-frequency structures of each source using a pretrained deep neural network (DNN). Also, we introduce a complex Student's t-distribution as a generalized source generative model including both complex Gaussian and Cauchy distributions. Experiments are conducted using music signals with a training dataset, and the results show the validity of the proposed method in terms of separation accuracy and computational cost.

Index Terms: multichannel audio source separation, independent component analysis, deep neural networks

I. INTRODUCTION

Blind source separation (BSS) is a technique for extracting specific sources from an observed multichannel mixture signal without knowing a priori information about the mixing system. The most commonly used algorithm for BSS in the (over)determined case (number of microphones ≥ number of sources) is independent component analysis (ICA) [1]. Recently, independent low-rank matrix analysis (ILRMA) [2], [3], which is a unification of independent vector analysis (IVA) [4] and nonnegative matrix factorization (NMF) [5], was proposed as a state-of-the-art BSS method. ILRMA assumes both statistical independence between sources and a low-rank time-frequency structure for each source, and the frequency-wise demixing matrices are estimated without encountering the permutation problem.
The source generative model assumed in ILRMA was generalized from a complex Gaussian distribution [2] to a complex Student's t-distribution (t-ILRMA) [6] for more robust BSS. As a more general framework, demixing matrix optimization based on a given spectrogram estimate for the source was proposed in [7], showing that a precise source spectrogram model enables accurate spatial model estimation.

In the underdetermined case (number of microphones < number of sources), the Duong model [8] is a commonly used framework. In the Duong model, frequency-wise spatial covariances, which encode source locations and their spatial spreads, are estimated by an expectation-maximization (EM) algorithm, where the permutation problem must be solved after the optimization. Similarly to ILRMA, an NMF-based low-rank assumption is employed in the Duong model to automatically solve the permutation problem, resulting in multichannel NMF (MNMF) [9], [10]. Note that these algorithms formulate a mixing model, whereas ICA-based methods including ILRMA estimate a demixing model for the separation by focusing only on the determined case. It has been experimentally confirmed that the optimization of a demixing model is more efficient and numerically stable than that of a mixing model [2].

In supervised (informed) source separation, deep neural networks (DNNs) have shown promising performance in both single-channel [11] and multichannel source separation [12]. In fact, when sufficient data of the audio sources are available, a DNN can effectively model their time-frequency structures. However, it is almost impossible to compose an appropriate and generalized spatial model with a DNN from training data observed in a multichannel format. This is because the spatial model depends on many factors, including source and microphone locations, the recording room, and reverberation. Therefore, it is reasonable to combine a pretrained DNN source model and a blind estimation of the spatial model.
Nugraha et al. proposed a DNN-based multichannel source separation framework [13] using the Duong model (hereafter referred to as Duong+DNN). Although this is a convincing approach, a large computational cost is required to estimate the spatial covariance (the EM algorithm in the Duong model) and the performance is not satisfactory owing to the difficulty of parameter optimization.

In this paper, we unify the ICA-based blind estimation of the demixing matrix and the DNN-based supervised update of the source spectrogram model. In the proposed method, we introduce a complex Student's t-distribution as a generalized source generative model, and the demixing matrix (spatial model) is efficiently optimized using a majorization-minimization (MM) algorithm [14]. Since the proposed method utilizes a time-frequency spectrogram matrix estimated by DNN to optimize the spatial model, we call this method independent deeply learned matrix analysis (IDLMA). Table I shows the relationship between the existing and proposed methods. The spatial model is blindly estimated in all the methods, while the source spectrogram model is estimated by DNN in Duong+DNN and the proposed IDLMA.

TABLE I. CLASSIFICATION OF MULTICHANNEL SOURCE SEPARATION METHODS

| | Source spectrogram model: Blind | Source spectrogram model: Supervised |
|---|---|---|
| Mixing model | MNMF [10], [15] | Duong+DNN [13] |
| Demixing model | ILRMA [2], [6] | Proposed IDLMA |

II. CONVENTIONAL METHOD

A. Formulation

Let $N$ and $M$ be the numbers of sources and channels, respectively. The short-time Fourier transforms (STFTs) of the multichannel source, observed, and estimated signals are defined as $s_{ij} = (s_{ij1}, \ldots, s_{ijN})^{\top}$, $x_{ij} = (x_{ij1}, \ldots, x_{ijM})^{\top}$, and $y_{ij} = (y_{ij1}, \ldots, y_{ijN})^{\top}$, where $i = 1, \ldots, I$; $j = 1, \ldots, J$; $n = 1, \ldots, N$; and $m = 1, \ldots, M$ are the integer indices of the frequency bins, time frames, sources, and channels, respectively, and $^{\top}$ denotes the transpose. We also denote these spectrograms as $S_n \in \mathbb{C}^{I \times J}$, $X_m \in \mathbb{C}^{I \times J}$, and $Y_n \in \mathbb{C}^{I \times J}$, whose elements are $s_{ijn}$, $x_{ijm}$, and $y_{ijn}$, respectively. In ILRMA, the following mixing system is assumed:

$$x_{ij} = A_i s_{ij}, \tag{1}$$

where $A_i = (a_{i1}, \ldots, a_{iN}) \in \mathbb{C}^{M \times N}$ is a frequency-wise mixing matrix and $a_{in}$ is the steering vector for the $n$th source. The assumption of the mixing system (1) corresponds to restricting the spatial covariance in the Duong model to a rank-1 matrix [8]. When $M = N$ and $A_i$ is not a singular matrix, the estimated signal $y_{ij}$ can be represented as

$$y_{ij} = W_i x_{ij}, \tag{2}$$

where $W_i = A_i^{-1} = (w_{i1}, \ldots, w_{iN})^{\mathsf{H}}$ is the demixing matrix, $w_{in}$ is the demixing filter for the $n$th source, and $^{\mathsf{H}}$ denotes the Hermitian transpose. ILRMA estimates both $W_i$ and $y_{ij}$ from only the observation $x_{ij}$, assuming statistical independence between $s_{ijn}$ and $s_{ijn'}$, where $n \neq n'$.

B. ILRMA and Its Generalization with Student's t-distribution

In [2], [3], the following time-frequency-varying complex Gaussian source generative model is assumed (hereafter referred to as Gauss-ILRMA):

$$\prod_{i,j} p(y_{ijn}) = \prod_{i,j} \frac{1}{\pi \sigma_{ijn}^{2}} \exp\left( -\frac{|y_{ijn}|^{2}}{\sigma_{ijn}^{2}} \right), \tag{3}$$

$$\sigma_{ijn}^{2} = \sum_{k} t_{ikn} v_{kjn}, \tag{4}$$

where $\sigma_{ijn}$ is the variance (source spectrogram model), $k = 1, \ldots, K$ is the index of the bases, and $t_{ikn}$ and $v_{kjn}$ are the parameters in the NMF-based low-rank model. We also denote the variance matrix as $\Sigma_n \in \mathbb{R}_{\geq 0}^{I \times J}$, whose elements are $\sigma_{ijn}$. In t-ILRMA [6], (3) is generalized to a complex Student's t-distribution as follows:

$$\prod_{i,j} p(y_{ijn}) = \prod_{i,j} \frac{1}{\pi \sigma_{ijn}^{2}} \left( 1 + \frac{2}{\nu} \frac{|y_{ijn}|^{2}}{\sigma_{ijn}^{2}} \right)^{-\frac{2+\nu}{2}}, \tag{5}$$

$$\sigma_{ijn}^{p} = \sum_{k} t_{ikn} v_{kjn}, \tag{6}$$

where $\nu$ is the degree-of-freedom parameter and $p$ is the domain parameter. When $\nu \to \infty$ and $p = 2$, (5) and (6) become (3) and (4), respectively.
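The limiting behavior of (5) can be checked numerically. The following is a minimal NumPy sketch, not code from the paper: the function names `gauss_nll` and `student_t_nll` and the sample values of `y` and `sigma` are illustrative assumptions. It evaluates the per-bin negative log-likelihoods implied by (3) and (5) and prints their gap as $\nu$ grows.

```python
import numpy as np

def gauss_nll(y, sigma):
    """Per-bin negative log-likelihood of the complex Gaussian model (3)."""
    return np.log(np.pi * sigma**2) + np.abs(y)**2 / sigma**2

def student_t_nll(y, sigma, nu):
    """Per-bin negative log-likelihood of the complex Student's t model (5)."""
    z = np.abs(y)**2 / sigma**2
    return np.log(np.pi * sigma**2) + (2.0 + nu) / 2.0 * np.log1p(2.0 * z / nu)

# Hypothetical sample values, chosen only for illustration.
y = 0.7 + 0.4j
sigma = 0.9

for nu in (1.0, 10.0, 1e3, 1e6):
    gap = student_t_nll(y, sigma, nu) - gauss_nll(y, sigma)
    print(f"nu={nu:g}: t-NLL minus Gauss-NLL = {gap:.6f}")
```

The gap shrinks toward zero for large $\nu$, while for small $\nu$ the Student's t model penalizes large $|y_{ijn}|$ far less than the Gaussian model does, which is the heavy-tail property that motivates its use.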
Also, (5) with $\nu = 1$ represents the Cauchy-distribution likelihood. The demixing matrix $W_i$ and the NMF source model $t_{ikn} v_{kjn}$ can be optimized in the maximum-likelihood (ML) sense on the basis of (3) or (5). Since the low-rank structure of $|Y_n|^{.2}$ is ensured by the NMF source model, the permutation problem can be avoided, where $|\cdot|^{.p}$ for matrices denotes the element-wise absolute and $p$th-power operations.

III. PROPOSED METHOD

A. Motivation

The NMF source model in ILRMA is effective for some sources that have a low-rank time-frequency structure. However, this source spectrogram model is not always valid. For example, speech signals have continuously varying spectra, which cannot be efficiently modeled by NMF, and the separation performance of ILRMA is degraded for such sources. If sufficient training data for each source can be prepared in advance, it is possible to construct a suitable source spectrogram model by employing DNN [11]. On the other hand, since the spatial parameters depend on many factors, it is simply impractical to train a general spatial model with DNN even if huge amounts of multichannel observation data are available; therefore, the spatial parameters should be estimated blindly.

In this paper, we propose a new framework, IDLMA, which combines the ICA-based blind estimation of the demixing matrix $W_i$ and the supervised learning of the variance matrix $\Sigma_n$ based on DNN, where the loss function in DNN is designed to maximize the likelihood of the source generative model. In addition, similarly to t-ILRMA, we use a generalized model based on a complex Student's t-distribution including both Gaussian and Cauchy distributions. Duong+DNN also employs DNN that maximizes the likelihood of the Gaussian or Cauchy distribution. However, since the mixing model (spatial covariance) in Duong+DNN is defined by only the Gaussian model, the estimations of the spectral and spatial parameters are inconsistent.
In the proposed method, this conflict is resolved by modeling the spatial parameters with the Student's t-distribution model and deriving its optimization algorithm fully consistently in the ML sense.

B. Cost Function in IDLMA

Let $\mathrm{DNN}_n$ be the DNN source model that enhances the $n$th source component from a mixture signal, namely, the variance matrix $\Sigma_n$ is estimated by $\mathrm{DNN}_n$, and these DNN source models are trained in advance. Fig. 1 shows the principle of the separation mechanism in the proposed IDLMA. On the basis of (3), the cost function (negative log-likelihood of $x_{ij} = W_i^{-1} y_{ij}$) in IDLMA with the complex Gaussian distribution (Gauss-IDLMA) is obtained as

$$\mathcal{L}_{\mathrm{Gauss}} = \sum_{i,j,n} \left[ \frac{|y_{ijn}|^{2}}{\sigma_{ijn}^{2}} + 2 \log \sigma_{ijn} \right] - 2J \sum_{i} \log |\det W_i|, \tag{7}$$

and (7) can be generalized with (5) (t-IDLMA) as

$$\mathcal{L}_{t} = \sum_{i,j,n} \left[ \left( 1 + \frac{\nu}{2} \right) \log \left( 1 + \frac{2}{\nu} \frac{|y_{ijn}|^{2}}{\sigma_{ijn}^{2}} \right) + 2 \log \sigma_{ijn} \right] - 2J \sum_{i} \log |\det W_i|, \tag{8}$$

where $y_{ijn} = w_{in}^{\mathsf{H}} x_{ij}$. Note that $\mathcal{L}_{t}$ converges to $\mathcal{L}_{\mathrm{Gauss}}$ when $\nu \to \infty$.

Fig. 1. Principle of source separation based on IDLMA in case of $N = M = 2$.

C. Update Rule of Source Spectrogram Model Based on DNN

$\mathrm{DNN}_n$ is trained so that the source spectrogram $|\tilde{S}_n|^{.1}$ is predicted from an input mixture spectrogram $|\tilde{X}|^{.1}$, where $\tilde{S}_n \in \mathbb{C}^{I \times J}$ and $\tilde{X} \in \mathbb{C}^{I \times J}$ are the source and mixture spectrograms in the training data, respectively. When we define the output spectrogram as $D_n = \mathrm{DNN}_n(|\tilde{X}|^{.1}) \in \mathbb{R}_{\geq 0}^{I \times J}$, the loss function of $\mathrm{DNN}_n$ for Gauss-IDLMA is defined as

$$L_{\mathrm{Gauss}}(D_n) = \sum_{i,j} \left( \frac{|\tilde{s}_{ijn}|^{2} + \delta_1}{d_{ijn}^{2} + \delta_1} - \log \frac{|\tilde{s}_{ijn}|^{2} + \delta_1}{d_{ijn}^{2} + \delta_1} - 1 \right), \tag{9}$$

where $\tilde{s}_{ijn}$ and $d_{ijn}$ are the elements of $\tilde{S}_n$ and $D_n$, respectively, and $\delta_1$ is a small value to avoid division by zero [13]. Also, the loss function of $\mathrm{DNN}_n$ for t-IDLMA is defined as

$$L_{t}(D_n) = \sum_{i,j} \left[ \left( 1 + \frac{\nu}{2} \right) \log \left( 1 + \frac{2}{\nu} \frac{|\tilde{s}_{ijn}|^{2} + \delta_1}{d_{ijn}^{2} + \delta_1} \right) + \log \left( d_{ijn}^{2} + \delta_1 \right) \right]. \tag{10}$$

Since minimizing (9) or (10) is equivalent to the ML estimation of $\sigma_{ijn}$ in (7) or (8), $\mathrm{DNN}_n$ can be interpreted as the proper source generative model based on (3) or (5), respectively. Similarly to (8), $L_{t}(D_n)$ converges to $L_{\mathrm{Gauss}}(D_n)$ up to a constant when $\nu \to \infty$. The variance matrix is updated by the trained $\mathrm{DNN}_n$ as

$$|\Sigma_n|^{.1} \leftarrow \mathrm{DNN}_n(|Y_n|^{.1}), \tag{11}$$

$$\sigma_{ijn} \leftarrow \max(\sigma_{ijn}, \varepsilon), \tag{12}$$

where $\varepsilon$ is a small value to increase the numerical stability of the spatial update described in Sect. III-D. The DNN architectures used in this paper are described in detail in Sect. IV-B.

D. Update Rule of Demixing Matrix

The demixing matrix $W_i$ can be optimized while taking the statistical independence between sources and the variance matrix $\Sigma_n$ into account on the basis of (3) or (5). In Gauss-IDLMA, $W_i$ can be updated by applying iterative projection (IP) [16] to (7), where IP is a fast and stable optimization algorithm that can be applied to the sum of $|w_{in}^{\mathsf{H}} x_{ij}|^{2}$ and $-\log |\det W_i|$. In t-IDLMA, IP cannot be applied directly to (8) because $|w_{in}^{\mathsf{H}} x_{ij}|^{2}$ appears inside the logarithm function. Therefore, we apply an MM algorithm [14] to derive the update rule of $w_{in}$. To design a majorization function for (8), we apply the tangent line inequality

$$\log z \leq \frac{1}{\alpha} (z - \alpha) + \log \alpha \tag{13}$$

to the logarithm term in (8), where $z > 0$ is the original variable and $\alpha > 0$ is an auxiliary variable. The majorization function can be designed as

$$\mathcal{L}_{t} \leq \sum_{i,j,n} \left[ \left( 1 + \frac{\nu}{2} \right) \frac{1}{\alpha_{ijn}} \left( 1 + \frac{2}{\nu} \frac{|y_{ijn}|^{2}}{\sigma_{ijn}^{2}} - \alpha_{ijn} \right) + \left( 1 + \frac{\nu}{2} \right) \log \alpha_{ijn} + 2 \log \sigma_{ijn} \right] - 2J \sum_{i} \log |\det W_i| =: \mathcal{L}_{t}^{+}, \tag{14}$$

where $\alpha_{ijn}$ is the auxiliary variable, and $\mathcal{L}_{t}$ and $\mathcal{L}_{t}^{+}$ become equal only when

$$\alpha_{ijn} = 1 + \frac{2}{\nu} \frac{|y_{ijn}|^{2}}{\sigma_{ijn}^{2}}. \tag{15}$$

We can apply IP in analogy with the derivation in Gauss-ILRMA. The majorization function (14) is reformulated as

$$\mathcal{L}_{t}^{+} = J \sum_{i,n} w_{in}^{\mathsf{H}} U_{in} w_{in} - 2J \sum_{i} \log |\det W_i| + \mathrm{const.}, \tag{16}$$

$$U_{in} = \frac{1}{J} \left( 1 + \frac{2}{\nu} \right) \sum_{j} \frac{1}{\alpha_{ijn} \sigma_{ijn}^{2}} x_{ij} x_{ij}^{\mathsf{H}}. \tag{17}$$

By applying IP and substituting (15), the demixing filter $w_{in}$ can be updated as follows:

$$w_{in} \leftarrow (W_i U_{in})^{-1} e_n, \tag{18}$$

$$w_{in} \leftarrow \frac{w_{in}}{\sqrt{w_{in}^{\mathsf{H}} U_{in} w_{in}}}, \tag{19}$$

where

$$U_{in} = \frac{1}{J} \sum_{j} \frac{1}{c_{ijn}} x_{ij} x_{ij}^{\mathsf{H}}, \tag{20}$$

$$c_{ijn} = \frac{\nu}{\nu + 2} \sigma_{ijn}^{2} + \frac{2}{\nu + 2} |y_{ijn}|^{2}, \tag{21}$$

and $e_n$ is an $N$-dimensional vector whose $n$th element is one and whose other elements are zero. After calculating (18) and (19), we update the separated signal by $y_{ijn} \leftarrow w_{in}^{\mathsf{H}} x_{ij}$. In particular, when $\nu \to \infty$, the majorization function (14) converges to the original cost function (7), and (20) converges to

$$U_{in} = \frac{1}{J} \sum_{j} \frac{1}{\sigma_{ijn}^{2}} x_{ij} x_{ij}^{\mathsf{H}}. \tag{22}$$

The update rule (18)–(21) is equal to that in t-ILRMA. To fix the scales of $y_{ijn}$ among the frequency bins, the following back-projection technique is applied before updating $\Sigma_n$ by (11) and (12):

$$y_{ijn} \leftarrow \left[ W_i^{-1} (e_n \circ y_{ij}) \right]_{m_{\mathrm{ref}}}, \tag{23}$$

where $y_{ijn}$ is an element of $Y_n$, $\circ$ is the Hadamard product, $[\cdot]_n$ is the $n$th value of the vector, and $m_{\mathrm{ref}}$ is the index of the reference channel.

E. Relation between Parameter ν and Numerical Stability

In Gauss-IDLMA, $U_{in}$ defined by (22) can be interpreted as the spatial covariance matrix $x_{ij} x_{ij}^{\mathsf{H}}$ weighted by $\sigma_{ijn}^{-2}$. In general, $\sigma_{ijn}$ is estimated by $\mathrm{DNN}_n$, whose output likely fluctuates, resulting in many spectral chasms in the time-frequency plane. Therefore, the weight coefficient $\sigma_{ijn}^{-2}$ may be an excessively large value, reducing the numerical stability of Gauss-IDLMA in IP. In t-IDLMA, on the other hand, $c_{ijn}$ in (21) is a weighted average of $\sigma_{ijn}^{2}$ and $|y_{ijn}|^{2}$ with weights in the ratio $\nu : 2$. Since $y_{ijn}$ is the output of a linear filter, $|y_{ijn}|^{2}$ contains fewer chasms than $\sigma_{ijn}^{2}$; this yields beneficial spectral smoothing and numerical stability in the optimization. A potential drawback of t-IDLMA is slower convergence, especially in the case of small $\nu$ close to unity, because the strong inference of DNN is discounted.
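The update rules (18)–(21) and the role of $\nu$ in (21) can be sketched for a single frequency bin as follows. This is a minimal NumPy sketch under stated assumptions, not the authors' implementation: the function names `t_idlma_ip_step` and `t_idlma_cost`, the array layout, and the synthetic two-source mixture are all hypothetical, and the true source variances stand in for the $\mathrm{DNN}_n$ output. The DNN update (11)–(12) and the back-projection (23) are omitted.

```python
import numpy as np

def t_idlma_ip_step(W, X, sigma2, nu):
    """One sweep of (18)-(21) at a single frequency bin.

    W      : (N, N) complex demixing matrix; row n holds w_n^H
    X      : (N, J) complex observations; column j is x_j
    sigma2 : (N, J) positive variances sigma_{jn}^2 (assumed given)
    nu     : degree of freedom; np.inf selects the Gaussian weights (22)
    """
    N, J = X.shape
    W = W.copy()
    for n in range(N):
        y_n = W[n] @ X                                    # y_{jn} = w_n^H x_j
        if np.isinf(nu):
            c = sigma2[n]                                 # weights of (22)
        else:
            # (21): weighted average of sigma^2 and |y|^2
            c = (nu * sigma2[n] + 2.0 * np.abs(y_n) ** 2) / (nu + 2.0)
        U = (X / c) @ X.conj().T / J                      # (20)
        w = np.linalg.solve(W @ U, np.eye(N)[:, n])       # (18)
        w = w / np.sqrt(np.real(w.conj() @ U @ w))        # (19)
        W[n] = w.conj()                                   # store w_n^H as row n
    return W

def t_idlma_cost(W, X, sigma2, nu):
    """Cost (8) restricted to one frequency bin, for finite nu."""
    J = X.shape[1]
    z = np.abs(W @ X) ** 2 / sigma2
    data = np.sum((1.0 + nu / 2.0) * np.log1p(2.0 * z / nu) + np.log(sigma2))
    return data - 2.0 * J * np.log(np.abs(np.linalg.det(W)))

# Synthetic example (assumption: true variances replace the DNN estimate).
rng = np.random.default_rng(0)
N, J = 2, 400
sigma2 = rng.uniform(0.1, 2.0, size=(N, J))
S = np.sqrt(sigma2 / 2.0) * (rng.standard_normal((N, J))
                             + 1j * rng.standard_normal((N, J)))
A = rng.standard_normal((N, N)) + 1j * rng.standard_normal((N, N))
X = A @ S

W = np.eye(N, dtype=complex)
costs = [t_idlma_cost(W, X, sigma2, nu=1000.0)]
for _ in range(20):
    W = t_idlma_ip_step(W, X, sigma2, nu=1000.0)
    costs.append(t_idlma_cost(W, X, sigma2, nu=1000.0))
print("cost before:", costs[0], "cost after:", costs[-1])
```

Because each row update minimizes the majorization function (14) with the auxiliary variable set by (15), the cost (8) should not increase from one sweep to the next. A full separation would run this step for every frequency bin and interleave it with the variance update and back-projection.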
Thus, there is a tradeoff when setting $\nu$. The appropriate selection of $\nu$ will be discussed in the next section.

IV. EXPERIMENTAL EVALUATION

A. Task, Dataset, and Conditions

We confirmed the validity of the proposed method by conducting a music source separation task. We compared four methods: ILRMA (blind, $K = 20$), DNN+WF, Duong+DNN, and the proposed IDLMA, where DNN+WF applies a Wiener filter constructed using all the outputs of the DNN source models to the observed monaural signal [17]. Note that MNMF was not included in this experiment because its performance is almost always inferior to that of ILRMA [18]. For Duong+DNN and IDLMA, the variance matrix $\Sigma_n$ was updated by $\mathrm{DNN}_n$ after every 10 iterations of the spatial optimization. We used the DSD100 dataset of SiSEC2016 [19] as the dry sources and the training datasets of DNN, where only bass (Ba.), drums (Dr.), and vocals (Vo.) were used in this experiment. The 50 songs in the dev data were used to train $\mathrm{DNN}_n$ and the top 25 songs in alphabetical order in the test data were used for performance evaluation. The test songs were trimmed to the interval of 30 to 60 s. To simulate a reverberant mixture, we produced the two-channel observed signals by convolving the impulse response E2A ($T_{60} = 300$ ms) obtained from the RWCP database [20] with each source, and the mixture of Ba. and Vo. (Ba./Vo.) or Dr. and Vo. (Dr./Vo.) was separated. The recording condition of E2A is given in [6]. All the signals were downsampled to 8 kHz. STFT was performed using a 512-ms-long Hamming window with a 256-ms-long shift in the Ba./Vo. case and a 256-ms-long Hamming window with a 128-ms-long shift in the Dr./Vo. case. We used the signal-to-distortion ratio (SDR) [21] as the total separation performance.

Fig. 2. Example of SDR improvements for each method for Ba./Vo. (SDR improvement [dB] versus iteration step for ILRMA and for IDLMA with $\nu = 1$ (Cauchy), $\nu = 10$, $\nu = 100$, $\nu = 1000$, and $\nu \to \infty$ (Gauss).)

B. Architecture and Training of DNN Source Model

We constructed a fully connected DNN with four hidden layers. Each layer had 1024 units, and a rectified linear unit was used for the output of each layer. To prepare the training data of mixture signals, we defined the following vectors:

$$\vec{s}_{jn} = \left( \tilde{s}_{(j-2c)n}^{\top}, \tilde{s}_{(j-2c+2)n}^{\top}, \cdots, \tilde{s}_{(j+2c)n}^{\top} \right)^{\top} \in \mathbb{C}^{I(2c+1)}, \tag{24}$$

$$\vec{x}_{j} = \left( \sum_{n} \alpha_{jn} \vec{s}_{jn} \right) \left( \left\| \sum_{n} \alpha_{jn} \vec{s}_{jn} \right\|_{2} + \delta_2 \right)^{-1} \in \mathbb{C}^{I(2c+1)}, \tag{25}$$

$$\bar{s}_{jn} = \left( \alpha_{jn} \tilde{s}_{jn} \right) \left( \left\| \sum_{n} \alpha_{jn} \vec{s}_{jn} \right\|_{2} + \delta_2 \right)^{-1} \in \mathbb{C}^{I}, \tag{26}$$

where $\tilde{s}_{jn} \in \mathbb{C}^{I}$ is the STFT of the $n$th source at frame $j$ (the column vector of $\tilde{S}_n$), $\vec{x}_{j}$ and $\bar{s}_{jn}$ are the mixture and source vectors, respectively, $\alpha_{jn}$ is a random variable in the range $[0.05, 1]$, which controls the signal-to-noise ratio in $\vec{x}_{j}$, and $\delta_2$ is a small value to avoid division by zero. The input and output vectors of $\mathrm{DNN}_n$ are $|\vec{x}_{j}|^{.1}$ and $|\bar{s}_{jn}|^{.1}$, respectively. To optimize DNN, we added the term $(\lambda/2) \sum_{q} g_q^{2}$ to (9) or (10) for regularization, where $g_q$ is the weight coefficient in DNN, and ADADELTA [22] with a 128-size mini-batch was performed for 200 epochs. The parameter $\varepsilon$ was experimentally optimized and set to $0.1 \times (IJ)^{-1} \sum_{i,j} \hat{r}_{ijn}$. The other parameters were set to $\delta_1 = \delta_2 = 10^{-5}$, $c = 3$, and $\lambda = 10^{-5}$.

C. Comparison of Separation Performance

Fig. 2 depicts an example of the convergence behaviors of ILRMA and IDLMA. These results show that (a) the DNN source model leads the demixing matrix to more accurate estimation, resulting in a significant leap of SDR improvement, and (b) a larger $\nu$ provides a faster spatial model update, but t-IDLMA with the appropriate $\nu$ ($= 1000$) converges to a higher SDR than Gauss-IDLMA ($\nu = \infty$), as mentioned in Sect. III-E. Figs. 3 and 4 show the average SDR improvements of 25 test songs for Ba./Vo. and Dr./Vo., respectively. We can confirm that the proposed IDLMA outperforms the other methods for both mixtures of instruments. In particular, t-IDLMA with $\nu = 1000$ achieves the highest separation accuracy.

Fig. 3. Average SDR improvements of 25 Ba./Vo. songs. (SDR improvement [dB] for ILRMA (blind) and the supervised methods DNN+WF, Duong+DNN, and proposed IDLMA, with $\nu = 1$ (Cauchy), $10$, $100$, $1000$, and $\nu \to \infty$ (Gauss).)

Fig. 4. Average SDR improvements of 25 Dr./Vo. songs. (Same methods and values of $\nu$ as in Fig. 3.)

D. Computational Times

To show the efficiency of the proposed approach, we compared the computational times of ILRMA, Duong+DNN, and IDLMA for 100 iterations of spatial optimization. We used Python 3.5.2 (64-bit) and Chainer 2.1.0 with an Intel Core i7-6850K (3.60 GHz, 6 cores) CPU. To calculate the DNN outputs, a GeForce GTX 1080Ti GPU was utilized. Examples of computational times were 23.3 s for ILRMA, 287.1 s for Duong+DNN, and 26.6 s for IDLMA. These results confirm that the proposed method is nearly as fast as conventional ILRMA and more than 10 times faster than Duong+DNN.

V. CONCLUSION

In this paper, we proposed a new determined source separation method that unifies ICA-based blind spatial optimization and the DNN-based supervised source spectrogram model. The proposed method employs a complex Student's t-distribution as the source generative model. An experimental comparison showed the efficacy of the proposed method in terms of both the separation accuracy and the computational cost.

ACKNOWLEDGMENT

This work was partly supported by SECOM Science and Technology Foundation and JSPS KAKENHI Grant Numbers JP16H01735, JP17H06101, and JP17H06572.

REFERENCES

[1] P. Comon, "Independent component analysis, a new concept?" Signal Process., vol. 36, no. 3, pp. 287–314, 1994.

[2] D. Kitamura, N. Ono, H. Sawada, H. Kameoka, and H. Saruwatari, "Determined blind source separation unifying independent vector analysis and nonnegative matrix factorization," IEEE/ACM Trans. ASLP, vol. 24, no. 9, pp. 1626–1641, 2016.

[3] D. Kitamura, N. Ono, H. Sawada, H. Kameoka, and H. Saruwatari, "Determined blind source separation with independent low-rank matrix analysis," in Audio Source Separation, S. Makino, Ed. Springer, 2018 (in press), ch. 6, 31 pages.

[4] T. Kim, H. T. Attias, S.-Y. Lee, and T.-W. Lee, "Blind source separation exploiting higher-order frequency dependencies," IEEE Trans. ASLP, vol. 15, no. 1, pp. 70–79, 2007.

[5] D. D. Lee and H. S. Seung, "Learning the parts of objects by non-negative matrix factorization," Nature, vol. 401, no. 6755, pp. 788–791, 1999.

[6] S. Mogami, D. Kitamura, Y. Mitsui, N. Takamune, H. Saruwatari, and N. Ono, "Independent low-rank matrix analysis based on complex Student's t-distribution for blind audio source separation," in Proc. MLSP, 2017.

[7] A. R. López, N. Ono, U. Remes, K. Palomäki, and M. Kurimo, "Designing multichannel source separation based on single-channel source separation," in Proc. ICASSP, 2015, pp. 469–473.

[8] N. Q. K. Duong, E. Vincent, and R. Gribonval, "Under-determined reverberant audio source separation using a full-rank spatial covariance model," IEEE Trans. ASLP, vol. 18, no. 7, pp. 1830–1840, 2010.

[9] A. Ozerov, E. Vincent, and F. Bimbot, "A general flexible framework for the handling of prior information in audio source separation," IEEE Trans. ASLP, vol. 20, no. 4, pp. 1118–1133, 2012.

[10] H. Sawada, H. Kameoka, S. Araki, and N. Ueda, "Multichannel extensions of non-negative matrix factorization with complex-valued data," IEEE Trans. ASLP, vol. 21, no. 5, pp. 971–982, 2013.

[11] E. M. Grais, M. U. Sen, and H. Erdogan, "Deep neural networks for single channel source separation," in Proc. ICASSP, 2014, pp. 3734–3738.

[12] S. Araki, T. Hayashi, M. Delcroix, M. Fujimoto, K. Takeda, and T. Nakatani, "Exploring multi-channel features for denoising-autoencoder-based speech enhancement," in Proc. ICASSP, 2015, pp. 116–120.

[13] A. A. Nugraha, A. Liutkus, and E. Vincent, "Multichannel audio source separation with deep neural networks," IEEE/ACM Trans. ASLP, vol. 24, no. 9, pp. 1652–1664, Sept. 2016.

[14] D. R. Hunter and K. Lange, "Quantile regression via an MM algorithm," J. Comput. Graph. Stat., vol. 9, no. 1, pp. 60–77, 2000.

[15] A. Ozerov and C. Févotte, "Multichannel nonnegative matrix factorization in convolutive mixtures for audio source separation," IEEE Trans. ASLP, vol. 18, no. 3, pp. 550–563, 2010.

[16] N. Ono, "Stable and fast update rules for independent vector analysis based on auxiliary function technique," in Proc. WASPAA, 2011, pp. 189–192.

[17] S. Uhlich, F. Giron, and Y. Mitsufuji, "Deep neural network based instrument extraction from music," in Proc. ICASSP, 2015, pp. 2135–2139.

[18] S. Mogami, D. Kitamura, N. Takamune, Y. Mitsui, H. Saruwatari, N. Ono, Y. Takahashi, and K. Kondo, "Experimental evaluation of independent low-rank matrix analysis based on complex Student's t-distribution," in Proc. 2017 Autumn Meeting of Acoustical Society of Japan, 2017, pp. 515–518 (in Japanese).

[19] A. Liutkus, F.-R. Stöter, Z. Rafii, D. Kitamura, B. Rivet, N. Ito, N. Ono, and J. Fontecave, "The 2016 Signal Separation Evaluation Campaign," in Proc. LVA/ICA, 2017, pp. 323–332.

[20] S. Nakamura, K. Hiyane, F. Asano, T. Nishiura, and T. Yamada, "Acoustical sound database in real environments for sound scene understanding and hands-free speech recognition," in Proc. LREC, 2000, pp. 965–968.

[21] E. Vincent, R. Gribonval, and C. Févotte, "Performance measurement in blind audio source separation," IEEE Trans. ASLP, vol. 14, no. 4, pp. 1462–1469, 2006.

[22] M. D. Zeiler, "Adadelta: An adaptive learning rate method," CoRR, vol. abs/1212.5701, 2012.
