Sounderfeit: Cloning a Physical Model using a Conditional Adversarial Autoencoder

An adversarial autoencoder conditioned on known parameters of a physical modeling bowed string synthesizer is evaluated for use in parameter estimation and resynthesis tasks. Latent dimensions are provided to capture variance not explained by the con…

Authors: Stephen Sinclair

This postprint has been reformatted; the published article may be found at <https://www.revistas.ufg.br/musica/article/view/53570>

SINCLAIR, S. Sounderfeit: Cloning a Physical Model using a Conditional Adversarial Autoencoder. Revista Música Hodie, Goiânia, V.18 - n.1, 2018, p. 44–60

Stephen Sinclair (Inria Chile, Santiago, Chile)
stephen.sinclair@inria.cl

Abstract: An adversarial autoencoder conditioned on known parameters of a physical modeling bowed string synthesizer is evaluated for use in parameter estimation and resynthesis tasks. Latent dimensions are provided to capture variance not explained by the conditional parameters. Results are compared with and without the adversarial training, and a system capable of “copying” a given parameter-signal bidirectional relationship is examined. A real-time synthesis system built on a generative, conditioned and regularized neural network is presented, allowing the construction of engaging sound synthesizers based purely on recorded data.

Keywords: Physical modeling, sound synthesis, autoencoder, latent parameter space

Portuguese title: Sounderfeit: Clonagem de um modelo físico com auto-encoders adversários condicionais

Resumo: An adversarial autoencoder conditioned on known parameters of a physically modeled bowed string synthesizer is evaluated for its use in parameter estimation and resynthesis tasks. Latent dimensions are provided to capture the variance not explained by the conditional parameters. Results are compared with and without adversarial training, and a system capable of “copying” a given bidirectional relationship between parameters and signal is examined. A real-time synthesis system built on a generative, conditioned and regularized neural network is presented, making it possible to create interesting sound synthesizers based purely on recorded data.
Palavras-chave: Physical modeling, sound synthesis, autoencoder, latent parameters

Revista Música Hodie, Goiânia - V.18, 165p., n.1, 2018. Received: 27/11/2017 - Approved: 05/03/2018

1. Introduction

This paper explores the use of an autoencoder to mimic the bidirectional parameter-data relationship of an audio synthesizer, effectively “cloning” its operation while regularizing the parameter space for interactive control. The autoencoder¹ is an artificial neural network (ANN) configuration in which the network weights are trained to minimize the difference between input and output, learning the identity function. When forced through a bottleneck layer of few parameters, the network is made to represent the data with a low-dimensional “code,” which we call the latent parameters.

Recently, adversarial configurations have been proposed as a method of regularizing this latent parameter space in order to match it to a given distribution (MAKHZANI, 2016). The advantages are two-fold: to ensure the available range is uniformly covered, making it a useful interpolation space; and to maximally reduce correlation between parameters, encouraging them to represent orthogonal aspects of the variance. For example, in a face-generator model, this could translate to parameters for hair style and the presence of glasses (RADFORD; METZ; CHINTALA, 2015). Meanwhile, it has also been shown that a generative network can be conditioned on known parameters (MIRZA, 2014) to make it possible to control the output, for example, to generate a known digit class when trained on MNIST digits.

In this work, these two concepts are combined to explore whether an adversarial autoencoder can be conditioned on known parameters for use in both parameter estimation and synthesis tasks for audio. In essence, we seek to have the network simultaneously learn to mimic the transfer function from parameters to data of a periodic signal, as well as from data to parameters. Latent dimensions are provided to the network to capture variance not explained by the conditional parameters; in audio, they may represent internal state, stochastic sources of variance, or unrepresented parameters, e.g. low-frequency oscillators. The idea of using adversarial training to regularize the distribution of the latent space is to find a configuration such that the parameters are made to lie in a predictable range and uniformly fill the space, in order to provide a system suitable for live interaction.

For the principal test case herein, we train the autoencoder on waveform periods from a physical modeling synthesizer based on a model of the interaction between a string and a bow. The goal is to produce a black-box parameter estimator and synthesizer that both “listens to” an incoming sound (estimating its physical parameters) and reproduces it, with a parameter space optionally informed by the original parameters. Application of the architecture described here is of course not limited to physical models, but may be applied to any periodic sound source; a physical model was chosen for its ability to produce fairly complex signals from a simple parameter mapping, and the periodic requirement comes mainly from needing a constant size for the input and output network layers.

Results are visualized and some informal qualitative evaluations are discussed. The autoencoder was able to reproduce the steady state of the synthesizer with and without regularization, although reproduction error increased, as expected, in the presence of regularization.
Some parameter estimation problems were identified with the dataset and sampling method used, and we conclude with some lessons learned in the art of “synth cloning”. A real-time system, Sounderfeit, built on a generative neural network is presented, allowing the construction of engaging sound synthesizers based purely on recorded data and optional prior knowledge of parameters.

2. Previous work

Previous publication of this work (SINCLAIR, 2017) did not fully compare the results with related literature in the audio domain, and therefore in this extended version we include a more thorough overview of related work in this section. Indeed this work combines two ideas that have been previously investigated, that of parameter estimation, and that of ANN-based audio synthesis. Note that in the following we skip mention of several works that make use of similar ANN approaches for classifying sounds; in fact quite a lot of this work is available in the music information retrieval literature, and thus we restrict the discussion to papers that specifically discuss parameter estimation and audio synthesis.

Parameter estimation for physical modeling is a well-researched topic; however, it typically leverages known relations between observable aspects of the signal and physically-relevant parameters, cf. (SCHERRER; DEPALLE, 2011). The application of black-box, ANN-based models was until recently rather less common, but several works can be found in the literature. The intuition in such an approach is that since a physical model represents a non-linear, stateful transformation of the parameters, the generated signal tends to be difficult to separate into effects originating from specific parameters; thus an arbitrary non-linear multivariate regression based on known data is a more pragmatic approach to constructing such an inverse mapping.
For example, Cemgil and Erkut (1997) investigated the application of ANN for estimating the parameters of a plucked string model. Also in this vein, Riionheimo and Välimäki (2003) used a genetic search strategy for finding similar parameters. They employed a perceptual model as their error metric in order to better measure distance between sets of parameters as perceived by humans. A quantized parameter space (also according to a perceptual model) was used.

Similarly, Gabrielli (2017) used a multilayered convolutional neural network (deep CNN) to determine parameters of an organ physical model that supports up to 58 parameters (organ stops) per key. Short-time Fourier spectra calculated from a dataset of 2220 samples were used as input, and the network was trained to minimize the mean squared error on the parameter reconstruction. A notion of spectral irregularity was used to judge the similarity of resulting synthesized sounds.

Pfalz and Berdahl (2017) explored the use of a long short-term memory recurrent neural network (LSTM-RNN) to estimate the continuous control signals from the output of a physical model. Mean squared error of the reconstructed parameters to the original parameters is used as the loss. It was successful at determining trigger times and parameters for fairly simple plucked gestures with several types of resonator models over a few seconds of time, but generated spurious triggers when trained on more complex musical gestures.

Regarding generation of audio using neural networks, generally two approaches are used: either (1) generation of pulse-coded audio one sample at a time using a sequential model, e.g. an autoregressive model or a recurrent neural network (RNN); or (2) generation of audio frames in the form of spectra or spectrograms (series of spectra). The current work takes an alternative approach: (3) generation of pulse-coded time-domain audio frames.

An example of the first technique, sample-at-a-time synthesis, is WaveNet (OORD et al., 2016), in which a multilayered CNN with exponentially dilated receptive fields is used to model progressively short- to long-term dependencies in the audio stream. The “receptive field” is enlarged exponentially at each layer using dilated causal convolutions. This configuration may be conditioned on external variables, for example speaker identification, phoneme information, musical style, etc. In addition to testing this model on speech coding, it was later used in an autoencoder configuration, dubbed NSynth, in a way quite comparable to the current work, that is, to encode and reproduce musical instrument tones (ENGEL, 2017). Their analysis goes into depth on the qualities of reconstruction compared to a baseline model, which itself is also a deep CNN described below, and describes some temporal aspects of the learned latent space, as well as the qualitative effects of latent-space interpolation.

In comparison, another example is SampleRNN (MEHRI et al., 2016), which used multi-scaled deep RNNs to capture long-term dependencies as a stacked autoregressive model, i.e., it encodes the conditional probability distribution of the next sample based on previous samples and encodings produced by other layers. The multiple scales allow this sample-at-a-time model to also take into account frame-level information, and can therefore use higher levels to encode longer-term dependencies.
It was compared to WaveNet and a standard RNN with Gaussian mixture model in terms of reconstruction mean squared error, and with human listening preference experiments on encodings of voice, human non-vocal sounds, and piano music, and performed favourably.

Interestingly, rather than purely real-valued output, all of the above-mentioned sample-at-a-time methods made use of a quantized one-hot categorical softmax over an 8-bit µ-law encoding for estimating the real value of the audio signal. The intuition is that such an encoding removes any prior assumptions about the distribution, so that one can simply take the most probable discrete value.

As for frame-at-a-time methods, the baseline model from Engel (2017) is applicable, as it consists of a deep CNN trained on spectrograms. As mentioned, they used a large instrument dataset and a large latent space of approximately 2000 dimensions to encode both the time and frequency domains. Indeed, this model can be thought of more as spectrogram-at-a-time rather than frame-at-a-time, since the encoding takes multiple frames into account; the latent dimensions per frame were on the order of 16 and 32. The authors reported poor performance for encoding phase or complex representations, and thus used only spectral magnitude as input, and applied a phase reconstruction technique to synthesize the final audio.

In a work similar in motivation to the current one, Riera, Eguía and Zabaljáuregui (2017) used a sparse autoencoder to generate a set of descriptors according to the latent space of the model. A multilayered network was used with a latent model of 8 dimensions, trained on all frames of a single recording.
The sparse activation of the central bottleneck layer is visualized in time and interpreted as a “neural score”, and can be used to reconstruct the original audio. A comparison of the clustering in the first three principal components is provided to compare the resulting “timbre space” in terms of activations of the latent layer with those of MFCC and spectral contrast descriptors, which are organized qualitatively differently; the former show a distinctly less “cloudy” shape compared to the latter, and instead distinct curved lines or trajectories are apparent. This is of course qualitatively open to interpretation, but does imply some kind of structure imposed on the latent space that appears to differ significantly from the use of non-learned descriptors. In this work, we found similar patterns in unregularized latent spaces, e.g. Figures 6a and 8a.

A distinguishing factor of this work compared to NSynth is that rather than attempt to model a large set of instruments, which requires a large model, large dataset, and large-dimensional latent space (16 or 32) with unknown meaning, we focus on representing the sound with a comparatively small set of parameters (2 to 3) and attempt to learn a minimal encoding based on previous knowledge of the model parameters, adding latent dimensions only as necessary. This stems from a different motivation, which, instead of being to determine multi-instrument embedding spaces as in the case of NSynth, is to better understand the inverse data-parameter relationship, as well as to provide a small, salient set of “knobs” for real-time synthesis of a single family of timbres.

3. Datasets

Given a network with sufficient capacity we can encode any functional relationship, but for the experiments described herein a periodic signal specified by a small number of parameters was sought that nonetheless features some complexity and is related to sound synthesis.
Thus, a physical modeling synthesizer proved a good choice. We used the bowed string model from the STK Synthesis Toolkit in C++ (COOK; SCAVONE, 1999), which uses digital waveguide synthesis and is controlled by 4 parameters: bow pressure, the force of the bow on the string; bow velocity, the velocity of the bow across the string; bow position, the distance of the string-bow intersection from the bridge; and frequency, which controls the length of the delay lines and filter parameters, and thus the tuning of the instrument. The parameters are represented in STK as values from 0 to 128, and thus we do not worry about physical units in this paper; all parameters were linearly scaled to a range [−1, 1] for input to the neural network. The data was similarly scaled for input, and a linear descaling of the output is performed for the diagrams in this paper. Additionally, the per-element mean and standard deviations across the entire dataset were subtracted and divided respectively in order to ensure similar variance for each discrete step of the waveform period.

To extract the data, a program was written to evaluate the bowed string model at 48000 Hz for 1 second for each combination of bow position and bow pressure for integers 0 to 128. The 1-second interval was used to ensure the sound reached a steady state with a constant period size. The bow velocity and volume parameters were both held at a value of 100.
For each instance, the last two periods of oscillation were kept, and since some parameter combinations did not give rise to stable oscillation, recordings with an RMS output lower than $10^{-5}$ (in normalized units) over this span were rejected, giving a total of 15731 recordings evenly distributed over the parameter range. The frequency was selected at 476.5 Hz to count 201 samples to capture two periods; some parameter combinations changed the tuning slightly, but inspection by eye of 50 periods concatenated end to end showed minimal deviation at this frequency for a wide variety of parameters. Two periods were recorded in order to minimize the impact of any possible reproduction artifacts at the edges of the recording during overlap-add synthesis. The recordings were phase-aligned using a cross-correlation analysis with a representative random sample, then differentiated by first-order difference, and 200 sample-to-sample differences were thus used as the training data, normalized as stated above. This dataset we refer to as bowed1.

Although it may be beneficial to use a log-spectrum representation rather than “raw” (pulse-coded) audio (ENGEL, 2017), we found that learning the time-domain oscillation cycle was no problem. In this manner we avoided the need to perform phase reconstruction. The use of a differentiated representation also helped to suppress noise.

As will be discussed below, parameter estimation on new data was not successful based on this dataset due to the lack of representation of the synthesizer’s dynamic regimes. To resolve this, a second extended dataset, bowed2, was created in a similar manner; however, instead of recording only the steady state portion, the synthesizer was executed continuously while changing the parameters randomly at random intervals. 100,000 samples uniformly covering the parameter range were captured for bowed2.
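The preprocessing described above for bowed1 can be summarized in a short sketch. The following is a minimal illustration in plain Python, not the program used for the paper: the function names (`scale_param`, `rms`, `first_difference`, `normalize_columns`, `prepare`) and the toy sine-wave input are hypothetical, and the cross-correlation phase alignment step is omitted.

```python
import math

def scale_param(value, lo=0.0, hi=128.0):
    """Linearly map an STK-style parameter in [lo, hi] to [-1, 1]."""
    return 2.0 * (value - lo) / (hi - lo) - 1.0

def rms(frame):
    """Root-mean-square level of one recording."""
    return math.sqrt(sum(v * v for v in frame) / len(frame))

def first_difference(frame):
    """First-order difference: N samples become N - 1 differences."""
    return [b - a for a, b in zip(frame, frame[1:])]

def normalize_columns(frames):
    """Subtract the per-element mean and divide by the per-element
    standard deviation, both computed across the whole dataset."""
    n = len(frames)
    width = len(frames[0])
    means = [sum(f[i] for f in frames) / n for i in range(width)]
    stds = [math.sqrt(sum((f[i] - means[i]) ** 2 for f in frames) / n)
            for i in range(width)]
    # Guard against constant columns (assumption: leave them unscaled).
    stds = [s if s > 0.0 else 1.0 for s in stds]
    normed = [[(f[i] - means[i]) / stds[i] for i in range(width)]
              for f in frames]
    return normed, means, stds

def prepare(recordings, threshold=1e-5):
    """Reject near-silent recordings, differentiate, then normalize."""
    kept = [r for r in recordings if rms(r) >= threshold]
    diffs = [first_difference(r) for r in kept]
    return normalize_columns(diffs)

# Toy usage: two 201-sample two-period sine "recordings" plus one silent one.
toy = [[math.sin(2 * math.pi * 2 * n / 201 + p) for n in range(201)]
       for p in (0.0, 0.3)]
toy.append([0.0] * 201)
normed, means, stds = prepare(toy)
```

In this toy run the silent recording is rejected by the RMS threshold, and each remaining 201-sample recording becomes a 200-element training vector, matching the sizes given in the text. The returned `means` and `stds` would be kept for de-normalizing the network output later.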
Finally, in order to test the idea on a completely independent albeit simple dataset, a human voice was recorded uttering constant vowel sounds. The voice (the author’s own voice) was held steady in frequency for a period of 3 seconds for vowels a, e, i, o, and u. The beginning and end of each utterance was clipped and periods were extracted and globally phase-aligned by aligning peaks. The voice was recorded at 44100 Hz and had a frequency between 114 and 117 Hz, thus slightly long cycles were extracted to have exactly 400.5 samples per period, so that two periods were 801 samples, or 800 samples in the differential representation used here. This created a final fundamental frequency of 110 Hz in the synthesized sound. (Due to overlap-add, artifacts in 2 or 3 samples at the beginning and end of a cycle are mostly suppressed.) Integers 0 through 4 were assigned to the vowels and used as the single conditional parameter. This resulted in 996 two-period samples, or approximately 200 samples per vowel. As will be shown, since the voice was held quite steady, most periods for the same vowel were quite similar; however, a low-quality microphone and natural vocal variation contributed to differences between samples. This dataset is referred to in this text as vowels.

In all cases, reproduction consists of de-normalizing, concatenating using an overlap-add method, and first-order integrating the final signal. A 50% overlap-add with a Hanning window was used, which features a constant overlap summation thereby avoiding modulation artifacts (SMITH; SERRA, 1987).
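The reproduction chain just described (de-normalize, 50% overlap-add with a Hanning window, first-order integration) can be sketched as follows. This is an illustrative sketch in plain Python under stated assumptions: the periodic form of the window is used so that copies shifted by half a frame sum exactly to one, the helper names are hypothetical, and `means` and `stds` stand for the per-element statistics of the training set.

```python
import math

def hann_periodic(n):
    """Periodic Hann window; copies shifted by n/2 sum to exactly 1."""
    return [0.5 - 0.5 * math.cos(2.0 * math.pi * i / n) for i in range(n)]

def denormalize(frame, means, stds):
    """Undo the per-element normalization applied before training."""
    return [v * s + m for v, m, s in zip(frame, means, stds)]

def overlap_add(frames):
    """Concatenate equal-length frames with 50% overlap and a Hann window."""
    n = len(frames[0])
    hop = n // 2  # one waveform period when a frame holds two periods
    window = hann_periodic(n)
    out = [0.0] * (hop * (len(frames) - 1) + n)
    for k, frame in enumerate(frames):
        start = k * hop
        for i, v in enumerate(frame):
            out[start + i] += window[i] * v
    return out

def integrate(diffs, initial=0.0):
    """First-order integration (running sum), the inverse of differencing."""
    out, acc = [], initial
    for d in diffs:
        acc += d
        out.append(acc)
    return out

# Usage: four identical all-ones frames of 200 differences each.
frames = [[1.0] * 200 for _ in range(4)]
signal = overlap_add(frames)
audio = integrate(signal)
```

With identical frames, every output sample covered by two windows equals the input value, which is the constant overlap summation property; only the first and last half-frames, covered by a single window, are tapered.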
The parameters are assumed constant during one window, and thus interpolation artifacts may begin to appear if the parameters change quickly relative to two cycles of the waveform. In the ideal case, perfect reproduction of each cycle concatenated using this technique should reproduce the steady-state waveform of the original sound source.

4. Training and network architecture

4.1. Learned conditional autoencoding

While the principal job of the autoencoder is to reproduce the input as exactly as possible, in this work we also wish to estimate the parameters used to generate the data. Thus we additionally condition part of the latent space by adding a loss related to the parameter reconstruction. This is somewhat different to providing conditional parameters to the input of the encoder (MAKHZANI, 2016; MIRZA, 2014), but has a similar effect. This is to encourage the network to learn how to recognize the known parameters and assign to them the aspects of the variance that are associated with those parameters.

Note that the presence of the latent parameters is what allows us not to assume that the signal is purely deterministic in the known parameters. For instance, in a physical signal there may be internal state variables that are not taken into account in the initial conditions, or acoustic characteristics such as room reverb that are not considered a priori. Naturally, the less deterministic the signal is in the known parameters, the more must be left to latent parameters, and the poorer a job we can expect the parameter reconstruction to do. Note that if the latent parameters are able to represent the dynamic regimes, then dynamical state changes may be represented as trajectories in the latent space; however, we did not try to reconstruct such trajectories in this work.

4.2. Generative adversarial regularization

The code used in the middle layer of an autoencoder, called the latent parameters, which we shall refer to as $z$, when trained to encode the data distribution $p(x)$, has conditional posterior probability distribution $q(z|x)$. As mentioned, it is in general useful to regularize $q(z|x)$ to match a desired distribution. Several methods exist for this purpose: a variational autoencoder (VAE) uses the Kullback-Leibler divergence from a given prior distribution. Other measures of difference from a prior are possible. The use of an adversarial configuration has been proposed (MAKHZANI, 2016) to regularize $q(z)$ based on the negative log likelihood from a discriminator on $z$.

With adversarial regularization, a discriminator is used to judge whether a posterior distribution $q(z)$ was likely produced by the generator and is thus sampled from $q(z|x)$, or rather sampled from an example distribution $p(z)$, which is often set to a normal or uniform distribution. The discriminator is itself an ANN which outputs a 1 if $z$ consists of a “real” sample of $p(z)$ or a 0 for a “fake” sample of $q(z|x)$. The training loss of the generator, which is also the encoder of the autoencoder, maximizes the probability of fooling the discriminator into thinking it is a real sample of $p(z)$, while the discriminator simultaneously tries to increase its accuracy at distinguishing samples from $p(z)$ and samples from $q(z|x)$. Thus the encoder eventually generates a posterior $q(z|x)$ similar to $p(z)$.

Figure 1: Variables and functions in the description of the adversarial autoencoder. (Diagram labels: $p(x)$, recorded audio data; $f(x)$, encoding $E$ with parts $E_z$, $q(z|x)$, latent, and $E_y$, $q(y|x)$, estimated conditional; $g(z, y)$, giving $G$, generated audio data; $p(z) = \mathcal{U}(-1, 1)$, desired latent distribution; $h(z)$, giving $D_z$ | $D_E$, discriminative log-probability.)

4.3. Network description

Putting together the above concepts, the system is composed of two neural networks and three training steps. A visual description of the network configuration and how it relates to the following variables and functions may be found in Figure 1.

First, the autoencoder network is composed of the encoder $E = f(x)$ and the decoder/generator $G = g(z, y)$. The discriminator is designed analogously as $D = h(z)$. For notational convenience, we also define $G_E = g(E) = g(f(x))$, $D_E = h(E_z)$, and $D_z = h(z)$, where $x = x_1 \ldots x_s$ are sampled from $p(x)$, $z = z_1 \ldots z_s$ is sampled from $p(z)$, and $s$ is the batch size. $E_z(x)$ and $E_y(x)$ are the first $n$ and the last $m$ dimensions of $E \in [z_1 \cdots z_n \; y_1 \cdots y_m]$, respectively. In the current work, $f(x)$ and $g(z, y)$ are simple one-hidden-layer ANNs with one non-linearity $\zeta$ and linear outputs:

$$f(x) = \zeta(x \cdot w_1 + b_1) \cdot w_2 + b_2 \tag{1}$$
$$g(z, y) = \zeta([z \; y] \cdot w_3 + b_3) \cdot w_4 + b_4 \tag{2}$$
$$h(z) = \zeta(z \cdot w_5 + b_5) \cdot w_6 + b_6 \tag{3}$$

We used the rectified linear unit $\zeta(x) = \max(0, x)$ (ReLU), but we also investigated the use of tanh non-linearities, described in Section 6. The principal dataset, described in Section 3, was composed of 200-wide 1-D vectors, and we had acceptable results using hidden layers of half that size, so $w_1 \in \mathbb{R}^{200 \times 100}$, $w_2 \in \mathbb{R}^{100 \times (n+m)}$ and $w_3, w_5 \in \mathbb{R}^{(n+m) \times 100}$, $w_4, w_6 \in \mathbb{R}^{100 \times 1}$, where $(n+m)$, the total size of the hidden code, was 2 or 3, depending on the experiment. The bias vectors $b_1 \ldots b_6$ had corresponding sizes.

4.4. Training

The training steps were performed in the following order for each batch:²

1. The Adam optimiser (KINGMA; BA, 2015) with a learning rate of 0.001 was used to train the full set of autoencoder weights $w_1 \ldots w_4$ and $b_1 \ldots b_4$, minimizing both the data $x$ reconstruction loss and parameter $y$ reconstruction loss, $L_{AE}$, by back-propagation. The weighting parameter $\lambda = 0.5$ is described below.
2. Adam with learning rate 0.001 was used to train the generator weights and biases $w_1$, $w_2$, $b_1$, and $b_2$. The negative log-likelihood $L_G$ was minimized by back-propagation.
3. Adam with learning rate 0.001 was used to train the discriminator weights and biases $w_5$, $w_6$, $b_5$, and $b_6$. The negative log-likelihood $L_D$ was minimized by back-propagation.

where,

$$L_{AE} = \sum (x - g(f(x)))^2 + \lambda \sum (y - E_y(x))^2 \tag{4}$$
$$L_G = -\sum \log(D_E) \tag{5}$$
$$L_D = -\sum \left( \log(D_z) + \log(1 - D_E) \right). \tag{6}$$

Experiments were performed using the TensorFlow framework (ABADI, 2015), which implemented the differentiation and gradient descent (back-propagation) algorithms. A small batch size of 50 was used, with each experiment evaluated after 4,000 batches. It was found that smaller batch sizes worked better for the adversarial configuration. Matrices $z$ and $x, y$ were sampled independently from $Z \sim p(z) = \mathcal{U}(-1, 1)$ and $(X, Y) \sim p(x, y)$ for each step, where $\mathcal{U}(a, b)$ is the uniform distribution in range $[a, b]$ inclusive.

5. Experiments

Six conditions were tested in order to explore the role of conditional and latent parameters. The number of known parameters in the dataset was 2. We tried training the bowed1 dataset with and without an extra latent parameter. We label these conditions D1Z2Y and D0Z2Y respectively. The third condition, N1Z2Y, was like the D1Z2Y condition but without adversarial regularization on $q(z|x)$.
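Returning briefly to the training procedure of Section 4.4, the three losses (the autoencoder loss with weighting λ, the generator loss, and the discriminator loss) can be made concrete by evaluating them for one small batch. The sketch below is plain Python and is not the TensorFlow code used for the experiments: the function names are hypothetical, `y_hat` stands for the encoder's estimate of the conditional parameters, and the discriminator outputs are assumed to be already squashed into (0, 1), for example by a logistic sigmoid, so that the logarithms are defined.

```python
import math

def loss_autoencoder(x, x_hat, y, y_hat, lam=0.5):
    """Squared data reconstruction error plus lam times the squared
    parameter reconstruction error, summed over the batch."""
    data = sum((a - b) ** 2
               for row, row_hat in zip(x, x_hat)
               for a, b in zip(row, row_hat))
    param = sum((a - b) ** 2
                for row, row_hat in zip(y, y_hat)
                for a, b in zip(row, row_hat))
    return data + lam * param

def loss_generator(d_fake):
    """Negative log-likelihood that encoded samples are judged 'real'."""
    return -sum(math.log(d) for d in d_fake)

def loss_discriminator(d_real, d_fake):
    """Negative log-likelihood of labelling p(z) samples as 1 and
    encoder outputs as 0."""
    return -sum(math.log(dr) + math.log(1.0 - df)
                for dr, df in zip(d_real, d_fake))

# Toy batch of two items (all values are made up for illustration).
x = [[0.0, 1.0], [1.0, 0.0]]        # input waveforms
x_hat = [[0.0, 0.5], [1.0, 0.0]]    # decoder reconstructions
y = [[0.2], [-0.4]]                 # known conditional parameters
y_hat = [[0.2], [0.6]]              # encoder parameter estimates
d_real = [0.5, 0.5]                 # discriminator on samples of p(z)
d_fake = [0.5, 0.5]                 # discriminator on encoder latents

l_ae = loss_autoencoder(x, x_hat, y, y_hat)
l_g = loss_generator(d_fake)
l_d = loss_discriminator(d_real, d_fake)
```

In a full training loop, each of the three quantities would be minimized in turn for every batch, each with respect to its own subset of the weights, as listed in the three training steps.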
Thus the D label indicates the use of training on the discriminator, while N indicates no discriminator. To compare conditioning with the “natural” distribution of the data among latent parameters and the effects of adversarial regularization thereupon, two configurations with no conditional parameters, with and without the discriminator, were explored, named D2Z0Y and N2Z0Y respectively.

6. Results

Figure 2 demonstrates the results of D1Z2Y. Comparing the middle and bottom curves, we can see that while it has some trouble with low values of bow pressure and the extremes of bow position, the autoencoder is able to more or less encode the distribution in our dataset. The top curve (red) was generated by explicitly specifying the $y$ (conditional) parameters instead of letting the autoencoder infer them, with $z_0 = 0$, and demonstrates the output for parameter-driven reconstruction if $z_0$ is held constant. Although not a perfect reproduction, particularly at extremes of the bow position range where there is more variance, this demonstrates that the trained network is able to approximate the data-parameter relationship present in the dataset.

Figure 2: Output of D1Z2Y as pressure $y_0$ and position $y_1$ are changed. Top (red) is the decoder with parameters explicitly specified and $z_0 = 0$; middle (green) is with parameters and $z_0$ inferred by the encoder; bottom (blue) is the dataset sample with closest parameters. (a) Time domain; (b) Frequency domain.

Figure 3: Output of D1Z2Y as bow position is set to 100, and bow pressure and latent $z_0$ are changed. Top (red) is the decoder output; bottom (blue) is the dataset. (a) Time domain; (b) Frequency domain.
The role of $z$ is now considered in Figure 3, by holding bow position constant ($y_1 = 100$) and examining how the signal changes with $z_0$. One notices that for some values of $z_0$ the signal matches well, and for others it varies from the target signal. For example, we can see that in this case, high values of $z_0$ push the signal towards two sharp peaks, while low values of $z_0$ tend towards more oscillations; both $z_0 = -0.8$ and $z_0 = 0.8$ resemble the pr = 115.2 condition, but in different aspects. Meanwhile there is consistency with the “stylistic” influence of $z_0$ on the signal for different values of bow pressure; for lack of better words, in the time domain it changes from “peaky” to “wiggly” going from left to right.

Next, we look at the encoder (parameter estimator) performance, by producing a new signal from the STK synthesizer with a parameter trajectory starting with smooth variation only in bow pressure and then smooth variation only in bow position, and then in both parameters.

Figure 4: Parameter estimation performance of the D1Z2Y network for (a) bowed1 full dataset, RMS error=23.23; (b) bowed1 half dataset, RMS error=33.45; (c) bowed2 full dataset, RMS error=19.54; (d) bowed2 half dataset, RMS error=8.61. Each panel plots estimated and true bow pressure $y_0$ and bow position $y_1$ against time (0 to 7 s).
Figure 4(a) shows rather disappointing performance in this respect; however, it does clarify some information not present in the previous analysis: the estimation is clearly better for bow pressure, but easily disturbed by changes in bow position. Nonetheless the estimate tends in the right direction, albeit with a lot of flipping above and below the center. Since varying the hyperparameters of our network did not solve this problem, we hypothesized that this error could come from two sources: (1) ambiguities in the dataset: indeed, if one examines the shape of the signal as bow position changes, one notices a symmetry between values on either side of pos = 64, cf. the dataset samples in Figure 2 (blue line). As a consequence the inverse problem is underspecified, leading to ambiguity in the parameter estimate. (2) underrepresented variance in the dataset: the new testing data varies continuously in the parameters, but the dataset was constructed from the per-parameter steady state.

To investigate this, the network was trained on a “half dataset”, consisting only of samples of bowed1 where bow position < 64. Furthermore, as mentioned, an extended dataset, bowed2, was constructed based on random parameter variations. Results in Figure 4(b)-(d) show that training on the half-bowed1 dataset changed the character of the errors but did not improve the estimate overall, whereas the extended bowed2 dataset gave improved parameter estimation, and much improved estimation in the bow position < 64 case. Thus it can be concluded that both sources contributed to the parameter estimation difficulties.

Figure 6 shows the resulting parameter space if both parameters are left to be absorbed by the unsupervised latent space. The adversarial regularization regime can be seen in the generator and discriminator losses of Fig.
6(b), which encourages the autoencoder to make the distribution of these variables similar to U(−1, 1), i.e., uniform over a rectangle. This facilitates user interaction with the generator, since limited-range control knobs can be mapped to this rectangle and thus have a strong chance of accessing the full range of variance present in the dataset; conversely, the chance of synthesizing a sound that does not correspond to the training data is minimized.

Without regularization (N2Z0Y, Fig. 6(a)), we see some relationship between the two inferred variables $z_0$ and $z_1$, although it appears more complex than could be captured by a Pearson correlation, while this relationship is completely gone in the regularized version (D2Z0Y, Fig. 6(b)). The spreading clusters arise because, without regularization, the autoencoder attempts to maximally separate various aspects of the variance in a reduced 2-dimensional space in order to decrease uncertainty in reconstruction, which can be useful for data analysis but does not produce a good interpolation space. The regularization therefore encourages the parameter space to be interactively “interesting,” in the sense that the parameters represent orthogonal (or at least uncorrelated) axes within the distribution that cover a defined domain (red square in Fig. 6) and tend towards uniform coverage without “holes”.

Figure 5: The Sounderfeit graphical user interface allows the user to interact in real time with the ANN-synthesized sound and compare it to the physical model that it was trained on. Here, pressure and position are controlled by limited-range knobs on a MIDI keyboard (Novation).

Of course, it is possible to restrict the domain without relying on the regularizer, simply by defining the network architecture accordingly.
For instance, if the non-linear units are replaced with the hyperbolic tangent, it is impossible for the network to generate values outside the range [−1, 1]. In this sense the network architecture itself can be understood as contributing to regularization by enforcing hard constraints, rather than the soft constraints of the cost function. In Fig. 8, this tanh architecture is demonstrated on the bowed1 dataset, and it can be seen that the adversarial regularization is nonetheless still useful for ensuring that the domain is used effectively: although some visual clusters remain apparent, they are much more spread out, better approximating a uniform distribution and, to a large degree, breaking up the piecewise correlations between the parameters that can be seen by inspection when regularization is not used. In general, we found the ReLU approach more stable and better at producing uniform coverage.

One will undoubtedly notice that the reconstruction error reported in Fig. 6 does suffer due to the regularization. This is an expected outcome, since the regularization imposes extra requirements, so that the training will sacrifice one criterion to improve another. Additionally, the error will depend greatly on how much variance is present in the data versus how much “room” is available to express it; in this sense, we would expect accuracy to increase as latent dimensions are added. Fig. 7 gives an idea of how reconstruction error changes as we do so.
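The difference between a hard architectural constraint and a soft cost-function constraint, discussed above, can be seen in a small sketch. The weights and inputs are random stand-ins (not the trained encoder), the unbounded case is modelled as a plain linear latent output (an assumption), and `knob_to_latent` is a hypothetical helper that maps a 7-bit MIDI controller value linearly onto the same [−1, 1] range.

```python
import numpy as np

rng = np.random.default_rng(1)
x = rng.normal(size=(1000, 200))  # stand-in input cycles (random data)
W = rng.normal(size=(200, 2))     # stand-in encoder weights (untrained)
b = np.zeros(2)

pre = x @ W + b
z_linear = pre          # unbounded latent output (assumed stand-in)
z_tanh = np.tanh(pre)   # tanh output: guaranteed to lie in [-1, 1]

print(np.abs(z_tanh).max() <= 1.0)    # True: the bound holds for any weights
print(np.abs(z_linear).max() > 1.0)   # True here: nothing limits the range


def knob_to_latent(cc):
    """Map a MIDI controller value (0..127) linearly onto [-1, 1]."""
    return 2.0 * cc / 127.0 - 1.0


print(knob_to_latent(0), knob_to_latent(127))  # -1.0 1.0
```

Note that the tanh bound only guarantees that encoded values stay inside the square; it says nothing about how evenly they fill it, which is the part the adversarial regularizer is reported to help with in Fig. 8.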
We found that with this small decoder network of 20300 weights and 300 biases, overlap-add synthesis could be performed in real time on a laptop computer (10 seconds of audio took 8.5 seconds to generate, and generation was much faster when re-implemented in C++). We can thus present a real-time, interactive, data-driven wavetable synthesizer with a number of adjustable parameters, which we call Sounderfeit (see Figure 5 and Note 3). The output of the overlap-add process is visualised in Figure 10.

Lastly, in order to verify this method on another dataset, a similar network was trained on the vowels dataset, with the input layer adjusted to the required size of 800; see Figure 9. The inferred space reflects the condition number (discrete, here), while the remaining parameter uniformly covers the range [−1, 1]. In this case the extra variance beyond the conditional parameter consists only of small tonal changes in the recorded voice as well as microphone noise, and thus there is much more variance between vowels than within them. It is apparent from Fig. 9(c) that there is no “leakage” of these extra sources of variance to the vowel label parameter axis, as the vowel number adequately identifies the cluster. The clusters can therefore be nicely mapped to a desired space automatically by the conditioning and regularization.

7. Conclusions

These experiments showed some modest success in copying the parameter-data relationship of a physical modeling synthesizer and fitting it into a desired configuration. As with many machine learning approaches, the quality of results depends strongly on the hyperparameters used (network size and architecture, learning rates, regularization weights, etc.), and these must be adapted to the dataset.
Shown here are results from the best parameters found after some combination of automatic and manual optimisation on this specific dataset, which we use to demonstrate some principles of the design; however, it should be noted that actual results sometimes varied unexpectedly with small changes to these parameters. This hyperparameter optimization is non-trivial, especially for audio, where mean squared error may not reveal much about the perceptual quality of the results, and so a lot of trial and error is involved. Thus, a truly “universal”, turn-key synthesizer copier would require future work on measuring a combined hypercost that balances the desire for good reproduction with good parameter estimation quality and well-distributed latent parameters. Such work could go beyond mean squared error to involve perceptual models of sound. For example, recent work in speech synthesis has shown a significant improvement in perceived quality when the model was conditioned on mel frequency spectrograms (SHEN, 2017).

Some practical notes: (1) We found that getting the adversarial method to properly regularize the latent variables in the presence of conditional variables is somewhat tricky; the batch size and relative learning rates played a large role in balancing the generator and discriminator performances. Adversarial methods are a current area of investigation in the ML community and many new techniques could apply here; moreover, comparison with variational methods is needed. We note, however, that variational autoencoders are typically regularized to fit a Gaussian distribution, whereas an advantage of the adversarial approach is that it can fit any example-based distribution, which we took advantage of to fit the rectangle accessible by a pair of knobs.
(2) We found the parameter estimation extremely sensitive to phase alignment; we tried randomizing the phase of examples during training, which gave better parameter estimates, but this was quite damaging to the autoencoder performance. In general, oversensitivity to global phase is a problem with this method, a downside of the time domain representation; more work on dealing with phase as a latent parameter is necessary.

Figure 6: Distributions of latent parameters corresponding to a random sample of 3000 cycles from the dataset when trained (a) without regularization (N2Z0Y) and (b) with adversarial regularization (D2Z0Y). The top rows indicate reconstruction error (E_loss, mean square error in normalized representation) and adversarial discrimination errors (G_loss = $L_G$, D_loss = $L_D$). Regularization encourages the network to find a latent space such that the full variance of the dataset can be accessed uniformly from a restricted range of values, appropriate for interactive control.

Figure 7: Reconstruction mean squared error as a function of the number of latent parameters (1, 2, 3, 4, 8, 16), with and without regularization. Vertical axis: autoencoder reconstruction RMSE.

Figure 8: Results of the same conditions as Fig. 6, but with the ReLU activation functions replaced by the hyperbolic tangent in order to restrict the domain of z instead of relying on the regularizer. (a) Unregularized; (b) adversarial regularization. It can be seen that when the network architecture provides domain limiting, the regularization still provides the benefit of better approximating a uniform distribution and further decorrelating the parameters.

Figure 9: Results on the vowels dataset: (a) using two latent parameters without regularization, the vowels are separated into clusters; (b) regularization encourages spreading to cover the uniform space within the desired boundaries, effectively smoothing out and blending the clusters; (c) replacing one latent parameter with conditioning on a vowel number 0 to 4.

Figure 10: Overlap-add output of D1Z2Y, varying each parameter over a short interval.

Nevertheless we have attempted to outline some potential for the use of autoencoders and their latent spaces for audio analysis and synthesis based on a specific signal source. Only a very simple fully-connected single-layer architecture was used, and thus improvements should be explored, in particular the addition of convolutional layers. The advantages of time- and frequency-domain representations as learning targets should be characterised. More important than the quality of these specific results, we wish to point out the modular approach that autoencoders enable in modeling oscillator periods of known and unknown parameters, and that, in contrast to larger datasets covering many instruments (ENGEL, 2017), interesting insights and useful performance systems can be generated even from small data.

One might ask what a blackbox model brings to the table in the presence of an existing, semantically-rich physical model. Indeed, in this work a digital synthesizer was used as an easy way to gain access to a fairly complicated but clean signal with a small number of parameters.
In principle this method could be used on much richer, real instrument recordings. To demonstrate this point we have trained it, as shown, on a very small vocal recording (3 seconds per vowel) and produced a working vowel synthesizer with separate knobs for vowel number and “noise”, e.g. microphone noise and vocal variance; however, more complex experiments are needed in this vein. A challenge in operating on real data was cutting and aligning oscillation cycles correctly, which was non-trivial and is an obstacle to easy experimentation on arbitrary data streams.

Simultaneous estimation and generation with the same network may be unnecessary. In fact, conditioning variables could be made external inputs instead of being inferred from the input data, and the decoder could be used separately to only learn the parameters in a more typical regression configuration. However, one of the long-term goals of performing automatic inference is to play somewhat with the latent and parameter space, such as using it for what is known in the audio community as cross-synthesis, or in the machine learning community as “style transfer”, i.e., swapping the bottom and top halves of two such autoencoder networks, making it possible to drive a synthesizer by both conditioned and latent parameters estimated on an incoming signal. One can imagine, for example, playing the violin and having the bow pressure control the brightness of a wind instrument sound, while subtle aspects of the gesture are left to the latent space to control more subtle parameters of the sound. To achieve this a much less noisy inference result would be necessary, and the idea is of course predicated on the parameter-to-data function being invertible, which,
as seen in our failure to map the complete bow position domain, is not necessarily a given. Another reason for doing simultaneous estimation and generation, left for future work, is to investigate whether a tied-weights approach might improve both goals by integrating mutual sources of information on either side of the equation.

Notes

1 Audio examples at: <https://emac.ufg.br/up/269/o/Sinclair_soundexample.mp3>.
2 The learning rates have been changed from Sinclair (2017): stochastic gradient descent with learning rate 0.005 for the autoencoder and learning rate 0.05 for the generator and discriminator. Due to a programming error, the autoencoder training step performed better with a different learning rate. We later found that the results were much more robust with the Adam optimiser and the same learning rate value for all training steps.
3 Sounderfeit source code can be found on its project page at <https://gitlab.com/sinclairs/sounderfeit>.

References

ABADI, M; AGARWAL, A; BARHAM, P; BREVDO, E; CHEN, Z; CITRO, C; CORRADO, G; DAVIS, A; DEAN, J; DEVIN, M; GHEMAWAT, S; GOODFELLOW, I; HARP, A; IRVING, G; ISARD, M; JOZEFOWICZ, R; JIA, Y; KAISER, L; KUDLUR, M; LEVENBERG, J; MANÉ, D; SCHUSTER, M; MONGA, R; MOORE, S; MURRAY, D; OLAH, C; SHLENS, J; STEINER, B; SUTSKEVER, I; TALWAR, K; TUCKER, P; VANHOUCKE, V; VASUDEVAN, V; VIÉGAS, F; VINYALS, O; WARDEN, P; WATTENBERG, M; WICKE, M; YU, Y; ZHENG, X. TensorFlow: Large-Scale Machine Learning on Heterogeneous Systems. 2015. Available: <http://tensorflow.org>. Accessed: 2017.
CEMGIL, A. T.; ERKUT, C. Calibration of physical models using artificial neural networks with application to plucked string instruments. In: Proceedings of the International Symposium on Musical Acoustics, St-Alban, UK, 1997. v. 19, p. 213–218.
COOK, P. R.; SCAVONE, G. P. The Synthesis ToolKit (STK). In: Proceedings of the International Computer Music Conference, Beijing, China, 1999.
ENGEL, J; RESNICK, C; ROBERTS, A; DIELEMAN, S; ECK, D; SIMONYAN, K; NOROUZI, M. Neural audio synthesis of musical notes with WaveNet autoencoders. arXiv preprint arXiv:1704.01279, 2017.
GABRIELLI, L; TOMASSETTI, S; SQUARTINI, S; ZINATO, C. Introducing deep machine learning for parameter estimation in physical modelling. Proceedings of the International Conference on Digital Audio Effects (DAFx-17), Edinburgh, UK, 2017.
KINGMA, D.; BA, J. Adam: A method for stochastic optimization. International Conference on Learning Representations, San Diego, 2015.
MAKHZANI, A; SHLENS, J; JAITLY, N; GOODFELLOW, I. Adversarial autoencoders. Proceedings of the International Conference on Learning Representations, San Juan, Puerto Rico, 2016.
MEHRI, S; KUMAR, K; GULRAJANI, I; KUMAR, R; JAIN, S; SOTELO, J; COURVILLE, A; BENGIO, Y. SampleRNN: An unconditional end-to-end neural audio generation model. International Conference on Learning Representations, Toulon, France, 2017.
MIRZA, M.; OSINDERO, S. Conditional generative adversarial nets. arXiv preprint arXiv:1411.1784, 2014.
OORD, A; DIELEMAN, S; ZEN, H; SIMONYAN, K; VINYALS, O; GRAVES, A; KALCHBRENNER, N; SENIOR, A; KAVUKCUOGLU, K. WaveNet: A generative model for raw audio. arXiv preprint arXiv:1609.03499, 2016.
PFALZ, A.; BERDAHL, E. Toward inverse control of physics-based sound synthesis. Proceedings of the First International Conference on Deep Learning and Music, Anchorage, USA, 2017.
RADFORD, A.; METZ, L.; CHINTALA, S. Unsupervised representation learning with deep convolutional generative adversarial networks. Proceedings of the International Conference on Learning Representations, San Juan, Puerto Rico, 2016.
RIERA, P. E.; EGUÍA, M. C.; ZABALJÁUREGUI, M. Timbre spaces with sparse autoencoders. Proceedings of the Brazilian Symposium on Computer Music, Sao Paulo, Brazil, 2017. p. 93–98.
RIIONHEIMO, J.; VÄLIMÄKI, V. Parameter estimation of a plucked string synthesis model using a genetic algorithm with perceptual fitness calculation. EURASIP Journal on Advances in Signal Processing, Springer, v. 2003, n. 8, p. 758284, 2003.
SCHERRER, B.; DEPALLE, P. A physically-informed audio analysis framework for the identification of plucking gestures on the classical guitar. Canadian Acoustics, v. 39, n. 3, p. 132–133, 2011.
SHEN, J; PANG, R; WEISS, R; SCHUSTER, M; JAITLY, N; YANG, Z; CHEN, Z; ZHANG, Y; WANG, Y; SKERRY-RYAN, RJ; SAUROUS, R; AGIOMYRGIANNAKIS, Y; WU, Y. Natural TTS synthesis by conditioning WaveNet on mel spectrogram predictions. arXiv preprint arXiv:1712.05884, 2017.
SINCLAIR, S. Sounderfeit: Cloning a physical model with conditional adversarial autoencoders. Proceedings of the Brazilian Conference on Computer Music, Sao Paulo, Brazil, 2017. p. 67–74.
SMITH, J; SERRA, X. PARSHL: An analysis/synthesis program for non-harmonic sounds based on a sinusoidal representation. Proceedings of the International Computer Music Conference, Tokyo, Japan, 1987.

Stephen Sinclair – In 2012 he completed a PhD at McGill University in Montreal. His topic was audio-haptic interaction with musical acoustic models. He spent 3 years as a post-doctoral researcher in the ISIR laboratory of UPMC, Paris, working on new haptic interaction methods. He is currently a research engineer at Inria Chile, principally working to improve the Siconos non-smooth dynamical system simulation engine.

(This postprint has been reformatted from the published article)

Original Paper

Loading high-quality paper...

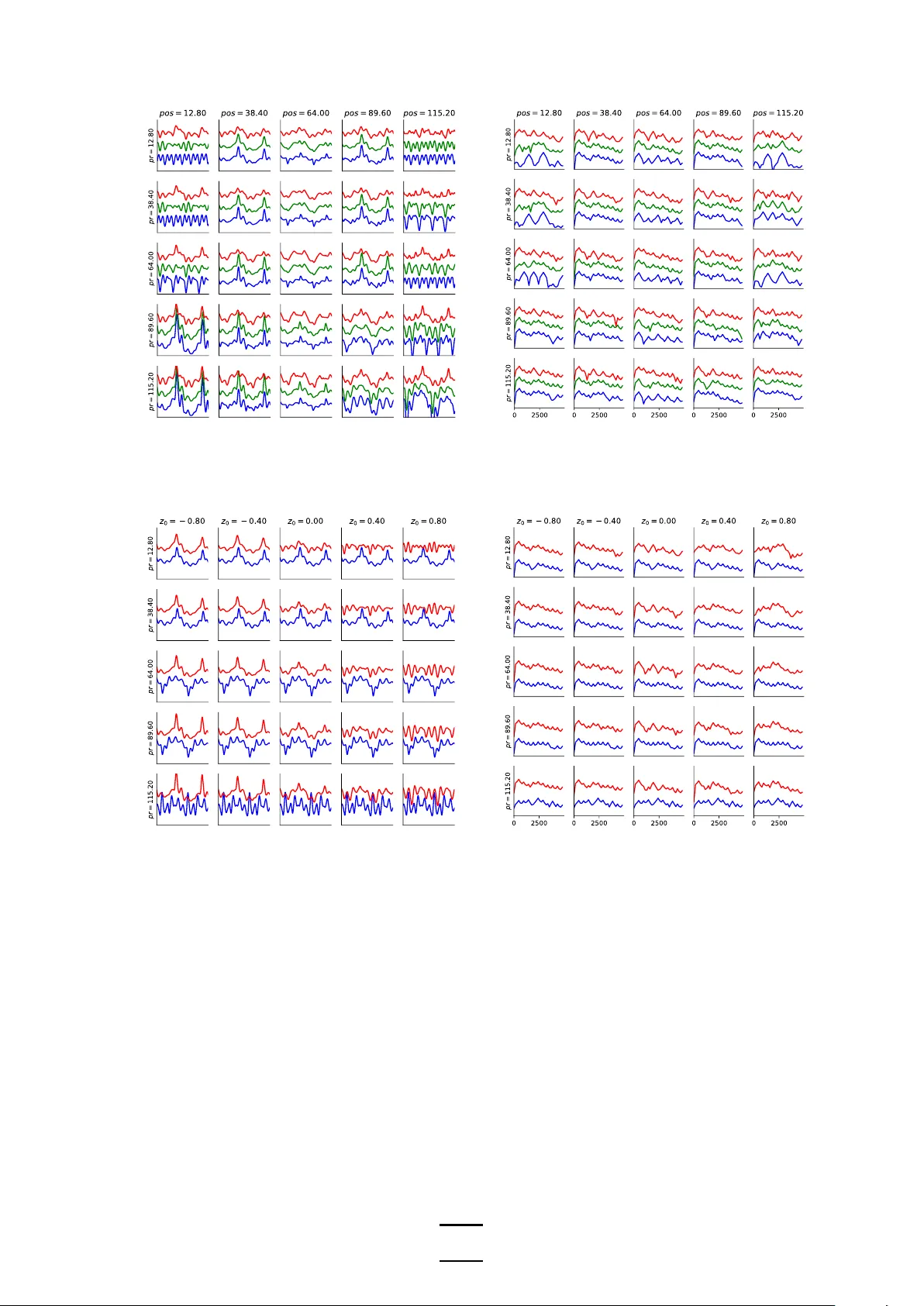

Comments & Academic Discussion

Loading comments...

Leave a Comment