Frame-level Instrument Recognition by Timbre and Pitch

Instrument recognition is a fundamental task in music information retrieval, yet little has been done to predict the presence of instruments in multi-instrument music for each time frame. This task is important for not only automatic transcription bu…

Authors: Yun-Ning Hung, Yi-Hsuan Yang

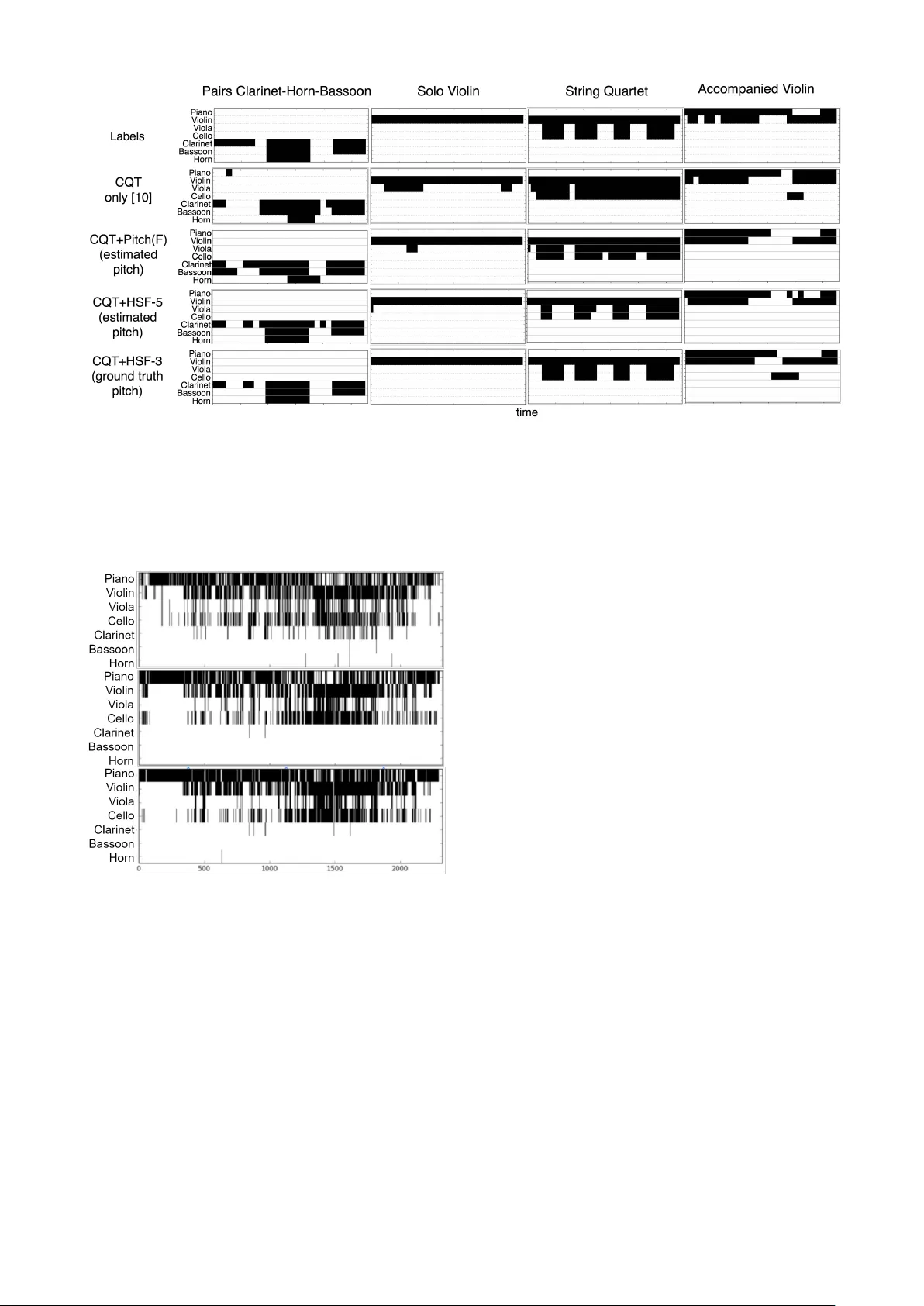

Yun-Ning Hung and Yi-Hsuan Yang
Research Center for IT Innovation, Academia Sinica, Taipei, Taiwan
{biboamy,yang}@citi.sinica.edu.tw

ABSTRACT

Instrument recognition is a fundamental task in music information retrieval, yet little has been done to predict the presence of instruments in multi-instrument music for each time frame. This task is important for not only automatic transcription but also many retrieval problems. In this paper, we use the newly released MusicNet dataset to study this front, by building and evaluating a convolutional neural network for making frame-level instrument predictions. We consider it as a multi-label classification problem for each frame and use frame-level annotations as the supervisory signal in training the network. Moreover, we experiment with different ways to incorporate pitch information into our model, with the premise that doing so informs the model of the notes that are active per frame, and also encourages the model to learn relative rates of energy buildup in the harmonic partials of different instruments. Experiments show salient performance improvement over baseline methods. We also report an analysis probing how pitch information helps the instrument prediction task. Code and experiment details can be found at https://biboamy.github.io/instrument-recognition/.

1. INTRODUCTION

Progress in pattern recognition problems usually depends highly on the availability of high-quality labeled data for model training. For example, in computer vision, the release of the ImageNet dataset [11], along with advances in algorithms for training deep neural networks [26], has fueled significant progress in image-level object recognition.
Subsequently released datasets, such as the COCO dataset [30], provide bounding boxes or even pixel-level annotations of objects that appear in an image, facilitating research on localizing objects in an image, semantic segmentation, and instance segmentation [30]. Such a move from image-level to pixel-level prediction opens up many new exciting applications in computer vision [16].

© Yun-Ning Hung and Yi-Hsuan Yang. Licensed under a Creative Commons Attribution 4.0 International License (CC BY 4.0). Attribution: Yun-Ning Hung and Yi-Hsuan Yang. "Frame-level Instrument Recognition by Timbre and Pitch", 19th International Society for Music Information Retrieval Conference, Paris, France, 2018.

Analogously, for many music-related applications, it is desirable to have not only clip-level but also frame-level predictions. For example, expert users such as music composers may want to search for music with certain attributes and require a system to return not only a list of songs but also the time intervals of the songs that have those attributes [3]. Frame-level predictions of music tags can be used for visualization and music understanding [31, 45]. In automatic music transcription, we want to know the musical notes that are active per frame as well as figure out the instrument that plays each note [13]. Vocal detection [40] and guitar solo detection [36] are two other examples that require frame-level predictions.

Many of the aforementioned applications are related to the classification of sound sources, or instrument classification. However, as labeling the presence of instruments in multi-instrument music for each time frame is labor-intensive and time-consuming, most existing work on instrument classification uses either datasets of solo instrument recordings (e.g., the ParisTech dataset [24]), or datasets with only clip- or excerpt-level annotations (e.g., the IRMAS dataset [7]).
While it is still possible to train a model that performs frame-level instrument prediction from these datasets, it is difficult to evaluate the result due to the absence of frame-level annotations (see footnote 1). As a result, to the best of our knowledge, little work has been done to date to specifically study frame-level instrument recognition (see Section 2 for a brief literature survey).

Footnote 1: Moreover, these datasets may not provide high-quality labeled data for frame-level instrument prediction. To name a few reasons: the ParisTech dataset [24] contains only instrument solos and therefore misses the complexity seen in multi-instrument music; the IRMAS dataset [7] labels only the "predominant" instrument(s) rather than all the active instruments in each excerpt; moreover, an instrument may not always be active throughout an excerpt.

The goal of this paper is to present such a study, by taking advantage of a recently released dataset called MusicNet [44]. The dataset contains 330 freely-licensed classical music recordings by 10 composers, written for 11 instruments, along with over 1 million annotated labels indicating the precise time of each note in every recording and the instrument that plays each note. Using the pitch labels available in this dataset, Thickstun et al. [43] built a convolutional neural network (CNN) model that establishes a new state-of-the-art in multi-pitch estimation. We propose that the frame-level instrument labels provided by the dataset also represent a valuable information source, and we try to realize this potential by using the data to train and evaluate a frame-level instrument recognition model.

Specifically, we formulate the problem as a multi-label classification problem for each frame and use frame-level annotations as the supervisory signal in training a CNN model with three residual blocks [21]. The model learns to predict instruments from a spectral representation of audio signals provided by the constant-Q transform (CQT) (see Section 4.1 for details). Moreover, as another technical contribution, we investigate several ways to incorporate pitch information into the instrument recognition model (Section 4.2), with the premise that doing so informs the model of the notes that are active per frame, and also encourages the model to learn the energy distribution of partials (i.e., fundamental frequency and overtones) of different instruments [2, 4, 14, 15]. We experiment with using either the ground truth pitch labels from MusicNet, or the pitch estimates provided by the CNN model of Thickstun et al. [43] (which is open-source). Although the use of pitch features for music classification is not new, to our knowledge few attempts have been made to jointly consider timbre and pitch features in a deep neural network model. We present in Section 5 the experimental results and analyze whether and how pitch-aware models outperform baseline models that take only CQT as the input.

2. RELATED WORK

A great many approaches have been proposed for (clip-level) instrument recognition. Traditional approaches used domain knowledge to engineer audio feature extraction algorithms and fed the features to classifiers such as support vector machines [25, 32]. For example, Diment et al. [12] combined Mel-frequency cepstral coefficients (MFCCs) and phase-related features and trained a Gaussian mixture model. Using the instrument solo recordings from the RWC dataset [17], they achieved 96.0%, 84.9%, and 70.7% accuracy in classifying 4, 9, and 22 instruments, respectively. Yu et al. [47] used sparse coding for feature extraction and a support vector machine for classifier training, obtaining 96% accuracy in 10-instrument classification for the solo recordings in the ParisTech dataset [24].
Recently, Yip and Bittner [46] made open-source a solo instrument classifier that uses MFCCs in tandem with random forests to achieve 96% frame-level test accuracy in 18-instrument classification using solo recordings from the MedleyDB multi-track dataset [5]. Recognizing instruments in multi-instrument music has proven more challenging. For example, Yu et al. [47] achieved 66% F-score in 11-instrument recognition using a subset of the IRMAS dataset [7].

Deep learning has been increasingly used in more recent work. Deep architectures can "learn" features by training the feature extraction module and the classification module in an end-to-end manner [26], thereby leading to better accuracy than traditional approaches. For example, Li et al. [27] showed that feeding raw audio waveforms to a CNN achieves 72% (clip-level) F-micro score in discriminating 11 instruments in MedleyDB, whereas MFCCs with a random forest achieve only 64%. Han et al. [19] trained a CNN to recognize the predominant instrument in IRMAS and achieved 60% F-micro, which is about 20% higher than a non-deep learning baseline. Park et al. [35] combined multi-resolution recurrence plots and spectrograms with a CNN to achieve 94% accuracy in 20-instrument classification using the UIOWA solo instrument dataset [18].

| Number of instruments used | Number of clips (train set) | Number of clips (test set) | Pitch est. accuracy |
|---|---|---|---|
| 0 | 3 | 0 | n/a |
| 1 | 172 | 5 | 62.9% |
| 2 | 33 | 1 | 56.2% |
| 3 | 95 | 4 | 60.5% |
| 4 | 15 | 0 | 56.6% |
| 6 | 2 | 0 | 49.6% |

Table 1: The number of clips in the training and test sets of MusicNet [44], divided according to the number of instruments used (among the seven instruments we consider in our experiment) per clip (e.g., a piano trio uses 3 instruments). We also show the average frame-level multi-pitch estimation accuracy (using mir_eval [38]) achieved by the CNN model proposed by Thickstun et al. [43].


Due to the lack of frame-level instrument labels in many existing datasets, little work has focused on frame-level instrument recognition. The work presented by Schlüter for vocal detection [40] and by Pati and Lerch for guitar solo detection [36] are exceptions, but they each addressed one specific instrument, rather than general instruments. Liu and Yang [31] proposed to use clip-level annotations in a weakly-supervised setting to make frame-level predictions, but the model is for general tags. Moreover, due to the assumption that a CNN can learn high-level features on its own, domain knowledge of music has not been much used in prior work on deep learning based instrument recognition, though there are some exceptions [33, 37].

Our work differs from prior art in two respects. First, we focus on frame-level instrument recognition. Second, we explicitly employ the result of multi-pitch estimation [6, 43] as additional input to our CNN model, with a design that is motivated by the observation that instruments have different pitch ranges and have unique energy distributions in the partials [14].

3. DATASET

Training and evaluating a model for frame-level instrument recognition is possible due to the recent release of the MusicNet dataset [44]. It contains 330 freely-licensed music recordings by 10 composers with over 1 million annotated pitch and instrument labels on 34 hours of chamber music performances. Following [43], we use the pre-defined split of training and test sets, leading to 320 and 10 clips in the training and test sets, respectively. As there are only seven different instruments in the test set, we only consider the recognition of these seven instruments in our experiment. They are Piano, Violin, Viola, Cello, Clarinet, Bassoon and Horn. For the training set, we do not exclude the sounds from the instruments that are not on the list, but these instruments are not labeled.
Different clips use different numbers of instruments; see Table 1 for some statistics. For convenience, each clip is divided into 3-second segments. We use these segments as the input to our model. We zero-pad (i.e., add silence to) the last segment of each clip so that it is also 3 seconds long. Due to space limits, we refer readers to the MusicNet website (see reference [44] for the URL) and our project website (see the abstract for the URL) for details.

We note that the MedleyDB dataset [5] can also be used for frame-level instrument recognition, but we choose MusicNet for two reasons. First, MusicNet is more than three times larger than MedleyDB in terms of the total duration of the clips. Second, MusicNet has pitch labels for each instrument, while MedleyDB only annotates the melody line. However, as MusicNet contains only classical music and MedleyDB has more Pop and Rock songs, the two datasets feature fairly different instruments, and future work can consider them both.

4. INSTRUMENT RECOGNITION METHOD

4.1 Basic Network Architectures that Use CQT

To capture the timbral characteristics of each instrument, in our basic model we use CQT as the feature representation of music audio. CQT is a spectrographic representation that has a musically and perceptually motivated frequency scale [41]. We compute the CQT with librosa [34], with a sampling rate of 44,100 Hz and a 512-sample window size. We extract 88 frequency bins (one per note) with 12 bins per octave, which forms a matrix $X \in \mathbb{R}^{258 \times 88}$ as the input data for each 3-second input audio segment.

We experiment with two baseline models. The first one is adapted from the CNN model proposed by Liu and Yang [31], which has been shown effective for music auto-tagging. Instead of using 6 feature maps as the input to the model as they did, we just use CQT as the input.
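
The segmentation from Section 3 and the input size stated above can be checked with a small sketch. It assumes NumPy; the function name `split_into_segments` is hypothetical and not taken from the authors' code. Treating 512 samples as the spacing between successive frames is an assumption made here only because it reproduces the 258 frames per 3-second segment; the text itself calls 512 the window size.

```python
import numpy as np

SAMPLE_RATE = 44_100     # Hz, from Section 4.1
SEGMENT_SECONDS = 3      # segment length, from Section 3
FRAME_SPACING = 512      # ASSUMPTION: samples between successive CQT frames

def split_into_segments(waveform, sr=SAMPLE_RATE, seconds=SEGMENT_SECONDS):
    """Split a mono waveform into fixed-length segments (hypothetical helper).

    The last segment is zero-padded (silence) so that every segment
    has exactly sr * seconds samples.
    """
    seg_len = sr * seconds
    n_segments = max(1, int(np.ceil(len(waveform) / seg_len)))
    padded = np.zeros(n_segments * seg_len, dtype=waveform.dtype)
    padded[: len(waveform)] = waveform
    return padded.reshape(n_segments, seg_len)

# Toy 7-second "clip" of noise standing in for real audio.
clip = np.random.default_rng(0).standard_normal(7 * SAMPLE_RATE).astype(np.float32)
segments = split_into_segments(clip)

# Whole frames that fit into one 3-second segment under the assumed spacing.
frames_per_segment = segments.shape[1] // FRAME_SPACING
print(segments.shape, frames_per_segment)
```

Under these assumptions a 7-second clip yields three segments, the last of which contains one second of audio followed by two seconds of silence.
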
Moreover, we use frame-level annotations as the supervisory signal in training the network, instead of training the model in a weakly-supervised fashion as they did. A batch normalization layer [23] is added after each convolution layer. Figure 1a shows the model architecture.

The second one is adapted from a more recent CNN model proposed by Chou et al. [10], which has been shown effective for large-scale sound event detection. Its design is special in two respects. First, it uses 1D convolutions (along time) instead of 2D convolutions. While 2D convolutions analyze the input data as a chunk and convolve on both spectral and temporal dimensions, the 1D convolutions (along time) might better capture frequency and timbral information in each time frame [10, 29]. Second, it uses the so-called residual (Res) blocks [21, 22] to help the model learn deeper. Specifically, we employ three Res-blocks in between an early convolutional layer and a late convolutional layer. Each Res-block has three convolutional layers, so the network has a stack of 11 convolutional layers in total. We expect such a deep structure to learn well from a large-scale dataset such as MusicNet. Figure 1b shows its model architecture.

4.2 Adding Pitch

Although people usually expect that neural networks can learn high-level features such as pitch, onset and melody, our pilot study shows that with the basic architecture the network still confuses some instruments (e.g., clarinet, bassoon and horn), and that onset frames for each instrument are not nicely located (see the second row of Figure 3). We propose to remedy this with a pitch-aware model that explicitly takes pitch as input, in the hope that doing so can amplify onset and timbre information. We experiment with several methods for incorporating pitch into the model.

4.2.1 Source of Frame-level Pitch Labels

We consider two ways of getting pitch labels in our experiment.
One is to use the human-labeled ground truth pitch labels provided by MusicNet. However, in real-world applications, it is hard to get 100% correct pitch labels. Hence, we also use the pitch estimates predicted by a state-of-the-art multi-pitch estimator proposed by Thickstun et al. [43]. The authors proposed a translation-invariant network which combines a traditional filterbank with a convolutional neural network. The model shares parameters in the log-frequency domain, which exploits the frequency invariance of music to reduce the number of model parameters and to avoid overfitting to the training data. The model reached the top performance in the 2017 MIREX Multiple Fundamental Frequency Estimation evaluation [1]. The average pitch estimation accuracy, evaluated using mir_eval [38], is shown in Table 1.

4.2.2 Harmonic Series Feature

Figure 1c depicts the architecture of a proposed pitch-aware model. In this model, we aim to exploit the observation that the energy distribution of the partials constitutes a key factor in the perception of instrument timbre [14]. Motivated by [6], we propose the harmonic series feature (HSF) to capture the harmonic structure of music notes, calculated as follows. We are given the input pitch estimate (or ground truth) $P_0 \in \mathbb{R}^{258 \times 88}$, which is a matrix with the same size as the CQT matrix. The entries in $P_0$ take the value of either 0 or 1 in the case of ground truth pitch labels, and a value in $[0, 1]$ in the case of estimated pitches. If the value of an entry is close to 1, a music note with the corresponding fundamental frequency is likely active in that time frame. First, we construct a harmonic map that shifts the active entries in $P_0$ upwards by a multiple of the corresponding fundamental frequency ($f_0$).
That is, the $(t, f)$-th entry in the resulting harmonic map $P_n \in \mathbb{R}^{258 \times 88}$ is nonzero only if that frequency is $(n + 1)$ times an active $f_0$ in that frame, i.e., $f = f_0 \cdot (n + 1)$. Then, a harmonic series feature up to the $(n + 1)$-th harmonic (see footnote 2), denoted as $H_n \in \mathbb{R}^{258 \times 88}$, is computed by an element-wise sum of $P_0, P_1, \ldots$ up to $P_n$, as illustrated in Figure 1c. In what follows, we also refer to $H_n$ as HSF–n.

Footnote 2: We note that the first harmonic is the fundamental frequency.

When using HSF–n as input to the instrument recognition model, we concatenate the CQT $X$ and $H_n$ along the channel dimension, with the effect of emphasizing the partials in the input audio. The resulting matrix is then used as the input to a CNN model depicted in Figure 1c. The CNN model used here is also adapted from [10], using 1D convolutions, ResBlocks, and 11 convolutional layers in total. We call this model 'CQT + HSF–n' hereafter.

Figure 1: Three kinds of model structure used in this instrument recognition experiment. (a) Baseline CNN [31]. (b) CNN + ResBlocks [10]. (c) Pitch-aware model (CQT+HSF).

4.2.3 Other Ways of Using Pitch

We consider two other methods of using pitch information.

First, even without stressing the overtones, the matrix $P_0$ already contains information regarding which pitches are active per time frame. This information can be important because different instruments (e.g., violin, viola and cello) have different pitch ranges. Therefore, a simple way of taking pitch information into account is to concatenate $P_0$ with the input CQT $X$ along the frequency dimension (which is fine since we use 1D convolutions), leading to a $258 \times 176$ matrix, and then feed it to the early convolutional layer. This method exploits pitch information right from the beginning of the feature learning process. We call it the 'CQT + pitch (F)' method for short.
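
The harmonic series feature of Section 4.2.2 and the two input layouts described so far can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the authors' implementation: the function names `harmonic_map` and `harmonic_series_feature` are hypothetical, the shift for the $(n+1)$-th harmonic is assumed to be $12 \log_2(n+1)$ bins rounded to the nearest semitone, partials that fall above the 88th bin are assumed to be dropped, and the channel axis is placed first.

```python
import numpy as np

BINS_PER_OCTAVE = 12   # CQT resolution from Section 4.1

def harmonic_map(p0, n, bins_per_octave=BINS_PER_OCTAVE):
    """Return P_n: the entries of P_0 moved up to (n + 1) times their f0.

    ASSUMPTION: on a log-frequency axis the shift is rounded to the
    nearest bin; entries shifted past the top bin are discarded.
    """
    shift = int(round(bins_per_octave * np.log2(n + 1)))
    pn = np.zeros_like(p0)
    if shift < p0.shape[1]:
        pn[:, shift:] = p0[:, : p0.shape[1] - shift]
    return pn

def harmonic_series_feature(p0, n):
    """Return H_n, the element-wise sum of P_0, P_1, ..., P_n."""
    return np.sum([harmonic_map(p0, k) for k in range(n + 1)], axis=0)

# Toy pitch matrix: one note active at bin 20 in frame 10.
p0 = np.zeros((258, 88), dtype=np.float32)
p0[10, 20] = 1.0
h2 = harmonic_series_feature(p0, 2)           # HSF-2: f0 plus two overtones

# Stand-in for a CQT segment of the same size.
x = np.random.default_rng(0).random((258, 88)).astype(np.float32)

cqt_hsf = np.stack([x, h2], axis=0)           # 'CQT + HSF-n': channel axis
cqt_pitch_f = np.concatenate([x, p0], axis=1) # 'CQT + pitch (F)': frequency axis
print(np.flatnonzero(h2[10]), cqt_hsf.shape, cqt_pitch_f.shape)
```

With 12 bins per octave the second and third harmonics land 12 and 19 bins above the fundamental, so the toy note at bin 20 activates bins 20, 32 and 39 of HSF–2.
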
Second, we can also concatenate $P_0$ with the input CQT $X$ along the channel dimension, to allow the pitch information to directly influence the input CQT $X$. This tells the model the pitch of each note and its onset timing, which are critical in instrument recognition. We call this method 'CQT + pitch (C)'.

4.3 Implementation Details

All the networks are trained using stochastic gradient descent (SGD) with momentum 0.9. The initial learning rate is set to 0.01. The weighted cross entropy, as defined below, is used as the cost function for model training:

$$ l_n = -w_n \left[ y_n \cdot \log \sigma(\hat{y}_n) + (1 - y_n) \cdot \log\left(1 - \sigma(\hat{y}_n)\right) \right], \tag{1} $$

where $y_n$ and $\hat{y}_n$ are the ground truth and predicted label for the $n$-th instrument per time frame, $\sigma(\cdot)$ is the sigmoid function, which maps $\hat{y}_n$ into $[0, 1]$, and $w_n$ is a weight computed to emphasize positive labels and counter class imbalance between the instruments, based on the trick proposed in [39]. Code and model are built with the deep learning framework PyTorch.

Due to the final sigmoid layer, the output of the instrument recognition model is a continuous value in $[0, 1]$ for each instrument per frame, which can be interpreted as the likelihood of the presence of each instrument. To decide whether an instrument is present, we need to pick a threshold to binarize the result. Simply setting the threshold to 0.5 for all the instruments may not work well. Accordingly, we implement a simple threshold picking algorithm that selects the threshold (from 0.01, 0.02, ... to 0.99, in total 99 candidates) per instrument by maximizing the F1-score on the training set.

F1-score is the harmonic mean of precision and recall. In our experiments, we compute the F1-score independently (by concatenating the result for all the segments) for each instrument and then report the average result across instruments as the performance metric.
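
The threshold picking and the instrument-averaged F1-score described above can be sketched as follows. This is a minimal illustration assuming NumPy, not the authors' code: the function names `f1_score` and `pick_thresholds` are hypothetical, a frame is assumed to count as active when its score is greater than or equal to the threshold, ties between candidates are broken in favor of the smallest threshold, and the toy arrays stand in for real model outputs.

```python
import numpy as np

def f1_score(y_true, y_pred):
    """F1 = 2TP / (2TP + FP + FN), the harmonic mean of precision and recall."""
    tp = np.sum((y_true == 1) & (y_pred == 1))
    fp = np.sum((y_true == 0) & (y_pred == 1))
    fn = np.sum((y_true == 1) & (y_pred == 0))
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom > 0 else 0.0

def pick_thresholds(probs, labels):
    """Pick one threshold per instrument from 0.01, 0.02, ..., 0.99.

    probs, labels: arrays of shape (n_frames, n_instruments), obtained by
    concatenating all training segments along the frame axis.
    """
    candidates = np.arange(1, 100) / 100.0   # 99 candidates
    thresholds = []
    for k in range(probs.shape[1]):
        scores = [
            f1_score(labels[:, k], (probs[:, k] >= t).astype(int))
            for t in candidates
        ]
        thresholds.append(candidates[int(np.argmax(scores))])
    return np.array(thresholds)

# Toy sigmoid outputs and labels for 4 frames and 2 instruments.
probs = np.array([[0.9, 0.2], [0.8, 0.1], [0.3, 0.6], [0.2, 0.7]])
labels = np.array([[1, 0], [1, 0], [0, 1], [0, 1]])

thresholds = pick_thresholds(probs, labels)
binary = (probs >= thresholds).astype(int)    # broadcast per instrument
macro_f1 = np.mean([f1_score(labels[:, k], binary[:, k]) for k in range(2)])
print(thresholds, macro_f1)
```

In practice the thresholds would be selected on the training set and then applied unchanged to the test set, as the text describes.
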
We do not implement any smoothing algorithm to post-process the recognition result, though this may help [28].

5. PERFORMANCE STUDY

The evaluation result is shown in Table 2. We first compare the two models without pitch information. From the first and second rows, we see that adding Res-blocks indeed leads to a more accurate model. Therefore, we also use Res-blocks for the pitch-aware models.

We then examine the result when we use ground truth pitch labels to inform the model. From the upper half of Table 2, pitch-aware models (i.e., CQT + HSF) indeed outperform the models that only use CQT. While the CQT-only model based on [10] attains 0.887 average F1-score, the best model, CQT + HSF–3, reaches 0.933. Salient improvement is found for Viola, Clarinet, and Bassoon.

| Pitch source | Method | Piano | Violin | Viola | Cello | Clarinet | Bassoon | Horn | Avg. |
|---|---|---|---|---|---|---|---|---|---|
| none | CQT only (based on [31]) | 0.972 | 0.934 | 0.798 | 0.909 | 0.854 | 0.816 | 0.770 | 0.865 |
| none | CQT only (based on [10]) | **0.982** | **0.956** | **0.830** | **0.933** | **0.894** | **0.822** | **0.789** | **0.887** |
| ground-truth pitch | CQT + HSF–1 | **0.999** | **0.986** | **0.916** | **0.972** | **0.945** | 0.909 | 0.776 | 0.929 |
| ground-truth pitch | CQT + HSF–2 | 0.997 | 0.984 | 0.912 | 0.968 | 0.941 | 0.906 | 0.799 | 0.930 |
| ground-truth pitch | CQT + HSF–3 | 0.997 | 0.985 | 0.914 | 0.971 | 0.944 | 0.907 | 0.810 | **0.933** |
| ground-truth pitch | CQT + HSF–4 | 0.997 | **0.986** | 0.909 | 0.969 | 0.944 | 0.904 | **0.815** | 0.932 |
| ground-truth pitch | CQT + HSF–5 | 0.998 | 0.975 | 0.902 | 0.968 | 0.942 | **0.912** | 0.803 | 0.928 |
| estimated pitch by [43] | CQT + HSF–1 | 0.983 | 0.955 | **0.841** | 0.935 | 0.901 | 0.822 | 0.793 | 0.890 |
| estimated pitch by [43] | CQT + HSF–2 | 0.983 | 0.954 | 0.830 | 0.933 | 0.899 | 0.820 | 0.800 | 0.889 |
| estimated pitch by [43] | CQT + HSF–3 | 0.983 | 0.955 | 0.829 | 0.934 | 0.903 | 0.818 | **0.805** | 0.890 |
| estimated pitch by [43] | CQT + HSF–4 | 0.981 | 0.955 | 0.833 | **0.937** | 0.903 | 0.831 | 0.793 | 0.890 |
| estimated pitch by [43] | CQT + HSF–5 | **0.984** | 0.956 | 0.835 | 0.935 | **0.915** | **0.839** | **0.805** | **0.896** |
| estimated pitch by [43] | CQT + Pitch (F) | 0.983 | 0.955 | 0.829 | 0.936 | 0.887 | 0.819 | 0.791 | 0.886 |
| estimated pitch by [43] | CQT + Pitch (C) | 0.982 | **0.958** | 0.819 | 0.921 | 0.898 | 0.827 | 0.794 | 0.886 |

Table 2: Recognition accuracy (in F1-score) of models with and without pitch information, using either ground truth pitches or estimated pitches.
We use bold font to highlight the best result per instrument within each of the three groups of results.

Figure 2: Harmonic spectrum of Viola (top left), Violin (top right), Bassoon (bottom left) and Horn (bottom right), created by the software Audacity [42] for real-life recordings of instruments playing a single note.

Moreover, a comparison among the pitch-aware models shows that different instruments seem to prefer different numbers of harmonics n. Horn and Bassoon achieve their best F1-scores with larger n (i.e., using more partials), while Viola and Cello achieve their best F1-scores with smaller n (using fewer partials). This is possibly because string instruments have similar amplitudes for the first five overtones, as Figure 2 exemplifies. Therefore, when more overtones are emphasized, it may be hard for the model to detect such subtle differences, and this in turn causes confusion between similar string instruments. In contrast, there are salient differences in the amplitudes of the first five overtones for Horn and Bassoon, making HSF–5 effective.

Figure 3 shows qualitative results for four clips in the test set. By comparing the first two rows and the last row, we see that onset frames are clearly identified by the HSF-based model. Furthermore, when adding HSF, it seems easier for a model to distinguish between similar instruments (e.g., violin versus viola). These examples show that adding HSF helps the model learn onset and timbre information.

Next, we examine the result when we use the pitch estimates provided by the model of Thickstun et al. [43]. We know already from Table 1 that multi-pitch estimation is not perfect. Accordingly, as shown in the last part of Table 2, the performance of the pitch-aware models degrades, though it is still better than that of the model without pitch information. The best result is obtained by CQT + HSF–5, reaching 0.896 average F1-score.
Except for Violin, CQT + HSF–5 outperforms CQT-only for all the instruments. We see salient improvement for Viola, Clarinet, Bassoon and Horn, for which the CQT-only model performs relatively worse. This shows that HSF helps highlight differences in the spectral patterns of the instruments.

Besides, similar to the case when using ground truth pitch labels, when using the estimated pitches, we see that Viola still prefers using fewer harmonic maps, whereas Bassoon and Horn prefer more. Given the observation that different instruments prefer different numbers of harmonics, it may be interesting to design an automatic way to dynamically decide the number of harmonic maps per frame, to further improve the result.

The fourth row of Figure 3 gives some results for CQT + HSF–5 based on estimated pitches. Compared to the result of CQT only (second row), we see that CQT + HSF–5 nicely reduces the confusion between Violin and Viola for the solo violin piece, and reinforces the onset timing for the string quartet piece.

Figure 3: Prediction results of different methods for four test clips. The first row shows the ground truth frame-level instrument labels, where the horizontal axis denotes time. The other rows show the frame-level instrument recognition result for a model that only uses CQT ('CQT only'; based on [10]) and three pitch-aware models that use either ground truth or estimated pitches. We use black shade to indicate the instrument(s) that are considered active in the labels or in the recognition result in each time frame.

Moving forward, we examine the result of the other two pitch-based methods, CQT + Pitch (F) and CQT + Pitch (C), again using estimated pitches. From the last two rows of Table 2, we see that these two methods do not perform better than even the second CQT-only baseline. As these two pitch-based methods take the pitch estimates directly as the model input, we conjecture that they are more sensitive to errors in multi-pitch estimation and accordingly cannot perform well. From the recognition result of the string quartet clip in the third row of Figure 3, we see that the CQT + Pitch (F) method cannot distinguish between similar instruments such as Violin and Viola. This suggests that HSF might be a better way to exploit pitch information.

Figure 4: Frame-level instrument recognition result for a pop song, Make You Feel My Love by Adele, using the baseline CNN [31] (top), CNN + Res-blocks [10] (middle) and CQT + HSF–5 using estimated pitches (bottom).

Finally, out of curiosity, we test our models on a famous pop song (even though our models are trained on classical music). Figure 4 shows the prediction result for the song Make You Feel My Love by Adele. It is encouraging to see that our models correctly detect the Piano used throughout the song and the string instruments used in the middle solo part. Moreover, they correctly give almost zero estimates for the wind and brass instruments. In addition, when using the Res-blocks, the prediction errors on clarinet are reduced. When using the pitch-aware model, the prediction errors on Violin and Cello at the beginning of the song are reduced. Besides, the Piano timbre is also strengthened when Piano and the strings play together at the bridge.

6. CONCLUSION

In this paper, we have proposed several methods for frame-level instrument recognition. Using CQT as the input feature, our model can achieve 88.7% average F1-score for recognizing seven instruments in the MusicNet dataset. Even better results can be obtained by the proposed pitch-aware models. Among the proposed methods, the HSF-based models achieve the best result, with average F1-scores of 89.6% and 93.3% when using estimated and ground truth pitch information, respectively.

In future work, we will add MedleyDB to our training set to cover more instruments and music genres.
We would also like to explore joint learning frameworks and recurrent models (e.g., [8, 9, 20]) for better accuracy.

7. ACKNOWLEDGEMENT

This work was funded by a project with KKBOX Inc.

8. REFERENCES

[1] MIREX multiple fundamental frequency estimation evaluation result, 2017. [Online] http://www.music-ir.org/mirex/wiki/2017:Multiple_Fundamental_Frequency_Estimation_%26_Tracking_Results_-_MIREX_Dataset.

[2] Giulio Agostini, Maurizio Longari, and Emanuele Pollastri. Musical instrument timbres classification with spectral features. EURASIP Journal on Applied Signal Processing, 1:5–14, 2003.

[3] Kristina Andersen and Peter Knees. Conversations with expert users in music retrieval and research challenges for creative MIR. In Proc. Int. Soc. Music Information Retrieval Conf., pages 122–128, 2016.

[4] Jayme Garcia Arnal Barbedo and George Tzanetakis. Musical instrument classification using individual partials. IEEE Trans. Audio, Speech, and Language Processing, 19(1):111–122, 2011.

[5] Rachel Bittner, Justin Salamon, Mike Tierney, Matthias Mauch, Chris Cannam, and Juan Bello. MedleyDB: A multitrack dataset for annotation-intensive MIR research. In Proc. Int. Soc. Music Information Retrieval Conf., 2014. [Online] http://medleydb.weebly.com/.

[6] Rachel M. Bittner, Brian McFee, Justin Salamon, Peter Li, and Juan P. Bello. Deep salience representations for f0 estimation in polyphonic music. In Proc. Int. Soc. Music Information Retrieval Conf., pages 63–70, 2017.

[7] Juan J. Bosch, Jordi Janer, Ferdinand Fuhrmann, and Perfecto Herrera. A comparison of sound segregation techniques for predominant instrument recognition in musical audio signals. In Proc. Int. Soc. Music Information Retrieval Conf., pages 559–564, 2012. [Online] http://mtg.upf.edu/download/datasets/irmas/.

[8] Ning Chen and Shijun Wang. High-level music descriptor extraction algorithm based on combination of multi-channel CNNs and LSTM. In Proc. Int. Soc. Music Information Retrieval Conf., pages 509–514, 2017.

[9] Keunwoo Choi, Gyorgy Fazekas, Mark Sandler, and Kyunghyun Cho. Convolutional recurrent neural networks for music classification. In Proc. IEEE Int. Conf. Acoustics, Speech, and Signal Processing, 2017.

[10] Szu-Yu Chou, Jyh-Shing Jang, and Yi-Hsuan Yang. Learning to recognize transient sound events using attentional supervision. In Proc. Int. Joint Conf. Artificial Intelligence, 2018.

[11] Jia Deng et al. ImageNet: A Large-Scale Hierarchical Image Database. In Proc. Conf. Computer Vision and Pattern Recognition, 2009.

[12] Aleksandr Diment, Padmanabhan Rajan, Toni Heittola, and Tuomas Virtanen. Modified group delay feature for musical instrument recognition. In Proc. Int. Symp. Computer Music Multidisciplinary Research, 2013.

[13] Zhiyao Duan, Jinyu Han, and Bryan Pardo. Multi-pitch streaming of harmonic sound mixtures. IEEE/ACM Trans. Audio, Speech, and Language Processing, 22(1):138–150, 2014.

[14] Zhiyao Duan, Yungang Zhang, Changshui Zhang, and Zhenwei Shi. Unsupervised single-channel music source separation by average harmonic structure modeling. IEEE Trans. Audio, Speech, and Language Processing, 16(4):766–778, 2008.

[15] Slim Essid, Gaël Richard, and Bertrand David. Musical instrument recognition by pairwise classification strategies. IEEE Trans. Audio, Speech, and Language Processing, 14(4):1401–1412, 2006.

[16] Alberto Garcia-Garcia, Sergio Orts-Escolano, Sergiu Oprea, Victor Villena-Martinez, and José García Rodríguez. A review on deep learning techniques applied to semantic segmentation. arXiv preprint arXiv:1704.06857, 2017.

[17] Masataka Goto, Hiroki Hashiguchi, Takuichi Nishimura, and Ryuichi Oka. RWC Music Database: Popular, classical and jazz music databases. In Proc. Int. Society of Music Information Retrieval Conf., pages 287–288, 2002. [Online] https://staff.aist.go.jp/m.goto/RWC-MDB/rwc-mdb-i.html.
[18] Matt Hallaron et al. University of Iowa musical instrument samples. University of Iowa, 1997. [Online] http://theremin.music.uiowa.edu/MIS.html.

[19] Yoonchang Han, Jaehun Kim, and Kyogu Lee. Deep convolutional neural networks for predominant instrument recognition in polyphonic music. IEEE/ACM Trans. Audio, Speech, and Language Processing, 25(1):208–221, 2017.

[20] Curtis Hawthorne, Erich Elsen, Jialin Song, Adam Roberts, Ian Simon, Colin Raffel, Jesse Engel, Sageev Oore, and Douglas Eck. Onsets and frames: Dual-objective piano transcription. In Proc. Int. Soc. Music Information Retrieval Conf., 2018.

[21] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In Proc. IEEE Int. Conf. Computer Vision and Pattern Recognition, 2016.

[22] Shawn Hershey et al. CNN architectures for large-scale audio classification. In Proc. IEEE Int. Conf. Acoustics, Speech and Signal Processing, 2017.

[23] Sergey Ioffe and Christian Szegedy. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In Proc. Int. Conf. Machine Learning, pages 448–456, 2015.

[24] Cyril Joder, Slim Essid, and Gaël Richard. Temporal integration for audio classification with application to musical instrument classification. IEEE Trans. Audio, Speech and Language Processing, 17(1):174–186, 2009.

[25] Tetsuro Kitahara, Masataka Goto, Kazunori Komatani, Tetsuya Ogata, and Hiroshi G. Okuno. Instrument identification in polyphonic music: Feature weighting with mixed sounds, pitch-dependent timbre modeling, and use of musical context. In Proc. Int. Soc. Music Information Retrieval Conf., pages 558–563, 2005.

[26] Yann LeCun, Yoshua Bengio, and Geoffrey Hinton. Deep learning. Nature, 521(7553):436–444, 2015.

[27] Peter Li, Jiyuan Qian, and Tian Wang. Automatic instrument recognition in polyphonic music using convolutional neural networks. arXiv preprint arXiv:1511.05520, 2015.

[28] Dawen Liang, Matthew D. Hoffman, and Gautham J. Mysore. A generative product-of-filters model of audio. In Proc. Int. Conf. Learning Representations, 2014.

[29] Hyungui Lim, Jeongsoo Park, Kyogu Lee, and Yoonchang Han. Rare sound event detection using 1D convolutional recurrent neural networks. In Proc. Int. Workshop on Detection and Classification of Acoustic Scenes and Events, 2017.

[30] Tsung-Yi Lin et al. Microsoft COCO: Common objects in context. In Proc. European Conf. Computer Vision, pages 740–755, 2014.

[31] Jen-Yu Liu and Yi-Hsuan Yang. Event localization in music auto-tagging. In Proc. ACM Int. Conf. Multimedia, pages 1048–1057, 2016.

[32] Arie Livshin and Xavier Rodet. The significance of the non-harmonic “noise” versus the harmonic series for musical instrument recognition. In Proc. Int. Soc. Music Information Retrieval Conf., 2006.

[33] Vincent Lostanlen and Carmine-Emanuele Cella. Deep convolutional networks on the pitch spiral for musical instrument recognition. In Proc. Int. Soc. Music Information Retrieval Conf., pages 612–618, 2016.

[34] Brian McFee, Colin Raffel, Dawen Liang, Daniel PW Ellis, Matt McVicar, Eric Battenberg, and Oriol Nieto. librosa: Audio and music signal analysis in python. In Proc. Python in Science Conf., pages 18–25, 2015. [Online] https://librosa.github.io/librosa/.

[35] Taejin Park and Taejin Lee. Musical instrument sound classification with deep convolutional neural network using feature fusion approach. arXiv preprint arXiv:1512.07370, 2015.

[36] Kumar Ashis Pati and Alexander Lerch. A dataset and method for electric guitar solo detection in rock music. In Proc. Audio Engineering Soc. Conf., 2017.

[37] Jordi Pons, Thomas Lidy, and Xavier Serra. Experimenting with musically motivated convolutional neural networks. In Proc. Int. Workshop on Content-based Multimedia Indexing, 2016.
[38] Colin Raffel, Brian McFee, Eric J. Humphrey, Justin Salamon, Oriol Nieto, Dawen Liang, and Daniel P. W. Ellis. mir_eval: a transparent implementation of common MIR metrics. In Proc. Int. Soc. Music Information Retrieval Conf., 2014. [Online] https://github.com/craffel/mir_eval.

[39] Rif A. Saurous et al. The story of audioset, 2017. [Online] http://www.cs.tut.fi/sgn/arg/dcase2017/documents/workshop_presentations/the_story_of_audioset.pdf.

[40] Jan Schlüter. Learning to pinpoint singing voice from weakly labeled examples. In Proc. Int. Soc. Music Information Retrieval Conf., 2016.

[41] Christian Schoerkhuber and Anssi Klapuri. Constant-Q transform toolbox for music processing. In Proc. Sound and Music Computing Conf., 2010.

[42] Audacity Team. Audacity. https://www.audacityteam.org/, 1999-2018.

[43] John Thickstun, Zaid Harchaoui, Dean P. Foster, and Sham M. Kakade. Invariances and data augmentation for supervised music transcription. In Proc. IEEE Int. Conf. Acoustics, Speech, and Signal Processing, 2018. [Online] https://github.com/jthickstun/thickstun2018invariances.

[44] John Thickstun, Zaid Harchaoui, and Sham M. Kakade. Learning features of music from scratch. In Proc. Int. Conf. Learning Representations, 2017. [Online] https://homes.cs.washington.edu/~thickstn/musicnet.html.

[45] Ju-Chiang Wang, Hsin-Min Wang, and Shyh-Kang Jeng. Playing with tagging: A real-time tagging music player. In Proc. IEEE Int. Conf. Acoustics, Speech and Signal Processing, pages 77–80, 2014.

[46] Hanna Yip and Rachel M. Bittner. An accurate open-source solo musical instrument classifier. In Proc. Int. Soc. Music Information Retrieval Conf., Late-Breaking Demo Paper, 2017.

[47] Li-Fan Yu, Li Su, and Yi-Hsuan Yang. Sparse cepstral codes and power scale for instrument identification. In Proc. IEEE Int. Conf. Acoustics, Speech and Signal Processing, pages 7460–7464, 2014.
