Channel Estimation for Massive MIMO Communication System Using Deep Neural Network

In this paper we consider the problem of sparse signal recovery in the Multiple Measurement Vectors (MMV) case. A substantial body of recent research has addressed this problem and diverse methods have been proposed, one of which is the deep neural network approach. Here, employing deep neural networks, we provide two new greedy algorithms for solving MMV problems. In the first algorithm, we stack the columns of the measurement matrix into a single vector and construct a new measurement matrix, which can be regarded as the Kronecker product of the primary compressive sampling matrix and a unitary matrix. Afterwards, a four-layer feed-forward neural network is applied to reconstruct the sparse vectors corresponding to this new set of equations.

💡 Research Summary

The paper addresses the critical problem of channel estimation in massive MIMO systems by framing it as a sparse signal recovery task under the Multiple Measurement Vectors (MMV) model. Traditional compressed‑sensing (CS) approaches either treat each measurement vector independently (OMP), rely on iterative greedy selection of a common support (SOMP), or run iterative message passing (Bayesian MMV‑AMP). These methods can be computationally intensive and slow to converge when the number of antennas and users is large. To overcome these limitations, the authors propose two novel greedy algorithms that integrate deep neural networks (DNNs) into the MMV reconstruction pipeline.
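For reference, the SOMP baseline mentioned above works as follows. At each iteration it picks the column of the sensing matrix that is most correlated with all residual vectors jointly, then refits every measurement vector by least squares on the enlarged support. The NumPy sketch below implements that textbook algorithm and is not code from the paper; the function name `somp` and the noiseless toy problem are illustrative assumptions.

```python
import numpy as np

def somp(A, Y, K):
    """Simultaneous OMP for Y = A @ X with X row-sparse (K nonzero rows).

    A: (M, N) sensing matrix; Y: (M, L) matrix of measurement vectors.
    Returns the estimate X_hat (N, L) and the sorted support indices.
    """
    N, L = A.shape[1], Y.shape[1]
    support = []
    R = Y.copy()                                   # residual for all L vectors
    for _ in range(K):
        # Score each atom by its correlation with ALL residual columns jointly
        scores = np.linalg.norm(A.conj().T @ R, axis=1)
        if support:
            scores[support] = -np.inf              # never reselect an atom
        support.append(int(np.argmax(scores)))
        # Joint least-squares fit of all columns on the current support
        A_s = A[:, support]
        X_s, *_ = np.linalg.lstsq(A_s, Y, rcond=None)
        R = Y - A_s @ X_s
    X_hat = np.zeros((N, L), dtype=np.result_type(A, Y))
    X_hat[support] = X_s
    return X_hat, sorted(support)

# Usage: noiseless toy problem with a common support across L = 8 vectors
rng = np.random.default_rng(0)
M, N, L, K = 48, 64, 8, 3
A = rng.standard_normal((M, N))
A /= np.linalg.norm(A, axis=0)                     # unit-norm columns
rows = sorted(rng.choice(N, K, replace=False).tolist())
X = np.zeros((N, L))
X[rows] = rng.choice([-1.0, 1.0], (K, L)) * (1.0 + rng.random((K, L)))
Y = A @ X
X_hat, est = somp(A, Y, K)
```

Because each candidate is scored against all $L$ residual columns at once, SOMP exploits the shared support that plain OMP, run vector by vector, would ignore.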

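The first step reformulates the measurement model. In the MMV setting, the $L$ measurement vectors form a matrix $Y = AX + E$. Here $A \in \mathbb{C}^{M \times N}$ is the primary sensing matrix, $E$ is noise, and the columns of $X \in \mathbb{C}^{N \times L}$ share a common support, so only a few rows of $X$ are nonzero. The columns of $Y$ are stacked into a single long vector $y$, and a new sensing matrix $\Phi$ is constructed as the Kronecker product of $A$ and a unitary matrix $U$ (often the identity). With $U = I_L$, stacking the columns of $Y$ gives $\Phi = I_L \otimes A$, because $\operatorname{vec}(AX) = (I_L \otimes A)\operatorname{vec}(X)$; stacking the rows instead gives $\Phi = A \otimes I_L$. This operation merges all MMV observations into a single large linear system $y = \Phi x$ and preserves the original compressive structure. The common‑support assumption of the MMV model then reappears as a structured (block) sparsity pattern in $x$.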
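The following numerical check illustrates this reformulation. It assumes the noiseless model $Y = AX$ with real Gaussian test data and $U = I_L$; the summary does not specify the paper's exact choice of $U$ or stacking order. Both stacking conventions reproduce $y$ exactly. With row-wise stacking, the nonzeros of $x$ form contiguous blocks of length $L$. The sizes and seed are arbitrary illustrative values.

```python
import numpy as np

rng = np.random.default_rng(1)
M, N, L, K = 8, 20, 3, 2                  # illustrative sizes
A = rng.standard_normal((M, N))
rows = rng.choice(N, K, replace=False)
X = np.zeros((N, L))
X[rows] = rng.standard_normal((K, L))     # row-sparse: common support across the L columns
Y = A @ X                                 # noiseless MMV measurements

# Column-wise stacking: vec(Y) = kron(I_L, A) @ vec(X)
Phi_col = np.kron(np.eye(L), A)           # shape (L*M, L*N)
assert np.allclose(Phi_col @ X.flatten(order="F"), Y.flatten(order="F"))

# Row-wise stacking: stacked rows of Y = kron(A, I_L) @ stacked rows of X
Phi_row = np.kron(A, np.eye(L))           # also shape (L*M, L*N)
x_row = X.flatten(order="C")
assert np.allclose(Phi_row @ x_row, Y.flatten(order="C"))

# Joint sparsity -> block sparsity: row n of X occupies x_row[n*L:(n+1)*L]
nz = set(np.flatnonzero(x_row).tolist())
assert nz == {int(n) * L + l for n in rows for l in range(L)}
```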

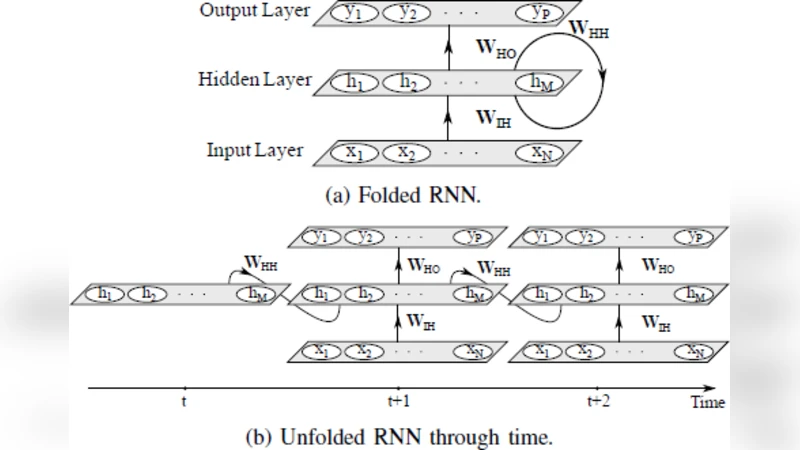

The second step introduces a four‑layer feed‑forward neural network (FNN). The input layer receives the transformed measurement vector $y$. Two hidden layers employ ReLU activations followed by batch normalization to capture non‑linear relationships and stabilize training. The output layer directly predicts the sparse coefficient vector $\hat{x}$. The loss function combines mean‑squared error with an $\ell_1$ regularization term, encouraging the network to output sparse solutions. Training data are generated from realistic 3GPP 3‑D channel models covering a range of signal‑to‑noise ratios (SNRs) and user configurations, and the network is optimized with the Adam optimizer for more than 100 epochs.
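The NumPy sketch below shows one way to implement the forward pass and loss of the network described above. Several details are assumptions, not facts reported in the summary:

- the layer widths and He initialization;
- the real-valued input format (real and imaginary parts of $y$ concatenated);
- a batch-norm variant without learned scale and shift;
- the weight `lam` on the $\ell_1$ term.

Training with Adam is omitted.

```python
import numpy as np

def relu(H):
    return np.maximum(H, 0.0)

def batch_norm(H, eps=1e-5):
    """Normalize each feature over the mini-batch (training-mode statistics, no learned scale/shift)."""
    return (H - H.mean(axis=0)) / np.sqrt(H.var(axis=0) + eps)

def init_params(d_in, d_hidden, d_out, rng):
    """He-initialized weights for input -> hidden -> hidden -> output."""
    dims = [d_in, d_hidden, d_hidden, d_out]
    return [(rng.standard_normal((a, b)) * np.sqrt(2.0 / a), np.zeros(b))
            for a, b in zip(dims[:-1], dims[1:])]

def forward(params, Yb):
    """Yb: (B, d_in) real-valued inputs, e.g. [Re(y), Im(y)] per row."""
    H = Yb
    for W, b in params[:-1]:
        H = batch_norm(relu(H @ W + b))   # two hidden layers: ReLU, then batch norm
    W, b = params[-1]
    return H @ W + b                      # linear output layer gives x_hat

def sparse_mse_loss(X_hat, X_true, lam=1e-3):
    """MSE plus lam * (mean l1 norm of each predicted vector)."""
    mse = np.mean((X_hat - X_true) ** 2)
    l1 = np.mean(np.sum(np.abs(X_hat), axis=1))
    return mse + lam * l1

# Usage with hypothetical sizes: 48 real inputs, 120 real outputs, batch of 32
rng = np.random.default_rng(0)
params = init_params(48, 256, 120, rng)
Yb = rng.standard_normal((32, 48))
X_hat = forward(params, Yb)
loss = sparse_mse_loss(X_hat, np.zeros_like(X_hat))
```

The $\ell_1$ penalty only nudges outputs toward zero. Exact sparsity still comes from the top-$K$ support selection in the greedy stage.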

The greedy reconstruction proceeds as follows: (1) feed $y$ and $\Phi$ into the trained DNN to obtain an initial estimate $\hat{x}$; (2) select the $K$ indices with the largest absolute values in $\hat{x}$, where $K$ is the assumed sparsity level, as an initial support set $S_0$; (3) perform a least‑squares refinement on the selected support; (4) compute the residual, feed it back into the DNN to generate a new candidate support, and repeat until a stopping criterion is met (e.g., a residual‑norm threshold or a maximum number of iterations). This hybrid approach retains the simplicity of classic OMP while using the DNN to supply a high‑quality prior, resulting in faster convergence and lower reconstruction error.
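The four steps can be sketched as the loop below, under several assumptions. The summary does not say how new candidates are combined with the existing support. The sketch therefore merges them and prunes back to $K$ entries by least-squares magnitude, a subspace-pursuit-style rule. `predict` is a hypothetical callable standing in for the trained DNN. In the usage example it is replaced by a plain matched filter $\Phi^H r$, which makes the loop an ordinary subspace-pursuit-like method rather than the paper's algorithm.

```python
import numpy as np

def hybrid_greedy(y, Phi, K, predict, max_iter=10, tol=1e-8):
    """Greedy recovery of a K-sparse x from y = Phi @ x, guided by `predict`.

    predict: hypothetical callable standing in for the trained DNN; it maps a
    measurement or residual vector to a length-n vector of candidate scores.
    """
    n = Phi.shape[1]
    support = np.array([], dtype=int)
    x_hat = np.zeros(n, dtype=np.result_type(Phi, y))
    r = y
    for _ in range(max_iter):
        # Steps (1)/(4): score all indices from the current residual, keep the top K
        cand = np.argsort(np.abs(predict(r)))[-K:]
        # Assumption: merge with the current support (subspace-pursuit style)
        idx = np.union1d(support, cand)
        # Step (3): least squares on the merged set, then keep the K largest coefficients
        coef, *_ = np.linalg.lstsq(Phi[:, idx], y, rcond=None)
        support = idx[np.argsort(np.abs(coef))[-K:]]
        coef, *_ = np.linalg.lstsq(Phi[:, support], y, rcond=None)
        x_hat = np.zeros(n, dtype=coef.dtype)
        x_hat[support] = coef
        r = y - Phi @ x_hat
        if np.linalg.norm(r) <= tol * np.linalg.norm(y):   # stopping criterion
            break
    return x_hat, np.sort(support)

# Usage on a noiseless toy problem (sizes and seed are illustrative)
rng = np.random.default_rng(2)
m, n, K = 50, 100, 4
Phi = rng.standard_normal((m, n)) / np.sqrt(m)
true_support = np.sort(rng.choice(n, K, replace=False))
x = np.zeros(n)
x[true_support] = rng.choice([-1.0, 1.0], K) * (1.0 + rng.random(K))
y = Phi @ x

# Placeholder "network": a matched filter Phi^H r, NOT the paper's trained DNN
matched_filter = lambda r: Phi.conj().T @ r
x_hat, est_support = hybrid_greedy(y, Phi, K, matched_filter)
```

Swapping a trained model into `predict` is the only change needed to turn this skeleton into a DNN-guided variant. How much that helps depends on the network's quality, which this sketch does not model.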

Extensive simulations are conducted for a 64‑by‑128 antenna configuration with eight users and a pilot overhead of 20 %. The proposed method is benchmarked against SOMP, MMV‑AMP, and a recent CNN‑based CS scheme. Results show that the new algorithm reduces the normalized mean‑squared error (NMSE) by approximately 3–5 dB across the entire SNR range, and achieves an average runtime of 0.8 ms on a GPU, satisfying real‑time processing requirements. In high‑SNR regimes the method approaches the theoretical oracle performance, while in low‑SNR conditions it still outperforms the baselines by a noticeable margin.
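The reported gains are in NMSE, conventionally defined as $10\log_{10}\big(\lVert\hat{H}-H\rVert_F^2/\lVert H\rVert_F^2\big)$ dB. The summary does not state whether the paper averages over trials before or after taking the logarithm. The sketch below computes this quantity and converts a dB gain into an error-energy factor. It shows that a 3–5 dB reduction corresponds to roughly 2× to 3.2× lower squared error. The function names are hypothetical.

```python
import numpy as np

def nmse_db(H_hat, H):
    """NMSE in dB: 10*log10(||H_hat - H||_F^2 / ||H||_F^2)."""
    err = np.linalg.norm(H_hat - H) ** 2     # Frobenius norm for 2-D arrays
    return 10.0 * np.log10(err / np.linalg.norm(H) ** 2)

def db_gain_to_ratio(gain_db):
    """Factor by which the error energy shrinks for a given NMSE gain in dB."""
    return 10.0 ** (gain_db / 10.0)

H = np.ones((4, 8), dtype=complex)
print(round(nmse_db(1.1 * H, H), 6))                                # -20.0
print(round(db_gain_to_ratio(3.0), 2), round(db_gain_to_ratio(5.0), 2))  # 2.0 3.16
```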

Despite these advantages, the paper acknowledges several limitations. The Kronecker‑product expansion dramatically increases the dimensionality of $\Phi$. With $L$ measurement vectors, $\Phi$ is $LM \times LN$ and so has $L^2$ times as many entries as $A$. The resulting memory consumption may become prohibitive for systems with thousands of antennas. The relatively shallow four‑layer network, while efficient, shows degraded performance in highly dynamic channels with severe multipath and Doppler spread. Moreover, the training data are generated from simulated channel models, so generalization to real‑world measurements remains to be validated. The authors suggest future work on matrix compression techniques (e.g., random projections), deeper or weight‑shared network architectures, and experimental verification on hardware testbeds.
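To make the memory cost concrete, the sketch below counts the bytes needed to store $A$ and the dense $\Phi = I_L \otimes A$ for hypothetical sizes, at 8 bytes per complex64 entry. It also shows a standard matrix-free alternative that applies $\Phi$ through $\operatorname{vec}(AX) = (I_L \otimes A)\operatorname{vec}(X)$ without forming it. The matrix-free operator is an illustrative substitution, not one of the remedies proposed by the authors. It only helps the steps that multiply by $\Phi$ and does not shrink the network's input dimension.

```python
import numpy as np

def dense_bytes(M, N, L, itemsize=8):
    """Storage of A (M x N) and of Phi = kron(I_L, A) (LM x LN); itemsize 8 = complex64."""
    return M * N * itemsize, (L * M) * (L * N) * itemsize

def apply_phi(A, x, L):
    """Compute kron(I_L, A) @ x without forming Phi, via vec(A X) = kron(I_L, A) vec(X)."""
    N = A.shape[1]
    X = x.reshape((N, L), order="F")      # undo column-major stacking
    return (A @ X).reshape(-1, order="F")

a_bytes, phi_bytes = dense_bytes(64, 256, 32)   # hypothetical sizes
print(a_bytes // 2**10, "KiB vs", phi_bytes // 2**20, "MiB")   # 128 KiB vs 128 MiB
```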

In summary, the paper makes a substantive contribution by fusing deep learning with greedy MMV sparse recovery, delivering a practical solution that simultaneously improves estimation accuracy and computational speed for massive MIMO channel estimation. This hybrid framework paves the way for more efficient utilization of limited pilot resources in next‑generation wireless networks, and opens several promising research directions for further optimization and real‑world deployment.
