Internet of Things (IoT) and Cloud Computing Enabled Disaster Management

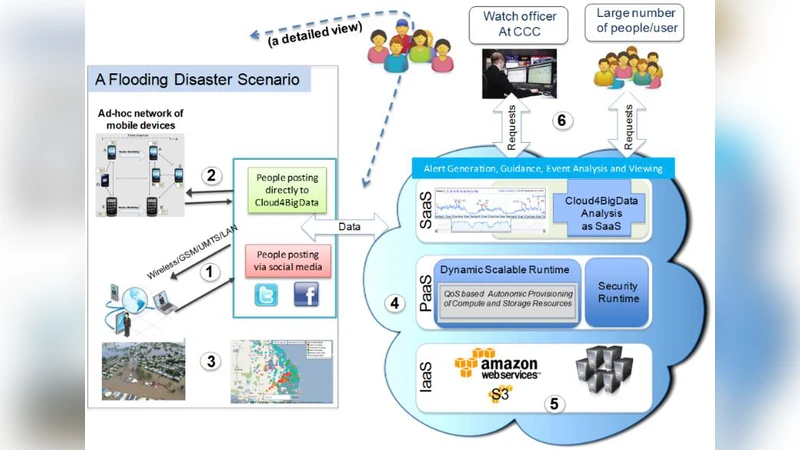

Disaster management demands near real-time information dissemination so that emergency services can be provided to the right people at the right time. Recent advances in information and communication technologies enable the collection of real-time information from various sources. For example, sensors deployed in the field collect data about the environment. Similarly, social networks like Twitter and Facebook can help to collect data from people in the disaster zone. On one hand, inadequate situation awareness in disasters has been identified as one of the primary factors in human errors with grave consequences such as loss of lives and destruction of critical infrastructure. On the other hand, the growing ubiquity of social media and mobile devices, and the pervasive nature of the Internet of Things, means that there are more sources of outbound traffic, which ultimately results in a data deluge that begins shortly after the onset of a disaster event and leads to the problem of an information tsunami. In addition, security and privacy play a crucial role in preventing misuse of the system, whether through intrusions into data or through use of information beyond the purpose for which it was intended. …. In this chapter, we provide such a situation-aware application to support the disaster management data lifecycle, i.e. from data ingestion and processing to alert dissemination. We utilize cloud computing, Internet of Things and social computing technologies to achieve a scalable, efficient, and usable situation-aware application called Cloud4BigData.

💡 Research Summary

The paper addresses the critical need for near‑real‑time information dissemination in disaster management, where the rapid influx of heterogeneous data from sensors, mobile devices, and social media creates an “information tsunami.” The authors argue that inadequate situational awareness is a major cause of human error during emergencies, and that existing ICT solutions lack the scalability and resilience required to process massive data streams in a timely manner. To overcome these challenges, they propose a comprehensive framework called Cloud4BigData, which integrates three disruptive technologies: the Internet of Things (IoT), cloud computing, and big‑data analytics.

The framework is organized into four functional layers. First, data ingestion collects continuous streams from field‑deployed environmental sensors (e.g., temperature, humidity, water level), smartphones, and social platforms such as Twitter and Facebook. Second, network transport employs Delay‑Tolerant Networks (DTNs) and Transient Social Networks (TSNs) to ensure data delivery even when conventional communication infrastructure is damaged or overloaded. DTNs use a store‑and‑forward paradigm, allowing intermediate nodes to buffer messages until a connection becomes available, while TSNs enable opportunistic peer‑to‑peer exchanges among mobile devices for rapid alert propagation.
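The store-and-forward behaviour described above can be illustrated with a minimal Python sketch. It is not the paper's implementation: the `Message` and `DtnNode` classes, the node names, and the policy of handing every buffered message to the first contact are all assumptions made for illustration. Real DTN routing protocols are more selective about which contacts receive which messages.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Message:
    msg_id: str
    destination: str
    payload: str


@dataclass
class DtnNode:
    """Hypothetical DTN node that buffers messages until a contact appears."""
    name: str
    buffer: deque = field(default_factory=deque)
    delivered: list = field(default_factory=list)

    def receive(self, msg: Message) -> None:
        # Deliver locally if this node is the destination; otherwise store it.
        if msg.destination == self.name:
            self.delivered.append(msg)
        else:
            self.buffer.append(msg)

    def contact(self, peer: "DtnNode") -> int:
        """Forward every buffered message to a peer that has come into range."""
        forwarded = 0
        while self.buffer:
            peer.receive(self.buffer.popleft())
            forwarded += 1
        return forwarded


# A sensor has no direct link to the coordination centre, so a passing
# relay carries the reading for it (all names are illustrative).
sensor = DtnNode("sensor-1")
relay = DtnNode("relay-vehicle")
ccc = DtnNode("ccc")

sensor.receive(Message("m1", "ccc", "water_level=4.2m"))
sensor.contact(relay)   # relay comes into range of the sensor
relay.contact(ccc)      # relay later reaches the coordination centre
```

The reading reaches `ccc` even though the sensor and the centre are never connected at the same time, which is the property the transport layer relies on when infrastructure is damaged.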

Third, cloud‑based processing leverages elastic infrastructure (IaaS/PaaS) and streaming engines (e.g., Apache Storm, Spark Streaming) to perform real‑time cleansing, geo‑aggregation, sentiment analysis, and risk modeling. The output is a quantified “situational awareness score” that is visualized on a dashboard for decision‑makers at the Crisis Coordination Centre (CCC). Fourth, alert dissemination and decision support automatically generate targeted notifications (SMS, app push, broadcast) based on the computed score, allowing responders to allocate resources and issue warnings with minimal latency.
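The summary does not say how the situational awareness score is computed or how it maps to notification channels. The sketch below is therefore only one possible reading: the grid-cell aggregation, the 0.7/0.3 weights, the 5 m flood level, the report cap, and the channel thresholds are all hypothetical values chosen for illustration. It also runs as a plain batch function, whereas the paper describes a streaming engine.

```python
import math
from collections import defaultdict
from statistics import mean


def grid_cell(lat, lon, cell_size=0.1):
    """Map a coordinate to a coarse grid cell (assumed geo-aggregation key)."""
    return (math.floor(lat / cell_size), math.floor(lon / cell_size))


def aggregate(readings, cell_size=0.1):
    """Return {cell: (mean water level, social report count)} per grid cell."""
    levels = defaultdict(list)
    reports = defaultdict(int)
    for r in readings:
        cell = grid_cell(r["lat"], r["lon"], cell_size)
        if r["kind"] == "water_level":
            levels[cell].append(r["value"])
        elif r["kind"] == "social_report":
            reports[cell] += 1
    return {cell: (mean(vals), reports[cell]) for cell, vals in levels.items()}


def awareness_score(mean_level_m, report_count, flood_level_m=5.0, report_cap=20):
    """Hypothetical score in [0, 1] mixing sensor and social signals."""
    sensor_term = min(mean_level_m / flood_level_m, 1.0)
    social_term = min(report_count / report_cap, 1.0)
    return 0.7 * sensor_term + 0.3 * social_term


def choose_channels(score):
    """Assumed thresholds: a higher score triggers wider-reaching channels."""
    if score >= 0.8:
        return ["broadcast", "sms", "app_push"]
    if score >= 0.5:
        return ["sms", "app_push"]
    if score >= 0.3:
        return ["app_push"]
    return []


readings = [
    {"kind": "water_level", "lat": -33.87, "lon": 151.21, "value": 4.0},
    {"kind": "water_level", "lat": -33.86, "lon": 151.22, "value": 5.0},
] + [{"kind": "social_report", "lat": -33.87, "lon": 151.21}] * 10

cell_stats = aggregate(readings)
for cell, (level, count) in cell_stats.items():
    score = awareness_score(level, count)
    print(cell, round(score, 2), choose_channels(score))
```

Keeping the scoring and channel selection as separate functions means the thresholds can be tuned by decision-makers without touching the aggregation step.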

Security and privacy are woven throughout the architecture. During transport, messages are signed and authenticated using public‑key cryptography to protect integrity and prevent spoofing. In the cloud, data at rest is encrypted and access is controlled via fine‑grained ACLs. The analytics stage applies anonymization and aggregation techniques to minimize exposure of personally identifiable information.
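The anonymization and aggregation step can be sketched as follows. The summary does not name specific techniques, so this example assumes two common ones: keyed hashing (HMAC-SHA256) to replace user identifiers with pseudonyms, and suppression of zones with too few distinct reporters. The function names, the `min_group_size` threshold, and the sample data are hypothetical.

```python
import hashlib
import hmac


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace a user ID with a keyed hash so the raw ID never reaches analytics."""
    digest = hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def aggregate_reports(reports, secret_key, min_group_size=3):
    """Count distinct reporters per zone, dropping zones below the threshold."""
    users_per_zone = {}
    for r in reports:
        pseudonym = pseudonymize(r["user_id"], secret_key)
        users_per_zone.setdefault(r["zone"], set()).add(pseudonym)
    # Small groups are suppressed because they could single out individuals.
    return {
        zone: len(users)
        for zone, users in users_per_zone.items()
        if len(users) >= min_group_size
    }


key = b"example-key-for-illustration-only"
reports = [
    {"user_id": "alice", "zone": "zone-a"},
    {"user_id": "bob", "zone": "zone-a"},
    {"user_id": "carol", "zone": "zone-a"},
    {"user_id": "alice", "zone": "zone-a"},  # duplicate reporter, counted once
    {"user_id": "dave", "zone": "zone-b"},   # lone reporter, suppressed
]
counts = aggregate_reports(reports, key)
```

Only per-zone counts leave this stage. In a real deployment the key would have to be stored and rotated securely, which this sketch does not address.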

Key contributions of the work include: (1) a unified pipeline that can ingest, process, and act upon massive, multi‑modal disaster data in real time; (2) a hybrid networking model (DTN + TSN) that maintains connectivity under severe infrastructure disruption; (3) a real‑time situational awareness metric that feeds directly into automated alerting; and (4) an integrated security/privacy framework tailored for emergency contexts.

The paper also acknowledges several limitations. No concrete implementation details, performance benchmarks, or field trials are presented, leaving open questions about the scalability of DTN routing protocols, the overhead of TSN formation, and the cost model of cloud resources under bursty disaster workloads. Future research directions suggested include extensive simulation and real‑world testing, incorporation of AI‑driven predictive models, and exploration of multi‑cloud/edge architectures for optimal resource allocation.

Overall, the study provides a forward‑looking vision of how IoT, cloud computing, and big‑data analytics can be orchestrated to transform disaster response from a reactive, manual process into a proactive, data‑driven operation capable of handling the deluge of information that modern emergencies generate.
