Training Augmentation with Adversarial Examples for Robust Speech Recognition

This paper explores the use of adversarial examples in training speech recognition systems to increase robustness of deep neural network acoustic models. During training, the fast gradient sign method is used to generate adversarial examples augmenti…

Authors: Sining Sun, Ching-Feng Yeh, Mari Ostendorf

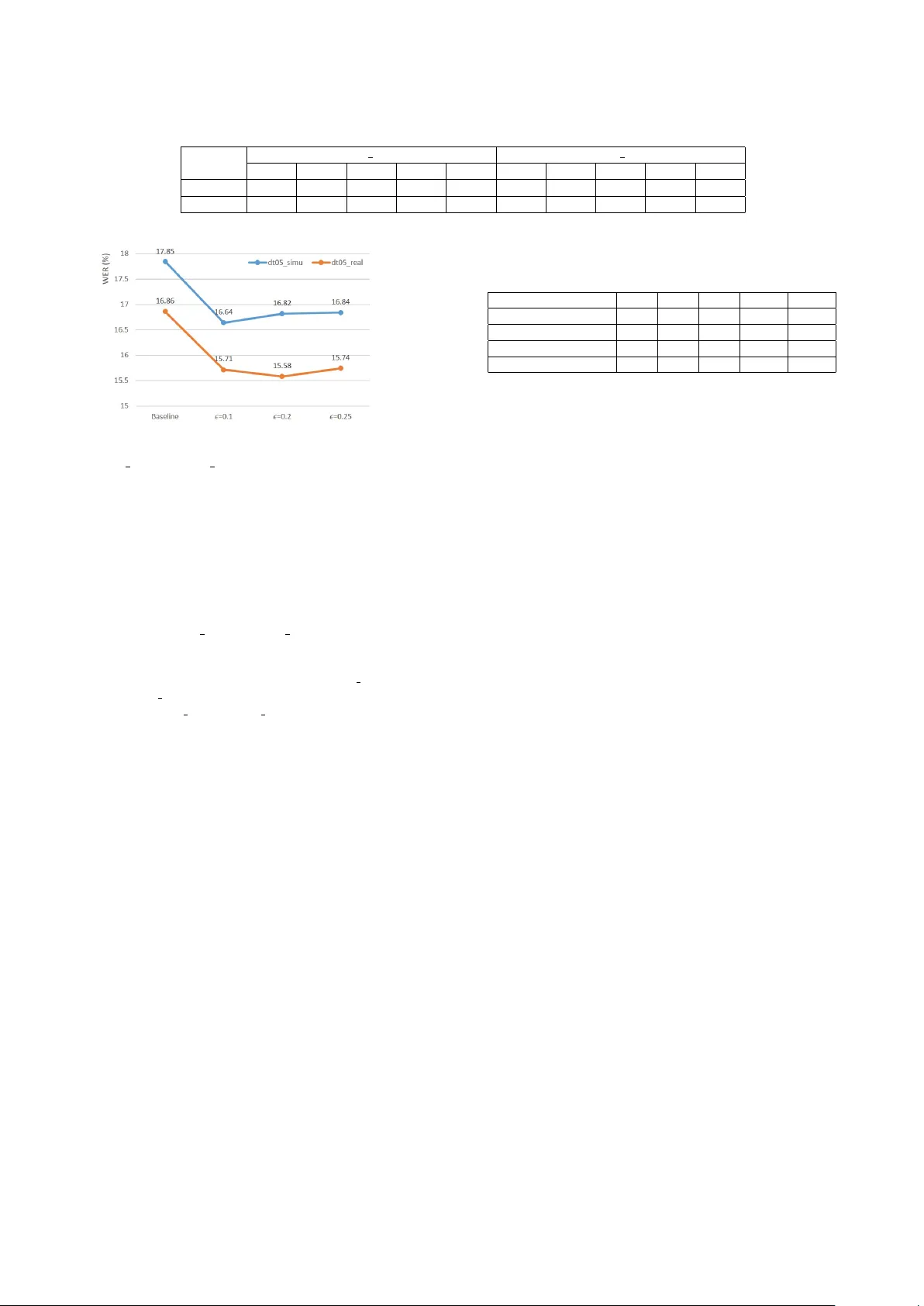

Sining Sun¹, Ching-Feng Yeh², Mari Ostendorf³, Mei-Yuh Hwang², Lei Xie¹∗

¹ School of Computer Science, Northwestern Polytechnical University, Xi’an, China

² Mobvoi AI Lab, Seattle, USA

³ Department of Electrical Engineering, University of Washington, Seattle, USA

{snsun,lxie}@nwpu-aslp.org, {cfyeh,mhwang}@mobvoi.com, ostendor@uw.edu

The research work is supported by the National Key Research and Development Program of China (Grant No. 2017YFB1002102) and the National Natural Science Foundation of China (Grant No. 61571363). ∗ Lei Xie is the corresponding author.

Abstract

This paper explores the use of adversarial examples in training speech recognition systems to increase robustness of deep neural network acoustic models. During training, the fast gradient sign method is used to generate adversarial examples augmenting the original training data. Different from conventional data augmentation based on data transformations, the examples are dynamically generated based on current acoustic model parameters. We assess the impact of adversarial data augmentation in experiments on the Aurora-4 and CHiME-4 single-channel tasks, showing improved robustness against noise and channel variation. Further improvement is obtained when combining adversarial examples with teacher/student training, leading to a 23% relative word error rate reduction on Aurora-4.

Index Terms: robust speech recognition, adversarial examples, FGSM, data augmentation, teacher-student model

1. Introduction

In recent years, there has been significant progress in automatic speech recognition (ASR) due to the successful application of deep neural networks (DNNs) [1, 2], such as convolutional neural networks (CNNs) [3, 4], recurrent neural networks (RNNs) [5] and sequence-to-sequence learning [5]. Acoustic modeling based on deep learning has shown robustness against noisy signals due to the deep structure and an ability to model non-linear transformations [6]. However, current ASR systems are still sensitive to environmental noise, room reverberation [7] and channel distortion. A variety of methods have been proposed to deal with noise, including front-end processing such as data augmentation [8], single or multi-channel speech enhancement [9], robust feature transformation, very deep CNNs [10, 6], and learning strategies such as teacher-student (T/S) [11] and adversarial training [12]. Here, we focus on data augmentation, assessed with and without T/S training.

The performance of acoustic models degrades when there exists a mismatch between training data and unseen test data. Data augmentation is an effective way to improve robustness, aiming to increase the diversity of the training data by augmenting it with perturbed versions using methods such as adding noise or reverberation to clean speech. Acoustic modeling with data augmentation is also known as multi-condition or multi-style training [13], which has been widely adopted in many ASR systems. Data augmentation strategies for reverberant signals were investigated in [8], which proposed using real and simulated room impulse responses to modify clean speech with different signal-to-noise ratio (SNR) levels. Generally, the robustness of the system improves with the diversity in the training data. A variational autoencoder (VAE) approach to data augmentation was proposed in [14] for unsupervised domain adaptation. They train a VAE on both clean and noisy speech without supervised information to learn a latent representation. The noisy data to be augmented are then selected by comparing the similarities with the original data, with latent representations modified by removing attributes such as speaker identity, channel and background noise.

Teacher-student (T/S) training has been widely adopted as an effective approach to increase the robustness of acoustic modeling in supervised [15] or unsupervised [11] scenarios. T/S training relies on parallel data to train a teacher model and a student model. For example, a close-talk data set can be used to train the teacher model, while the same speech collected by a far-field microphone can be used to train the student model.

Adversarial training, which aims to learn a domain-invariant representation, was recently proposed for robust acoustic modeling [16, 17]. Adversarial training can be used to reduce the mismatches between training and test data, and it is applicable in both supervised and unsupervised scenarios.

In this paper, we propose data augmentation with adversarial examples for acoustic modeling, in order to improve robustness in adverse environments. The concept of adversarial examples was first proposed in [18] for computer vision tasks. They discovered that neural networks can easily misclassify examples in which the image pixels are only slightly skewed from the original ones. That is, the models can be very sensitive to even minor input disturbance. Adversarial examples have provoked research interest in computer vision and natural language processing [19, 20]. Recently, adversarial examples were introduced to simulate attacks on state-of-the-art end-to-end ASR systems [21]. In that work, a white-box targeted attack scenario was shown: given a natural waveform $x$ and a nearly inaudible adversarial noise $\delta$ which is generated from $x$ and some targeted phrase $y$, $x + \delta$ would be recognized as $y$ regardless of the original content in $x$. This is consistent with the observation in [18] that neural networks can be vulnerable to minor but elaborate disturbances. Previous work has focused on improving model robustness against adversarial test examples [19, 22].
In our work, we adopt the idea and augment training data with adversarial examples to obtain acoustic models that are more robust to natural data, instead of to adversarial examples only. In contrast to adversarial training, where the model is trained to be invariant to specific phenomena represented in the training set, the adversarial examples used here are generated automatically based on inputs and model parameters associated with each mini-batch.

In the training stage, we generate adversarial examples dynamically using the fast gradient sign method (FGSM) [19], since it has been shown to be both effective and efficient compared with other approaches for generating adversarial examples. For each mini-batch, after the adversarial examples are obtained, the parameters in the model are updated with both the original and the adversarial examples. Furthermore, we combine the proposed data augmentation scheme with teacher-student (T/S) training when parallel data is available, and find that the improvements from both approaches are additive.

The rest of the paper is organized as follows: Sec. 2 introduces adversarial examples and how to generate them using FGSM. Sec. 3 gives details of using adversarial examples for acoustic modeling. Sec. 4 describes the experimental setup and results on the CHiME-4 single track tasks and on the Aurora-4 dataset. Concluding remarks are presented in Sec. 5.

2. Adversarial examples

2.1. Definition of adversarial examples

The goal of adversarial examples is to disturb well-trained machine learning models. Relevant work shows that state-of-the-art models can be vulnerable to adversarial examples; i.e., the predictions of the models are easily misled by non-random perturbation on input signals, even though the perturbation is hardly perceptible by humans [18]. In such cases, these perturbed input signals are carefully designed and named “adversarial examples.” The success of using adversarial examples to disturb models also indicates that the output distribution of neural networks may not be smooth with respect to the instances of input data distribution. As a result, a small skew in the input signals may cause abrupt changes in the output values of the models [23].

In general, a machine learning model, such as a neural network, is a parameterized function, $f(x; \theta)$, where $x$ is the input and $\theta$ represents the model’s parameters. A trained model $f(x; \theta)$ is used to predict the label $y_i$ given the input $x_i$. An adversarial example $x_i^{adv}$ can be constructed as:

$$x_i^{adv} = x_i + \delta_i \tag{1}$$

so that

$$y_i \neq f(x_i^{adv}; \theta) \tag{2}$$

where

$$\|\delta_i\| \ll \|x_i\|, \tag{3}$$

and $\delta_i$ is called the adversarial perturbation. For a trained and robust model, small random perturbations should not have a significant impact on the output of the model. Therefore, generating adversarial perturbations as negative training examples can potentially improve model robustness.

2.2. Generating adversarial examples

In [19], the fast gradient sign method (FGSM) was proposed; it uses the current model parameters and existing training data to generate the adversarial perturbations $\delta_i$ in equation 1.

Given model parameters $\theta$, inputs $x$ and the targets $y$ associated with $x$, the model is trained to minimize the loss function $J(\theta, x, y)$. In this work, we use average cross-entropy for $J(\theta, x, y)$, which is very common for classification tasks. Conventionally, to train a neural network, gradients are computed with the predictions of the model and the designated label, and the gradients are propagated through the layers using the back-propagation algorithm until the input layer of the network is reached. However, it is possible to further compute the gradient with respect to the input to the network (to find adversarial examples) rather than just the network weights [19].
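
As a worked illustration of a gradient taken with respect to the input, consider a deliberately simplified model that is not the CNN used in this paper: a single linear layer followed by a softmax, with weights $W$, bias $b$ and a one-hot target $y$. The symbols $W$, $b$, $z$ and $p$ are assumptions introduced here for illustration only. Under that assumption the chain rule gives both gradients in closed form:

```latex
\begin{aligned}
z &= W x + b, \qquad p = \operatorname{softmax}(z), \qquad
J(\theta, x, y) = -\sum_{k} y_k \log p_k, \\
\frac{\partial J}{\partial z} &= p - y, \\
\nabla_{W} J &= (p - y)\, x^{\top}
  && \text{(used to update the weights)}, \\
\nabla_{x} J &= W^{\top} (p - y)
  && \text{(used to perturb the input)}.
\end{aligned}
```

Both gradients reuse the same error term $p - y$, so in this simplified setting the input gradient costs only one extra matrix-vector product once the backward pass has been run.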

The idea of FGSM is to generate adversarial examples that maximize the loss function $J(\theta, x, y)$,

$$x^{adv} = \arg\max_{x} J(\theta, x, y) \tag{4}$$

$$\phantom{x^{adv}} = x + \delta^{FGSM}, \tag{5}$$

where

$$\delta^{FGSM} = \epsilon \, \mathrm{sign}\left(\nabla_{x} J(\theta, x, y)\right) \tag{6}$$

and $\epsilon$ is a small constant to be tuned. Note that FGSM uses the sign of the gradient instead of the value, making it easier to satisfy the constraint of equation 3. Our experimental results show that a small $\epsilon$ is sufficient to stably generate adversarial examples that perturb the neural networks.

3. Training with adversarial examples

Different from other data augmentation approaches such as adding artificial noises to simulate perturbations, the adversarial examples are generated by the model but shifted toward a larger loss value $J(\theta, x^{adv}, y)$ than that of the original data $x$. After $x^{adv}$ is generated, we further use it to update the model parameters, in order to enhance the robustness of the ASR system against noisy environments. In this work, FGSM is used to generate adversarial examples dynamically within each mini-batch, and the model parameters are updated immediately following the original mini-batch, as elaborated in Algorithm 1.
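
The per-mini-batch procedure described in this section can be sketched in a few lines of standard-library Python. This is a minimal illustration under loud assumptions, not the authors' implementation: the model is a toy binary logistic regression rather than the paper's CNN, the data are synthetic, plain SGD replaces Adam, and every function name (`fgsm`, `sgd_step`, `train_step_with_advex`) is hypothetical. The perturbation is computed with the parameters obtained after the original-batch update, which follows the literal step order of Algorithm 1.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict(theta, x):
    """Posterior of class 1 for a toy logistic-regression model (assumption)."""
    w, b = theta
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def loss(theta, x, y):
    """Cross-entropy J(theta, x, y) for one example, y in {0, 1}."""
    p = min(max(predict(theta, x), 1e-12), 1.0 - 1e-12)
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))


def grad_input(theta, x, y):
    """Gradient of J with respect to the input x: (p - y) * w."""
    w, _ = theta
    err = predict(theta, x) - y
    return [err * wi for wi in w]


def sign(v):
    """Return -1, 0 or +1."""
    return (v > 0) - (v < 0)


def fgsm(theta, x, y, eps):
    """x_adv = x + eps * sign(grad_x J), following equations 5 and 6."""
    g = grad_input(theta, x, y)
    return [xi + eps * sign(gi) for xi, gi in zip(x, g)]


def sgd_step(theta, batch, lr):
    """One mini-batch update: theta <- theta - lr * mean parameter gradient."""
    w, b = theta
    gw = [0.0] * len(w)
    gb = 0.0
    for x, y in batch:
        err = predict(theta, x) - y
        gw = [g + err * xi for g, xi in zip(gw, x)]
        gb += err
    m = len(batch)
    return ([wi - lr * g / m for wi, g in zip(w, gw)], b - lr * gb / m)


def train_step_with_advex(theta, batch, lr, eps):
    """Update on the original batch, then on its FGSM counterpart."""
    theta = sgd_step(theta, batch, lr)
    # Adversarial inputs keep the original labels.
    adv_batch = [(fgsm(theta, x, y, eps), y) for x, y in batch]
    return sgd_step(theta, adv_batch, lr)


if __name__ == "__main__":
    rng = random.Random(0)
    # Synthetic two-class data: class 1 is shifted by +1 in every dimension.
    data = []
    for _ in range(64):
        y = rng.randint(0, 1)
        data.append(([rng.gauss(y, 1.0) for _ in range(4)], y))
    theta = ([0.0] * 4, 0.0)
    for _ in range(50):
        rng.shuffle(data)
        for i in range(0, len(data), 16):
            theta = train_step_with_advex(theta, data[i:i + 16], lr=0.1, eps=0.1)
    x, y = data[0]
    # Loss on an original example and on its FGSM-perturbed version.
    print(loss(theta, x, y), loss(theta, fgsm(theta, x, y, 0.1), y))
```

For this linear toy model the FGSM step always moves the logit away from the correct class, so the second printed loss is larger than the first. A deep network does not carry that guarantee, which is one reason the paper tunes $\epsilon$ on a development set.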

Algorithm 1: Training neural network with automatically generated adversarial examples

Input: $D = \{x_i, y_i\}_{i=1}^{K}$, training set; $x_i$, input features; $y_i$, output labels; $\mu$, learning rate; $\epsilon$, adversarial weight

Output: $\theta$, model parameters

1. Initialize model parameters $\theta$
2. while model does not converge do
3. Read a mini-batch $B = \{x_m, y_m\}_{m=1}^{M}$ from $D$
4. Train model using $B$: $\theta \leftarrow \theta - \frac{\mu}{M} \sum_{m=1}^{M} \nabla_{\theta} J(\theta, x_m, y_m)$
5. Calculate $\{\delta_m^{FGSM}\}_{m=1}^{M}$ using equation 6 for $B$: $\delta_m^{FGSM} = \epsilon \, \mathrm{sign}\left(\nabla_{x_m} J(\theta, x_m, y_m)\right)$
6. Generate adversarial examples using equation 1: $x_m^{adv} = x_m + \delta_m^{FGSM}$
7. Make a mini-batch $B^{adv}$ with $\{x_m^{adv}\}_{m=1}^{M}$: $B^{adv} = \{x_m^{adv}, y_m\}_{m=1}^{M}$
8. Train model using $B^{adv}$: $\theta \leftarrow \theta - \frac{\mu}{M} \sum_{m=1}^{M} \nabla_{\theta} J(\theta, x_m^{adv}, y_m)$
9. end while

In the case of acoustic modeling, the input $x$ refers to acoustic features such as Mel-frequency cepstral coefficients (MFCCs), and the label $y$ refers to frame-level alignments such as indices of senones from forced alignments. With data augmentation using adversarial examples, we apply several steps with each mini-batch to update the model parameters: (1) Train the model with the original inputs and obtain the adversarial perturbations $\delta_m$ per sample as in equation 6. As $\epsilon$ is a constant, the perturbation for each feature dimension of each sample is either $+\epsilon$ or $-\epsilon$. (2) Generate adversarial examples with the obtained adversarial perturbations. (3) Make a mini-batch with adversarial examples and original labels. (4) Train the model with the mini-batch from the adversarial examples.

By feeding the original labels in the mini-batch with adversarial examples, we are minimizing the distance between the ground truth and output of the network as in regular training.
Notably, however, the adversarial examples are generated by FGSM to maximize the loss function as described in equation 4, which to some extent reflects the “blind spots” of the current model in input space.

4. Experiments

4.1. Speech corpora and system description

4.1.1. Aurora-4 corpus

The Aurora-4 corpus is designed to evaluate the robustness of ASR systems on a medium vocabulary continuous speech recognition task based on Wall Street Journal (WSJ0, LDC93S6A). There are 7138 clean utterances from 83 speakers in the training set, recorded using the primary microphone, denoted as the WSJ0 corpus here. The multi-condition training set (denoted as WSJ0m) also consists of 7138 utterances, but with a combination of clean and noisy speech perturbed by one of six different noises at 10-20 dB SNR. Channel distortion is introduced by recording the data with two different microphones. The test data set consists of four subsets: clean, noisy, clean with channel distortion, and noisy with channel distortion. The noise is simulated. The four test sets are referred to as A, B, C, D respectively in the literature. There are 330 utterances in each of test sets A and C, and 1980 (330 × 6) utterances in each of B and D. For model tuning, we use a 330-utterance subset (dev_0330) [24] of the official 1206-utterance dev set as our development set, for reasons that will be explained later. More details about the Aurora-4 corpus can be found in [25].

4.1.2. CHiME-4 corpus

The CHiME-4 task is a speech recognition challenge for single-microphone or multi-microphone tablet device recordings in everyday scenarios under noisy environments. For the CHiME-4 data set, there are four noisy recording environments: street (STR), pedestrian area (PED), cafe (CAF) and bus (BUS).
For training, 1600 utterances were recorded in the four noisy environments from four speakers, and an additional 7138 noisy utterances were simulated from WSJ0 by adding noise from the four noisy environments. The development set consists of 410 utterances in each of the four environments under both real (dt05_real) and simulated (dt05_simu) conditions, for a total of 3280 utterances. There are 2640 utterances in the evaluation set, with 330 utterances in each of the same eight conditions.

4.1.3. System description

We adopt convolutional neural networks (CNNs) for acoustic modeling for all the experiments we report in this work. The configurations for our CNNs are consistent with the previous work in [10], which has two convolutional layers with 256 feature maps in each layer. 9 × 9 filters with 1 × 3 pooling are used in the first layer and 3 × 4 filters in the second layer without pooling. There are four fully-connected layers with 1024 hidden units after the convolutional layers. The rectified linear unit (ReLU) activation function is used for all layers. For the Aurora-4 setup, 40-dimensional mel-filter bank (fbank) features with an 11-frame context window are used as inputs. For the CHiME-4 setup, 40-dimensional fMLLR features with an 11-frame context window are used. Standard recipes in Kaldi [26] are adopted for feature extraction, HMM-GMM training and alignment generation. As for acoustic modeling, TensorFlow [27] is used for training CNNs in this work with cross-entropy as the objective function and Adam [28] as the optimizer. The sizes of the output layers are 2025 and 1942 for the Aurora-4 and CHiME-4 tasks, respectively.

As Aurora dev_0330 contains verbalized punctuations, we use the 20k closed-vocab bigram LM with verbalized punctuations during Aurora development, to fine-tune our hyper-parameter and LM weight.
This dev subset is chosen so that all words are covered by the 20k vocabulary, to avoid confounding effects of noise with out-of-vocabulary issues. For the evaluation sets, we use the official WSJ 5k closed vocabulary with a 3-gram model with non-verbalized punctuations (5c-nvp 3gram), since the 5k vocabulary covers all words in the test sets.

The values of $\epsilon$ and the language weight for both tasks are tuned on the respective development sets, and the best hyper-parameters are then applied to the evaluation sets.

4.2. Experimental results

4.2.1. Aurora-4 results

Figure 1: WER on Aurora-4 dev_0330 with adversarial data augmentation using various perturbation weights.

Table 1: WER comparison on the Aurora-4 evaluation set with adversarial examples (AdvEx) ($\epsilon = 0.3$)

| System | A | B | C | D | AVG. |
|---|---|---|---|---|---|
| Baseline | 3.21 | 6.08 | 6.41 | 18.11 | 11.05 |
| AdvEx | 3.51 | 5.84 | 5.79 | 14.75 | 9.49 |
| WER reduction (%) | -9.4 | 3.9 | 9.7 | 18.6 | 14.1 |

Figure 1 shows the word error rate (WER) results on dev_0330 for different $\epsilon$. Based on the results, $\epsilon = 0.3$ is chosen as the best perturbation weight to train the Aurora-4 model. Table 1 shows the results on the Aurora-4 evaluation set. The model trained on WSJ0m serves as the baseline. With $\epsilon = 0.3$, the augmented data training achieves 9.49% WER averaged across the four test sets, a 14.1% relative improvement over the baseline. For the test set with the highest WER on the baseline system, D, in which both noise and channel distortion are present, the proposed method reduced the WER by 18.6% relative. We also experimented with dropout training, and it gave only very small gains over the baseline.

4.2.2. CHiME-4 results

Figure 2: WER with adversarial data augmentation on CHiME-4 dt05_simu and dt05_real data sets, with various perturbation weights.

Table 2: WER comparison on CHiME-4 single-channel track evaluation sets with adversarial examples (AdvEx) ($\epsilon = 0.1$).

| System | simu BUS | simu CAF | simu PED | simu STR | simu AVE. | real BUS | real CAF | real PED | real STR | real AVE. |
|---|---|---|---|---|---|---|---|---|---|---|
| Baseline | 20.25 | 30.69 | 26.62 | 28.74 | 26.57 | 43.95 | 33.64 | 25.95 | 18.68 | 30.55 |
| AdvEx | 19.65 | 29.29 | 24.75 | 26.95 | 25.16 | 41.00 | 31.34 | 24.74 | 18.23 | 28.82 |

In Figure 2, different values of $\epsilon$ are selected to demonstrate the impact of $\epsilon$ on the dt05_simu and dt05_real sets of CHiME-4. We see that within a reasonable range ($\epsilon < 0.25$) the proposed approach brings consistent gain. In Table 2, results on the CHiME-4 single track are listed, including the real (et05_real) and simulated (et05_simu) evaluation sets. Relative WER reductions obtained on the et05_real and et05_simu sets were 5.7% and 5.3%, respectively. The proposed approach was able to bring consistent improvements for all types of noises, whether in simulated or real environments.

4.2.3. Combining T/S training with data augmentation

Teacher-student (T/S) training has proven to be effective in improving the robustness of the model in scenarios where parallel data is available. As a result, in this work we also tried to combine T/S training with the proposed data augmentation method. As described in Section 4.1.1, parallel training data is available for Aurora-4. Accordingly, a teacher model is trained using clean data, while the noisy data is used to train the student model. While training the student model, the following loss function is used to optimize the parameters:

$$J_{T/S} = \alpha \, CE\left(y, f(x_n, \theta_S)\right) + (1 - \alpha) \, CE\left(y_T, f(x_n, \theta_S)\right) \tag{7}$$

where $0 < \alpha < 1$ is the discount weight, $CE$ refers to the cross-entropy loss, $y$ is the posterior probability estimated by the student model, $x_n$ is a noisy (or adversarial) example, $\theta_S$ is the student model parameter, and $y_T$ is the posterior probability estimated from the teacher model using clean data $x_c$,

$$y_T = f(x_c, \theta_T) \tag{8}$$

where $\theta_T$ denotes the teacher model parameters. The teacher model has the same configuration as the student model. This learning strategy uses a loss function similar to Kullback-Leibler divergence regularization [29].

Table 3: WER when combining T/S with adversarial data augmentation on Aurora-4.

| System | A | B | C | D | AVG. |
|---|---|---|---|---|---|
| Baseline | 3.21 | 6.08 | 6.41 | 18.11 | 11.05 |
| T/S ($\alpha$ = 0.5) | 2.86 | 5.49 | 5.25 | 15.80 | 9.70 |
| T/S+AdvEx ($\epsilon$ = 0.3) | 3.08 | 5.42 | 4.89 | 13.09 | 8.50 |
| T/S+Random ($\epsilon$ = 0.3) | 3.62 | 5.69 | 5.60 | 14.89 | 9.48 |

Table 3 shows the WER of T/S learning with $\alpha = 0.5$. T/S learning alone gives a 12.2% relative WER reduction. After combining with adversarial data augmentation, we get the best performance with 8.50% WER.

4.2.4. Random perturbations

In order to assess the utility of data augmentation using adversarial examples, we compare the proposed approach with data augmentation using random perturbation instead of FGSM. For random perturbation, we replace $\mathrm{sign}\left(\nabla_{x} J(\theta, x, y)\right)$ in equation 6 with a random ±1 value. The last row of Table 3 shows that augmenting data using random perturbation (T/S+Random) gives little gain compared to using T/S learning alone. However, there is a significant performance gap between the two data augmentation methods, even though the sizes of the augmented training data are the same. This verifies the effectiveness of adversarial examples for robust acoustic modeling.

5. Conclusions

In this work, we propose data augmentation using adversarial examples for robust acoustic modeling. During training, FGSM is used to efficiently generate adversarial examples, in order to increase the diversity of the training data. Experimental results on the Aurora-4 and CHiME-4 tasks show that the proposed approach can improve the robustness of acoustic modeling with deep neural networks against noise and channel variation. On the Aurora-4 evaluation set, a 14.1% relative WER reduction was obtained, with the greatest benefit (18.6%) when both noise and channel distortion are present. On the CHiME-4 single track task, roughly 5% WER reductions were obtained on both real and simulated data.
Similar to the use of simulated data, adversarial examples effectively increase the size of the training set without actually requiring new data. These results show that the methods are useful in combination. Adding teacher-student learning further improved performance on the Aurora-4 task, leading to a 23% relative WER reduction overall. Training with adversarial examples is similar in spirit to discriminative training; it would be interesting to compare and combine these approaches with training sets of different sizes. This finding suggests that the use of adversarial examples for data augmentation is likely to be complementary to other methods for improving robustness, offering opportunities for future work.

6. References

[1] G. E. Dahl, D. Yu, L. Deng, and A. Acero, “Context-dependent pre-trained deep neural networks for large-vocabulary speech recognition,” IEEE Transactions on audio, speech, and language processing, vol. 20, no. 1, pp. 30–42, 2012.

[2] G. Hinton, L. Deng, D. Yu, G. E. Dahl, A.-r. Mohamed, N. Jaitly, A. Senior, V. Vanhoucke, P. Nguyen, T. N. Sainath et al., “Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups,” IEEE Signal Processing Magazine, vol. 29, no. 6, pp. 82–97, 2012.

[3] O. Abdel-Hamid, A.-r. Mohamed, H. Jiang, and G. Penn, “Applying convolutional neural networks concepts to hybrid nn-hmm model for speech recognition,” in Acoustics, Speech and Signal Processing (ICASSP), 2012 IEEE International Conference on. IEEE, 2012, pp. 4277–4280.

[4] T. N. Sainath, A.-r. Mohamed, B. Kingsbury, and B. Ramabhadran, “Deep convolutional neural networks for lvcsr,” in Acoustics, speech and signal processing (ICASSP), 2013 IEEE international conference on. IEEE, 2013, pp. 8614–8618.

[5] A. Graves, A.-r. Mohamed, and G. Hinton, “Speech recognition with deep recurrent neural networks,” in Acoustics, speech and signal processing (icassp), 2013 ieee international conference on. IEEE, 2013, pp. 6645–6649.

[6] Y. Qian, M. Bi, T. Tan, and K. Yu, “Very deep convolutional neural networks for noise robust speech recognition,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 24, no. 12, pp. 2263–2276, 2016.

[7] K. Kinoshita, M. Delcroix, T. Yoshioka, T. Nakatani, A. Sehr, W. Kellermann, and R. Maas, “The reverb challenge: A common evaluation framework for dereverberation and recognition of reverberant speech,” in Applications of Signal Processing to Audio and Acoustics (WASPAA), 2013 IEEE Workshop on. IEEE, 2013, pp. 1–4.

[8] T. Ko, V. Peddinti, D. Povey, and S. Khudanpur, “Audio augmentation for speech recognition,” in Sixteenth Annual Conference of the International Speech Communication Association, 2015.

[9] X. Xiao, C. Xu, Z. Zhang, S. Zhao, S. Sun, S. Watanabe, L. Wang, L. Xie, D. L. Jones, E. S. Chng et al., “A study of learning based beamforming methods for speech recognition.”

[10] S. J. Rennie, V. Goel, and S. Thomas, “Deep order statistic networks,” in Spoken Language Technology Workshop (SLT), 2014 IEEE. IEEE, 2014, pp. 124–128.

[11] J. Li, M. L. Seltzer, X. Wang, R. Zhao, and Y. Gong, “Large-scale domain adaptation via teacher-student learning,” Proc. Interspeech 2017, pp. 2386–2390, 2017.

[12] S. Sun, B. Zhang, L. Xie, and Y. Zhang, “An unsupervised deep domain adaptation approach for robust speech recognition,” Neurocomputing, vol. 257, pp. 79–87, 2017, machine Learning and Signal Processing for Big Multimedia Analysis.

[13] R. Lippmann, E. Martin, and D. Paul, “Multi-style training for robust isolated-word speech recognition,” in Acoustics, Speech, and Signal Processing, IEEE International Conference on ICASSP’87., vol. 12. IEEE, 1987, pp. 705–708.

[14] W.-N. Hsu, Y. Zhang, and J. Glass, “Unsupervised domain adaptation for robust speech recognition via variational autoencoder-based data augmentation,” arXiv preprint arXiv:1707.06265, 2017.

[15] S. Watanabe, T. Hori, J. Le Roux, and J. R. Hershey, “Student-teacher network learning with enhanced features,” in Acoustics, Speech and Signal Processing (ICASSP), 2017 IEEE International Conference on. IEEE, 2017, pp. 5275–5279.

[16] S. Sun, C.-F. Yeh, M.-Y. Hwang, M. Ostendorf, and L. Xie, “Domain adversarial training for accented speech recognition,” arXiv preprint arXiv:1806.02786, 2018.

[17] Y. Shinohara, “Adversarial multi-task learning of deep neural networks for robust speech recognition.” 2016.

[18] C. Szegedy, W. Zaremba, I. Sutskever, J. Bruna, D. Erhan, I. Goodfellow, and R. Fergus, “Intriguing properties of neural networks,” Proceedings of the 2014 International Conference on Learning Representations, Computational and Biological Learning Society, 2014.

[19] I. Goodfellow, J. Shlens, and C. Szegedy, “Explaining and harnessing adversarial examples,” in International Conference on Learning Representations, 2015. [Online]. Available: http://arxiv.org/abs/1412.6572

[20] R. Jia and P. Liang, “Adversarial examples for evaluating reading comprehension systems,” in Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing, 2017, pp. 2021–2031.

[21] N. Carlini and D. Wagner, “Audio adversarial examples: Targeted attacks on speech-to-text,” arXiv preprint arXiv:1801.01944, 2018.

[22] A. Kurakin, I. Goodfellow, and S. Bengio, “Adversarial machine learning at scale,” arXiv preprint, 2016.

[23] T. Miyato, S.-i. Maeda, M. Koyama, K. Nakae, and S. Ishii, “Distributional smoothing with virtual adversarial training,” arXiv preprint arXiv:1507.00677, 2015.

[24] D. Pearce, “Aurora working group: Dsr front end lvcsr evaluation au/384/02,” 2002.

[25] H.-G. Hirsch and D. Pearce, “The aurora experimental framework for the performance evaluation of speech recognition systems under noisy conditions,” in ASR2000-Automatic Speech Recognition: Challenges for the new Millenium ISCA Tutorial and Research Workshop (ITRW), 2000.

[26] D. Povey, A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P. Motlicek, Y. Qian, P. Schwarz et al., “The kaldi speech recognition toolkit,” in IEEE 2011 workshop on automatic speech recognition and understanding, no. EPFL-CONF-192584. IEEE Signal Processing Society, 2011.

[27] M. Abadi, P. Barham, J. Chen, Z. Chen, A. Davis, J. Dean, M. Devin, S. Ghemawat, G. Irving, M. Isard et al., “Tensorflow: A system for large-scale machine learning.” in OSDI, vol. 16, 2016, pp. 265–283.

[28] D. P. Kingma and J. Ba, “Adam: A method for stochastic optimization,” arXiv preprint, 2014.

[29] D. Yu, K. Yao, H. Su, G. Li, and F. Seide, “KL-divergence regularized deep neural network adaptation for improved large vocabulary speech recognition,” ICASSP, IEEE International Conference on Acoustics, Speech and Signal Processing - Proceedings, pp. 7893–7897, 2013.
