Potential of Augmented Reality for Intelligent Transportation Systems

Rapid advances in wireless communication technologies, coupled with ongoing development of vehicular networking standards and innovations in computing, sensing, and analytics, have paved the way for intelligent transportation systems (ITS) to develop rapidly in the near future. ITS provides a complete solution for the efficient and intelligent management of real-time traffic, wherein sensory data is collected from within the vehicles (i.e., via their onboard units) and exchanged between the vehicles, between the vehicles and their supporting roadside infrastructure/network, and between the vehicles and vulnerable pedestrians, subsequently paving the way for the realization of the futuristic Internet of Vehicles. The traditional intent of an ITS is to detect, monitor, control, and subsequently reduce traffic congestion based on a real-time analysis of data on road traffic patterns, including traffic density in a geographical area of interest, precise vehicle velocities, and current and predicted travelling trajectories and times. However, merely relying on an ITS framework is not an optimal solution. In dense traffic environments, where communication broadcasts from hundreds of thousands of vehicles could potentially choke the entire network (and so could lead to fatal accidents in the case of autonomous vehicles that depend on reliable communications for their operational safety), a fallback to the traditional decentralized vehicular ad hoc network (VANET) approach becomes necessary. It is therefore of critical importance to enhance the situational awareness of drivers so as to enable them to make quick but well-founded manual decisions in such safety-critical situations.

💡 Research Summary

The paper surveys the convergence of emerging wireless communication technologies, vehicular networking standards, and advances in computing, sensing, and analytics, arguing that these trends are paving the way for next‑generation Intelligent Transportation Systems (ITS). ITS is described as a holistic framework that gathers data from on‑board vehicle units, vehicle‑to‑vehicle (V2V), vehicle‑to‑infrastructure/network (V2I/N), and vehicle‑to‑pedestrian (V2P) links to monitor traffic density, vehicle speed, predicted trajectories, and travel times in real time. While ITS can dramatically improve traffic flow and safety, the authors point out that in dense traffic scenarios the sheer volume of broadcast messages from hundreds of thousands of vehicles can saturate the communication channel, jeopardizing the reliability of autonomous‑vehicle operations. In such cases a fallback to a decentralized vehicular ad‑hoc network (VANET) is necessary, and the driver’s situational awareness becomes the critical safety lever.

To address this, the authors propose Augmented Reality (AR) as a human‑computer interface that overlays minimal, context‑relevant virtual information directly into the driver’s line of sight. The AR pipeline consists of four stages: (1) scene capture via cameras or see‑through head‑mounted displays, (2) scene identification using markers, GPS, laser, infrared or other tracking technologies, (3) scene processing where the identified context triggers a request to a cloud or edge server that filters the data to the most essential visual cues, and (4) scene visualization where the fused image is rendered on a heads‑up display (HUD) or smart glasses. By keeping the overlay concise, AR reduces cognitive load and eliminates the need for drivers to glance away to dashboards or central consoles.
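
The four stages described above can be sketched as a chain of small functions. This is only an illustrative outline, not the authors' implementation: the `Frame` and `Cue` types, the function names, the hard-coded coordinates, and the cue catalogue are all assumptions made for the example, and a dictionary lookup stands in for the request to a cloud or edge server.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Frame:
    """One captured scene (stage 1). All fields are illustrative."""
    timestamp_ms: int
    gps: tuple[float, float]
    detected_markers: list[str] = field(default_factory=list)


@dataclass
class Cue:
    """A candidate overlay item; a lower priority number means more urgent."""
    label: str
    priority: int


def capture_scene(timestamp_ms: int) -> Frame:
    # Stage 1: stand-in for a camera or see-through display feed.
    return Frame(timestamp_ms, gps=(48.137, 11.575),
                 detected_markers=["truck_ahead"])


def identify_scene(frame: Frame) -> str:
    # Stage 2: derive a context key from markers (GPS, laser, IR omitted).
    if "truck_ahead" in frame.detected_markers:
        return "occluded_overtake"
    return "free_road"


def process_scene(context: str, max_cues: int = 2) -> list[Cue]:
    # Stage 3: a hypothetical catalogue replaces the cloud/edge request.
    # Only the most urgent cues survive, keeping the overlay concise.
    catalogue = {
        "occluded_overtake": [
            Cue("fuel station in 5 km", 9),
            Cue("oncoming vehicle behind truck", 0),
            Cue("wait before overtaking", 1),
        ],
        "free_road": [Cue("speed limit 80", 5)],
    }
    cues = sorted(catalogue.get(context, []), key=lambda c: c.priority)
    return cues[:max_cues]


def visualize_scene(cues: list[Cue]) -> list[str]:
    # Stage 4: a HUD would render graphics; here we return text lines.
    return [f"[HUD] {cue.label}" for cue in cues]


def run_pipeline(timestamp_ms: int) -> list[str]:
    frame = capture_scene(timestamp_ms)
    context = identify_scene(frame)
    cues = process_scene(context)
    return visualize_scene(cues)
```

Calling `run_pipeline(0)` returns the two most urgent overlay lines and drops the low-priority infotainment cue, which mirrors the idea of filtering down to the most essential visual cues before rendering.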

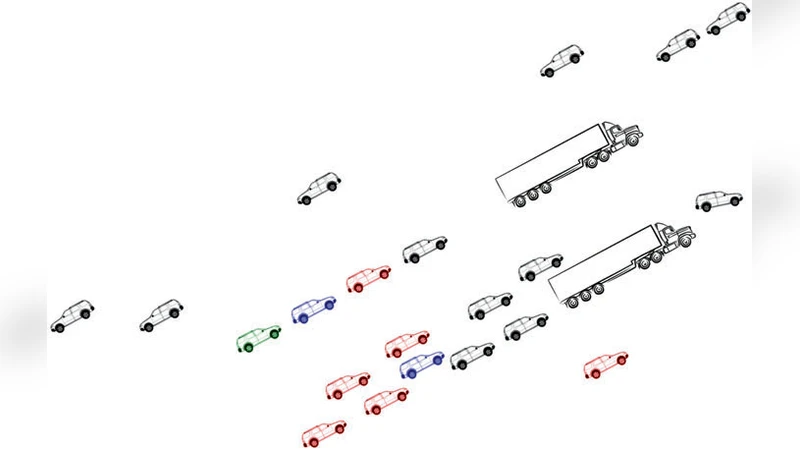

The paper illustrates several concrete driving scenarios where AR can improve safety: (a) vehicle platooning, where reduced inter‑vehicle gaps increase road capacity and aerodynamic efficiency, but require precise, low‑latency coordination; (b) overtaking maneuvers obstructed by large trucks, where AR can provide a “see‑through” view of hidden vehicles; (c) low‑visibility conditions (fog, heavy rain, snow) where lane markings, traffic signs, and hazard warnings are projected directly onto the windshield. In each case, the authors stress that the information must be carefully selected, derived from a fusion of on‑board sensors and V2X data, and delivered within a latency budget of 20–100 ms (Table 1).
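
One way to read the latency requirement is as an end-to-end budget that the sum of all pipeline stages must not exceed. The sketch below assumes this interpretation; the per-scenario budgets in `BUDGET_MS` are hypothetical values picked from inside the 20–100 ms range (the actual mapping is in the paper's Table 1, which is not reproduced here), and the stage names are likewise illustrative.

```python
from __future__ import annotations

# Hypothetical per-scenario budgets, chosen from within the 20-100 ms range.
BUDGET_MS = {
    "platooning": 20,
    "see_through_overtake": 50,
    "low_visibility": 100,
}


def end_to_end_latency_ms(stage_latencies_ms: dict[str, float]) -> float:
    """Sum the latency contributed by every stage of the pipeline."""
    return sum(stage_latencies_ms.values())


def within_budget(scenario: str, stage_latencies_ms: dict[str, float]) -> bool:
    """Return True if the overlay would arrive in time for this scenario."""
    return end_to_end_latency_ms(stage_latencies_ms) <= BUDGET_MS[scenario]


# Illustrative measurements in milliseconds (assumed, not from the paper).
measured = {"capture": 8, "v2x_exchange": 15, "edge_processing": 12, "render": 10}
```

With the assumed measurements, the total is 45 ms, so `within_budget("see_through_overtake", measured)` is true while `within_budget("platooning", measured)` is false. An overlay that misses its budget would be better dropped than shown late, since stale information in the driver's view can mislead.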

From a networking perspective, the authors quantify the data explosion: each modern vehicle already hosts roughly 100 sensors, a number expected to double by 2020; autonomous vehicles may generate up to 5 TB of data per hour, with cameras alone producing 20–40 Mbps and radars 100 kbps. Human occupants add further load, consuming 650 Mbps to 1.5 Gbps of multimedia traffic per day. Traditional static network architectures cannot cope with such dynamic, high‑throughput, low‑latency demands. Consequently, the paper advocates a hybrid architecture that couples Software‑Defined Networking (SDN) with edge computing. SDN separates the control plane from the data plane, allowing a logically centralized controller to orchestrate traffic, prioritize safety‑critical V2X messages, and dynamically allocate bandwidth. Edge nodes cache frequently requested safety and infotainment services, reducing round‑trip time and alleviating core‑network congestion.
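
The two roles described above, prioritizing safety-critical messages and caching popular services at the edge, can be illustrated with a minimal sketch. It is not an SDN controller or a real edge platform: `PriorityScheduler`, `EdgeCache`, the three traffic classes, and the least-recently-used eviction policy are all assumptions chosen for the example.

```python
import heapq
import itertools
from collections import OrderedDict

# Hypothetical traffic classes; a lower number is served first.
PRIORITY = {"safety": 0, "traffic": 1, "infotainment": 2}


class PriorityScheduler:
    """Serves queued messages strictly by class, FIFO within a class."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker preserves arrival order

    def enqueue(self, traffic_class, payload):
        entry = (PRIORITY[traffic_class], next(self._counter), payload)
        heapq.heappush(self._heap, entry)

    def dequeue(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


class EdgeCache:
    """Tiny LRU cache standing in for an edge node's service cache."""

    def __init__(self, capacity, fetch_from_core):
        self.capacity = capacity
        self.fetch_from_core = fetch_from_core  # called only on a cache miss
        self.core_fetches = 0
        self._store = OrderedDict()

    def get(self, key):
        if key in self._store:
            self._store.move_to_end(key)  # mark as most recently used
            return self._store[key]
        self.core_fetches += 1
        value = self.fetch_from_core(key)
        self._store[key] = value
        if len(self._store) > self.capacity:
            self._store.popitem(last=False)  # evict least recently used
        return value
```

If an infotainment chunk is enqueued before a brake warning, the scheduler still hands out the brake warning first. Repeated `get` calls for a cached key never reach `fetch_from_core`, which is the round-trip saving the edge layer is meant to provide.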

The authors also review the state of AR hardware (smartphones, tablets, stationary AR walls, spatial AR rigs, head‑mounted displays such as Oculus Rift, smart glasses, and emerging smart contact lenses) and note that automotive‑grade devices must meet stringent requirements for low power consumption, high resolution, wide field‑of‑view, and robust tracking under varying illumination. Software challenges include real‑time 3D rendering, sensor fusion, machine‑learning‑based risk prediction, and the design of intuitive visual metaphors that convey critical alerts without overwhelming the driver.

In the conclusion, the paper reiterates that AR holds significant promise for enhancing driver awareness, reducing accidents caused by human error (which accounts for over 80% of road fatalities), and enabling more efficient traffic management. However, realizing this promise demands advances across multiple domains: ultra‑low‑latency V2X communication, edge‑centric data processing, precise optical displays, and seamless integration of AR content with existing ADAS functions. Open research questions include optimal content selection algorithms, high‑accuracy tracking under motion, mitigation of visual distortion, and scalable deployment strategies for city‑wide ITS infrastructures. The authors call for interdisciplinary efforts to address these challenges and unlock the full potential of AR‑enabled next‑generation intelligent transportation systems.
