Neonatal EEG Interpretation and Decision Support Framework for Mobile Platforms

This paper proposes and implements an intuitive and pervasive solution for neonatal EEG monitoring assisted by sonification and deep learning AI that provides information about neonatal brain health to all neonatal healthcare professionals, particularly those without EEG interpretation expertise. The system aims to increase the demographic of clinicians capable of diagnosing abnormalities in neonatal EEG. The proposed system uses a low-cost and low-power EEG acquisition system. An Android app provides single-channel EEG visualization, traffic-light indication of the presence of neonatal seizures provided by a trained, deep convolutional neural network and an algorithm for EEG sonification, designed to facilitate the perception of changes in EEG morphology specific to neonatal seizures. The multifaceted EEG interpretation framework is presented and the implemented mobile platform architecture is analyzed with respect to its power consumption and accuracy.

💡 Research Summary

The paper presents a comprehensive, low‑cost solution for neonatal electroencephalography (EEG) monitoring that combines a single‑channel dry‑electrode acquisition board, an Android‑based mobile application, deep‑learning‑driven seizure detection, and auditory sonification to support clinicians without EEG expertise.

Hardware: The authors use an OpenBCI Ganglion board (≈ €200) equipped with dry electrodes, eliminating the need for abrasive skin preparation and conductive gels. The board records up to four channels at 24‑bit resolution and 200 Hz, but the prototype operates with a single channel to maximize portability. Bluetooth Low Energy (BLE) provides wireless data transfer with a current draw of 14.2 mA (idle) and 15.5 mA (streaming), allowing roughly 130 hours of continuous monitoring on a 2000 mAh battery pack.
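The quoted runtime follows from dividing battery capacity by current draw. The sketch below reproduces that arithmetic under the simplifying assumption of a constant current and an ideal battery (no conversion losses or self-discharge); the function name `runtime_hours` is illustrative and not taken from the paper.

```python
def runtime_hours(capacity_mah: float, current_ma: float) -> float:
    """Ideal runtime in hours: capacity divided by a constant current draw."""
    if current_ma <= 0:
        raise ValueError("current_ma must be positive")
    return capacity_mah / current_ma


BATTERY_MAH = 2000.0   # battery pack capacity quoted in the summary
IDLE_MA = 14.2         # BLE idle current quoted in the summary
STREAMING_MA = 15.5    # BLE streaming current quoted in the summary

streaming_hours = runtime_hours(BATTERY_MAH, STREAMING_MA)
idle_hours = runtime_hours(BATTERY_MAH, IDLE_MA)

print(f"streaming: {streaming_hours:.0f} h, idle: {idle_hours:.0f} h")
```

At the streaming current this gives about 129 hours, consistent with the "roughly 130 hours" figure above.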

Software: An Android app running on a Samsung A6 tablet (2 GB RAM, 8‑core CPU) handles BLE pairing, data decoding, storage, real‑time visualization, and filtering (high‑pass, low‑pass, 50 Hz notch). The display follows IFCN guidelines: 1 s of signal spans 30 mm horizontally, each channel occupies 10 mm vertically, and at least 120 samples/s are displayed. Visualization consumes about 44 % CPU; overall app CPU usage averages below 95 %, with a battery drain of ~100 mAh and 128 MB of RAM.
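The summary does not say how the app's filters are designed. As one possible illustration of the 50 Hz notch stage, the sketch below uses a standard second-order (biquad) notch with the widely used "audio EQ cookbook" coefficients at the 200 Hz sampling rate. The quality factor `q=30` and the helper names `notch_coefficients` and `biquad` are assumptions made for this example, not details from the paper.

```python
import math


def notch_coefficients(f0_hz, fs_hz, q=30.0):
    """Normalized biquad notch coefficients (b0, b1, b2), (a1, a2)."""
    w0 = 2.0 * math.pi * f0_hz / fs_hz
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b = (1.0 / a0, -2.0 * math.cos(w0) / a0, 1.0 / a0)
    a = (-2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0)
    return b, a


def biquad(samples, b, a):
    """Apply y[n] = b0*x[n] + b1*x[n-1] + b2*x[n-2] - a1*y[n-1] - a2*y[n-2]."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x0 in samples:
        y0 = b[0] * x0 + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        out.append(y0)
        x2, x1 = x1, x0
        y2, y1 = y1, y0
    return out


FS = 200.0  # sampling rate quoted in the summary
b, a = notch_coefficients(50.0, FS)
t = [n / FS for n in range(2000)]  # 10 s of samples

# A 50 Hz mains tone should be suppressed; a 5 Hz EEG-band tone should pass.
mains = biquad([math.sin(2 * math.pi * 50 * ti) for ti in t], b, a)
eeg_like = biquad([math.sin(2 * math.pi * 5 * ti) for ti in t], b, a)

print(max(abs(v) for v in mains[-200:]), max(abs(v) for v in eeg_like[-200:]))
```

Only the last second of each output is inspected so that the filter's start-up transient has decayed.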

Sonification: Two auditory conversion methods are implemented. (1) The Phase Vocoder (PV) maps the 0.5‑16 Hz EEG band to the 500 Hz‑16 kHz audio range while preserving the spectral envelope, enabling time compression (×1, ×5, ×10) for rapid review. (2) FM/AM synthesis uses a 500 Hz carrier that is frequency‑modulated by the EEG signal; amplitude modulation embeds the EEG amplitude dynamics. Both methods were evaluated at three speed factors.
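The FM/AM idea can be sketched in a few lines: the EEG sample value shifts the instantaneous frequency of a 500 Hz carrier, and its magnitude scales the loudness. This is a minimal illustration, not the authors' implementation. The audio rate (8 kHz), frequency deviation (200 Hz), the amplitude mapping, and the function name `fm_am_sonify` are all assumptions chosen for the example.

```python
import math


def fm_am_sonify(eeg, fs_eeg=200.0, fs_audio=8000.0, carrier_hz=500.0,
                 deviation_hz=200.0, speed=1.0):
    """Turn an EEG trace into audio samples via FM/AM synthesis.

    `speed` > 1 plays the recording faster (time compression).
    """
    peak = max(abs(v) for v in eeg) or 1.0
    norm = [v / peak for v in eeg]              # scale EEG to [-1, 1]
    duration_s = (len(eeg) - 1) / fs_eeg / speed
    n_audio = int(duration_s * fs_audio)
    audio, phase = [], 0.0
    for n in range(n_audio):
        # Fractional EEG sample index reached at this audio sample.
        pos = n / fs_audio * speed * fs_eeg
        i = int(pos)
        frac = pos - i
        nxt = min(i + 1, len(norm) - 1)
        x = norm[i] * (1.0 - frac) + norm[nxt] * frac   # linear interpolation
        # FM: the EEG value shifts the carrier's instantaneous frequency.
        phase += 2.0 * math.pi * (carrier_hz + deviation_hz * x) / fs_audio
        # AM: the EEG magnitude scales loudness (assumed mapping).
        amp = 0.2 + 0.8 * abs(x)
        audio.append(amp * math.sin(phase))
    return audio


# Two seconds of a synthetic 3 Hz rhythm stands in for an EEG segment.
eeg = [math.sin(2 * math.pi * 3 * n / 200.0) for n in range(400)]
audio_x1 = fm_am_sonify(eeg, speed=1.0)
audio_x10 = fm_am_sonify(eeg, speed=10.0)
print(len(audio_x1), len(audio_x10))
```

Accumulating the phase sample by sample, rather than computing `sin(2*pi*f*t)` directly, keeps the waveform continuous while the frequency changes.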

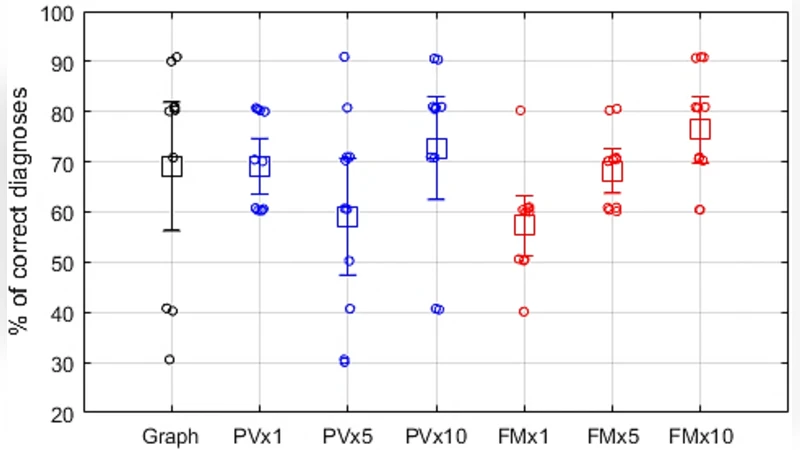

Human factors study: Eleven non‑EEG experts performed a detection task using (a) visual EEG traces, (b) PV at three speeds, and (c) FM/AM at three speeds. PV at normal speed achieved 69 % correct detection, while FM/AM at 10× speed reached the highest accuracy of 76 %. Visual interpretation showed the widest confidence interval, indicating lower consistency among novices.

AI‑assisted detection: A previously developed convolutional neural network (CNN) for neonatal seizure detection is deployed in two configurations: a 6‑layer network (≈ 17 k parameters) and an 11‑layer network (≈ 286 k parameters). On a large multi‑channel dataset, the 11‑layer model achieved an AUC of 97.7 % (vs. 97.1 % for the 6‑layer model) and a good detection rate (GDR) of 83.2 % (vs. 78.8 %). The deeper model consumes an additional 7.9 mAh per hour, reduces battery life by ~19 minutes, and takes 283 ms to process an 8‑second window with a 1‑second shift (vs. 115 ms for the shallower model).
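The windowing scheme and the real-time budget it implies can be illustrated as follows. With a 1‑second shift, a new 8‑second window is due every second, so each inference must finish within 1000 ms. The sketch assumes windows are cut at the 200 Hz acquisition rate (the summary does not say whether the CNN input is resampled), and `sliding_windows` is a hypothetical helper name.

```python
def sliding_windows(n_samples, fs, window_s=8.0, shift_s=1.0):
    """Yield (start, stop) sample indices of consecutive analysis windows."""
    win = int(round(window_s * fs))
    hop = int(round(shift_s * fs))
    start = 0
    while start + win <= n_samples:
        yield start, start + win
        start += hop


FS = 200  # acquisition rate quoted in the summary (assumed for the CNN input)
windows = list(sliding_windows(n_samples=60 * FS, fs=FS))
print(len(windows), windows[0], windows[-1])

# Share of each 1-second shift spent on inference, from the reported latencies.
SHIFT_MS = 1000.0
latency_ms = {"6-layer": 115.0, "11-layer": 283.0}
load = {name: ms / SHIFT_MS for name, ms in latency_ms.items()}
for name, fraction in load.items():
    print(f"{name}: {fraction:.1%} of the per-window time budget")
```

On these figures both models keep up with real time when run alone; the deeper model uses about 28 % of each one-second slot, compared with about 12 % for the shallower one.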

Results & discussion: Power measurements confirm that acquisition (BLE, parsing, storage) is the most energy‑intensive component (≈ 19 mAh/h). Visualization adds negligible overhead. The sonification algorithms run below 40 % CPU even on a low‑spec device (1 GB RAM, 1.2 GHz quad‑core). The AI module’s deeper architecture improves clinical performance at the cost of modestly higher power consumption and processing time, which may become a bottleneck when acquisition, visualization, and sonification run concurrently.

Conclusion: The authors successfully demonstrate a fully integrated, low‑cost neonatal EEG monitoring platform that delivers real‑time visual display, traffic‑light seizure alerts, and intuitive auditory cues. The system operates for extended periods without recharging, and the CNN‑based seizure detector shows clear performance gains with increased depth. Remaining challenges include the reliance on a single channel (limiting spatial information), relatively high CPU/RAM demands on the mobile device, and the need for further validation in diverse clinical settings. Future work should explore multi‑channel extensions, model compression techniques, and refined user interfaces to broaden adoption in resource‑limited NICUs.
