Stochastic seismic waveform inversion using generative adversarial networks as a geological prior

We present an application of deep generative models in the context of partial-differential equation (PDE) constrained inverse problems. We combine a generative adversarial network (GAN) representing an a priori model that creates subsurface geological structures and their petrophysical properties, with the numerical solution of the PDE governing the propagation of acoustic waves within the earth’s interior. We perform Bayesian inversion using an approximate Metropolis-adjusted Langevin algorithm (MALA) to sample from the posterior given seismic observations. Gradients with respect to the model parameters governing the forward problem are obtained by solving the adjoint of the acoustic wave equation. Gradients of the mismatch with respect to the latent variables are obtained by leveraging the differentiable nature of the deep neural network used to represent the generative model. We show that approximate MALA sampling allows efficient Bayesian inversion of model parameters obtained from a prior represented by a deep generative model, obtaining a diverse set of realizations that reflect the observed seismic response.

💡 Research Summary

This paper introduces a novel Bayesian framework for seismic waveform inversion that tightly couples a deep generative prior with a physics‑based forward model. The authors first train a generative adversarial network (GAN) to serve as a prior over high‑dimensional geological models (acoustic velocity, density, facies). The GAN maps a low‑dimensional latent vector z, drawn from a standard normal distribution, to a full spatial model m = Gθ(z). Because the generator is fully differentiable, gradients with respect to z can be obtained via back‑propagation.
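The back‑propagation step described above can be illustrated with a minimal sketch. The one‑layer `generator`, the weights `W` and `b`, the dimensions, and the toy misfit below are all hypothetical stand‑ins (a trained GAN generator is a deep convolutional network); only the chain rule dJ/dz = (∂G/∂z)ᵀ(dJ/dm) carries over. A finite‑difference check is included as an independent test of the hand‑written gradient.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-in for a trained generator G_theta: a single tanh layer.
# A real GAN generator is much deeper; only the chain rule matters here.
LATENT_DIM, MODEL_DIM = 4, 16
W = rng.normal(size=(MODEL_DIM, LATENT_DIM))
b = rng.normal(size=MODEL_DIM)


def generator(z):
    """Map a latent vector z to a model vector m = G(z)."""
    return np.tanh(W @ z + b)


def latent_gradient(z, grad_m):
    """Back-propagate dJ/dm through the generator to obtain dJ/dz.

    This is the vector-Jacobian product (dG/dz)^T (dJ/dm).
    """
    pre_activation = W @ z + b
    dtanh = 1.0 - np.tanh(pre_activation) ** 2  # derivative of tanh
    return W.T @ (dtanh * grad_m)


# Toy misfit J(m) = ||m - m_target||^2, so dJ/dm = 2 (m - m_target).
m_target = rng.uniform(-0.5, 0.5, size=MODEL_DIM)


def misfit_of_z(z):
    return np.sum((generator(z) - m_target) ** 2)


z = rng.standard_normal(LATENT_DIM)  # z drawn from a standard normal
m = generator(z)
grad_z = latent_gradient(z, 2.0 * (m - m_target))

# Central finite differences as an independent check of the chain rule.
eps = 1e-6
fd_grad = np.array([
    (misfit_of_z(z + eps * e) - misfit_of_z(z - eps * e)) / (2 * eps)
    for e in np.eye(LATENT_DIM)
])
max_abs_error = np.max(np.abs(grad_z - fd_grad))
```

In an automatic‑differentiation framework the function `latent_gradient` would not be written by hand; it is spelled out here only to make the vector‑Jacobian product explicit.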

The forward problem is the time‑dependent acoustic wave equation with a damping term to absorb boundary reflections. Given a model m_V(x) (the acoustic velocity field), the wavefield u(x,t) is simulated, and synthetic seismograms d̂ = S(m) are recorded at surface receivers. The data‑misfit functional J(m) = ‖d̂ − d_obs‖² measures the L2 distance between synthetic and observed traces. Using the adjoint‑state method, the gradient ∂J/∂m is computed efficiently by solving an adjoint wave equation backward in time.
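As a rough illustration of the misfit and its adjoint‑derived gradient, the sketch below replaces the wave simulation S with a hypothetical linear operator `A`, so that the adjoint is simply the transpose. This is an assumption made for brevity: the real forward map is nonlinear in the velocity, and its adjoint requires a backward‑in‑time wave solve. The sketch shows two standard checks, the dot‑product test for an adjoint pair and a directional‑derivative test for the gradient of J.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical linear forward operator standing in for the wave simulation S.
N_MODEL, N_DATA = 20, 8
A = rng.normal(size=(N_DATA, N_MODEL))


def forward(m):
    """Synthetic data d_hat = S(m); here simply A @ m."""
    return A @ m


def adjoint(r):
    """Adjoint of the forward operator applied to a data residual."""
    return A.T @ r


def misfit(m, d_obs):
    """J(m) = ||S(m) - d_obs||^2."""
    residual = forward(m) - d_obs
    return residual @ residual


def misfit_gradient(m, d_obs):
    """dJ/dm = 2 S^T (S(m) - d_obs), obtained with one adjoint application."""
    return 2.0 * adjoint(forward(m) - d_obs)


# Dot-product test: <S x, y> must equal <x, S^T y> for an exact adjoint.
x = rng.normal(size=N_MODEL)
y = rng.normal(size=N_DATA)
dot_test_error = abs(forward(x) @ y - x @ adjoint(y))

# Directional-derivative test of the gradient against central differences.
m = rng.normal(size=N_MODEL)
d_obs = forward(rng.normal(size=N_MODEL))
dm = rng.normal(size=N_MODEL)
eps = 1e-5
fd = (misfit(m + eps * dm, d_obs) - misfit(m - eps * dm, d_obs)) / (2 * eps)
analytic = misfit_gradient(m, d_obs) @ dm
gradient_error = abs(fd - analytic) / max(1.0, abs(analytic))
```

The appeal of the adjoint approach is visible even in this toy: the full gradient costs one forward and one adjoint application, regardless of the number of model parameters.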

To sample the posterior p(z|d) ∝ p(d|z)p(z), the authors employ an approximate Metropolis‑adjusted Langevin algorithm (MALA). Each proposal moves the current latent vector along a scaled gradient of the log‑posterior and adds a Gaussian noise term, followed by a Metropolis acceptance test. The likelihood part of that gradient comes from the chain rule, ∂J/∂z = (∂G/∂z)ᵀ(∂J/∂m), with ∂J/∂m supplied by the adjoint solve and ∂G/∂z by back‑propagation through the generator; the standard normal latent prior contributes a simple −z term. The algorithm also incorporates additional constraints such as bore‑hole facies observations; these appear as extra log‑likelihood terms with a large weighting factor, so that sampled models honor both surface seismic data and well logs.
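The sampling loop can be sketched on a toy problem. Everything concrete below is an assumption for illustration: the one‑layer `generator`, the linear operator `A` in place of the wave solver, the Gaussian noise level `SIGMA`, and the fixed step size `tau`. The sketch implements textbook MALA with the full accept/reject correction, not the authors' approximate variant, and omits the bore‑hole terms.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical toy setup: tanh generator and linear forward operator.
LATENT_DIM, MODEL_DIM, N_DATA = 3, 12, 6
W = 0.5 * rng.normal(size=(MODEL_DIM, LATENT_DIM))
A = rng.normal(size=(N_DATA, MODEL_DIM)) / np.sqrt(MODEL_DIM)
SIGMA = 0.5  # assumed standard deviation of the data noise


def generator(z):
    return np.tanh(W @ z)


z_true = rng.standard_normal(LATENT_DIM)
d_obs = A @ generator(z_true) + SIGMA * rng.standard_normal(N_DATA)


def log_posterior_and_grad(z):
    """Unnormalized log p(z|d) and its gradient with respect to z."""
    m = generator(z)
    residual = A @ m - d_obs
    log_post = -0.5 * residual @ residual / SIGMA**2 - 0.5 * z @ z
    grad_m = -(A.T @ residual) / SIGMA**2       # d log-likelihood / dm
    grad_z = W.T @ ((1.0 - m**2) * grad_m) - z  # chain rule plus prior term -z
    return log_post, grad_z


def mala(z0, n_steps, tau):
    """Metropolis-adjusted Langevin sampling with a fixed step size tau."""
    z = z0.copy()
    logp, grad = log_posterior_and_grad(z)
    samples = np.empty((n_steps, z.size))
    n_accept = 0
    for i in range(n_steps):
        # Langevin proposal: gradient drift plus Gaussian noise.
        noise = rng.standard_normal(z.size)
        z_prop = z + tau * grad + np.sqrt(2.0 * tau) * noise
        logp_prop, grad_prop = log_posterior_and_grad(z_prop)
        # Log densities of the forward and reverse proposals (up to a constant).
        log_q_fwd = -np.sum((z_prop - z - tau * grad) ** 2) / (4.0 * tau)
        log_q_rev = -np.sum((z - z_prop - tau * grad_prop) ** 2) / (4.0 * tau)
        log_alpha = logp_prop + log_q_rev - logp - log_q_fwd
        # Metropolis acceptance test.
        if np.log(rng.uniform()) < log_alpha:
            z, logp, grad = z_prop, logp_prop, grad_prop
            n_accept += 1
        samples[i] = z
    return samples, n_accept / n_steps


samples, acceptance_rate = mala(np.zeros(LATENT_DIM), n_steps=2000, tau=5e-3)
posterior_models = np.tanh(samples @ W.T)  # each row is one realization G(z)
```

Each row of `posterior_models` is one realization m = G(z); the spread across rows is what provides the uncertainty estimate in this kind of scheme.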

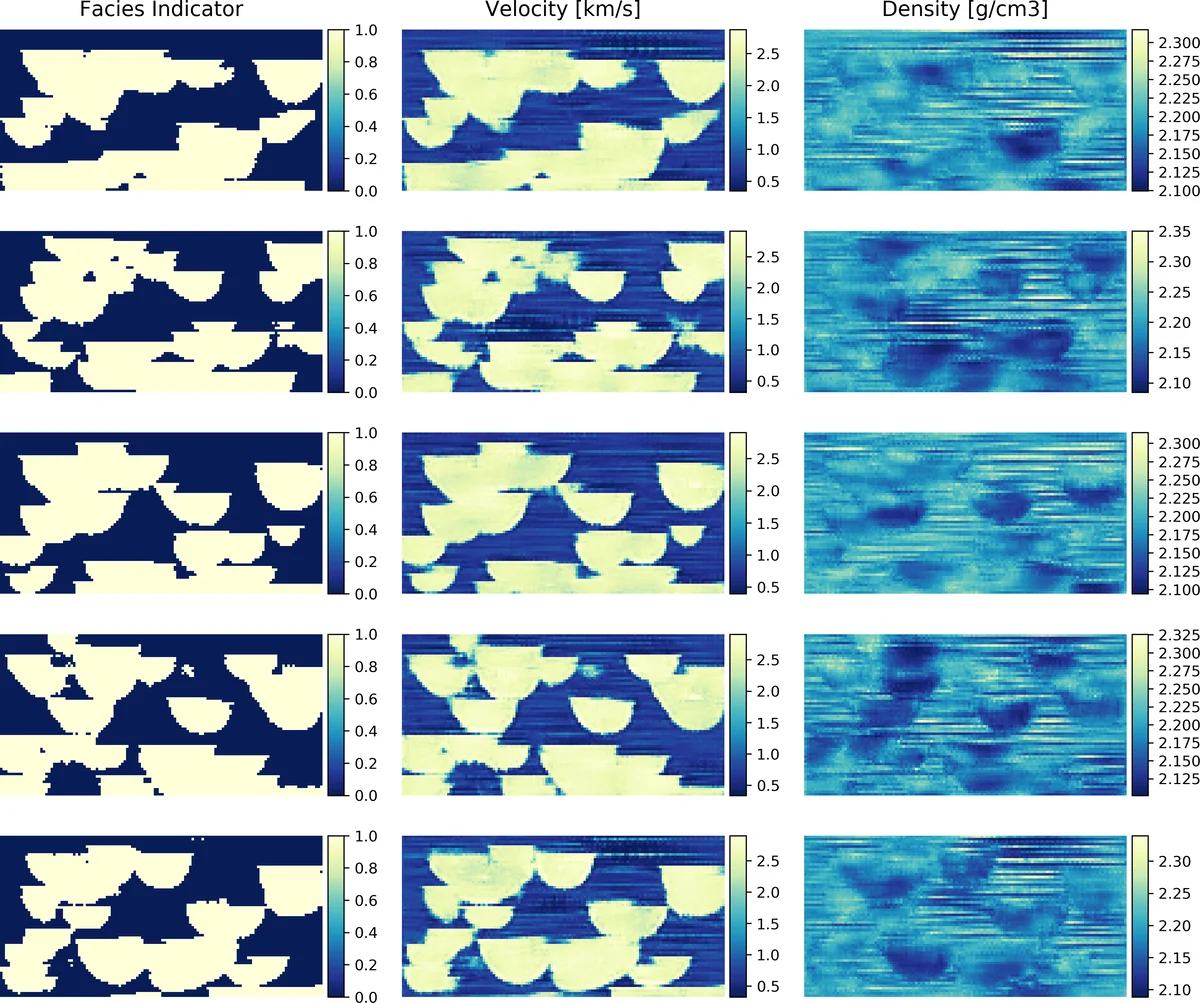

Experiments are conducted on a 2‑D synthetic domain containing layered structures, faults, and heterogeneous velocity fields. The GAN is pre‑trained on a large set of geological realizations, enabling it to generate realistic subsurface models from random latent vectors. The MALA‑approx sampler is run for several thousand iterations with step‑size annealing (from 10⁻¹ to 10⁻⁵) and a small friction parameter λ = 10⁻⁵. Results show that the ensemble of sampled models reproduces the statistical properties of the true model (mean velocity, layer thickness distribution) and captures multimodal posterior features such as alternative fault positions. When well‑log facies data are added, virtually all sampled models match the prescribed facies at the bore‑hole locations, demonstrating the flexibility of the framework to fuse heterogeneous data types.
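The summary gives only the endpoints of the step‑size annealing, not its functional form. The sketch below assumes a geometric (log‑linear) decay between those endpoints; the function name `annealed_step_sizes` and the iteration count are hypothetical, and the friction parameter is not modeled.

```python
import numpy as np


def annealed_step_sizes(n_iterations, start=1e-1, end=1e-5):
    """Geometric decay of the step size from `start` to `end` (assumed form)."""
    return np.geomspace(start, end, num=n_iterations)


# Hypothetical run length; the summary says only "several thousand iterations".
step_sizes = annealed_step_sizes(5000)
```

A sampler would then read `step_sizes[i]` at iteration `i` in place of a fixed step size.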

Key contributions are: (1) a low‑dimensional latent‑space formulation that leverages adjoint‑derived gradients, dramatically reducing the computational burden of high‑dimensional inversion; (2) the use of a differentiable GAN as a powerful, non‑Gaussian prior capable of representing complex geological patterns beyond traditional geostatistical models; (3) MALA‑based sampling that yields a diverse set of posterior realizations, providing a quantitative measure of uncertainty; and (4) seamless integration of additional constraints (e.g., well logs) within the same probabilistic scheme.

The authors acknowledge limitations: the current study is restricted to 2‑D synthetic examples; extending to realistic 3‑D field data will increase memory and computational demands for both forward/adjoint wave simulations and GAN training. Moreover, the performance of MALA depends on careful tuning of step sizes and friction parameters, and the quality of the prior hinges on the availability of representative training data. Future work is suggested in three directions: (i) scalable 3‑D adjoint implementations, (ii) physics‑informed GAN training or data‑augmentation strategies to reduce the need for massive training sets, and (iii) adaptive or Hamiltonian Monte Carlo schemes to alleviate sensitivity to hyper‑parameters.

In summary, the paper demonstrates that coupling a deep generative prior with adjoint‑based gradient computation and Langevin sampling offers a powerful, uncertainty‑aware approach to seismic waveform inversion, opening pathways for more realistic subsurface imaging in geophysics and related inverse problems.
