On sound-based interpretation of neonatal EEG

Significant training is required to visually interpret neonatal EEG signals. This study explores alternative sound-based methods for EEG interpretation which are designed to allow for intuitive and quick differentiation between healthy background activity and abnormal activity such as seizures. A novel method based on frequency and amplitude modulation (FM/AM) is presented. The algorithm is tuned to facilitate the audio-domain perception of rhythmic activity which is specific to neonatal seizures. The method is compared with the previously developed phase vocoder algorithm for different time-compression factors. A survey is conducted amongst a cohort of non-EEG experts to quantitatively and qualitatively examine the performance of sound-based methods in comparison with visual interpretation. It is shown that both sonification methods perform similarly well, with smaller inter-observer variability than visual interpretation. A post-survey analysis of results is performed by examining the sensitivity of the ear to frequency evolution in audio.

💡 Research Summary

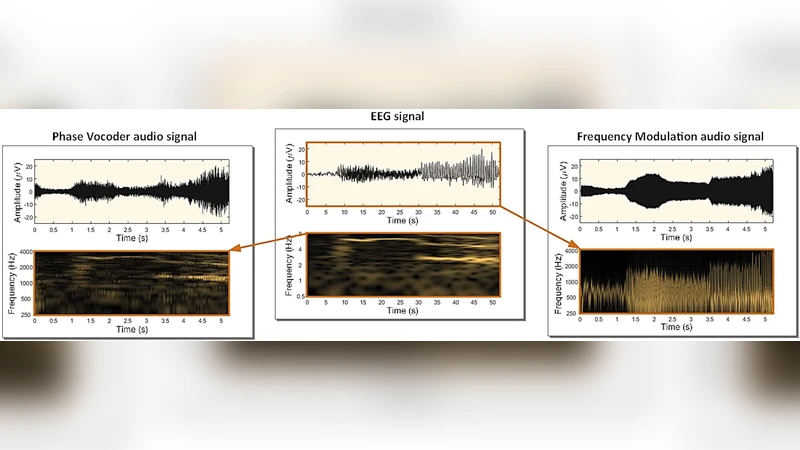

The paper addresses the challenge of interpreting neonatal electroencephalography (EEG), a task that traditionally demands extensive training and continuous expert availability. To reduce reliance on visual expertise, the authors study two sonification approaches that translate EEG signals into sound: a novel Frequency-and-Amplitude Modulation (FM/AM) method and the previously developed Phase Vocoder (PV) method. Both algorithms are designed to preserve the essential characteristics of neonatal EEG, particularly the rhythmic, slowly decreasing dominant frequencies that typify seizures, while mapping them into the audible range (approximately 50 Hz to 5 kHz).

The FM/AM pipeline first band‑passes the EEG (0.5–7.5 Hz) and downsamples it to 16 Hz. A logarithmic‑scale compressor reduces the dynamic range (threshold –20 dB, ratio 1.5) to prevent aliasing in subsequent stages. The compressed waveform is amplified, hard‑limited, and then up‑sampled to 16 kHz. An exponential transform converts the voltage level into a carrier frequency that varies between 50 Hz and 5 kHz, thereby encoding EEG amplitude as pitch variation. The envelope of the original EEG is simultaneously used to modulate the amplitude of the resulting sound, embedding both frequency and amplitude information and emphasizing rhythmic patterns.
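The FM/AM chain described above can be sketched roughly as follows. This is a minimal illustration rather than the authors' implementation: it assumes the input has already been band-passed and downsampled to 16 Hz, uses linear interpolation for up-sampling, and takes the rectified signal as a crude envelope. The function names, the peak normalisation, and the `gain` value are assumptions introduced here.

```python
import numpy as np

def compress_db(x, threshold_db=-20.0, ratio=1.5, eps=1e-12):
    """Static log-domain compressor: level above the threshold is divided by `ratio`."""
    level_db = 20.0 * np.log10(np.abs(x) + eps)
    over_db = np.maximum(level_db - threshold_db, 0.0)
    gain_db = -over_db * (1.0 - 1.0 / ratio)
    return x * 10.0 ** (gain_db / 20.0)

def fm_am_sonify(eeg, fs_in=16.0, fs_out=16000, speedup=1.0,
                 f_lo=50.0, f_hi=5000.0, gain=2.0):
    """Map a pre-filtered EEG trace to an FM/AM audio signal (illustrative sketch)."""
    eeg = np.asarray(eeg, dtype=float)
    peak = np.max(np.abs(eeg))
    x = eeg / peak if peak > 0 else eeg      # assumption: 0 dB is the segment peak
    x = compress_db(x)                       # reduce dynamic range
    x = np.clip(gain * x, -1.0, 1.0)         # amplify, then hard-limit to [-1, 1]

    # Up-sample to the audio rate; `speedup` > 1 shortens the output (time compression).
    n_out = int(round(len(x) / fs_in / speedup * fs_out))
    t_in = np.arange(len(x)) / fs_in / speedup
    t_out = np.arange(n_out) / fs_out
    x_up = np.interp(t_out, t_in, x)

    # Exponential map: -1 -> f_lo, +1 -> f_hi, so equal voltage steps are equal pitch ratios.
    freq = f_lo * (f_hi / f_lo) ** ((x_up + 1.0) / 2.0)
    phase = 2.0 * np.pi * np.cumsum(freq) / fs_out   # integrate frequency to get phase

    envelope = np.abs(x_up)                  # assumption: rectified signal as the envelope
    return envelope * np.sin(phase), freq

# Usage: 10 s of a synthetic 2 Hz rhythm, played back 10x faster (1 s of audio).
t = np.arange(160) / 16.0
audio, freq = fm_am_sonify(np.sin(2 * np.pi * 2.0 * t), speedup=10.0)
```

Integrating the instantaneous frequency before taking the sine keeps the carrier phase continuous, which avoids clicks when the pitch changes quickly.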

The PV approach performs a short‑time Fourier transform (STFT) on the same pre‑processed EEG (window 64 s, hop 16 s). Magnitude and phase are extracted for each frequency bin. Magnitude is linearly interpolated according to a chosen time‑compression factor, while phase is unwrapped and interpolated to maintain coherence across frames. An inverse STFT with overlap‑add reconstructs an 8 kHz audio signal that preserves the EEG’s spectral envelope. The resulting sound is then shifted into the audible band (250–4000 Hz).
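A generic phase-vocoder time compression can be sketched as below. It is a textbook formulation (linear magnitude interpolation plus phase accumulation from wrapped frame-to-frame phase differences), not necessarily the exact variant used in the paper. The frame length and hop are given in samples purely for illustration, the final shift into the audible band is not modelled, and all function names are assumptions.

```python
import numpy as np

def stft(x, n_fft, hop):
    """Hann-windowed short-time Fourier transform, one row per frame."""
    win = np.hanning(n_fft)
    n_frames = 1 + (len(x) - n_fft) // hop
    frames = np.stack([x[i * hop:i * hop + n_fft] * win for i in range(n_frames)])
    return np.fft.rfft(frames, axis=1)

def stretch_frames(spec, rate, n_fft, hop):
    """Resample STFT frames in time by `rate` (> 1 compresses) with coherent phase."""
    n_frames, n_bins = spec.shape
    omega = 2.0 * np.pi * hop * np.arange(n_bins) / n_fft  # nominal phase advance per hop
    steps = np.arange(0, n_frames - 1, rate)
    out = np.empty((len(steps), n_bins), dtype=complex)
    phase_acc = np.angle(spec[0])
    for k, s in enumerate(steps):
        i = int(np.floor(s))
        frac = s - i
        # Linear interpolation of magnitude between neighbouring frames.
        mag = (1.0 - frac) * np.abs(spec[i]) + frac * np.abs(spec[i + 1])
        out[k] = mag * np.exp(1j * phase_acc)
        # Deviation from the nominal advance, wrapped to [-pi, pi].
        dphi = np.angle(spec[i + 1]) - np.angle(spec[i]) - omega
        dphi -= 2.0 * np.pi * np.round(dphi / (2.0 * np.pi))
        phase_acc = phase_acc + omega + dphi
    return out

def istft(spec, n_fft, hop):
    """Inverse STFT by windowed overlap-add with window-energy normalisation."""
    win = np.hanning(n_fft)
    out = np.zeros(n_fft + hop * (spec.shape[0] - 1))
    norm = np.zeros_like(out)
    for k in range(spec.shape[0]):
        out[k * hop:k * hop + n_fft] += np.fft.irfft(spec[k], n=n_fft) * win
        norm[k * hop:k * hop + n_fft] += win ** 2
    return out / np.maximum(norm, 1e-8)

def time_compress(x, rate, n_fft=64, hop=16):
    """Shorten `x` by `rate` while keeping its spectral content."""
    return istft(stretch_frames(stft(x, n_fft, hop), rate, n_fft, hop), n_fft, hop)

# Usage: a pure tone keeps its frequency but lasts roughly half as long.
n = np.arange(1024)
y = time_compress(np.sin(2 * np.pi * 0.125 * n), rate=2.0)
```

Unlike simply playing the signal back faster, this keeps each spectral component at its original frequency and only shortens how long it lasts.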

Both methods support time compression, allowing a user to listen to a 60-minute EEG segment in as little as 6 minutes (compression factor = 10). This speeds up slow EEG dynamics so that seizure-related rhythm changes are easier to hear, and it lets long recordings be reviewed faster than real time.
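The arithmetic behind the compression factor is simple enough to state as a small helper. The function name is hypothetical, and the three factors are the ones used in the survey conditions.

```python
def listening_minutes(recording_minutes: float, compression_factor: float) -> float:
    """Playback time for a recording sonified at the given time-compression factor."""
    if compression_factor <= 0:
        raise ValueError("compression_factor must be positive")
    return recording_minutes / compression_factor

# A 60-minute recording at the three factors used in the survey conditions.
for factor in (1, 5, 10):
    print(f"x{factor}: {listening_minutes(60, factor):g} min")
```

The same division applies to events inside the recording: a 2-minute seizure occupies 12 seconds of audio at a factor of 10.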

For evaluation, the authors assembled a balanced dataset of 200 ten‑second segments: 100 containing clinically verified seizures (various types, durations 1–5 min) and 100 non‑seizure background segments that included artifacts such as respiration, ECG, and movement. The dataset was curated to prevent simple amplitude‑based discrimination.

A survey was conducted with 11 participants who had no formal EEG training. Each participant experienced seven scenarios: visual inspection of the raw EEG (baseline) and six sonification conditions (PV×1, PV×5, PV×10, FM×1, FM×5, FM×10). For each scenario, ten randomly selected segments were presented, and participants marked each as seizure or non‑seizure. Accuracy rates and confidence intervals were calculated.
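The summary does not say how the confidence intervals were computed. As one plausible option, the sketch below uses the Wilson score interval for a binomial proportion. The function name and the example count (80 correct out of 110 decisions, i.e. 11 participants times 10 segments) are hypothetical and are not taken from the paper.

```python
import math

def accuracy_with_ci(n_correct: int, n_total: int, z: float = 1.96):
    """Accuracy and Wilson score interval (z = 1.96 gives roughly 95 % coverage)."""
    if n_total <= 0 or not 0 <= n_correct <= n_total:
        raise ValueError("need 0 <= n_correct <= n_total and n_total > 0")
    p = n_correct / n_total
    denom = 1.0 + z ** 2 / n_total
    centre = (p + z ** 2 / (2 * n_total)) / denom
    half = z * math.sqrt(p * (1 - p) / n_total + z ** 2 / (4 * n_total ** 2)) / denom
    return p, centre - half, centre + half

# Hypothetical pooled result for one condition: 11 participants x 10 segments.
acc, lo, hi = accuracy_with_ci(80, 110)
print(f"accuracy = {acc:.3f}, 95% CI = [{lo:.3f}, {hi:.3f}]")
```

Pooling all decisions treats them as independent, which ignores between-participant differences. A per-participant analysis would be needed to quantify inter-observer variability.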

Results showed that visual inspection achieved 69 % accuracy, while the fastest sonification (compression factor = 10) yielded the highest performance: FM×10 at 76 % and PV×10 at 73 %. All sonification conditions outperformed visual inspection, and the variability among participants was markedly lower for auditory methods (≤ 15 % difference between the lowest and highest performers) compared with visual inspection (≈ 40 % difference). When participants were split into two groups based on their visual performance (below-average vs. above-average), the gap in accuracy between groups narrowed dramatically for the sonified conditions, indicating that auditory cues provide a more consistent basis for decision-making. In the preference survey, 8 participants favoured FM and 3 favoured PV, with most preferring the highly compressed versions (×10).

The authors conclude that human auditory perception is especially sensitive to frequency evolution and rhythmic structure, making it well‑suited for rapid discrimination of neonatal seizure activity. Time‑compressed sonification enables clinicians to review long EEG recordings quickly, potentially serving as a real‑time alert system in neonatal intensive care units where expert EEG readers are scarce. The study suggests that integrating sonification with existing automated detection algorithms could further improve reliability and reduce false alarms. Future work should explore real‑time implementation, larger multi‑center validation, and the combination of auditory and visual displays to maximize diagnostic confidence.
