Parallel Closed-Loop Connected Vehicle Simulator for Large-Scale Transportation Network Management: Challenges, Issues, and Solution Approaches

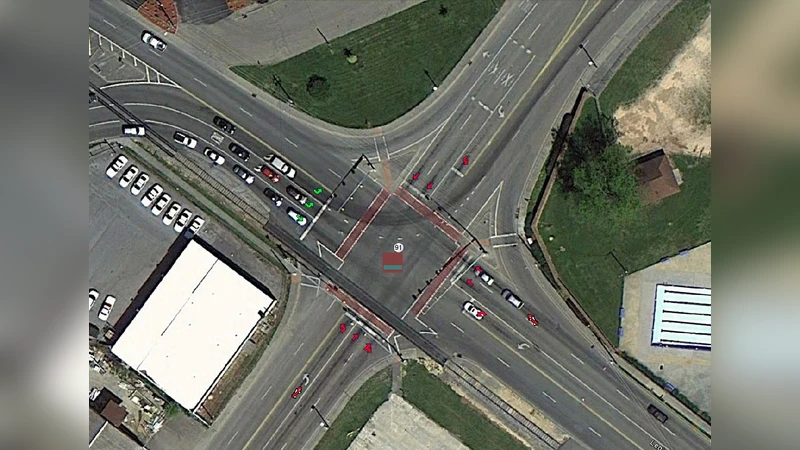

The growing scale and complexity of urban transportation networks have significantly increased the execution time and resource requirements of vehicular network simulations, exceeding the capabilities of sequential simulators. From a smart city perspective, a parallel and distributed simulation environment is unavoidable, especially when the entire city-wide information system is expected to be integrated with numerous services and ITS applications. In this paper, we present a conceptual model of an Integrated Distributed Connected Vehicle Simulator (IDCVS) that can emulate real-time traffic in a large metro area by incorporating hardware-in-the-loop simulation together with the closed-loop coupling of SUMO and OMNeT++. We also discuss the challenges, issues, and solution approaches for implementing such a parallel closed-loop transportation network simulator, addressing transportation network partitioning, synchronization, and scalability. One unique feature of the envisioned integrated simulation tool is that it uses vehicle traces collected through multiple roadway sensors: DSRC onboard units, magnetometers, loop detectors, and video detectors. Another major feature of the proposed model is the incorporation of hybrid parallelism in both the transportation and communication simulation platforms. We identify the challenges and issues that IDCVS faces in incorporating this multi-level parallelism. We also discuss approaches for integrating hardware-in-the-loop simulation, covering the steps involved in preprocessing sensor data, filtering and extrapolating missing data, managing large volumes of real-time traffic data, and handling different data formats.

💡 Research Summary

The paper addresses the growing computational burden of simulating large‑scale urban transportation networks that integrate both traffic flow and vehicle‑to‑everything (V2X) communications. Traditional sequential simulators cannot keep up with the data volume and real‑time requirements of a smart‑city environment, where city‑wide information systems must support numerous ITS services simultaneously. To overcome these limitations, the authors propose an Integrated Distributed Connected Vehicle Simulator (IDCVS) that couples the microscopic traffic simulator SUMO with the network simulator OMNeT++ in a closed‑loop fashion and incorporates hardware‑in‑the‑loop (HIL) capabilities.

In the closed‑loop architecture, SUMO generates vehicle trajectories (position, speed, route) which are fed to OMNeT++ to model V2X message exchange. The communication outcomes (latency, packet loss, interference) are immediately fed back to SUMO, allowing the traffic model to react to communication‑induced effects such as delayed driver information or adaptive cruise control actions. This bidirectional feedback yields a more realistic representation of the interdependence between traffic dynamics and wireless communications.
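The feedback loop described above can be illustrated with a minimal, self-contained sketch. It does not use SUMO or OMNeT++; `traffic_step`, `communication_step`, the roadside-unit position, the radio range, and the advisory speed are all hypothetical stand-ins chosen for illustration, and the communication result is applied at the start of the next step rather than within the same step.

```python
from dataclasses import dataclass


@dataclass
class Vehicle:
    vid: str
    position: float  # metres along a 1-D road (toy geometry)
    speed: float     # metres per second


def traffic_step(vehicles, advisories, dt):
    """Toy stand-in for the traffic simulator: obey advisories, then move."""
    for v in vehicles:
        if v.vid in advisories:
            # Communication outcome feeds back into the traffic model.
            v.speed = min(v.speed, advisories[v.vid])
        v.position += v.speed * dt


def communication_step(vehicles, rsu_position, radio_range, advisory_speed):
    """Toy stand-in for the network simulator.

    Vehicles within radio range of a roadside unit receive a speed advisory.
    A real network model would also account for latency, loss, and interference.
    """
    return {
        v.vid: advisory_speed
        for v in vehicles
        if abs(v.position - rsu_position) <= radio_range
    }


def run_closed_loop(vehicles, steps, dt=1.0):
    advisories = {}
    for _ in range(steps):
        traffic_step(vehicles, advisories, dt)          # traffic -> positions
        advisories = communication_step(                # positions -> messages
            vehicles, rsu_position=100.0, radio_range=30.0, advisory_speed=5.0
        )
    return vehicles
```

With one vehicle starting at 0 m and 20 m/s, five one-second steps leave it at 85 m travelling at 5 m/s, whereas an open-loop run without the advisory would place it at 100 m. The difference is the communication-induced effect that the closed loop is meant to capture.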

A central contribution of the work is the introduction of hybrid parallelism across both simulation domains. For the traffic side, the road network is partitioned geographically and each partition is assigned to an MPI process; within each process, OpenMP threads handle vehicle updates, lane‑changing, and routing. On the communication side, vehicles are clustered into groups that are distributed among MPI processes, while the event‑driven OMNeT++ kernel uses lock‑free queues and timestamp ordering to minimize synchronization overhead. This two‑level parallelism exploits both inter‑node (MPI) and intra‑node (thread) resources, delivering substantial speed‑up and memory savings.
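The two-level decomposition on the traffic side can be sketched structurally as follows. This is an illustration under stated assumptions, not the authors' implementation: the outer loop over partitions stands in for MPI processes, a Python thread pool stands in for OpenMP threads (CPython threads show the structure only and do not give CPU-bound speed-up), the road network is reduced to an x coordinate split into equal-width strips, and all function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def assign_partition(x, x_min, x_max, n_parts):
    """Map an x coordinate to one of n_parts equal-width geographic strips."""
    width = (x_max - x_min) / n_parts
    idx = int((x - x_min) / width)
    return min(max(idx, 0), n_parts - 1)  # clamp vehicles on the outer border


def advance(vehicle, dt):
    """Move one vehicle, represented as an (x, speed) tuple."""
    x, speed = vehicle
    return (x + speed * dt, speed)


def step_partition(vehicles, dt, n_threads=4):
    """Inner level: update the vehicles of one partition with a thread pool."""
    with ThreadPoolExecutor(max_workers=n_threads) as pool:
        return list(pool.map(lambda v: advance(v, dt), vehicles))


def step_all(partitions, dt, x_min, x_max):
    """Outer level: step every partition, then hand over boundary crossers."""
    n_parts = len(partitions)
    # In a distributed deployment each iteration would run on its own process.
    moved = [step_partition(p, dt) for p in partitions]
    new_parts = [[] for _ in range(n_parts)]
    for part in moved:
        for v in part:
            # Vehicles that crossed a strip boundary change owner here; in a
            # distributed run this is the inter-process message exchange.
            new_parts[assign_partition(v[0], x_min, x_max, n_parts)].append(v)
    return new_parts
```

The reassignment at the end of `step_all` is where cross-partition traffic turns into communication cost, which is why the choice of partition boundaries matters.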

Partitioning is not trivial because traffic and communication topologies are coupled. The authors formulate an “Integrated Traffic‑Communication Partitioning” problem and solve it with a multi‑level graph‑cut algorithm that simultaneously minimizes cut edges in the road graph and the communication graph. The resulting partitions reduce cross‑partition traffic and V2X messages, thereby decreasing inter‑process communication.
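One simple way to express a joint objective over both graphs is a weighted sum of the two cut weights. The summary does not state how the two terms are combined, so the weighting parameter `alpha`, the function names, and the toy graphs below are assumptions made purely for illustration.

```python
def cut_weight(edges, assignment):
    """Total weight of edges whose endpoints lie in different partitions."""
    return sum(w for u, v, w in edges if assignment[u] != assignment[v])


def combined_cost(road_edges, comm_edges, assignment, alpha=0.5):
    """Weighted sum of the road-graph cut and the communication-graph cut.

    alpha is a hypothetical trade-off parameter: 1.0 considers only the road
    graph, 0.0 only the communication graph.
    """
    road_cut = cut_weight(road_edges, assignment)
    comm_cut = cut_weight(comm_edges, assignment)
    return alpha * road_cut + (1 - alpha) * comm_cut


# Toy graphs over four zones; weights stand for traffic flow and message volume.
road_edges = [("A", "B", 3), ("B", "C", 1), ("C", "D", 3)]
comm_edges = [("A", "C", 2), ("B", "D", 2), ("A", "B", 1)]

candidates = {
    "split_AB_CD": {"A": 0, "B": 0, "C": 1, "D": 1},
    "split_AC_BD": {"A": 0, "B": 1, "C": 0, "D": 1},
}

best = min(
    candidates,
    key=lambda name: combined_cost(road_edges, comm_edges, candidates[name]),
)
```

With equal weighting, `split_AB_CD` costs 2.5 and `split_AC_BD` costs 4.0, so the first candidate is selected even though it has the larger communication cut. A multi-level graph-cut method searches a far larger space by coarsening and refining the graph, but it scores candidate partitions against an objective of this general kind.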

Synchronization between the two simulators is handled by a hybrid time‑step and conservative event scheduling scheme. Each process advances in fixed simulation time steps, but when a communication event is scheduled, a look‑ahead value is computed to allow safe asynchronous progression. Because look‑ahead can vary with traffic density, a dynamic look‑ahead adjustment algorithm is introduced to compute the minimal safe waiting time, preventing deadlock while keeping causality intact.
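The conservative rule behind this scheme can be sketched in a few lines: a process may only handle events no later than the earliest time at which any neighbour could still send it something. The look-ahead formula used here (time for the nearest vehicle to reach the partition boundary) is a common heuristic and an assumption of this sketch, not the adjustment algorithm from the paper; all names are hypothetical.

```python
def dynamic_lookahead(distances_to_boundary, speeds, floor=0.1):
    """Hypothetical density-dependent look-ahead in seconds.

    No moving vehicle can reach the partition boundary sooner than
    distance / speed, so the minimum over vehicles is a safe bound.
    The floor keeps the simulation advancing when a vehicle sits on the
    boundary.
    """
    times = [d / s for d, s in zip(distances_to_boundary, speeds) if s > 0]
    if not times:
        return float("inf")  # nothing is moving toward the boundary
    return max(floor, min(times))


def safe_horizon(neighbor_clocks, lookaheads):
    """Latest timestamp this process can handle without risking causality."""
    return min(neighbor_clocks[n] + lookaheads[n] for n in neighbor_clocks)


def processable(event_times, horizon):
    """Pending events that are safe to execute now, in timestamp order."""
    return sorted(t for t in event_times if t <= horizon)
```

For example, if neighbour `p1` is at time 10.0 with a look-ahead of 3.0 and neighbour `p2` is at 12.0 with a look-ahead of 0.5, the safe horizon is 12.5, so pending events at 11.0 and 12.5 can run while an event at 14.0 must wait. A larger look-ahead widens this window, which is the motivation for recomputing it as traffic conditions change.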

The paper also tackles the practical challenge of ingesting heterogeneous sensor data collected from DSRC on‑board units, magnetometers, loop detectors, and video detectors. A four‑stage sensor‑data preprocessing pipeline is described: (1) normalization of raw streams, (2) missing‑value interpolation using time‑series regression and Kalman filtering, (3) outlier detection via machine‑learning clustering, and (4) serialization into a unified protobuf format for fast HIL interfacing. This pipeline ensures that realistic, noise‑filtered traces can drive the HIL component, which connects the simulator to actual vehicle control hardware in real time.
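Stage (2) of this pipeline can be illustrated with a scalar Kalman filter that bridges gaps in a sensor stream. The sketch assumes a random-walk state model, made-up variance values, and `None` as the marker for a missing sample; it stands in for, and is much simpler than, whatever regression and filtering combination the authors use.

```python
def kalman_fill(measurements, process_var=1.0, meas_var=4.0):
    """Filter a 1-D stream and fill missing samples (None) with predictions.

    Assumes a random-walk model: the state is expected to stay constant
    between samples, with uncertainty growing by process_var per step.
    """
    estimate = None
    variance = None
    filled = []
    for z in measurements:
        if estimate is None:
            if z is None:
                filled.append(None)  # nothing observed yet, cannot predict
                continue
            estimate, variance = float(z), meas_var  # initialise from first sample
        else:
            variance += process_var  # predict: uncertainty grows over time
            if z is not None:
                gain = variance / (variance + meas_var)
                estimate += gain * (z - estimate)  # update toward measurement
                variance *= 1 - gain
        filled.append(estimate)
    return filled
```

For a speed stream such as `[10.0, None, 14.0]`, the gap is filled with the predicted value 10.0, and the final estimate moves only part of the way toward 14.0 because the filter weighs the new reading against its accumulated uncertainty. Under the random-walk assumption a gap is simply held at the last estimate; a model with a velocity term would extrapolate the trend instead.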

Experimental evaluation on a 64‑node cluster, using a scenario of 10,000 vehicles on a metropolitan road network with 5,000 km of roadway, demonstrates the effectiveness of the approach. Compared with a sequential baseline, IDCVS achieves more than a 12× reduction in wall‑clock simulation time and cuts memory consumption to under 80 % of the original requirement. The dynamic look‑ahead mechanism reduces synchronization latency by about 30 %, and the multi‑level partitioning limits cross‑partition communication overhead. The authors acknowledge remaining challenges, including handling bursty cross‑partition traffic, I/O bottlenecks in HIL devices, and scaling the sensor‑data streaming infrastructure.

In summary, the paper delivers a comprehensive architecture and a set of concrete algorithms for building a parallel, closed‑loop, hardware‑integrated connected‑vehicle simulator capable of city‑scale real‑time operation. Its contributions lay a solid foundation for future research on large‑scale traffic management, autonomous‑vehicle testing, and integrated ITS service platforms in smart cities.
