Learning Representations for Counterfactual Inference

Observational studies are rising in importance due to the widespread accumulation of data in fields such as healthcare, education, employment and ecology. We consider the task of answering counterfactual questions such as, "Would this patient have lo…

Authors: Fredrik D. Johansson, Uri Shalit, David Sontag

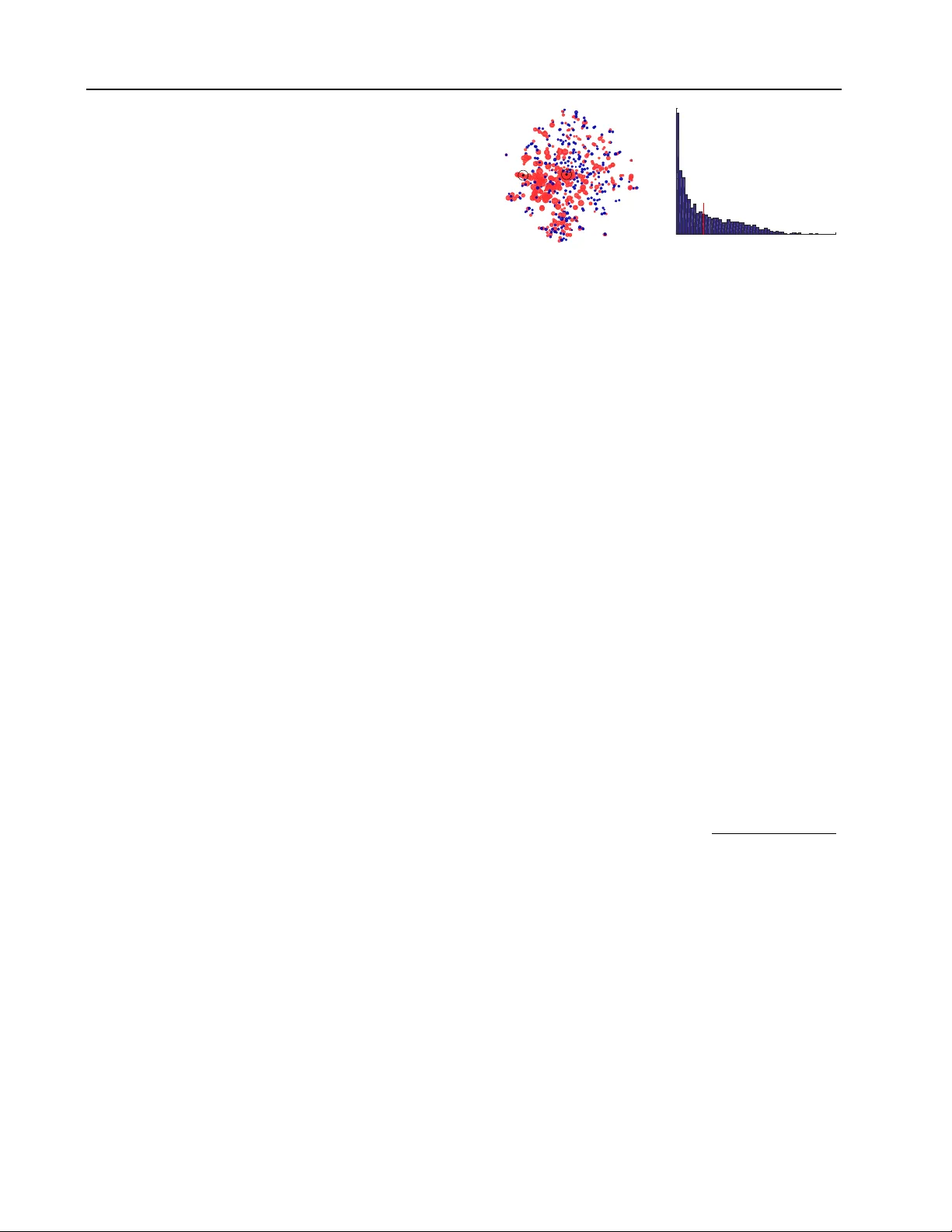

Fredrik D. Johansson∗ (FREJOHK@CHALMERS.SE)
CSE, Chalmers University of Technology, Göteborg, SE-412 96, Sweden

Uri Shalit∗ (SHALIT@CS.NYU.EDU)
David Sontag (DSONTAG@CS.NYU.EDU)
CIMS, New York University, 251 Mercer Street, New York, NY 10012 USA

∗ Equal contribution

Abstract

Observational studies are rising in importance due to the widespread accumulation of data in fields such as healthcare, education, employment and ecology. We consider the task of answering counterfactual questions such as, “Would this patient have lower blood sugar had she received a different medication?”. We propose a new algorithmic framework for counterfactual inference which brings together ideas from domain adaptation and representation learning. In addition to a theoretical justification, we perform an empirical comparison with previous approaches to causal inference from observational data. Our deep learning algorithm significantly outperforms the previous state-of-the-art.

1. Introduction

Inferring causal relations is a fundamental problem in the sciences and commercial applications. The problem of causal inference is often framed in terms of counterfactual questions (Lewis, 1973; Rubin, 1974; Pearl, 2009) such as “Would this patient have lower blood sugar had she received a different medication?”, or “Would the user have clicked on this ad had it been in a different color?”. In this paper we propose a method to learn representations suited for counterfactual inference, and show its efficacy in both simulated and real world tasks.

We focus on counterfactual questions raised by what are known as observational studies. Observational studies are studies where interventions and outcomes have been recorded, along with appropriate context.
For example, consider an electronic health record dataset collected over several years, where for each patient we have lab tests and past diagnoses, as well as data relating to their diabetic status, and the causal question of interest is which of two existing anti-diabetic medications A or B is better for a given patient. Observational studies are rising in importance due to the widespread accumulation of data in fields such as healthcare, education, employment and ecology. We believe machine learning will be called on more and more to help make better decisions in these fields, and that researchers should be careful to pay attention to the ways in which these studies differ from classic supervised learning, as explained in Section 2 below.

Proceedings of the 33rd International Conference on Machine Learning, New York, NY, USA, 2016. JMLR: W&CP volume 48. Copyright 2016 by the author(s).

In this work we draw a connection between counterfactual inference and domain adaptation. We then introduce a form of regularization by enforcing similarity between the distributions of representations learned for populations with different interventions, for example the representations for patients who received medication A versus those who received medication B. This reduces the variance from fitting a model on one distribution and applying it to another. In Section 3 we give several methods for learning such representations. In Section 4 we show that our methods approximately minimize an upper bound on a regret term in the counterfactual regime. The general method is outlined in Figure 1. Our work has commonalities with recent work on learning fair representations (Zemel et al., 2013; Louizos et al., 2015) and learning representations for transfer learning (Ben-David et al., 2007; Gani et al., 2015).
In all these cases the learned representation has some invariance to specific aspects of the data: either an identity of a certain group such as racial minorities for fair representations, or the identity of the data source for domain adaptation, or, in the case of counterfactual learning, the type of intervention enacted in each population.

In machine learning, counterfactual questions typically arise in problems where there is a learning agent which performs actions, and receives feedback or reward for that choice without knowing what would be the feedback for other possible choices. This is sometimes referred to as bandit feedback (Beygelzimer et al., 2010). This setup comes up in diverse areas, for example off-policy evaluation in reinforcement learning (Sutton & Barto, 1998), learning from “logged implicit exploration data” (Strehl et al., 2010) or “logged bandit feedback” (Swaminathan & Joachims, 2015), and in understanding and designing complex real world ad-placement systems (Bottou et al., 2013). Note that while in contextual bandit or robotics applications the researcher typically knows the method underlying the action choice (e.g. the policy in reinforcement learning), in observational studies we usually do not have control or even a full understanding of the mechanism which chooses which actions are performed and which feedback or reward is revealed. For instance, for anti-diabetic medication, more affluent patients might be insensitive to the price of a drug, while less affluent patients could take this into account in their choice.

Given that we do not know beforehand the particulars determining the choice of action, the question remains: how can we learn from data which course of action would have better outcomes?
By bringing together ideas from representation learning and domain adaptation, our method offers a novel way to leverage increasing computation power and the rise of large datasets to tackle consequential questions of causal inference.

The contributions of our paper are as follows. First, we show how to formulate the problem of counterfactual inference as a domain adaptation problem, and more specifically a covariate shift problem. Second, we derive new families of representation algorithms for counterfactual inference: one is based on linear models and variable selection, and the other is based on deep learning of representations (Bengio et al., 2013). Finally, we show that learning representations that encourage similarity (balance) between the treated and control populations leads to better counterfactual inference; this is in contrast to many methods which attempt to create balance by re-weighting samples (e.g., Bang & Robins, 2005; Dudík et al., 2011; Austin, 2011; Swaminathan & Joachims, 2015). We show the merit of learning balanced representations both theoretically in Theorem 1, and empirically in a set of experiments across two datasets.

2. Problem setup

Let $\mathcal{T}$ be the set of potential interventions or actions we wish to consider, $\mathcal{X}$ the set of contexts, and $\mathcal{Y}$ the set of possible outcomes. For example, for a patient $x \in \mathcal{X}$ the set $\mathcal{T}$ of interventions of interest might be two different treatments, and the set of outcomes might be $\mathcal{Y} = [0, 200]$ indicating blood sugar levels in mg/dL. For an ad slot on a webpage $x$, the set of interventions $\mathcal{T}$ might be all possible ads in the inventory that fit that slot, while the potential outcomes could be $\mathcal{Y} = \{\text{click}, \text{no click}\}$. For a context $x$ (e.g. patient, webpage), and for each potential intervention $t \in \mathcal{T}$, let $Y_t(x) \in \mathcal{Y}$ be the potential outcome for $x$.
The fundamental problem of causal inference is that only one potential outcome is observed for a given context $x$: even if we give the patient one medication and later the other, the patient is not in exactly the same state. In machine learning this type of partial feedback is often called “bandit feedback”. The model described above is known as the Rubin-Neyman causal model (Rubin, 1974; 2011).

We are interested in the case of a binary action set $\mathcal{T} = \{0, 1\}$, where action 1 is often known as the “treated” and action 0 is the “control”. In this case the quantity $Y_1(x) - Y_0(x)$ is of high interest: it is known as the individualized treatment effect (ITE) for context $x$ (van der Laan & Petersen, 2007; Weiss et al., 2015). Knowing this quantity enables choosing the best of the two actions when confronted with the choice, for example choosing the best treatment for a specific patient. However, the fact that we only have access to the outcome of one of the two actions prevents the ITE from being known. Another commonly sought after quantity is the average treatment effect, $\mathrm{ATE} = E_{x \sim p(x)}[\mathrm{ITE}(x)]$ for a population with distribution $p(x)$. In the binary action setting, we refer to the observed and unobserved outcomes as the factual outcome $y^F(x)$, and counterfactual outcome $y^{CF}(x)$ respectively.

A common approach for estimating the ITE is by direct modelling: given $n$ samples $\{(x_i, t_i, y_i^F)\}_{i=1}^n$, where $y_i^F = t_i \cdot Y_1(x_i) + (1 - t_i) Y_0(x_i)$, learn a function $h : \mathcal{X} \times \mathcal{T} \to \mathcal{Y}$ such that $h(x_i, t_i) \approx y_i^F$. The estimated transductive ITE is then:

$$
\widehat{\mathrm{ITE}}(x_i) =
\begin{cases}
y_i^F - h(x_i, 1 - t_i), & t_i = 1, \\
h(x_i, 1 - t_i) - y_i^F, & t_i = 0.
\end{cases}
\tag{1}
$$

While in principle any function fitting model might be used for estimating the ITE (Prentice, 1976; Gelman & Hill, 2006; Chipman et al., 2010; Wager & Athey, 2015; Weiss et al., 2015), it is important to note how this task differs from standard supervised learning. The problem is as follows: the observed sample consists of the set $\hat{P}^F = \{(x_i, t_i)\}_{i=1}^n$. However, calculating the ITE requires inferring the outcome on the set $\hat{P}^{CF} = \{(x_i, 1 - t_i)\}_{i=1}^n$. We call the set $\hat{P}^F \sim P^F$ the empirical factual distribution, and the set $\hat{P}^{CF} \sim P^{CF}$ the empirical counterfactual distribution, respectively. Because $P^F$ and $P^{CF}$ need not be equal, the problem of causal inference by counterfactual prediction might require inference over a different distribution than the one from which samples are given. In machine learning terms, this means that the feature distribution of the test set differs from that of the train set. This is a case of covariate shift, which is a special case of domain adaptation (Daume III & Marcu, 2006; Jiang, 2008; Mansour et al., 2009). A somewhat similar connection was noted in Schölkopf et al. (2012) with respect to covariate shift, in the context of a very simple causal model.

[Figure 1 diagram labels: context $x$; representation $\Phi$; treatment $t$; outcome error $\mathrm{loss}(h(\Phi, t), y)$; imbalance $\mathrm{disc}(\Phi_C, \Phi_T)$.]

Figure 1. Contexts $x$ are represented by $\Phi(x)$, which are used, with group indicator $t$, to predict the response $y$ while minimizing the imbalance in distributions measured by $\mathrm{disc}(\Phi_C, \Phi_T)$.

Algorithm 1. Balancing counterfactual regression

1. Input: $X, T, Y^F$; $\mathcal{H}, \mathcal{N}$; $\alpha, \gamma, \lambda$
2. $\Phi^*, g^* = \arg\min_{\Phi \in \mathcal{N},\, g \in \mathcal{H}} B_{\mathcal{H},\alpha,\gamma}(\Phi, g)$ (see Equation (2))
3. $h^* = \arg\min_{h \in \mathcal{H}} \frac{1}{n} \sum_{i=1}^n (h(\Phi, t_i) - y_i^F)^2 + \lambda \|h\|_{\mathcal{H}}$
4. Output: $h^*, \Phi^*$

Specifically, we have that $P^F(x, t) = P(x) \cdot P(t \mid x)$ and $P^{CF}(x, t) = P(x) \cdot P(\neg t \mid x)$. The difference between the observed (factual) sample and the sample we must perform inference on lies precisely in the treatment assignment mechanism, $P(t \mid x)$.
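As an illustration of Equation (1), the following minimal sketch computes the transductive ITE estimate from factual outcomes and a fitted predictor. The function name `transductive_ite` and the toy model `toy_h` are hypothetical and are not part of the paper; any regressor with the signature `h(x, t)` could be plugged in.

```python
from typing import Callable, List, Sequence


def transductive_ite(
    xs: Sequence[Sequence[float]],
    ts: Sequence[int],
    y_factual: Sequence[float],
    h: Callable[[Sequence[float], int], float],
) -> List[float]:
    """Equation (1): combine the observed outcome with a predicted counterfactual."""
    estimates = []
    for x, t, y in zip(xs, ts, y_factual):
        # The model is queried on the counterfactual pair (x_i, 1 - t_i),
        # which belongs to the counterfactual sample, not the factual one.
        y_cf_hat = h(x, 1 - t)
        # The sign is chosen so the result is always (treated) - (control).
        estimates.append(y - y_cf_hat if t == 1 else y_cf_hat - y)
    return estimates


# Hypothetical fitted model, for illustration only: outcome = 2 * x[0] + 3 * t.
def toy_h(x: Sequence[float], t: int) -> float:
    return 2.0 * x[0] + 3.0 * t


xs = [[1.0], [2.0]]
ts = [1, 0]
y_f = [5.5, 4.0]  # one factual outcome per unit
ite_hat = transductive_ite(xs, ts, y_f, toy_h)
print(ite_hat)  # [3.5, 3.0]
```

Note that every call to `h` in the loop is made at a point of the empirical counterfactual distribution, which is exactly where the covariate shift described above matters.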
For example, in a randomized control trial, we typically have that $t$ and $x$ are independent. In the contextual bandit setting, there is typically an algorithm which determines the choice of the action $t$ given the context $x$. In observational studies, which are the focus of this work, the treatment assignment mechanism is not under our control and in general will not be independent of the context $x$. Therefore, in general, the counterfactual distribution will be different from the factual distribution.

3. Balancing counterfactual regression

We propose to perform counterfactual inference by amending the direct modeling approach, taking into account the fact that the learned estimator $h$ must generalize from the factual distribution to the counterfactual distribution.

Our method, see Figure 1, learns a representation $\Phi : \mathcal{X} \to \mathbb{R}^d$ (either using a deep neural network, or by feature re-weighting and selection), and a function $h : \mathbb{R}^d \times \mathcal{T} \to \mathbb{R}$, such that the learned representation trades off three objectives: (1) enabling low-error prediction of the observed outcomes over the factual representation, (2) enabling low-error prediction of unobserved counterfactuals by taking into account relevant factual outcomes, and (3) keeping the distributions of the treatment populations similar, or balanced.

We accomplish the first objective, low-error prediction, by the usual means of error minimization over a training set and regularization in order to enable good generalization error. We accomplish the second objective by a penalty that encourages counterfactual predictions to be close to the nearest observed outcome from the respective treated or control set. Finally, we accomplish the third objective by minimizing the so-called discrepancy distance, introduced by Mansour et al. (2009), which is a hypothesis class dependent distance measure tailored for domain adaptation. For hypothesis space $\mathcal{H}$, we denote the discrepancy distance by $\mathrm{disc}_{\mathcal{H}}$.
See Section 4 for the formal definition and motivation. Other discrepancy measures such as Maximum Mean Discrepancy (Gretton et al., 2012) could also be used for this purpose.

Intuitively, representations that reduce the discrepancy between the treated and control populations prevent the learner from using “unreliable” aspects of the data when trying to generalize from the factual to counterfactual domains. For example, if in our sample almost no men ever received medication A, inferring how men would react to medication A is highly prone to error and a more conservative use of the gender feature might be warranted.

Let $X = \{x_i\}_{i=1}^n$, $T = \{t_i\}_{i=1}^n$, and $Y^F = \{y_i^F\}_{i=1}^n$ denote the observed units, treatment assignments and factual outcomes respectively. We assume $\mathcal{X}$ is a metric space with a metric $d$. Let $j(i) \in \arg\min_{j \in \{1 \ldots n\} \text{ s.t. } t_j = 1 - t_i} d(x_j, x_i)$ be the nearest neighbor of $x_i$ among the group that received the opposite treatment from unit $i$. Note that the nearest neighbor is computed once, in the input space, and does not change with the representation $\Phi$. The objective we minimize over representations $\Phi$ and hypotheses $h \in \mathcal{H}$ is

$$
B_{\mathcal{H},\alpha,\gamma}(\Phi, h) = \frac{1}{n} \sum_{i=1}^n \left| h(\Phi(x_i), t_i) - y_i^F \right| + \alpha\, \mathrm{disc}_{\mathcal{H}}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi) + \frac{\gamma}{n} \sum_{i=1}^n \left| h(\Phi(x_i), 1 - t_i) - y^F_{j(i)} \right|,
\tag{2}
$$

where $\alpha, \gamma > 0$ are hyperparameters to control the strength of the imbalance penalties, and $\mathrm{disc}$ is the discrepancy measure defined in Section 4.1. When the hypothesis class $\mathcal{H}$ is the class of linear functions, the term $\mathrm{disc}_{\mathcal{H}}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi)$ has a closed form given in Section 4.1 below, and $h(\Phi, t_i) = h^\top [\Phi(x_i)\ t_i]$. For more complex hypothesis spaces there is in general no exact closed form for $\mathrm{disc}_{\mathcal{H}}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi)$.

Once the representation $\Phi$ is learned, we fit a final hypothesis minimizing a regularized squared loss objective on the factual data. Our algorithm is summarized in Algorithm 1.
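To make the three terms of objective (2) concrete, here is a minimal sketch that evaluates it for a fixed representation and hypothesis. All names (`opposite_neighbors`, `balancing_objective`, `mean_gap`) are hypothetical. The imbalance measure is passed in as a callable, and the `mean_gap` function used in the example is only a simple stand-in (distance between group means), not the discrepancy distance $\mathrm{disc}_{\mathcal{H}}$ itself.

```python
import math
from typing import Callable, List, Sequence

Vector = Sequence[float]


def euclidean(a: Vector, b: Vector) -> float:
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))


def opposite_neighbors(xs: Sequence[Vector], ts: Sequence[int]) -> List[int]:
    """j(i): nearest unit in INPUT space among those with the opposite treatment."""
    nn = []
    for xi, ti in zip(xs, ts):
        candidates = [j for j, tj in enumerate(ts) if tj == 1 - ti]
        nn.append(min(candidates, key=lambda j: euclidean(xs[j], xi)))
    return nn


def balancing_objective(xs, ts, y_f, phi, h, disc, alpha, gamma) -> float:
    """Evaluate objective (2) for a given representation phi and hypothesis h."""
    n = len(xs)
    nn = opposite_neighbors(xs, ts)  # computed once, independent of phi
    reps = [phi(x) for x in xs]
    factual = sum(abs(h(r, t) - y) for r, t, y in zip(reps, ts, y_f)) / n
    counterfactual = sum(
        abs(h(reps[i], 1 - ts[i]) - y_f[nn[i]]) for i in range(n)
    ) / n
    return factual + alpha * disc(reps, ts) + gamma * counterfactual


def mean_gap(reps: Sequence[Vector], ts: Sequence[int]) -> float:
    """Stand-in imbalance measure (NOT disc_H): distance between group means."""
    def mean(group: int) -> List[float]:
        rows = [r for r, t in zip(reps, ts) if t == group]
        return [sum(col) / len(rows) for col in zip(*rows)]
    return euclidean(mean(1), mean(0))


xs = [[0.0], [1.0], [2.0], [3.0]]
ts = [0, 1, 0, 1]
y_f = [0.0, 2.0, 2.0, 4.0]
value = balancing_objective(
    xs, ts, y_f,
    phi=lambda x: x,              # identity representation
    h=lambda r, t: r[0] + t,      # toy hypothesis
    disc=mean_gap, alpha=0.5, gamma=0.25,
)
print(opposite_neighbors(xs, ts), value)  # [1, 0, 1, 2] 0.75
```

In this toy example the factual term is zero, so the value comes entirely from the imbalance penalty (0.5 × 1) and the nearest-neighbor counterfactual penalty (0.25 × 1).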
Note that our algorithm involves two minimization procedures. In Section 4 we motivate our method, by showing that our method of learning representations minimizes an upper bound on the regret error over the counterfactual distribution, using results of Cortes & Mohri (2014).

3.1. Balancing variable selection

A naïve way of obtaining a balanced representation is to use only features that are already well balanced, i.e. features which have a similar distribution over both treated and control sets. However, imbalanced features can be highly predictive of the outcome, and should not always be discarded. A middle ground is to restrict the influence of imbalanced features on the predicted outcome. We build on this idea by learning a sparse re-weighting of the features that minimizes the bound in Theorem 1. The re-weighting determines the influence of a feature by trading off its predictive capabilities and its balance.

We implement the re-weighting as a diagonal matrix $W$, forming the representation $\Phi(x) = Wx$, with $\mathrm{diag}(W)$ subject to a simplex constraint to achieve sparsity. Let $\mathcal{N} = \{x \mapsto Wx : W = \mathrm{diag}(w),\ w_i \in [0, 1],\ \sum_i w_i = 1\}$ denote the space of such representations. We can now apply Algorithm 1 with $\mathcal{H}_l$ the space of linear hypotheses. Because the hypotheses are linear, $\mathrm{disc}(\Phi)$ is a function of the distance between the weighted population means, see Section 4.1. With $p = E[t]$, $c = p - 1/2$, $n_t = \sum_{i=1}^n t_i$, $\mu_1 = \frac{1}{n_t} \sum_{i : t_i = 1} x_i$, and $\mu_0$ analogously defined,

$$
\mathrm{disc}_{\mathcal{H}_l}(XW) = c + \sqrt{c^2 + \left\| W (p\mu_1 - (1 - p)\mu_0) \right\|_2^2}.
$$

To minimize the discrepancy, features $k$ that differ a lot between treatment groups will receive a smaller weight $w_k$. Minimizing the overall objective $B$ involves a trade-off between maximizing balance and predictive accuracy. We minimize (2) using alternating sub-gradient descent.

3.2. Deep neural networks

Deep neural networks have been shown to successfully learn good representations of high-dimensional data in many tasks (Bengio et al., 2013). Here we show that they can be used for counterfactual inference and, crucially, for accommodating imbalance penalties. We propose a modification of the standard feed-forward architecture with fully connected layers, see Figure 2. The first $d_r$ hidden layers are used to learn a representation $\Phi(x)$ of the input $x$. The output of the $d_r$-th layer is used to calculate the discrepancy $\mathrm{disc}_{\mathcal{H}}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi)$. The $d_o$ layers following the first $d_r$ layers take as additional input the treatment assignment $t_i$ and generate a prediction $h([\Phi(x_i), t_i])$ of the outcome.

[Figure 2 diagram labels: inputs $x$ and $t$; $d_r$ representation layers producing $\Phi$; $d_o$ output layers on $[\Phi, t]$; $\mathrm{loss}(h(\Phi, t), y)$; $\mathrm{disc}(\Phi_{t=0}, \Phi_{t=1})$.]

Figure 2. Neural network architecture.

3.3. Non-linear hypotheses and individual effect

We note that both in the case of variable re-weighting, and for neural nets with a single linear outcome layer, the hypothesis space $\mathcal{H}$ comprises linear functions of $[\Phi, t]$ and the discrepancy $\mathrm{disc}_{\mathcal{H}}(\Phi)$ can be expressed in closed form. A less desirable consequence is that such models cannot capture differences in the individual treatment effect, as they involve no interactions between $\Phi(x)$ and $t$. Such interactions could be introduced by, for example, (polynomial) feature expansion, or in the case of neural networks, by adding non-linear layers after the concatenation $[\Phi(x), t]$. For both approaches however, we no longer have a closed form expression for $\mathrm{disc}_{\mathcal{H}}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi)$.

4. Theory

In this section we derive an upper bound on the relative counterfactual generalization error of a representation function $\Phi$. The bound only uses quantities we can measure directly from the available data. In the previous section we gave several methods for learning representations which approximately minimize the upper bound.
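As one concrete piece of the previous section, the closed-form linear discrepancy used for variable re-weighting in Section 3.1 can be computed directly from the data. The sketch below is illustrative only: the function name `linear_discrepancy` and the toy data are assumptions, and the weights are simply supplied by hand rather than learned by the alternating sub-gradient procedure.

```python
import math
from typing import List, Sequence


def linear_discrepancy(
    xs: Sequence[Sequence[float]], ts: Sequence[int], w: Sequence[float]
) -> float:
    """c + sqrt(c^2 + ||W (p*mu_1 - (1-p)*mu_0)||_2^2) with W = diag(w), c = p - 1/2."""
    n, d = len(xs), len(xs[0])
    n_t = sum(ts)
    p = n_t / n
    c = p - 0.5
    mu1: List[float] = [
        sum(x[k] for x, t in zip(xs, ts) if t == 1) / n_t for k in range(d)
    ]
    mu0: List[float] = [
        sum(x[k] for x, t in zip(xs, ts) if t == 0) / (n - n_t) for k in range(d)
    ]
    # Weighted, size-scaled difference in means between treated and control.
    v = [w[k] * (p * mu1[k] - (1 - p) * mu0[k]) for k in range(d)]
    return c + math.sqrt(c * c + sum(vk * vk for vk in v))


# Feature 0 is balanced across groups; feature 1 is strongly imbalanced.
xs = [[1.0, 4.0], [1.0, 4.0], [1.0, 0.0], [1.0, 0.0]]
ts = [1, 1, 0, 0]

uniform = linear_discrepancy(xs, ts, w=[0.5, 0.5])
downweighted = linear_discrepancy(xs, ts, w=[0.9, 0.1])
print(uniform, downweighted)  # approximately 1.0 and 0.2
```

Both weight vectors lie on the simplex. Shifting weight away from the imbalanced feature lowers the discrepancy, which is the effect the re-weighting is meant to exploit; whether that is worthwhile depends on how predictive the feature is, which this sketch does not model.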
Recall that for an observed context or instance $x_i \in \mathcal{X}$ with observed treatment $t_i \in \{0, 1\}$, the two potential outcomes are $Y_0(x_i), Y_1(x_i) \in \mathcal{Y}$, of which we observe the factual outcome $y_i^F = t_i Y_1(x_i) + (1 - t_i) Y_0(x_i)$. Let $(x_1, t_1, y_1^F), \ldots, (x_n, t_n, y_n^F)$ be a sample from the factual distribution. Similarly, let $(x_1, 1 - t_1, y_1^{CF}), \ldots, (x_n, 1 - t_n, y_n^{CF})$ be the counterfactual sample. Note that while we know the factual outcomes $y_i^F$, we do not know the counterfactual outcomes $y_i^{CF}$. Let $\Phi : \mathcal{X} \to \mathbb{R}^d$ be a representation function, and let $R(\Phi)$ denote its range. Denote by $\hat{P}^F_\Phi$ the empirical distribution over the representations and treatment assignments $(\Phi(x_1), t_1), \ldots, (\Phi(x_n), t_n)$, and similarly $\hat{P}^{CF}_\Phi$ the empirical distribution over the representations and counterfactual treatment assignments $(\Phi(x_1), 1 - t_1), \ldots, (\Phi(x_n), 1 - t_n)$. Let $\mathcal{H}_l$ be the hypothesis set of linear functions $\beta : R(\Phi) \times \{0, 1\} \to \mathcal{Y}$.

Definition 1 (Mansour et al. 2009). Given a hypothesis set $\mathcal{H}$ and a loss function $L$, the empirical discrepancy between the empirical distributions $\hat{P}^F_\Phi$ and $\hat{P}^{CF}_\Phi$ is:

$$
\mathrm{disc}_{\mathcal{H}}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi) = \max_{\beta, \beta' \in \mathcal{H}} \left| E_{x \sim \hat{P}^F_\Phi}\left[ L(\beta(x), \beta'(x)) \right] - E_{x \sim \hat{P}^{CF}_\Phi}\left[ L(\beta(x), \beta'(x)) \right] \right|
$$

where $L$ is a loss function $L : \mathcal{Y} \times \mathcal{Y} \to \mathbb{R}$ with weak Lipschitz constant $\mu$ relative to $\mathcal{H}$. (When $L$ is the squared loss we can show that if $\|\Phi(x)\|_2 \le m$ and $|y| \le M$, and the hypothesis set $\mathcal{H}$ is that of linear functions with norm bounded by $m/\lambda$, then $\mu \le 2M(1 + m^2/\lambda)$.)

Note that the discrepancy is defined with respect to a hypothesis class and a loss function, and is therefore very useful for obtaining generalization bounds involving different distributions. Throughout this section we always have $L$ denote the squared loss.
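Definition 1 can be checked numerically in a very small case. The sketch below makes several assumptions that are not stated in the text: the representation is one-dimensional, the loss is the squared loss, and the difference $u = \beta - \beta'$ of two linear hypotheses is restricted to unit norm, so that $L(\beta(z), \beta'(z)) = (u^\top z)^2$ with $z = [\Phi(x), t]$. Under these assumptions a brute-force sweep over unit vectors should agree with the closed form stated in Section 4.1. The function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def second_moment(zs: Sequence[Tuple[float, float]]) -> List[List[float]]:
    """Empirical E[z z^T] for 2-dimensional points z = (phi, t)."""
    n = len(zs)
    return [[sum(z[a] * z[b] for z in zs) / n for b in range(2)] for a in range(2)]


def brute_force_disc(phis: Sequence[float], ts: Sequence[int], steps: int = 10000) -> float:
    """max over unit vectors u of |E_F[(u.z)^2] - E_CF[(u.z)^2]| on an angular grid."""
    factual = [(phi, float(t)) for phi, t in zip(phis, ts)]
    counterfactual = [(phi, float(1 - t)) for phi, t in zip(phis, ts)]
    mf, mcf = second_moment(factual), second_moment(counterfactual)
    diff = [[mf[a][b] - mcf[a][b] for b in range(2)] for a in range(2)]
    best = 0.0
    for k in range(steps):
        theta = math.pi * k / steps  # u and -u give the same value, so [0, pi) suffices
        u = (math.cos(theta), math.sin(theta))
        quad = sum(u[a] * diff[a][b] * u[b] for a in range(2) for b in range(2))
        best = max(best, abs(quad))
    return best


def closed_form_disc(phis: Sequence[float], ts: Sequence[int]) -> float:
    """Closed form of Section 4.1; abs() is used so it also covers p < 1/2 (assumption)."""
    n = len(phis)
    p = sum(ts) / n
    v = sum(phi * t for phi, t in zip(phis, ts)) / n \
        - sum(phi * (1 - t) for phi, t in zip(phis, ts)) / n
    c = abs(p - 0.5)
    return c + math.sqrt(c * c + v * v)


phis = [1.0, 2.0, 0.0, 1.0]
ts = [1, 1, 1, 0]
brute = brute_force_disc(phis, ts)
closed = closed_form_disc(phis, ts)
print(round(brute, 6), round(closed, 6))
```

The grid search slightly underestimates the true maximum, so the two numbers agree only up to the grid resolution; the sweep is meant as a sanity check on the definition, not as a practical way to compute the discrepancy.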
We prove the following, based on Cortes & Mohri (2014):

Theorem 1. For a sample $\{(x_i, t_i, y_i^F)\}_{i=1}^n$, $x_i \in \mathcal{X}$, $t_i \in \{0, 1\}$ and $y_i^F \in \mathcal{Y}$, and a given representation function $\Phi : \mathcal{X} \to \mathbb{R}^d$, let $\hat{P}^F_\Phi = (\Phi(x_1), t_1), \ldots, (\Phi(x_n), t_n)$, $\hat{P}^{CF}_\Phi = (\Phi(x_1), 1 - t_1), \ldots, (\Phi(x_n), 1 - t_n)$. We assume that $\mathcal{X}$ is a metric space with metric $d$, and that the potential outcome functions $Y_0(x)$ and $Y_1(x)$ are Lipschitz continuous with constants $K_0$ and $K_1$ respectively, such that $d(x_a, x_b) \le c \implies |Y_t(x_a) - Y_t(x_b)| \le K_t \cdot c$ for $t = 0, 1$.

Let $\mathcal{H}_l \subset \mathbb{R}^{d+1}$ be the space of linear functions $\beta : \mathcal{X} \times \{0, 1\} \to \mathcal{Y}$, and for $\beta \in \mathcal{H}_l$, let $L_P(\beta) = E_{(x,t,y) \sim P}[L(\beta(x, t), y)]$ be the expected loss of $\beta$ over distribution $P$. Let $r = \max\left( E_{(x,t) \sim P^F}[\|[\Phi(x), t]\|_2],\ E_{(x,t) \sim P^{CF}}[\|[\Phi(x), t]\|_2] \right)$ be the maximum expected radius of the distributions. For $\lambda > 0$, let $\hat{\beta}^F(\Phi) = \arg\min_{\beta \in \mathcal{H}_l} L_{\hat{P}^F_\Phi}(\beta) + \lambda \|\beta\|_2^2$, and $\hat{\beta}^{CF}(\Phi)$ similarly for $\hat{P}^{CF}_\Phi$, i.e. $\hat{\beta}^F(\Phi)$ and $\hat{\beta}^{CF}(\Phi)$ are the ridge regression solutions for the factual and counterfactual empirical distributions, respectively.

Let $\hat{y}_i^F(\Phi, h) = h^\top [\Phi(x_i), t_i]$ and $\hat{y}_i^{CF}(\Phi, h) = h^\top [\Phi(x_i), 1 - t_i]$ be the outputs of the hypothesis $h \in \mathcal{H}_l$ over the representation $\Phi(x_i)$ for the factual and counterfactual settings of $t_i$, respectively. Finally, for each $i, j \in \{1 \ldots n\}$, let $d_{i,j} \equiv d(x_i, x_j)$ and $j(i) \in \arg\min_{j \in \{1 \ldots n\} \text{ s.t. } t_j = 1 - t_i} d(x_j, x_i)$ be the nearest neighbor in $\mathcal{X}$ of $x_i$ among the group that received the opposite treatment from unit $i$.
Then for both $Q = P^F$ and $Q = P^{CF}$ we have:

$$
\begin{aligned}
\frac{\lambda}{\mu r} \left( L_Q(\hat{\beta}^F(\Phi)) - L_Q(\hat{\beta}^{CF}(\Phi)) \right)^2 \le{} & \mathrm{disc}_{\mathcal{H}_l}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi) \; + && (3) \\
& \min_{h \in \mathcal{H}_l} \frac{1}{n} \sum_{i=1}^n \left( |\hat{y}_i^F(\Phi, h) - y_i^F| + |\hat{y}_i^{CF}(\Phi, h) - y_i^{CF}| \right) && (4) \\
\le{} & \mathrm{disc}_{\mathcal{H}_l}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi) \; + \\
& \min_{h \in \mathcal{H}_l} \frac{1}{n} \sum_{i=1}^n \left( |\hat{y}_i^F(\Phi, h) - y_i^F| + |\hat{y}_i^{CF}(\Phi, h) - y^F_{j(i)}| \right) + && (5) \\
& \frac{K_0}{n} \sum_{i : t_i = 1} d_{i,j(i)} + \frac{K_1}{n} \sum_{i : t_i = 0} d_{i,j(i)}. && (6)
\end{aligned}
$$

The proof is in the supplemental material.

Theorem 1 gives, for all fixed representations $\Phi$, a bound on the relative error for a ridge regression model fit on the factual outcomes and evaluated on the counterfactual, as compared with ridge regression had it been fit on the unobserved counterfactual outcomes. It does not take into account how $\Phi$ is obtained, and applies even if $h(\Phi(x), t)$ is not convex in $x$, e.g. if $\Phi$ is a neural net. Since the bound in the theorem is true for all representations $\Phi$, we can attempt to minimize it over $\Phi$, as done in Algorithm 1.

The term on line (4) of the bound includes the unknown counterfactual outcomes $y_i^{CF}$. It measures how well we could in principle fit the factual and counterfactual outcomes together using a linear hypothesis over the representation $\Phi$. For example, if the dimension of the representation is greater than the number of samples, and in addition if there exist constants $b$ and $\epsilon$ such that $|y_i^F - y_i^{CF} - b| \le \epsilon$, then this term is upper bounded by $\epsilon$. In general however, we cannot directly control its magnitude.

The term on line (3) measures the discrepancy between the factual and counterfactual distributions over the representation $\Phi$. In Section 4.1 below, we show that this term is closely related to the norm of the difference in means between the representation of the control group and the treated group.
A representation for which the means of the treated and control are close (small value of (3)), but which at the same time allows for a good prediction of the factuals and counterfactuals (small value of (4)), is guaranteed to yield structural risk minimizers with similar generalization errors between factual and counterfactual.

We further show that the term on line (4), which cannot be evaluated since we do not know $y_i^{CF}$, can be upper bounded by a sum of the terms on lines (5) and (6). The term (5) includes two empirical data fitting terms: $|\hat{y}_i^F(\Phi, h) - y_i^F|$ and $|\hat{y}_i^{CF}(\Phi, h) - y^F_{j(i)}|$. The first is simply fitting the observed factual outcomes using a linear function over the representation $\Phi$. The second term is a form of nearest-neighbor regression, where the counterfactual outcomes for a treated (resp. control) instance are fit to the most similar factual outcome among the control (resp. treated) set, where similarity is measured in the original space $\mathcal{X}$. Finally, the term on line (6) is the only quantity which is independent of the representation $\Phi$. It measures the average distance between each treated instance and the nearest control, and vice-versa, scaled by the Lipschitz constants of the true treated and control outcome functions. This term will be small when: (a) the true outcome functions $Y_0(x)$ and $Y_1(x)$ are relatively smooth, and (b) there is overlap between the treated and control groups, leading to small average nearest neighbor distance across the groups. It is well-known that when there is not much overlap between treated and control, causal inference in general is more difficult since the extrapolation from treated to control and vice-versa is more extreme (Rosenbaum, 2009).

The upper bound in Theorem 1 suggests the following approach for counterfactual regression.
First minimize the terms (3) and (5) as functions of the representation $\Phi$. Once $\Phi$ is obtained, perform a ridge regression on the factual outcomes using the representations $\Phi(x)$ and the treatment assignments as input. The terms in the bound ensure that $\Phi$ would have a good fit for the data (term (5)), while removing aspects of the treated and control which create a large discrepancy (term (3)). For example, if there is a feature which is much more strongly associated with the treatment assignment than with the outcome, it might be advisable to not use it (Pearl, 2011).

4.1. Linear discrepancy

A straightforward calculation shows that for a class $\mathcal{H}_l$ of linear hypotheses,

$$
\mathrm{disc}_{\mathcal{H}_l}(P, Q) = \left\| \mu_2(P) - \mu_2(Q) \right\|_2 .
$$

Here, $\|A\|_2$ is the spectral norm of $A$ and $\mu_2(P) = E_{x \sim P}[x x^\top]$ is the second-order moment of $x \sim P$. In the special case of counterfactual inference, $P$ and $Q$ differ only in the treatment assignment. Specifically,

$$
\begin{aligned}
\mathrm{disc}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi) &= \left\| \begin{bmatrix} 0_{d,d} & v \\ v^\top & 2p - 1 \end{bmatrix} \right\|_2 && (7) \\
&= p - \frac{1}{2} + \sqrt{\frac{(2p - 1)^2}{4} + \|v\|_2^2} && (8)
\end{aligned}
$$

where $v = E_{(x,t) \sim \hat{P}^F_\Phi}[\Phi(x) \cdot t] - E_{(x,t) \sim \hat{P}^F_\Phi}[\Phi(x) \cdot (1 - t)]$ and $p = E[t]$. Let $\mu_1(\Phi) = E_{(x,t) \sim \hat{P}^F_\Phi}[\Phi(x) \mid t = 1]$ and $\mu_0(\Phi) = E_{(x,t) \sim \hat{P}^F_\Phi}[\Phi(x) \mid t = 0]$ be the treated and control means in $\Phi$ space. Then $v = p \cdot \mu_1(\Phi) - (1 - p) \cdot \mu_0(\Phi)$, exactly the difference in means between the treated and control groups, weighted by their respective sizes. As a consequence, minimizing the discrepancy with linear hypotheses constitutes matching means in feature space.

5. Related work

Counterfactual inference for determining causal effects in observational studies has been studied extensively in statistics, economics, epidemiology and sociology (Morgan & Winship, 2014; Robins et al., 2000; Rubin, 2011; Chernozhukov et al., 2013) as well as in machine learning (Langford et al., 2011; Bottou et al., 2013; Swaminathan & Joachims, 2015).
Non-parametric methods do not attempt to model the relation between the context, intervention, and outcome. The methods include nearest-neighbor matching, propensity score matching, and propensity score re-weighting (Rosenbaum & Rubin, 1983; Rosenbaum, 2002; Austin, 2011).

Parametric methods, on the other hand, attempt to concretely model the relation between the context, intervention, and outcome. These methods include any type of regression including linear and logistic regression (Prentice, 1976; Gelman & Hill, 2006), random forests (Wager & Athey, 2015) and regression trees (Chipman et al., 2010).

Doubly robust methods combine aspects of parametric and non-parametric methods, typically by using a propensity score weighted regression (Bang & Robins, 2005; Dudík et al., 2011). They are especially of use when the treatment assignment probability is known, as is the case for off-policy evaluation or learning from logged bandit data. Once the treatment assignment probability has to be estimated, as is the case in most observational studies, their efficacy might wane considerably (Kang & Schafer, 2007).

Tian et al. (2014) presented one of the few methods that achieve balance by transforming or selecting covariates, modeling interactions between treatment and covariates.

6. Experiments

We evaluate the two variants of our algorithm proposed in Section 3 with focus on two questions: 1) What is the effect of imposing imbalance regularization on representations? 2) How do our methods fare against established methods for counterfactual inference? We refer to the variable selection method of Section 3.1 as Balancing Linear Regression (BLR) and the neural network approach as BNN for Balancing Neural Network.

We report the RMSE of the estimated individual treatment effect, denoted $\epsilon_{\mathrm{ITE}}$, and the absolute error in estimated average treatment effect, denoted $\epsilon_{\mathrm{ATE}}$, see Section 2.
Further, following Hill (2011), we report the Precision in Estimation of Heterogeneous Effect (PEHE),

$$
\mathrm{PEHE} = \sqrt{\frac{1}{n} \sum_{i=1}^n \left( \hat{y}_1(x_i) - \hat{y}_0(x_i) - (Y_1(x_i) - Y_0(x_i)) \right)^2}.
$$

Unlike for ITE, obtaining a good (small) PEHE requires accurate estimation of both the factual and counterfactual responses, not just the counterfactual. Standard methods for hyperparameter selection, including cross-validation, are unavailable when training counterfactual models on real-world data, as there are no samples from the counterfactual outcome. In our experiments, all outcomes are simulated, and we have access to counterfactual samples. To avoid fitting parameters to the test set, we generate multiple repeated experiments, each with a different outcome function, and pick hyperparameters once, for all models (and baselines), based on a held-out set of experiments. While not possible for real-world data, this approach gives an indication of the robustness of the parameters.

The neural network architectures used for all experiments consist of fully-connected ReLU layers trained using RMSProp, with a small $\ell_2$ weight decay, $\lambda = 10^{-3}$. We evaluate two architectures. BNN-4-0 consists of 4 ReLU representation-only layers and a single linear output layer, $d_r = 4$, $d_o = 0$. BNN-2-2 consists of 2 ReLU representation-only layers, 2 ReLU output layers after the treatment has been added, and a single linear output layer, $d_r = 2$, $d_o = 2$, see Figure 2. For the IHDP data we use layers of 25 hidden units each. For the News data, representation layers have 400 units and output layers 200 units. The nearest neighbor term, see Section 3, did not improve empirical performance, and was omitted for the BNN models. For the neural network models, the hypothesis and the representation were fit jointly.
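The evaluation metrics defined earlier in this section can be computed as in the following sketch, which is only usable in simulated settings where both potential outcomes are available. The function names are hypothetical; `ite_hat` stands for any vector of estimated individual effects, such as the transductive estimate of Equation (1).

```python
import math
from typing import Sequence


def pehe(
    y1_hat: Sequence[float], y0_hat: Sequence[float],
    y1_true: Sequence[float], y0_true: Sequence[float],
) -> float:
    """Root mean squared error between predicted and true effects (both arms predicted)."""
    n = len(y1_hat)
    total = sum(
        ((a - b) - (c - d)) ** 2
        for a, b, c, d in zip(y1_hat, y0_hat, y1_true, y0_true)
    )
    return math.sqrt(total / n)


def ite_rmse(
    ite_hat: Sequence[float], y1_true: Sequence[float], y0_true: Sequence[float]
) -> float:
    """RMSE of estimated individual treatment effects."""
    n = len(ite_hat)
    total = sum((e - (c - d)) ** 2 for e, c, d in zip(ite_hat, y1_true, y0_true))
    return math.sqrt(total / n)


def ate_abs_error(
    ite_hat: Sequence[float], y1_true: Sequence[float], y0_true: Sequence[float]
) -> float:
    """Absolute error of the estimated average treatment effect."""
    n = len(ite_hat)
    true_ate = sum(c - d for c, d in zip(y1_true, y0_true)) / n
    return abs(sum(ite_hat) / n - true_ate)


# Simulated ground truth: true effects are [2.0, 3.0].
y1_true, y0_true = [3.0, 5.0], [1.0, 2.0]

print(pehe([3.0, 4.0], [2.0, 2.0], y1_true, y0_true))   # 1.0
print(ite_rmse([1.0, 4.0], y1_true, y0_true))           # 1.0
print(ate_abs_error([1.0, 4.0], y1_true, y0_true))      # 0.0
```

The last two lines use the same estimates: individual errors of opposite sign cancel in the average, so a small ATE error does not by itself imply accurate individual effects.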
We include several different linear models in our comparison, including ordinary linear regression (OLS) and doubly robust linear regression (DR) (Bang & Robins, 2005). We also include a method where variables are first selected using LASSO and then used to fit a ridge regression (LASSO+RIDGE). Regularization parameters are picked based on a held-out sample. For DR, we estimate propensity scores using logistic regression and clip weights at 100. For the News dataset (see below), we perform the logistic regression on the first 100 principal components of the data.

Bayesian Additive Regression Trees (BART) (Chipman et al., 2010) is a non-linear regression model which has been used successfully for counterfactual inference in the past (Hill, 2011). We compare our results to BART using the implementation provided in the BayesTree R-package (Chipman & McCulloch, 2016). Like Hill (2011), we do not attempt to tune the parameters, but use the default. Finally, we include a standard feed-forward neural network, trained with 4 hidden layers, to predict the factual outcome based on $X$ and $t$, without a penalty for imbalance. We refer to this as NN-4.

6.1. Simulation based on real data – IHDP

Hill (2011) introduced a semi-simulated dataset based on the Infant Health and Development Program (IHDP). The IHDP data has covariates from a real randomized experiment, studying the effect of high-quality child care and home visits on future cognitive test scores. The experiment proposed by Hill (2011) uses a simulated outcome and artificially introduces imbalance between treated and control subjects by removing a subset of the treated population. In total, the dataset consists of 747 subjects (139 treated, 608 control), each represented by 25 covariates measuring properties of the child and their mother. For details, see Hill (2011).
We run 100 repeated experiments for hyperparameter selection and 1000 for evaluation, all with the log-linear response surface implemented as setting "A" in the NPCI package (Dorie, 2016).

Figure 3. Visualization of one of the News sets (left). Each dot represents a single news item $x$. The radius represents the outcome $y(x)$, and the color the treatment $t$. The two black dots represent the two centroids. Histogram of ITE in News (right; x-axis ITE(x), annotated ATE = 3.45).

6.2. Simulation based on real data – News

We introduce a new dataset, simulating the opinions of a media consumer exposed to multiple news items. Each item is consumed either on a mobile device or on desktop. The units are different news items represented by word counts $x_i \in \mathbb{N}^V$, and the outcome $y_F(x_i) \in \mathbb{R}$ is the reader's experience of $x_i$. The intervention $t \in \{0, 1\}$ represents the viewing device, desktop ($t = 0$) or mobile ($t = 1$). We assume that the consumer prefers to read about certain topics on mobile. To model this, we train a topic model on a large set of documents and let $z(x) \in \mathbb{R}^k$ represent the topic distribution of news item $x$. We define two centroids in topic space, $z^c_1$ (mobile) and $z^c_0$ (desktop), and let the reader's opinion of news item $x$ on device $t$ be determined by the similarity between $z(x)$ and $z^c_t$,

$$y_F(x_i) = C\left(z(x_i)^\top z^c_0 + t_i \cdot z(x_i)^\top z^c_1\right) + \epsilon,$$

where $C$ is a scaling factor and $\epsilon \sim \mathcal{N}(0, 1)$. Here, we let the mobile centroid $z^c_1$ be the topic distribution of a randomly sampled document, and $z^c_0$ be the average topic representation of all documents. We further assume that the assignment of a news item $x$ to a device $t \in \{0, 1\}$ is biased towards the device preferred for that item. We model this using the softmax function,

$$p(t = 1 \mid x) = \frac{e^{\kappa \cdot z(x)^\top z^c_1}}{e^{\kappa \cdot z(x)^\top z^c_0} + e^{\kappa \cdot z(x)^\top z^c_1}},$$

where $\kappa \ge 0$ determines the strength of the bias.
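The outcome and assignment model above can be sketched as follows. This is a minimal illustration under stated assumptions, not the code used to generate the News dataset: the function names and the toy topic vectors are hypothetical, the topic model is not trained here, and the outcome is assumed to scale the sum of both similarity terms by $C$ before adding the noise.

```python
import math
import random


def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def propensity_mobile(z, z_c0, z_c1, kappa):
    """p(t = 1 | x): softmax over kappa-scaled similarities to the centroids."""
    a0 = kappa * dot(z, z_c0)
    a1 = kappa * dot(z, z_c1)
    m = max(a0, a1)  # subtract the max for numerical stability
    e0, e1 = math.exp(a0 - m), math.exp(a1 - m)
    return e1 / (e0 + e1)


def mean_outcome(z, t, z_c0, z_c1, C):
    """Noise-free part of the outcome: C * (z.z_c0 + t * z.z_c1)."""
    return C * (dot(z, z_c0) + t * dot(z, z_c1))


def simulate_item(z, z_c0, z_c1, C=50.0, kappa=10.0, rng=None):
    """Sample a device assignment t and a noisy factual outcome y for one item."""
    rng = rng or random.Random(0)
    p1 = propensity_mobile(z, z_c0, z_c1, kappa)
    t = 1 if rng.random() < p1 else 0
    y = mean_outcome(z, t, z_c0, z_c1, C) + rng.gauss(0.0, 1.0)
    return t, y, p1


# Hypothetical 2-topic example: the item is closer to the mobile centroid.
z = [0.8, 0.2]
z_c0 = [0.5, 0.5]   # desktop centroid (average topic representation)
z_c1 = [0.9, 0.1]   # mobile centroid (topic distribution of one document)
t, y, p1 = simulate_item(z, z_c0, z_c1)
```

Setting `kappa=0` in `propensity_mobile` makes both exponents equal, so the assignment probability is exactly 0.5 regardless of the item.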
Note that $\kappa = 0$ implies a completely random device assignment.

We sample $n = 5000$ news items and outcomes according to this model, based on 50 LDA topics, trained on documents from the NY Times corpus (downloaded from UCI (Newman, 2008)). The data available to the algorithms are the raw word counts, from a vocabulary of $k = 3477$ words, selected as the union of the 100 most probable words in each topic. We set the scaling parameters to $C = 50$, $\kappa = 10$ and sample 50 realizations for evaluation. Figure 3 shows a visualization of the outcome and device assignments for a sample of 500 documents. Note that the device assignment becomes increasingly random, and the outcome lower, further away from the centroids.

Table 1. IHDP. Results and standard errors for 1000 repeated experiments. (Lower is better.) Proposed methods: BLR, BNN-4-0 and BNN-2-2. † (Chipman et al., 2010)

| Outcome model | Method | ITE | ATE | PEHE |
|---|---|---|---|---|
| Linear | OLS | 4.6 ± 0.2 | 0.7 ± 0.0 | 5.8 ± 0.3 |
| Linear | Doubly Robust | 3.0 ± 0.1 | 0.2 ± 0.0 | 5.7 ± 0.3 |
| Linear | LASSO+RIDGE | 2.8 ± 0.1 | 0.2 ± 0.0 | 5.7 ± 0.2 |
| Linear | BLR | 2.8 ± 0.1 | 0.2 ± 0.0 | 5.7 ± 0.3 |
| Linear | BNN-4-0 | 3.0 ± 0.0 | 0.3 ± 0.0 | 5.6 ± 0.3 |
| Non-linear | NN-4 | 2.0 ± 0.0 | 0.5 ± 0.0 | 1.9 ± 0.1 |
| Non-linear | BART† | 2.1 ± 0.2 | 0.2 ± 0.0 | 1.7 ± 0.2 |
| Non-linear | BNN-2-2 | 1.7 ± 0.0 | 0.3 ± 0.0 | 1.6 ± 0.1 |

Table 2. News. Results and standard errors for 50 repeated experiments. (Lower is better.) Proposed methods: BLR, BNN-4-0 and BNN-2-2. † (Chipman et al., 2010)

| Outcome model | Method | ITE | ATE | PEHE |
|---|---|---|---|---|
| Linear | OLS | 3.1 ± 0.2 | 0.2 ± 0.0 | 3.3 ± 0.2 |
| Linear | Doubly Robust | 3.1 ± 0.2 | 0.2 ± 0.0 | 3.3 ± 0.2 |
| Linear | LASSO+RIDGE | 2.2 ± 0.1 | 0.6 ± 0.0 | 3.4 ± 0.2 |
| Linear | BLR | 2.2 ± 0.1 | 0.6 ± 0.0 | 3.3 ± 0.2 |
| Linear | BNN-4-0 | 2.1 ± 0.0 | 0.3 ± 0.0 | 3.4 ± 0.2 |
| Non-linear | NN-4 | 2.8 ± 0.0 | 1.1 ± 0.0 | 3.8 ± 0.2 |
| Non-linear | BART† | 5.8 ± 0.2 | 0.2 ± 0.0 | 3.2 ± 0.2 |
| Non-linear | BNN-2-2 | 2.0 ± 0.0 | 0.3 ± 0.0 | 2.0 ± 0.1 |

6.3. Results

The results of the IHDP and News experiments are presented in Table 1 and Table 2 respectively. We see that, in general, the non-linear methods perform better in terms of individual prediction (ITE, PEHE). Further, we see that our proposed balancing neural network BNN-2-2 performs the best on both datasets in terms of estimating the ITE and PEHE, and is competitive on average treatment effect, ATE. Particularly noteworthy is the comparison with the network without balance penalty, NN-4. These results indicate that our proposed regularization can help avoid overfitting the representation to the factual outcome. Figure 4 plots the performance of BNN-2-2 for various imbalance penalties $\alpha$. The valley in the region $\alpha = 1$, and the fact that we don't experience a loss in performance for smaller values of $\alpha$, show that penalizing imbalance in the representation $\Phi$ has the desired effect.

Figure 4. Error in estimated treatment effect (ITE, PEHE) and counterfactual response (RMSE) on the IHDP dataset. Sweep over $\alpha$ for the BNN-2-2 neural network model. (Two panels over the imbalance penalty $\alpha$ on a log scale: one shows PEHE, ITE and ITE (BART); the other shows factual RMSE, counterfactual RMSE and counterfactual RMSE (BART).)

For the linear methods, we see that the two variable selection approaches, our proposed BLR method and LASSO+RIDGE, work the best in terms of estimating ITE. We would like to emphasize that LASSO+RIDGE is a very strong baseline and it's exciting that our theory-guided method is competitive with this approach.
On News, BLR and LASSO+RIDGE perform equally well yet again, although this time with qualitatively different results, as they do not select the same variables. Interestingly, BNN-4-0, BLR and LASSO+RIDGE all perform better on News than the standard neural network, NN-4. The performance of BART on News is likely hurt by the dimensionality of the dataset, and could improve with hyperparameter tuning.

7. Conclusion

As machine learning is becoming a major tool for researchers and policy makers across different fields such as healthcare and economics, causal inference becomes a crucial issue for the practice of machine learning. In this paper we focus on counterfactual inference, which is a widely applicable special case of causal inference. We cast counterfactual inference as a type of domain adaptation problem, and derive a novel way of learning representations suited for this problem.

Our models rely on a novel type of regularization criterion: learning balanced representations, representations which have similar distributions among the treated and untreated populations. We show that trading off a balancing criterion with standard data fitting and regularization terms is both practically and theoretically prudent.

Open questions which remain are how to generalize this method for cases where more than one treatment is in question, deriving better optimization algorithms and using richer discrepancy measures.

Acknowledgements

DS and US were supported by NSF CAREER award #1350965.

References

Austin, Peter C. An introduction to propensity score methods for reducing the effects of confounding in observational studies. Multivariate behavioral research, 46(3):399–424, 2011.

Bang, Heejung and Robins, James M. Doubly robust estimation in missing data and causal inference models. Biometrics, 61(4):962–973, 2005.
Ben-David, Shai, Blitzer, John, Crammer, Koby, Pereira, Fernando, et al. Analysis of representations for domain adaptation. Advances in neural information processing systems, 19:137, 2007.

Bengio, Yoshua, Courville, Aaron, and Vincent, Pierre. Representation learning: A review and new perspectives. Pattern Analysis and Machine Intelligence, IEEE Transactions on, 35(8):1798–1828, 2013.

Beygelzimer, Alina, Langford, John, Li, Lihong, Reyzin, Lev, and Schapire, Robert E. Contextual bandit algorithms with supervised learning guarantees. arXiv preprint arXiv:1002.4058, 2010.

Bottou, Léon, Peters, Jonas, Quinonero-Candela, Joaquin, Charles, Denis X, Chickering, D Max, Portugaly, Elon, Ray, Dipankar, Simard, Patrice, and Snelson, Ed. Counterfactual reasoning and learning systems: The example of computational advertising. The Journal of Machine Learning Research, 14(1):3207–3260, 2013.

Chernozhukov, Victor, Fernández-Val, Iván, and Melly, Blaise. Inference on counterfactual distributions. Econometrica, 81(6):2205–2268, 2013.

Chipman, Hugh and McCulloch, Robert. BayesTree: Bayesian additive regression trees. https://cran.r-project.org/package=BayesTree/, 2016. Accessed: 2016-01-30.

Chipman, Hugh A, George, Edward I, and McCulloch, Robert E. Bart: Bayesian additive regression trees. The Annals of Applied Statistics, pp. 266–298, 2010.

Cortes, Corinna and Mohri, Mehryar. Domain adaptation and sample bias correction theory and algorithm for regression. Theoretical Computer Science, 519:103–126, 2014.

Daume III, Hal and Marcu, Daniel. Domain adaptation for statistical classifiers. Journal of Artificial Intelligence Research, pp. 101–126, 2006.

Dorie, Vincent. NPCI: Non-parametrics for causal inference. https://github.com/vdorie/npci, 2016. Accessed: 2016-01-30.

Dudík, Miroslav, Langford, John, and Li, Lihong. Doubly robust policy evaluation and learning. arXiv preprint arXiv:1103.4601, 2011.
Gani, Yaroslav, Ustinova, Evgeniya, Ajakan, Hana, Germain, Pascal, Larochelle, Hugo, Laviolette, François, Marchand, Mario, and Lempitsky, Victor. Domain-adversarial training of neural networks. arXiv preprint arXiv:1505.07818, 2015.

Gelman, Andrew and Hill, Jennifer. Data analysis using regression and multilevel/hierarchical models. Cambridge University Press, 2006.

Gretton, Arthur, Borgwardt, Karsten M., Rasch, Malte J., Schölkopf, Bernhard, and Smola, Alexander. A kernel two-sample test. J. Mach. Learn. Res., 13:723–773, March 2012. ISSN 1532-4435.

Hill, Jennifer L. Bayesian nonparametric modeling for causal inference. Journal of Computational and Graphical Statistics, 20(1), 2011.

Jiang, Jing. A literature survey on domain adaptation of statistical classifiers. Technical report, University of Illinois at Urbana-Champaign, 2008.

Kang, Joseph D Y and Schafer, Joseph L. Demystifying double robustness: A comparison of alternative strategies for estimating a population mean from incomplete data. Statistical science, pp. 523–539, 2007.

Langford, John, Li, Lihong, and Dudík, Miroslav. Doubly robust policy evaluation and learning. In Proceedings of the 28th International Conference on Machine Learning (ICML-11), pp. 1097–1104, 2011.

Lewis, David. Causation. The journal of philosophy, pp. 556–567, 1973.

Louizos, Christos, Swersky, Kevin, Li, Yujia, Welling, Max, and Zemel, Richard. The variational fair auto encoder. arXiv preprint, 2015.

Mansour, Yishay, Mohri, Mehryar, and Rostamizadeh, Afshin. Domain adaptation: Learning bounds and algorithms. arXiv preprint arXiv:0902.3430, 2009.

Morgan, Stephen L and Winship, Christopher. Counterfactuals and causal inference. Cambridge University Press, 2014.

Newman, David. Bag of words data set. https://archive.ics.uci.edu/ml/datasets/Bag+of+Words, 2008.

Pearl, Judea. Causality. Cambridge university press, 2009.
Pearl, Judea. Invited commentary: understanding bias amplification. American journal of epidemiology, 174(11):1223–1227, 2011.

Prentice, Ross. Use of the logistic model in retrospective studies. Biometrics, pp. 599–606, 1976.

Robins, James M, Hernan, Miguel Angel, and Brumback, Babette. Marginal structural models and causal inference in epidemiology. Epidemiology, pp. 550–560, 2000.

Rosenbaum, Paul R. Observational studies. Springer, 2002.

Rosenbaum, Paul R. Design of Observational Studies. Springer Science & Business Media, 2009.

Rosenbaum, Paul R and Rubin, Donald B. The central role of the propensity score in observational studies for causal effects. Biometrika, 70(1):41–55, 1983.

Rubin, Donald B. Estimating causal effects of treatments in randomized and nonrandomized studies. Journal of educational Psychology, 66(5):688, 1974.

Rubin, Donald B. Causal inference using potential outcomes. Journal of the American Statistical Association, 2011.

Schölkopf, B., Janzing, D., Peters, J., Sgouritsa, E., Zhang, K., and Mooij, J. On causal and anticausal learning. In Proceedings of the 29th International Conference on Machine Learning, pp. 1255–1262, New York, NY, USA, 2012. Omnipress.

Strehl, Alex, Langford, John, Li, Lihong, and Kakade, Sham M. Learning from logged implicit exploration data. In Advances in Neural Information Processing Systems, pp. 2217–2225, 2010.

Sutton, Richard S and Barto, Andrew G. Reinforcement learning: An introduction, volume 1. MIT press Cambridge, 1998.

Swaminathan, Adith and Joachims, Thorsten. Batch learning from logged bandit feedback through counterfactual risk minimization. Journal of Machine Learning Research, 16:1731–1755, 2015.

Tian, Lu, Alizadeh, Ash A, Gentles, Andrew J, and Tibshirani, Robert. A simple method for estimating interactions between a treatment and a large number of covariates. Journal of the American Statistical Association, 109(508):1517–1532, 2014.

van der Laan, Mark J and Petersen, Maya L. Causal effect models for realistic individualized treatment and intention to treat rules. The International Journal of Biostatistics, 3(1), 2007.

Wager, Stefan and Athey, Susan. Estimation and inference of heterogeneous treatment effects using random forests. arXiv preprint arXiv:1510.04342, 2015.

Weiss, Jeremy C, Kuusisto, Finn, Boyd, Kendrick, Lui, Jie, and Page, David C. Machine learning for treatment assignment: Improving individualized risk attribution. American Medical Informatics Association Annual Symposium, 2015.

Zemel, Rich, Wu, Yu, Swersky, Kevin, Pitassi, Toni, and Dwork, Cynthia. Learning fair representations. In Proceedings of the 30th International Conference on Machine Learning (ICML-13), pp. 325–333, 2013.

A. Proof of Theorem 1

We use a result implicit in the proof of Theorem 2 of Cortes & Mohri (2014), for the case where $\mathcal{H}$ is the set of linear hypotheses over a fixed representation $\Phi$. Cortes & Mohri (2014) state their result for the case of domain adaptation: in our case, the factual distribution is the so-called "source domain", and the counterfactual distribution is the "target domain".

Theorem A1. [Cortes & Mohri (2014)] Using the notation and assumptions of Theorem 1, for both $Q = P^F$ and $Q = P^{CF}$:

$$\frac{\lambda}{\mu r}\left(L_Q(\hat{\beta}^F(\Phi)) - L_Q(\hat{\beta}^{CF}(\Phi))\right)^2 \le \mathrm{disc}_{\mathcal{H}_l}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi) + \min_{h \in \mathcal{H}_l} \frac{1}{n}\sum_{i=1}^{n}\left(\left|\hat{y}^F_i(\Phi, h) - y^F_i\right| + \left|\hat{y}^{CF}_i(\Phi, h) - y^{CF}_i\right|\right) \tag{9}$$

In their work, Cortes & Mohri (2014) assume that $\mathcal{H}$ is a reproducing kernel Hilbert space (RKHS) for a universal kernel, and they do not consider the role of the representation $\Phi$. Since the RKHS hypothesis space they use is much stronger than the linear space $\mathcal{H}_l$, it is often reasonable to assume that the second term in the bound (9) is small.
We however cannot make this assumption, and therefore we wish to explicitly bound the term $\min_{h \in \mathcal{H}_l} \frac{1}{n}\sum_{i=1}^{n}\left(\left|\hat{y}^F_i(\Phi, h) - y^F_i\right| + \left|\hat{y}^{CF}_i(\Phi, h) - y^{CF}_i\right|\right)$, while using the fact that we have control over the representation $\Phi$.

Lemma 1. Let $\{(x_i, t_i, y^F_i)\}_{i=1}^{n}$, $x_i \in \mathcal{X}$, $t_i \in \{0, 1\}$ and $y^F_i \in \mathcal{Y} \subseteq \mathbb{R}$. We assume that $\mathcal{X}$ is a metric space with metric $d$, and that there exist two functions $Y_0(x)$ and $Y_1(x)$ such that $y^F_i = t_i Y_1(x_i) + (1 - t_i) Y_0(x_i)$, and in addition we define $y^{CF}_i = (1 - t_i) Y_1(x_i) + t_i Y_0(x_i)$. We further assume that the functions $Y_0(x)$ and $Y_1(x)$ are Lipschitz continuous with constants $K_0$ and $K_1$ respectively, such that $d(x_a, x_b) \le c \implies |Y_t(x_a) - Y_t(x_b)| \le K_t c$. Define $j(i) \in \arg\min_{j \in \{1 \ldots n\} \text{ s.t. } t_j = 1 - t_i} d(x_j, x_i)$ to be the nearest neighbor of $x_i$ among the group that received the opposite treatment from unit $i$, for all $i \in \{1 \ldots n\}$. Let $d_{i,j} = d(x_i, x_j)$. For any $b \in \mathcal{Y}$ and $h \in \mathcal{H}$:

$$\left|b - y^{CF}_i\right| \le \left|b - y^F_{j(i)}\right| + K_{1 - t_i}\, d_{i,j(i)}$$

Proof. By the triangle inequality, we have that:

$$\left|b - y^{CF}_i\right| \le \left|b - y^F_{j(i)}\right| + \left|y^F_{j(i)} - y^{CF}_i\right|.$$

By the Lipschitz assumption on $Y_{1 - t_i}$, and since $d(x_i, x_{j(i)}) \le d_{i,j(i)}$, we obtain that

$$\left|y^F_{j(i)} - y^{CF}_i\right| = \left|Y_{1 - t_i}(x_{j(i)}) - Y_{1 - t_i}(x_i)\right| \le d_{i,j(i)} K_{1 - t_i}.$$

By definition $y^{CF}_i = Y_{1 - t_i}(x_i)$. In addition, by definition of $j(i)$, we have $t_{j(i)} = 1 - t_i$, and therefore $y^F_{j(i)} = Y_{1 - t_i}(x_{j(i)})$, proving the equality. The inequality is an immediate consequence of the Lipschitz property.

We restate Theorem 1 and prove it.

Theorem 1. For a sample $\{(x_i, t_i, y^F_i)\}_{i=1}^{n}$, $x_i \in \mathcal{X}$, $t_i \in \{0, 1\}$ and $y^F_i \in \mathcal{Y}$, recall that $y^F_i = t_i Y_1(x_i) + (1 - t_i) Y_0(x_i)$, and in addition define $y^{CF}_i = (1 - t_i) Y_1(x_i) + t_i Y_0(x_i)$. For a given representation function $\Phi : \mathcal{X} \to \mathbb{R}^d$, let $\hat{P}^F_\Phi = (\Phi(x_1), t_1), \ldots, (\Phi(x_n), t_n)$ and $\hat{P}^{CF}_\Phi = (\Phi(x_1), 1 - t_1), \ldots, (\Phi(x_n), 1 - t_n)$. We assume that $\mathcal{X}$ is a metric space with metric $d$, and that the potential outcome functions $Y_0(x)$ and $Y_1(x)$ are Lipschitz continuous with constants $K_0$ and $K_1$ respectively, such that $d(x_a, x_b) \le c \implies |Y_t(x_a) - Y_t(x_b)| \le K_t c$. Let $\mathcal{H}_l \subset \mathbb{R}^{d+1}$ be the space of linear functions, and for $\beta \in \mathcal{H}_l$, let $L_P(\beta) = \mathbb{E}_{(x,t,y) \sim P}[L(\beta(x, t), y)]$ be the expected loss of $\beta$ over distribution $P$. Let $r = \max\left(\mathbb{E}_{(x,t) \sim P^F}\left[\|[\Phi(x), t]\|_2\right], \mathbb{E}_{(x,t) \sim P^{CF}}\left[\|[\Phi(x), t]\|_2\right]\right)$. For $\lambda > 0$, let $\hat{\beta}^F(\Phi) = \arg\min_{\beta \in \mathcal{H}_l} L_{\hat{P}^F_\Phi}(\beta) + \lambda \|\beta\|_2^2$, and $\hat{\beta}^{CF}(\Phi)$ similarly for $\hat{P}^{CF}_\Phi$, i.e. $\hat{\beta}^F(\Phi)$ and $\hat{\beta}^{CF}(\Phi)$ are the ridge regression solutions for the factual and counterfactual empirical distributions, respectively. Let $\hat{y}^F_i(\Phi, h) = h^\top [\Phi(x_i), t_i]$ and $\hat{y}^{CF}_i(\Phi, h) = h^\top [\Phi(x_i), 1 - t_i]$ be the outputs of the hypothesis $h \in \mathcal{H}_l$ over the representation $\Phi(x_i)$ for the factual and counterfactual settings of $t_i$, respectively. Finally, for each $i \in \{1 \ldots n\}$, let $j(i) \in \arg\min_{j \in \{1 \ldots n\} \text{ s.t. } t_j = 1 - t_i} d(x_j, x_i)$ be the nearest neighbor of $x_i$ among the group that received the opposite treatment from unit $i$. Let $d_{i,j} = d(x_i, x_j)$. Then for both $Q = P^F$ and $Q = P^{CF}$ we have:

$$\frac{\lambda}{\mu r}\left(L_Q(\hat{\beta}^F(\Phi)) - L_Q(\hat{\beta}^{CF}(\Phi))\right)^2 \le \mathrm{disc}_{\mathcal{H}_l}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi) + \min_{h \in \mathcal{H}_l} \frac{1}{n}\sum_{i=1}^{n}\left(\left|\hat{y}^F_i(\Phi, h) - y^F_i\right| + \left|\hat{y}^{CF}_i(\Phi, h) - y^{CF}_i\right|\right) \tag{10}$$

$$\le \mathrm{disc}_{\mathcal{H}_l}(\hat{P}^F_\Phi, \hat{P}^{CF}_\Phi) + \min_{h \in \mathcal{H}_l} \frac{1}{n}\sum_{i=1}^{n}\left(\left|\hat{y}^F_i(\Phi, h) - y^F_i\right| + \left|\hat{y}^{CF}_i(\Phi, h) - y^F_{j(i)}\right|\right) + \frac{K_0}{n}\sum_{i : t_i = 1} d_{i,j(i)} + \frac{K_1}{n}\sum_{i : t_i = 0} d_{i,j(i)}. \tag{11}$$

Proof. Inequality (10) is immediate by Theorem A1. In order to prove inequality (11), we apply Lemma 1, setting $b = \hat{y}^{CF}_i$ and summing over $i$.
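The summation step can be spelled out as follows. This is a sketch that uses only the quantities defined in Lemma 1 and Theorem 1. For a fixed $h \in \mathcal{H}_l$, applying Lemma 1 to each unit with $b = \hat{y}^{CF}_i(\Phi, h)$ and averaging over $i$ gives:

```latex
\begin{align*}
\frac{1}{n}\sum_{i=1}^{n} \left|\hat{y}^{CF}_i(\Phi,h) - y^{CF}_i\right|
  &\le \frac{1}{n}\sum_{i=1}^{n} \left( \left|\hat{y}^{CF}_i(\Phi,h) - y^{F}_{j(i)}\right|
       + K_{1-t_i}\, d_{i,j(i)} \right) \\
  &= \frac{1}{n}\sum_{i=1}^{n} \left|\hat{y}^{CF}_i(\Phi,h) - y^{F}_{j(i)}\right|
     + \frac{K_0}{n}\sum_{i:\,t_i=1} d_{i,j(i)}
     + \frac{K_1}{n}\sum_{i:\,t_i=0} d_{i,j(i)}.
\end{align*}
```

The second line splits the sum by treatment group, since $K_{1-t_i} = K_0$ when $t_i = 1$ and $K_{1-t_i} = K_1$ when $t_i = 0$. Adding the factual term $\frac{1}{n}\sum_{i}|\hat{y}^F_i(\Phi,h) - y^F_i|$ to both sides and taking the minimum over $h$ yields (11), because the two distance sums do not depend on $h$.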
