Performance Based Cost Functions for End-to-End Speech Separation

Recent neural network strategies for source separation attempt to model audio signals by processing their waveforms directly. Mean squared error (MSE) that measures the Euclidean distance between waveforms of denoised speech and the ground-truth spee…

Authors: Shrikant Venkataramani, Ryley Higa, Paris Smaragdis

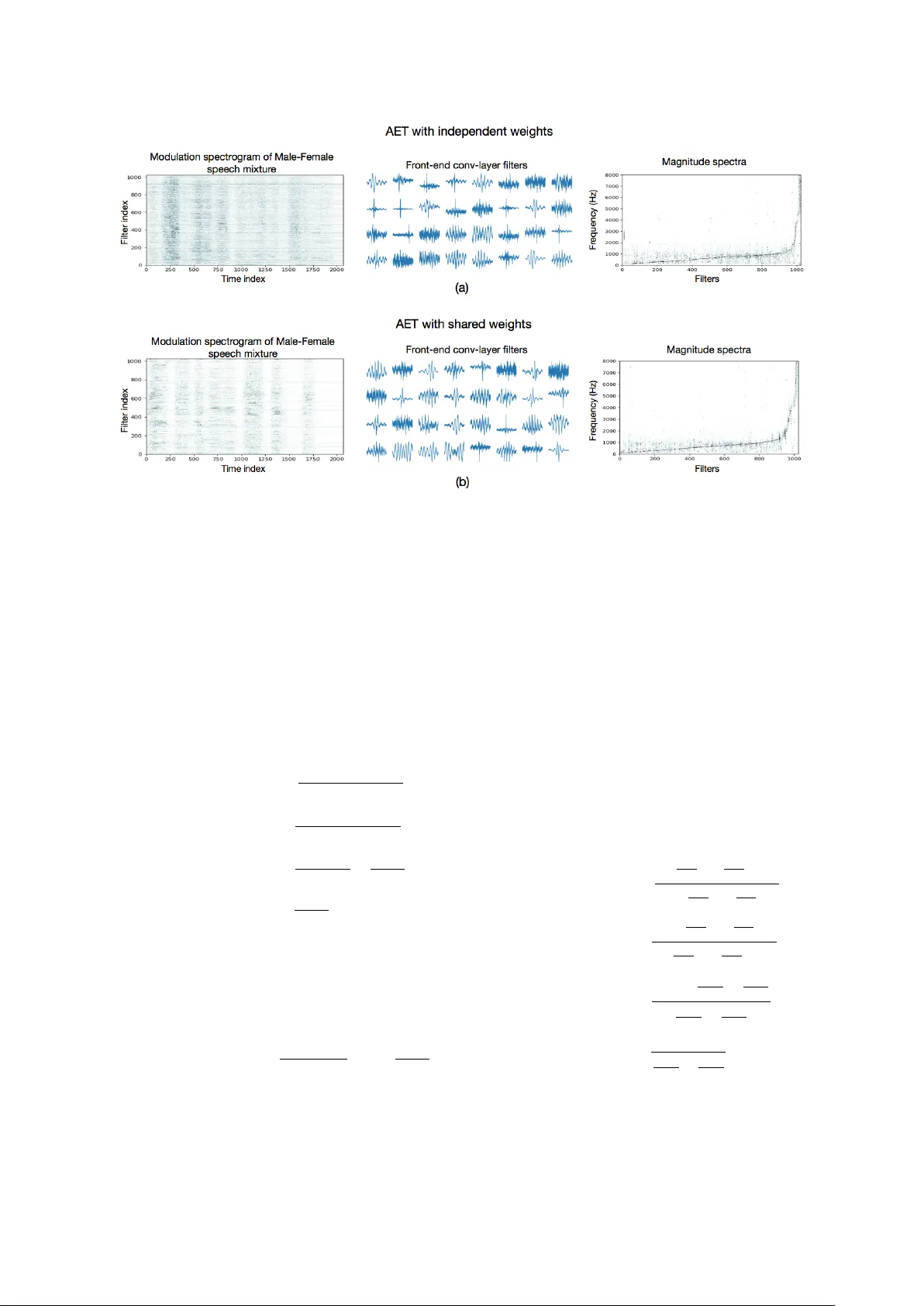

Performance Based Cost Functions for End-to-End Speech Separation Shrikant V enkataramani ∗ † , Ryley Higa ∗ † , Paris Smaragdis † ‡ † Univ ersity of Illinois at Urbana-Champaign ‡ Adobe Research ∗ Authors with equal contribution {svnktrm2, higa2}@illinois.edu Abstract —Recent neural network strategies f or source separation attempt to model audio signals by processing their wa veforms dir ectly . Mean squared error (MSE) that measures the Euclidean distance between wavef orms of denoised speech and the ground-truth speech, has been a natural cost-function for these approaches. However , MSE is not a perceptually motivated measure and may result in lar ge perceptual discrepancies. In this paper , we propose and experiment with new loss functions for end- to-end source separation. These loss functions are moti- vated by BSS_Eval and perceptual metrics like source to distortion ratio (SDR), source to interference ratio (SIR), source to artifact ratio (SAR) and short-time objective intelligibility ratio (STOI). This enables the flexibility to mix and match these loss functions depending upon the requir ements of the task. Subjective listening tests rev eal that combinations of the proposed cost functions help achieve superior separation performance as compared to stand-alone MSE and SDR costs. Index T erms —End-to-end speech separation, Deep learning, Cost functions I . I N T RO D U C T I O N Single channel source separation deals with the problem of extracting the speaker or sound of interest from a mixture consisting of multiple simultaneous speakers or audio sources. In order to identify the source, we assume the av ailability of a few unmixed training examples. These examples are used to build representativ e models for the corresponding source. W ith the dev elopment of deep learning, sev eral neural network architectures hav e been proposed to solve the supervised single-channel source separation problem [1], [2], [3]. The latest deep-learning approaches to source separation have started to focus on performing separation by operating directly on the mixture wa veforms [4], [5], [6], [7]. T o train these end-to-end models, the papers restrict themselves to minimizing a mean-squared error loss [4], [5], [6], an L1 loss [7] or a source-to-distortion ratio [4], [5] based cost-function between the separated speech and the corresponding ground-truth. A potential direction for improving end-to-end models is to use loss functions This work was supported by NSF grant 1453104 that capture the salient aspects of source separation. Predominantly , the BSS_Eval metrics source- to-Distortion ratio (SDR), source-to-Interference ratio (SIR), source-to-Artifact ratio (SAR) [8], and short-time objecti ve intelligibility (STOI) [9] hav e been used to ev aluate the performance of source separation algorithms. Fu et.al., hav e proposed an end- to-end neural network that captures the effect of STOI in performing source separation [10]. Alternativ ely , we could also develop suitable cost-functions for end- to-end source separation by interpreting these metrics as suitable loss functions themselves. Proposing and ev aluating these new cost-functions for source separation would also allow us mix and match a combination of these metrics to suit our requirements and improv e source separation performance, for any neural network architecture. Section II pro vides a description of the neur al network used for end-to-end source separation. Section III presents the approach to interpret the BSS_Eval and STOI metrics as loss functions for end-to-end source separation. W e ev aluate our cost functions by deploying subjectiv e listening tests. The details of our experiments, subjectiv e listening tests and the corresponding results are discussed in section IV and we conclude in section V. I I . E N D - T O - E N D S E PA R A T I O N W e first describe the end-to-end neural network architecture used for source separation. W e begin with the description of a short-time Fourier transform (STFT) based source separation neural network. This network can be transformed into an end-to-end separation network by replacing the STFT analysis and synthesis operations by their neural network alternatives [4]. Figure 1 (a) shows the architecture of a source separation network [4]. The flow of data through the network can be explained by the following sequence of steps. The mixture is first transformed into its equiv alent time-frequency (TF) representation using the STFT . The TF representation is then split into Fig. 1. (a) Block diagram of a source separation network using STFT as the front-end. (b) Block diagram of the equiv alent end-to-end source separation network using an auto-encoder transform as the front-end. its magnitude and phase components. The magnitude spectrogram of the mixture is then fed to the separation neural network. This network is trained to estimate the magnitude spectrogram of the source of interest from the magnitude spectrogram of the mixture. The estimated magnitude spectrogram is multipled by the phase of the mixture and transformed into the time domain by the ov erlap-and-add approach to in vert the STFT . As described in [4], we can transform this net- work into an end-to-end source separation network by replacing the STFT blocks by corresponding neural networks, with the following sequence of steps. (i) The STFT and inv erse STFT operations can be replaced by 1-D con volution and transposed con volution layers. This would enable the network to learn an adaptiv e TF representation ( X ) directly from the wa veform of the mixture. (ii) The front-end con v olutional layer needs to be follo wed by a smoothing con v olutional layer . This is done to obtain a smooth modulation spectrogram ( M ) that is similar to STFT magnitude spectrogram. The carrier component obtained using the element-wise division operation, P = X / M in- corporates the rapid variations of the adaptiv e TF representation. W e will refer to this front end as the auto-encoder transform (AET). Figure 1(b) giv es the block diagram of the end-to-end separation network using an AET front-end. A. Examining the adaptive bases W e can understand the performance of end-to-end source separation better by examining the learned TF bases and TF representations. Figure 2 plots the modulation spectrograms of a male-female speech mixture, the first 32 TF bases and their corresponding magnitude spectra. W e rank the TF bases according to their dominant frequency component. W e give these plots for two cases viz., the analysis con v olution and synthesis transposed-con volution layers are inde- pendent (top), the analysis con v olution and synthe- sis transposed-con v olution layers share their weights (bottom). W e observe that, similar to STFT bases, the adaptiv e bases are frequency selectiv e in nature. How- ev er , the adaptive bases are concentrated at the lower frequencies and spread-out at the higher frequencies similar to the filters of the Mel filter bank. I I I . P E R F O R M A N C E BA S E D C O S T - F U N C T I O N S Source separation approaches hav e traditionally re- lied on the use of magnitude spectrograms as the choice of TF representation. Magnitude spectrograms hav e been interpreted as probability distribution func- tions (pdf) drawn from random v ariables of varying characteristics. This moti vated the use of sev eral cost functions like the mean squared error [11], Kullback- Leibler di vergence [11], Itakura-Saito div ergence [12], Bregman div ergences [13] to be used for source sep- aration. Since these interpretations do not extend to wa veforms, there is a need to propose and experiment with additional cost-functions suitable for use in the wa veform domain. As stated before, the BSS_Eval metrics (SDR, SIR, SAR) and STOI are the most commonly used metrics to e valuate the performance of source separation algorithms. W e now discuss how we can interpret these metrics as suitable loss functions for our neural network. A. BSS_Eval based cost-functions In the absence of external noise, the distortions present in the output of a source separation algorithm can be categorized as interference and artifacts. In- terference refers to the lingering effects of the other sources on the separated source. Thus, source-to- interference ratio (SIR) is a metric that captures the ability of the algorithm to eliminate the other sources and preserve the source of interest. The processing steps in an algorithm may introduce arifacts or addi- tional sounds in the separation results that do not exist in the original sources. Source-to-artifact ratio (SAR) measures the ability of the network to produce high quality results without introducing additional artifacts. The unwanted non-linear processing ef fects that may occur due to a neural network are also incorporated by SAR. These metrics can be combined into source- to-distortion ratio (SDR), which captures the overall separation quality of the algorithm. W e denote the output of the network by x . This output should ideally be equal to the target source y and completely suppress the interfering source z . W e note that notations refer to the time-domain wa veforms of each signal. Thus, y and z are constants with respect to any optimization Fig. 2. (a) An example of the modulation spectrogram for a male-female speech mixture (left), adaptive bases i.e., filters of analysis con volutional layer (middle), Normalized magnitude spectra of adaptive bases (right) for independent analysis and synthesis layers (top). (b) An example of the modulation spectrogram for a male-female speech mixture (left), adaptive bases i.e., filters of analysis conv olutional layer (middle), Normalized magnitude spectra of adaptiv e bases (right) for shared analysis and synthesis layers (bottom). The orthogonal- AET uses a transposed version of the analysis filters for the synthesis conv olutional layer . The filters are ordered according to their dominant frequency component (from low to high). In the middle subplots, we show a subset of the first 32 filters. The adaptiv e bases concentrate on the lower frequencies and spread-out at the higher frequencies. These plots have been obtained using SDR as the cost function. (max or min) applied on the network output x . W e will also use the following definition of the inner-product between vectors as, h xy i = x T · y Maximizing SDR with respect to x can be giv en as, max SDR ( x , y ) = max h xy i 2 h yy ih xx i − h xy i 2 ≡ min h yy ih xx i − h xy i 2 h xy i 2 = min h yy ih xx i h xy i 2 − h xy i 2 h xy i 2 ∝ min h xx i h xy i 2 Thus, maximizing the SDR is equi valent to maxi- mizing the correlation between x and y , while produc- ing the solution with least energy . Maximizing the SIR cost function can be giv en as, max SIR ( x , y , z ) = max h zz i 2 h xy i 2 h yy i 2 h xz i 2 ≡ min h xz i 2 h xy i 2 Maximizing SIR is equiv alent to maximizing the correlation between the network output x and target source y while minimizing the correlation between x and interference z . Over informal listening tests, we identified that a network trained purely on SIR, maximizes time-frequency (TF) bins where the target is present and the interference is not present and minimizes TF bins where both sources are present or bins where the interference dominates the target. This results in a network output consisting of sinusoidal tones near TF bins dominated by the target source. For the SAR cost function, we assume that the clean target source y and the clean interference z are orthogonal in time. This allo ws for the following simplification: max SAR ( x , y , z ) = max k h xy i h yy i y + h xz i h zz i z k 2 k x − h xy i h yy i y − h xz i h zz i z k 2 ≡ min k x − h xy i h yy i y − h xz i h zz i z k 2 k h xy i h yy i y + h xz i h zz i z k 2 = min h xx i − h xy i 2 h yy i − h xz i 2 h zz i h xy i 2 h yy i + h xz i 2 h zz i ∝ min h xx i h xy i 2 h yy i + h xz i 2 h zz i From the equations, we see that SAR does not dis- tinguish between the tar get source and the interference. Consequently , optimizing the SAR cost function does not directly optimize the quality of separation. The purpose of optimizing the SAR cost function should be to reduce audio artifacts in conjunction with a loss function that penalizes the presence of interference such as the SIR. In practice, a network that optimizes SAR directly should apply the identity transformation to the input mixture. B. STOI based cost function The drawback of BSS_Eval metrics is that they fail to incorporate “intelligibility” of the separated signal. Short-time objecti ve intelligibility (STOI) [9] is a metric that correlates well with subjecti ve speech intelligibility . STOI accesses the short-time correlation between TF representations of target speech y and network output x . W e now describe the sequence of steps inv olved in interpreting STOI as a cost-function. The network output x and tar get source y wa veforms are first transformed into the TF domain using an STFT step. T o do so, we use Hanning windowed frames of 256 samples zero-padded to a size of 512 samples each, and a hop of 50%. This STFT step was implemented using a 1-D con volution operation. The resulting magnitude spectrograms are transformed into an octave-band representation by grouping frequencies using 15 one-third octave bands reaching upto 10000 Hz. The resulting representations will be denoted as ˆ X and ˆ Y , corresponding to x and y respecti vely . The representation ˆ X j , m corresponds to the j th one- third octav e band at the m th time frame. This was implemented as a matrix multiplication step applied on the magnitude spectrograms. Giv en the one-third octa ve band representation ˆ X and ˆ Y , we constructed new vectors X j , m and Y j , m consisting of N = 30 pre vious frames before the m th time frame. W e can write this explicitly as X j , m = [ ˆ X j , m − N + 1 , ˆ X j , m − N + 2 , ..., ˆ X j , m ] T Let X j , m ( n ) be the n th frame of vector X j , m . The octav e-band representation of the network output is then normalized and clipped to hav e the similar scale as the target source, which is denoted as ¯ X j , m . The clipping procedure clips the network output so that the signal-to-distortion ratio (SDR) is abov e β = − 15 d B . ¯ X j , m ( n ) = min k Y j , m k k X j , m k X j , m ( n ) , ( 1 + 10 − β / 20 ) Y j , m ( n ) W e then compute the intermediate intelligibility ma- trix denoted by d j , m by taking the correlation between ¯ X j , m and Y j , m . d j , m = ( ¯ X j , m − µ ¯ X j , m ) T ( Y j , m − µ Y j , m ) k ¯ X j , m − µ ¯ X j , m kk Y j , m − µ Y j , m k T o get the final STOI cost function, we take the av erage short-time correlation over M total time frames and J = 13 total one-third octave bands. STOI = 1 J M ∑ j , m d j , m It is clear by the procedure that maximizing the STOI cost function is equiv alent to maximizing the av erage short-time correlation between the TF representations for the target source and separation network output. I V . E X P E R I M E N T S Since the paper deals with interpreting source sepa- ration metrics as a cost function, it is not a reasonable approach to use the same metrics for their ev aluation. In this paper, we use subjectiv e listening tests targeted at e valuating the separation, artifacts and intelligibility of the separation results to compare the different loss functions. W e use the crowd-sourced audio quality ev aluation (CA QE) toolkit [14] to setup the listening tests over Amazon Mechanical Turk (AMT). The de- tails and results of our experiments follow . A. Experimental Setup For our experiments, we use the end-to-end network shown in figure 1(b). The separation was performed with a 1024 dimensional AET representation computed at a stride of 16 samples. A smoothing of 5 samples was applied by the smoothing conv olutional layer . The separation network consisted of 2 dense layers each followed by a softplus non-linarity . This network was trained using different proposed cost functions and their combinations. W e compare the cost-functions by ev aluating their performance on isolating the female speaker from a mixture comprising a male speaker and a female speaker , using the above end-to-end network. T o train the network, we randomly selected 15 male- female speaker pairs from the TIMIT database [15]. 10 pairs were used for training and the remaining 5 pairs were used for testing. Each speaker has 10 recorded sentences in the database. For each pair , the recordings were mixed at 0 dB. Thus, the training data consisted of 100 mixtures. The trained networks were compared on their separation performance on the 50 test sentences. Clearly , the test speakers were not a part of the training data to ensure that the network learns to separate female speech from a mixture of male and female speakers and does not memorize the speakers themselves. In the subjective listening tests we compare the performance of end-to-end source separation under the following cost functions: (i) Mean squared error (ii) SDR (iii) 0 . 75 × SDR + 0 . 25 × ST OI (iv) 0 . 5 × SDR + 0 . 5 × ST OI (v) 0 . 75 × SI R + 0 . 25 × SAR (vi) 0 . 5 × SI R + 0 . 5 × SAR (vii) 0 . 25 × SI R + 0 . 75 × SAR . These combinations were selected to understand the effects of individual cost functions on separation per- formance. W e scale the v alue of each cost-function to unity before starting the training procedure. This was done to control the weighting of terms in case of composite cost-functions. Fig. 3. Listening test scores for tasks (a) Preservation of target source. (b) Suppression of interfering sources. (c) Suppression of artifacts (d) Speech intelligibility over different cost-functions. The distribution of scores is presented in the form of a box-plot where, the solid line in the middle give the median value and the extremities of the box giv e the 25 th and 75 th percentile values. B. Evaluation Using CA QE ov er a web environment like AMT has been shown to giv e consistent results to listening tests performed in controlled lab en vironments [14]. Thus, we use the same approach for our listening tests. The details are briefly described below . 1) Recruiting Listeners: For the listening tasks, we recruited listeners on Amazon Mechanical T urk that were ov er the age of 18 and had no pre vious history of hearing impairment. Each listener had to pass a brief hearing test that consisted of identifying the number of sinusoidal tones within two segments of audio. If the listener failed to identify the correct number of tones within the audio clip in two attempts, the listener’ s response was rejected. For the listening tests, we recruited a total of 180 participants ov er AMT . 2) Subjective Listening T ests: W e assigned each of the accepted listeners to one of four ev aluation tasks. Each task asked listeners to rate the quality of separation based on one of four perceptual metrics: preservation of source, suppression of interference, ab- sence of additional artifacts, and speech intelligibility . The perceptual metrics such as preservation of source, suppression of interference, absence of additional arti- facts, and speech intelligibility directly correspond to objectiv e metrics such as SDR, SIR, SAR, and STOI respectiv ely . Accepted listeners were giv en the option to submit multiple ev aluations for each of the dif ferent tasks. For each task, we trained listeners by giving each listener an audio sample of the isolated target source as well as a mixture of the source and interfering speech. W e also provided 1-3 audio separation examples of poor quality and 1-3 audio examples of high quality according to the perceptual metric assigned to the listener . The audio files used to train the listener all had exceptionally high or low objective metrics (SDR, SIR, SAR, STOI) with respect to the pertaining task so that listeners could base their ratings in comparison to the best or worst separation examples. After training, the listeners were then asked to rate eight unlabelled, randomly ordered, separation samples from 0 to 100 based on the metric assigned. The isolated target source was included in the listener ev al- uation as a baseline. The other seven audio samples correspond to separation examples output by a neural network trained with different cost functions enlisted in section IV -A. C. Results and Discussion Figure 3 gives the results of the subjectiv e listening tests performed through AMT for each of the four tasks. The results are shown in the form of a bar-plot that shows the median value (solid line in the middle) and the 25-percentile and 75-percentile points (box boundaries). The vertical axis gi ves the distribution of listener-scores over the range (0-100) obtained from the tests. The horizontal axis shows the different cost-functions used for ev aluation, as listed in section IV -A. This also helps us to understand the nature of the proposed cost-functions. For example, figure 3(b) (bars 5,6,7) shows that incorporating the SIR term into the cost function explicitly , helps the network to suppress the interfering sources better . Similarly , the addition of a STOI term into the cost function improves the results in terms of speech intelligibility as seen in figure 3(d). It is also observed that adding STOI to the SDR cost-function helps in preserving the tar get source better (figure 3(a), bars 2,3 and 4). One possible reason for this could be that increasing the intelligibility of the separation results results in a perceptual notion of preserving the target source better . The BSS_Ev al cost functions appear to be comparable in terms of preserving the target source (figure 3(a), bars 2,5,6,7) and slightly better than MSE. In terms of artifacts in the separated source, SDR outperforms all the cost-functions, all of which seem to introduce a comparable level of artifacts into the separation results (figure 3(c)). The use of SAR in the cost-function does not seem to have fa vorable or adverse ef fects on the perception of artifacts on the separation results. V . C O N C L U S I O N A N D F U T U R E W O R K In this paper we hav e proposed and experimented with nov el cost-functions motiv ated by BSS_Eval and STOI metrics, for end-to-end source separation. W e hav e shown that these cost-functions capture different salient aspects of source-separation depending upon their characteristics. This enables the flexibility to use composite cost-functions that can potentially improv e the performance of existing source separation algorithms. R E F E R E N C E S [1] Y . Isik, J. L. Roux, Z. Chen, S. W atanabe, and J. R. Hershey , “Single-channel multi-speaker separation using deep cluster- ing, ” 2016. [2] Z. Chen, Y . Luo, and N. Mesgarani, “Deep attractor network for single-microphone speaker separation, ” in 2017 IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) , March 2017, pp. 246–250. [3] Y . Luo, Z. Chen, and N. Mesgarani, “Speaker-independent speech separation with deep attractor network, ” IEEE/ACM T ransactions on Audio, Speech, and Language Pr ocessing , vol. 26, no. 4, pp. 787–796, April 2018. [4] S. V enkataramani, J. Casebeer, and P . Smaragdis, “ Adapti ve front-ends for end-to-end source separation. ” [Online]. A v ailable: http://media.aau.dk/smc/wp- content/uploads/2017/ 12/ML4AudioNIPS17_paper_39.pdf [5] Y . Luo and N. Mesgarani, “T asnet: Time-domain audio separa- tion network for real-time single-channel speech separation, ” in Acoustics, Speech and Signal Processing (ICASSP), 2014 IEEE International Conference on . IEEE, 2018. [6] S. W . Fu, Y . Tsao, X. Lu, and H. Kawai, “Raw wa veform-based speech enhancement by fully conv olutional networks, ” in 2017 Asia-P acific Signal and Information Pr ocessing Association Annual Summit and Conference (APSIP A ASC) , Dec 2017. [7] D. Rethage, J. Pons, and X. Serra, “ A wavenet for speech denoising, ” 2018. [8] C. Févotte, R. Gribonv al, and E. V incent, “Bss_eval toolbox user guide–revision 2.0, ” 2005. [9] C. H. T aal, R. C. Hendriks, R. Heusdens, and J. Jensen, “ A short-time objective intelligibility measure for time-frequency weighted noisy speech, ” in Acoustics Speech and Signal Pr o- cessing (ICASSP), 2010 IEEE International Conference on . IEEE, 2010, pp. 4214–4217. [10] S.-W . Fu, Y . Tsao, X. Lu, and H. Kawai, “End-to-end wav e- form utterance enhancement for direct ev aluation metrics optimization by fully conv olutional neural networks, ” IEEE T ransactions on Audio, Speech, and Language Pr ocessing , 2018. [11] D. D. Lee and H. S. Seung, “ Algorithms for non-negati ve matrix factorization, ” in Advances in neural information pr o- cessing systems , 2001, pp. 556–562. [12] C. Févotte, N. Bertin, and J.-L. Durrieu, “Nonnegativ e matrix factorization with the itakura-saito diver gence: With applica- tion to music analysis, ” Neural computation , vol. 21, no. 3, pp. 793–830, 2009. [13] S. Sra and I. S. Dhillon, “Generalized nonnegativ e matrix approximations with bregman divergences, ” in Advances in neural information processing systems , 2006, pp. 283–290. [14] M. Cartwright, B. Pardo, G. J. Mysore, and M. Hoffman, “Fast and easy crowdsourced perceptual audio evaluation, ” in 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) , March 2016, pp. 619–623. [15] J. S. Garofolo, L. F . Lamel, J. G. F . William M Fisher, D. S. Pallett, N. L. Dahlgren, and V . Zue, “T imit acoustic phonetic continuous speech corpus, ” Philadelphia, 1993.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment