B.SAR - Blind SAR Data Focusing

Synthetic Aperture Radar (SAR) is a radar imaging technique in which the relative motion of the sensor is used to synthesize a very long antenna and obtain high spatial resolution. The scientific community's growing interest in simplifying SAR sensors and developing automatic systems that quickly produce sufficiently precise images is driven by the goal of building low-cost, lightweight SAR systems that can be carried by drones. Standard SAR raw data processing techniques assume uniform motion of the satellite along a rectilinear path and a fixed antenna beam pointing sideways, orthogonal to that path. Under the same hypotheses, a novel blind data focusing technique is presented that obtains good-quality images of the inspected area without using ancillary data. Although SAR data processing is a well-established imaging technology that has become fundamental in several fields and applications, this paper uses a novel approach that exploits coherent illumination, demonstrating that a large part of the ancillary data can be extracted from the raw data itself and used in the focusing procedure. Preliminary results are presented for ERS raw data focusing. The proposed Matlab software is distributed by the authors under the Creative Commons Noncommercial, Share Alike 4.0 International license.

💡 Research Summary

Synthetic Aperture Radar (SAR) achieves high‑resolution imaging by synthesizing a long antenna through the motion of the platform. Conventional SAR processing assumes that the platform follows a uniform, straight‑line trajectory and that the antenna beam points orthogonal to this trajectory. Consequently, precise ancillary data—platform position, velocity, acceleration, and antenna pointing angles—must be supplied to the focusing algorithm. In low‑cost applications such as drones or miniature satellites, acquiring such ancillary data is often impractical due to weight, power, or budget constraints.
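
To make the role of the ancillary data concrete, the standard textbook relations for a broadside (zero-squint) geometry are sketched below. The symbols are assumptions introduced here for illustration and do not come from the paper: $v$ is the platform speed, $R_0$ the range at closest approach, $\lambda$ the radar wavelength, and $t$ the slow (azimuth) time.

$$
\begin{aligned}
R(t) &= \sqrt{R_0^2 + v^2 t^2} \;\approx\; R_0 + \frac{v^2 t^2}{2 R_0}, \\
\varphi(t) &= -\frac{4\pi}{\lambda}\, R(t), \\
f_D(t) &= \frac{1}{2\pi}\,\frac{d\varphi}{dt} = -\frac{2}{\lambda}\,\frac{dR}{dt} \;\approx\; -\frac{2 v^2}{\lambda R_0}\, t, \\
K_a &= \frac{d f_D}{dt} \;\approx\; -\frac{2 v^2}{\lambda R_0}.
\end{aligned}
$$

Under these assumptions the azimuth matched filter depends on the motion only through the Doppler history $f_D(t)$, that is, through its centroid and its rate $K_a$. A conventional processor computes these from $v$ and $R_0$ supplied as ancillary data, whereas a blind approach has to estimate them from the echoes themselves.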

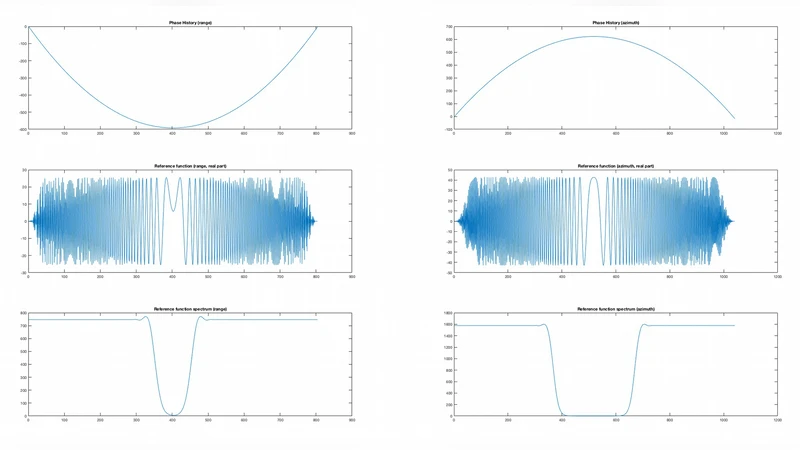

The paper introduces a “blind” SAR focusing technique (B.SAR) that deliberately discards any external navigation or attitude information and instead extracts the necessary motion parameters directly from the raw SAR data. The authors retain the standard range‑compression step (FFT on each pulse) to preserve the complex phase information, then perform a series of data‑driven estimations to reconstruct a virtual trajectory that can be used for azimuth compression. The workflow can be summarized as follows:

- Range Compression – Apply the usual range‑FFT to obtain a complex matrix of range‑compressed echoes. This step does not require any ancillary data.

- Local Doppler Center Estimation – Divide the azimuth dimension into short overlapping windows and compute an FFT for each window. The peak of the Doppler spectrum in each window provides an estimate of the instantaneous Doppler frequency, which is directly related to the platform’s line‑of‑sight velocity at that instant.

- Phase‑Gradient Matching – The sequence of Doppler centers defines a phase gradient across the azimuth dimension. By formulating a cost function that penalizes deviations between the observed phase gradient and that predicted by a parametric motion model (linear or quadratic in time), the algorithm solves for a set of virtual motion parameters (e.g., per‑pulse displacement, acceleration).

- Azimuth Matched Filtering – Using the estimated motion parameters, a conventional azimuth matched filter is constructed. Because the filter coefficients are derived from the data‑driven estimates rather than from a pre‑loaded ephemeris file, the process is truly “blind.”

- Image Normalization and Quality Assessment – The focused image is calibrated, and its quality is quantified using entropy, signal‑to‑noise ratio (SNR), and correlation with a reference image generated from a full‑metadata processing chain.
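
The Doppler estimation, model fitting, and azimuth filtering steps above can be illustrated with a minimal, standard-library Python sketch. It is not the authors' MATLAB code. It assumes a single point target in one range bin, a noise-free synthetic echo, and a Doppler history that is linear in time, and it replaces the cost-function optimization with an ordinary least-squares line fit. All function names and parameter values (`peak_frequency`, `local_doppler_track`, `fit_line`, `coherent_gain`, the 1 kHz PRF, the window and hop lengths) are hypothetical.

```python
import cmath
import math

def peak_frequency(x, fs, nfft=512):
    """Frequency in [-fs/2, fs/2) that maximizes |DTFT(x)| on an nfft-point grid."""
    best_f, best_mag = 0.0, -1.0
    for k in range(nfft):
        f = -fs / 2 + k * fs / nfft
        acc = sum(v * cmath.exp(-2j * math.pi * f * m / fs) for m, v in enumerate(x))
        if abs(acc) > best_mag:
            best_f, best_mag = f, abs(acc)
    return best_f

def local_doppler_track(signal, fs, win=32, hop=16):
    """Estimate the Doppler peak in short overlapping azimuth windows."""
    n = len(signal)
    times, freqs = [], []
    for start in range(0, n - win + 1, hop):
        center = start + (win - 1) / 2          # window midpoint, in samples
        times.append((center - n / 2) / fs)     # same time axis as the echo
        freqs.append(peak_frequency(signal[start:start + win], fs))
    return times, freqs

def fit_line(t, f):
    """Ordinary least squares fit f ~= f_dc + ka * t; returns (f_dc, ka)."""
    n = len(t)
    t_mean, f_mean = sum(t) / n, sum(f) / n
    num = sum((a - t_mean) * (b - f_mean) for a, b in zip(t, f))
    den = sum((a - t_mean) ** 2 for a in t)
    ka = num / den
    return f_mean - ka * t_mean, ka

def coherent_gain(signal, fs, f_dc, ka):
    """Normalized response after removing the modeled azimuth phase (1.0 = ideal)."""
    n = len(signal)
    acc = 0j
    for i, v in enumerate(signal):
        t = (i - n / 2) / fs
        acc += v * cmath.exp(-2j * math.pi * (f_dc * t + 0.5 * ka * t * t))
    return abs(acc) / n

# Synthetic azimuth echo (assumed values): Doppler centroid and Doppler rate.
FS, N = 1000.0, 256
TRUE_FDC, TRUE_KA = 100.0, -800.0
echo = [cmath.exp(2j * math.pi * (TRUE_FDC * t + 0.5 * TRUE_KA * t * t))
        for t in ((i - N / 2) / FS for i in range(N))]

times, freqs = local_doppler_track(echo, FS)
fdc_est, ka_est = fit_line(times, freqs)
gain_blind = coherent_gain(echo, FS, fdc_est, ka_est)   # centroid and rate removed
gain_no_rate = coherent_gain(echo, FS, fdc_est, 0.0)    # centroid only, still defocused

print("estimated centroid [Hz]:", fdc_est)
print("estimated rate [Hz/s]:", ka_est)
print("gain with / without rate:", gain_blind, gain_no_rate)
```

The window length sets a trade-off in this sketch: a longer window gives finer frequency resolution per estimate, but the Doppler frequency drifts more within it, which broadens the spectral peak. Real data would also require handling noise, multiple scatterers, and Doppler ambiguities, none of which are modeled here.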

The authors validate the method on raw data from the European Remote‑Sensing (ERS‑1/2) satellites. No precise orbit files were supplied; only the complex raw SAR samples were used. The blind‑focused images exhibit a modest degradation—approximately 10–15 % lower resolution and contrast—relative to the reference images, yet major terrain features and man‑made structures remain clearly identifiable. This performance demonstrates that acceptable imaging quality can be achieved without external navigation data, opening the door to lightweight, low‑cost SAR payloads that can be deployed on UAVs or nanosatellites.
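
The summary does not say how the entropy metric in the quality-assessment step is defined. The sketch below uses the intensity-based Shannon entropy that is common in the autofocus literature, which is an assumption here. The function name `image_entropy` and the two toy images are hypothetical. Lower entropy indicates that energy is concentrated in fewer pixels, which is usually read as a sharper image.

```python
import math

def image_entropy(image):
    """Shannon entropy of the normalized pixel power of a complex or real image."""
    power = [abs(p) ** 2 for row in image for p in row]
    total = sum(power)
    # Zero-power pixels contribute nothing (p * log p -> 0 as p -> 0).
    return -sum((p / total) * math.log(p / total) for p in power if p > 0)

# Toy comparison: all energy in one pixel versus energy spread evenly.
point_image = [[0.0] * 4 for _ in range(4)]
point_image[1][2] = 1.0
uniform_image = [[1.0] * 4 for _ in range(4)]

entropy_point = image_entropy(point_image)
entropy_uniform = image_entropy(uniform_image)
print("point-like image entropy:", entropy_point)
print("uniform image entropy:", entropy_uniform)
```

Because the metric needs no reference image, it can score a blind-focused result on its own, whereas the correlation measure requires an image from a full-metadata processing chain.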

A MATLAB implementation of the entire pipeline is released under the Creative Commons Attribution‑NonCommercial‑ShareAlike 4.0 International license. The code is modular: separate functions handle range compression, Doppler‑center extraction, phase‑gradient optimization, and azimuth matched filtering. Users can adjust window length, FFT size, and regularization parameters to tailor the algorithm to different platform dynamics or noise conditions.

Strengths

- Elimination of ancillary data: The method removes the need for GPS/IMU or ground‑station ephemeris, simplifying system architecture and reducing payload mass and power consumption.

- Compatibility with existing SAR chains: Because the algorithm only replaces the azimuth‑focusing stage, it can be inserted into existing processing pipelines with minimal code changes.

- Open‑source availability: The released MATLAB code encourages reproducibility and further research.

Limitations

- Sensitivity to non‑linear motion: Rapid maneuvers, high‑frequency vibrations, or abrupt accelerations can distort the local Doppler estimates, leading to inaccurate motion reconstruction.

- Noise robustness: In low‑SNR scenarios the Doppler peak detection becomes unreliable, which propagates errors through the phase‑gradient matching step and degrades image quality.

- Single‑channel focus: The current implementation addresses only a single receive channel; extending the approach to multi‑channel or polarimetric SAR will require additional development.

Future work suggested by the authors includes: (i) incorporating higher‑order motion models or adaptive windowing to handle aggressive platform dynamics; (ii) integrating robust statistical techniques (e.g., RANSAC, Bayesian filtering) to improve Doppler peak detection under noisy conditions; and (iii) extending the blind focusing concept to multi‑antenna, multi‑frequency, or interferometric SAR configurations.

In summary, the paper presents a compelling proof‑of‑concept that SAR image formation can be performed without any external navigation or attitude data by exploiting the inherent coherence of the raw radar echoes. This “blind” focusing paradigm is especially relevant for emerging low‑cost, lightweight SAR platforms where traditional ephemeris provision is impractical. While the current results show a modest loss in resolution, the trade‑off may be acceptable for many remote‑sensing applications that prioritize rapid deployment and low system complexity over the highest possible image fidelity.
