ASR-based Features for Emotion Recognition: A Transfer Learning Approach

During the last decade, the applications of signal processing have drastically improved with deep learning. However areas of affecting computing such as emotional speech synthesis or emotion recognition from spoken language remains challenging. In th…

Authors: Noe Tits, Kevin El Haddad, Thierry Dutoit

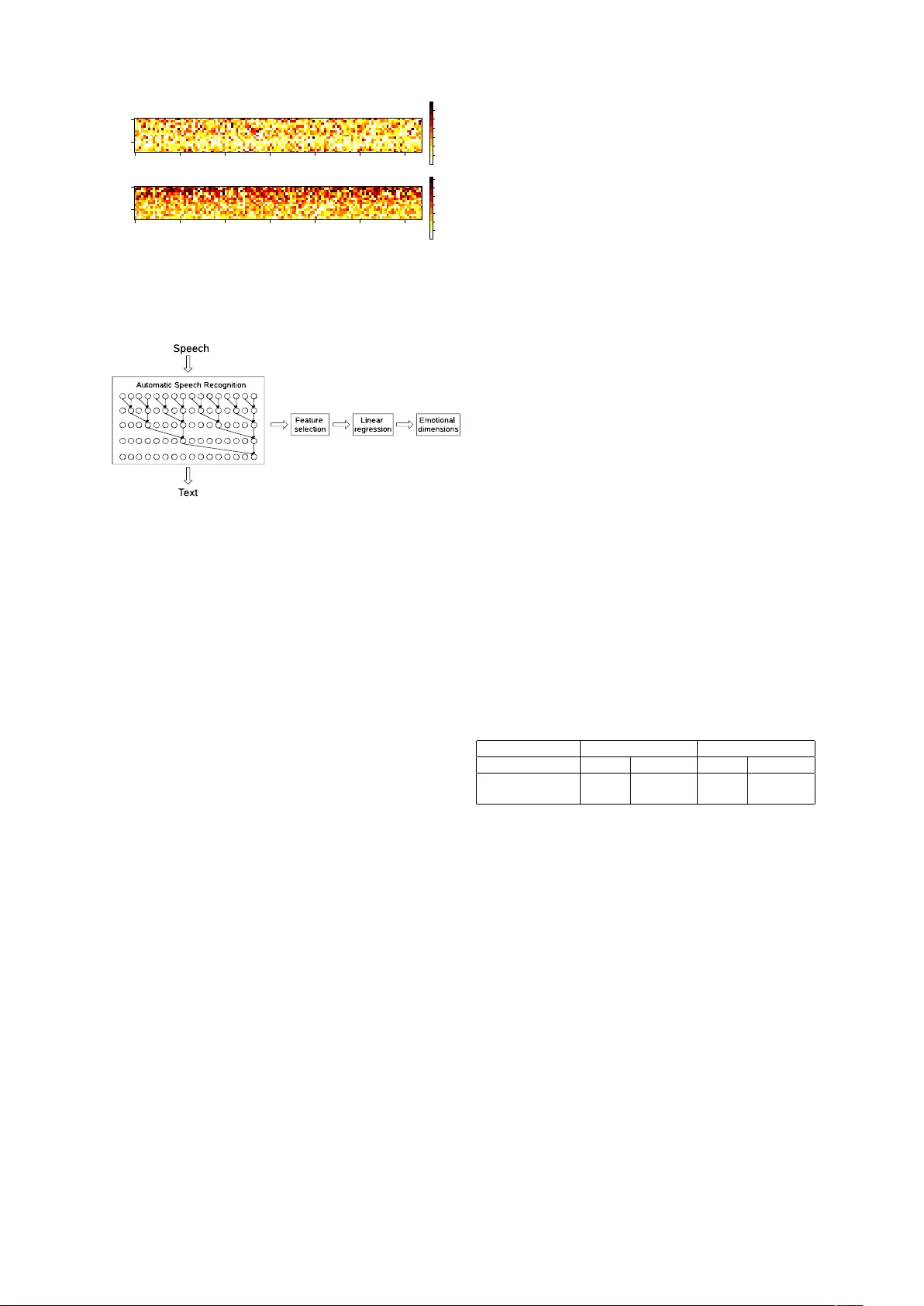

ASR-based F eatur es f or Emotion Recognition: A T ransfer Learning A ppr oach No ´ e Tits, K e vin El Haddad, Thierry Dutoit Numediart Institute, Uni versity of Mons, Belgium { noe.tits, kevin.elhaddad, thierry.dutoit } @umons.ac.be Abstract During the last decade, the applications of signal processing have drastically im- prov ed with deep learning. Ho wev er ar- eas of af fecting computing such as emo- tional speech synthesis or emotion recog- nition from spoken language remains chal- lenging. In this paper , we in vestigate the use of a neural Automatic Speech Recog- nition (ASR) as a feature extractor for emotion recognition. W e show that these features outperform the eGeMAPS fea- ture set to predict the valence and arousal emotional dimensions, which means that the audio-to-text mapping learned by the ASR system contains information related to the emotional dimensions in sponta- neous speech. W e also e xamine the re- lationship between first layers (closer to speech) and last layers (closer to text) of the ASR and v alence/arousal. 1 Introduction W ith the advent of deep learning, areas of signal processing ha ve been drasctically improved. In the field of speech synthesis, W a venet ( V an Den Oord et al. , 2016 ), a deep neural network for generat- ing raw audio w av eforms, outperforms all pre vi- ous approaches in terms of naturalness. One of the remaining challenges in speech synthesis is to control its emotional dimension (happiness, sad- ness, amusement, etc.). The work described here is part of a larger project to control as accurately as possible, the emotional state of a sentence being synthesized. For this, we present here exploratory work regarding the analysis of the relationship be- tween the emotional states and the modalities used to express them in speech. Indeed one of the main problems to develop such a system is the amount of good quality data (naturalistic emotional speech of synthesis qual- ity , i.e. containing no noise of an y sorts). This is why we are considering solutions such as syn- thesis by analysis and transfer learning ( Pan and Y ang , 2010 ). Arousal and valence ( Russell , 1980 ) are among the most, if not the most used dimensions for quantizing emotions. V alence represents the posi- ti vity of the emotion whereas arousal represents its acti vation. Since they represent emotional states, these dimensions are linked to sev eral modalities that we use to e xpress emotions (audio, te xt, f acial expressions, etc.). It has recently been sho wn that for emotion recognition, deep learning based systems learn features that outperform handcrafted features ( Tri- georgis et al. , 2016 ) ( Martinez et al. , 2013 ) ( Kim et al. , 2017a , b ). The use of context and different modalities has also been studied with deep learn- ing models. Poria et al. ( 2017 ) focus on the con- textual information among utterances in a video while Zadeh et al. ( 2017 , 2018 ) dev elop specific architectures to fuse information coming from dif- ferent modalities. In this work, with the goal to study the re- lationship between valence/arousal, and differ - ent modalities, we propose to use the internal representation of a speech-to-text system. An Automatic Speech Recognition (ASR) system or speech-to-text system, learns a mapping between two modalities: an audio speech signal and its corresponding transcription. W e hypothesize that such a system must also be learning representa- tions of emotional expressions since these are con- tained intrinsically in both speech (v ariation or the pitch, the energy , etc.) and text (semantic of the words). In f act, we show here that the activ ations of cer- tain neurons in an ASR system, are useful to esti- mate the arousal and v alence dimensions of an au- dio speech signal. In other words, transfer learning is lev eraged by using features learned for an auto- matic speech recognition (ASR) task to estimate v alence and arousal. The adv antage of our method is that it allows combining the use of lar ge datasets of speech with transcriptions with limited datasets annotated in emotional dimensions. An example of transfer learning is the work of Radford et al. ( 2017 ). They trained a multiplica- ti ve L TSM ( Krause et al. , 2016 ) to predict next character based on the previous ones to design a text generator system. The dataset used to train their model was the Amazon revie w dataset pre- sented in McAuley et al. ( 2015 ). Then, they used the representation learned by the model to pre- dict sentiment also av ailable in the dataset, and achie ved state of the art prediction. In this paper , we show that the activ ations of a deep learning-based ASR system trained on a large database can be used as features for the esti- mation of arousal and valence values. The features would therefore be extracted from both the audio and text modalities which the ASR system learned to map. 2 ASR-based F eatures f or Emotion Prediction V ia Regression Our goal is to study the relationship between v alence/arousal, and audio/text modalities thanks to an ASR system. The main idea is that the ASR system that models the mapping between audio and text might learn a representation of emotional e xpression. So, for our analyses, we use an ASR system as a feature extractor which feeds a linear regression algorithm to estimate the arousal/v alence v alues. This section describes the whole system. First we present the ASR system used as a feature e xtractor . W e then briefly present the data used and present first results on the data analysis. 2.1 ASR system The ASR system used is implemented in ( Namju and K yubyong , 2016 ) and pre-trained on the VCTK dataset ( V eaux et al. , 2017 ) containing 44 hours of speech uttered by 109 native speakers of English. Its architecture consists of a dilated con volution of blocks. Each block is a gated constitutional unit (GCU) with a skip (residual) connection. In other words a W avenet-lik e architecture ( V an Den Oord et al. , 2016 ). There are 15 layers and 128 GCUs in each layer: 1920 GCUs in total. T o lighten the computational cost, the audio sig- nal is compressed in 20 Mel-Frequency Cepstral Coef ficients (MFCCs) and then fed into the sys- tem. 2.2 Dataset Used IEMOCAP Dataset The ”interactiv e emotional dyadic motion cap- ture database” (IEMOCAP) dataset ( Busso et al. , 2008 ) is used in this paper . It consists of audio- visual recordings of 5 sessions of dialogues be- tween male and female subjects. In total it con- tains 10 speakers and a total of 12 hours of data. The data is segmented in utterances. Each utter- ance is transcribed and annotated by cate gory of emotions ( Ekman , 1992 ) and a value for emotional dimensions ( Russell , 1980 ) (valence, arousal and dominance) between 1 and 5 representing the di- mension’ s intensity . In this work, we only use the audio and text modalities as well as the valence and arousal an- notations. Data Analysis and Neural F eatures W e in vestig ate the relationship between the activ a- tion output of the ASR-based system’ s GCUs and the valence/arousal values by studying the corre- lations between them. For ev ery utterance and for each speaker of the IEMOCAP dataset, we com- pute the mean acti vation of the GCUs of the ASR. The Pearson correlation coefficient is then calcu- lated between the mean activ ation outputs and the v alues of valence/arousal of all utterances of the speaker . In the rest of the paper , we will refer to the mean acti vation of the GCUs as neural fea- tures. As an example, the results concerning the female speaker of session 2 is summarized in a heat map represented in Figure 1 Each row of the heat map corresponds to a layer of GCUs. The color is mapped with the Pearson correlation coef ficient value. One can see that correlations exist for both arousal and v alence. This suggests that the ASR- based system learns a certain representation of the emotional dimensions. 2.3 Structure of the system The system is illustrated in Figure 2 . As previ- ously mentioned, the ASR system is used as a fea- 0 2 0 4 0 6 0 8 0 1 0 0 1 2 0 0 1 0 P e a r s o n c o e f f i c i e n t : v a l e n ce 0 2 0 4 0 6 0 8 0 1 0 0 1 2 0 0 1 0 P e a r s o n c o e f f i c i e n t : a r ou sa l 0 . 0 5 0 . 1 0 0 . 1 5 0 . 2 0 0 . 2 5 0 . 3 0 0 . 1 0 . 2 0 . 3 0 . 4 0 . 5 0 . 6 0 . 7 Figure 1: Pearson correlation coefficient between the neural features and valence (up) and arousal (do wn) - Female speaker of session 2 Figure 2: Block diagram of the system ture extractor . First we compute the 20 MFCCs of the utterances of the IEMOCAP dataset with li- brosa python library ( McFee et al. , 2015 ). These are passed through the ASR to compute the corre- sponding neural features. A feature selection is applied on the neural fea- tures to k eep 100 among the 1920 features for dimensionality reduction purpose. The selection is done using the scikit-learn p ython library ( Pe- dregosa et al. , 2011 ) with the Fisher score. Finally a linear re gression is trained to estimate the v alence/arousal values from the neural features using the IEMOCAP data. The linear regression is done using scikit-learn. The training is done by minimizing the Mean Squared Error (MSE) be- tween predictions and labels. 3 Experiments and Results In this section, we detail the experiments that we carried out. The first one is the ev aluation of the neural features in terms of MSE and its compari- son with a linear regression of the eGeMAPS fea- ture set ( Eyben et al. , 2016 ). In the second one, we in vestigate the relationship between the audio and text and modalities and the emotional dimensions. 3.1 First experiment: Linear regression In this first experiment, we in vestigate the perfor- mance of a linear re gression to predict arousal and v alence using the neural features. W e compare this with a linear regression using the eGeMAPS fea- ture set. The eGeMAPS feature set is a selection of acoustic features that provide a common baseline for e valuation in researches to av oid dif ferences of feature set and implementations. Indeed, the y also provide their implementation with openS- MILE toolkit ( Eyben et al. , 2010 ) that we used in this work. The features were selected based on their abil- ity to represent af fectiv e physiological nuances in voice production, their proven performance in for - mer research work as well as the possibility to ex- tract them automatically , and their theoretical sig- nificance. The result of this selection is a set of 18 Lo w-lev el descriptors (LLDs) related to fre- quency (pitch, formants etc.), energy (loudness, Harmonics-to-Noise Ratio, etc.) and spectral bal- ance (spectral slopes, ratios between formant ener- gies, etc.). Then se veral functionals such as stan- dard deviation and mean are applied to these LLDs to hav e the final features. The results obtained from the linear regression in terms of MSE are compared to the annotations for each of the arousal and v alence values (be- tween 1 and 5) in T able 1 . Arousal V alence Mean V ariance Mean V ariance Neural features 0.259 0.020 0.660 0.118 eGeMAPS set 0.267 0.034 0.697 0.135 T able 1: MSE on the prediction of v alence and arousal. W e perform a lea ve-one-speaker -out ev aluation scheme with both feature sets for cross-v alidation. In other words, each validation set in constituted with the utterances corresponding to one speaker and the corresponding training set with the other speakers. W e train a model with each training set and ev aluate it on the validation set in terms of MSE. The table contains the mean and standard de viation of the MSEs. It is clear from this table that the neural fea- tures outperform the eGeMAPS in this experi- ment. This confirms the fact that the ASR system learns representations of emotional dimensions in spontaneous speech. 3.2 Second experiment: Influence of modalities During the data e xploration, we noticed that, for some speakers, the layers closer to the speech in- put were more correlated to arousal and the ones closer to the text output to valence. An example is shown in Figure 3 . W e present, in this section, preliminary studies regarding this matter . 0 2 0 4 0 6 0 8 0 1 0 0 1 2 0 0 1 0 P e a r s o n c o e f f i c i e n t : v a l e n ce 0 2 0 4 0 6 0 8 0 1 0 0 1 2 0 0 1 0 P e a r s o n c o e f f i c i e n t : a r ou sa l 0 . 0 5 0 . 1 0 0 . 1 5 0 . 2 0 0 . 2 5 0 . 3 0 0 . 3 5 0 . 1 0 . 2 0 . 3 0 . 4 0 . 5 0 . 6 0 . 7 Figure 3: Pearson correlation coefficient between the neural features and valence (up) and arousal (do wn) - Female speaker of session 1 In order to analyze this phenomenon as pre- cisely as possible, we only considered the utter - ances from the IEMOCAP database for which the v alence/arousal annotators were consistent with each other , leaving us with 7532 utterances in total instead of 10039. Then we performed linear re gression with 4 dif- ferent sets of feature to study their influence. For the first set, we select the 100 best features among the 3 first layers of the neural ASR in terms of Fisher score using scikit-learn. For the second set, we apply the same selection to the 3 last layers. The third set selection is applied among all neural features. The last set is the eGeMAPS feature set. The results are summarized in Figure 2 . As expected, the results show , that for the speakers considered, the layers closer to the audio modal- ity outperform the ones closer to the text modality in the ASR architecture for arousal prediction and vice versa for the valence prediction. On this we build a hypothesis that the arousal-related features learned are more related to the audio modality than the text and vice versa for the v alence-related fea- tures. This hypothesis will be further explored in future work. 4 Conclusions and Future w ork In this paper, we show that features learned by a deep learning-based system trained for the Automatic Speech Recognition task can be Arousal V alence Mean V ariance Mean V ariance First layers 0.325 0.069 0.714 0.114 Last layers 0.357 0.038 0.661 0.089 All 0.296 0.044 0.621 0.099 eGeMAPS set 0.328 0.064 0.683 0.124 T able 2: Means and variances of the MSE on the prediction of v alence and arousal. used for emotion recognition and outperform the eGeMAPS feature set, the state of the art hand- crafted features for emotion recognition. Then we in vestigate the correlation of the emotional di- mensions arousal and valence with the modalities of audio and te xt of the speech. W e sho w that for some speakers, arousal is more correlated to neural features extracted from layers closer to the speech modality and v alence to the ones closer to the text modality . T o improv e the system, we plan to perform an end-to-end training including the av erage opera- tion. Another av enue to explore is to replace the av erage over time by a max-pooling over time which according to Aldeneh and Provost ( 2017 ) select the frames that are emotionally salient. Then an analysis of the underlying activ ation e volutions could be done to see if it is possible to e xtract a frame-by-frame description of valence and arousal without having to annotate a database frame-by-frame. Concerning the second experiment, we intend to in vestigate why these correlation patterns are only visible for some speakers and not others and the relationship between the arousal/v alence and au- dio/text. W e thereby hope to better understand the way multidimensional representations of emotions can be used to control the expressiv eness in syn- thesized speech. Acknowledgments No ´ e Tits is funded through a PhD grant from the Fonds pour la Formation ` a la Recherche dans l’Industrie et l’Agriculture (FRIA), Belgium. References Zakaria Aldeneh and Emily Mo wer Prov ost. 2017. Us- ing regional saliency for speech emotion recogni- tion. In Acoustics, Speech and Signal Pr ocessing (ICASSP), 2017 IEEE International Confer ence on , pages 2741–2745. IEEE. Carlos Busso, Murtaza Bulut, Chi-Chun Lee, Abe Kazemzadeh, Emily Mower , Samuel Kim, Jean- nette N Chang, Sungbok Lee, and Shrikanth S Narayanan. 2008. Iemocap: Interactiv e emotional dyadic motion capture database. Language r e- sour ces and evaluation , 42(4):335. Paul Ekman. 1992. An argument for basic emotions. Cognition & emotion , 6(3-4):169–200. Florian Eyben, Klaus R Scherer , Bj ¨ orn W Schuller , Johan Sundberg, Elisabeth Andr ´ e, Carlos Busso, Laurence Y Devillers, Julien Epps, Petri Laukka, Shrikanth S Narayanan, et al. 2016. The genev a minimalistic acoustic parameter set (gemaps) for voice research and affecti ve computing. IEEE T ransactions on Af fective Computing , 7(2):190–202. Florian Eyben, Martin W ¨ ollmer , and Bj ¨ orn Schuller . 2010. Opensmile: the munich versatile and fast open-source audio feature extractor . In Pr oceedings of the 18th A CM international confer ence on Multi- media , pages 1459–1462. A CM. Jaebok Kim, Gwenn Englebienne, Khiet P . Truong, and V anessa Evers. 2017a. Deep temporal models using identity skip-connections for speech emotion recog- nition . In Pr oceedings of the 2017 A CM on Multi- media Conference , MM ’17, pages 1006–1013, New Y ork, NY , USA. A CM. Jaebok Kim, Gwenn Englebienne, Khiet P . T ruong, and V anessa Ev ers. 2017b. T o wards speech emotion recognition ”in the wild” using aggregated corpora and deep multi-task learning. In INTERSPEECH . Ben Krause, Liang Lu, Iain Murray , and Stev e Renals. 2016. Multiplicative lstm for sequence modelling. arXiv pr eprint arXiv:1609.07959 . Hector P Martinez, Y oshua Bengio, and Georgios N Y annakakis. 2013. Learning deep physiological models of affect. IEEE Computational Intelligence Magazine , 8(2):20–33. Julian McAuley , Rahul P andey , and Jure Lesko vec. 2015. Inferring networks of substitutable and com- plementary products. In Pr oceedings of the 21th A CM SIGKDD International Conference on Knowl- edge Discovery and Data Mining , pages 785–794. A CM. Brian McFee, Colin Raffel, Dawen Liang, Daniel PW Ellis, Matt McV icar , Eric Battenberg, and Oriol Ni- eto. 2015. librosa: Audio and music signal analysis in python. In Pr oceedings of the 14th python in sci- ence confer ence , pages 18–25. Kim Namju and Park K yubyong. 2016. Speech-to-text-wa venet. https: //github.com/buriburisuri/ speech- to- text- wavenet . Sinno Jialin Pan and Qiang Y ang. 2010. A surve y on transfer learning. IEEE T ransactions on knowledge and data engineering , 22(10):1345–1359. F . Pedregosa, G. V aroquaux, A. Gramfort, V . Michel, B. Thirion, O. Grisel, M. Blondel, P . Pretten- hofer , R. W eiss, V . Dubourg, J. V anderplas, A. Pas- sos, D. Cournapeau, M. Brucher , M. Perrot, and E. Duchesnay . 2011. Scikit-learn: Machine learning in Python. Journal of Machine Learning Resear ch , 12:2825–2830. Soujanya Poria, Erik Cambria, Dev amanyu Hazarika, Nav onil Majumder, Amir Zadeh, and Louis-Philippe Morency . 2017. Context-dependent sentiment anal- ysis in user-generated videos. In Pr oceedings of the 55th Annual Meeting of the Association for Compu- tational Linguistics (V olume 1: Long P apers) , vol- ume 1, pages 873–883. Alec Radford, Rafal Jozefowicz, and Ilya Sutskev er . 2017. Learning to generate revie ws and discovering sentiment. arXiv preprint . James A Russell. 1980. A circumple x model of af- fect. Journal of personality and social psychology , 39(6):1161. George T rigeorgis, Fabien Ringe val, Raymond Brueck- ner , Erik Marchi, Mihalis A Nicolaou, Bj ¨ orn Schuller , and Stefanos Zafeiriou. 2016. Adieu fea- tures? end-to-end speech emotion recognition using a deep conv olutional recurrent network. In Acous- tics, Speech and Signal Pr ocessing (ICASSP), 2016 IEEE International Confer ence on , pages 5200– 5204. IEEE. Aaron V an Den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol V inyals, Alex Graves, Nal Kalchbrenner , Andrew Senior, and K oray Kavukcuoglu. 2016. W a venet: A generativ e model for raw audio. arXiv pr eprint arXiv:1609.03499 . Christophe V eaux, Junichi Y amagishi, Kirsten Mac- Donald, et al. 2017. Cstr vctk corpus: English multi- speaker corpus for cstr v oice cloning toolkit. Amir Zadeh, Minghai Chen, Soujanya Poria, Erik Cambria, and Louis-Philippe Morency . 2017. T en- sor fusion network for multimodal sentiment analy- sis. arXiv preprint . Amir Zadeh, Paul Pu Liang, Nav onil Mazumder , Soujanya Poria, Erik Cambria, and Louis-Philippe Morency . 2018. Memory fusion network for multi-view sequential learning. arXiv pr eprint arXiv:1802.00927 .

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment