Fast L1-Minimization Algorithm for Sparse Approximation Based on an Improved LPNN-LCA framework

The aim of sparse approximation is to estimate a sparse signal according to the measurement matrix and an observation vector. It is widely used in data analytics, image processing, and communication, etc. Up to now, a lot of research has been done in…

Authors: Hao Wang, Ruibin Feng, Chi-Sing Leung

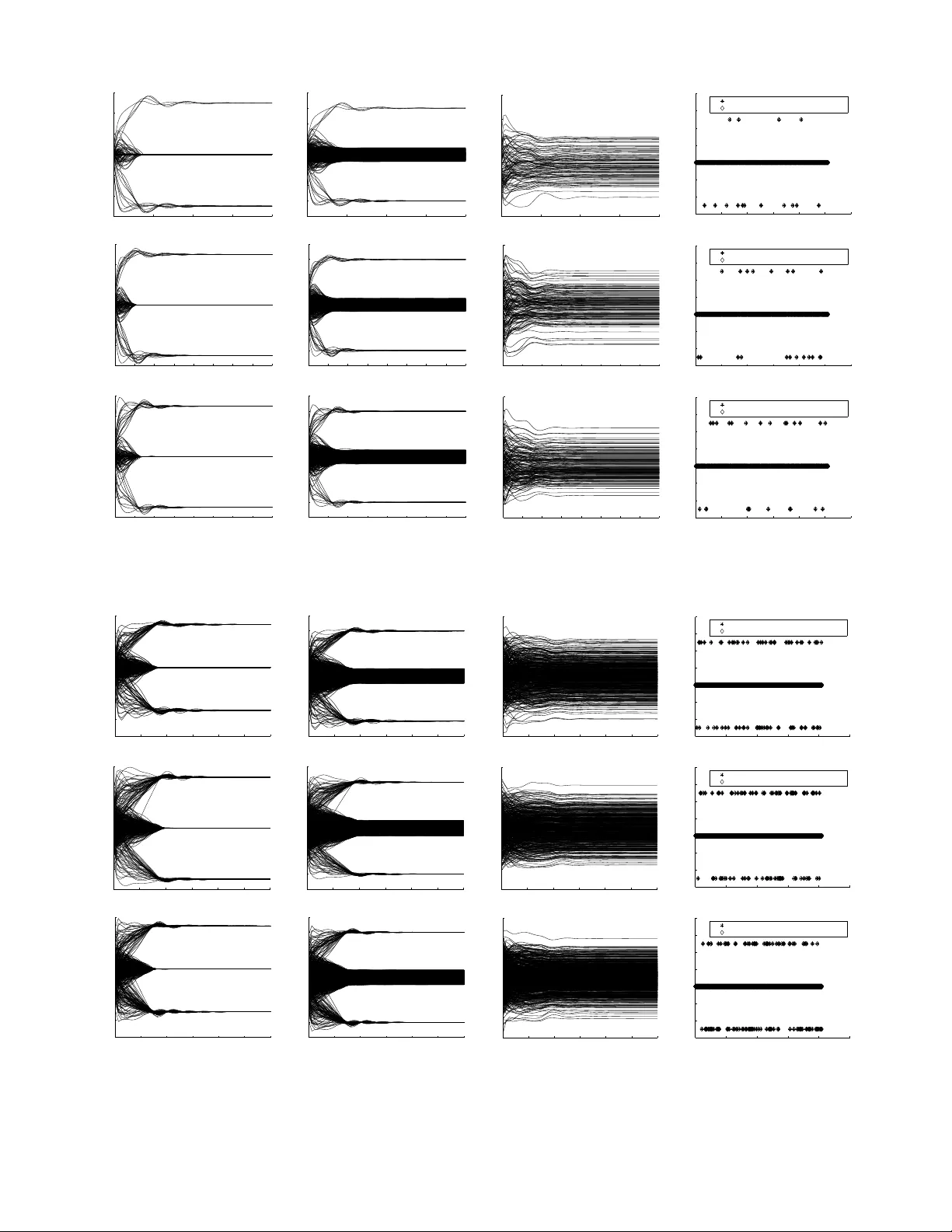

IEEE TRANSA CTIONS ON SIGNAL PR OCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 1 F ast L 1 -Minimization Algorithm for Sparse Approximation Based on an Impro v ed LPNN-LCA frame work Hao W ang, Ruibin Feng, and Chi-Sing Leung, Member , IEEE, Abstract —The aim of sparse approximation is to estimate a sparse signal according to the measurement matrix and an observation vector . It is widely used in data analytics, image processing, and communication, etc. Up to now , a lot of resear ch has been done in this area, and many off-the-shelf algorithms hav e been proposed. However , most of them cannot offer a real-time solution. T o some extent, this shortcoming limits its application prospects. T o address this issue, we devise a novel sparse approximation algorithm based on Lagrange program- ming neural network (LPNN), locally competitiv e algorithm (LCA), and projection theorem. LPNN and LCA are both analog neural network which can help us get a real-time solution. The non-differentiable objective function can be solved by the concept of LCA. Utilizing the projection theorem, we further modify the dynamics and proposed a new system with global asymptotic stability . Simulation results show that the proposed sparse approximation method has the real-time solutions with satisfactory MSEs. Index T erms —Sparse Appr oximation, Basis Pursuit (BP), La- grange Programming Neural Network (LPNN), Locally Compet- itive Algorithm (LCA), Projection Theorem. I . I N T RO D U C T I O N Sparse approximation is frequently used in many dif ferent application areas including data analytic, image processing, communication, feature extraction, audio processing and so on [1]–[5]. The aim of sparse approximation algorithms is to represent an observation using a sparse signal selected from a specified measurement matrix. The relationship between the unknown sparse signal and the observation can be described as Φ x x x = b b b, (1) where x x x ∈ R n is an unknown sparse vector , b b b ∈ R m is an observation vector , and Φ ∈ R m × n is the measurement matrix (also known as dictionary) with rank m ( m < n ). The matrix Φ is fat with full row rank. Apparently , an infinite number of solutions are av ailable for this equality . Therefore, constraints must be introduced. In sparse approximation, the number of nonzero elements in x x x is denoted by t , the solution with the smallest t is the best representation. Because for real data, like communication signals and images, ev en though their observations are in high-dimensional spaces, the actual signals are organized in some lower -dimensional subspaces, Hao W ang, Ruibin Feng, and Chi-Sing Leung are with the Department of Electronic Engineering, City Uni versity of Hong K ong, Ko wloon T ong, Hong K ong, China. i.e., t < m . Hence the spare representation problem is to solv e: min || x x x || 0 (2a) s.t. Φ x x x = b b b, (2b) where || · || 0 is the so-called l 0 -norm. || x x x || 0 implies the number of nonzero elements in x x x . The problem in (2) is a non-conv ex. And, due to l 0 -norm, it is NP-hard [6]. Hence, in the first kind of methods, approximate functions are used to replace the || x x x || 0 term. It is well known that l 1 -norm is the best conv ex approximate function of l 0 -norm. Thus the original problem in (2) can be rewritten as min || x x x || 1 (3a) s.t. Φ x x x = b b b, (3b) which is kno wn as basis pursuit (BP). It can be also trans- formed into the unconstrained form given by min 1 2 k b b b − Φ x x x k 2 2 + κ k x x x k 1 , (4) where κ ∈ R is a trade-off parameter . (4) is kno wn as least absolute shrinkage and selection operator (LASSO) problem. Both (3) and (4) can be used to calculate the original solution of problem (2) under certain hypotheses [6]. And, for solving the problem in (3) and (4), some elegant implementation packages, for example L1Magic [7] and SPGL1 [8], [9], are av ailable. Comparing (3) and (4), (3) is more attractive. Be- cause it does not need any trade-off parameter and the solution of this model normally has better performance. Therefore, the proposed algorithm also uses the BP model in (3). Another common type of methods are greedy iterative algorithms which include the matching pursuit (MP) algorithm [10] and its variants. The basic procedures of this kind of algorithms are shown as follo ws. First, the column in Φ which best matches with b b b is found. Second, the residual is computed. Third, the column in Φ which best matches with the residual is calculated; Finally , we repeat the second and third step several times and then calculate a linear summation of all selected columns. Theoretically , the linear summation of these columns is the most optimal sparse representation of the observ ation. In addition to these two kinds of algorithms, there are sev- eral other methods including gradient descent based methods [11], Dantzig selector method [12], etc. In practice, the computational speed is a major barrier to the real-time signal processing with high-dimensional signals IEEE TRANSA CTIONS ON SIGNAL PROCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 2 [13]. Howe ver , e xisting sparse approximation algorithms nor- mally suf fer from one or more the following drawbacks: (i) Computational speed for solving the sparse approximation problem largely depends on the dimension and denseness of the problem. W ith the increasing of dimensions and denseness, the performance of these con ventional methods is worse and worse. (ii) Most of them cannot generate exactly zero-valued coefficients in finite time [14]. (iii) Generally speaking, they can only calculate a suboptimal solution due to the relaxation. The analog neural network is very attractive when real-time solution are needed [15]–[17]. Because the analog neural net- work can be implemented by hardware circuit and its parallel architecture can eliminate the influence of high dimensions and great denseness. Hence, in this paper , we use the analog neural network to solve the BP problem. LPNN is an analog neural network which is generally used to solve the nonlinear con- strained optimization problems [18]. Howe ver , LPNN requires that all its objective function and constraints should be twice differentiable. Obviously , BP problem does not satisfy this requirement. Hence it cannot be directly used to solve the BP problem. LCA is another analog neural network, which can be used for solving the sparse approximation problem in (4). Ev en though it is effecti ve to handle the non-dif ferentiable term, this method can only solve the unconstrained optimization problem. F or solving the BP problem with LCA, we have to introduce an appropriate trade-off parameter and transform the original problem into the unconstrained form. Besides, LCA cannot ensure that the original constraints in BP are satisfied. Hence, in our previous work, LPNN and LCA are combined to solve the BP problem. Even though the performance of this method is superior , the global stability of this system is hard to prov e. Inspired by the projection neural network [19], [20], we note that the dynamics of LPNN-LCA method also contain a projection operation to a con vex set, and their structure can be further modified. After the modification, its equilibrium point is still equiv alent to the optimal solution of the original BP problem, and the whole system has global stability in the sense of L yapunov . The rest of paper is organized as follows. In Section II, the background of LPNN, LCA and projection neural network are described. In Section III, the proposed algorithm are de vel- oped. The global con ver gence of our algorithm is prov ed in Section IV. Experimental results for algorithm ev aluation and comparison are provided in Section V. Finally , conclusions are drawn in Section VI. I I . B A C K G RO U N D A. Notation W e use a lo wer-case or upper -case letter to represent a scalar while vectors and matrices are denoted by bold lo wer-case and upper-case letters, respectiv ely . The transpose operator is denoted as ( ) T , and I I I and 0 respectiv ely represent the identity matrix and zero matrix with appropriate dimensions. Other mathematical symbols are defined in their first appearance. B. Lagrang e Pr ogramming Neural Network LPNN is an analog neural network, which can be used to solve a general nonlinear constrained optimization problem giv en by min x x x f ( x x x ) (5a) s.t. h h h ( x x x ) = 0 . (5b) where x x x = [ x 1 , · · · , x n ] T is the variable vector , f : R n → R is the objectiv e function, h h h : R n → R m ( m < n ) represents m equality constraints. In LPNN, we first set up its Lagrangian: L ( x x x, λ ) = f ( x x x ) + λ T h h h ( x x x ) (6) where λ = [ λ 1 , · · · , λ m ] T is the Lagrange multiplier . There are two kinds of neurons in LPNN, namely , v ariable neurons and Lagrangian neurons. The n v ariable neurons are used to hold the decision variable vector x x x while the m Lagrangian neurons hold the Lagrange multiplier vector λ . In the LPNN framew ork, the dynamics of the neurons are d x x x dt = − ∂ L ( x x x, λ ) ∂x x x , (7a) d λ dt = ∂ L ( x x x, λ ) ∂ λ , (7b) where is the time constant of the circuit. W ithout loss of generality , we let = 1 in this paper . The dif ferential equations in (7) govern the state transition of the neurons. After the neurons settle do wn at an equilibria, the solution is obtained by measuring the neuron outputs at the equilibrium point. The purpose of (7a) is to seek for a state with the minimum objectiv e v alue, while (7b) aims to constrain its outputs into the feasible region. With (7), the network will settle down at a stable state if se veral mild conditions are satisfied [18], [21], [22]. It is worth noting that both f and h h h should be differentiable. Otherwise, the dynamics cannot be defined. Thus, due to the l 1 -norm term in (3), it cannot be directly solved by LPNN. C. Locally Competitive Algorithm The LCA [14] is also an analog neural network which is devised for solving the unconstrained sparse approximation problem in (4). Due to the non-differentiable term κ k x x x k 1 , LCA introduces an internal state variable u u u = [ u 1 , · · · , u n ] T and defines an element-wise relationship between x x x and u u u which is given by x i = T κ ( u i ) = 0 , | u i | ≤ κ, u i − κ sign ( u i ) , | u i | > κ, (8) Where x i and u i denote the i-th element of x x x and u u u respec- tiv ely . The shapes of function (8) with κ = 1 , κ = 2 and κ = 3 are shown in Fig. 1. It is observed that the κ is a threshold of function (8). From (8), we can further deduce that u u u − x x x ∈ κ∂ k u u u k 1 , (9) where ∂ k u u u k 1 denotes the sub-differential of the l 1 -norm term which is equal to its gradient ( ± 1 ) at the differentiable points IEEE TRANSA CTIONS ON SIGNAL PROCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 3 and equal to a set [ − 1 , 1] at zero. Thus, LCA defines its dynamics on u u u as d u u u dt = − u u u + x x x + Φ T ( b b b − Φ x x x ) . (10) It should be noticed that if the dynamics are defined as dx x x/dt , we need to implement ∂ k x x x k 1 which is equal to a set at the zero point. Therefore, LCA uses d u u u/dt rather than d x x x/dt . The properties of LCA has been analysed in [13], [14], [23]. Besides, LCA cannot directly handle the constrained problem in (3). −5 −4 −3 −2 −1 0 1 2 3 4 5 −4 −3 −2 −1 0 1 2 3 4 u x κ =1 κ =2 κ =3 Fig. 1: General threshold function. D. LPNN-LCA F ramework LPNN is a framework for solving the general nonlinear con- strained optimization problems. LCA can ef fectiv ely handle the non-differentiable term in dynamics. But neither LPNN nor LCA can solve the problem in (3) alone. Hence, in our previous study , we have combined these two methods and devised the LPNN-LCA frame work which can be used to solv e the problem in (3) [24]. For this method, we first construct the Lagrangian of (3) as L ( x x x, λ ) = k x x x k 1 + λ T ( Φ x x x − b b b ) , (11) Then, follows the concept of LPNN in (7), we define its dynamics: d x x x dt = − ∂ L ( x x x, λ ) ∂x x x = − ∂ k x x x k 1 − Φ T λ , (12a) d λ dt = ∂ L ( x x x, λ ) ∂ λ = Φ x x x − b b b. (12b) T o handle the non-differential part, the concept of LCA is utilized and its dynamics can be further modified as d u u u dt = − ( u u u − x x x + Φ T λ ) , (13a) d λ dt = Φ x x x − b b b. (13b) The relationship between x x x and u u u is given by (8). Ho wev er, according to our preliminary simulation result, this system may not be stable. Hence we further introduce an augmented term 1 2 k Φ x x x − b b b k 2 2 into its Lagrangian, then L ( x x x, λ ) = k x x x k 1 + λ T ( Φ x x x − b b b ) + 1 2 k Φ x x x − b b b k 2 2 , (14) The augmented term does not affect the objective value at an equilibrium point x x x ∗ , because Φ x x x ∗ − b b b = 0 . And it can further improv e the stability of the system. Thus, the dynamics can be rewritten as d u u u dt = − ( u u u − x x x + Φ T λ ) − Φ T ( Φ x x x − b b b ) , (15a) d λ dt = Φ x x x − b b b. (15b) After the dynamics in (15) settle down, the solution of problem (3) can be obtained at an equilibrium point. Even the aug- mented term is used, we can only prove the local asymptotic con vergence of this system. E. Pr ojection Neur al Network The projection neural network [19], [20] is based on the projection theorem [25]. It is devised to solve the follo wing variational inequality V I ( U , Z ) , finding z z z ∗ ∈ Z such that U ( z z z ∗ ) T ( z z z − z z z ∗ ) ≥ 0 , f or ∀ z z z ∈ Z . (16) Where U ( z z z ) : R p → R p is a continuous function, the set Z = { z z z ∈ R p | d i ≤ z i ≤ h i , i = 1 , ..., p } is con vex. According to the optimization literature [19], problem (16) is equi v alent to the nonlinear projection formulation: P Z ( z z z − U ( z z z )) = z z z , (17) where P Z : R p → Z is a projection operator defined by P Z ( z z z ) = arg min v v v ∈ Z k z z z − v v v k , k · k denotes l 2 -norm. The dynamic of projection neural network is defined as follows: d z z z dt = ζ { P Z ( z z z − U ( z z z )) − z z z } (18) where z z z ∈ R p , and ζ is a positiv e scaling constant. This neural network can be used to solve the VI problem, by solving its relev ant nonlinear projection formulation in (17). Projection neural network is a kind of recurrent neural network. I I I . D E V E L O P M E N T O F P RO P O S E D A L G O R I T H M A. The Impr oved LPNN-LCA F ramework In [24], we ha ve proved that the equilibrium point of the dynamics (15) is equiv alent to the optimal solution of problem (3), and the system has local asymptotic con vergence. Howe ver , its global con ver gence is hard to prove. In this sec- tion, we further modify the dynamics. After the modification, we hope that the equilibrium point of the new dynamics is still equiv alent to the minimal solution of problem (3) and the global con ver gence of the proposed neural network can be prov ed. According to the description in Section II, we see that the dynamic of the projection neural network is constructed by two parts: the projection operation term and a non-projected term. It is worth noting that the operation u u u − x x x in LPNN- LCA frame work can also be seen as an projection operation. IEEE TRANSA CTIONS ON SIGNAL PROCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 4 Because according to the threshold function in (8), if we let κ = 1 , the element-wise relationship between x x x and u u u is u i − x i = u i , | u i | ≤ 1 , 1 , u i > 1 , − 1 , u i < − 1 . (19) Apparently , the operation u i − x i in (19) can be seen as a projection operation g Γ ( · ) , which can project u i into a box set [ − 1 , 1] . Inspired by the dynamic of the projection neural network, we also modify the dynamics in (13) with the similar trick, after that the dynamics can be expressed as d u u u dt = − ( u u u − x x x + Φ T λ ) = M ( t ) , (20a) d λ dt = Φ x x x − b b b − Φ ( u u u − x x x + Φ T λ ) = N ( t ) + Φ M ( t ) . (20b) For the con venience of description, we respectively define that M ( t ) = − ( u u u − x x x + Φ T λ ) , N ( t ) = Φ x x x − b b b . While N ( t ) is the original function giv en by (13b), it includes a non-projected term. Refer to the dynamic of the projection neural network in [20], we also introduce a projection operation Φ M ( t ) into it, thus we can get (20b). The matrix before the additional term is needed for resizing its dimensions. W ith the dynamics in (20), the parameters are updated with following steps: u u u k +1 = u u u k + µ d u u u k dt , (21a) λ k +1 = λ k + µ d λ k dt , (21b) where µ is the step size ( µ > 0 ). B. Pr operties Analysis The equilibrium points of the dynamics in (20) are with respect to the internal state variable u u u , while the optimal solution of original problem (3) is in regard to the decision variable x x x . Hence, we need Theorem 1 to show that the equilibrium point of the dynamics in (20) is identical to the optimal solution of problem (3). Theorem 1 : Let ( u u u ∗ , λ ∗ ) denotes an equilibrium point of (20) . At the equilibrium point, the KKT conditions of (3) ar e satisfied. Since the optimization problem given in (3) is con vex, and the r e gularity condition is satisfied. Thus the equilibrium point of (20) is equivalent to the optimal solution of the problem in (3) . Proof: The KKT conditions of problem (3) are 0 ∈ ∂ || x x x || 1 + Φ T λ , (22a) 0 = Φ x x x − b b b. (22b) According to the definition of the equilibrium point, we hav e M ∗ ( t ) = 0 (23a) Φ M ∗ ( t ) + N ∗ ( t ) = 0 (23b) Where M ∗ ( t ) = − ( u u u ∗ − x x x ∗ + Φ T λ ∗ ) , N ∗ ( t ) = Φ x x x ∗ − b b b . By the concept of LCA, we know that u u u ∗ − x x x ∗ ∈ ∂ || x x x ∗ || 1 , thus from (23a) we can deduce that 0 ∈ ∂ || x x x ∗ || 1 + Φ T λ ∗ . (24) That means (22a) is satisfied at the equilibrium point. Then from (23), it is obvious that N ∗ ( t ) = Φ x x x ∗ − b b b = 0 , (25) (22b) is satisfied. So the equilibrium point of (20) satisfies the KKT conditions of problem (3). Besides, we kno w the problem in (3) is conv ex and the regularity condition is satisfied. Hence, the KKT conditions in (22) are sufficient and necessary . Moreov er , we can say that the equilibrium point of (20) is a global optimal solution of problem (3). According to the analysis of LPNN giv en in [18], we know that (13a) is used for seeking the minimum objecti ve value, while (13b) aims at making the variables fall into the feasible region. After we modify the dynamics, these features are still valid. Howe ver , comparing with dynamics in (13), (20) accelerates its con ver gence to the optimal point, and it does not introduce any augmented term. After modification the com- plexity of the dynamics is still equal to O ( mn ) . W e know that the circuit complexity of analog neural network depends on the time deriv ative calculations. Hence, the proposed impro ved LPNN-LCA framew ork basically does not increase the circuit complexity . I V . G L O B A L S TA B I L I TY A N A L Y S I S According to Theorem 1, when the dynamics in (20) settle down, the equilibrium point is an optimal solution of problem (3). Next, we prove that the proposed neural network has global stability . Lemma 1 : Γ ⊂ R n is a closed and con vex set, g Γ ( · ) denotes the pr ojection operator to Γ . F or any v v v ∈ R n and v v v 0 ∈ Γ , ( v v v − g Γ ( v v v )) T ( g Γ ( v v v ) − v v v 0 ) > 0 . (26) The proof is sho wn in [25]. For the proposed neural network, g Γ ( u u u ) = u u u − x x x is a projection operation to a closed and conv ex set. Thus, if we let v v v = u u u and v v v 0 = u u u ∗ − x x x ∗ , the inequality (26) can be re written as x x x T [( u u u − x x x ) − ( u u u ∗ − x x x ∗ )] > 0 . (27) Then let v v v = u u u ∗ and v v v 0 = u u u − x x x , based on the inequality in (26), we can deduce that − x x x ∗ T [( u u u − x x x ) − ( u u u ∗ − x x x ∗ )] > 0 . (28) Add (27) and (28), we have ( x x x − x x x ∗ ) T [( u u u − x x x ) − ( u u u ∗ − x x x ∗ )] > 0 (29) L yapunov stability theory : Let v v v ∗ be an equilibrium point of the system ˙ v v v = ˆ f ( v v v ) , Let V : R n → R be a continuously differ entiable function suc h that V ( 0 ) = 0 and V ( v v v ) ≥ 0 , ∀ v v v 6 = 0 k v v v k → ∞ ⇒ V ( v v v ) → ∞ ˙ V ( v v v ) < 0 , ∀ v v v 6 = 0 then the system is globally asymptotically stable in the sense of Lyapuno v . IEEE TRANSA CTIONS ON SIGNAL PROCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 5 W ith Lemma 1 and the L yapunov stability theory , we can deduce the following theorem. Theorem 2 : The analog neural network given in (20) is globally asymptotically stable in the sense of L yapunov and it is globally conver gent to a solution of pr oblem (3) . Proof: T o prove the global con vergence, first, we need to devise a valid L yapunov function. Here we use the standard form V : R n → R giv en by V ( w w w − w w w ∗ ) = 1 2 || w w w − w w w ∗ || 2 2 (30) where w w w = ( u u u, λ ) T , and w w w ∗ = ( u u u ∗ , λ ∗ ) T is an equilibrium point of dynamics in (20). According to Theorem 1, we know that w w w ∗ is also the optimal solution of problem (3). Obviously , the function V ( w w w − w w w ∗ ) is continuous, dif ferentiable and radially unbounded. When w w w = w w w ∗ , we hav e V ( 0 ) = 0 . Otherwise, V ( w w w − w w w ∗ ) ≥ 0 , for any w w w 6 = w w w ∗ . Next, we prov e ˙ V ( w w w − w w w ∗ ) < 0 , for any w w w 6 = w w w ∗ . The deri vati ve of V ( w w w − w w w ∗ ) is giv en by ˙ V ( w w w − w w w ∗ ) = d V ( w w w − w w w ∗ ) d t = ( w w w − w w w ∗ ) T d w w w d t = u u u − u u u ∗ λ − λ ∗ T M ( t ) Φ M ( t ) + N ( t ) = ( u u u − u u u ∗ ) T M ( t ) + ( λ − λ ∗ ) T ( Φ M ( t ) + N ( t )) = − M ( t ) T M ( t ) + ( x x x − x x x ∗ ) T M ( t ) + ( λ − λ ∗ ) T N ( t ) = − M ( t ) T M ( t ) − ( x x x − x x x ∗ ) T [( u u u − x x x ) − ( u u u ∗ − x x x ∗ )] (31) Apparently , from (29) we know that, for any w w w 6 = w w w ∗ , the function in (31) are less than 0 . So ˙ V ( w w w − w w w ∗ ) < 0 for any w w w 6 = w w w ∗ . Thus we can say that the proposed neural network is globally asymptotically stable in the sense of L yapunov . If ˙ V ( w w w − w w w ∗ ) = 0 , we ha ve w w w = w w w ∗ . Similar with the proof in [26], we see the system in (20) is globally con vergent to an equilibrium point. Similar with the dynamics in (15), we can also introduce an augment term 1 2 k Φ x x x − b b b k 2 2 into its Lagrangian, then we hav e d u u u dt = M ( t ) − Φ T N ( t ) , (32a) d λ dt = N ( t ) + Φ M ( t ) . (32b) Compared with the dynamics in (20), (32) further improve its con vexity and conv ergence speed. V . S I M U L A T I O N A N D E X P E R I M E N TA L R E S U L T In this section, we conduct se veral experiments to ev aluate the performance of the proposed algorithm. In the follo wing experiments, the length of the signal x x x are 512 or 4096 . When n = 512 , the number of non-zero elements Ω ∈ { 15 , 20 , 25 } . And their position are randomly chosen with uniform distri- bution. Their corresponding values are randomly equal to +5 or − 5 . While for the case n = 4096 , the number of non-zero elements Ω ∈ { 75 , 100 , 125 } . The measurement matrix Φ is a ± 1 random matrix ( Φ ∈ R m × n ). And each column of it is normalized to have a unit l 2 -norm. For better observing the performance of the proposed algo- rithm, sev eral l 1 -norm based sparse approximation algorithms are implemented. The y are L1Magic package [7], SPGL1 package [9], homotopy method [27], the improved PNN-based sparse reconstruction (IPNNSR) method [26] and the LPNN- LCA method giv en by (15). The L1Magic is a toolbox which can be used to solve the BP problem with the primal-dual interior point method. The SPGL1 package is also a common way for solving the BP problem, it is mainly based on the spectral projected gradient (SPG) method which uses the gradient vector as a search direction and chooses its step size according to the spectrum of the underlying local Hessian. Homotopy is a kind of greedy iterative algorithm. IPNNSR and LPNN-LCA are both analog neural networks. The first one is based on the projection neural network, and its global con vergence has been proved [26]. While the second one is our pre vious work which is based on LPNN and LCA, and it only has local asymptotic con vergence. A. Stability In Section IV, we theoretically prove the global conv ergence of the proposed method. Then, we use several typical examples to show its con vergence in practice. For both Fig. 2 and Fig. 3, the first three columns are the dynamics of the estimated parameters x x x , u u u , and λ and the corresponding recovered signals are sho wn in the last column. From Fig. 2, it is observed that when n = 512 , the dynamics can settle down within around 60-80 iterations. While for n = 4096 in Fig. 3, the dynamics can settle down within 100 to 120 iterations. From these figures, we kno w that the whole system is stable and can conv erge to their optimal solutions. B. Con vergence time In this experiment, we test the speed of the proposed method. First let n = 512 , m = 150 , and Ω = 15 , 20 , 25 . The results are shown in Fig. 4. Where the y-axis denotes the av erage relative error of ten trials, and the x-axis is the CPU time (unit: sec). While Fig. 5 depicts the results when n = 4096 , m = 800 , and Ω = 75 , 100 , 125 . From Fig. 4 and Fig. 5, it is observed that, when n = 512 , the greedy method homotopy is better than others. And compared with other two analog neural networks, the IPNNSR needs less conv ergence time to achiev e a satisfied result. When n = 4096 , the performance of our proposed algorithm is superior . Comparing with others, the proposed algorithm has relativ ely stable conv ergence time. Besides, it is worth noting that these three analog neural net- works can be implemented with hardw are circuit, hence their speed can be further improv ed. The computational complexity of IPNNSR’ s dynamics is O ( n 2 ) , while, for the proposed al- gorithm and LPNN-LCA, their corresponding value is O ( mn ) . Hence, compared with the impro ved LPNN-LCA and the original LPNN-LCA, the increase of n has a greater impact on IPNNSR. Comparing with LPNN-LCA, the improv ed method has better stability . And its step size µ = 1 in the experiments. Howe ver , the step size of the original LPNN-LCA can only be 0.1 under the same situation, otherwise the system may not IEEE TRANSA CTIONS ON SIGNAL PROCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 6 0 20 40 60 80 −6 −4 −2 0 2 4 6 number of iterations x 0 20 40 60 80 −8 −6 −4 −2 0 2 4 6 8 number of iterations u 0 20 40 60 80 −4 −2 0 2 4 6 number of iterations λ 0 100 200 300 400 500 600 −6 −4 −2 0 2 4 6 8 signal index signal Original signal Recoveried signal from modified LPNN−LCA 0 10 20 30 40 50 60 70 80 −6 −4 −2 0 2 4 6 number of iterations x 0 10 20 30 40 50 60 70 80 −8 −6 −4 −2 0 2 4 6 8 number of iterations u 0 10 20 30 40 50 60 70 80 −4 −3 −2 −1 0 1 2 3 4 number of iterations λ 0 100 200 300 400 500 600 −6 −4 −2 0 2 4 6 8 signal index signal Original signal Recoveried signal from modified LPNN−LCA 0 10 20 30 40 50 60 70 80 −6 −4 −2 0 2 4 6 number of iterations x 0 10 20 30 40 50 60 70 80 −8 −6 −4 −2 0 2 4 6 8 number of iterations u 0 10 20 30 40 50 60 70 80 −4 −3 −2 −1 0 1 2 3 4 5 number of iterations λ 0 100 200 300 400 500 600 −6 −4 −2 0 2 4 6 8 signal index signal Original signal Recoveried signal from modified LPNN−LCA Fig. 2: Con ver gence of the proposed method when n = 512 . For the first row m = 100 and Ω = 15 . For the second ro w m = 130 and Ω = 20 . F or the third row m = 160 and Ω = 25 . 0 20 40 60 80 100 120 −8 −6 −4 −2 0 2 4 6 number of iterations x 0 20 40 60 80 100 120 −8 −6 −4 −2 0 2 4 6 8 number of iterations u 0 20 40 60 80 100 120 −6 −4 −2 0 2 4 6 number of iterations λ 0 1000 2000 3000 4000 5000 −6 −4 −2 0 2 4 6 8 signal index signal Original signal Recoveried signal from modified LPNN−LCA 0 20 40 60 80 100 120 −6 −4 −2 0 2 4 6 number of iterations x 0 20 40 60 80 100 120 −8 −6 −4 −2 0 2 4 6 8 number of iterations u 0 20 40 60 80 100 120 −6 −4 −2 0 2 4 6 number of iterations λ 0 1000 2000 3000 4000 5000 −6 −4 −2 0 2 4 6 8 signal index signal Original signal Recoveried signal from modified LPNN−LCA 0 20 40 60 80 100 120 −8 −6 −4 −2 0 2 4 6 number of iterations x 0 20 40 60 80 100 120 −8 −6 −4 −2 0 2 4 6 8 number of iterations u 0 20 40 60 80 100 120 −6 −4 −2 0 2 4 6 number of iterations λ 0 1000 2000 3000 4000 5000 −6 −4 −2 0 2 4 6 8 signal index signal Original signal Recoveried signal from modified LPNN−LCA Fig. 3: Con ver gence of the proposed method when n = 4096 . For the first row m = 500 and Ω = 75 . F or the second row m = 600 and Ω = 100 . F or the third row m = 700 and Ω = 125 . IEEE TRANSA CTIONS ON SIGNAL PROCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 7 0 0.1 0.2 0.3 0.4 0.5 10 −16 10 −14 10 −12 10 −10 10 −8 10 −6 10 −4 10 −2 10 0 time (sec) relative error of x L1Magic SPGL1 Homotopy LPNN−LCA IPNNSR modified LPNN−LCA (0.2122, 2.946e−16) (a) 0 0.05 0.1 0.15 0.2 0.25 0.3 0.35 0.4 10 −16 10 −14 10 −12 10 −10 10 −8 10 −6 10 −4 10 −2 10 0 time (sec) relative error of x L1Magic SPGL1 Homotopy LPNN−LCA IPNNSR modified LPNN−LCA (0.2122,3.057e−16) (b) 0 0.1 0.2 0.3 0.4 0.5 10 −16 10 −14 10 −12 10 −10 10 −8 10 −6 10 −4 10 −2 10 0 time (sec) relative error of x L1Magic SPGL1 Homotopy LPNN−LCA IPNNSR modified LPNN−LCA (0.2309,8.559e−16) (c) Fig. 4: Relativ e error of x x x with respect to the conv ergence time. (a) n = 512 , m = 150 , and Ω = 15 . (b) n = 512 , m = 150 , and Ω = 20 . (c) n = 512 , m = 150 , and Ω = 25 . . con verge. So the con ver gence time of the proposed method is less than the original LPNN-LCA framework. C. MSE performance In this e xperiment, we fix the length of the signal and the number of its nonzero elements. The MSE performances of the proposed method are in vestigated with dif ferent m . W e repeat the experiment 100 times with different measurement matrices, initial states and sparse signals. The results are given in Fig. 6. Where the x-axis denotes the number of elements in observation vector b b b , the y-axis is the mean square error (MSE) between the recovered signal and the original one. It 0 2 4 6 8 10 12 14 10 −16 10 −14 10 −12 10 −10 10 −8 10 −6 10 −4 10 −2 10 0 time (sec) relative error of x L1Magic SPGL1 Homotopy LPNN−LCA IPNNSR modified LPNN−LCA (0.195, 3.227e−16) (a) 0 5 10 15 20 25 10 −16 10 −14 10 −12 10 −10 10 −8 10 −6 10 −4 10 −2 10 0 time (sec) relative error of x L1Magic SPGL1 Homotopy LPNN−LCA IPNNSR modified LPNN−LCA (0.2324, 1.659e−16) (b) 0 5 10 15 20 25 30 35 10 −16 10 −14 10 −12 10 −10 10 −8 10 −6 10 −4 10 −2 10 0 time (sec) relative error of x L1Magic SPGL1 Homotopy LPNN−LCA IPNNSR modified LPNN−LCA (0.2512,3.627e−16) (c) Fig. 5: Relative error of x x x with respect to the con ver gence time. (a) n = 4096 , m = 800 , and Ω = 75 . (b) n = 4096 , m = 800 , and Ω = 100 . (c) n = 4096 , m = 800 , and Ω = 125 . . can be seen from the figures that as m increases, the MSEs of all algorithms decrease. In the same situation, the SPGL1 package and the homotopy method hav e larger MSEs. For other four approaches, their MSE performance is quite similar with each other . Even the proposed method is devised for solving the BP problem, it still works under low le vel Gaussian noise. The corresponding simulation results are giv en in Fig.7 and Fig.8. Their observations are generated by b b b = Φ x x x + , where = [ 1 , ..., m ] T denotes the vector of zero-mean IEEE TRANSA CTIONS ON SIGNAL PROCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 8 70 75 80 85 90 95 100 105 110 0 0.05 0.1 0.15 0.2 0.25 m: number of measurements MSE n = 512 with 15 non−zero data point L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 90 95 100 105 110 115 120 125 130 0 0.05 0.1 0.15 0.2 m: number of measurements MSE n = 512 with 20 non−zero data point L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 100 105 110 115 120 125 130 135 140 0 0.05 0.1 0.15 0.2 0.25 m: number of measurements MSE n = 512 with 25 non−zero data point L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA (a) (b) (c) 350 400 450 500 550 600 0 0.05 0.1 0.15 0.2 m: number of measurements MSE n = 4096 with 75 non−zero data point L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 450 500 550 600 650 700 0 0.05 0.1 0.15 0.2 m: number of measurements MSE n = 4096 with 100 non−zero data point L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 550 600 650 700 750 800 0 0.05 0.1 0.15 0.2 m: number of measurements MSE n = 4096 with 125 non−zero data point L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA (d) (e) (f) Fig. 6: MSE performances among the six methods. (a) n = 512 and Ω = 15 . (b) n = 512 and Ω = 20 . (c) n = 512 and Ω = 25 . (d) n = 4096 and Ω = 75 . (e) n = 4096 and Ω = 100 . (f) n = 4096 and Ω = 125 . 70 75 80 85 90 95 100 105 110 0 0.05 0.1 0.15 0.2 time (sec) MSE n = 512 with 15 non−zero data point σ = 0.001 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 90 95 100 105 110 115 120 125 130 0 0.05 0.1 0.15 0.2 time (sec) MSE n = 512 with 20 non−zero data point σ = 0.001 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 100 105 110 115 120 125 130 135 140 0 0.05 0.1 0.15 0.2 time (sec) MSE n = 512 with 25 non−zero data point σ = 0.001 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA (a) (b) (c) 350 400 450 500 550 600 0 0.05 0.1 0.15 0.2 m: number of measurements MSE n = 4096 with 75 non−zero data point σ = 0.001 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 450 500 550 600 650 700 0 0.05 0.1 0.15 0.2 m: number of measurements MSE n = 4096 with 100 non−zero data point σ = 0.001 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 550 600 650 700 750 800 0 0.05 0.1 0.15 0.2 m: number of measurements MSE n = 4096 with 125 non−zero data point σ = 0.001 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA (d) (e) (f) Fig. 7: MSE performances under Gaussian noise, where σ = 0 . 001 . (a) n = 512 and Ω = 15 . (b) n = 512 and Ω = 20 . (c) n = 512 and Ω = 25 . (d) n = 4096 and Ω = 75 . (e) n = 4096 and Ω = 100 . (f) n = 4096 and Ω = 125 . Gaussian noise. The standard de viation of the noise is denotes by σ . For the Fig.7, σ = 0 . 001 , while for Fig.8, σ = 0 . 01 . V I . C O N C L U S I O N This paper proposes a novel sparse approximation algorithm by combining LPNN, LCA and projection theorem. The main target of the proposed algorithm is to calculate a real-time IEEE TRANSA CTIONS ON SIGNAL PROCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 9 70 75 80 85 90 95 100 105 110 0 0.05 0.1 0.15 0.2 time (sec) MSE n = 512 with 15 non−zero data point σ = 0.01 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 90 95 100 105 110 115 120 125 130 0 0.05 0.1 0.15 0.2 time (sec) MSE n = 512 with 20 non−zero data point σ = 0.01 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 105 110 115 120 125 130 135 140 0 0.05 0.1 0.15 0.2 time (sec) MSE n = 512 with 25 non−zero data point σ = 0.01 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA (a) (b) (c) 350 400 450 500 550 600 0 0.05 0.1 0.15 0.2 m: number of measurements MSE n = 4096 with 75 non−zero data point σ = 0.01 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 450 500 550 600 650 700 0 0.05 0.1 0.15 0.2 m: number of measurements MSE n = 4096 with 100 non−zero data point σ = 0.01 L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA 550 600 650 700 750 800 0 0.02 0.04 0.06 0.08 0.1 0.12 0.14 0.16 0.18 0.2 n = 4096 with 125 non−zero data point σ = 0.01 MSE m: number of measurements L1Magic SPGL1 LPNN−LCA Homotopy IPNNSR modified LPNN−LCA (d) (e) (f) Fig. 8: MSE performances under Gaussian noise, where σ = 0 . 01 . (a) n = 512 and Ω = 15 . (b) n = 512 and Ω = 20 . (c) n = 512 and Ω = 25 . (d) n = 4096 and Ω = 75 . (e) n = 4096 and Ω = 100 . (f) n = 4096 and Ω = 125 . solution of the large-scale problem. For this purpose, this paper uses LPNN framework and introduces LCA to set up an approximate differentiable expression for the sub-differential at the non-dif ferentiable point. Referring to the dynamic of projection neural network, we further modify its dynamics. Thus the equilibrium point of the improved dynamics is equiv- alent to an optimal solution, and the proposed algorithm is globally stable in the sense of L yapunov . Besides, according to the simulation results, the MSE performance of the proposed algorithm is similar with se veral state-of-the-art methods and for large-scale problem it has obvious advantage in speed. In future work we will try to modify the dynamics of LPNN-LCA method for solving BPDN problem and prove its global con vergence. Besides, the modified dynamics may be helpful to improve the performance of the approximate l 0 - norm relev ant methods. R E F E R E N C E S [1] J. Wright, A. Y . Y ang, A. Ganesh, S. S. Sastry , and Y . Ma, “Robust face recognition via sparse representation, ” IEEE Tr ansactions on P attern Analysis and Machine Intelligence , vol. 31, no. 2, pp. 210–227, 2009. [2] F . Bach, J. Mairal, J. Ponce, and G. Sapiro, “Sparse coding and dictio- nary learning for image analysis, ” in Pr oceedings of IEEE International Confer ence on Computer V ision and P attern Recognition , 2010. [3] K. Huang and S. A viyente, “Sparse representation for signal classifica- tion, ” in Advances in Neural Information Pr ocessing Systems , 2006, pp. 609–616. [4] Y . Li, A. Cichocki, and S.-i. Amari, “ Analysis of sparse representation and blind source separation, ” Neural Computation , vol. 16, no. 6, pp. 1193–1234, 2004. [5] J. Wright, Y . Ma, J. Mairal, G. Sapiro, T . S. Huang, and S. Y an, “Sparse representation for computer vision and pattern recognition, ” Pr oceedings of the IEEE , vol. 98, no. 6, pp. 1031–1044, 2010. [6] D. L. Donoho, “For most large underdetermined systems of linear equations the minimal l 1 -norm solution is also the sparsest solution, ” Communications on Pur e and Applied Mathematics , vol. 59, no. 6, pp. 797–829, 2006. [7] E. Candes and J. Romberg, “l1-magic: Recovery of sparse signals via conve x programming, ” URL: www . acm. caltech. edu/l1magic/downloads/l1magic. pdf , vol. 4, p. 46, 2005. [8] E. van den Berg and M. P . Friedlander, “Probing the Pareto frontier for basis pursuit solutions, ” SIAM Journal on Scientific Computing , v ol. 31, no. 2, pp. 890–912, 2008. [9] E. van den Berg and M. P . Friedlander, “SPGL1: A solver for large-scale sparse reconstruction, ” June 2007, [Online]. A vailable: http://www .cs.ubc.ca/labs/scl/spgl1. [10] S. G. Mallat and Z. Zhang, “Matching pursuits with time-frequency dictionaries, ” IEEE T ransactions on Signal Pr ocessing , vol. 41, no. 12, pp. 3397–3415, 1993. [11] R. D. Now ak, S. J. Wright et al. , “Gradient projection for sparse reconstruction: Application to compressed sensing and other inv erse problems, ” IEEE J ournal of Selected T opics in Signal Pr ocessing , vol. 1, no. 4, pp. 586–597, 2007. [12] E. Candes and T . T ao, “The dantzig selector: Statistical estimation when p is much larger than n, ” The Annals of Statistics , pp. 2313–2351, 2007. [13] A. Balavoine, J. Romberg, and C. J. Rozell, “Conv ergence and rate anal- ysis of neural networks for sparse approximation, ” IEEE T ransactions on Neural Networks and Learning Systems , vol. 23, no. 9, pp. 1377–1389, 2012. [14] C. J. Rozell, D. H. Johnson, R. G. Baraniuk, and B. A. Olshausen, “Sparse coding via thresholding and local competition in neural circuits, ” Neural Computation , vol. 20, no. 10, pp. 2526–2563, 2008. [15] A. Cochocki and R. Unbehauen, Neural networks for optimization and signal pr ocessing . John Wile y and Sons, Inc., 1993. [16] J. J. Hopfield, “Neural networks and physical systems with emergent collectiv e computational abilities, ” Proceedings of the National Academy of Sciences , vol. 79, no. 8, pp. 2554–2558, 1982. [17] L. Chua and G.-N. Lin, “Nonlinear programming without computation, ” IEEE Tr ansactions on Circuits and Systems , vol. 31, no. 2, pp. 182–188, 1984. [18] S. Zhang and A. Constantinides, “Lagrange programming neural net- works, ” IEEE T ransactions on Circuits and Systems II: Analog and Digital Signal Pr ocessing , vol. 39, no. 7, pp. 441–452, 1992. IEEE TRANSA CTIONS ON SIGNAL PROCESSING, V OL. 1, NO. 1, SEPTEMBER 2018 10 [19] Y . Xia, H. Leung, and J. W ang, “ A projection neural network and its application to constrained optimization problems, ” IEEE T ransactions on Cir cuits and Systems I: Fundamental Theory and Applications , vol. 49, no. 4, pp. 447–458, 2002. [20] Y . Xia and J. W ang, “ A general projection neural network for solving monotone v ariational inequalities and related optimization problems, ” IEEE T ransactions on Neur al Networks , vol. 15, no. 2, pp. 318–328, 2004. [21] J. Liang, H. C. So, C. S. Leung, J. Li, and A. Farina, “W av eform design with unit modulus and spectral shape constraints via Lagrange programming neural network, ” IEEE J ournal of Selected T opics in Signal Pr ocessing , vol. 9, no. 8, pp. 1377–1386, 2015. [22] J. Liang, C. S. Leung, and H. C. So, “Lagrange programming neural network approach for tar get localization in distributed MIMO radar , ” IEEE T rans. Signal Pr ocess. , vol. 64, no. 6, pp. 1574–1585, Mar . 2016. [23] A. Balav oine, C. J. Rozell, and J. Romberg, “Global con ver gence of the locally competitiv e algorithm, ” in Digital Signal Pr ocessing W orkshop and IEEE Signal Pr ocessing Education W orkshop (DSP/SPE) . IEEE, 2011, pp. 431–436. [24] R. Feng, C.-S. Leung, A. G. Constantinides, and W .-J. Zeng, “Lagrange programming neural network for nondifferentiable optimization prob- lems in sparse approximation, ” IEEE transactions on neural networks and learning systems , 2017. [25] D. Kinderlehrer and G. Stampacchia, An introduction to variational inequalities and their applications . Siam, 1980, vol. 31. [26] Q. Liu and J. W ang, “ l 1 -minimization algorithms for sparse signal reconstruction based on a projection neural network, ” IEEE T ransactions on Neural Networks and Learning Systems , vol. 27, no. 3, pp. 698–707, 2016. [27] D. M. Malioutov , M. Cetin, and A. S. Willsk y , “Homotopy continuation for sparse signal representation, ” in Acoustics, Speech, and Signal Pr ocessing, 2005. Pr oceedings.(ICASSP’05). IEEE International Con- fer ence on , vol. 5. IEEE, 2005, pp. v–733. Hao W ang is currently pursuing Ph.D. degree from the Department of Electronic Engineering, City Uni- versity of Hong Kong. His current research interests include neural networks and machine learning. Ruibin Feng received the PhD. degree in electronic engineering from the City University of Hong K ong in 2017. His current research interests include Neural Networks and Machine Learning. Chi-Sing Leung recei ved the Ph.D. de gree from the Chinese University of Hong Kong, Hong Kong, in 1995. He is currently a Professor with the Department of Electronic Engineering, City Univ ersity of Hong K ong, Hong Kong. He has authored ov er 120 journal papers in the areas of digital signal processing, neural networks, and computer graphics. His cur- rent research interests include neural computing and computer graphics. Dr . Leung was a member of the Organizing Com- mittee of ICONIP2006. He received the 2005 IEEE T ransactions on Multime- dia Prize Paper A ward for his paper titled The Plenoptic Illumination Function in 2005. He was the Program Chair of ICONIP2009 and ICONIP2012. He is/was the Guest Editor of several journals, including Neural Computing and Applications, Neurocomputing, and Neural Processing Letters. He is a Governing Board Member of the Asian Pacific Neural Network Assembly (APNN A) and the V ice President of APNNA.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment