Accelerating Large-Scale Data Analysis by Offloading to High-Performance Computing Libraries using Alchemist

Apache Spark is a popular system aimed at the analysis of large data sets, but recent studies have shown that certain computations—in particular, many linear algebra computations that are the basis for solving common machine learning problems—are significantly slower in Spark than when done using libraries written in a high-performance computing framework such as the Message-Passing Interface (MPI). To remedy this, we introduce Alchemist, a system designed to call MPI-based libraries from Apache Spark. Using Alchemist with Spark helps accelerate linear algebra, machine learning, and related computations, while still retaining the benefits of working within the Spark environment. We discuss the motivation behind the development of Alchemist, and we provide a brief overview of its design and implementation. We also compare the performances of pure Spark implementations with those of Spark implementations that leverage MPI-based codes via Alchemist. To do so, we use data science case studies: a large-scale application of the conjugate gradient method to solve very large linear systems arising in a speech classification problem, where we see an improvement of an order of magnitude; and the truncated singular value decomposition (SVD) of a 400GB three-dimensional ocean temperature data set, where we see a speedup of up to 7.9x. We also illustrate that the truncated SVD computation is easily scalable to terabyte-sized data by applying it to data sets of sizes up to 17.6TB.

💡 Research Summary

The paper introduces Alchemist, a system that bridges Apache Spark with high‑performance MPI‑based linear algebra libraries, enabling data scientists to retain Spark’s ease of use while achieving HPC‑level computational speed. The motivation stems from the observation that many iterative linear algebra operations—such as singular value decomposition (SVD), principal component analysis (PCA), and the conjugate gradient (CG) method—are dramatically slower in Spark due to its bulk‑synchronous execution model, scheduler delays, and task‑level overheads. In contrast, MPI implementations of the same algorithms can be an order of magnitude faster, especially on data sets that reach terabyte scale.

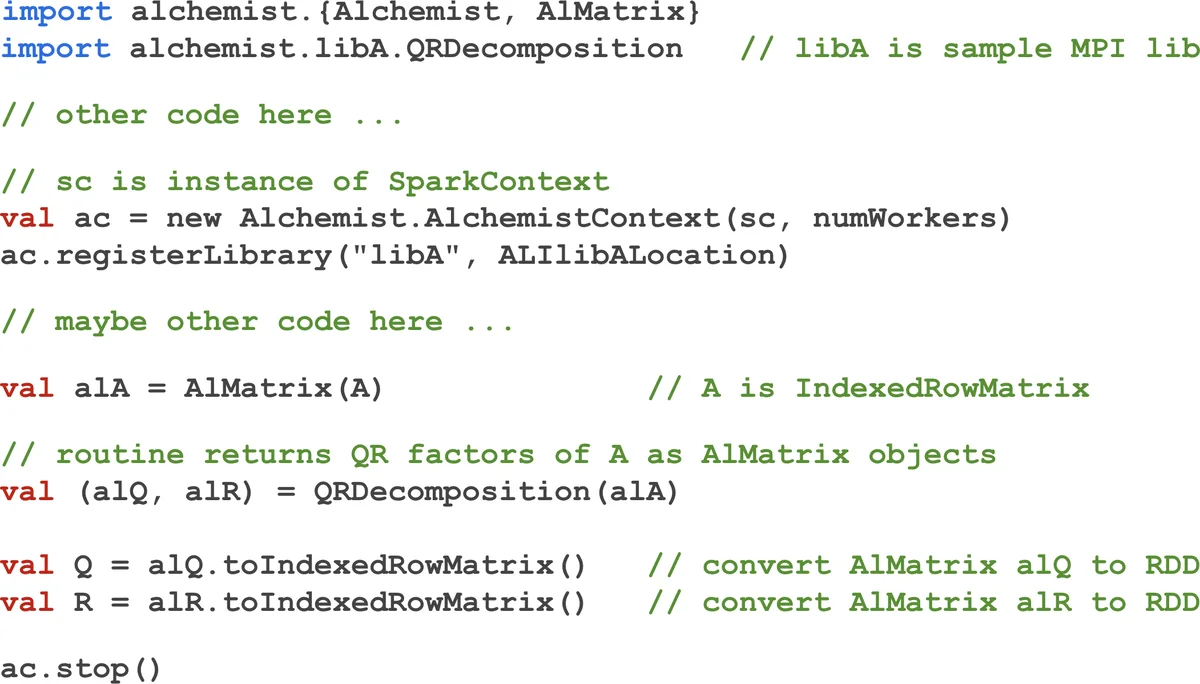

Alchemist’s architecture consists of three main components. The core Alchemist process runs as an MPI program with a driver and multiple worker processes. The Alchemist‑Client Interface (ACI) establishes asynchronous TCP socket connections between Spark executors and Alchemist workers, allowing direct, in‑memory transfer of distributed data without intermediate files or extra copies. Spark’s IndexedRowMatrix is streamed row‑by‑row as byte sequences; the receiving workers reconstruct the data into Elemental’s DistMatrix format, which provides a high‑performance distributed matrix abstraction. The Alchemist‑Library Interface (ALI) is a set of C/C++ shared objects that dynamically load the desired MPI library at runtime. When a Spark application requests a routine, Alchemist forwards the routine name and serialized arguments to the appropriate ALI, which invokes the MPI routine, serializes the results, and sends them back through the ACI. This design avoids the need for a bespoke wrapper for each library function and makes it easy to add new MPI libraries.

Related work such as Spark+MPI, Spark‑MPI, and Smart‑MLlib also attempted to combine Spark with MPI code, but they relied on file‑based I/O or shared‑memory mechanisms that incurred significant overhead and limited scalability, especially for dense, large‑scale data. Alchemist’s direct socket transfer and use of Elemental address these shortcomings.

The authors evaluate Alchemist on two representative workloads. First, a large‑scale speech classification task uses CG to solve a symmetric positive‑definite linear system; the Alchemist‑enabled MPI version achieves roughly a ten‑fold speedup over a pure Spark implementation. Second, a truncated SVD on a 400 GB three‑dimensional ocean‑temperature data set shows up to 7.9× acceleration compared with Spark’s MLlib SVD. Even accounting for the data‑transfer cost (≈10–15 % of total runtime), Alchemist remains substantially faster. Moreover, the same SVD code scales to a 17.6 TB data set, demonstrating near‑linear scalability when increasing the number of MPI workers and network bandwidth.

The paper acknowledges current limitations: Alchemist presently supports only dense matrices, lacks built‑in fault tolerance for the MPI side, and does not automatically manage resource elasticity. Future work includes extending support to sparse data structures, integrating checkpointing and recovery mechanisms, and tighter coupling with Spark’s resource manager to enable dynamic scaling of MPI workers.

In summary, Alchemist provides a practical, high‑performance bridge that lets Spark users offload computationally intensive linear algebra kernels to mature MPI libraries with minimal code changes. By handling data movement efficiently and loading libraries at runtime, it delivers order‑of‑magnitude speedups on real‑world, terabyte‑scale workloads while preserving the productivity advantages of the Spark ecosystem. This makes Alchemist a valuable tool for data scientists and engineers tackling large‑scale machine‑learning and scientific‑computing problems.

Comments & Academic Discussion

Loading comments...

Leave a Comment