Can DNNs Learn to Lipread Full Sentences?


Authors: George Sterpu, Christian Saam, Naomi Harte

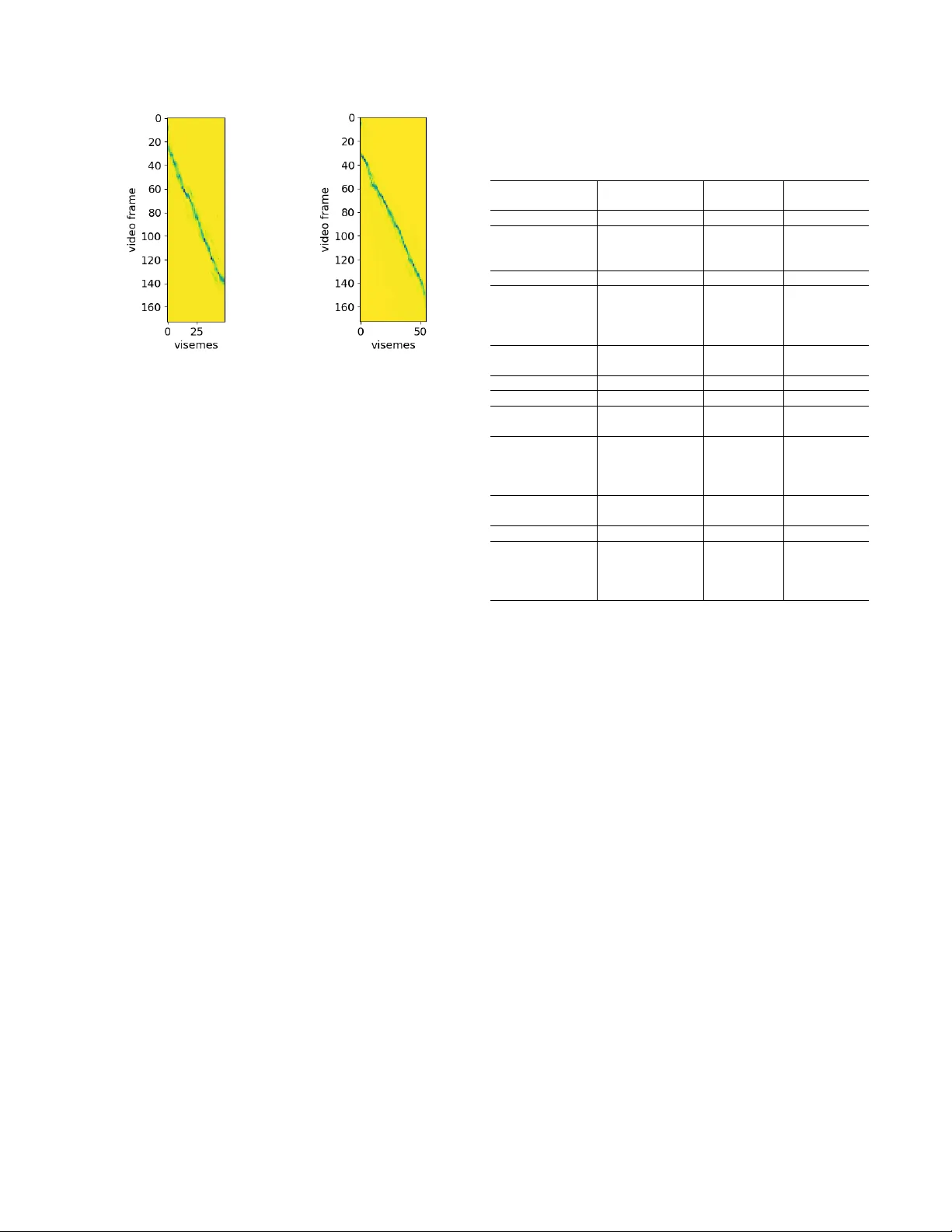

Sigmedia, ADAPT Centre, School of Engineering, Trinity College Dublin, Ireland

ABSTRACT

Finding visual features and suitable models for lipreading tasks that are more complex than a well-constrained vocabulary has proven challenging. This paper explores state-of-the-art Deep Neural Network architectures for lipreading based on a Sequence to Sequence Recurrent Neural Network. We report results for both hand-crafted and 2D/3D Convolutional Neural Network visual front-ends, online monotonic attention, and a joint Connectionist Temporal Classification-Sequence-to-Sequence loss. The system is evaluated on the publicly available TCD-TIMIT dataset, with 59 speakers and a vocabulary of over 6000 words. Results show a major improvement over a Hidden Markov Model framework. A fuller analysis of performance across visemes demonstrates that the network is not only learning the language model, but actually learning to lipread.

Index Terms: Lipreading, Sequence to Sequence Recurrent Neural Networks, TCD-TIMIT

1. INTRODUCTION

Automatic lipreading of continuous, large-vocabulary speech is a promising technology with many applications, recovering the information in speech from a modality other than the acoustic one. Traditional approaches have largely followed early work in speech recognition, using hand-crafted features and Hidden Markov Models (HMMs). These have so far been unsuccessful at modelling the complex patterns of visual speech [1, 2, 3, 4, 5], and several research problems, such as finding good representations, remain open in the lipreading community.

The Sequence to Sequence Recurrent Neural Network (Seq2seq RNN) architecture has seen a surge in popularity since it was first introduced in [6] for machine translation.
It has an elegant formulation, makes minimal assumptions about the modelled sequences, requires less domain knowledge and has obtained competitive results on many benchmarks. Together with the Connectionist Temporal Classification (CTC) method [7], it represents one of the two main end-to-end trainable approaches for transcribing temporal patterns. In this work we prefer Seq2seq for its additional property of implicitly learning a language model, as CTC performance is limited by the conditional independence of its predictions [7].

Supported by the ADAPT Centre for Digital Content Technology, which is funded under the SFI Research Centres Programme (Grant 13/RC/2106) and is co-funded under the European Regional Development Fund.

Several recent advancements in machine learning have not yet been explored by the lipreading community. These include monotonic attention [8] and the joint CTC-Sequence loss [9]. In addition, several successful applications in Automatic Speech Recognition (ASR) [10, 11, 12, 13], using both medium-sized (TIMIT, WSJ) and large (Google Speech Commands) datasets, give us useful insights from a different, though correlated, modality. Yet experience has shown that techniques successful for audio-only speech recognition do not automatically translate well to a lipreading task [1, 2, 3, 4, 5]. Thus our contribution is an exploration of state-of-the-art Seq2seq techniques within the domain of lipreading, to determine which approaches hold the greatest potential in this domain and to identify where further challenges remain.

There are, to the best of our knowledge, only two papers in the literature to date that address the problem of lipreading at the sub-word level using DNNs. The first [14] uses a spatio-temporal Convolutional Neural Network (CNN) and the CTC loss in order to produce a phonetic transcription of the input sentence.
However, that model was applied to a low-perplexity dataset, GRID [15], where it can be argued that the model can rely heavily on the predictable structure of the sentences. In addition, the CTC loss has its own shortcomings due to the independence assumption for the predicted labels. We address this by testing the algorithms on TCD-TIMIT [1], a dataset of phonetically balanced sentences with a vocabulary of approximately 6000 words. The second paper [16] makes use of a spatial-only CNN, and applies the Seq2seq network to produce sentence transcriptions at the character level. The dataset used for evaluation, LRS, is larger than TCD-TIMIT but not public, and has recently been superseded by a more challenging, public version, MV-LRS. Our work differs from these two by making predictions at the viseme level, which is a unit choice that avoids ambiguities. In this way, the language model does not have to be well trained in advance, as in [16]. In addition, we explore a wider range of architectures, including both hand-crafted and 2D/3D CNN visual front-ends, online monotonic attention and a joint CTC-Seq2seq loss.

The paper is organised as follows: Section 2 describes the general network architecture, Section 3 presents our experiments, and Section 4 discusses our findings.

2. MODEL ARCHITECTURE

Our lipreading pipeline has a video processing front-end and a Seq2seq RNN, learning from variable-length videos and producing variable-length transcriptions at the viseme level. The system is trained end-to-end.

2.1. Visual front-end

The visual front-end involves segmenting the lip region from a visual stream and computing a feature vector for each frame. We consider both hand-crafted features and learnt CNN-based visual representations. At this stage, we can also take advantage of the temporal dimension by appending derivatives or by using 3D convolution kernels.

2.2. Sequence modelling

Next, the extracted visual features are fed to a Seq2seq model, which consists of two RNNs termed the encoder and the decoder. With each input timestep, the encoder updates its internal state and produces one output. We collect all the outputs in a memory and also retain the final state, known as a thought vector, which summarises the input sentence. The decoder is initialised from the thought vector and starts producing output symbols from a designated start-of-sentence token until it finally produces an end-of-sentence symbol. As the whole temporal dimension is compressed into a single fixed-size thought vector, the decoder is allowed to peek into the memory and soft-select the vectors that are correlated with its current internal state. This mechanism is known as attention, and the soft-selection temporal pattern is called alignment.

With speech signals, enforcing this alignment to be monotonic with respect to the encoded inputs may alleviate the problem of attending to repetitions of a word in the same sentence. The impact may be more significant for visemes, where the number of classes is typically much lower than for phonemes or characters. In addition, scanning only the past history of the memory enables online application of the lipreading system, further reducing the time complexity. We consider the implementation of [8], which was shown to outperform related strategies with a minimal loss in accuracy relative to the softmax attention baseline.

2.3. Training and decoding

In the training stage, the entire transcription is available to the decoder. The embedding of the ground-truth symbol is fed at every time step, but from time to time we replace it with the previously decoded symbol in order to make the network more robust at recovering from its own mistakes.
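The training-time input scheme just described, where the ground-truth symbol is occasionally replaced by the decoder's own previous prediction, is closely related to what is often called scheduled sampling. The Python sketch below is a minimal illustration under stated assumptions, not the authors' implementation: `step_fn` is a hypothetical stand-in for one decoder step, the sampling probability is held fixed (the paper does not say how often the substitution happens), and the viseme labels are placeholders. Note that the loop always runs for exactly as many steps as the ground truth has symbols.

```python
import random
from typing import Callable, List, Optional, Sequence, Tuple

# Hypothetical decoder step: (previous symbol, state) -> (predicted symbol, new state).
StepFn = Callable[[str, Optional[object]], Tuple[str, Optional[object]]]


def unroll_with_sampling(ground_truth: Sequence[str],
                         step_fn: StepFn,
                         sampling_prob: float,
                         rng: random.Random,
                         start_token: str = "<s>") -> Tuple[List[str], List[str]]:
    """Unroll a decoder for exactly len(ground_truth) training steps.

    Returns (predictions, fed_inputs). Because the number of steps is fixed by
    the ground truth, predictions align one-to-one with the targets.
    """
    prev, state = start_token, None
    predictions: List[str] = []
    fed_inputs: List[str] = []
    for target in ground_truth:
        fed_inputs.append(prev)
        pred, state = step_fn(prev, state)
        predictions.append(pred)
        # Teacher forcing by default; with probability `sampling_prob`,
        # feed back the decoder's own prediction instead of the target.
        prev = pred if rng.random() < sampling_prob else target
    return predictions, fed_inputs


if __name__ == "__main__":
    # Toy decoder that always predicts the (hypothetical) viseme label "V1".
    def always_v1(prev, state):
        return "V1", state

    targets = ["sil", "V3", "V7", "sil"]
    _, fed = unroll_with_sampling(targets, always_v1, 0.0, random.Random(0))
    print(fed)  # ['<s>', 'sil', 'V3', 'V7']  (pure teacher forcing)
    _, fed = unroll_with_sampling(targets, always_v1, 1.0, random.Random(0))
    print(fed)  # ['<s>', 'V1', 'V1', 'V1']  (always own predictions)
```

In a real system the substitution probability is usually annealed over training rather than fixed; the fixed value here only keeps the sketch deterministic under a seeded generator.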
This training process implies that the predicted output transcription has the same length as the ground-truth transcription, so a cross-entropy loss function can be applied. In the evaluation stage, the ground-truth transcription cannot be used, and the decoder is likely to produce a transcription of a different length. Hence, we evaluate the quality of the prediction by computing the Levenshtein edit distance with respect to the ground truth.

Combining the Seq2seq cross-entropy loss with the CTC loss could lead to several benefits. First, the CTC loss forces the encoder to focus better on the input signal, as the encoder tends to become "lazy" due to the power of the implicitly learnt language model on the decoding side. In addition, the encoder should now learn representations that are more closely related to the class labels, as CTC first predicts a class for each frame, and only later merges the repeated symbols.

3. EVALUATION

3.1. Dataset

We performed our experiments on TCD-TIMIT [1], a publicly available audio-visual dataset with 59 subjects, each reciting 98 phonetically balanced sentences from a vocabulary of 6000 words, totalling around 8 hours of recordings. Sentences vary from 10 to 65 visemes in length. Evaluation was done on the speaker-dependent protocol of [1], choosing 67 and 31 sentences from each speaker for train and test respectively. The dialect-dependent sentences (whose names begin with SA) were removed. As in the original TIMIT database, these two sentences were common across all speakers. Early results demonstrated that the models quickly learned the structure of these sentences, giving misleadingly high performance. Our labels are the same viseme-level transcriptions as in [1], which were obtained from a phonetic transcription by mapping phonemes into 12 viseme clusters.

3.2. Setup

Visual features.
As the lip region coordinates were already provided in [1, 2], we used them to crop this region from the video frames, downsampled it to 36x36 pixels and converted it to greyscale as a preprocessing step. We first considered hand-crafted features and kept 44 low-frequency coefficients of the 2D DCT of the lip region, plus their first two derivatives, as in [1, 2]. To check the impact of the implicitly learnt language model alone, we also present the results in the absence of a visual stream by replacing the features with zeros.

Next, we tested multiple CNN architectures on the previously cropped region, additionally using a 36x36 RGB version and a 64x64 grey one to check the benefits of color and a larger window size. Our 2D CNNs have 4 layers with 16, 32, 64 and 128 feature detectors respectively, a small 3x3 convolution kernel and rectified linear activations. After the first layer, our convolutions use a stride of 2 to reduce the dimensionality. The activations of the last layer are flattened and fully connected to a new layer of 128 units, producing our frame-wise feature vectors. The 3D CNN has the same structure, differing only in the use of a 3x3x3 convolution kernel.

Encoder-decoder RNN. For our Seq2seq model we start with two unidirectional recurrent layers of 128 Long Short-Term Memory (LSTM) cells each, for both the encoder and the decoder. The one-layer version did not perform well, and we do not report these results. However, we test a one-layer bidirectional LSTM (BiLSTM) version, processing the sentence in both the forward and backward directions, while maintaining the same number of parameters. Decoding was performed using a beam search strategy with a width of 4.

Attention. Our default attention mechanism was the Luong [17] version with the energy term scaled, and we obtained significantly worse results with the more popular Bahdanau attention style [18].
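As a rough illustration of multiplicative (Luong-style) attention with a scaled energy term, the Python sketch below computes one decoder step: the energy of each memory vector is its dot product with the decoder state, multiplied by a scalar; a softmax turns the energies into an alignment, which then weights the memory into a context vector. Assumptions, not taken from the paper: the dot-product score variant is used (Luong's "general" variant inserts a learned matrix), the memory vectors are taken as already matching the decoder state size, and `scale` is a fixed number here, whereas in a trained model the scaling factor would typically be a learned parameter.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax (subtracts the maximum before exponentiating)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_attention(query: Vector,
                         memory: Sequence[Vector],
                         scale: float = 1.0) -> Tuple[List[float], List[float]]:
    """One decoder step of multiplicative (Luong-style, dot variant) attention.

    energy_i  = scale * <query, memory_i>
    alignment = softmax(energy)
    context   = sum_i alignment_i * memory_i
    """
    energies = [scale * sum(q * m for q, m in zip(query, mem)) for mem in memory]
    alignment = softmax(energies)
    dim = len(memory[0])
    context = [sum(a * mem[k] for a, mem in zip(alignment, memory)) for k in range(dim)]
    return alignment, context


if __name__ == "__main__":
    # Three encoder outputs (the memory) and one decoder state, all 2-dimensional.
    memory = [[3.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
    query = [1.0, 0.0]
    for g in (1.0, 5.0):
        alignment, context = scaled_dot_attention(query, memory, scale=g)
        print(g, [round(a, 3) for a in alignment], [round(c, 3) for c in context])
```

Increasing `scale` makes the alignment more peaked around the highest-scoring encoder frame, while the softmax keeps the weights summing to one.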
We also tested the online monotonic attention strategy of [8]. To make it work, we found it essential to turn off the pre-sigmoid noise and set the scalar bias to a negative value.

Joint CTC-Seq2seq loss. As the Seq2seq language model exhibits a strong early influence in training, we try adding a CTC loss over the encoder's outputs, inserting a softmax layer over the vocabulary size plus 1 (the CTC blank symbol), and training jointly with the cross-entropy loss on the decoder side. Since [9] obtained the best results with a mixing coefficient of 0.2 for the CTC loss, we only consider this case here.

3.3. Practical aspects

Input pipeline. We noticed a consistent improvement when randomly shuffling the training files at each pass over the dataset. Grouping sentences of similar lengths together, a concept known as bucketing, leads to less zero padding in batches, noticeably reducing the RNN processing time. Our bucket width was 15 frames, or approximately 0.5 seconds.

Regularisation. We generally obtained good results with dropout applied to the recurrent cells [19], keeping the inputs, the states and the outputs with a probability of 0.9. For the best results with the CNN architectures, we interleaved dropout layers with a rate of 50% between convolutions. We also applied L2-norm regularisation on the recurrent and the convolutional weights, scaled by 0.0001 and 0.01 respectively. We enable gradient clipping to a maximum norm of 10.0, and we also clip the LSTM cell values between -10.0 and 10.0.

4. DISCUSSION AND CONCLUSION

The results of our study are shown in Table 1. We first observe a massive improvement over the HMM baseline. However, a large part of it is owed to the implicitly learnt RNN-based language model, as hypothesised in [12] and revealed by system D. In comparison, a bi-gram language model only increased the accuracy of the HMM system A to 35% [2] on the same dataset, using the same DCT features.
Looking at the predictions, we note that the model quickly learns to output only two visemes in an interleaved pattern, surrounded by the silence visemes delimiting the start and the end of each sentence. These correspond to the Lips relaxed narrow opening and Tongue up or down classes, and together they account for 52.56% of the occurrences in the TCD-TIMIT scripts. Since the scripts were phonetically balanced, the viseme distribution only reflects a natural speech pattern. We observed this behaviour in all our experiments, with the model typically taking at least 100 iterations before its predictions start to look diverse. This suggests that the language model might slow down training convergence, as the system will learn the patterns from the input signal more slowly.

Table 1. Lipreading accuracy on TCD-TIMIT. The right column shows the number of iterations needed to reach convergence (or nc for no convergence).

| Feature | Accuracy | Iters |
|---|---|---|
| A. DCT + HMM baseline [2] | 31.59% | - |
| B. AAM + HMM baseline [2] | 25.28% | - |
| C. Eigenlips + DNN-HMM [4] | 46.61% | - |
| D. zeros + LSTMs | 45.87% | 160 |
| E. DCT + LSTMs | 61.52% | 250 |
| F. DCT + BiLSTMs | 60.72% | 180 |
| G. E w/o attention | 48.29% | 270 |
| H. E w/ monotonic attention | 61.58% | 170 |
| I. DCT + joint CTC-Seq2seq | 61.18% | 180 |
| J. 2D CNN + LSTMs | - | nc |
| K. 2D CNN + BiLSTMs | 66.27% | 400 |
| L. J on RGB + joint CTC-Seq2seq | 66.20% | 150 |
| M. J on 64x64 + joint CTC-Seq2seq | - | nc |
| N. Gray 3D CNN + LSTMs | - | nc |
| O. 2D CNN + joint CTC-Seq2seq | 64.61% | 260 |

The use of DCT features with a Seq2seq model led to a substantial improvement over the state of the art on the TCD-TIMIT dataset [4]. There is a noticeable boost in convergence speed from unidirectional to bidirectional LSTMs, yet it does not always translate into higher accuracy, as demonstrated by E and F. This could be explained by the fact that two single-layer networks are less powerful than a single two-layer variant.
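The comparison between systems E and F rests on the setup statement that the one-layer BiLSTM keeps the same number of parameters as the two-layer unidirectional LSTM. The short Python calculation below checks this for the encoder under two assumptions that are not stated in the paper: a standard LSTM layer with four gates, no peephole connections and one bias vector per gate (4n(d + n + 1) parameters for input size d and n cells), and 128 cells per BiLSTM direction.

```python
def lstm_layer_params(input_dim: int, units: int) -> int:
    """Parameters of one LSTM layer: 4 gates, each with input weights,
    recurrent weights and a bias vector (no peephole connections)."""
    return 4 * units * (input_dim + units + 1)


def stacked_lstm_params(input_dim: int, units: int, layers: int) -> int:
    """Unidirectional stack: every layer after the first sees `units` inputs."""
    total, d = 0, input_dim
    for _ in range(layers):
        total += lstm_layer_params(d, units)
        d = units
    return total


def bilstm_params(input_dim: int, units_per_direction: int) -> int:
    """One bidirectional layer: forward and backward LSTMs with separate weights."""
    return 2 * lstm_layer_params(input_dim, units_per_direction)


# 128-d CNN features vs. 132-d DCT features (44 coefficients + 2 derivatives).
for name, d in [("CNN features", 128), ("DCT + derivatives", 3 * 44)]:
    uni = stacked_lstm_params(d, units=128, layers=2)
    bi = bilstm_params(d, units_per_direction=128)
    print(f"{name} (d={d}): 2-layer LSTM {uni}, 1-layer BiLSTM {bi}")
# CNN features (d=128): 2-layer LSTM 263168, 1-layer BiLSTM 263168
# DCT + derivatives (d=132): 2-layer LSTM 265216, 1-layer BiLSTM 267264
```

Under these assumptions the counts match exactly for 128-dimensional CNN features and differ by less than 1% for the 132-dimensional DCT features, so the gap between E and F is more plausibly a matter of depth than of capacity.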
We tried another variant with two bidirectional LSTM layers, which did not improve the performance.

As noted by [16], the attention-less system G could not learn meaningful patterns from the input, predicting a similar transcription for most sentences. This could imply that either the temporal information vanishes during encoding, or the decoding process relies heavily on the language model. The attention-based system E alleviates these issues, obtaining an absolute 13.23% improvement over this variant.

Replacing the Luong-style softmax attention with the monotonic attention of [8] keeps the performance at the same level. This is also reflected in the alignments in Figure 1, where the softmax attention learns to align monotonically, producing a sharp peak in the weight distribution.

Fig. 1. Typical alignments learnt by our systems (panels: System K, System H).

Consequently, enforced monotonic attention would represent a suitable choice for lipreading, further reducing the time complexity and enabling online decoding. Cited as a possible extension in [16], our benchmark shows the first successful application of online monotonic attention to lipreading.

The use of 2D-CNN features led to an additional ≈5% absolute improvement over the best performing DCT-based system, as is the case with system K. In this case, using BiLSTMs was crucial to prevent the system from getting stuck in a local minimum, as happened with J. However, our experiments on images of increased resolution (64x64) and with 3D convolutions did not reach convergence, showing the limits of a shallow CNN architecture.

The use of the joint CTC-Seq2seq loss function significantly accelerates the training process. However, in our case, the test set accuracy was lower than for the cross-entropy loss function alone. The impact of the CTC loss may be twofold.
It enforces a frame-wise classification on the encoder's outputs, which could lead to better gradients for the CNN layers. This is demonstrated by the performance achieved with systems L and O, which could not converge without the additional CTC loss. On the other hand, the two loss functions could have competing requirements for the state representation, and a proper weighting may be vital for optimal performance, as shown in [9].

In the alignments produced by the decoder, we observe that they tend to become fuzzy towards the end of the sentence, sometimes resembling a river delta. This suggests that the thought vector is quite good at summarising the recent past, and the attention is mainly needed to boost the decoding of early events. We hypothesise that a different division of duties between the thought vector and the attention, where the former encodes a rather short history and the latter attends to more distant key frames, could enhance the overall performance.

We have compared the viseme confusion matrices of systems A, the DCT + HMM baseline, and K, our top performing DNN-based lipreading system. Table 2 shows the relative performance increase across the viseme classes for these two systems. The table also shows the TIMIT phonemes mapped to each viseme class and their visibility, or ease of observation for a human.

Table 2. Viseme accuracy of the best DNN system K and relative change from the HMM baseline (A). Visemes sorted by decreasing visibility.

| Viseme | TIMIT phonemes | Accuracy K [%] | Δ Accuracy K - A [%] |
|---|---|---|---|
| Lips to teeth | /f/ /v/ | 85.6 | 21.25 |
| Lips puckered | /er/ /ow/ /r/ /q/ /w/ /uh/ /uw/ /axr/ /ux/ | 83.4 | 50.81 |
| Lips together | /b/ /p/ /m/ /em/ | 94.8 | 30.40 |
| Lips relaxed moderate opening to lips narrow-puckered | /aw/ | 45.7 | 25.90 |
| Tongue between teeth | /dh/ /th/ | 58.4 | 27.79 |
| Lips forward | /ch/ /jh/ /sh/ /zh/ | 65.4 | 18.26 |
| Lips rounded | /oy/ /ao/ | 31.6 | -8.41 |
| Teeth approximated | /s/ /z/ | 81.6 | 52.24 |
| Lips relaxed narrow opening | /aa/ /ae/ /ah/ /ay/ /ey/ /ih/ /iy/ /y/ /eh/ /ax-h/ /ax/ /ix/ | 95.6 | 73.50 |
| Tongue up or down | /d/ /l/ /n/ /t/ /el/ /nx/ /en/ /dx/ | 84.8 | 56.17 |
| Tongue back | /g/ /k/ /ng/ /eng/ | 63.2 | 24.41 |
| Silence | /sil/ /pcl/ /tcl/ /kcl/ /bcl/ /dcl/ /gcl/ /h#/ /#h/ /pau/ /epi/ | 93.6 | 0.21 |

The improvement from A to K is ubiquitous, with the exception of a single viseme corresponding to the Lips rounded shape. This viseme is most frequently confused with the Lips relaxed narrow opening viseme, suggesting that it is difficult even for the CNN to learn features that disambiguate them. Smaller improvements are seen for Lips forward and Tongue back. The frontal view used as input does not capture any depth information; however, the database includes a second view at 30° which could be useful for such visemes.

Overall, the Seq2seq model greatly outperforms HMM and hybrid DNN-HMM systems even without CNN-based feature extraction. The fully neural architectures achieved the highest accuracies in our experiments. Additionally, the use of the joint loss function boosted training convergence and enabled learning visual features on higher-dimensional inputs.
Lastly, we demonstrate the efficiency of online monotonic attention on this task, a necessary step towards online decoding.

5. ACKNOWLEDGEMENTS

We are grateful to Eugene Brevdo, Marco Forte, Oriol Vinyals and Li Deng for their helpful comments and suggestions.

6. REFERENCES

[1] Naomi Harte and Eoin Gillen, "TCD-TIMIT: An audio-visual corpus of continuous speech," IEEE Transactions on Multimedia, vol. 17, no. 5, pp. 603–615, May 2015.
[2] George Sterpu and Naomi Harte, "Towards lipreading sentences using active appearance models," in AVSP, Stockholm, Sweden, August 2017.
[3] Kwanchiva Thangthai and Richard Harvey, "Improving computer lipreading via DNN sequence discriminative training techniques," in The 18th Annual Conference of the International Speech Communication Association (Interspeech 2017), May 2017.
[4] Kwanchiva Thangthai, Helen L Bear, and Richard Harvey, "Comparing phonemes and visemes with DNN-based lipreading," in Workshop on Lip-Reading using deep learning methods, BMVC 2017, 2017.
[5] G. Potamianos, C. Neti, G. Gravier, A. Garg, and A. W. Senior, "Recent advances in the automatic recognition of audiovisual speech," Proceedings of the IEEE, vol. 91, no. 9, pp. 1306–1326, Sept 2003.
[6] Ilya Sutskever, Oriol Vinyals, and Quoc V Le, "Sequence to sequence learning with neural networks," in Advances in Neural Information Processing Systems 27, Z. Ghahramani, M. Welling, C. Cortes, N. D. Lawrence, and K. Q. Weinberger, Eds., pp. 3104–3112. Curran Associates, Inc., 2014.
[7] Alex Graves, Santiago Fernández, Faustino Gomez, and Jürgen Schmidhuber, "Connectionist temporal classification: Labelling unsegmented sequence data with recurrent neural networks," in Proceedings of the 23rd International Conference on Machine Learning, New York, NY, USA, 2006, ICML '06, pp. 369–376, ACM.
[8] Colin Raffel, Minh-Thang Luong, Peter J. Liu, Ron J. Weiss, and Douglas Eck, "Online and linear-time attention by enforcing monotonic alignments," in Proceedings of the 34th International Conference on Machine Learning, Doina Precup and Yee Whye Teh, Eds., International Convention Centre, Sydney, Australia, 06–11 Aug 2017, vol. 70 of Proceedings of Machine Learning Research, pp. 2837–2846, PMLR.
[9] S. Kim, T. Hori, and S. Watanabe, "Joint CTC-attention based end-to-end speech recognition using multi-task learning," in 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), March 2017, pp. 4835–4839.
[10] Jan Chorowski, Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio, End-to-end continuous speech recognition using attention-based recurrent NN: First results, 2014.
[11] Jan K Chorowski, Dzmitry Bahdanau, Dmitriy Serdyuk, Kyunghyun Cho, and Yoshua Bengio, "Attention-based models for speech recognition," in Advances in Neural Information Processing Systems 28, C. Cortes, N. D. Lawrence, D. D. Lee, M. Sugiyama, and R. Garnett, Eds., pp. 577–585. Curran Associates, Inc., 2015.
[12] D. Bahdanau, J. Chorowski, D. Serdyuk, P. Brakel, and Y. Bengio, "End-to-end attention-based large vocabulary speech recognition," in 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), March 2016, pp. 4945–4949.
[13] W. Chan, N. Jaitly, Q. Le, and O. Vinyals, "Listen, attend and spell: A neural network for large vocabulary conversational speech recognition," in 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), March 2016, pp. 4960–4964.
[14] Yannis M. Assael, Brendan Shillingford, Shimon Whiteson, and Nando de Freitas, "LipNet: Sentence-level lipreading," vol. abs/1611.01599, 2016.
[15] Martin Cooke, Jon Barker, Stuart Cunningham, and Xu Shao, "An audio-visual corpus for speech perception and automatic speech recognition," The Journal of the Acoustical Society of America, vol. 120, no. 5, pp. 2421–2424, 2006.
[16] Joon Son Chung, Andrew Senior, Oriol Vinyals, and Andrew Zisserman, "Lip reading sentences in the wild," in The IEEE Conference on Computer Vision and Pattern Recognition (CVPR), July 2017.
[17] Minh-Thang Luong, Hieu Pham, and Christopher D. Manning, "Effective approaches to attention-based neural machine translation," CoRR, vol. abs/1508.04025, 2015.
[18] Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio, "Neural machine translation by jointly learning to align and translate," CoRR, vol. abs/1409.0473, 2014.
[19] Wojciech Zaremba, Ilya Sutskever, and Oriol Vinyals, "Recurrent neural network regularization," CoRR, vol. abs/1409.2329, 2014.
