Performance Evaluation in High-Speed Networks by the Example of Intrusion Detection

Purchase decisions for devices in high-throughput networks, as well as scientific evaluations of algorithms and technologies, need to be based on measurements and clear procedures. Evaluating network devices and their performance in high-throughput networks is therefore an important part of research. In this paper, we document our approach and demonstrate its applicability in an evaluation of two of the most well-known and widely used open source intrusion detection systems, Snort and Suricata. We used a hardware network testing setup to ensure a realistic environment and documented our testing procedure. Our work focuses on detection accuracy, especially its dependence on bandwidth. We would like to pass on our experiences and considerations.

💡 Research Summary


The paper presents a systematic methodology for evaluating intrusion detection systems (IDS) in high‑throughput network environments and applies it to two widely used open‑source IDS: Snort (v2.9.9) and Suricata (v3.2.1). Recognizing that 10 Gbps Ethernet has become commonplace in enterprise and academic networks, the authors argue that performance measurements under realistic conditions are essential for both procurement decisions and scientific research.

Testbed Architecture

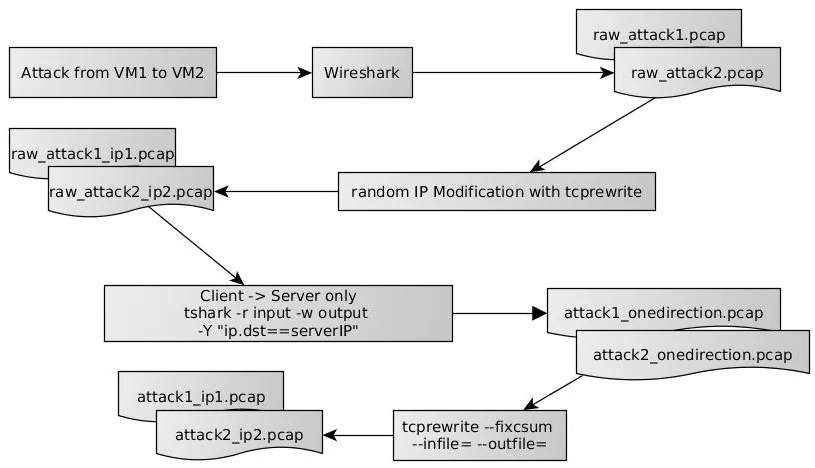

A dedicated off‑the‑shelf server with a 4‑core 3.1 GHz CPU and 6 GB of RAM serves as the IDS platform. It is connected via 10 Gbps NICs to two additional machines that emulate an external network (traffic generator) and an internal network (traffic receiver). The traffic generator runs Kali Linux and Metasploit to produce a variety of malicious payloads, while benign background traffic is generated with iperf3. The attack mix is derived from a McAfee Labs threat report and includes browser‑based exploits, SSH brute‑force attempts, DoS floods, SSL attacks, port scans, DNS spoofing, and backdoors, which together represent 91% of observed Internet attacks. Captured attack traces are replayed with tcpreplay from a RAM disk, so that disk I/O does not limit the replay, at precisely controlled rates from 1 Gbps to 7 Gbps.
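To make the rate-controlled replay concrete, the sketch below builds one tcpreplay command line per tested throughput level. It only uses the standard tcpreplay options `--intf1`, `--mbps`, and `--loop`; the interface name `eth1` and the RAM-disk path `/mnt/ramdisk/attack_trace.pcap` are hypothetical placeholders, not values from the paper, and the helper `tcpreplay_argv` is illustrative.

```python
from pathlib import PurePosixPath

# Hypothetical names: the summary does not give the interface or trace path.
IFACE = "eth1"
RAMDISK_TRACE = PurePosixPath("/mnt/ramdisk/attack_trace.pcap")


def tcpreplay_argv(rate_gbps: float, iface: str = IFACE,
                   trace: PurePosixPath = RAMDISK_TRACE,
                   loops: int = 1) -> list[str]:
    """Build a tcpreplay argument list that replays `trace` at a fixed rate.

    tcpreplay's --mbps option expects megabits per second, so the Gbps
    value is converted (decimal units) before it is passed on.
    """
    if rate_gbps <= 0:
        raise ValueError("rate must be positive")
    mbps = int(rate_gbps * 1000)
    return [
        "tcpreplay",
        f"--intf1={iface}",
        f"--mbps={mbps}",
        f"--loop={loops}",
        str(trace),
    ]


# One command per throughput level used in the study (1 to 7 Gbps).
commands = [tcpreplay_argv(gbps) for gbps in range(1, 8)]
for argv in commands:
    print(" ".join(argv))
```

On the generator machine, each list could be handed to `subprocess.run(argv, check=True)`; replaying onto a real interface normally requires root privileges. Keeping the trace on a RAM disk means the replay rate is bounded by the NIC and CPU rather than by storage.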

Rule Set Harmonization

Both IDS are configured with an identical minimal rule set so that performance differences stem from the IDS implementations rather than from rule complexity. The rules are written in Snort syntax, which Suricata can also parse. Thresholds (e.g., a flood‑detection threshold of 150) are identical on both systems.
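The flood threshold of 150 is the only concrete rule parameter given. As an illustration of what such a threshold means, here is a minimal sliding-window counter that flags a source once it exceeds 150 packets inside a time window. The 1-second window is an assumption (the summary does not state one), and this is a generic sketch of threshold-based detection, not the rule used in the paper; both Snort and Suricata express this kind of logic inside the rule itself, for example with the `detection_filter` option.

```python
from collections import defaultdict, deque

FLOOD_THRESHOLD = 150  # threshold value taken from the summary
WINDOW_SECONDS = 1.0   # assumption: the summary does not state the window


class FloodDetector:
    """Flag a source once it sends more than `threshold` packets
    within a sliding window of `window` seconds."""

    def __init__(self, threshold: int = FLOOD_THRESHOLD,
                 window: float = WINDOW_SECONDS) -> None:
        self.threshold = threshold
        self.window = window
        # Source address -> timestamps of its packets still inside the window.
        self._seen: dict[str, deque] = defaultdict(deque)

    def observe(self, src: str, ts: float) -> bool:
        """Record one packet from `src` at time `ts`; return True if flooding."""
        q = self._seen[src]
        q.append(ts)
        # Discard timestamps that have slid out of the window.
        while q and ts - q[0] >= self.window:
            q.popleft()
        return len(q) > self.threshold


detector = FloodDetector()
# 151 packets within 0.15 s: only the 151st crosses the threshold.
flags = [detector.observe("10.0.0.1", i * 0.001) for i in range(151)]
print(flags.index(True))
```

Because the window slides per packet, a slow but steady sender never accumulates enough timestamps to trigger, while a burst does.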

Evaluation Procedure

Each experiment is defined by four parameters: (1) evaluation duration, (2) number of attacks per minute (10–35), (3) traffic throughput (1–7 Gbps), and (4) the IDS under test. Each run proceeds in three phases: initialization (loading the attack plans), execution (continuous benign traffic plus scheduled attacks), and output collection (CPU, memory, packet statistics, and IDS alerts). All three machines are synchronized and controlled by automated scripts, which makes the runs repeatable.
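The four parameters span a test matrix. The sketch below models one experiment as a small dataclass and derives an attack schedule from the attack rate. The 300-second duration, the step of 5 attacks per minute, and the even spacing of attacks are assumptions for illustration; the summary gives only the ranges.

```python
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class Experiment:
    duration_s: int       # (1) evaluation duration in seconds
    attacks_per_min: int  # (2) 10-35 in the summarized study
    throughput_gbps: int  # (3) 1-7 in the summarized study
    ids: str              # (4) IDS under test, e.g. "snort" or "suricata"

    def attack_offsets(self) -> list[float]:
        """Attack start times in seconds from the start of the run.

        Even spacing is an assumption; the summary only gives a rate.
        """
        interval = 60.0 / self.attacks_per_min
        count = int(self.duration_s / 60 * self.attacks_per_min)
        return [round(i * interval, 3) for i in range(count)]


# Hypothetical grid: duration and step sizes are not given in the summary.
DURATION_S = 300
ATTACK_RATES = [10, 15, 20, 25, 30, 35]
THROUGHPUTS = range(1, 8)
IDS_UNDER_TEST = ["snort", "suricata"]

plan = [
    Experiment(DURATION_S, rate, gbps, ids)
    for ids, gbps, rate in itertools.product(IDS_UNDER_TEST, THROUGHPUTS, ATTACK_RATES)
]
print(len(plan), plan[0])
```

Making the dataclass frozen keeps each configuration immutable, so the same object can be logged alongside its results and replayed later with identical parameters.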

Metrics Collected

- CPU utilization per core

- Memory consumption

- Packets received, analyzed, and dropped (derived from IDS statistics and system logs)

- True Positive (TP), False Positive (FP), and False Negative (FN) counts based on a priority file that distinguishes mandatory from optional alert messages

- Derived performance indicators: true positive rate (sensitivity) = TP/(TP+FN), precision = TP/(TP+FP), drop rate, and average CPU and memory usage
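The sketch below shows one plausible way to turn a priority file and an alert log into TP/FP/FN counts and then into the derived indicators. The priority-file format (message mapped to "mandatory" or "optional"), the counting rules (missing mandatory alerts are FN, alerts absent from the priority file are FP, optional and surplus mandatory alerts are ignored), and the drop-rate definition dropped/received are all assumptions; the alert messages are hypothetical examples, not data from the study.

```python
from collections import Counter


def score(priority: dict[str, str], expected: Counter,
          observed: Counter) -> tuple[int, int, int]:
    """Return (TP, FP, FN) under the assumed counting rules.

    priority -- alert message -> "mandatory" or "optional" (hypothetical format)
    expected -- how often each mandatory message should fire (one per attack)
    observed -- how often each message actually appeared in the IDS alert log
    """
    tp = fp = fn = 0
    for msg, want in expected.items():
        got = observed.get(msg, 0)
        tp += min(got, want)       # expected alerts that did fire
        fn += max(want - got, 0)   # expected alerts that never fired
    for msg, got in observed.items():
        if msg not in priority:    # alert unrelated to any launched attack
            fp += got
    # Optional messages and surplus mandatory alerts count as neither TP nor FP.
    return tp, fp, fn


# By this sketch's convention, undefined ratios are reported as 0.0.
def sensitivity(tp: int, fn: int) -> float:
    return tp / (tp + fn) if tp + fn else 0.0


def precision(tp: int, fp: int) -> float:
    return tp / (tp + fp) if tp + fp else 0.0


def drop_rate(dropped: int, received: int) -> float:
    return dropped / received if received else 0.0  # assumed definition


# Hypothetical example run with made-up alert messages.
priority = {"SSH brute force": "mandatory", "Port scan": "mandatory",
            "TCP portsweep": "optional"}
expected = Counter({"SSH brute force": 10, "Port scan": 5})
observed = Counter({"SSH brute force": 10, "Port scan": 4, "TCP portsweep": 3})

tp, fp, fn = score(priority, expected, observed)
print(tp, fp, fn, sensitivity(tp, fn), precision(tp, fp))
```

Separating mandatory from optional messages prevents secondary alerts (such as a generic portsweep alert raised alongside a specific scan signature) from being counted as false positives.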

Key Findings

- Throughput Impact: As traffic speed increases, both IDS exhibit higher CPU usage and packet drop rates. The drop rate rises sharply at higher throughputs, indicating that the processing pipelines become saturated.

- Attack Frequency Impact: Varying the number of attacks per minute does not significantly affect CPU utilization or drop rate, suggesting that the IDS processing cost is dominated by raw packet volume rather than the number of signatures triggered.

- Detection Accuracy: Despite higher drop rates at higher throughputs, both precision and sensitivity remain stable across all tested speeds. No false positives were observed in any scenario, so precision stays at its maximum. This suggests that the dropped packets were not critical for detecting the selected attacks.

- Resource Footprint: Snort maintains a very low memory footprint (~6 MB), while Suricata consumes substantially more (~80 MB) due to its multithreaded architecture and internal buffering.

- Scalability Considerations: Suricata’s multithreading allows it to use all four CPU cores, but its memory overhead may become a limiting factor in constrained environments. Snort’s single‑threaded design keeps memory usage minimal, but because one instance runs on a single core, it may struggle to keep up with line‑rate traffic.
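Whether an IDS actually spreads its load across all cores can be checked with the per-core CPU metric. The summary does not say which tool the authors used; the sketch below is one Linux-specific way to compute per-core utilization from two snapshots of `/proc/stat`, treating idle plus iowait as idle time and summing the user through steal fields as total time (the guest fields are already included in user and nice). The function names are illustrative.

```python
import time


def parse_proc_stat(text: str) -> dict[str, tuple[int, int]]:
    """Map 'cpuN' -> (idle_jiffies, total_jiffies) for each per-core line."""
    cores = {}
    for line in text.splitlines():
        fields = line.split()
        # Per-core lines are 'cpu0', 'cpu1', ...; the aggregate line is plain 'cpu'.
        if fields and fields[0].startswith("cpu") and fields[0] != "cpu":
            values = [int(v) for v in fields[1:9]]  # user .. steal
            values += [0] * (8 - len(values))       # pad short lines from old kernels
            idle = values[3] + values[4]            # idle + iowait
            cores[fields[0]] = (idle, sum(values))
    return cores


def per_core_utilization(before: str, after: str) -> dict[str, float]:
    """Fraction of non-idle time per core between two /proc/stat snapshots."""
    a, b = parse_proc_stat(before), parse_proc_stat(after)
    util = {}
    for core, (idle_after, total_after) in b.items():
        idle_before, total_before = a[core]
        d_idle = idle_after - idle_before
        d_total = total_after - total_before
        util[core] = 1.0 - d_idle / d_total if d_total else 0.0
    return util


def sample_per_core(interval_s: float = 1.0) -> dict[str, float]:
    """Linux only: measure per-core utilization over `interval_s` seconds."""
    with open("/proc/stat") as f:
        before = f.read()
    time.sleep(interval_s)
    with open("/proc/stat") as f:
        after = f.read()
    return per_core_utilization(before, after)
```

Sampling this while the IDS runs would show one core near saturation for a single Snort 2.x instance, or load distributed over several cores for Suricata, which is the distinction the finding above describes.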

Strengths of the Study

- Detailed documentation of the hardware setup, traffic generation, and synchronization procedures enhances reproducibility.

- Use of real‑world attack mixes based on a reputable threat report adds ecological validity.

- Automated processing of IDS logs, including priority‑based filtering, yields accurate TP/FP/FN calculations.

Limitations and Future Work

- The maximum tested throughput is 7 Gbps; performance at the full 10 Gbps Ethernet standard remains unverified.

- Only a minimal rule set was employed; larger, production‑scale rule bases could reveal different CPU and memory scaling behaviors.

- The study excludes Bro/Zeek and other IDS categories, limiting the generalizability of the conclusions.

- Attack‑type‑specific detection rates are not reported, preventing analysis of whether certain signatures are more vulnerable to packet loss.

- No assessment of the impact of dropped packets on downstream security operations (e.g., forensic analysis) is provided.

Conclusion

The paper delivers a practical, repeatable framework for benchmarking IDS performance in high‑speed networks and demonstrates that Snort and Suricata exhibit distinct trade‑offs: Snort offers a lightweight memory profile with modest CPU demands, whereas Suricata leverages multithreading to handle higher packet rates at the cost of increased memory consumption. Both systems maintain high detection accuracy despite packet drops, but the scalability limits observed suggest that further testing at true 10 Gbps rates, with richer rule sets and additional IDS platforms, is necessary to fully inform procurement and deployment decisions in modern high‑throughput environments.
