Unsupervised Alignment of Embeddings with Wasserstein Procrustes

We consider the task of aligning two sets of points in high dimension, which has many applications in natural language processing and computer vision. As an example, it was recently shown that it is possible to infer a bilingual lexicon, without supervised data, by aligning word embeddings trained on monolingual data. These recent advances are based on adversarial training to learn the mapping between the two embeddings. In this paper, we propose to use an alternative formulation, based on the joint estimation of an orthogonal matrix and a permutation matrix. While this problem is not convex, we propose to initialize our optimization algorithm by using a convex relaxation, traditionally considered for the graph isomorphism problem. We propose a stochastic algorithm to minimize our cost function on large-scale problems. Finally, we evaluate our method on the problem of unsupervised word translation, by aligning word embeddings trained on monolingual data. On this task, our method obtains state-of-the-art results, while requiring fewer computational resources than competing approaches.

💡 Research Summary

The paper addresses the problem of aligning two high‑dimensional point clouds, a task that underlies many applications such as unsupervised bilingual lexicon induction and point‑set registration. Traditional unsupervised approaches either rely on adversarial training (GANs) or on minimizing a Wasserstein distance, both of which involve complex, often unstable optimization procedures. The authors propose a fundamentally different formulation: jointly estimate an orthogonal transformation matrix Q and a permutation matrix P that aligns the two sets. This “Wasserstein Procrustes” problem can be written as

min_{Q∈O(d)} min_{P∈Pₙ} ‖X Q − P Y‖²_F,

where X and Y are the two embedding matrices, O(d) denotes the set of orthogonal d × d matrices, and Pₙ the set of n × n permutation matrices. When one variable is fixed, the other has a closed‑form solution: Q is obtained via the SVD of Xᵀ P Y, and P via the Hungarian algorithm (or a Sinkhorn approximation). However, naïve alternating minimization quickly gets trapped in poor local minima, especially for large n.
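The two closed-form updates can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the function names (`procrustes`, `best_permutation`, `alternate`) are hypothetical, the exact Hungarian solver from SciPy stands in for the matching step, and the permutation is stored as an index array `perm` so that `Y[perm]` plays the role of P Y.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment


def procrustes(X, PY):
    """Orthogonal Q minimizing ||X Q - PY||_F, from the SVD of X^T (P Y)."""
    U, _, Vt = np.linalg.svd(X.T @ PY)
    return U @ Vt


def best_permutation(XQ, Y):
    """Index array perm such that Y[perm] equals P Y for the optimal P."""
    # Minimizing ||XQ - P Y||_F^2 over permutations is equivalent to
    # maximizing the total inner product between matched rows.
    _, perm = linear_sum_assignment(-(XQ @ Y.T))
    return perm


def alternate(X, Y, Q0, n_iter=20):
    """Naive alternating minimization starting from Q0 (assumed orthogonal)."""
    Q = Q0
    for _ in range(n_iter):
        perm = best_permutation(X @ Q, Y)  # P-step with Q fixed
        Q = procrustes(X, Y[perm])         # Q-step with P fixed
    return Q, best_permutation(X @ Q, Y)
```

Each step can only lower the objective, but nothing in the loop prevents it from settling in a poor local minimum, which is the failure mode described above.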

To overcome this, the authors introduce two complementary strategies. First, they compute a high‑quality initialization using a convex relaxation of the quadratic assignment problem. By replacing the permutation set with its convex hull—the Birkhoff polytope of doubly‑stochastic matrices—they solve

min_{P∈Bₙ} ‖K_X P − P K_Y‖²_F

with K_X = X Xᵀ and K_Y = Y Yᵀ, using the Frank‑Wolfe algorithm. The resulting doubly‑stochastic matrix P* is then used to obtain an initial orthogonal matrix Q₀ from the SVD of the d × d matrix Xᵀ P* Y, the same closed‑form step that gives Q for a fixed P. This step provides a principled starting point that dramatically improves convergence.
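A minimal sketch of this initialization is given below, under stated assumptions. The function name `frank_wolfe_init`, the uniform starting point, the iteration count, and the exact line search are illustrative choices; the summary does not say which the authors use. The linear minimization step over the Birkhoff polytope is solved with SciPy's Hungarian solver, since the minimum of a linear function over that polytope is attained at a permutation matrix. Q₀ is taken from the SVD of the d × d matrix Xᵀ P* Y.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment


def frank_wolfe_init(X, Y, n_iter=50):
    """Relaxed matching P in the Birkhoff polytope and the induced Q0."""
    n = X.shape[0]
    KX, KY = X @ X.T, Y @ Y.T
    P = np.full((n, n), 1.0 / n)  # assumed start: uniform doubly-stochastic matrix
    for _ in range(n_iter):
        R = KX @ P - P @ KY                  # residual of the objective
        G = 2.0 * (KX @ R - R @ KY)          # gradient (KX, KY are symmetric)
        rows, cols = linear_sum_assignment(G)  # best vertex = a permutation
        S = np.zeros((n, n))
        S[rows, cols] = 1.0
        D = S - P                            # Frank-Wolfe direction
        RD = KX @ D - D @ KY
        denom = np.sum(RD * RD)
        if denom < 1e-12:
            break
        # Exact line search for the quadratic objective, clipped to [0, 1]
        gamma = np.clip(-np.sum(R * RD) / denom, 0.0, 1.0)
        P = P + gamma * D
    U, _, Vt = np.linalg.svd(X.T @ P @ Y)    # Procrustes step on the relaxed P
    return U @ Vt, P
```

Because K_X and K_Y are n × n, this sketch is only practical on a subset of the vocabulary, for example the most frequent words.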

Second, they design a stochastic optimization scheme that scales to millions of vectors. At each iteration t, a mini‑batch of size b (typically a few thousand) is sampled from X and Y, yielding X_t and Y_t. The optimal matching P_t for this batch is computed given the current Q_t. Because ‖X_t Q‖_F is the same for every orthogonal Q, only the cross term −2 tr(Qᵀ X_tᵀ P_t Y_t) of the objective depends on Q, and its gradient with respect to Q is

G_t = −2 X_tᵀ P_t Y_t.

A gradient step is taken and the updated matrix is projected back onto the set of orthogonal matrices (the square case of the Stiefel manifold): an SVD U Σ Vᵀ of the updated matrix is computed and the product U Vᵀ of the left and right singular vectors is retained. This stochastic gradient descent with manifold projection is computationally cheap (O(b³) for exact matching, O(b² log b) with Sinkhorn) and empirically converges within a few minutes.
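One iteration of this scheme might look like the sketch below. The function name `stochastic_step`, the learning rate, and the use of the exact Hungarian solver in place of Sinkhorn are assumptions made for illustration; the matching is again stored as an index array.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment


def stochastic_step(Q, X, Y, batch_size, lr, rng):
    """One mini-batch update of Q followed by projection onto O(d)."""
    # Sample the two mini-batches independently from X and Y
    xb = X[rng.choice(len(X), batch_size, replace=False)]
    yb = Y[rng.choice(len(Y), batch_size, replace=False)]
    # Optimal matching P_t for the current Q (exact solver, O(b^3))
    _, perm = linear_sum_assignment(-(xb @ Q) @ yb.T)
    # Gradient G_t = -2 X_t^T P_t Y_t
    G = -2.0 * xb.T @ yb[perm]
    # Gradient step, then keep U V^T from the SVD of the updated matrix
    U, _, Vt = np.linalg.svd(Q - lr * G)
    return U @ Vt
```

In use, the step would be called repeatedly, for example `Q = stochastic_step(Q, X, Y, 500, 0.01, np.random.default_rng(0))` inside a loop, starting from the Q₀ given by the convex relaxation.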

The authors also address the “hubness” problem that plagues nearest‑neighbor retrieval in high‑dimensional spaces. After mapping source embeddings into the target space, they replace raw cosine similarity with either Inverted Softmax (ISF) or Cross‑Domain Similarity Local Scaling (CSLS), both of which down‑weight overly popular “hub” vectors and improve translation precision.
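As an illustration of the retrieval step, the sketch below computes CSLS scores in the form introduced with MUSE: twice the cosine similarity, minus the mean similarity of each source vector to its k nearest target neighbors, minus the mean similarity of each target vector to its k nearest source neighbors. The function names and the default k = 10 are assumptions for this example, and the dense similarity matrix is only practical for moderately sized vocabularies.

```python
import numpy as np


def csls_scores(src_mapped, tgt, k=10):
    """CSLS score matrix of shape (n_src, n_tgt)."""
    s = src_mapped / np.linalg.norm(src_mapped, axis=1, keepdims=True)
    t = tgt / np.linalg.norm(tgt, axis=1, keepdims=True)
    cos = s @ t.T
    # Mean cosine similarity to the k nearest neighbors in the other space
    r_src = np.sort(cos, axis=1)[:, -k:].mean(axis=1)  # one value per source row
    r_tgt = np.sort(cos, axis=0)[-k:, :].mean(axis=0)  # one value per target row
    # Vectors with dense neighborhoods ("hubs") are penalized
    return 2.0 * cos - r_src[:, None] - r_tgt[None, :]


def translate(src_mapped, tgt, k=10):
    """Index of the best-scoring target vector for each mapped source vector."""
    return csls_scores(src_mapped, tgt, k).argmax(axis=1)
```

Here `src_mapped` would be the source embeddings after applying the learned map, that is `X @ Q`.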

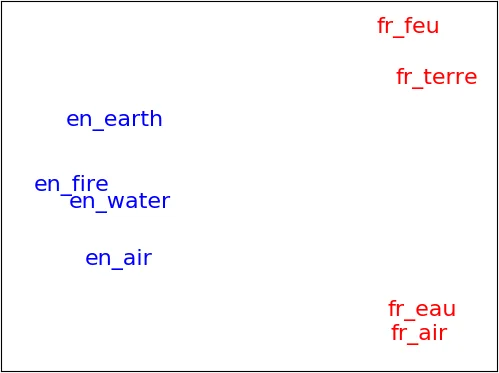

Experiments are conducted on synthetic toy data to illustrate the behavior of the algorithm under controlled conditions, and on real word‑embedding datasets (FastText and Word2Vec) for several language pairs. Evaluation metrics include bilingual lexicon induction accuracy (precision at 1) and bidirectional retrieval scores. The proposed method matches or exceeds the performance of state‑of‑the‑art unsupervised approaches such as MUSE (Conneau et al., 2017) and the ICP‑based method of Hoshen & Wolf (2018), while requiring substantially less computational time (often under five minutes on a single GPU). Ablation studies confirm that the convex‑relaxation initialization is critical: without it, the stochastic optimizer frequently converges to suboptimal alignments.

In summary, the paper makes four main contributions: (1) a clean joint formulation of orthogonal transformation and permutation for embedding alignment; (2) a convex‑relaxation initialization derived from graph‑matching literature; (3) a scalable stochastic optimization algorithm that operates on mini‑batches and respects the orthogonal constraint via manifold projection; and (4) a thorough empirical validation showing state‑of‑the‑art results with modest computational resources. The work bridges concepts from optimal transport, Procrustes analysis, and graph matching, opening avenues for further extensions such as non‑linear mappings or applications beyond language, e.g., 3‑D point‑cloud registration.
