Investigating Label Noise Sensitivity of Convolutional Neural Networks for Fine Grained Audio Signal Labelling

We measure the effect of small amounts of systematic and random label noise caused by slightly misaligned ground truth labels in a fine grained audio signal labeling task. The task we choose to demonstrate these effects on is also known as framewise …

Authors: Rainer Kelz, Gerhard Widmer

Johannes Kepler University Linz, Department of Computational Perception, Altenberger Str. 69, 4040 Linz, Austria

ABSTRACT

We measure the effect of small amounts of systematic and random label noise caused by slightly misaligned ground truth labels in a fine grained audio signal labeling task. The task we choose to demonstrate these effects on is also known as framewise polyphonic transcription or note quantized multi-f0 estimation, and transforms a monaural audio signal into a sequence of note indicator labels. It will be shown that even slight misalignments have clearly apparent effects, demonstrating a great sensitivity of convolutional neural networks to label noise. The implications are clear: when using convolutional neural networks for fine grained audio signal labeling tasks, great care has to be taken to ensure that the annotations have precise timing, and are free from systematic or random error as much as possible; even small misalignments will have a noticeable impact.

Index Terms: convolutional neural networks, multi-label classification, framewise polyphonic transcription

1. INTRODUCTION

Recent empirical work on quantifying the generalization capabilities of deep neural networks [1, 2] questions the usefulness of traditional learning theory applied to neural networks, and among other things shows that image classification performance gradually degrades in the presence of mislabeled images. We investigate how severe these effects are for time series labeling when the ground truth labels are only slightly misaligned, which is a common occurrence when dealing with manually annotated time series data. Our underlying assumption is that the main difference between annotator-induced label noise in time series and in images lies in the similarity of examples in input space.
This comparison is justified because a sequence labeling task with convolutional neural networks, as it is usually set up, corresponds exactly to repeated image classification on similar images obtained by shifting a reading window across the short-time Fourier transformed audio signal. However, because the distribution of examples in the image domain differs from that of examples obtained in the audio domain, it is not immediately clear to what extent label noise is a problem. We posit that it is highly unlikely that the same annotator will assign different labels to two similar images. It is more likely that imprecision, either in the hand movements steering a pointing device such as a mouse or in the annotation software itself, will lead to examples that are very close in time being assigned different labels. A sketch of this intuitive notion can be seen in figure 1, where we can observe the sequential transformations of the audio signal input together with its annotation.

Fig. 1: The intuitive reason why imprecision in audio signal annotation may yield highly similar, yet differently labeled examples. At the last stage of the signal processing chain we see pairs of input and corresponding indicator $(x_t, y_{t,k})$ for label $k$. Note the very similar frames $x_3$ and $x_4$ and their different labels.

The data consists of pairs $w \in \mathbb{R}^{T_{hi}}$, denoting the audio signal, and $M \in \{0, 1\}^{T_{hi} \times K}$, denoting the annotation, where $T_{hi}$ is the number of audio samples, $K$ is the number of labels, and $k$ is the index of an arbitrary label. A common preprocessing step is the short-time Fourier transform (STFT) of $w$ and subsequent application of a filter bank to obtain $X \in \mathbb{R}^{T_{lo} \times B}$. $B$ is the frequency resolution, which depends on the choice of filter bank applied after the Fourier transform. The usually much lower frame rate of the STFT depends on the hop size, resulting in $T_{lo} \ll T_{hi}$.
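To make the relation between hop size, frame rate and $T_{lo} \ll T_{hi}$ concrete, here is a small arithmetic sketch. It assumes a sample rate of 16 kHz and hop sizes of 512 and 160 samples; neither value is stated in this section, they are simply choices that yield the two frame rates of 31.25 [fps] and 100 [fps] used in this paper. It also assumes centered frames, so that one frame starts every hop. The helper names are hypothetical.

```python
def frame_rate(sample_rate: int, hop_size: int) -> float:
    """Frames per second of an STFT with the given hop size (in samples)."""
    return sample_rate / hop_size


def num_frames(num_samples: int, hop_size: int) -> int:
    """T_lo for a signal of T_hi = num_samples samples.

    Assumes centered frames (one frame every hop_size samples, the first at
    sample 0); other padding conventions change this by a frame or two.
    """
    return 1 + num_samples // hop_size


SAMPLE_RATE = 16_000          # assumed sample rate, not taken from the paper
T_HI = 30 * SAMPLE_RATE       # 30 s of audio

for hop in (512, 160):        # assumed hop sizes
    fps = frame_rate(SAMPLE_RATE, hop)
    d_t = 1.0 / fps           # length of one low resolution frame in seconds
    print(f"hop={hop}: {fps} fps, d_t={d_t:.4f} s, "
          f"T_hi={T_HI}, T_lo={num_frames(T_HI, hop)}")
```

Under these assumptions, 30 s of audio (480000 samples) shrink to 938 frames at 31.25 [fps] and to 3001 frames at 100 [fps], i.e. several hundred audio samples per label frame.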
In a similar fashion, the high resolution annotation $M$ is transformed into $Y \in \mathbb{R}^{T_{lo} \times K}$ to match up with the filtered STFT. The final inputs to the convolutional neural network are pairs of excerpts $x_t \in \mathbb{R}^{T^{lo}_{c} \times B}$ of length $T^{lo}_{c}$, taken from $X$ at time $t$, and labels $y_{t,k} \in \{0, 1\}$ serving as the target for each label indicator output. Looking at these steps in detail makes it apparent that slight misalignments in the annotation, be they random or systematic, lead to collections of pairs $\{(x_a, y_{a,k}), (x_b, y_{b,k}), \ldots\}$ where most distance measures in input space $d(x_a, x_b)$ are small, but the targets for these examples differ, as $y_{a,k} \neq y_{b,k}$.

The sequence labeling task on which we chose to investigate the impact of misaligned annotations is also called framewise polyphonic transcription or note quantized multi-f0 estimation in the music information retrieval community. We claim that the effects measured here extend beyond the framewise scenario, because the misalignment problems we consider only affect the start and end positions of a label, and hence also apply to systems that try to predict labels in interval form.

2. MODELS

The convolutional neural networks we use for time series labeling are parametrized functions $g_\theta : \mathbb{R}^{T_c \times B} \to \{0, 1\}^K$, mapping excerpts from a filtered STFT of length $T_c$ in time and width $B$ in frequency to a vector of length $K$, whose components indicate the presence or absence of a label. After the application of a logarithmic filter bank to the STFT, the number of bins comes down to $B = 229$, as described in [3]. For framewise transcription of pianos, $K = 88$ denotes the tonal range of the instrument, and a label indicator with a value of 1 means that a note is sounding in the excerpt presented as the input. The misalignment effects are measured at two different STFT frame rates, 31.25 [fps] and 100 [fps], the lower frame rate also being used in [4, 3].
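The construction of the network inputs described in section 1, one excerpt $x_t$ of $T_c$ frames cut from $X$ for every frame $t$, can be sketched with NumPy as below. Centering each excerpt on frame $t$ and zero padding at the borders are assumptions made for illustration, and `context_windows` is a hypothetical helper. The sketch also shows why neighbouring examples are so similar: $x_t$ and $x_{t+1}$ share $T_c - 1$ of their $T_c$ frames.

```python
import numpy as np


def context_windows(X: np.ndarray, context: int) -> np.ndarray:
    """Cut one excerpt of `context` frames per frame t from X (shape T_lo x B).

    Excerpts are centered on t and zero padded at the borders (an assumption);
    the result has shape (T_lo, context, B).
    """
    if context % 2 != 1:
        raise ValueError("context must be odd so excerpts can be centered")
    half = context // 2
    padded = np.pad(X, ((half, half), (0, 0)))  # zero padding along time only
    return np.stack([padded[t:t + context] for t in range(X.shape[0])])


rng = np.random.default_rng(0)
X = rng.random((100, 229))          # stand-in for a filtered STFT, B = 229 bins
windows = context_windows(X, context=5)
print(windows.shape)                # (100, 5, 229)

# x_3 and x_4 contain the same frames, shifted by one. For real spectrograms,
# which change slowly over time, this keeps d(x_3, x_4) small even when a
# misaligned annotation gives the two excerpts different labels.
shared = np.array_equal(windows[3][1:], windows[4][:-1])
print(shared)                       # True
```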
Keeping the temporal context approximately the same for the two frame rates necessitated the use of two different architectures, with the one for the higher frame rate being deeper and wider, yet increasing the parameter count only slightly.¹

For the training procedure, we adhere closely to the description in [3], which uses mini-batch stochastic gradient descent with Nesterov momentum and a step-wise learning rate schedule, but we reduce the mini-batch size. This rather drastic reduction of the number of examples per batch, from 128 as advocated in [3] to 8 in the present work, is motivated by findings in [5], which state that noisier gradient estimates are helpful in finding flatter minima, thus potentially improving generalization. We observe a small improvement in prediction performance together with a convenient reduction in training time.

¹ Source code to replicate all results can be found at https://github.com/rainerkelz/ICASSP18

3. DATASET

We chose the MAPS dataset [6] as our experimental testbed due to the availability of a very precise ground truth, free from any human annotator disagreement. Note that for the purpose of demonstrating non-negligible effect sizes of misalignments, any annotation could suffice in principle, as long as it is unambiguous. The data consists of a collection of MIDI files and corresponding audio renderings. Multiple sample banks were used to render the audio files. To further increase acoustic variability, several MIDI files were played back on a computer-controlled Disklavier and recorded in close and ambient microphone conditions. The MIDI files in the MAPS dataset have a sufficiently high temporal resolution to let us neglect any quantization error stemming from the conversion of MIDI ticks to seconds, and to treat the start and end times of note labels effectively as if they originated from a continuous space.
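The optimizer named in section 2, mini-batch SGD with Nesterov momentum, a step-wise learning rate schedule and batches of 8 examples, can be sketched on a toy least squares problem. This is not the training code from the linked repository: the learning rate, momentum, drop factor and step interval are placeholder values, and the look-ahead formulation of Nesterov momentum used here is one of several equivalent ways to write the update.

```python
import numpy as np


def step_lr(epoch: int, base_lr: float = 0.01, drop: float = 0.5, every: int = 10) -> float:
    """Step-wise schedule: multiply the rate by `drop` every `every` epochs (placeholder values)."""
    return base_lr * drop ** (epoch // every)


def nesterov_sgd(X, y, epochs=30, batch_size=8, momentum=0.9, seed=0):
    """Fit w in y ~ X @ w by mini-batch SGD with Nesterov momentum on half the mean squared error."""
    rng = np.random.default_rng(seed)
    w = np.zeros(X.shape[1])
    v = np.zeros_like(w)                      # velocity
    for epoch in range(epochs):
        lr = step_lr(epoch)
        order = rng.permutation(len(X))       # reshuffle every epoch
        for start in range(0, len(X), batch_size):
            idx = order[start:start + batch_size]
            xb, yb = X[idx], y[idx]
            lookahead = w + momentum * v      # Nesterov: gradient at the look-ahead point
            grad = xb.T @ (xb @ lookahead - yb) / len(idx)
            v = momentum * v - lr * grad
            w = w + v
    return w


rng = np.random.default_rng(1)
X = rng.standard_normal((256, 5))
w_true = np.array([1.0, -2.0, 0.5, 3.0, -1.0])
y = X @ w_true                                # noise-free toy targets
w_hat = nesterov_sgd(X, y)
print(np.round(w_hat, 3))
```

A batch size of 8 means each update sees only a few examples, so the gradient estimate is noisy; on this noise-free toy problem the iterates still settle on `w_true`.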
There are two train-test protocols defined in [4], with different amounts of instrument overlap. In Configuration-I, instruments in training, validation and test sets overlap, whereas in Configuration-II only training and validation sets contain overlapping instruments. The test set for Configuration-II consists solely of pieces rendered with the Disklavier. For both configurations, the 31.25 [fps] models are trained and evaluated on four different splits of the training data. The models with the higher input frame rate of 100 [fps] are trained and evaluated on one fold only for both configurations, due to the high computational cost.

4. EXPERIMENTAL SETUP

For all models trained, all non-architectural hyperparameters are fixed; the only quantities varied are the frame rate and the labeling function, a notion we will now define precisely.

Throughout this paper we use the term labeling function to mean the complete conversion process from high resolution annotations to the assignment of concrete labels $y_{t,k} \in \{0, 1\}$ to the examples $x_t \in \mathbb{R}^{T_c \times B}$ shown to the network. This includes the annotator as well, be it a mechanistic generator, as in the case of extracting labels from MIDI files, or a human providing manual annotation. The main focus for each function lies on the conversion from high resolution annotations to lower resolution annotations.
Figure 2a shows a schematic depiction of the conversion of a label from an interval defined with high time resolution into a sequence of much fewer labels at a lower time resolution. Symbols $\bar{t}_s, \bar{t}_e$ denote start and end times in high resolution, expressed in seconds (we pretend these are continuous, due to their high time resolution). Symbols $t_s, t_e$ are their counterparts in low resolution, expressed as discrete frame indices, with $\epsilon_s, \epsilon_e$ denoting the errors incurred by rounding after conversion. The symbol $d_t$ denotes the length of one lower resolution frame in seconds and is used as the conversion factor.

[Fig. 2a: schematic of $\bar{t}_s$, $\bar{t}_e$, $t_s$, $t_e$, $d_t$, $\epsilon_s$, $\epsilon_e$]
(a) Schematic illustration of quantities involved in the definition of labeling functions for fine grained sequence labeling tasks.

$$
\begin{array}{l|ll}
f_\cdot(\bar{t}_s, \bar{t}_e) & t_s & t_e \\ \hline
f_a & \lfloor \bar{t}_s / d_t \rceil & \lfloor \bar{t}_e / d_t \rceil \\
f_b & \lceil \bar{t}_s / d_t \rceil & \lceil \bar{t}_e / d_t \rceil \\
f_c & \lfloor \bar{t}_s / d_t \rfloor & \lfloor \bar{t}_e / d_t \rfloor \\
f_d & \lfloor \bar{t}_s / d_t \rfloor & \lfloor \bar{t}_s / d_t \rfloor + \lfloor (\bar{t}_e - \bar{t}_s) / d_t \rfloor \\
f_e & f_a + R_j & f_a + R_j \\
f_f & f_a + R_s & f_a + R_e
\end{array}
$$

(b) The different labeling functions used, leading to different kinds of quantization error. Symbols $\lfloor \cdot \rfloor$, $\lfloor \cdot \rceil$, $\lceil \cdot \rceil$ denote the functions floor($\cdot$), round($\cdot$), ceil($\cdot$) respectively, and $R_{j,s,e} \in \{-1, 0, 1\}$ are discrete, uniformly distributed random variables. $f_a$ is used as the reference labeling function throughout this article.

Fig. 2: Quantization schemes and definitions of labeling functions.

The exact definitions of the different labeling functions used to transform the high resolution annotations obtained from MIDI files into framewise labels can be found in figure 2b. They can all be viewed as functions of the form $f : \mathbb{R} \times \mathbb{R} \to \mathbb{N} \times \mathbb{N}$, mapping pairs of continuous times to pairs of discrete indices. The differences between labeling functions lie in the choice of how and when to quantize.
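The six labeling functions of figure 2b can be transcribed almost directly. The sketch below is plain Python under two assumptions: ties in round($\cdot$) are broken upwards (the figure does not specify a tie rule, and Python's built-in `round` would round half to even), and $R_j$, $R_s$, $R_e$ are drawn with `random.Random.choice`. Function names mirror the figure; the code is illustrative and not taken from the authors' repository.

```python
import math
import random


def _round_half_up(x: float) -> int:
    """round(.) from figure 2b; ties go upwards here (assumption)."""
    return math.floor(x + 0.5)


def f_a(ts, te, dt):
    """Reference: round both boundaries to the nearest frame index."""
    return _round_half_up(ts / dt), _round_half_up(te / dt)


def f_b(ts, te, dt):
    """Ceil both boundaries (systematic shift towards later frames)."""
    return math.ceil(ts / dt), math.ceil(te / dt)


def f_c(ts, te, dt):
    """Floor both boundaries (systematic shift towards earlier frames)."""
    return math.floor(ts / dt), math.floor(te / dt)


def f_d(ts, te, dt):
    """Floor the start, then add the floored duration."""
    start = math.floor(ts / dt)
    return start, start + math.floor((te - ts) / dt)


def f_e(ts, te, dt, rng):
    """f_a with both indices shifted jointly by R_j, uniform on {-1, 0, 1}."""
    s, e = f_a(ts, te, dt)
    r_j = rng.choice((-1, 0, 1))
    return s + r_j, e + r_j


def f_f(ts, te, dt, rng):
    """f_a with start and end shifted by independent R_s, R_e, uniform on {-1, 0, 1}."""
    s, e = f_a(ts, te, dt)
    return s + rng.choice((-1, 0, 1)), e + rng.choice((-1, 0, 1))


dt = 1 / 31.25                      # d_t: one low resolution frame = 32 ms
rng = random.Random(0)
for f in (f_a, f_b, f_c, f_d):
    print(f.__name__, f(1.01, 2.02, dt))
print("f_e", f_e(1.01, 2.02, dt, rng))
print("f_f", f_f(1.01, 2.02, dt, rng))
```

For a note from 1.01 s to 2.02 s at 31.25 [fps], the four systematic variants already disagree: $f_a$ gives frames (32, 63), $f_b$ gives (32, 64), $f_c$ gives (31, 63) and $f_d$ gives (31, 62).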
Functions $f_{\{a,b,c,d\}}$ deal with systematic misalignment caused by systematic quantization errors, and functions $f_{\{e,f\}}$ randomly modify the result of $f_a$, either by shifting both start and end indices jointly by a random variable $R_j \in \{-1, 0, 1\}$, or by shifting the two indices separately by two independent random variables $R_s, R_e \in \{-1, 0, 1\}$. For all functions involving random variables, the realizations are drawn from a discrete uniform distribution at the time of conversion. This means that for each pair of start and end times and a particular experimental run, a potential shift happens only once. We treat the labeling function $f_a$, which simply rounds to the nearest integer, as the reference.

All evaluations are done on the first 30 [s] of all pieces in the respective test set for a fold, against a ground truth obtained at a frame rate of 100 [fps] with labeling function $f_a$. This means that the predictions of the models running at the lower frame rate of 31.25 [fps] need to be upsampled again for evaluation. To measure prediction performance, we use the definitions of precision $P$, recall $R$ and f-measure $F$ as described in [7]:

$$P = \frac{\sum_{t=1}^{T} TP[t]}{\sum_{t=1}^{T} \left( TP[t] + FP[t] \right)} \tag{1}$$

$$R = \frac{\sum_{t=1}^{T} TP[t]}{\sum_{t=1}^{T} \left( TP[t] + FN[t] \right)} \tag{2}$$

$$F = \frac{2 \cdot P \cdot R}{P + R} \tag{3}$$

5. RESULTS

The effect of small misalignments in the label annotation can be observed in figures 3 and 4, which show clear evidence of the effect of using different labeling functions at a frame rate of 31.25 [fps], across multiple folds for Configuration I, which has similar data in train and test sets. The horizontal axis shows the labeling functions, and the vertical axis shows the f-measure. For each labeling function we see the performance on individual folds (×, ◦, and two further marker symbols) and the textually annotated mean (−) of all folds.
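Returning briefly to the evaluation protocol of section 4: equations (1) to (3) pool true positives, false positives and false negatives over all frames and labels before dividing. A NumPy sketch follows, together with the upsampling of 31.25 [fps] predictions to the 100 [fps] evaluation grid. The upsampling rule used here (each 100 [fps] frame takes the value of the 31.25 [fps] frame whose interval contains its start time) is an assumption, since the text only states that predictions are upsampled; both helper names are hypothetical.

```python
import numpy as np


def upsample_hold(pred_lo, fps_lo=31.25, fps_hi=100.0, n_hi=None):
    """Upsample a (T_lo, K) prediction matrix to fps_hi by repeating frames (assumed scheme)."""
    n_lo = pred_lo.shape[0]
    if n_hi is None:
        n_hi = int(n_lo * fps_hi / fps_lo)
    # index of the low-rate frame whose interval contains the start of each high-rate frame
    idx = np.floor(np.arange(n_hi) * fps_lo / fps_hi).astype(int)
    return pred_lo[np.minimum(idx, n_lo - 1)]


def framewise_prf(pred, gt):
    """Precision, recall and f-measure as in equations (1)-(3), pooled over frames and labels."""
    pred = np.asarray(pred, dtype=bool)
    gt = np.asarray(gt, dtype=bool)
    tp = np.sum(pred & gt)
    fp = np.sum(pred & ~gt)
    fn = np.sum(~pred & gt)
    p = tp / (tp + fp) if tp + fp > 0 else 0.0
    r = tp / (tp + fn) if tp + fn > 0 else 0.0
    f = 2 * p * r / (p + r) if p + r > 0 else 0.0
    return float(p), float(r), float(f)


pred_lo = np.array([[1, 0], [1, 0], [0, 1], [0, 1]])   # 4 frames at 31.25 fps, K = 2
pred_hi = upsample_hold(pred_lo)                        # 12 frames at 100 fps
print(pred_hi[:, 0])                                    # [1 1 1 1 1 1 1 0 0 0 0 0]

gt_hi = np.zeros_like(pred_hi)                          # hypothetical ground truth whose
gt_hi[:8, 0] = 1                                        # note boundary lies one 100 fps
gt_hi[8:, 1] = 1                                        # frame later than predicted
print(framewise_prf(pred_hi, gt_hi))
```

In this toy example the only disagreement is a single 100 [fps] frame at the boundary between the two notes, yet it already lowers P, R and F to 11/12 (about 0.917).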
We immediately notice that a labeling function with either a small systematic ($f_{\{a,b,c,d\}}$) or random ($f_{\{e,f\}}$) error has a non-negligible effect on the f-measure.

[Fig. 3 plot: F-measure (vertical axis) per labeling function $f_a$ to $f_f$ (horizontal axis); annotated fold means: $f_a$ 79.21, $f_b$ 77.38, $f_c$ 78.25, $f_d$ 76.58, $f_e$ 77.47, $f_f$ 77.57]

Fig. 3: Configuration I: F-measure on the validation set at a frame rate of 31.25 [fps] across different labeling functions for multiple folds.

The impact on the performance on the test set is less severe, as can be observed in figure 4, but still on the order of 1 percentage point. Incidentally, the mean result for the reference labeling function improves slightly on the state of the art for this task as reported in [3].

[Fig. 4 plot: F-measure (vertical axis) per labeling function $f_a$ to $f_f$ (horizontal axis); annotated fold means: $f_a$ 80.42, $f_b$ 79.29, $f_c$ 79.56, $f_d$ 78.55, $f_e$ 79.16, $f_f$ 79.27]

Fig. 4: Configuration I: F-measure on the test set at a frame rate of 31.25 [fps] across different labeling functions for multiple folds.

Interestingly, when inspecting the lower frame rate results for Configuration II, which has much more dissimilar train and test sets in terms of acoustic conditions, we can still observe a similar pattern. Even small misalignments lead to non-negligible differences in performance, observable in the test set results in figure 5. The obtained results all improve upon the state of the art as reported in [3], regardless of labeling function, which we attribute in part to the much smaller batch size and to the use of a labeling function introducing systematic error in [3].

[Fig. 5 plot: F-measure (vertical axis) per labeling function $f_a$ to $f_f$ (horizontal axis); annotated fold means: $f_a$ 75.58, $f_b$ 73.14, $f_c$ 76.42, $f_d$ 73.16, $f_e$ 75.41, $f_f$ 75.01]

Fig. 5: Configuration II: F-measure on the test set at a frame rate of 31.25 [fps] across different labeling functions for multiple folds.
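To quantify the differences in figures 3 to 5, the annotated fold means can be compared against the reference $f_a$. The numbers below are copied from the figure annotations; the script only does the arithmetic, and `deltas_vs_reference` is a hypothetical helper.

```python
# Fold-mean F-measures (in percent) as annotated in figures 3, 4 and 5.
LABELING_FUNCTIONS = ("f_a", "f_b", "f_c", "f_d", "f_e", "f_f")
FOLD_MEANS = {
    "Fig. 3, Configuration I, validation": (79.21, 77.38, 78.25, 76.58, 77.47, 77.57),
    "Fig. 4, Configuration I, test": (80.42, 79.29, 79.56, 78.55, 79.16, 79.27),
    "Fig. 5, Configuration II, test": (75.58, 73.14, 76.42, 73.16, 75.41, 75.01),
}


def deltas_vs_reference(means, names=LABELING_FUNCTIONS, ref="f_a"):
    """Difference in percentage points between each labeling function and the reference."""
    table = dict(zip(names, means))
    return {name: round(value - table[ref], 2) for name, value in table.items() if name != ref}


for figure, means in FOLD_MEANS.items():
    print(figure, deltas_vs_reference(means))
```

For figure 4 every non-reference labeling function loses between 0.86 and 1.87 points, in line with the statement about an effect on the order of 1 percentage point. For figure 5 the only positive difference is $f_c$, at +0.84 points.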
Finally, focusing on the test set results at the higher frame rate of 100 [fps] in figure 6, a pattern of performance differences with respect to the reference labeling function $f_a$ is still noticeable. The severity is much smaller, however, which is to be expected due to the higher frame rate and hence lower quantization error. Results are shown for only one fold, due to the high computational costs of training and evaluation at higher frame rates.

An interesting oddity in both the low and high frame rate cases for Configuration II is the performance increase when using $f_c$ to train and $f_a$ to obtain the ground truth for evaluation. This indicates a problem with the ground truth alignment, likely due to MIDI clock drift or similar issues, and will need to be addressed in future work. It has no effect on the main contribution of this work, which is to demonstrate that convolutional neural networks are highly sensitive to even small amounts of label noise, and to raise awareness that this issue needs to be addressed for fine grained audio signal labeling tasks.

[Fig. 6 plot: F-measure (vertical axis, 77.5 to 79.0) per labeling function $f_a$ to $f_f$ (horizontal axis)]

Fig. 6: Configuration II: F-measure on the test set at a frame rate of 100 [fps] across different labeling functions.

6. CONCLUSION

The effect of systematic and random label noise stemming from small misalignments of label annotations on convolutional neural networks in the context of audio signal labeling was investigated empirically, and shown to be non-negligible. We therefore conclude that great care must be taken to make sure the ground truth annotations align with the events in the audio as closely as possible, especially if the intention is to use the data for fine grained sequence labeling, for example for subsequent analysis of musical timing. We demonstrated the effect with the help of already very precise annotations stemming from a mechanistic generator.
We surmise that even when multiple annotations of human origin are available, and annotator disagreement can therefore be used to pinpoint problematic labels and so obtain better estimates of the true label alignment, the sensitivity issue will need to be addressed carefully.

7. ACKNOWLEDGMENTS

A Tesla K40 used for parts of this research was donated by the NVIDIA Corporation. We also thank the authors and maintainers of the Lasagne [8] and Theano [9] software packages.

8. REFERENCES

[1] Devansh Arpit, Stanislaw K. Jastrzebski, Nicolas Ballas, David Krueger, Emmanuel Bengio, Maxinder S. Kanwal, Tegan Maharaj, Asja Fischer, Aaron C. Courville, Yoshua Bengio, and Simon Lacoste-Julien, "A closer look at memorization in deep networks," in Proceedings of the 34th International Conference on Machine Learning, ICML 2017, Sydney, NSW, Australia, 6-11 August 2017, 2017, pp. 233–242.

[2] Chiyuan Zhang, Samy Bengio, Moritz Hardt, Benjamin Recht, and Oriol Vinyals, "Understanding deep learning requires rethinking generalization," CoRR, vol. abs/1611.03530, 2016.

[3] Rainer Kelz, Matthias Dorfer, Filip Korzeniowski, Sebastian Böck, Andreas Arzt, and Gerhard Widmer, "On the potential of simple framewise approaches to piano transcription," in Proceedings of the 17th International Society for Music Information Retrieval Conference, ISMIR 2016, New York City, United States, August 7-11, 2016, 2016, pp. 475–481.

[4] Siddharth Sigtia, Emmanouil Benetos, and Simon Dixon, "An end-to-end neural network for polyphonic piano music transcription," IEEE/ACM Trans. Audio, Speech & Language Processing, vol. 24, no. 5, pp. 927–939, 2016.

[5] Nitish Shirish Keskar, Dheevatsa Mudigere, Jorge Nocedal, Mikhail Smelyanskiy, and Ping Tak Peter Tang, "On large-batch training for deep learning: Generalization gap and sharp minima," CoRR, vol. abs/1609.04836, 2016.
[6] Valentin Emiya, Roland Badeau, and Bertrand David, "Multipitch estimation of piano sounds using a new probabilistic spectral smoothness principle," IEEE Trans. Audio, Speech & Language Processing, vol. 18, no. 6, pp. 1643–1654, 2010.

[7] Mert Bay, Andreas F. Ehmann, and J. Stephen Downie, "Evaluation of multiple-f0 estimation and tracking systems," in Proceedings of the 10th International Society for Music Information Retrieval Conference, ISMIR 2009, Kobe International Conference Center, Kobe, Japan, October 26-30, 2009, 2009, pp. 315–320.

[8] Sander Dieleman, Jan Schlüter, Colin Raffel, Eben Olson, Søren Kaae Sønderby, Daniel Nouri, Daniel Maturana, Martin Thoma, Eric Battenberg, Jack Kelly, Jeffrey De Fauw, Michael Heilman, diogo149, Brian McFee, Hendrik Weideman, takacsg84, peterderivaz, Jon, instagibbs, Dr. Kashif Rasul, CongLiu, Britefury, and Jonas Degrave, "Lasagne: First release.," Aug. 2015.

[9] James Bergstra, Olivier Breuleux, Frédéric Bastien, Pascal Lamblin, Razvan Pascanu, Guillaume Desjardins, Joseph Turian, David Warde-Farley, and Yoshua Bengio, "Theano: a CPU and GPU Math Expression Compiler," in Proceedings of the Python for Scientific Computing Conference (SciPy), 2010.

Original Paper

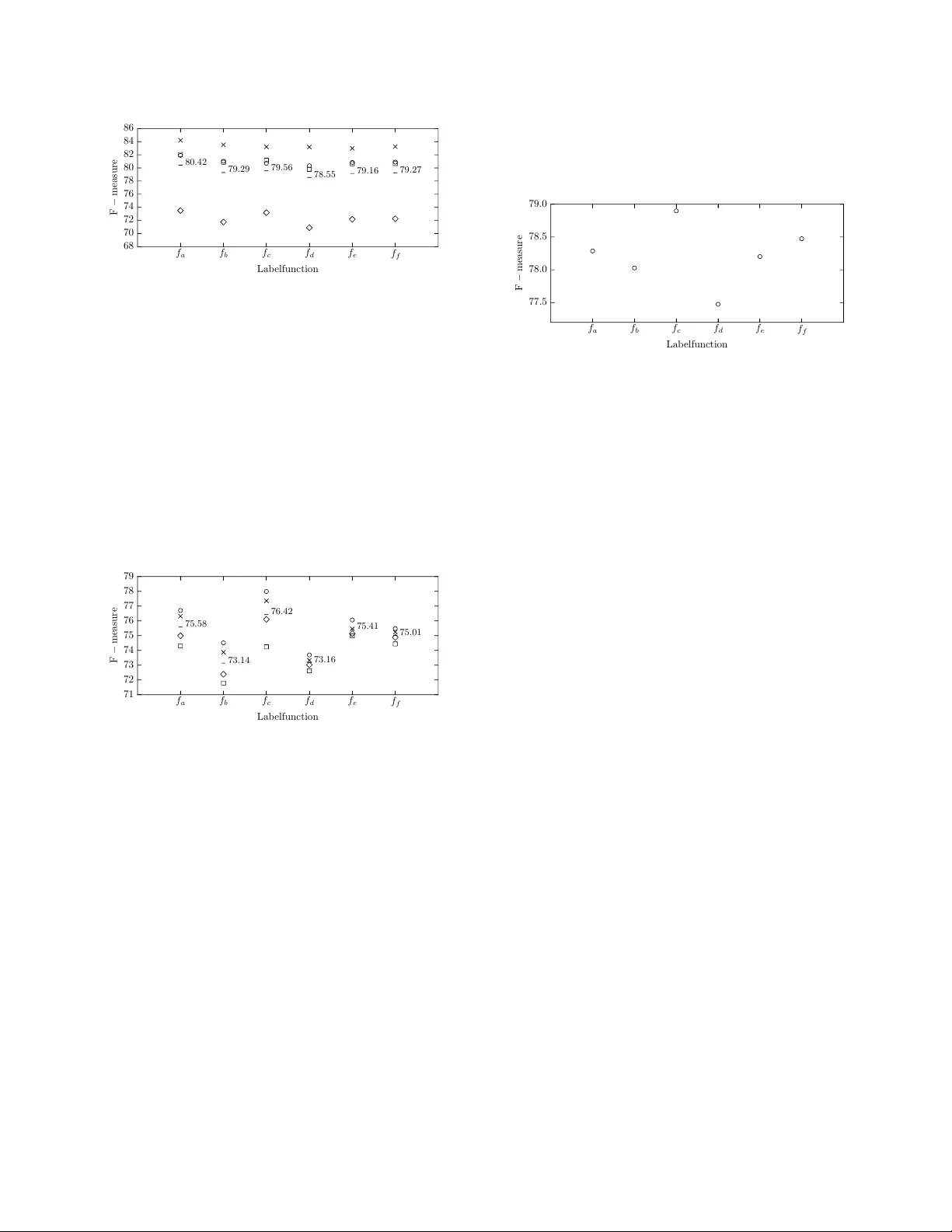

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment