A Pragmatic AI Approach to Creating Artistic Visual Variations by Neural Style Transfer

On a constant quest for inspiration, designers can become more effective with tools that facilitate their creative process and help them overcome design fixation. This paper explores the practicality of applying neural style transfer as an emerging design tool for generating creative digital content. To this end, the present work examines a well-documented neural style transfer algorithm (Johnson 2016) in four experiments on four relevant visual parameters: number of iterations, learning rate, total variation, and content vs. style weight. The results allow a pragmatic recommendation of parameter configuration (number of iterations: 200 to 300, learning rate: 2e-1 to 4e-1, total variation: 1e-4 to 1e-8, content weights vs. style weights: 50:100 to 200:100) that saves extensive experimentation time and lowers the technical entry barrier. With this rule-of-thumb insight, visual designers can effectively apply deep learning to create artistic visual variations of digital content. This could enable designers to leverage state-of-the-art AI in creating design works.

💡 Research Summary

The paper investigates the practical use of neural style transfer (NST) as a creative aid for visual designers, aiming to reduce design fixation and accelerate idea generation. It adopts the well‑known Johnson et al. (2016) perceptual‑loss based image transformation network, which leverages a pre‑trained VGG‑19 encoder to extract content and style feature maps and then optimises a combined loss function to synthesize a stylised output. The authors focus on four hyper‑parameters that most directly affect both visual quality and computational cost: the number of optimisation iterations, the learning rate of the optimiser, the total‑variation regularisation weight, and the relative weighting of content versus style loss.
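As a rough illustration of the combined loss described above, the following pure-Python sketch computes a content term and a Gram-matrix style term on tiny hand-written feature maps and adds them with configurable weights. The function names, the mean-squared-error form, and the toy feature values are assumptions made for illustration; the paper's implementation operates on network feature tensors and is not reproduced here. The total-variation term is left out for brevity.

```python
def gram_matrix(features):
    """Channel-by-channel inner products of a feature map.

    `features` is a list of channels, each a flat list of activations.
    """
    n = len(features[0])
    return [[sum(a * b for a, b in zip(ci, cj)) / n for cj in features]
            for ci in features]


def flatten(rows):
    """Turn a list of lists into one flat list."""
    return [value for row in rows for value in row]


def mse(xs, ys):
    """Mean squared error between two equally sized flat lists."""
    return sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def combined_loss(out_feats, content_feats, style_feats,
                  content_weight, style_weight):
    """Weighted sum of a content term and a Gram-matrix style term."""
    content_loss = mse(flatten(out_feats), flatten(content_feats))
    style_loss = mse(flatten(gram_matrix(out_feats)),
                     flatten(gram_matrix(style_feats)))
    return content_weight * content_loss + style_weight * style_loss


# Toy feature maps (hypothetical values): two channels, two positions each.
content_feats = [[1.0, 0.0], [0.0, 1.0]]
style_feats = [[1.0, 1.0], [1.0, 1.0]]

# An output identical to the content image has zero content loss,
# so only the style term contributes.
loss_at_content = combined_loss(content_feats, content_feats, style_feats, 100, 100)
# An output identical to the style image has zero style loss.
loss_at_style = combined_loss(style_feats, content_feats, style_feats, 100, 100)
```

Raising `content_weight` relative to `style_weight` penalises departures from the content features more heavily, which is the trade-off the fourth experiment varies.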

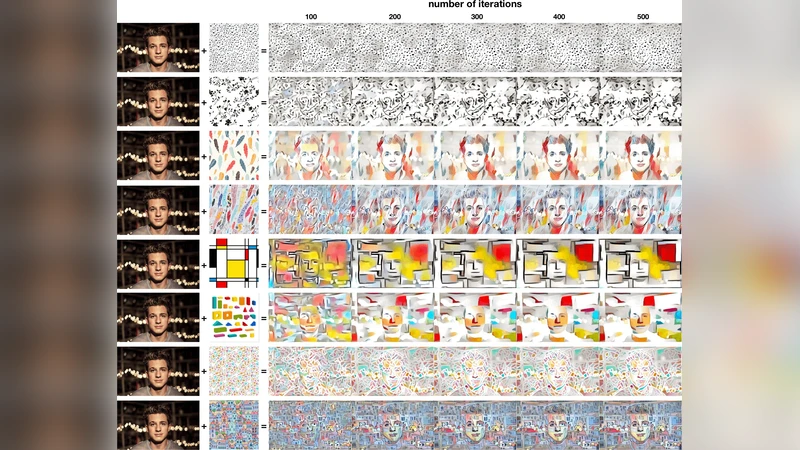

Four controlled experiments were conducted. In the first experiment, iteration counts of 100, 200, 300, and 400 were tested. Results showed that 200–300 iterations provide a sweet spot where the stylised image retains sufficient detail while keeping processing time within a reasonable range; 100 iterations produced under‑stylised outputs, and 400 offered diminishing returns relative to the extra time required.

The second experiment varied the learning rate across 0.05, 0.1, 0.2, 0.4, and 0.6. A learning rate between 0.2 and 0.4 yielded stable convergence, strong style transfer, and sharp details. Lower rates slowed convergence excessively, while higher rates caused loss oscillations and visual artefacts.
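The qualitative pattern reported here (slow progress at low rates, instability at high rates) can be reproduced on a toy problem. The sketch below runs plain gradient descent on f(x) = x², which is an assumption chosen for clarity: the summary does not state which optimiser the study used, and the step sizes below are properties of this toy function, not of the style-transfer loss.

```python
def descend(step_size, iterations=20, start=1.0):
    """Plain gradient descent on f(x) = x**2; returns the loss after each step."""
    x = start
    losses = []
    for _ in range(iterations):
        x -= step_size * 2 * x  # the gradient of x**2 is 2x
        losses.append(x * x)
    return losses


slow = descend(0.01)      # small steps: the loss falls, but only gradually
steady = descend(0.3)     # moderate steps: the loss shrinks quickly
unstable = descend(1.05)  # oversized steps: x overshoots and the loss grows
```

Comparing `slow[-1]`, `steady[-1]`, and `unstable[-1]` shows the three regimes side by side.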

The third experiment examined total‑variation (TV) regularisation values of 1e‑3, 1e‑4, 1e‑5, 1e‑6, and 1e‑8. TV regularisation controls image smoothness; larger values suppress noise but also blur fine texture. The authors found that values in the range 1e‑4 to 1e‑8 preserve texture while effectively reducing unwanted speckle, making them suitable for design‑oriented workflows where subtle detail matters.
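To make the smoothness penalty concrete, here is a minimal sketch of a total-variation measure on a small grayscale grid. It uses the sum of absolute differences between neighbouring pixels, which is one common formulation and an assumption here; the implementation used in the study may differ (for example, by using squared differences).

```python
def total_variation(image):
    """Sum of absolute differences between horizontally and
    vertically adjacent pixels of a 2-D list of intensities."""
    height, width = len(image), len(image[0])
    tv = 0.0
    for y in range(height):
        for x in range(width):
            if x + 1 < width:
                tv += abs(image[y][x + 1] - image[y][x])
            if y + 1 < height:
                tv += abs(image[y + 1][x] - image[y][x])
    return tv


# Hypothetical 3x3 patches: a flat patch and the same patch with one speckle.
smooth = [[0.5, 0.5, 0.5],
          [0.5, 0.5, 0.5],
          [0.5, 0.5, 0.5]]
speckled = [[0.5, 0.5, 0.5],
            [0.5, 1.0, 0.5],
            [0.5, 0.5, 0.5]]

tv_smooth = total_variation(smooth)
tv_speckled = total_variation(speckled)
```

Because the speckled patch scores higher, multiplying this measure by a larger weight in the overall loss pushes the optimiser harder toward smooth outputs, at the cost of fine texture.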

The final experiment explored the balance between content and style loss by testing ratios of content:style weights at 10:100, 50:100, 100:100, 200:100, and 300:100. Ratios between 50:100 and 200:100 maintained the structural integrity of the original artwork while allowing the style to be clearly perceptible. Extreme ratios either overwhelmed the content (10:100) or left the output insufficiently stylised (300:100).
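The four experiments can be read as a one-factor-at-a-time sweep, in which a single parameter varies while the others stay fixed. The sketch below enumerates such a sweep using the tested values listed in this summary. The baseline values and all names are hypothetical, since the summary does not say which settings were held constant in each experiment.

```python
# Values tested in each experiment, as listed in the summary.
TESTED_VALUES = {
    "iterations": [100, 200, 300, 400],
    "learning_rate": [0.05, 0.1, 0.2, 0.4, 0.6],
    "tv_weight": [1e-3, 1e-4, 1e-5, 1e-6, 1e-8],
    "content_weight": [10, 50, 100, 200, 300],  # style weight stays at 100
}

# Hypothetical baseline: the fixed values are an assumption, not from the paper.
BASELINE = {
    "iterations": 200,
    "learning_rate": 0.2,
    "tv_weight": 1e-5,
    "content_weight": 100,
    "style_weight": 100,
}


def one_factor_sweep(baseline, tested):
    """Return (parameter_name, config) pairs, varying one parameter at a time."""
    runs = []
    for name, values in tested.items():
        for value in values:
            config = dict(baseline)  # copy so the baseline stays untouched
            config[name] = value
            runs.append((name, config))
    return runs


runs = one_factor_sweep(BASELINE, TESTED_VALUES)
```

Each entry in `runs` could then be passed to whatever style-transfer routine is in use, and the outputs compared visually per parameter.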

Synthesising these findings, the authors propose a pragmatic rule-of-thumb for designers: run 200–300 optimisation iterations, set the optimiser learning rate to 0.2–0.4, use total-variation regularisation between 1e-4 and 1e-8, and configure the content-to-style weight ratio between 50:100 and 200:100. According to the authors, starting from this configuration saves extensive experimentation time and lowers the technical entry barrier, because designers can begin within ranges that already produced well-stylised results in the four experiments instead of searching the parameter space from scratch.
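The rule-of-thumb can be captured as a small configuration check. The sketch below encodes the recommended ranges and reports which settings fall outside them. The class name, field names, and default values are hypothetical choices made for illustration (the defaults are simply points inside each recommended range), not part of the paper.

```python
from dataclasses import dataclass

# Recommended ranges from the summary, as (low, high) bounds.
RECOMMENDED = {
    "iterations": (200, 300),
    "learning_rate": (0.2, 0.4),
    "tv_weight": (1e-8, 1e-4),
    "content_style_ratio": (0.5, 2.0),  # 50:100 to 200:100
}


@dataclass
class StyleTransferConfig:
    # Hypothetical defaults chosen inside the recommended ranges.
    iterations: int = 250
    learning_rate: float = 0.3
    tv_weight: float = 1e-6
    content_weight: float = 100.0
    style_weight: float = 100.0

    @property
    def content_style_ratio(self):
        return self.content_weight / self.style_weight


def out_of_range(config):
    """Return the names of settings that fall outside the recommended ranges."""
    problems = []
    for name, (low, high) in RECOMMENDED.items():
        value = getattr(config, name)
        if not low <= value <= high:
            problems.append(name)
    return problems


default_check = out_of_range(StyleTransferConfig())
custom_check = out_of_range(StyleTransferConfig(iterations=100, content_weight=300))
```

A check like this only flags departures from the suggested starting ranges; settings outside them may still be appropriate for a particular image or style.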

The paper also acknowledges limitations. All experiments were performed on static 2-D images; extensions to 3-D models, video sequences, or other media were not evaluated. Moreover, the study lacks a formal user-experience assessment: no surveys or qualitative feedback from designers were collected to gauge perceived creativity or workflow integration. The authors suggest three directions for future work: building interactive user interfaces that allow real-time adjustment of the four parameters, testing the approach on more diverse media types, and conducting user studies that measure the impact of AI-assisted style transfer on creative outcomes.

In conclusion, this research provides concrete, empirically validated guidance for applying neural style transfer in a design context. By translating deep‑learning hyper‑parameter tuning into an accessible checklist, it empowers visual designers to harness state‑of‑the‑art AI techniques without deep technical expertise, thereby fostering more fluid and innovative design processes.
