Assessing a mobile-based deep learning model for plant disease surveillance

Convolutional neural networks (CNNs) have made major advances in computer vision tasks in the last five years. Because real-world datasets are difficult to collect, most studies report performance metrics on available research datasets. When CNNs are deployed on images or videos from mobile devices, they face new challenges from lighting, angle, and camera specifications that research datasets do not account for. If such models are to be reliably integrated into products and services in society, they must also be assessed on real-world datasets. Plant disease datasets can be used to test CNNs in real time and gain insight into real-world performance. We train a CNN object detection model to identify foliar symptoms of diseases (or lack thereof) in cassava (Manihot esculenta Crantz). We then deploy the model in a mobile app and test its performance on mobile images and video of 720 diseased leaflets in an agricultural field in Tanzania. Within each disease category we test two levels of symptom severity, mild and pronounced, to assess the model's performance for early detection of symptoms. At both severity levels, the F1 score decreases for real-world images and video. For pronounced symptoms in real-world images (the data closest to the training data), the F1 score dropped by 32% because of a drop in model recall. Our data suggest that if the potential of smartphone CNNs is to be realized, it is crucial to tune precision and recall to achieve the desired performance in real-world settings. In addition, the varied performance across input types (image or video) is an important consideration when designing CNNs for real-world applications.

💡 Research Summary

The paper investigates the real-world performance of a convolutional neural network (CNN) object detection model for diagnosing foliar diseases of cassava (Manihot esculenta) when deployed in a smartphone application. While recent advances in deep learning have produced impressive results on publicly available research datasets, the authors argue that such evaluations overlook the challenges posed by mobile image capture (variable lighting, camera angles, sensor quality, and background clutter) that are typical in agricultural fields. To bridge this gap, they first train a CNN object detector on existing research images of cassava leaf symptoms, labeling leaves by disease category or as healthy; symptom severity (mild or pronounced) is assessed separately in the field test. Data augmentation (rotation, flipping, brightness/contrast changes) is applied to improve robustness, but the training set remains largely controlled and homogeneous.
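The augmentations named above can be sketched with NumPy alone. This is a minimal illustration, not the authors' pipeline: the function name `augment`, the 90-degree rotation steps, and the jitter ranges are all assumptions. For object detection, the bounding-box labels would also need the same geometric transforms, which this sketch deliberately omits.

```python
import numpy as np


def augment(image: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Return a randomly augmented copy of an H x W x C uint8 image.

    Hypothetical pipeline (ranges are illustrative assumptions):
    random 90-degree rotation, random horizontal flip, and
    brightness/contrast jitter. Box labels are NOT transformed here.
    """
    out = image.astype(np.float32)
    # Rotate by a random multiple of 90 degrees (no interpolation needed).
    out = np.rot90(out, k=int(rng.integers(0, 4)), axes=(0, 1))
    # Flip left-right with probability 0.5.
    if rng.random() < 0.5:
        out = out[:, ::-1, :]
    # Contrast scales deviations from the mean; brightness adds an offset.
    contrast = rng.uniform(0.8, 1.2)
    brightness = rng.uniform(-25.0, 25.0)
    mean = out.mean()
    out = (out - mean) * contrast + mean + brightness
    # Clip back into the valid pixel range before converting to uint8.
    return np.clip(out, 0, 255).astype(np.uint8)
```

Photometric jitter of this kind only partly imitates field conditions such as hard shadows or cluttered backgrounds, which is consistent with the summary's point that the training set remains homogeneous.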

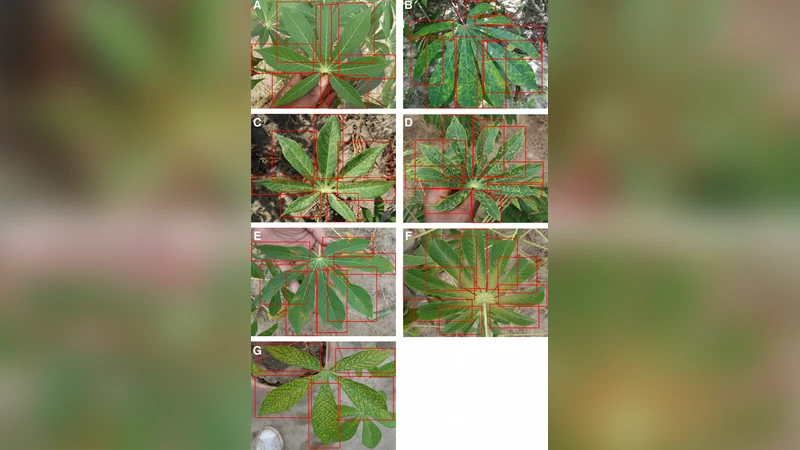

The trained model is then converted to a lightweight TensorFlow Lite format and embedded in an Android app. The app allows users to capture still photos or stream video; each frame is processed on-device, and detected symptoms are highlighted with bounding boxes and confidence scores. For field validation, the authors capture images and video of 720 diseased leaflets in a cassava field in Tanzania, covering both mild and pronounced symptom severity. These field data exhibit a wide range of real-world conditions: different smartphone models, inconsistent illumination (sunny, overcast, shadows), varied viewing angles, and natural background elements such as soil and neighboring plants.
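Before boxes and confidence scores are drawn on screen, on-device detectors typically discard low-confidence boxes and merge overlapping duplicates. The sketch below assumes that common pattern (a score threshold followed by greedy per-class non-maximum suppression); the `Detection` type, the function names, and the 0.5 defaults are hypothetical and are not taken from the app described in the paper.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


@dataclass
class Detection:
    label: str    # class name (hypothetical labels, e.g. "disease_a" or "healthy")
    score: float  # model confidence in [0, 1]
    box: Box


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def postprocess(dets: Sequence[Detection], score_threshold: float = 0.5,
                iou_threshold: float = 0.5) -> List[Detection]:
    """Drop low-confidence boxes, then apply greedy per-class non-maximum suppression."""
    candidates = sorted((d for d in dets if d.score >= score_threshold),
                        key=lambda d: d.score, reverse=True)
    kept: List[Detection] = []
    for d in candidates:
        # Keep d unless a higher-scoring box of the same class overlaps it heavily.
        if all(k.label != d.label or iou(k.box, d.box) < iou_threshold for k in kept):
            kept.append(d)
    return kept
```

The `score_threshold` parameter is the knob that trades precision for recall. Raising it hides uncertain boxes, and lowering it surfaces more candidate symptoms at the cost of more false alarms.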

Performance is measured using precision, recall, and the F1 score (the harmonic mean of precision and recall). The results reveal a marked drop in recall when moving from the research dataset to the field data. For pronounced symptoms in real-world images, the category closest to the training data, the F1 score falls by 32%. Mild symptoms also show a decline in F1 score. Performance further differs between still images and video; motion blur and compression artifacts in video frames are plausible sources of additional false negatives. Consequently, the model's ability to flag diseased leaves early (the primary goal of early-warning surveillance) is compromised in realistic settings.
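The following sketch shows why a recall drop alone is enough to pull F1 down sharply. The counts are hypothetical, chosen only to illustrate the arithmetic, and are not the paper's data. The abstract does not say whether its 32% is a relative or an absolute change, so this sketch computes the relative drop.

```python
from typing import Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Precision, recall, and F1 (harmonic mean) from detection counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def relative_drop(before: float, after: float) -> float:
    """Fractional decrease from `before` to `after`."""
    return (before - after) / before


if __name__ == "__main__":
    # Hypothetical counts: precision stays at 0.9 while recall halves.
    _, _, f1_bench = precision_recall_f1(tp=90, fp=10, fn=10)
    _, _, f1_field = precision_recall_f1(tp=45, fp=5, fn=55)
    print(f"F1 {f1_bench:.2f} -> {f1_field:.2f}, "
          f"relative drop {relative_drop(f1_bench, f1_field):.0%}")
```

With these counts precision is unchanged at 0.9, but recall falls from 0.9 to 0.45 and F1 falls from 0.9 to 0.6, a relative drop of about a third. Because F1 is a harmonic mean, it is dominated by the weaker of the two metrics.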

The authors interpret these findings as evidence that “real‑world readiness” cannot be inferred from benchmark scores alone. They emphasize that precision‑recall trade‑offs must be deliberately tuned for deployment scenarios where missing a diseased leaf (low recall) can have severe agronomic consequences. Suggested mitigation strategies include: (1) augmenting the training corpus with field‑collected images and employing domain‑adaptation techniques (fine‑tuning, adversarial adaptation) to narrow the distribution gap; (2) dynamically adjusting detection thresholds based on user feedback or confidence calibration to favor higher recall; (3) applying temporal smoothing or motion‑compensation algorithms to video streams to recover missed detections; and (4) further model compression (pruning, quantization) to preserve inference speed on limited‑resource devices while maintaining accuracy.
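Strategies (2) and (3) can be made concrete with two small helpers. Both are sketches under stated assumptions and are not the authors' method. `threshold_for_recall` assumes a labeled validation set where each leaflet has a single disease confidence score, and it picks the highest threshold that still meets a target recall. `smooth_frames` is one simple form of temporal smoothing: a causal k-of-n vote over per-frame detection flags. All names and defaults are hypothetical.

```python
from collections import deque
from typing import Deque, Iterable, List, Optional, Sequence


def threshold_for_recall(scores: Sequence[float], labels: Sequence[bool],
                         target_recall: float) -> Optional[float]:
    """Highest score threshold whose recall on labeled data meets target_recall.

    scores: hypothetical per-leaflet disease confidence from the detector.
    labels: True if the leaflet is actually diseased.
    Returns None if no threshold reaches the target.
    """
    positives = sum(labels)
    if positives == 0:
        return None
    # Scan candidate thresholds from strictest to most permissive.
    for t in sorted(set(scores), reverse=True):
        tp = sum(1 for s, y in zip(scores, labels) if y and s >= t)
        if tp / positives >= target_recall:
            return t
    return None


def smooth_frames(frame_hits: Iterable[bool], window: int = 5,
                  min_hits: int = 2) -> List[bool]:
    """Causal k-of-n vote: flag a frame if >= min_hits of the last `window` frames fired."""
    recent: Deque[bool] = deque(maxlen=window)
    smoothed: List[bool] = []
    for hit in frame_hits:
        recent.append(hit)
        smoothed.append(sum(recent) >= min_hits)
    return smoothed
```

Lowering the threshold to reach a recall target will usually admit more false positives, so the chosen target reflects how costly a missed diseased leaf is compared with a false alarm. The k-of-n vote bridges brief dropouts once a symptom has been seen in several recent frames and suppresses isolated one-frame hits. The cost is a short detection delay.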

In conclusion, the study provides a concrete, data‑driven assessment of mobile‑based deep learning for plant disease surveillance, highlighting that deployment on smartphones introduces substantial performance penalties, especially in recall. The work underscores the necessity of field‑centric evaluation, continuous model adaptation, and system‑level design choices (e.g., input modality, user interface) to achieve reliable, scalable disease monitoring in low‑resource agricultural contexts. Future research directions proposed include expanding to multiple crops and disease types, building automated pipelines for field data labeling, and integrating farmer feedback loops to create a self‑improving surveillance ecosystem.
