Human Aspects and Perception of Privacy in Relation to Personalization

Privacy is inherently intertwined with human attitudes and behaviours, as most computer systems are designed primarily for human use. This is especially true of Recommender Systems, which feed on information provided by individuals: their efficacy depends critically on whether information is externalized at all and, if it is, on how much of it contributes positively to their performance and accuracy. In this paper, we discuss the impact of several factors on users’ information disclosure behaviours and privacy-related attitudes, and how users of recommender systems can be nudged into making better privacy decisions for themselves. We also address the problem of privacy adaptation, i.e. effectively tailoring Recommender Systems by gaining a deeper understanding of people’s cognitive decision-making process.

💡 Research Summary

The paper investigates the interplay between user privacy attitudes and information disclosure behavior within recommender systems, highlighting the well‑known “privacy paradox”, in which users express privacy concerns yet often share personal data. It begins by reviewing literature that treats risk and trust as separate drivers: risk influences users’ intention to disclose, but trust exerts a stronger effect on actual disclosure actions. Building on this, the authors group the determinants of disclosure into three interrelated factors: uncertainty, context‑dependence, and malleability.

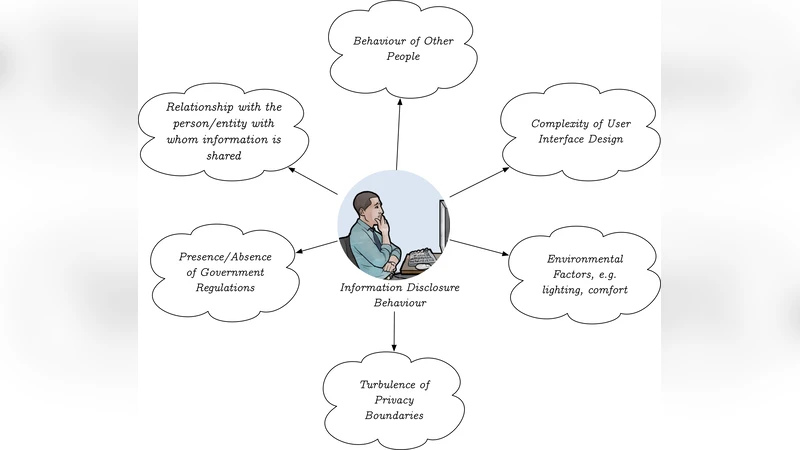

Uncertainty arises from asymmetric information about what data is collected and how it is used, and from complex, hard‑to‑read end‑user license agreements (EULAs). This lack of clarity hampers users’ ability to perform a rational privacy calculus, while social motivations to share persist. Context‑dependence covers the physical environment (lighting, temperature), interface design (formal vs casual), and social cues (closeness to the recipient, perceived norms). These situational cues can dramatically shift the amount of data a user is willing to reveal. Malleability refers to the ways system designers can subtly steer user choices through defaults, deceptive UI elements, or other nudges that exploit users’ limited cognitive resources.

The paper then revisits the Privacy‑by‑Design (PbD) framework, emphasizing its seven principles (proactive rather than reactive, privacy as the default, privacy embedded into design, full functionality, end‑to‑end security, visibility and transparency, and respect for user privacy), and argues that these must be operationalized with behavioral insights. Specific nudging techniques are proposed: visual risk indicators, staged consent dialogs, personalized privacy settings, and transparent data‑use dashboards that help users make informed decisions.

Critically, the work is primarily a synthesis of prior studies and lacks original empirical validation. The suggested nudges are not tested in real‑world deployments, and the analysis does not account for cultural, demographic, or age‑related variations in privacy preferences. The authors acknowledge these gaps and call for future research involving controlled experiments, A/B testing of nudges, and multivariate modeling to quantify the relative weights of risk, trust, and contextual factors. Integrating technical privacy safeguards (e.g., differential privacy, homomorphic encryption) with human‑centric design is identified as a promising direction for creating recommender systems that respect user privacy while maintaining recommendation quality.
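
As a rough illustration of the A/B testing the authors call for, the following minimal sketch compares disclosure rates between a control interface and one that shows a privacy nudge, using a standard two-proportion z-test. The function name and the counts are hypothetical and are not taken from the paper, which reports no such experiment.

```python
import math


def two_proportion_z_test(disclosed_a, n_a, disclosed_b, n_b):
    """Two-sided z-test for a difference in disclosure rates.

    disclosed_a / n_a: users who disclosed and total users in arm A (control).
    disclosed_b / n_b: the same counts for arm B (nudge shown).
    Returns (z, p_value). All names here are illustrative assumptions.
    """
    p_a = disclosed_a / n_a
    p_b = disclosed_b / n_b
    # Pooled rate under the null hypothesis that both arms share one rate.
    pooled = (disclosed_a + disclosed_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0 or all 1")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 50 of 100 users disclosed in control, 70 of 100 with the nudge.
z, p_value = two_proportion_z_test(50, 100, 70, 100)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A test like this only shows whether the disclosure rate changed. Whether the change reflects a better privacy decision for the user would need a separate measure, such as satisfaction or regret reported afterwards.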
