A Context-based Approach for Dialogue Act Recognition using Simple Recurrent Neural Networks

Dialogue act recognition is an important part of natural language understanding. We investigate the way dialogue act corpora are annotated and the learning approaches used so far. We find that the dialogue act is context-sensitive within the conversation for most of the classes. Nevertheless, previous models of dialogue act classification work on the utterance-level and only very few consider context. We propose a novel context-based learning method to classify dialogue acts using a character-level language model utterance representation, and we notice significant improvement. We evaluate this method on the Switchboard Dialogue Act corpus, and our results show that the consideration of the preceding utterances as a context of the current utterance improves dialogue act detection.

💡 Research Summary

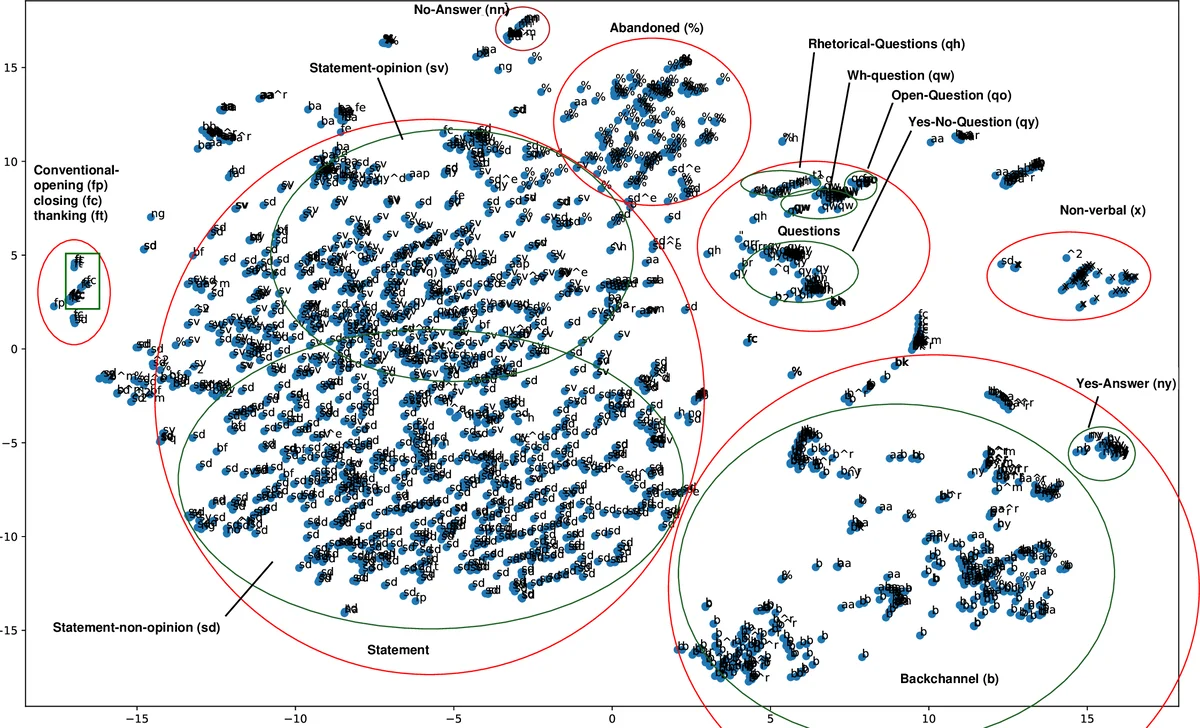

The paper addresses the problem of Dialogue Act (DA) recognition, a crucial component of natural language understanding, by emphasizing the importance of conversational context. While most prior work treats each utterance in isolation—using lexical, syntactic, or prosodic cues, and often relying on Hidden Markov Models (HMMs), Conditional Random Fields (CRFs), or deep neural networks—the authors argue that the DA of a current utterance is frequently determined by preceding utterances. They illustrate this with examples from the Switchboard Dialogue Act (SwDA) corpus, where the same lexical item “yeah” can be labeled as a Backchannel, Yes‑Answer, or other classes depending on the prior discourse.

The authors set out two goals: (1) to analyze how DA corpora are annotated and how existing models handle (or ignore) context, and (2) to propose a simple yet effective context‑based learning approach that can be deployed in real‑time dialogue systems. Their method consists of two stages. First, each utterance is encoded using a pre‑trained character‑level language model: a multiplicative Long Short‑Term Memory network (mLSTM) with 4,096 hidden units, originally trained on ~80 million Amazon product reviews. The model processes the utterance character by character and yields two possible representations: the hidden state after the last character (last‑feature vector) and the average of all hidden states across characters. Empirical tests show that the average vector captures more DA‑relevant information, so it is used for subsequent experiments.

Second, the authors feed these utterance vectors into a simple Elman‑style recurrent neural network (RNN) that also receives a small number of preceding utterance vectors (one to four) as context. The RNN hidden state at time t is computed as hₜ = σ(Wₕ hₜ₋₁ + I sₜ + b), where sₜ is the current utterance vector (augmented with a one‑hot speaker identifier). The output layer applies a softmax over the 42 DA classes. Training uses the Adam optimizer (initial learning rate 1e‑4 with decay), gradient clipping (norm = 1), early stopping, and categorical cross‑entropy loss. Ten‑fold runs are performed to assess stability.

Experiments follow the standard SwDA split (1115 training conversations, 19 test conversations). A baseline multilayer perceptron (MLP) that classifies each utterance independently achieves 73.96 % accuracy using the average feature vector. Adding one preceding utterance raises accuracy to 76.57 %; two utterances to 76.81 %; three utterances to 77.34 %; and four utterances to 77.28 %. The standard deviation across runs stays below 0.3 %, indicating robust performance. Compared to the prior state‑of‑the‑art context model by Kalchbrenner & Blunsom (2013), which reported 73.9 % accuracy, the proposed approach improves by roughly 3.4 percentage points, while using a far simpler architecture and requiring only a few prior turns.

Key contributions of the work are:

- A detailed examination of DA annotation schemes (DAMSL, Switchboard, ICSI) and the observation that many DA classes are highly context‑dependent.

- Introduction of a context‑aware DA classifier that combines a domain‑independent character‑level language model with a lightweight RNN, demonstrating that even minimal context (≤ 3 previous turns) yields substantial gains.

- Empirical evidence that pre‑training on large, unrelated text corpora (Amazon reviews) provides rich representations that generalize well to dialogue tasks, mitigating scalability concerns noted in earlier work.

The authors conclude that modeling discourse compositionality via simple recurrent structures is both effective and practical for real‑time dialogue systems, where future utterances are unavailable. Future directions include extending the approach to hierarchical models that can capture longer discourse, integrating prosodic or acoustic features, and testing on other dialogue domains such as customer‑service chats or task‑oriented systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment