Implementation of Artifact Detection in Critical Care: A Methodological Review

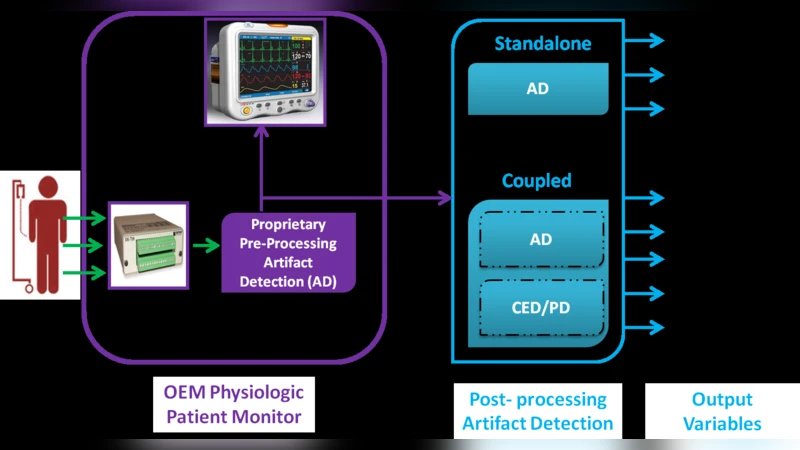

Artifact Detection (AD) techniques minimize the impact of artifacts on physiologic data acquired in Critical Care Units (CCU) by assessing quality of data prior to Clinical Event Detection (CED) and Parameter Derivation (PD). This methodological review introduces unique taxonomies to synthesize over 80 AD algorithms based on these six themes: (1) CCU; (2) Physiologic Data Source; (3) Harvested data; (4) Data Analysis; (5) Clinical Evaluation; and (6) Clinical Implementation. Review results show that most published algorithms: (a) are designed for one specific type of CCU; (b) are validated on data harvested only from one Original Equipment Manufacturer (OEM) monitor; (c) generate Signal Quality Indicators (SQI) that are not yet formalised for useful integration in clinical workflows; (d) operate either in standalone mode or coupled with CED or PD applications; (e) are rarely evaluated in real-time; and (f) are not implemented in clinical practice. In conclusion, it is recommended that AD algorithms conform to generic input and output interfaces with commonly defined data: (1) type; (2) frequency; (3) length; and (4) SQIs. This shall promote (a) reusability of algorithms across different CCU domains; (b) evaluation on different OEM monitor data; (c) fair comparison through formalised SQIs; (d) meaningful integration with other AD, CED and PD algorithms; and (e) real-time implementation in clinical workflows.

💡 Research Summary

The paper presents a methodological review of artifact detection (AD) techniques used in critical care units (CCUs). By examining more than 80 published algorithms, the authors construct taxonomies around six themes: (1) CCU type, (2) physiologic data source, (3) harvested data, (4) data analysis, (5) clinical evaluation, and (6) clinical implementation. These taxonomies are then used to synthesize the state of the art systematically.

The analysis reveals that the majority of AD algorithms are narrowly scoped: they are designed for a single type of CCU (e.g., ICU, PICU, NICU, OR) and validated on data from a single OEM monitor. Because each monitor applies proprietary preprocessing and produces signals with different characteristics, algorithms often become hard‑wired to a specific data format, sampling frequency, and patient population. Consequently, reuse across different units or devices is limited.

In the “physiologic data source” taxonomy, the review highlights substantial heterogeneity among pulse‑oximeter manufacturers (Masimo, Philips, Datex‑Ohmeda, etc.) and notes that signal quality indicators (SQIs) are either absent or expressed in incomparable units. The “harvested data” taxonomy shows that high‑frequency signals (ECG, ABP, PPG) are sampled at ≥100 Hz, whereas low‑frequency parameters (HR, BP, temperature) are logged at 1 Hz or slower, creating mismatched input requirements for AD algorithms.
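One common way to reconcile the two sampling regimes is to cut the waveform into fixed windows that match the logging interval of the numeric parameters. The sketch below is a minimal illustration, not a method taken from the reviewed paper. The function names `window_waveform` and `align` are hypothetical, and the code assumes that both streams start at the same instant and that numerics arrive at exactly 1 Hz.

```python
from typing import List, Sequence, Tuple


def window_waveform(samples: Sequence[float], sampling_hz: float,
                    window_s: float = 1.0) -> List[Sequence[float]]:
    """Split a waveform into consecutive, non-overlapping windows.

    A trailing partial window is dropped.
    """
    n = int(round(sampling_hz * window_s))  # samples per window
    if n <= 0:
        raise ValueError("window must contain at least one sample")
    return [samples[i:i + n] for i in range(0, len(samples) - n + 1, n)]


def align(waveform: Sequence[float], sampling_hz: float,
          numerics: Sequence[float]) -> List[Tuple[Sequence[float], float]]:
    """Pair each one-second waveform window with the numeric for that second.

    Assumption: numerics are logged at exactly 1 Hz and both streams
    share the same start time. zip() stops at the shorter stream.
    """
    windows = window_waveform(waveform, sampling_hz, window_s=1.0)
    return list(zip(windows, numerics))
```

With a 125 Hz waveform, for example, each pair holds 125 waveform samples and one numeric value, so an AD algorithm can receive both inputs on a shared time base.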

The “data analysis” taxonomy classifies algorithms by dimensionality (univariate vs. multivariate), focus (stream‑centric, patient‑centric, disease‑centric), and clinical contribution (stand‑alone AD vs. coupled AD). While multivariate approaches are gaining interest, most published work remains univariate and stream‑centric. Only a few studies integrate AD with downstream clinical event detection (CED) or parameter derivation (PD) modules, and even fewer provide real‑time performance assessments.
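The distinctions in this taxonomy can be illustrated with a small sketch. It is a toy example under stated assumptions rather than any of the reviewed algorithms: the function names and all thresholds (plausible range of 20 to 300 bpm, a 10 bpm agreement tolerance, a 40 bpm alarm limit) are hypothetical. It contrasts a univariate range check on one stream, a multivariate cross-check between two streams, and a coupled arrangement in which the AD result gates a downstream CED alarm.

```python
def univariate_flag(value: float, low: float, high: float) -> bool:
    """Stream-centric check: flag a value outside its plausible range."""
    return not (low <= value <= high)


def multivariate_flag(hr_ecg: float, pr_ppg: float,
                      max_diff_bpm: float = 10.0) -> bool:
    """Cross-stream check: ECG heart rate and PPG pulse rate should agree.

    The tolerance is an illustrative assumption, not a clinical value.
    """
    return abs(hr_ecg - pr_ppg) > max_diff_bpm


def coupled_alarm(hr_ecg: float, pr_ppg: float,
                  alarm_low: float = 40.0) -> bool:
    """Coupled AD + CED: raise a low-heart-rate alarm only on unflagged data."""
    artifact = (univariate_flag(hr_ecg, 20.0, 300.0)
                or multivariate_flag(hr_ecg, pr_ppg))
    return hr_ecg < alarm_low and not artifact
```

In this sketch a heart rate of 35 bpm raises the alarm when the pulse rate agrees (36 bpm) but is suppressed when the pulse rate reads 80 bpm, because the disagreement is treated as a likely artifact.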

Clinical evaluation is largely retrospective, with limited sample sizes and few multi‑center, multi‑OEM studies. Real‑time validation, which is essential for demonstrating reductions in false alarms and improvements in patient safety, is rare. Moreover, the “clinical implementation” taxonomy shows that virtually none of the reviewed AD algorithms have been deployed in production clinical systems. The lack of standardized input/output interfaces and universally defined SQIs hampers integration with existing monitoring infrastructure and with other decision‑support tools.

To address these gaps, the authors propose a set of concrete recommendations:

- Define a generic interface that specifies data type, sampling frequency, segment length, and SQI format, enabling plug‑and‑play reuse of AD modules across different CCU domains and OEM devices.

- Standardize SQIs (e.g., 0‑100 % scale with calibrated error rates) so that downstream CED/PD algorithms can reliably consume quality metrics.

- Conduct cross‑OEM validation studies to assess algorithm robustness against device‑specific biases.

- Develop real‑time testbeds and integrate AD modules into clinical workflows, leveraging open standards such as HL7, IEEE 11073, or FHIR for data exchange.

- Encourage open‑source implementations and community‑driven benchmarking to foster transparent comparison of AD performance.
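The first two recommendations can be made concrete with a small interface sketch. Everything here is a hypothetical illustration: the paper calls for commonly defined data type, frequency, length, and SQIs but does not prescribe this schema, and the class names `SignalSegment`, `SQI`, `ArtifactDetector`, and `RangeCheckDetector` are assumptions introduced for the example.

```python
from dataclasses import dataclass
from typing import Protocol, Sequence


@dataclass(frozen=True)
class SignalSegment:
    """One fixed-length window of physiologic data (hypothetical schema)."""
    data_type: str            # e.g. "ECG", "ABP", "PPG", "HR"
    sampling_hz: float        # sampling frequency in Hz
    samples: Sequence[float]  # raw values for this window

    @property
    def length_s(self) -> float:
        """Segment length in seconds, from sample count and rate."""
        return len(self.samples) / self.sampling_hz


@dataclass(frozen=True)
class SQI:
    """Signal quality on a common 0-100 scale (100 = clean)."""
    data_type: str
    score: float

    def __post_init__(self) -> None:
        if not 0.0 <= self.score <= 100.0:
            raise ValueError("SQI score must lie in [0, 100]")


class ArtifactDetector(Protocol):
    """Any AD module exposing this method can be swapped for another."""
    def assess(self, segment: SignalSegment) -> SQI: ...


class RangeCheckDetector:
    """Toy detector: percentage of samples inside a plausible range."""

    def __init__(self, low: float, high: float) -> None:
        self.low, self.high = low, high

    def assess(self, segment: SignalSegment) -> SQI:
        if not segment.samples:
            return SQI(segment.data_type, 0.0)
        ok = sum(self.low <= x <= self.high for x in segment.samples)
        return SQI(segment.data_type, 100.0 * ok / len(segment.samples))
```

Because downstream CED or PD code would depend only on `ArtifactDetector` and `SQI`, a detector validated on one monitor's data could in principle be replaced by another without changing the consumer.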

In conclusion, the review underscores that artifact detection research must shift from isolated, device‑specific prototypes toward interoperable, standards‑based solutions that can be evaluated in real‑time clinical settings. Only by adopting common interfaces, standardized SQIs, and rigorous multi‑device validation can AD algorithms achieve the reusability, comparability, and clinical impact necessary for widespread adoption in modern critical care environments.
