Beyond Word Importance: Contextual Decomposition to Extract Interactions from LSTMs

The driving force behind the recent success of LSTMs has been their ability to learn complex and non-linear relationships. Consequently, our inability to describe these relationships has led to LSTMs being characterized as black boxes. To this end, we introduce contextual decomposition (CD), an interpretation algorithm for analysing individual predictions made by standard LSTMs, without any changes to the underlying model. By decomposing the output of a LSTM, CD captures the contributions of combinations of words or variables to the final prediction of an LSTM. On the task of sentiment analysis with the Yelp and SST data sets, we show that CD is able to reliably identify words and phrases of contrasting sentiment, and how they are combined to yield the LSTM’s final prediction. Using the phrase-level labels in SST, we also demonstrate that CD is able to successfully extract positive and negative negations from an LSTM, something which has not previously been done.

💡 Research Summary

This paper presents a novel interpretation method called Contextual Decomposition (CD) designed to explain the predictions made by Long Short-Term Memory (LSTM) networks. The core challenge addressed is the “black box” nature of LSTMs, which, despite their success in learning complex, non-linear relationships (particularly in NLP tasks like sentiment analysis), offer little transparency into how individual predictions are made.

CD’s primary innovation is a mathematical decomposition of the LSTM’s internal states. For any given phrase within an input sequence, CD recursively decomposes the LSTM’s cell state (c_t) and hidden state (h_t) at each time step into two components: a “β” component representing contributions arising solely from the specified phrase, and a “γ” component representing contributions involving interactions with elements outside the phrase (i.e., the context). Formally, this is expressed as h_t = β_t + γ_t and c_t = β_c_t + γ_c_t.

The decomposition leverages the unique gated structure of LSTMs. The key insight is that the element-wise multiplications between gates (e.g., in the cell update equation c_t = f_t ⊙ c_{t-1} + i_t ⊙ g_t) are the vehicle for modeling interactions between variables. CD works by linearizing the activation functions (sigmoid and tanh) within the gate equations, expanding the products of these linearized sums, and then carefully assigning the resulting cross-terms to either the β or γ component based on whether all factors in a term originate from the phrase in question. This process is applied recursively through the sequence. The final output to the classifier, W h_T, is consequently decomposed into W β_T + W γ_T, where W β_T provides a quantitative, interpretable score for the phrase’s direct contribution to the prediction, analogous to a logistic regression coefficient.

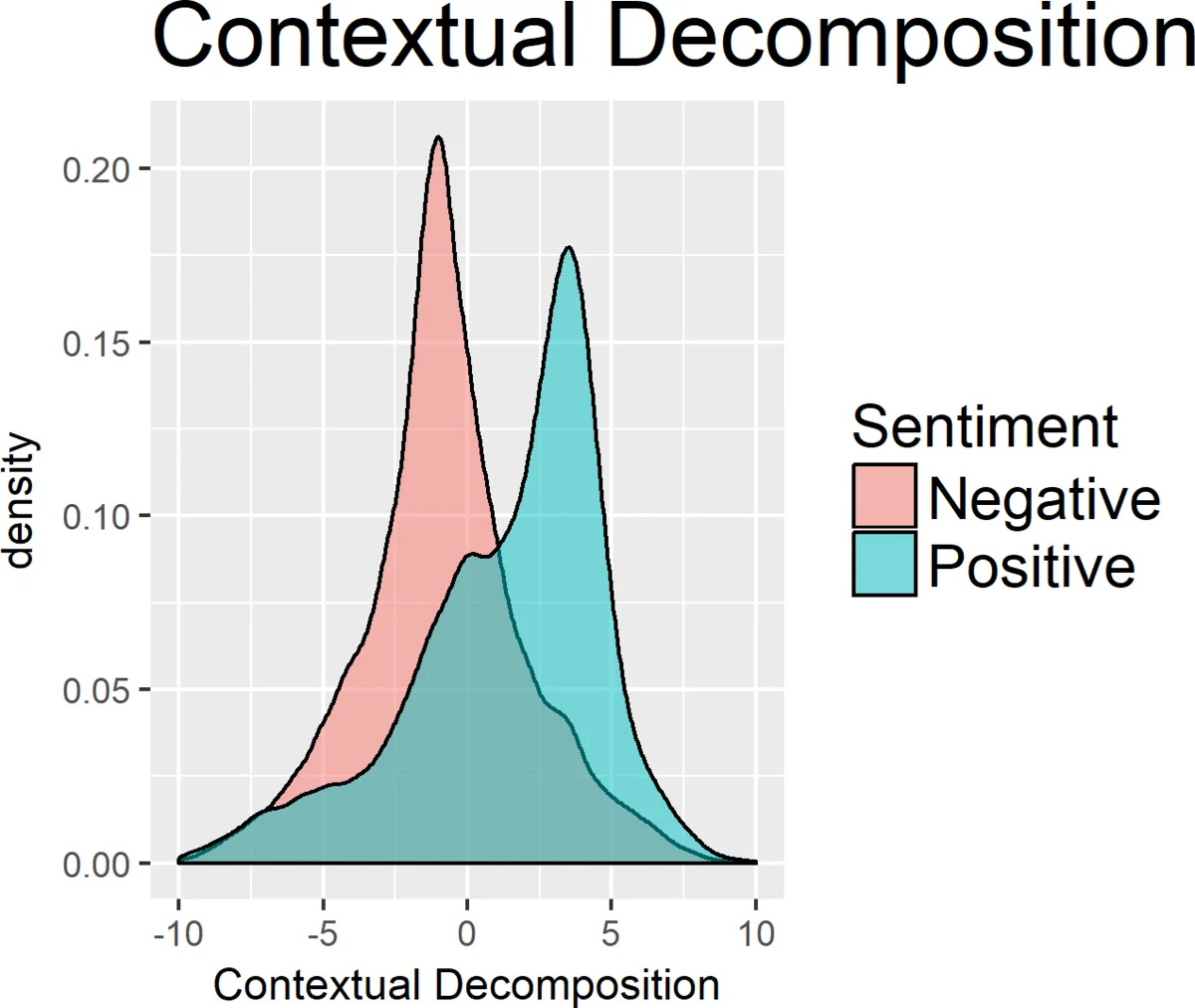

The authors empirically validate CD on sentiment analysis tasks using the Yelp Polarity and Stanford Sentiment Treebank (SST) datasets. First, they show that for the standard task of assigning word-level importance scores, CD performs favorably compared to state-of-the-art baselines including Integrated Gradients, Leave-One-Out, gradient-based methods, and a prior LSTM decomposition technique. Notably, CD more accurately identifies strongly negative words within overall positive reviews, whereas prior methods often incorrectly attenuate or even reverse their sentiment, demonstrating that CD better isolates phrase-level contribution from broader document context.

The most significant results demonstrate CD’s ability to capture compositionality—how words combine to influence the prediction. CD can reliably identify phrases of contrasting sentiment within a sentence and show how their contributions combine. Crucially, using the phrase-level annotations in SST, the authors show that CD can successfully extract instances of positive and negative negation (e.g., “not good”) from the LSTM. This means CD can identify when the LSTM treats a multi-word phrase as a single semantic unit with a composed meaning, a capability beyond mere word importance summation that prior methods lack.

In summary, Contextual Decomposition offers a principled way to open the black box of LSTMs. It moves beyond attributing importance to individual words by explaining how combinations of words interact through the LSTM’s gating mechanisms to yield a final prediction. This provides a deeper, more faithful interpretation of model reasoning, which is critical for debugging, trust, and advancing more interpretable AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment